Cloud costs are growing faster than ever, and most teams still spend hours digging through billing data trying to understand where the money goes. AI-driven cost optimization platforms make that easier. They automatically detect waste, rightsize workloads, and keep applications running efficiently across AWS, Azure, GCP, and Kubernetes.

In this guide, we’ve rounded up five of the best AI tools that help you reduce cloud bills, simplify FinOps, and stay ahead of runaway infrastructure costs.


1. CloudZero

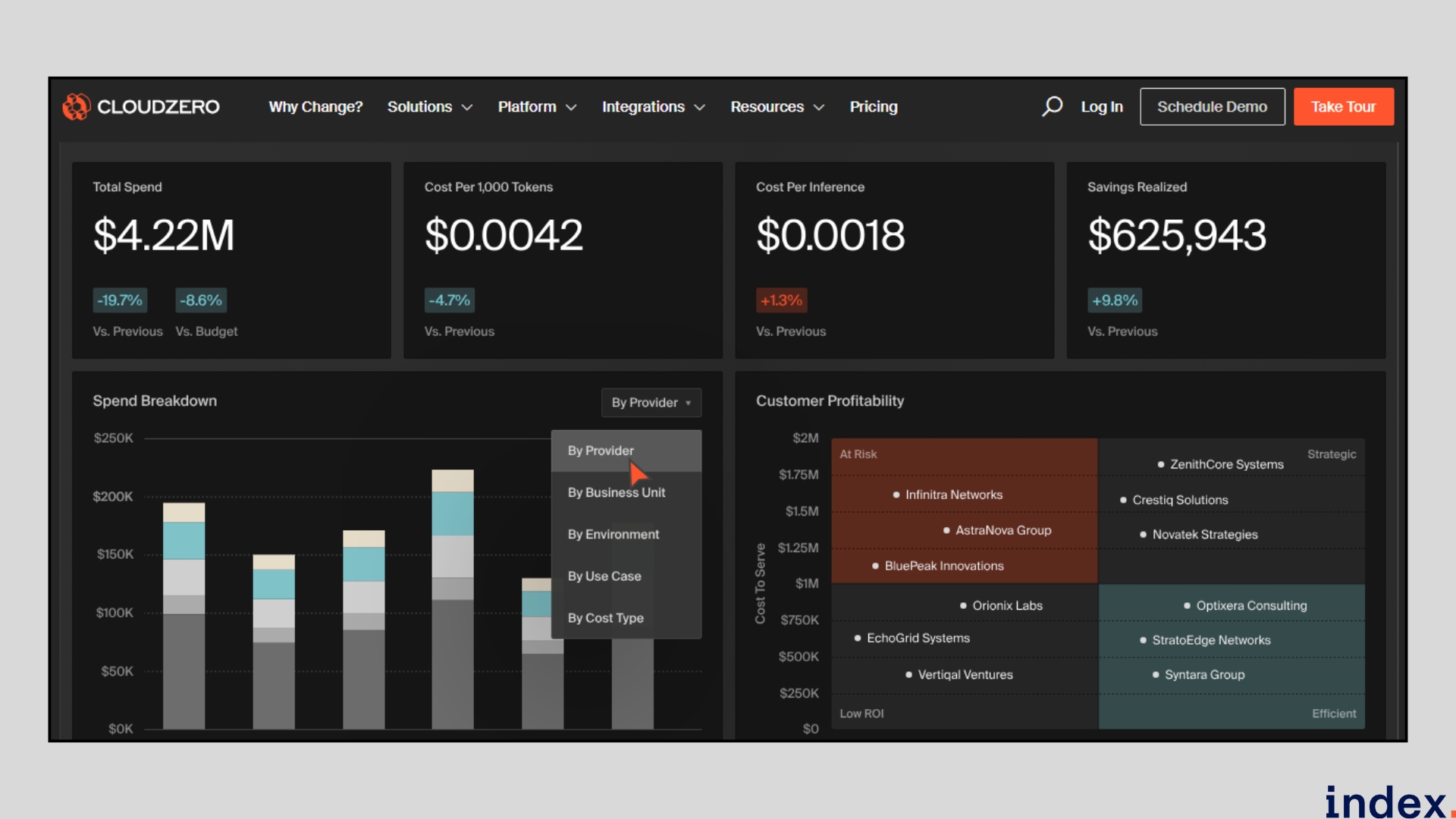

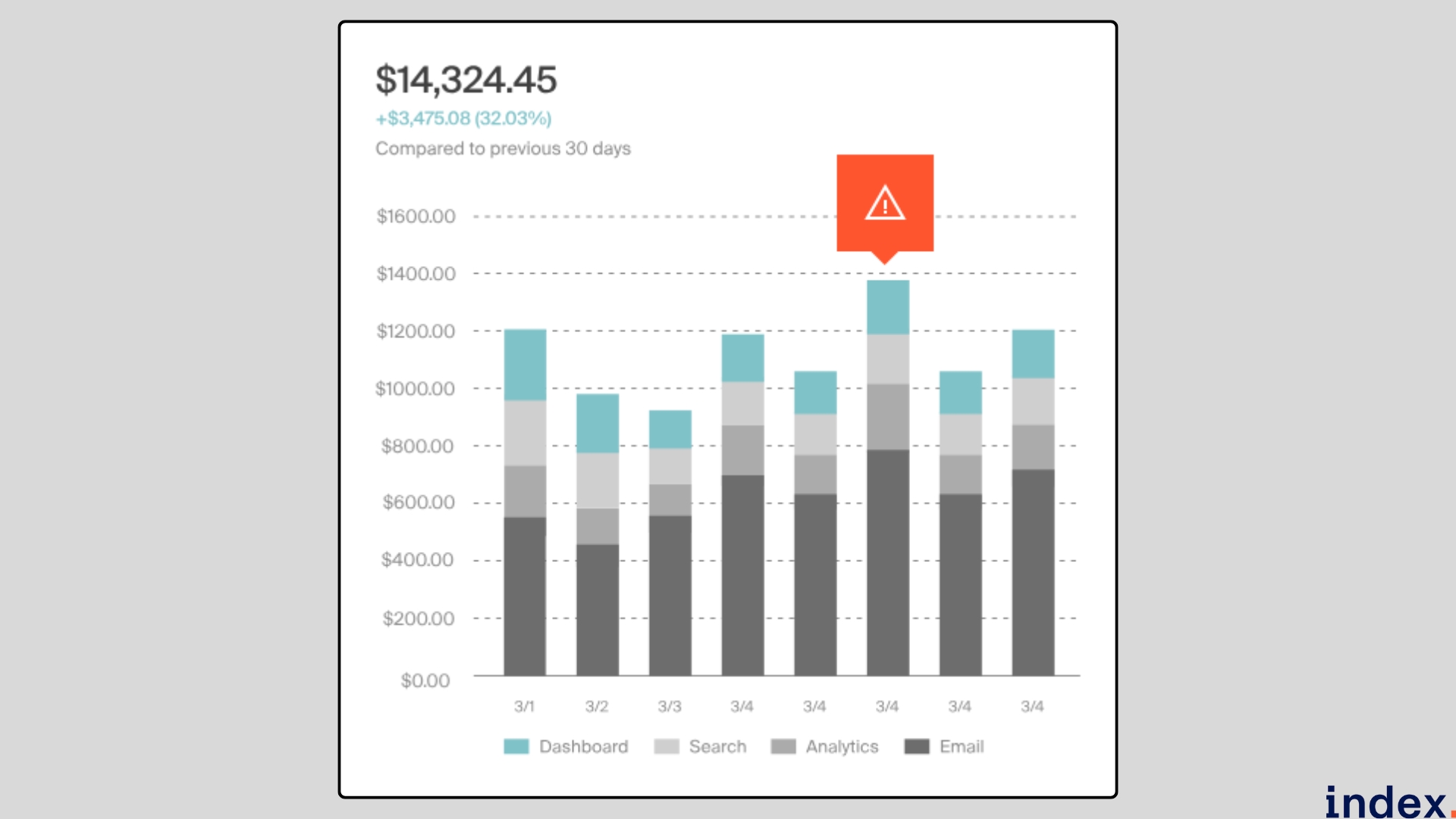

CloudZero is a cloud cost intelligence platform that turns raw billing and telemetry into business-ready insights, helping engineering, FinOps, and product teams make informed cost decisions. It ingests data from multi-cloud environments, Kubernetes clusters, and AI services, then normalises it and attributes spend to meaningful business dimensions such as teams, features, customers, or AI models.

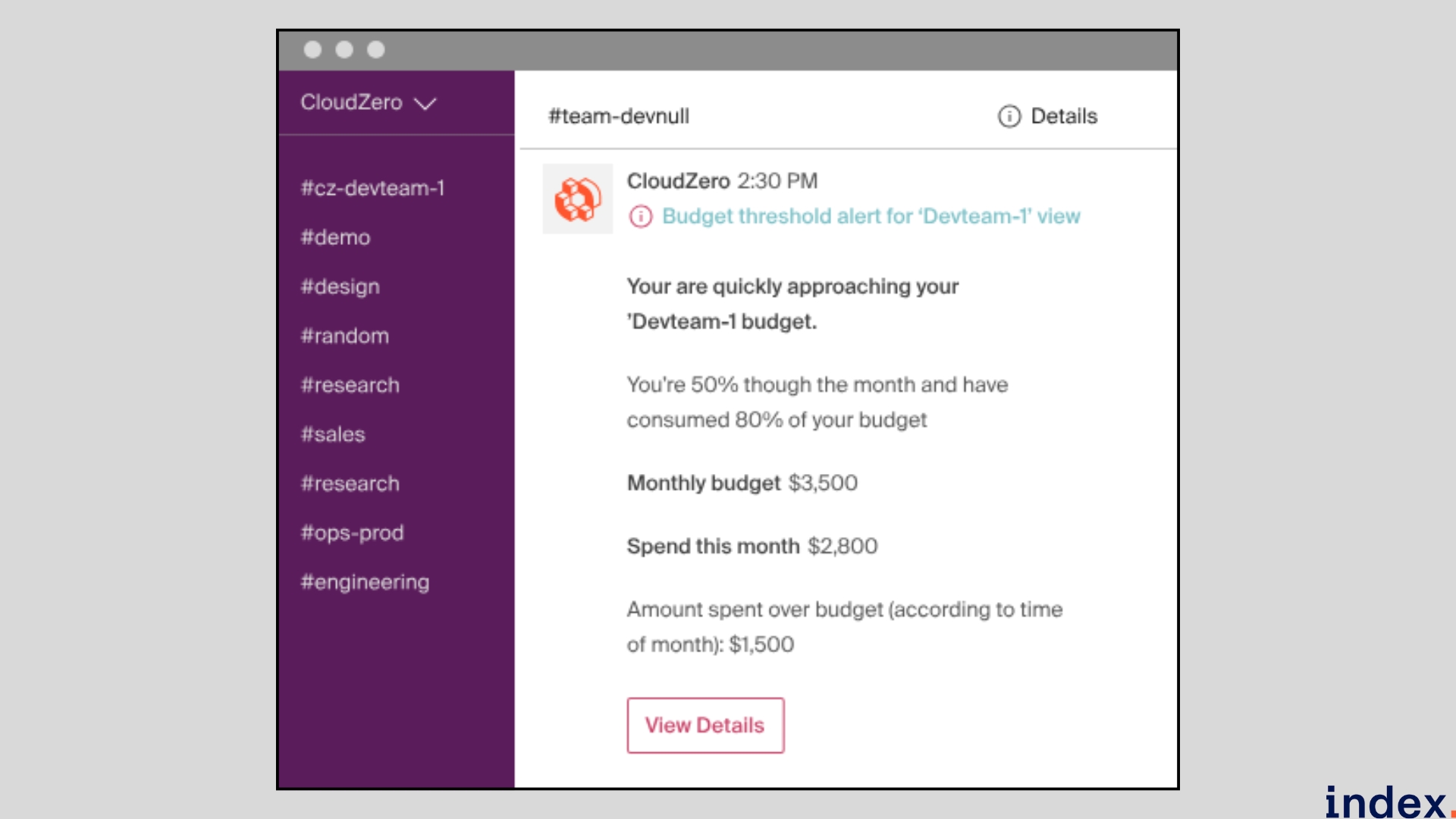

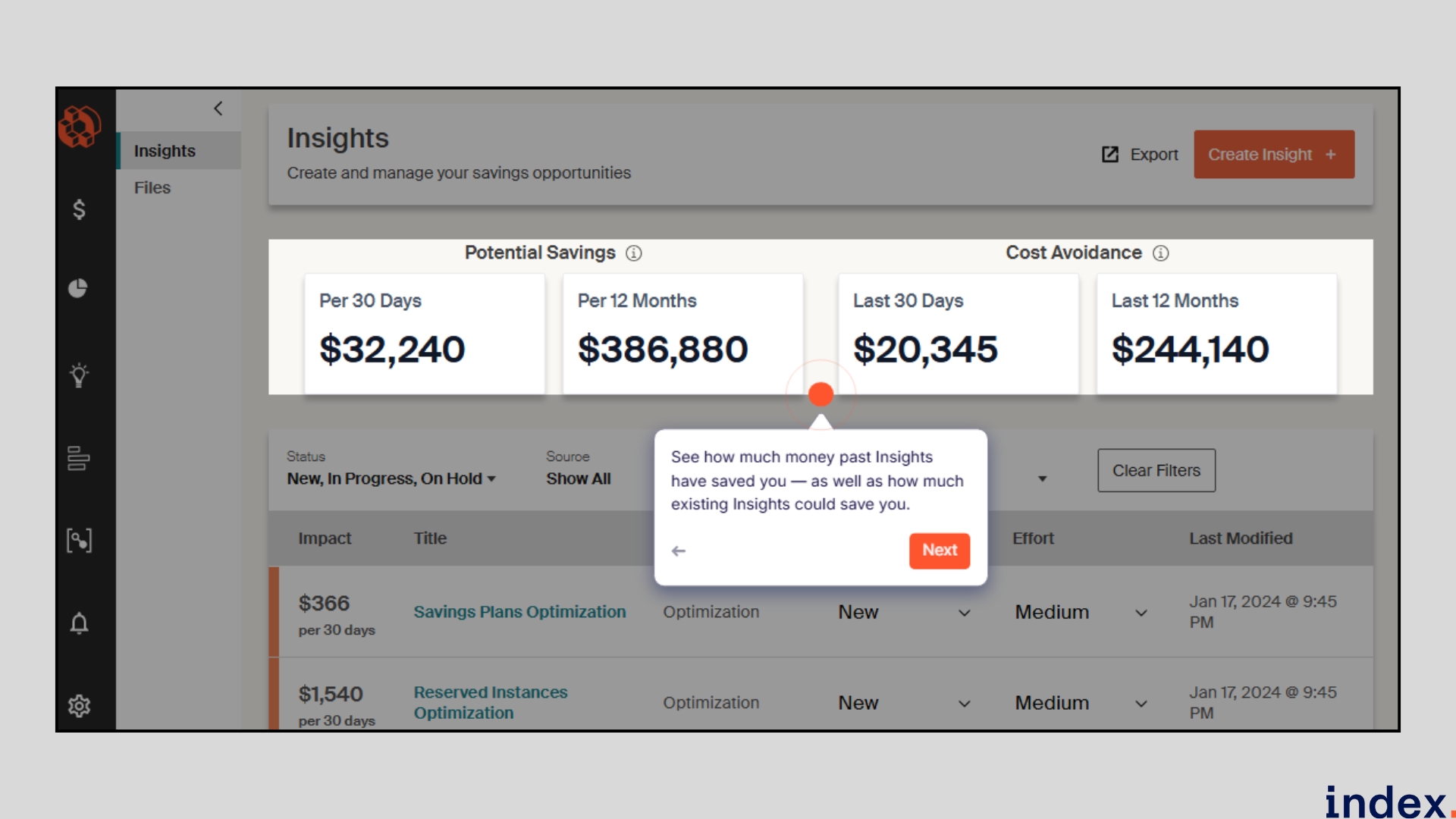

By translating technical usage into unit economics (cost per feature, per customer, or per token), CloudZero enables teams to prioritise optimisations that preserve performance while improving margins. Coupled with real-time anomaly detection and dedicated FinOps guidance, the platform accelerates decision-making and delivers measurable savings without slowing innovation.

Key features

- AI-driven cost allocation: Automatically attributes cloud spend to the right product, team, or feature even when tags are missing.

- Unit economics reporting: Shows the cost per customer, feature, API call, or AI token, so product and finance can make trade-offs.

- Real-time anomaly detection: Flags unusual spend patterns (e.g., token spikes or egress surges) and points to likely causes.

- Kubernetes & AI visibility: Breaks down costs by pod, namespace, and model so modern stacks are tracked accurately.

- Explorer & Analytics: Interactive dashboards that let teams drill from business metrics down to raw billing lines.

- FinOps Account Manager (FAM): Dedicated expert support to map dimensions, prioritise actions, and operationalise savings.

- Multi-cloud & third-party integrations: Native connectors for AWS, Azure, GCP, Snowflake, Datadog, OpenAI, Anthropic, and more.

- Savings tracking & ROI measurement: Measures realised cost reductions, enabling teams to validate impact and refine optimisation.
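To make the unit economics idea concrete, here is a minimal sketch of a cost-per-unit calculation. It is not CloudZero's implementation or API; the function name `cost_per_unit`, the input shapes, and the numbers are all assumptions chosen for illustration.

```python
from collections import defaultdict


def cost_per_unit(billing_rows, usage_by_customer):
    """Return cost per unit of usage for each customer.

    billing_rows: iterable of (customer, cost_in_dollars) pairs (hypothetical shape).
    usage_by_customer: dict mapping customer -> units consumed (e.g. API calls).
    """
    totals = defaultdict(float)
    for customer, cost in billing_rows:
        totals[customer] += cost

    unit_costs = {}
    for customer, total in totals.items():
        units = usage_by_customer.get(customer, 0)
        # Skip customers with no recorded usage to avoid dividing by zero.
        if units > 0:
            unit_costs[customer] = total / units
    return unit_costs


# Illustrative numbers only, not real billing data.
rows = [("acme", 120.0), ("acme", 30.0), ("globex", 50.0)]
usage = {"acme": 3000, "globex": 500}
print(cost_per_unit(rows, usage))
```

The same division works for any unit you can count, such as tokens, API calls, or active users, as long as spend has first been attributed to the right customer or feature.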

Why we selected this tool

We selected CloudZero for cloud infrastructure cost optimisation because it converts raw billing into engineer-usable insights that speed remediation and reduce waste. In tests, it accelerated root-cause discovery of infrastructure issues, misconfigured jobs, runaway model token use, and unexpected inter-region data-transfer charges, letting teams fix problems before bills spike. Its precise attribution plus FinOps enablement shortens discovery-to-remediation cycles and scales with minimal overhead.

Pricing

CloudZero uses a tiered, spend-aligned pricing model with predictable monthly rates, unlimited users, custom dashboards, and monthly FinOps account-manager check-ins. Pricing is available on request, and customers typically see ROI in under three months.

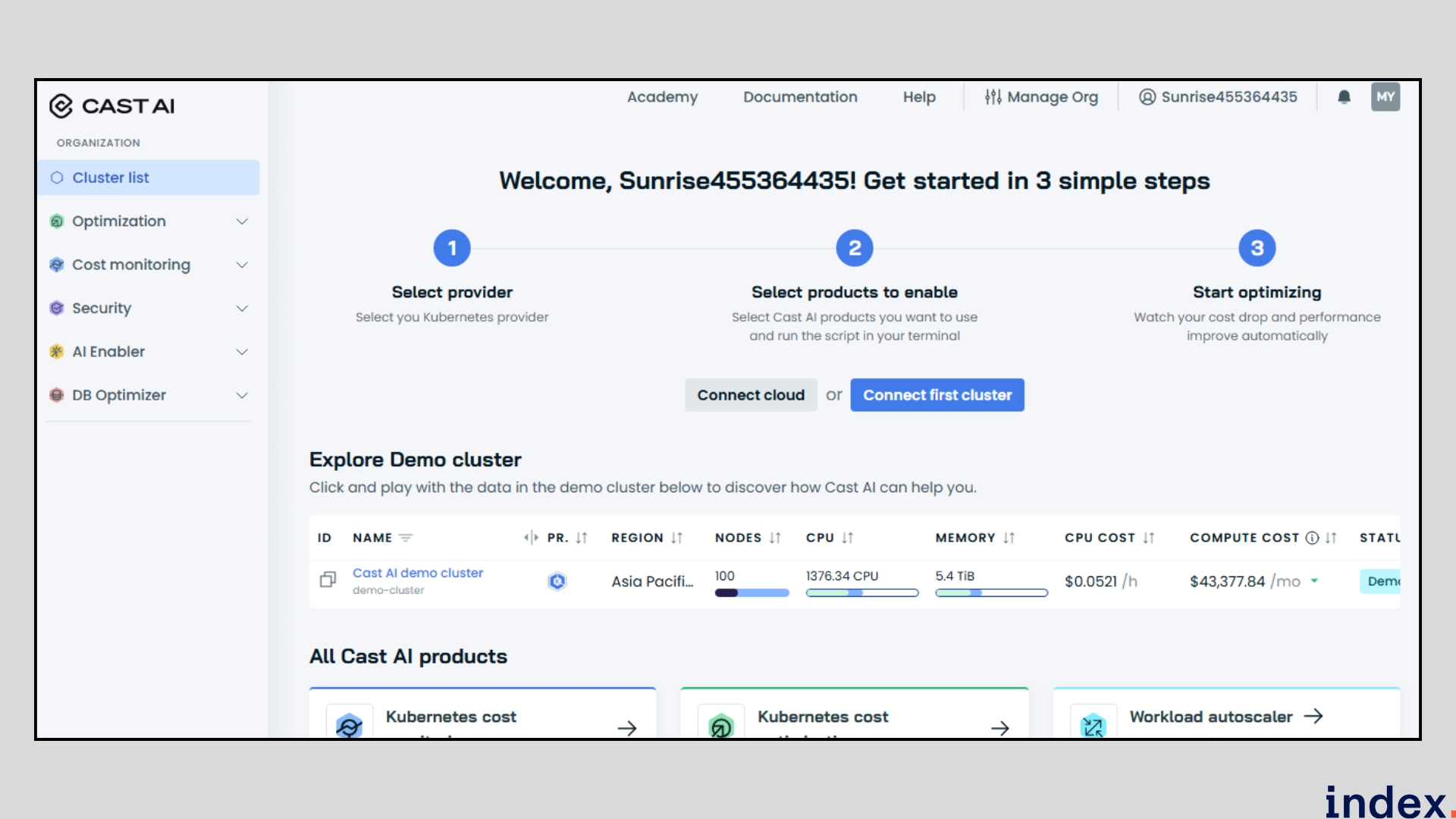

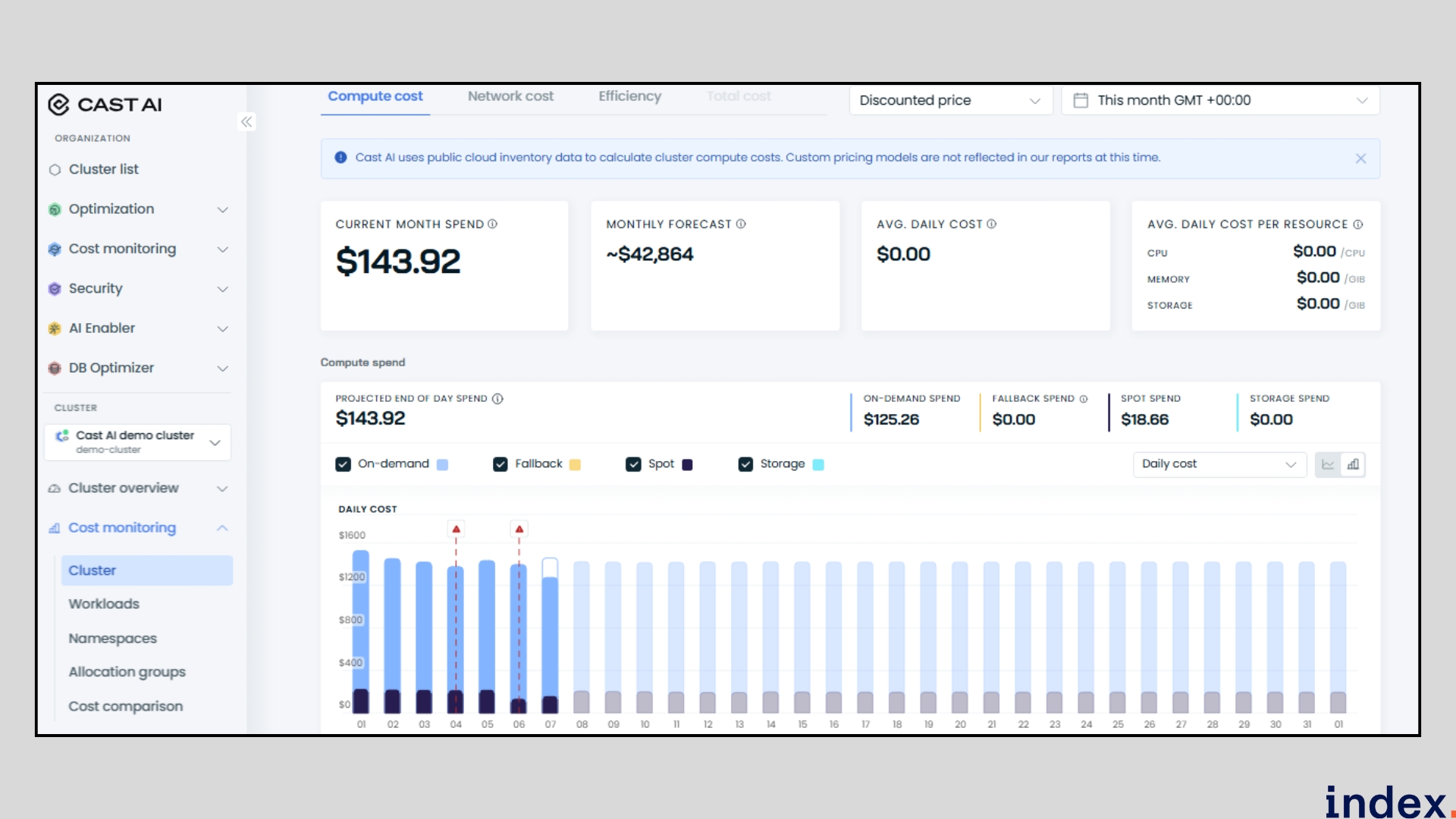

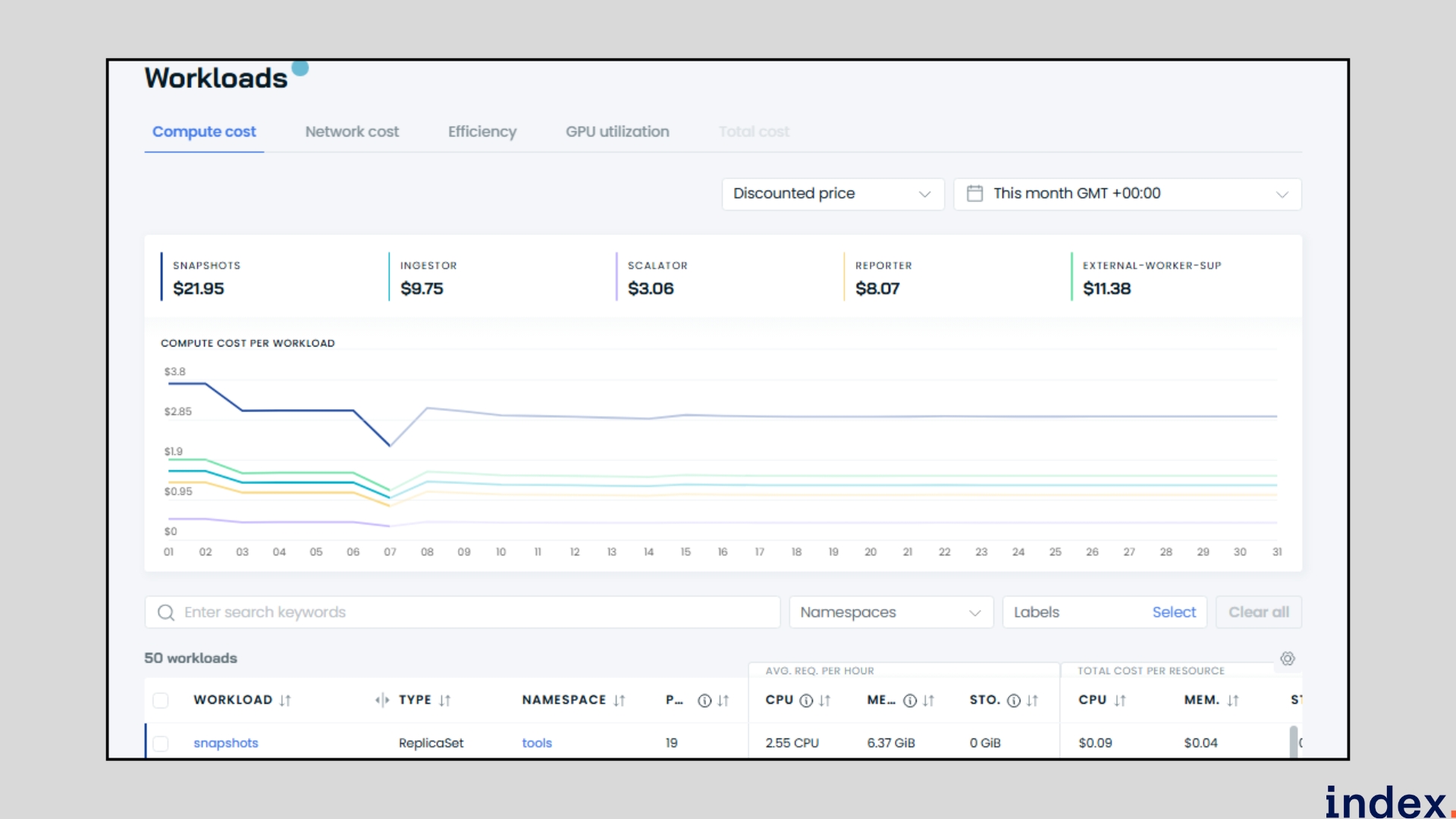

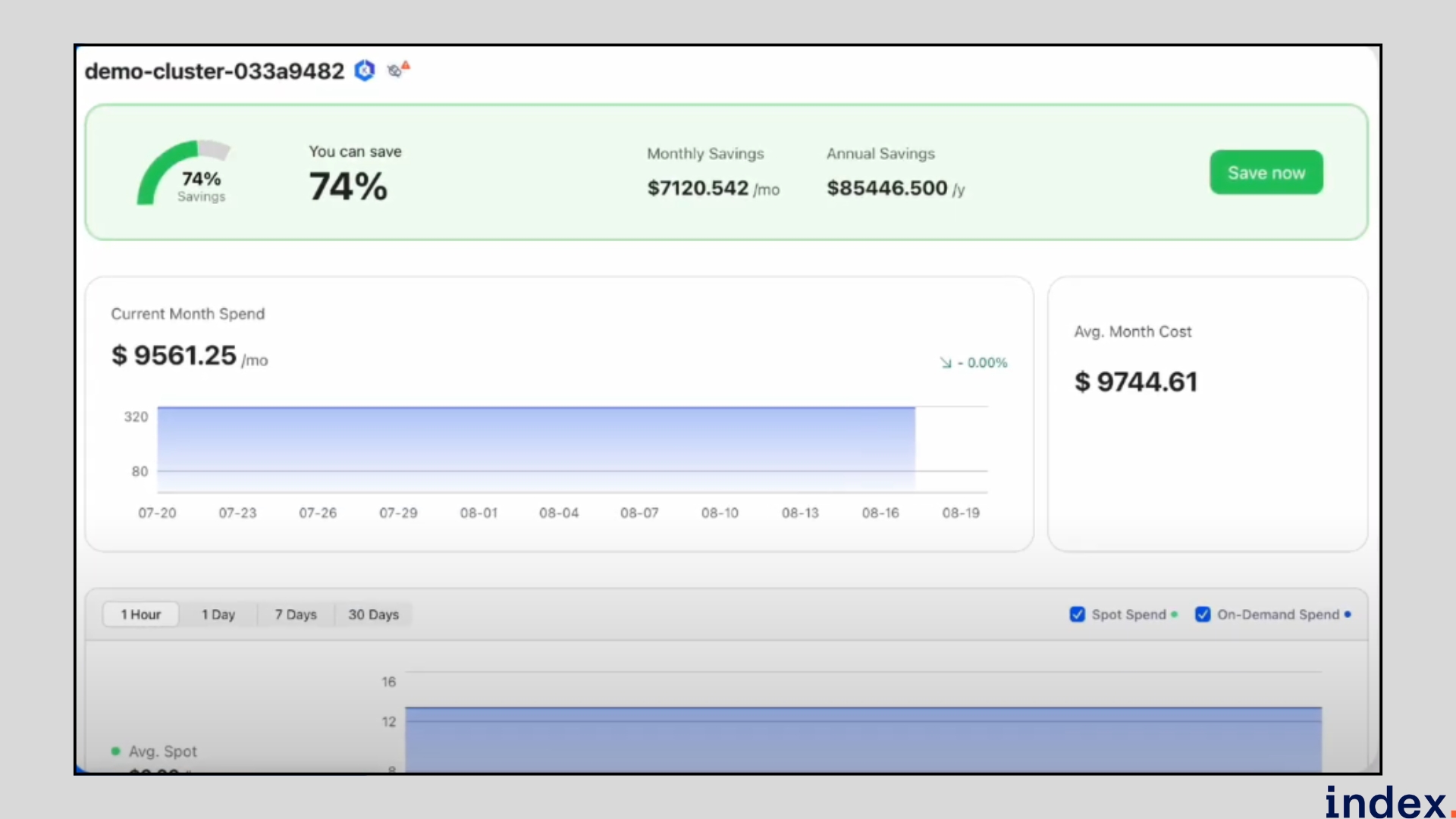

2. Cast AI

Cast AI is an Application Performance Automation platform that focuses on autonomous optimisation of Kubernetes and cloud infrastructure. It continuously analyses cluster telemetry and workload requirements to right-size nodes, bin-pack pods, automate Spot/Preemptible usage, and tune autoscaling, so teams can lower compute costs while preserving (or improving) application performance. Cast AI also provides Kubernetes security checks, LLM and database cost optimisation, and org-level cost monitoring across AWS, GCP, Azure, and on-prem environments.

Key features

- Autonomous rightsizing & bin-packing: Automatically finds the best mix of instance families and sizes, consolidating workloads to reduce idle capacity and maximise node utilisation without manual intervention.

- Spot / preemptible orchestration: Intelligently blends spot/preemptible instances with on-demand capacity to capture deep discounts while minimising interruption risk using automated fallback strategies.

- Real-time cost & utilisation dashboards: Provides live visibility into costs by cluster, namespace, workload, GPU, and network traffic, so teams can spot waste and validate optimisations quickly.

- Automation policy engine: Applies guardrails and policy controls that determine which actions Cast AI can simulate or execute, ensuring optimisations respect performance and compliance requirements.

- LLM & GPU cost insights: Tracks GPU and LLM spending across providers and simulates cheaper provider/instance alternatives to reduce expensive AI inference and training costs.

- Database & caching optimisation: Identifies over-provisioned database replicas and caching inefficiencies, suggesting resizing or configuration changes that cut costs without code changes.

- Savings simulation & ROI forecasting: Simulates proposed optimisations and projects potential savings so teams can evaluate impact before enabling automation.

- Integrations & IaC compatibility: Works with Terraform, Prometheus, Grafana, Helm, CI/CD pipelines, and major cloud providers for frictionless adoption and automated enforcement.
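Bin-packing, mentioned in the features above, can be illustrated with the classic first-fit-decreasing heuristic. This is a textbook sketch, not Cast AI's actual algorithm; it assumes identical nodes and considers CPU only, and the function name and pod sizes are made up for illustration.

```python
def first_fit_decreasing(pod_cpu_requests, node_cpu_capacity):
    """Pack pods onto equal-sized nodes using first-fit decreasing.

    Returns a list of nodes, each a list of the pod CPU requests placed on it.
    """
    nodes = []  # pods placed on each node
    free = []   # remaining CPU on each node
    for cpu in sorted(pod_cpu_requests, reverse=True):
        if cpu > node_cpu_capacity:
            raise ValueError(f"pod needs {cpu} CPU, node only has {node_cpu_capacity}")
        for i, remaining in enumerate(free):
            if cpu <= remaining:
                nodes[i].append(cpu)
                free[i] -= cpu
                break
        else:
            # No existing node has room, so open a new one.
            nodes.append([cpu])
            free.append(node_cpu_capacity - cpu)
    return nodes


pods = [2, 1, 3, 2, 1, 1]  # CPU cores per pod, illustrative
print(first_fit_decreasing(pods, node_cpu_capacity=4))
# → [[3, 1], [2, 2], [1, 1]]
```

Placing the largest pods first tends to leave fewer awkward gaps, which is why consolidation of this kind reduces idle capacity. A production scheduler would also weigh memory, affinity rules, and disruption budgets.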

Why we selected this tool

We selected Cast AI because it turns Kubernetes cost reduction from a manual chore into an automated, low-risk process. It detects waste, tests savings through simulations, and can safely apply optimisations (rightsizing, bin-packing, spot orchestration) under your guardrails. For teams running many clusters or costly GPU/LLM workloads, Cast AI delivers fast, measurable savings while cutting DevOps effort and preserving performance.

Pricing

Cast AI offers a free tier plus usage-based, resource-aligned paid plans; contact sales for a customised enterprise quote.


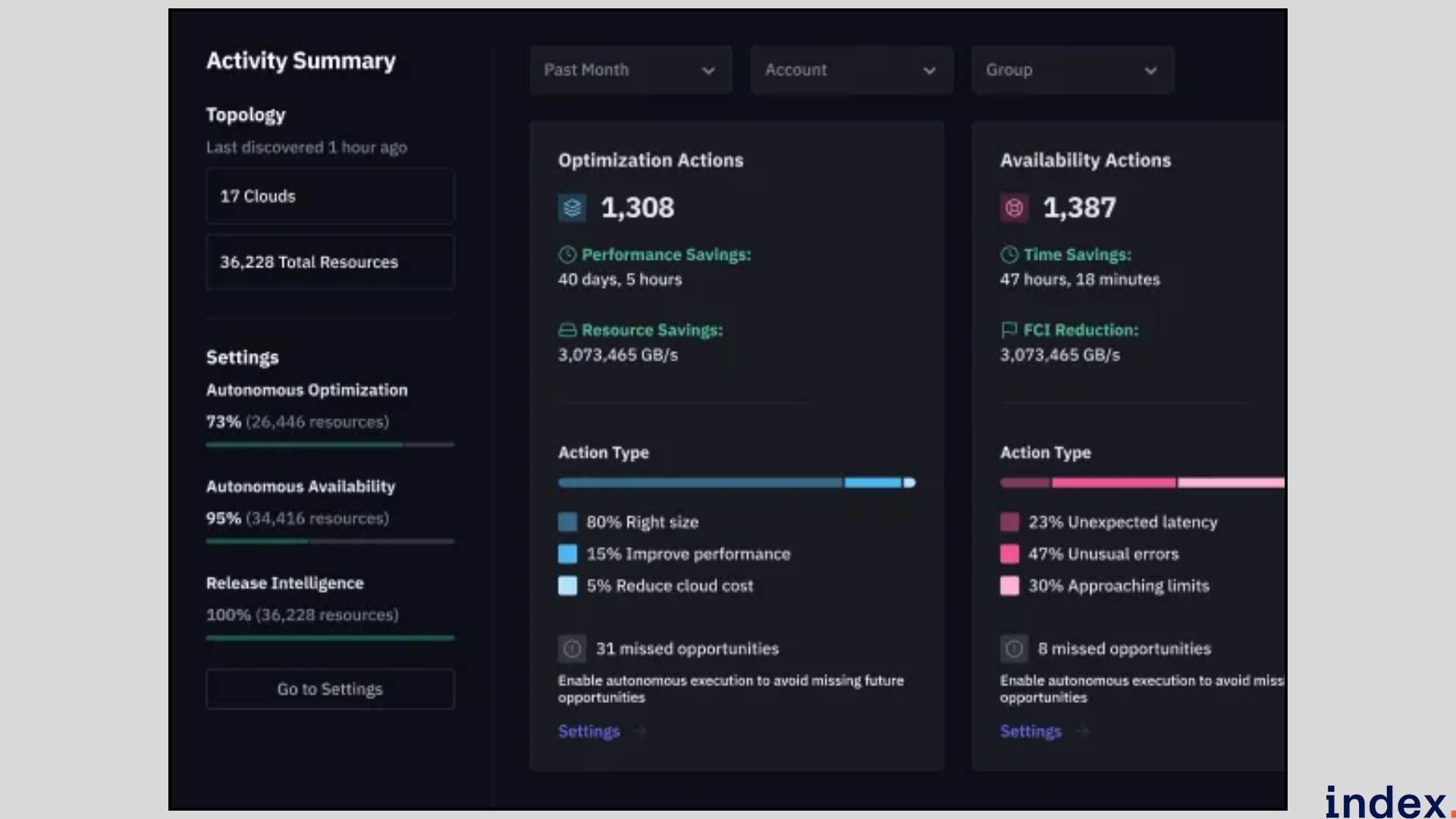

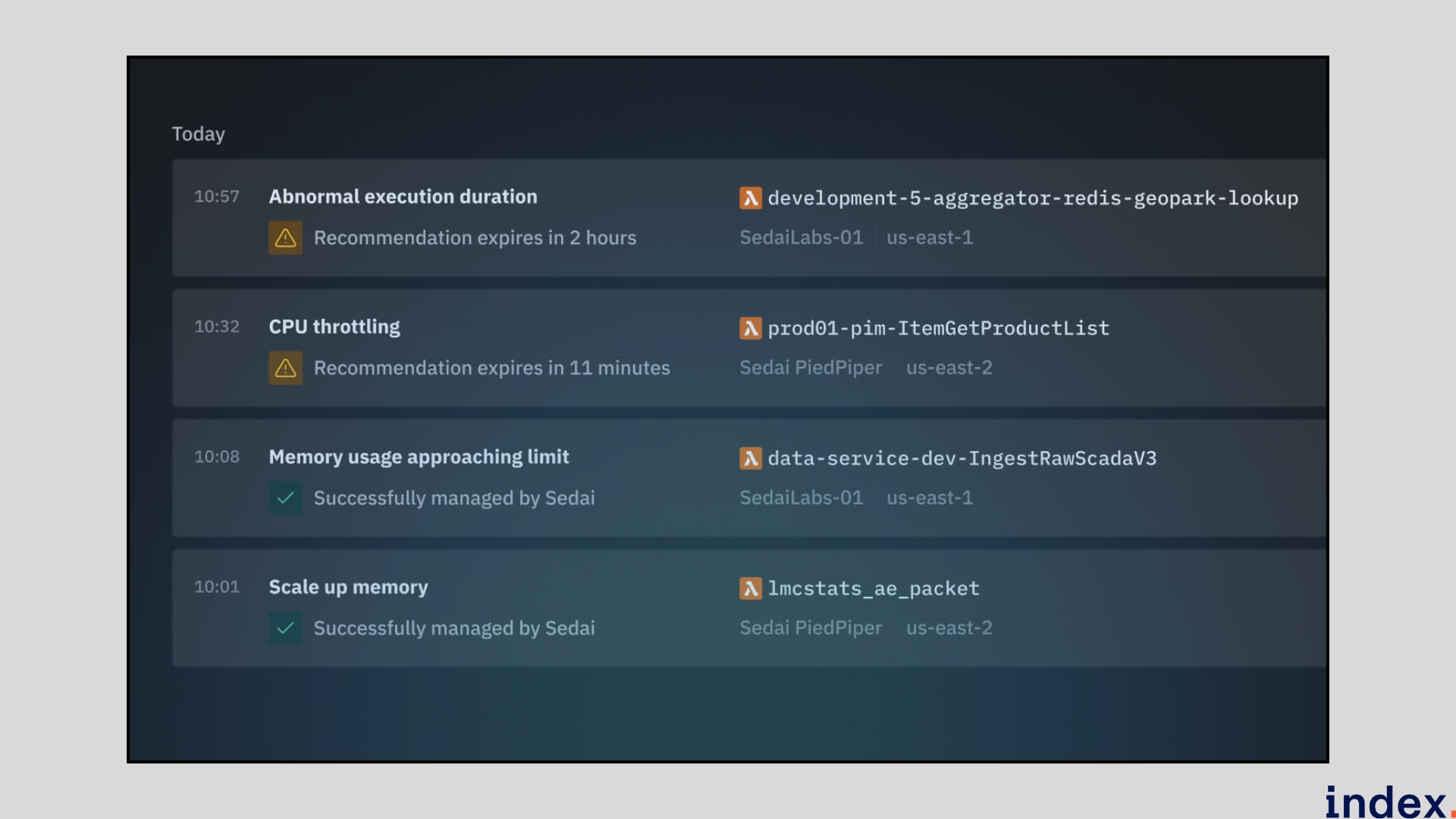

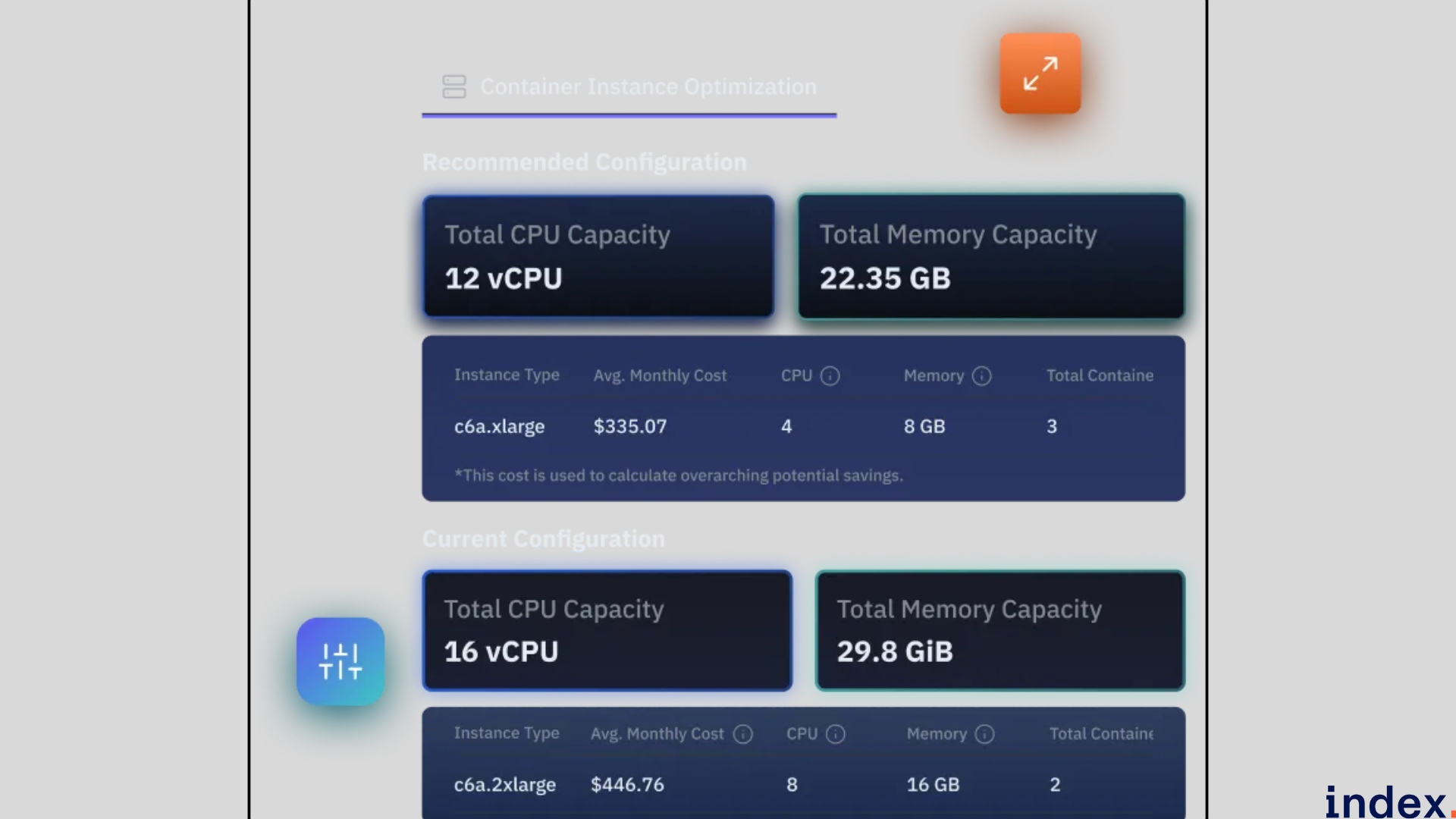

3. Sedai

Sedai is an autonomous cloud optimisation platform that uses reinforcement learning and predictive impact simulations to safely change infrastructure and application configurations in production. It adjusts autoscaler policies, VM/instance SKUs, container CPU/memory requests & limits, and serverless concurrency based on real traffic signals, while enforcing Smart SLOs and automatic rollback if a change risks latency or availability. Because Sedai validates actions against learned behaviour before execution, it aims to capture deep cost savings without introducing operational incidents.

Key features

- Workload rightsizing (vertical & horizontal): Analyses real traffic patterns to set CPU/memory requests & limits and replica counts so containerised apps run with the least necessary compute.

- Predictive autoscaling: Learns seasonality and traffic spikes to control HPA/VPA or cloud autoscalers, reducing idle capacity during quiet periods while ensuring headroom for peaks.

- Infrastructure rightsizing & SKU selection: After optimising workloads, Sedai recommends optimal instance types and node group configurations to maximise performance per dollar.

- Purchasing optimisation (post-engineering): Evaluates Savings Plans / on-demand mixes only after engineering optimisations have been applied, ensuring you buy the right commitments.

- Serverless cost tuning: Finds the optimal memory/CPU and concurrency settings for Lambda (or equivalents) to cut cost without hurting latency.

- Release intelligence: Tracks cost and performance changes per release so teams can detect regressions or drifting resource needs and close the loop with developers.

- Safety-first operation modes (Crawl → Walk → Run): Start with suggested opportunities (Datapilot), execute small supervised changes (Copilot), then enable full autonomous actions (Autopilot) once you’re comfortable.

- FinOps & ROI tracking: Built-in views forecast spend, recommend commitments, and measure realised savings so finance teams can validate payback.

- Wide integrations & multi-cloud support: Connects to CloudWatch/Datadog/Prometheus and supports AWS, GCP, Azure, Kubernetes, serverless, and storage services for holistic optimisation.
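Workload rightsizing from observed usage can be sketched with a simple percentile-plus-headroom rule. This is a simplified illustration, not Sedai's reinforcement-learning approach; the function name, the default percentile, the 20% headroom, and the sample values are all assumptions.

```python
import math


def recommend_cpu_request(samples_millicores, percentile=95, headroom_percent=20):
    """Suggest a CPU request: a usage percentile plus a safety margin."""
    if not samples_millicores:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples_millicores)
    # Nearest-rank percentile: the smallest sample with at least
    # `percentile` percent of all samples at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    baseline = ordered[rank - 1]
    return math.ceil(baseline * (100 + headroom_percent) / 100)


# Illustrative CPU usage samples in millicores, including one spike.
usage = [120, 135, 150, 140, 160, 155, 145, 130, 300, 150]
print(recommend_cpu_request(usage))                  # → 360
print(recommend_cpu_request(usage, percentile=50))   # → 174
```

The choice of percentile is the trade-off: a high percentile protects against spikes but leaves more idle capacity, while a lower one saves more and relies on autoscaling or limits to absorb peaks.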

Why we selected this tool

We selected Sedai because it optimises both application configuration and infrastructure purchases by applying ML-driven rightsizing, predictive autoscaling, and SKU selection in the correct order, ensuring durable, low-risk savings. Its release-aware approach and safety-first Copilot-to-Autopilot workflow let teams validate outcomes in production without incidents, making it ideal for organisations that need aggressive cost reduction while preserving availability and performance.

Pricing

Sedai offers custom, enterprise-tier pricing based on scope and resources managed. You can contact sales for a customized quote and ROI estimate.

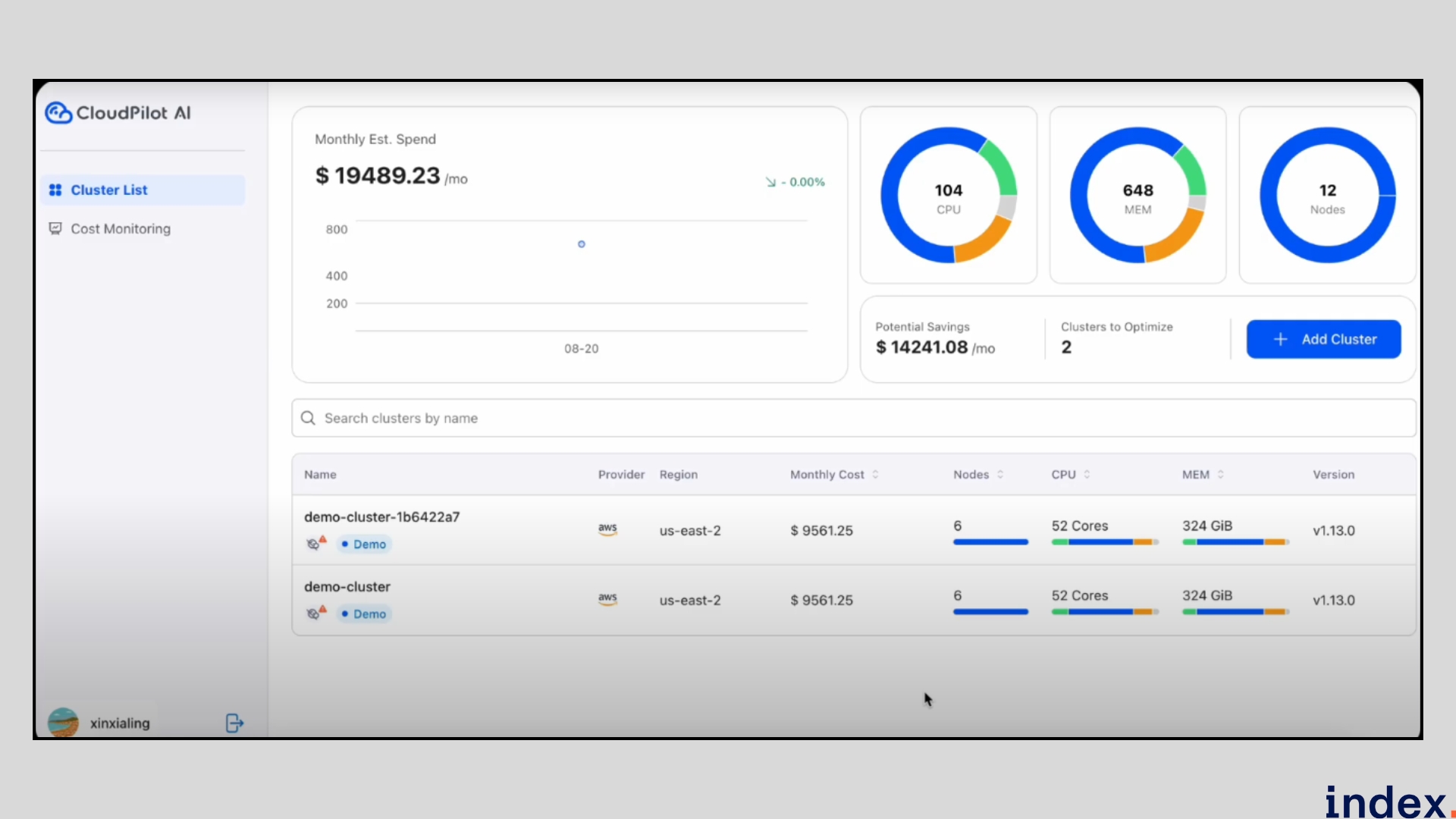

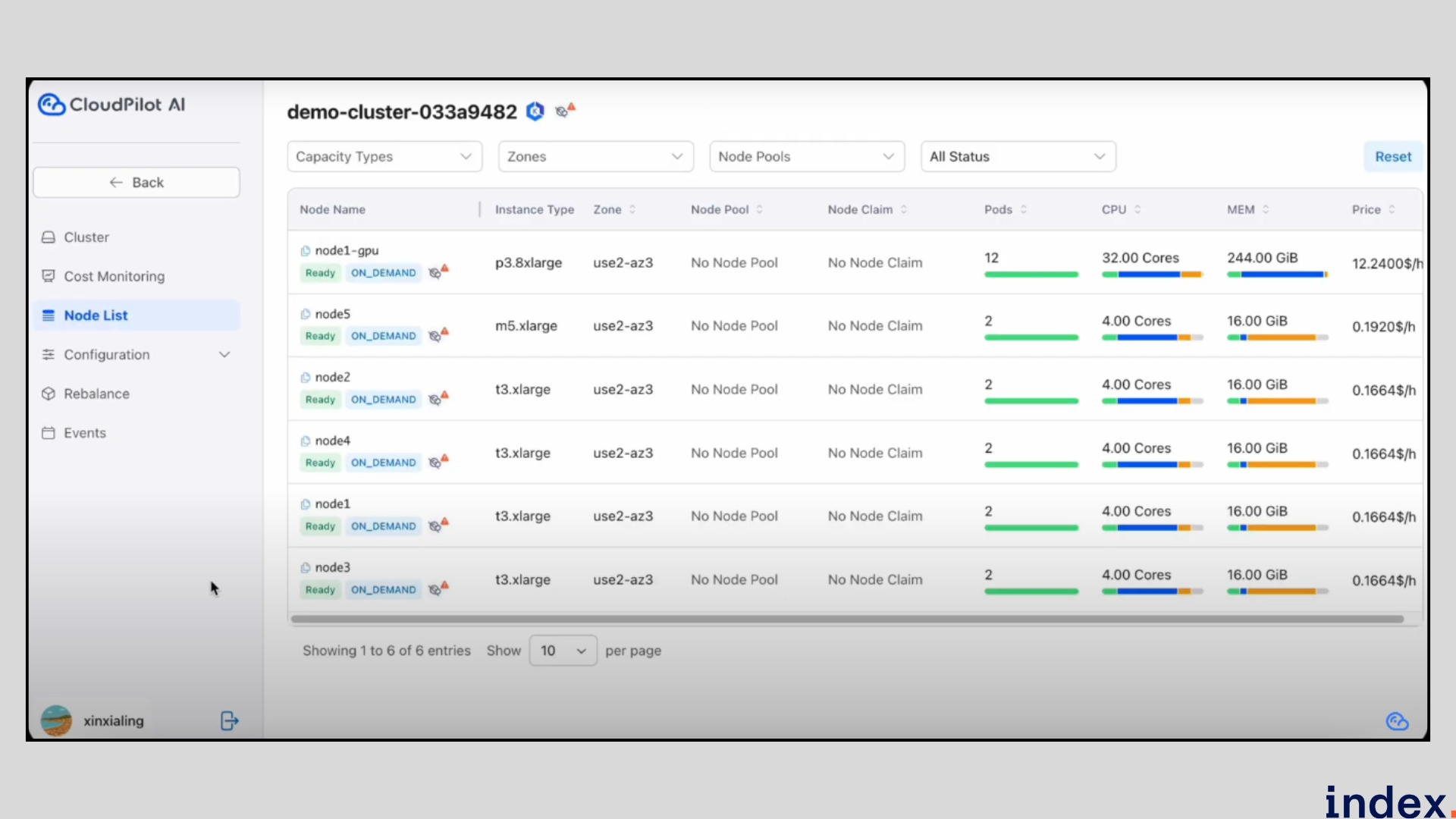

4. CloudPilot AI

CloudPilot AI is an intelligent, Karpenter-powered platform built to make Kubernetes clusters self-optimising for cost, speed, and resilience. It goes beyond simple autoscaling by predicting Spot interruptions before they happen, selecting the most cost-efficient nodes automatically, and continuously tuning cluster resources in real time. The platform provides DevOps teams with full visibility into where every dollar of compute is spent, helping them cut cloud bills by as much as 60–70% while maintaining performance and uptime.

Key features

- Predictive Spot Management: CloudPilot AI forecasts Spot instance interruptions up to 45 minutes in advance, allowing it to proactively reschedule workloads and prevent downtime while maximising savings.

- Autonomous Node Provisioning: The platform intelligently selects, provisions, and consolidates nodes across 800+ instance types and families, ensuring optimal resource allocation and price-performance balance.

- Dynamic Workload Rightsizing: Continuously analyses pod and node utilisation to rightsize CPU, memory, and replica counts, reducing overprovisioning and improving cluster efficiency.

- Event-Level Observability: Provides full visibility into Karpenter’s autoscaling decisions, including node creation, deletion, and rebalancing, to simplify troubleshooting and performance monitoring.

- Real-Time Cluster Cost Insights: Tracks and visualises cost data at node and pod levels, helping teams pinpoint idle resources, inefficiencies, and workload-level cost trends.

- Mixed Scheduling for Reliability: Supports hybrid workload placement across Spot and On-Demand instances, automatically maintaining performance for critical services while achieving maximum savings.
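The effect of mixed Spot and On-Demand scheduling on cost can be estimated with simple arithmetic. The sketch below is not CloudPilot AI's scheduler; the workload names, the `spot_ok` flag, and the per-node prices are invented for illustration and do not reflect real cloud pricing.

```python
def blended_hourly_cost(workloads, on_demand_price, spot_price):
    """Estimate hourly cost when only interruption-tolerant workloads use Spot.

    workloads: list of (name, node_count, spot_ok) tuples (hypothetical shape).
    Prices are per node-hour.
    """
    total = 0.0
    for _name, nodes, spot_ok in workloads:
        price = spot_price if spot_ok else on_demand_price
        total += nodes * price
    return total


workloads = [
    ("checkout-api", 4, False),  # critical service, kept on On-Demand
    ("batch-reports", 6, True),  # tolerates interruption, eligible for Spot
]
# Baseline: price Spot the same as On-Demand to model "everything On-Demand".
all_on_demand = blended_hourly_cost(workloads, on_demand_price=0.5, spot_price=0.5)
mixed = blended_hourly_cost(workloads, on_demand_price=0.5, spot_price=0.25)
print(f"all On-Demand: ${all_on_demand:.2f}/h, mixed: ${mixed:.2f}/h")
# → all On-Demand: $5.00/h, mixed: $3.50/h
```

The savings depend entirely on how much of the fleet can tolerate interruption and on the actual Spot discount, which varies by instance type and region.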

Why we selected this tool

We chose CloudPilot AI because it brings together automation, predictive scaling, and deep cost visibility in a single Kubernetes-native solution. Unlike traditional autoscalers, it doesn’t just react to usage spikes; it anticipates them, optimising both Spot and On-Demand capacity intelligently. Its predictive Spot management, event observability, and node-level cost reporting make it an ideal tool for organisations looking to reduce Kubernetes cloud costs without compromising reliability or performance.

Pricing

CloudPilot AI offers a free Community tier, a pay-as-you-go Standard plan billed per CPU core managed, and a custom Enterprise plan with dedicated onboarding and support.

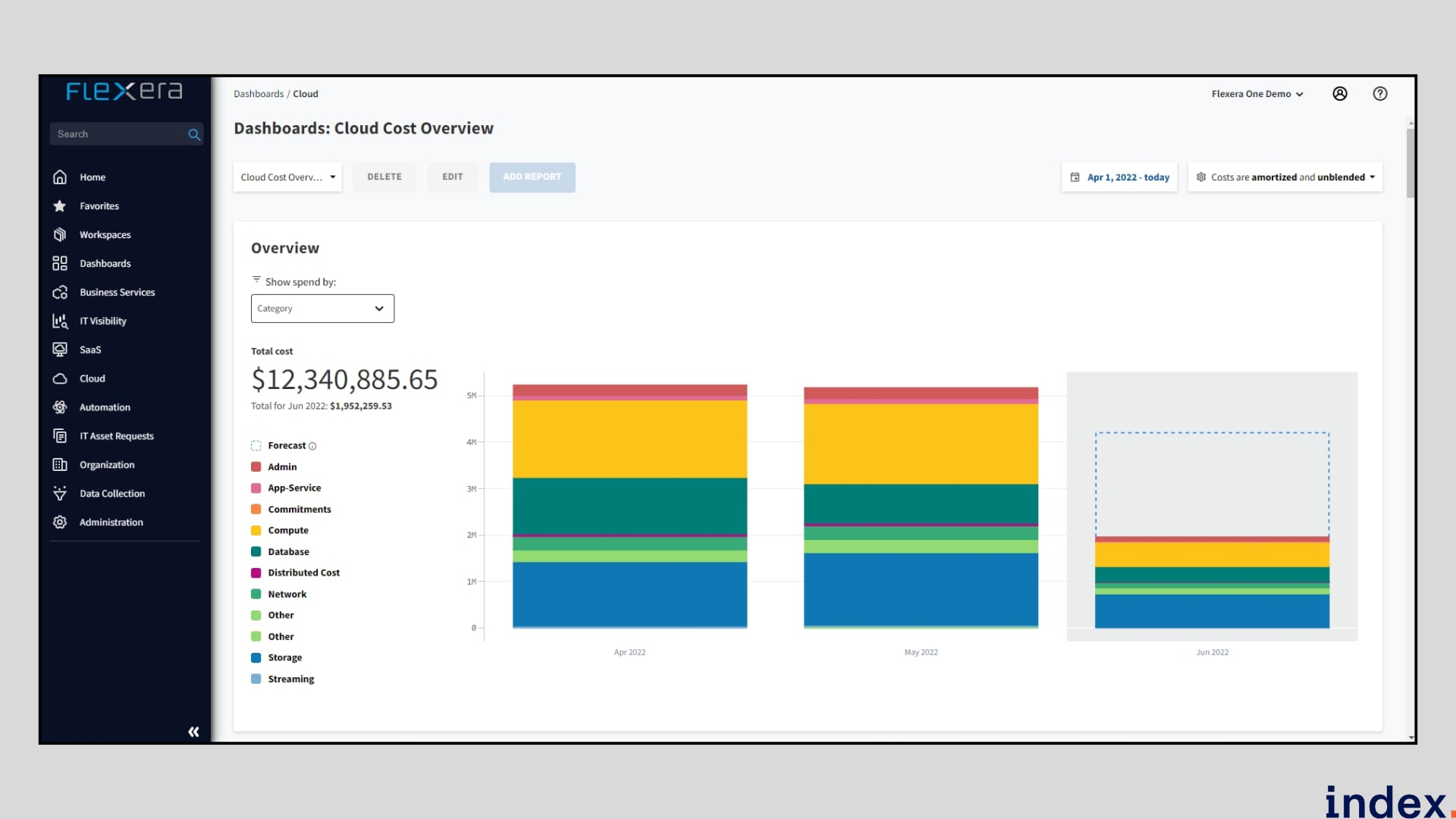

5. Flexera

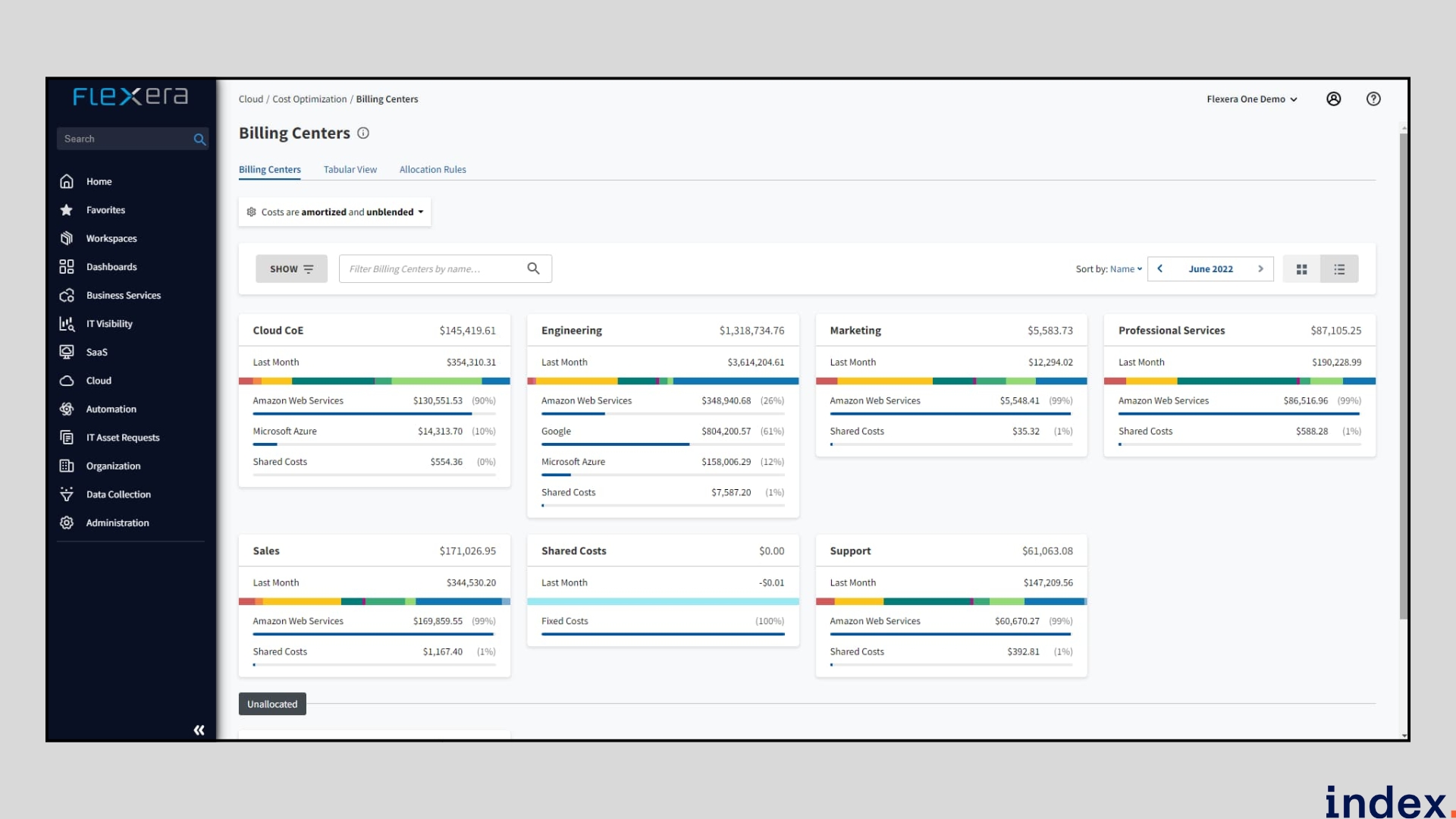

Flexera is a powerful, enterprise-grade cloud cost management and optimisation platform that provides organisations with a unified view of multi-cloud spending across AWS, Azure, GCP, and hybrid infrastructures. It goes beyond cost reporting by enabling automated cost allocation, workload placement, and governance-driven optimisation, ensuring that every dollar spent on cloud resources directly contributes to business outcomes.

Flexera’s FinOps framework helps enterprises align cloud usage with budgets, forecast costs accurately, and standardise cost-saving practices across teams and departments.

Key features

- Automated cost allocation & tagging-based dimensions: Flexera can discover tags in billing data and use them to create structured dimensions, automatically allocating costs to teams, projects, or departments.

- Multi-cloud & hybrid spend visibility: It ingests billing and telemetry across AWS, Azure, GCP, and private cloud services to give a unified view of cloud and IT spend across the enterprise.

- Budgeting, forecasting & optimisation goal tracking: Flexera lets users define cloud budgets, forecast future consumption, and measure progress against optimisation goals.

- Rule-based dimensions and recommendations: Users can define rules (e.g., custom cost dimensions), and the system provides actionable recommendations to reduce waste or reallocate resources.

- APIs for integration & automation: Flexera exposes Cloud Cost Optimisation APIs for billing, budget, cost queries, rule-based dimensions, and recommendations so you can integrate them into your workflows.

- Sustainability & carbon insights: Alongside cost tracking, the platform includes reporting to measure and reduce carbon emissions, so teams can follow the environmental impact of their cloud usage.
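Rule-based cost dimensions can be pictured as an ordered list of tag-matching rules applied to billing line items. The sketch below is a generic illustration and does not use Flexera's APIs; the rule format, the field names `cost` and `tags`, and the sample data are assumptions.

```python
from collections import defaultdict


def allocate_by_rules(line_items, rules, default="unallocated"):
    """Sum cost per dimension value, using the first rule that matches.

    line_items: list of dicts with "cost" and "tags" keys (hypothetical shape).
    rules: ordered list of (tag_key, tag_value, dimension_value) tuples.
    """
    totals = defaultdict(float)
    for item in line_items:
        tags = item.get("tags", {})
        bucket = default
        for key, value, dimension in rules:
            if tags.get(key) == value:
                bucket = dimension
                break
        totals[bucket] += item["cost"]
    return dict(totals)


rules = [
    ("team", "payments", "Payments"),
    ("env", "dev", "Engineering sandbox"),
]
items = [
    {"cost": 40.0, "tags": {"team": "payments", "env": "prod"}},
    {"cost": 10.0, "tags": {"env": "dev"}},
    {"cost": 5.0, "tags": {}},  # untagged spend falls into the default bucket
]
print(allocate_by_rules(items, rules))
# → {'Payments': 40.0, 'Engineering sandbox': 10.0, 'unallocated': 5.0}
```

Tracking the size of the default bucket over time is a useful check on tagging hygiene: the smaller it gets, the more of the bill is attributable to an owner.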

Why we selected this tool

We chose Flexera because it solves one of the toughest FinOps challenges we faced: accurate multi-cloud cost allocation and workload visibility. Most platforms gave partial insights or struggled with shared resource attribution, but Flexera automated this with deep tag discovery and structured dimensions. It also integrated seamlessly with our hybrid environments, allowing us to connect finance, engineering, and procurement data for true cost accountability and optimisation at scale.

Pricing

Flexera offers custom enterprise pricing based on your organisation’s cloud footprint, number of connected accounts, and automation requirements.

How to choose the right AI platform for cloud infrastructure cost optimisation

1. Check for Multi-Cloud and Hybrid Compatibility

Start by confirming that the platform supports all the environments your business uses, including AWS, Azure, Google Cloud, and any private or on-premise systems. A tool that brings all of your cloud spend into one unified dashboard ensures that no part of your infrastructure is overlooked, and optimisation can happen consistently across platforms.

2. Evaluate the Level of Automation

Decide how much control you want to retain over optimisation tasks. Some AI tools only provide recommendations, while others automatically rightsize resources or scale workloads in real time. The ideal platform should offer flexibility, allowing you to start with manual approvals and move to full automation as your team builds confidence.

3. Look for Accurate Cost Allocation and Governance

Cloud optimization is most effective when every dollar spent is clearly linked to a team, product, or project. Choose a platform that can automatically tag and allocate costs to business units, track budget performance, and produce financial reports your FinOps and engineering teams can both rely on.

4. Prioritise Predictive and AI-Driven Insights

A strong AI platform should not only tell you where money was spent but also predict where costs are heading. Look for capabilities like anomaly detection, trend forecasting, and “what-if” simulations. These insights help your team plan proactively rather than reactively.
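As a rough picture of what spend anomaly detection does, the sketch below flags days whose spend sits far above a trailing window's average. Commercial platforms use more sophisticated models; the 7-day window, the threshold of 3 standard deviations, and the sample figures are assumptions for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(daily_spend, window=7, threshold=3.0):
    """Return indexes of days whose spend is far above the trailing window's mean."""
    anomalies = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # A perfectly flat history has sigma == 0, so no z-score can be computed.
        if sigma > 0 and (daily_spend[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Illustrative daily spend in dollars; day 8 contains a spike.
spend = [100, 102, 98, 101, 99, 103, 97, 100, 180, 101]
print(flag_anomalies(spend))  # → [8]
```

When evaluating a platform, it is worth asking how it handles seasonality and planned launches, since a simple rule like this one would flag both as anomalies.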

5. Verify Integration with Your Existing Stack

An effective optimisation platform should easily connect to your existing ecosystem: monitoring tools, CI/CD pipelines, ticketing systems, and reporting dashboards. When cost insights and performance metrics live in the same place, engineers can act faster and make better decisions.

6. Assess Security and Compliance Standards

As these platforms often access sensitive cloud and financial data, you must ensure they meet enterprise-grade security requirements. Role-based access, single sign-on, encryption, and detailed audit logs are essential to maintain data integrity and compliance.

7. Measure ROI and Time to Value

Finally, focus on platforms that deliver measurable savings quickly. The best AI-powered solutions start showing measurable results within weeks of deployment. Ask for case studies, customer benchmarks, and a pilot plan so you can validate both cost savings and operational improvements before scaling.
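A pilot's payback can be checked with back-of-the-envelope arithmetic. The sketch below assumes a flat monthly platform fee and a one-off setup cost; the function name and all figures are hypothetical, and real vendor pricing is often tied to spend or resources managed.

```python
def pilot_roi(monthly_savings, monthly_fee, one_off_setup_cost):
    """Return (net monthly benefit, payback period in months or None)."""
    net_monthly = monthly_savings - monthly_fee
    if net_monthly <= 0:
        # The platform costs at least as much as it saves, so it never pays back.
        return net_monthly, None
    return net_monthly, one_off_setup_cost / net_monthly


net, payback = pilot_roi(monthly_savings=12_000, monthly_fee=4_000, one_off_setup_cost=16_000)
print(f"net benefit: ${net}/month, payback: {payback:.1f} months")
# → net benefit: $8000/month, payback: 2.0 months
```

Running this with the savings you actually measured during the pilot, rather than the vendor's projection, gives a more honest basis for the decision to scale.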


Final words

Managing cloud spend doesn’t have to be a guessing game. The right AI platform gives your team visibility, automation, and control, all in one place. Whether your goal is to fine-tune Kubernetes clusters, optimize multi-cloud costs, or build accountability across teams, each of these tools brings something unique to the table.

You can start with a small project, track your savings, and scale what works. Smart optimisation always begins with understanding your cloud, not just cutting it.

Work on high-impact cloud and AI projects with Index.dev

Leverage cutting-edge tools to optimize cloud infrastructure, reduce costs, and accelerate deployments, while building your skills, growing your career, and enjoying fully remote flexibility with top compensation.

Looking to scale your cloud infrastructure with top-tier talent?

Hire vetted Cloud Engineers, DevOps experts, and AI-driven infrastructure specialists through Index.dev — ready to build, optimize, and manage your cloud environments for performance, reliability, and cost efficiency.

Want to take your cloud strategy further?

Explore more practical guides on cloud development, optimization, and talent-driven solutions: learn about the 10 best cloud computing programming languages, follow the 5 essential steps for a successful cloud data migration, or see how Index.dev helped Genemod amplify R&D cloud solutions with high-performing talent. Browse our full collection of cloud and AI-focused articles and uncover insights from Index.dev experts to level up your infrastructure, workflows, and team impact.