Only about one in four AI initiatives delivers expected ROI, and fewer than 20% have been fully scaled across the enterprise. You can throw millions at the problem and still end up with a proof of concept that never ships.

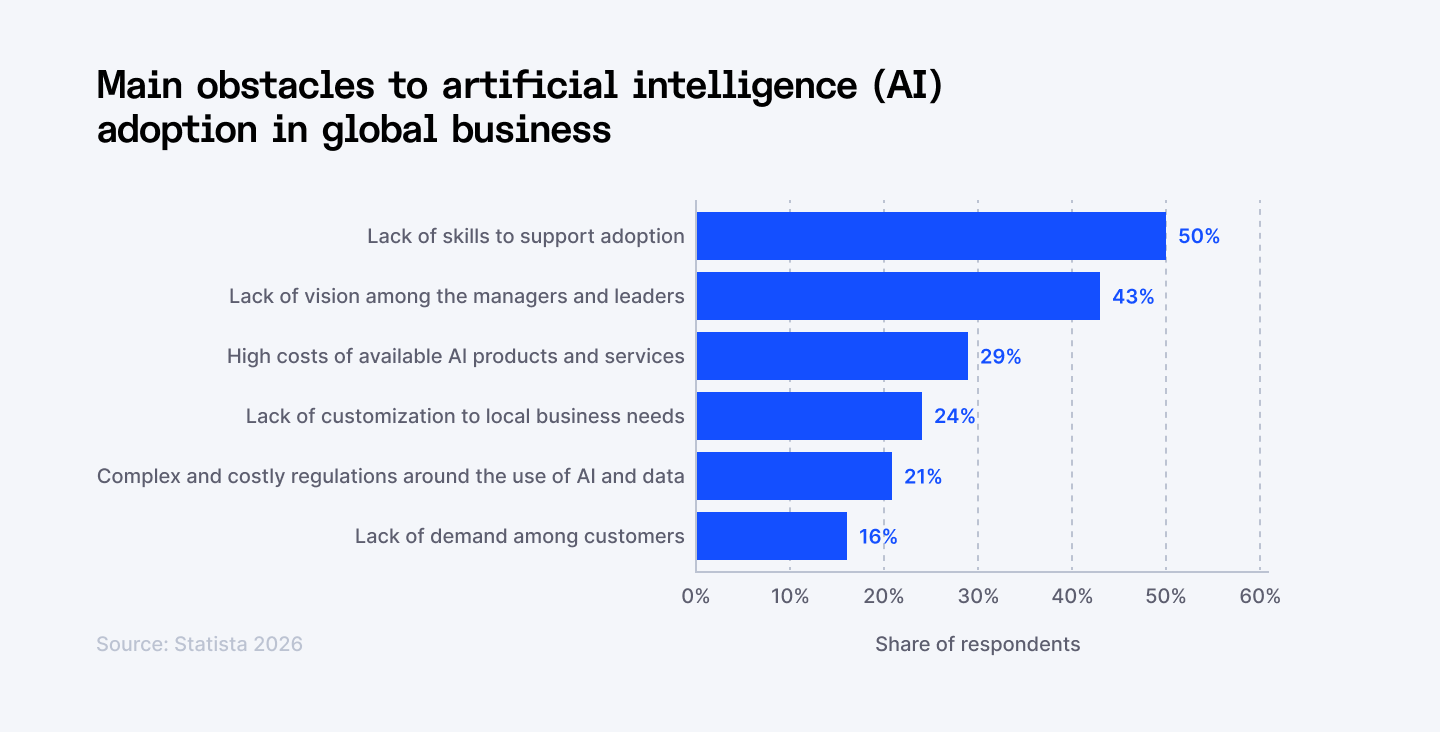

Source: Statista

You probably have the budget. You definitely have the interest. But as Gartner recently pointed out, only about 28% of AI use cases are hitting their ROI targets. We are seeing a massive value gap where companies are busy doing AI, but not necessarily profiting from it.

The truth is, 85% of these initiatives won't fail because the models aren't smart enough. They’ll fail because the organizational scaffolding (your data, your culture, and your workflows) isn't built to support them. And the frustrating part is that most of these failures have nothing to do with the technology itself.

Here is the breakdown of the big six AI adoption hurdles standing in your way and, more importantly, how you can clear them to unlock tangible results.

Whether you're building AI-enabled products or scaling your engineering capacity, we provide the technical depth and managed delivery support to keep you on track. Scale your AI capacity today →

1. AI skills gap persists

You can buy the best AI tools on the market. Without the right people to deploy them, you have expensive software collecting dust.

AI has jumped from the 6th most scarce tech skill to number one in just 18 months — the steepest surge in any skills shortage recorded in over 15 years. Global demand for AI talent now outpaces supply 3.2 to 1. There are over 1.6 million open AI positions worldwide, with only around 518,000 qualified candidates to fill them. And 85% of tech executives have already postponed or slowed down major AI projects specifically because they lacked the staff to execute.

The real issue is that most organizations are trying to build AI-powered operations with teams that were never trained to think that way. AI-ready employees — people who use AI daily, integrate it into their workflows, and push its limits — are a completely different profile from traditional tech talent. They're multipliers. One AI-fluent engineer can outperform a team of five who aren't.

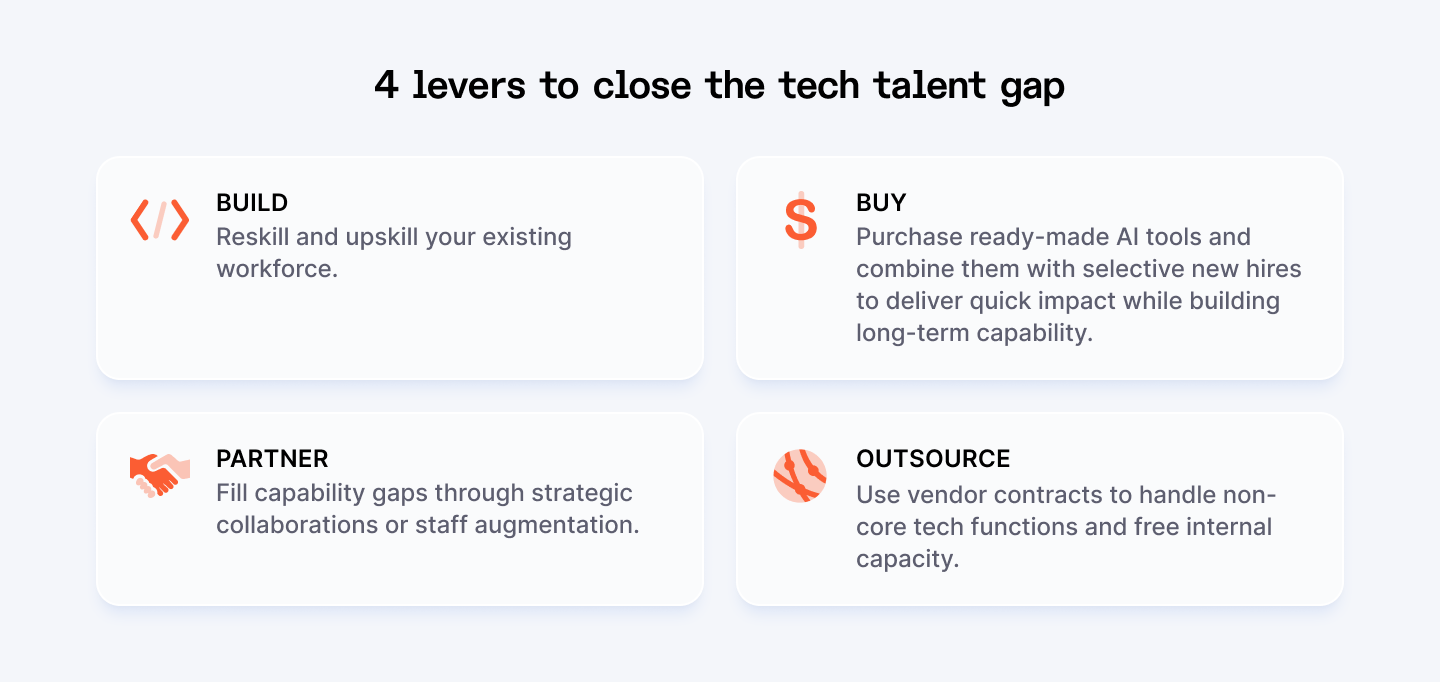

How to close the gap

- Upskill before you hire. Companies that address AI talent shortages effectively achieve 2.3x faster AI adoption and 67% higher AI ROI than those that don't. Start with the team you already have. Run structured AI training programs. Make AI proficiency a core competency.

- Identify your AI champions. 77% of employees using AI are either already champions or have the potential to become one. These people exist in your organization right now. Find them, give them ownership, and let them pull others along.

- Rethink your hiring criteria. Credentials matter less than capability. Skills-based hiring, remote-first roles, and global talent pools can dramatically expand your reach. Only 46% of organizations currently integrate workforce planning into their AI roadmaps — which means most are hiring reactively, not strategically. Map the AI roles you'll need 12 months from now and start building toward them today.

2. Budget constraints remain critical

Average monthly enterprise AI spending hit $85,521 in 2025, a 36% jump from the year before, and the share of organizations spending over $100,000 per month has more than doubled. That's just the tools. Add infrastructure, integration, training, governance, and ongoing maintenance — and the number looks very different from what ended up in your original business case.

What starts as a contained proof of concept at $5,000 per month can balloon to $50,000+ per month in production once you factor in organizational overhead and the complexity of running AI across multiple departments. And despite all this spending, only 51% of organizations can confidently evaluate whether their AI investments are delivering ROI.

That's a strategy problem. Back in 2023, nearly every company planned to increase AI budgets. By 2024, that number had nearly halved, a clear sign that leaders started asking harder questions once the invoices arrived. Budget pressure is real at every level, not just for startups.

How to control the spend

- Start with ROI before you start with tools. Don't greenlight AI investments that can't be tied to a measurable business outcome: cost reduction, revenue impact, time saved. Organizations that commit 20%+ of digital budgets to AI and invest 70% of those resources in people and processes, not just technology, are the ones seeing meaningful returns.

- Audit what you already have. Most enterprises are over-tooled, and the tools they pay for are under-utilized. Before buying another platform, map what's being used and where you're paying for redundancy.

- Treat AI spend like a product, not a project. Projects have budgets that close. Products have owners, metrics, and iteration cycles. Assign ownership of AI initiatives with clear KPIs, track costs in real time, and kill what isn't working fast.
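To make "owners, metrics, and kill what isn't working" concrete, here is a minimal sketch of a portfolio review. The `Initiative` fields, the example figures, and the `min_roi` threshold are all illustrative assumptions, not a prescribed method; your own cost and value definitions will differ.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """One AI initiative with a named owner and measurable monthly figures (hypothetical fields)."""
    name: str
    owner: str
    monthly_cost: float   # all-in cost: tools, infrastructure, people
    monthly_value: float  # measured benefit in dollars: savings, revenue, time saved

    def __post_init__(self):
        if self.monthly_cost <= 0:
            raise ValueError("monthly_cost must be positive")

    @property
    def roi(self) -> float:
        """Simple monthly ROI: (value - cost) / cost."""
        return (self.monthly_value - self.monthly_cost) / self.monthly_cost


def review(initiatives, min_roi=0.0):
    """Split initiatives into those to keep and those to stop or rework."""
    keep = [i for i in initiatives if i.roi >= min_roi]
    stop = [i for i in initiatives if i.roi < min_roi]
    return keep, stop


# Illustrative numbers only.
portfolio = [
    Initiative("support-chatbot", "cx-lead", 50_000, 80_000),
    Initiative("contract-summarizer", "legal-ops", 5_000, 3_000),
]
keep, stop = review(portfolio)
for item in stop:
    print(f"Review or stop: {item.name} (owner: {item.owner}, ROI: {item.roi:.0%})")
```

The point of the sketch is the shape, not the formula: every initiative has an owner and a number, and the review runs on a schedule rather than at budget close.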

3. Data quality and integration issues

You can deploy the most sophisticated AI model on the market. If the data feeding it is siloed, outdated, or incomplete, the outputs will be unreliable. In business-critical contexts, unreliable is dangerous.

73% of enterprise data leaders identify data quality and completeness as the primary barrier to AI success, ranking it above model accuracy, computing costs, and even talent shortages. 47% of enterprise AI users made at least one major business decision based on hallucinated content. That's not a fringe problem. That's nearly half the room.

Most enterprises are running AI tools on top of fragmented infrastructure: legacy systems, disconnected data pipelines, and inconsistent data standards across departments. The AI doesn't fail because it's poorly built. It fails because the foundation underneath it is broken. And the hallucination problem is real and persistent. Knowledge workers now spend an average of 4.3 hours per week fact-checking AI outputs, which quietly eats into the productivity gains you were promised in the first place.

How to fix it

- Treat data as a prerequisite. Before you scale any AI initiative, audit your data — where it lives, who owns it, how current it is, and whether it's accessible across systems. You don't need perfect data to start, but you need governed data. Assign ownership, establish quality standards, and build pipelines that feed your AI clean, consistent inputs.

- Use RAG to ground your AI in reality. Retrieval-Augmented Generation (RAG) connects your AI to your actual, verified knowledge bases instead of relying purely on what the model was trained on. RAG combined with guardrails reduces hallucinations by 96% compared to standalone language models. For any use case touching customers, legal, finance, or operations, this is non-negotiable.

- Build human-in-the-loop checkpoints for high-stakes outputs. AI should accelerate decisions, not replace the judgment behind them. Define clearly where human sign-off is required, especially in regulated industries or customer-facing workflows, and make oversight a structured part of your AI operating model.
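The grounding and human-in-the-loop ideas above can be sketched together. This is a toy illustration under loud assumptions: the `KNOWLEDGE_BASE` contents are invented, word overlap stands in for the vector search a real RAG system would use, and the `min_overlap` threshold is arbitrary. It shows the control flow only: retrieve verified sources, build a prompt restricted to them, and route to a person when nothing relevant is found.

```python
import re

# Hypothetical verified knowledge base; in practice this would be your governed documents.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are issued within 14 days of purchase for annual plans.",
    "sla": "Priority support tickets receive a first response within 4 hours.",
}


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, top_k=1):
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in KNOWLEDGE_BASE.items()]
    scored.sort(reverse=True)
    return scored[:top_k]


def build_grounded_prompt(question, min_overlap=2):
    """Return a source-restricted prompt, or flag the question for human review."""
    hits = [hit for hit in retrieve(question) if hit[0] >= min_overlap]
    if not hits:
        # No supporting source found: do not let the model answer from memory alone.
        return {"needs_human_review": True, "prompt": None, "sources": []}
    context = "\n".join(f"[{doc_id}] {text}" for _, doc_id, text in hits)
    prompt = (
        "Answer using only the sources below and cite the source id.\n"
        f"{context}\n\nQuestion: {question}"
    )
    return {"needs_human_review": False, "prompt": prompt, "sources": [h[1] for h in hits]}


grounded = build_grounded_prompt("How many days do refunds take for annual plans?")
ungrounded = build_grounded_prompt("What is our parental leave policy?")
```

Here `grounded` carries a prompt tied to the `refund-policy` source, while `ungrounded` comes back flagged for review because no document supports it.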

4. Deploying AI without strong cybersecurity

The faster you scale AI, the faster your attack surface grows, and most companies are scaling faster than they're securing.

87% of organizations were targeted by AI-powered cyberattacks in the last 12 months. And attackers are no longer just exploiting your systems. They're exploiting your AI. 13% of organizations have already reported breaches of AI models or applications, and 97% of those organizations lacked proper AI access controls. AI-assisted attacks have increased 72% since 2024, with phishing surging 1,265% driven by generative tools.

85% of organizations have experienced at least one deepfake-related incident: cloned executive voices, fabricated wire transfer approvals, synthetic identities. These are not edge cases anymore.

The gap between AI adoption and AI security governance is where attackers are operating right now. IBM's research shows that organizations using AI and automation extensively in their security operations saved an average of $1.9 million per breach and reduced the breach lifecycle by 80 days.

How to close the gap

- Run security risk assessments. Every new AI model, integration, or third-party tool you add needs to be assessed for threat vectors before it goes live — data poisoning, prompt injection, model theft, adversarial inputs. Treat your AI systems with the same scrutiny you'd apply to any critical infrastructure.

- Build AI-specific incident response protocols. Generic cybersecurity playbooks weren't designed for AI threats. When Samsung engineers accidentally leaked proprietary source code through ChatGPT prompts in 2023, the company had no AI-specific protocol in place to contain it — a wake-up call that led to strict internal AI usage policies and purpose-built guardrails. Your response protocols need to explicitly cover model security, prompt logging, adversarial attack detection, and shadow AI.

- Deploy zero-trust architecture across your entire AI stack. Zero-trust architecture adoption is accelerating, strengthened by AI analytics for adaptive network segmentation and policy enforcement. Verify everything. Assume nothing is safe by default — not users, not models, not internal systems. Enforce least-privilege access, encrypt data in transit and at rest, and monitor AI-facilitated transactions continuously.
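One small piece of an AI-specific protocol is screening prompts before they leave your network and logging the decision. The sketch below is an assumption-heavy illustration: the regex patterns, the `screen_prompt` and `submit` helpers, and the in-memory `audit_log` are all hypothetical, and a real deployment would rely on your own data classifiers and logging stack rather than three regular expressions.

```python
import re

# Hypothetical patterns for illustration; real classifiers would be far more thorough.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "secret_key": re.compile(r"\b(?:api|secret)[_-]?key\s*[=:]\s*\S+", re.IGNORECASE),
    "confidential_marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}

audit_log = []  # stand-in for a real, access-controlled log store


def screen_prompt(prompt):
    """Return whether the prompt may be sent externally and which patterns matched."""
    findings = sorted(name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(prompt))
    return {"allowed": not findings, "findings": findings}


def submit(user, prompt):
    """Screen a prompt and record the decision without storing the sensitive text itself."""
    result = screen_prompt(prompt)
    audit_log.append({"user": user, "allowed": result["allowed"], "findings": result["findings"]})
    return result["allowed"]


ok = submit("dev-1", "Summarize this function: def add(a, b): return a + b")
blocked = submit("dev-2", "Debug this: API_KEY = sk-12345 in CONFIDENTIAL module")
```

Logging the finding types rather than the raw prompt keeps the audit trail useful for incident response without turning the log into a second copy of the leaked data.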

5. Failing to develop internal AI talent

Buying AI tools is easy. Building a workforce that can use them well is where most companies fall short.

59% of enterprise leaders say their organization has an AI skills gap, even though most are already investing in some form of AI training. The problem is that the training isn't working. Only 35% of organizations have a mature, workforce-wide AI upskilling program.

The cost of this gap is measurable. Organizations with a mature, enterprise-wide AI upskilling program are nearly twice as likely to report significant positive AI ROI compared to those without one. The tools don't drive ROI. The people using them do. BCG's analysis shows that 70% of AI value comes from workforce changes, not the technology itself.

How to build AI capability

- Make AI literacy non-negotiable at every level. Not just for engineers and data scientists — for product managers, operations leads, finance teams, and yes, executives. When employers provide structured AI training, adoption jumps to 76% compared to just 25% without it.

- Replace passive learning with applied practice. Most corporate AI training fails for the same reason: employees watch videos and never use what they learned. The most effective programs are hands-on, workflow-embedded, and measured against real outcomes. Build role-specific learning paths, run live AI sprints on real business problems, and measure skill progression the same way you'd measure any other business metric.

- Identify high-potential AI users within your organization. Future-built companies are four times more likely to have structured AI learning programs and carve out protected time for employees to develop these skills. Identify high-potential employees across functions who are already leaning into AI, give them resources and visibility, and let them pull the rest of the organization forward.

6. Lack of an AI usage policy and privacy safeguards

Despite 90% of enterprises using AI in daily operations, only 18% have fully implemented governance frameworks. Only one in five companies has a mature governance model for autonomous AI agents, even as agentic AI is moving into production across the enterprise.

The regulatory environment is tightening fast. The EU AI Act is now partially enforced, with full requirements for high-risk AI systems kicking in by August 2026, carrying fines of up to €35 million or 7% of global turnover for violations. Gartner estimates that over 60% of enterprises will require formal AI governance frameworks by 2026 to meet rising security, risk, and compliance demands.

Privacy exposure is the other live wire. Shadow AI — employees using unapproved tools outside IT oversight — is already creating violations companies don't even know about. An employee at a healthcare organization pasting patient notes into a personal ChatGPT account has just created a HIPAA violation, regardless of intent.

How to take back control

- Ship an AI usage policy before you ship another AI tool. This doesn't need to be a 40-page legal document. It needs to clearly define what's approved, what's off-limits, what data can and can't enter external AI systems, and who owns accountability. Every team — legal, HR, product, sales — should have role-specific guidance.

- Get visibility on your shadow AI. You cannot govern what you cannot see. Audit the AI tools actually in use across your organization, not just the ones IT approved, but the ones employees are using anyway. Classify each by risk tier: what data does it touch, who owns it, what are the retention terms?

- Pivot to Small Language Models (SLMs) for privacy. To solve the privacy-vs-power trade-off, move your most sensitive operations to on-prem or VPC-hosted Small Language Models. These models are trained on your specific, cleaned data and never ‘phone home.’ Not only does this solve the CCPA/GDPR compliance headache, but it also slashes latency and costs by 30-50% compared to using massive, general-purpose frontier models.
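The shadow AI audit described above comes down to an inventory with a risk tier per tool. A minimal sketch follows, assuming three data categories and a simple gap count; the `AITool` fields, the tier rules, and the example tools are hypothetical and should be replaced by your own classification scheme.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AITool:
    """One AI tool found in the audit (hypothetical fields)."""
    name: str
    approved: bool           # has IT or security signed off?
    data_touched: str        # "public", "internal", or "regulated"
    owner: Optional[str]     # accountable person or team, if any
    retention_known: bool    # do we know the vendor's data retention terms?


def risk_tier(tool):
    """Assign a tier from data sensitivity and the number of governance gaps."""
    gaps = sum([not tool.approved, tool.owner is None, not tool.retention_known])
    if tool.data_touched == "regulated":
        return "high" if gaps else "medium"
    if tool.data_touched == "internal":
        return "medium" if gaps else "low"
    return "low"


# Illustrative inventory only.
inventory = [
    AITool("personal-chatbot", False, "regulated", None, False),
    AITool("approved-copilot", True, "internal", "eng-platform", True),
    AITool("notes-summarizer", False, "internal", None, True),
]
tiers = {tool.name: risk_tier(tool) for tool in inventory}
```

Sorting the inventory by tier gives you a remediation order: assign owners and confirm retention terms for the high tier first, then decide which tools to approve, replace, or block.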

Conclusion

AI adoption doesn’t fail because of the technology. It fails because of execution. The patterns are clear. Skills are missing. Costs are mismanaged. Data is messy. Security is underestimated. Teams are not ready. Governance is often an afterthought.

If you’re leading AI transformation, your job is not to chase tools. It’s to build capability. That means investing in the right talent, fixing your data foundations, setting clear rules, and scaling what works.

You don't need to solve everything at once. Pick the obstacle that's costing you the most right now, address it with intention, and build from there. The compounding effect of getting AI right (in your workflows, your culture, and your infrastructure) is significant. Early movers report $3.70 in value for every dollar invested, with top performers reaching $10.30 (source: Fullview).

The window to build that kind of advantage is still open. But it won't stay open forever.

How Index.dev Accelerates Your AI Delivery

You don't have to navigate this transformation alone. Index.dev helps you bypass the talent shortage by providing immediate access to verified AI engineers and integration specialists globally.

Whether you need a single expert to fix a data pipeline or a full AI engineering pod to build production-ready agentic workflows, Index.dev delivers interview-ready talent in under 48 hours. We don’t just find you developers; we provide the engineering capacity and delivery oversight to ensure your AI initiatives move from experiment to enterprise-grade without the usual hiring friction.

AI isn't going to replace your business, but a competitor with a cleaner data stack and a more agile engineering team just might. The tools are ready. Is your team?