A few years ago, AI in software mostly meant one thing. Add a feature. Maybe a recommendation engine. Maybe a chatbot. That era is already fading.

Jensen Huang, CEO of NVIDIA, said it plainly: "Software is eating the world, but AI is eating software."

Another way to think about it comes from Satya Nadella, CEO of Microsoft, who recently said that AI will fundamentally reshape software itself. In his words, “every application will become an AI application.”

They're both right. And the numbers are starting to prove it.

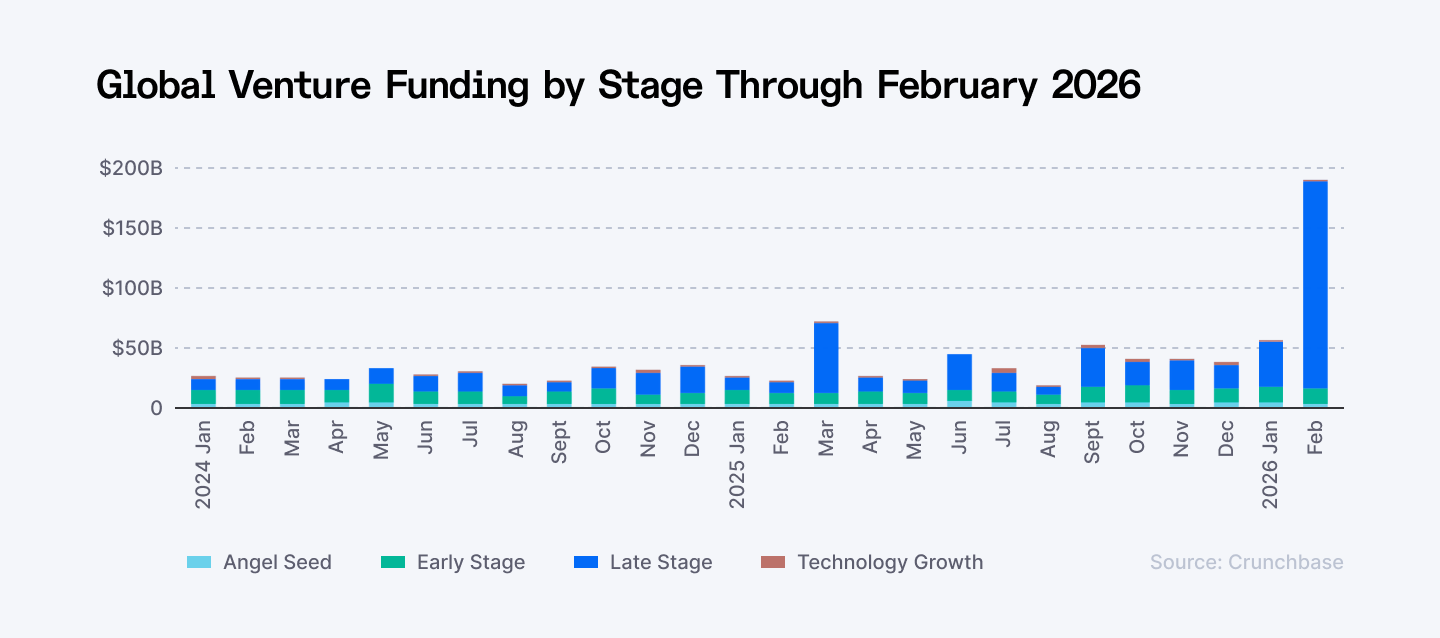

The first two months of 2026 saw a staggering $220 billion poured into AI startups globally. Crunchbase reports a record $189 billion in global VC funding for February alone.

Source: Global Venture Funding by Stage, Crunchbase

We’ve seen historic moves that would have been unthinkable a few years ago:

- OpenAI closed a record-breaking $110 billion round, valuing the titan at $840 billion.

- Anthropic secured a $30 billion Series G.

- xAI raised $20 billion to push the boundaries of frontier models.

- Nscale recently secured $2 billion in Series C funding, becoming Europe's most valuable AI infrastructure player.

Meanwhile, in 2025, 55 US AI startups raised $100M or more. Cursor raised $2.3 billion at a $29.3 billion valuation, Luma AI closed a $900 million Series C, and Genspark hit a $1.25 billion valuation on its Series B.

The software industry is moving from software with AI to software built around AI. In other words, software is becoming AI-native, with more than 80% of companies now baking AI into core products.

If you're a founder, CTO, or head of engineering, this raises important questions:

What exactly makes an application AI-native? How is it different from traditional AI-enabled software? What models and architectures power these systems? Where are the most valuable use cases emerging?

In this piece, we explore what AI-native applications really are, how they differ from AI-enabled software, what powers them under the hood, where they're delivering real results across industries, and what this all means for the future of how software gets built.

Building an AI-native product? Index.dev provides vetted AI engineers, ML specialists, and solution architects, pre-screened for your stack, productive from sprint one. Skip the three-month hiring cycle. →

What Does AI-Native Mean?

When people say “AI-native”, they often mean different things. Let’s simplify it. AI-native means you design the product, workflows, and architecture with AI at the core from the start. AI is why the product exists in the form it does. Intelligence is not an extra feature. It is the engine of the system.

Traditional software follows rules written by developers. If X happens, do Y. AI-native software behaves differently. It learns from data, understands context, and adjusts its behavior over time. Instead of fixed workflows, the system continuously adapts.
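Here is a toy Python sketch that makes the contrast concrete. The ticket-triage scenario, the function names, and the training sentences are all hypothetical and do not come from any product mentioned here. `rule_based_is_urgent` is a hand-written rule. `LearnedUrgencyModel` is a tiny naive Bayes classifier whose behavior comes entirely from labeled examples. Real AI-native systems use far larger models and datasets, but the shift from writing conditions to learning them is the same.

```python
import math
from collections import Counter


def rule_based_is_urgent(text: str) -> bool:
    """Traditional approach: a developer hard-codes the condition (hypothetical rule)."""
    words = text.lower().split()
    return "outage" in words or "down" in words


class LearnedUrgencyModel:
    """Minimal naive Bayes classifier: its behavior is learned from labeled examples."""

    def __init__(self) -> None:
        self.word_counts = {True: Counter(), False: Counter()}
        self.doc_counts = Counter()

    def learn(self, text: str, urgent: bool) -> None:
        # Every labeled example updates the counts, so behavior shifts as data arrives.
        self.word_counts[urgent].update(text.lower().split())
        self.doc_counts[urgent] += 1

    def is_urgent(self, text: str) -> bool:
        vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label in (True, False):
            # Log prior with add-one smoothing over the two classes.
            score = math.log((self.doc_counts[label] + 1) / (total_docs + 2))
            denom = max(sum(self.word_counts[label].values()) + len(vocab), 1)
            for word in text.lower().split():
                # Add-one (Laplace) smoothing keeps unseen words from zeroing the score.
                score += math.log((self.word_counts[label][word] + 1) / denom)
            scores[label] = score
        return scores[True] > scores[False]


model = LearnedUrgencyModel()
model.learn("checkout page is down for all users", True)
model.learn("payments failing since this morning", True)
model.learn("please update my billing address", False)
model.learn("how do i export a report", False)

print(rule_based_is_urgent("payments failing again"))  # False: no hard-coded keyword matches
print(model.is_urgent("payments failing again"))       # True: "payments" and "failing" appeared in urgent tickets
```

To change the rule, a developer must edit code. To change the learned model, you feed it more labeled examples.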

From what we see across the industry, AI-native products usually share a few common traits:

- Intelligence at the core. The product relies on models that understand language, images, or data. These models generate outputs, not just process inputs.

- Continuous learning. The system improves through feedback loops, usage data, and evolving models.

- New economics of speed and scale. Tasks that once required teams of people can now be done instantly or automatically.

- Some level of proprietary advantage. The strongest AI-native products rarely rely only on off-the-shelf models. They fine-tune models, orchestrate multiple systems, or build proprietary data layers.

One important point: AI-native does not mean you have to start that way. Some of today's most compelling AI-native products evolved from cloud-native foundations. Adobe and Microsoft didn't abandon their franchises; they rebuilt them around intelligence. That path is open to you too, but it requires intentional architectural work, not just layering models onto existing systems.

According to a16z's survey of 100 enterprise CIOs, the top reason buyers prefer AI-native vendors is their faster rate of innovation. The next is the view that companies built around AI from the ground up deliver better products and outcomes than incumbents retrofitting AI into existing solutions.

AI-Native vs AI-Enabled Applications

A lot of products today claim to be “AI-powered.” In reality, many of them are simply AI-enabled. They add AI features to existing software. A chatbot. A recommendation engine. Maybe some automation. Useful, but limited.

AI-native products work differently. AI drives the core functionality. The simplest way to understand it is this:

AI-enabled products add intelligence to existing software.

AI-native products build the entire experience around intelligence.

That distinction affects architecture, performance, and the kind of product you can build.

| Feature | AI-Enabled (The Accessory) | AI-Native (The Foundation) |
| --- | --- | --- |
| Workflow | Linear & Deterministic | Dynamic & Probabilistic |
| Decision Making | Human-driven with AI "tips" | Autonomous within guardrails |
| Logic | Static code (If/Then) | Learned patterns and reasoning |
| Performance | Performance is capped by code | Performance compounds with data |

AI-Enabled Applications

AI-enabled apps usually start as traditional software. Later, AI features are added to improve certain parts of the experience. You might see things like:

- Recommendations

- Automated summaries

- Basic chatbots

- Simple personalization

These features improve the product, but the core system still runs on traditional logic and workflows. AI acts more like a helper than the engine.

AI-Native Applications

AI is responsible for core decisions, outputs, and user interactions. Without AI, the product would not work. The architecture is designed around models, data pipelines, and feedback loops from the beginning.

You usually see:

- Dynamic workflows instead of fixed logic

- Interfaces powered by natural language

- Systems that learn from usage data

- Automation that replaces manual tasks

The product evolves as the models improve.

A Simple Example

Take enterprise search. The AI-enabled version looks like this: you have a traditional search index, keyword matching, maybe some filters, and then you add a language model on top to improve query results or generate summaries. The underlying system still works the old way. AI just makes the surface look smarter.

The AI-native version, which Glean built, is architecturally different from the ground up. The entire system is designed around understanding context, permissions, and meaning across every connected data source in real time. It doesn't retrieve documents and then summarize them. It reasons across your company's knowledge graph and surfaces what's actually relevant to you, right now, given who you are and what you're working on. There's no legacy layer. Intelligence is the foundation.

The Trade-offs Are Real

AI-native startups are out-executing much larger competitors across some of the fastest-growing application categories, despite incumbents having entrenched distribution, data moats, deep enterprise relationships, and massive balance sheets.

Cursor is the clearest example. GitHub Copilot was the first mover with every structural advantage, yet Cursor captured significant share by shipping better features faster, beating Copilot to repo-level context, multi-file editing, and natural language commands.


The Models That Power AI-Native Apps

AI-native apps aren't powered by a single model. They're powered by a stack of specialized models, each doing a specific job, orchestrated to work together:

Large Language Models (LLMs)

The cognitive layer. Models such as GPT-4o, Claude, and Gemini handle natural language understanding, reasoning, content generation, and, increasingly, multi-step task execution. 2025 became the year of agents: LLMs can now reason through problems iteratively, use external tools, and coordinate across multiple interactions to complete complex tasks.

You see them used for:

- conversational interfaces

- AI copilots

- document analysis

- automated workflows

- knowledge assistants

Embedding and Retrieval Models

The memory layer. Sentence transformers and specialized encoders convert text, images, and structured data into vectors that power semantic search, recommendation systems, and retrieval-augmented generation (RAG). RAG-based models dominated with a 38% revenue share in 2025, reflecting enterprise demand for accuracy, auditability, and context-aware responses over raw generative output.
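Here is a minimal Python sketch of the retrieval step. It uses hand-made three-dimensional vectors in place of real embeddings. A production system would get vectors with hundreds or thousands of dimensions from an embedding model and store them in a vector index. The document texts, vectors, and function names are hypothetical. `retrieve` ranks documents by cosine similarity to the query vector. `build_rag_prompt` shows the augmentation half of RAG: the retrieved text goes into the prompt that would be sent to an LLM.

```python
import math

# Hypothetical, hand-made 3-dimensional vectors standing in for real embeddings.
DOCS: dict[str, list[float]] = {
    "Refund policy: refunds are issued within 14 days.": [0.9, 0.1, 0.0],
    "Shipping: orders ship within 2 business days.": [0.1, 0.9, 0.1],
    "Security: all data is encrypted at rest.": [0.0, 0.2, 0.9],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: list[float], k: int = 1) -> list[str]:
    """Return the k documents whose vectors are most similar to the query."""
    ranked = sorted(DOCS, key=lambda doc: cosine(query_vec, DOCS[doc]), reverse=True)
    return ranked[:k]


def build_rag_prompt(question: str, query_vec: list[float], k: int = 1) -> str:
    """Augment the question with retrieved context before it goes to an LLM."""
    context = "\n".join(retrieve(query_vec, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical vector for the question "How long do refunds take?"
query = [0.8, 0.2, 0.1]
print(retrieve(query))  # ['Refund policy: refunds are issued within 14 days.']
print(build_rag_prompt("How long do refunds take?", query))
```

A brute-force scan like this is fine for a handful of documents. At scale, teams typically switch to an approximate nearest-neighbor index.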

Computer Vision Models

YOLO, ResNet, and Vision Transformers handle image recognition, object detection, and real-time visual processing. These are the models behind medical imaging tools, quality control systems in manufacturing, and visual search in e-commerce.

They also power features like:

- object detection

- facial recognition

- augmented reality filters

Multimodal Models

GPT-4o and Gemini 1.5 collapse the boundary between text, image, audio, and video into a single reasoning layer. This is where the next generation of AI-native interfaces is being built: products that can see, read, and respond in whatever format is most natural for the task. Earlier models such as OpenAI's CLIP, which maps images and text into a shared embedding space, already let applications interpret language and visuals in the same workflow.

Specialized and Fine-Tuned Models

Domain-specific models for code generation, speech recognition, and predictive analytics can outperform general-purpose models on narrow tasks, often at significantly lower cost. Enterprises with hyper-specific use cases are still fine-tuning open-source models, particularly where domain adaptation matters. One example is a streaming service fine-tuning a model for video search query augmentation.

Most serious AI-native products aren't betting everything on one model provider. 37% of enterprises now run five or more models in production simultaneously, with different models handling different tasks based on capability, cost, and latency requirements.
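In practice, the multi-model pattern comes down to a routing decision. The Python sketch below shows one simple policy. It assumes a hypothetical catalog in which the model names, prices, and latencies are illustrative placeholders, not real vendor figures. The router keeps only the models that support the task and fit the latency budget, then picks the cheapest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                     # hypothetical identifier, not a real product name
    capabilities: frozenset[str]  # tasks this model is trusted with
    cost_per_1k_tokens: float     # illustrative price only
    p95_latency_ms: int           # illustrative latency only


CATALOG = [
    ModelOption("large-reasoning", frozenset({"reasoning", "code", "chat"}), 0.0150, 4000),
    ModelOption("small-chat", frozenset({"chat", "classification"}), 0.0005, 300),
    ModelOption("vision-model", frozenset({"image", "chat"}), 0.0100, 1500),
]


def route(task: str, max_latency_ms: int) -> ModelOption:
    """Pick the cheapest model that supports the task within the latency budget."""
    candidates = [
        m for m in CATALOG
        if task in m.capabilities and m.p95_latency_ms <= max_latency_ms
    ]
    if not candidates:
        raise ValueError(f"no model supports {task!r} within {max_latency_ms} ms")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


print(route("chat", max_latency_ms=500).name)        # small-chat
print(route("reasoning", max_latency_ms=5000).name)  # large-reasoning
```

Real routers often also weigh quality scores, fallbacks, and rate limits. The point here is only that model choice becomes a per-request decision rather than a fixed dependency.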

AI-Native Use Cases Across Industries

Across the globe, over 1.5 billion people now use AI tools monthly, and 72% of organizations have fully integrated AI into at least one core function. Here's where that's playing out in practice.

Healthcare

Healthcare alone captures nearly half of all vertical AI spend. The applications are specific and measurable.

Examples:

- Abridge, one of the most cited AI-native apps in clinical settings, transcribes and summarizes physician-patient conversations in real time, reducing documentation time by up to 70% and letting clinicians focus on care instead of charting.

- SkinVision uses deep learning to analyze skin lesion images directly on-device, giving users a risk assessment without sending biometric data to a server.

- Remote patient monitoring apps integrated with wearables now track vitals continuously, surface anomalies before they become emergencies, and alert physicians in real time.

Financial Services

Finance was the earliest enterprise adopter of AI. Fraud detection was the entry point. Today, AI-native financial apps run real-time transaction analysis across millions of data points, flagging anomalies with a precision and speed that rule-based systems never achieved.

Examples:

- JPMorgan's COIN platform reviews legal documents using NLP, saving 360,000 hours of manual work annually.

- Apps like Cleo and Monarch Money are built AI-native from the ground up, using behavioral data and spending patterns to deliver advice that feels genuinely personalized, not just templated.

- Risk assessment is also being rebuilt from scratch. AI-native credit platforms analyze thousands of variables in real time, far beyond the credit score and employment check that legacy systems rely on. That's opening credit access to underserved segments while reducing default rates for lenders.
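A simple statistical stand-in can illustrate the gap between rule-based and adaptive transaction checks. This is not how any of the platforms named above work, since production systems use trained models over many features. Here `rule_flag` applies one fixed threshold to every customer. `baseline_flag` compares a transaction with that customer's own history using a z-score. The amounts and the three-standard-deviation threshold are assumptions chosen for illustration.

```python
import statistics


def rule_flag(amount: float) -> bool:
    # Legacy-style rule: one fixed threshold for every customer (hypothetical value).
    return amount > 1000.0


def baseline_flag(history: list[float], amount: float, z_threshold: float = 3.0) -> bool:
    # Flag the amount if it sits far outside this customer's own spending pattern.
    # statistics.stdev needs at least two past transactions.
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return amount != mean
    return abs(amount - mean) / stdev > z_threshold


small_spender = [42.0, 38.5, 55.0, 47.2, 40.0, 51.3]
big_spender = [2500.0, 3100.0, 2800.0, 2950.0]

# A $400 charge passes the fixed rule but is highly unusual for this customer.
print(rule_flag(400.0), baseline_flag(small_spender, 400.0))  # False True
# A $3,000 charge trips the fixed rule but is normal for this customer.
print(rule_flag(3000.0), baseline_flag(big_spender, 3000.0))  # True False
```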

Retail and E-Commerce

Retailers saw 15% higher conversion rates after deploying AI-powered chatbots during the Black Friday weekend, according to Deloitte's 2025 US Retail Industry Outlook. The real footprint may be larger still. Lyro's AI in ecommerce report shows that AI now influences half of all purchase decisions yet receives attribution credit for less than 1% of web traffic, a gap that standard analytics tools are structurally unable to capture.

Examples:

- Zara uses AI to analyze customer behavior patterns and automate stock replenishment across its global supply chain.

- Sephora's AI tools suggest makeup products based on real-time facial analysis.

- Amazon's recommendation engine, arguably the most mature AI-native product in retail, drives an estimated 35% of all purchases on the platform.

Visual search is the next frontier. Apps that let you point your camera at a product and instantly find it, or something similar, are expected to grow. The underlying architecture is entirely AI-native: computer vision, embedding models, and real-time retrieval working together.

Manufacturing and Logistics

In manufacturing, edge AI enables instant quality checks on production lines without relying on cloud-based systems. AI-native platforms analyze sensor data from equipment in real time to predict failures before they happen, turning reactive maintenance into a proactive discipline. BMW, in collaboration with Monkeyway, developed an AI solution that scans factory assets and creates digital twins that run thousands of simulations to optimize distribution efficiency.

In logistics, AI-native platforms are transforming route optimization, demand forecasting, and inventory management. Domina, a logistics company managing over 20 million annual shipments, used AI to improve real-time data access by 80% and increase delivery effectiveness by 15%.

Travel and Mobility

The travel industry has always been data-rich but insight-poor.

Examples:

- Navigation apps like Google Maps and Waze moved beyond turn-by-turn directions years ago. Today they run predictive traffic models that factor in historical patterns, real-time incidents, weather, and event data simultaneously.

- Smart booking is the next layer. AI-native platforms now predict demand windows, surface personalized itinerary options, and dynamically adjust pricing in real time based on behavioral signals and market conditions. Hopper built its entire product around this, giving travelers predictions on when to buy with enough accuracy to drive genuine loyalty.

- Vehicle monitoring is where the compounding advantage gets most tangible. AI-native apps integrated with connected vehicles track engine health, brake wear, and battery performance continuously, surfacing maintenance alerts before failures happen. For fleet operators, it means lower costs and higher asset utilization.

Education

This is one of the most underdeveloped verticals relative to its potential. AI-native platforms like Khanmigo are built around adaptive learning. Every interaction feeds the system's model of where the learner is, what they're struggling with, and what to surface next. AI-powered adaptive learning systems change difficulty levels, propose resources, and give immediate feedback based on each student's progress.
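One simple way to sketch this adaptive loop is to keep a per-skill mastery estimate and update it with an exponential moving average. This is a hypothetical heuristic, not a description of how Khanmigo or any specific product is implemented. The skill names, the learning rate, and the 0.4/0.75 thresholds are all illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class LearnerModel:
    """Hypothetical per-learner model: estimated chance of answering correctly, per skill."""

    mastery: dict[str, float] = field(default_factory=dict)
    learning_rate: float = 0.3  # how strongly each new answer moves the estimate

    def update(self, skill: str, correct: bool) -> None:
        # Exponential moving average: recent answers count more than older ones.
        prior = self.mastery.get(skill, 0.5)  # 0.5 = no information yet
        target = 1.0 if correct else 0.0
        self.mastery[skill] = prior + self.learning_rate * (target - prior)

    def next_difficulty(self, skill: str) -> str:
        # Adjust difficulty to keep the learner challenged but not stuck.
        estimate = self.mastery.get(skill, 0.5)
        if estimate < 0.4:
            return "easier"
        if estimate > 0.75:
            return "harder"
        return "same"

    def weakest_skill(self) -> str:
        # Surface the skill the learner currently struggles with most.
        return min(self.mastery, key=lambda skill: self.mastery[skill])


learner = LearnerModel()
for answer in (True, True, True):
    learner.update("fractions", answer)
for answer in (False, False):
    learner.update("decimals", answer)

print(learner.next_difficulty("fractions"))  # harder
print(learner.next_difficulty("decimals"))   # easier
print(learner.weakest_skill())               # decimals
```

Every answer feeds the learner model, and the model decides what to surface next. That is the feedback loop in miniature.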

Legal

Legal has grown into a $650 million AI market, led by AI-native companies like Eve. The demand makes sense. Legal work is dense with unstructured data, repetitive analysis, and high-stakes accuracy requirements. That's exactly where AI-native products create the most value.

Contract analysis, due diligence, regulatory compliance monitoring, and case research are all being rebuilt by AI-native startups. What took a team of associates days now takes minutes. The shift is about letting lawyers operate at a different level of leverage.

Why AI-Native Integration Changes the Game

IBM's 2025 study of 3,500 senior executives found that 66% of organizations have already achieved significant operational productivity improvements using AI.

This is why many engineering teams are now redesigning products around AI. Several advantages compound over time:

- Speed and real-time responsiveness. When intelligence lives inside the system rather than making a round-trip to a remote server, response times drop dramatically. In healthcare diagnostics, legal document review, or real-time fraud detection, that latency difference is the difference between a usable product and an unusable one.

- Continuous improvement built in. Every user interaction, every data point, every correction feeds back into the model and makes the system smarter. That feedback loop is a compounding asset.

- Privacy and data efficiency. Security and privacy concerns remain the top barrier to scaling AI in 68% of organizations surveyed by IBM. AI-native architectures designed with this in mind from day one are better positioned to avoid that blocker.

- Lower operational cost. When your AI layer is tightly integrated with your data pipelines and hardware, you're not paying for unnecessary API calls, redundant processing, or bloated cloud compute. That's where the real margin improvement shows up.

- Better decisions, faster. Deloitte's 2026 State of AI in the Enterprise report found that improving decision-making is one of the top three benefits organizations report from AI adoption, cited by 50% of executives.

Still, 70 to 85% of AI initiatives fail to meet expected outcomes, and 42% of companies abandoned most of their AI projects in 2025. The advantages of AI-native architecture are real, but they don't show up automatically.


Building AI-Native? You Need the Right Team First

Think AI first, build intelligence in from day one, and let your product evolve with your users.

Building an AI-native ecosystem demands a blend of deep mathematical expertise, sophisticated engineering, and a relentless focus on data integrity.

In this new era, the bottleneck is rarely the ideas; it is the specialized talent required to execute them. In AI, 46% of leaders cite skill gaps as a major barrier to adoption, and finding engineers who can build AI-native products is genuinely hard.

That’s where Index.dev comes in. We provide the right people, the right skills, and the right process.

Scalable AI Talent Solutions

If you're building an AI product from scratch, Index.dev assembles the full team: ML engineers, AI specialists, and STEM experts aligned to your stack and your timeline, and takes your concept all the way to production.

If you need to scale your existing team fast, Index.dev embeds vetted AI specialists directly into your workflow. Pre-screened for your tech stack, tested for communication, and productive from the first sprint.

If your models need work, the team covers data annotation, fine-tuning, and model evaluation, the critical work that determines whether your AI actually performs in production.

The Roles We Place

We provide the specific expertise required for every layer of the AI stack:

- AI Product Engineers: Full-stack talent who build the interface between users and intelligence.

- ML & NLP Engineers: Experts in model architecture, fine-tuning, and deployment.

- AI Solution Architects: Designers of the scalable, high-performance infrastructure AI requires.

- Data Scientists & STEM Experts: The analytical minds ensuring model accuracy and behavioral integrity.

- Data Annotation Specialists: The foundation for high-quality, ethically sourced training data.

The move to AI-native is a marathon, not a sprint. Having the right partners makes all the difference.