Maintenance teams work with drones to monitor bridge conditions in Denmark. Utility workers in Australia rely on AI software to dispatch field staff for clearing storm grates. Auto assembly line employees at Ford deploy computer vision to detect defects in real time. AI isn’t coming; it’s already embedded in how work gets done across industries.

Knowing how to use AI is no longer enough. Real survival, real growth, in 2026 will hinge on AI literacy.

It isn’t about your degree, your job title, or even the size of your paycheck. It’s about understanding AI: what it can do, how it works, the risks it carries, and, most importantly, how to wield it strategically.

Employees who are AI literate understand three things: what AI does, how it can help them, and how to use it responsibly.

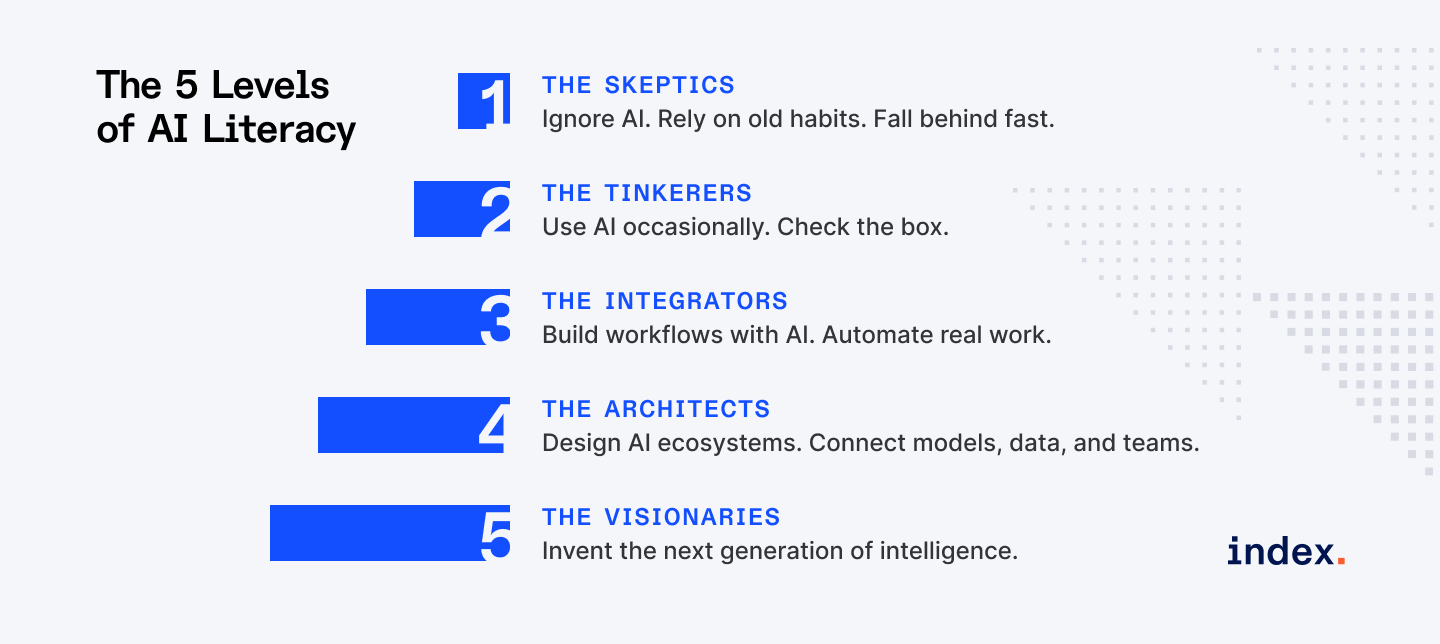

Below, I’ve outlined the five levels of AI literacy in 2026. So where do you stand? Where do you need to be?

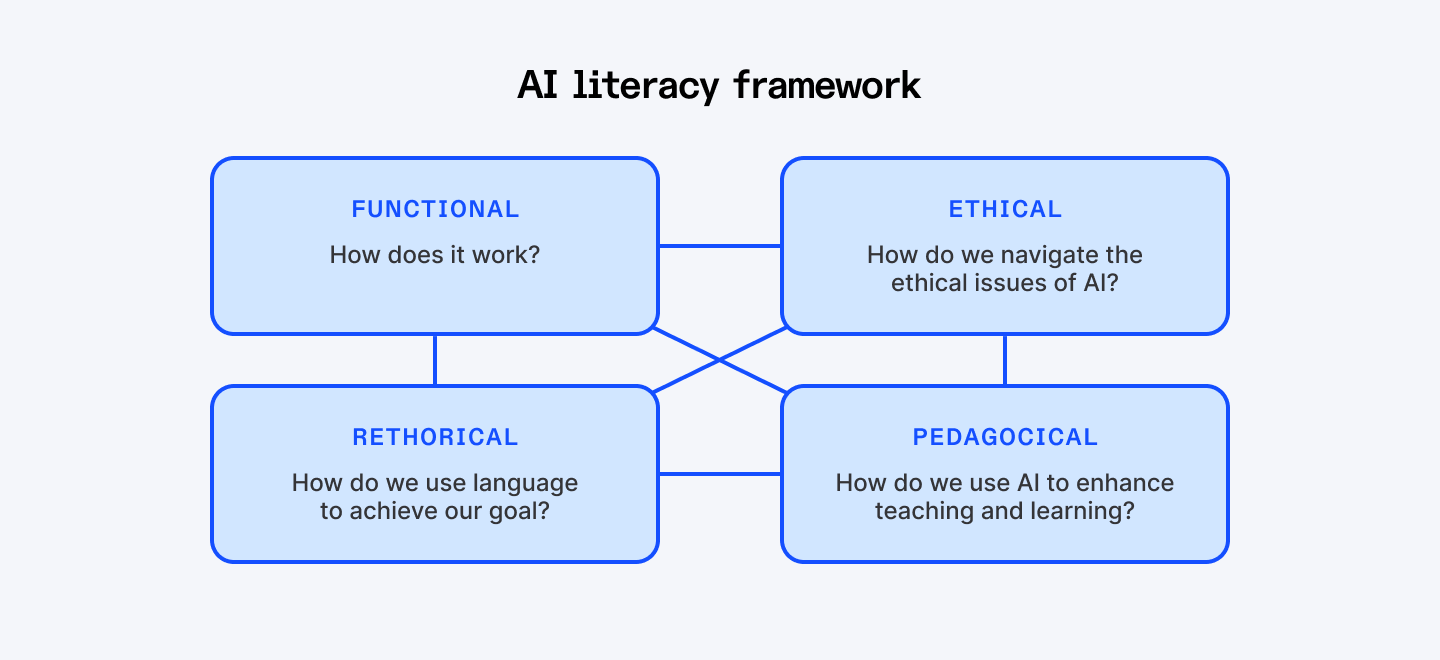

The Components of AI Literacy

AI literacy empowers individuals to wield AI thoughtfully, responsibly, and strategically. By 2026, it will define who thrives, who leads, and who gets left behind.

A diagram of the AI literacy framework

LinkedIn reports that AI literacy skills have grown 177% since 2023. At the same time, nearly half of executives recently surveyed by IBM say their teams lack the AI skills needed to implement and scale artificial intelligence. That’s a huge gap. The truth is AI literacy isn’t binary. You’re not just “literate” or “illiterate.” It’s a spectrum. And like data literacy before it, it has multiple layers, across technical, practical, and ethical dimensions.

Technical understanding

At its core, AI literacy starts with understanding how AI thinks. How does it perceive the world? How does it collect, process, and act on data? Knowing what AI can do, and what it can’t, is critical.

AI is only as smart as the data it learns from. Biases, gaps, and errors in training data will show up in real-world outputs. Executives who ignore this risk are asking for trouble.

Practical understanding

This is the practical art of integration and orchestration. It’s the ability to move beyond basic ChatGPT usage to embedding AI into the core workflows of a department. This means knowing which AI tool to use, when, and how to chain it with other tools to deliver a 10x uplift, not just a 10% gain.

Ethical understanding

This is the highest form of AI literacy, and in 2026, it will be the most valuable. It’s about recognizing that every AI deployment is a societal choice and a legal risk. It is the ability to anticipate the consequences of algorithmic decisions, manage bias, ensure transparency, and comply with rapidly evolving global regulations (like the EU AI Act).

The AI Literacy Levels

Level 1: The Skeptics

They might be highly experienced, respected in their field, but they are fundamentally out of step with the world around them. These are the people who flat out refuse to touch AI tools. Not because they've tried and didn't like them. Because they won't try at all.

Listen to what they say:

- “AI is just hype.”

- “I’ve been doing my job this way for 20 years.”

- “AI will never replace human intuition.”

This mindset feels safe, but it’s a trap. Avoidance doesn’t protect careers; it ends them.

Market reality

- LinkedIn ranked AI literacy the fastest-growing skill across all industries in 2024. Not in tech. All industries. Healthcare, finance, manufacturing, marketing, everywhere.

- Professionals with advanced AI skills are earning up to 56% more than peers without those skills in the same roles.

- Data shows a 13% relative decline in employment for early-career workers in the most AI-exposed roles since the rise of generative AI. Why? Because the mundane, introductory tasks (data entry, basic coding, initial drafting) that used to be the entry point for new talent are now the first tasks to be fully automated.

What happens to them by 2026

Reality hits hard. The entry-level positions they might qualify for don't exist anymore because AI absorbed those tasks. The mid-level positions require AI fluency as a baseline. They watch people half their age run circles around them, not because they're smarter, but because they're leveraging tools that multiply human capability.

And here's the truly brutal part: they blame the economy. They blame ageism. They blame outsourcing. They blame everything except the actual problem, which is staring them in the face every single day.

Daily work life

They spend hours on repetitive tasks AI could handle in minutes. They manually format reports, compile data, and perform processes that others automate. Meetings drag because they struggle to analyze insights at the speed others can generate them in AI dashboards. Stress is high, output is low, and frustration builds.

Adaptability rate

Very low. About 5%, and dropping. Even if AI training is offered, they approach it with skepticism or outright refusal.

The takeaway

By insisting on being 100% human, they become 0% competitive.

AI isn’t a tool to fear; it’s a skill to master. In 2026, the difference between thriving and being irrelevant won’t be experience or education. It will be the willingness to embrace and harness AI.

Level 2: The Tinkerers

These people have heard of AI. They've opened ChatGPT once or twice. Maybe they asked it to write an email or summarize something. They tell people "yeah, I've used AI" and technically, they're not lying. But they're not really using it either.

Their mindset:

- “I’ve used ChatGPT a few times; that counts.”

- “AI is interesting, but I don’t really need it right now.”

- “I’ll learn it when I get around to it.”

They dabble, conclude the pilot "didn't work for my workflow," and revert to manual processes.

Market reality

- Skills related to AI have increased sixfold in just the past year. Professionals are twice as likely to add AI to their skill set as they were in 2018.

- Survey data indicates employers are willing to pay AI-skilled workers a wage premium of at least 30% across departments, with IT workers seeing up to a 47% gain.

- 20% of companies may eliminate over half of their middle management roles using AI to automate tasks like reporting, scheduling, and resource allocation. The Tinkerer, stuck performing these automatable tasks, becomes a prime target for removal.

What happens to them by 2026

They get stuck. Stuck in declining industries that move too slowly to force adaptation. Stuck watching promotions go to colleagues who learned what they postponed. Stuck playing an endless game of catch up where the finish line keeps moving.

Tinkerers don't get fired. They just become invisible. Passed over for high impact projects. Quietly reassigned to maintenance work. Still employed, technically, but in shrinking niches that matter less every quarter.

Daily work life

Their day is defined by context-switching and cognitive debt. They oscillate between old, familiar manual tasks and clumsy attempts at AI use. They waste time formulating bad prompts and then waste more time fixing the bad AI output, convincing themselves that "the old way is faster" when the real problem is their own low-level literacy.

Adaptability rate

Low. Around 20%. They can adapt if forced, but their approach is reactive and shallow.

The takeaway

Tinkering is worse than avoiding. Avoiders know where they stand. Tinkerers have the illusion of progress. They think checking the box counts. You can't half learn the most transformative technology of today and expect to stay relevant. You're either in or you're out.

Level 3: The Integrators

These professionals have moved past simple consumption of AI tools and are now in the business of orchestrating output.

They operate with the belief that generic tools deliver generic results. Their focus is on maximum leverage through personalization.

- "What is the most high-leverage task I can delegate to a custom-trained agent?"

- "How can I link a custom GPT to my database to automate a key business process?"

- "Human judgment is about setting the strategic rails for the AI to run on."

They approach AI with a practical, results-oriented mindset. They see AI as an extension of their expertise, not a toy or a threat.

Market reality

- IBM reports that 87% of executives expect jobs to be augmented rather than replaced by generative AI. Integrators are those augmented workers.

- The strategic decision for companies now is how they secure their Intellectual Property (IP) within their AI use. By building custom GPTs or internal AI agents trained on proprietary data, the Integrator is the first line of defense against data leakage and the key to turning internal knowledge into an automated asset.

- No-code platforms for custom AI allow non-developers to create bespoke applications. This democratizes the building of competitive tools, making these individuals highly valuable.

What happens to them by 2026

The Integrators will be the most sought-after talent pool for promotion into leadership and strategic roles. They achieve 3–5x productivity gains, freeing time for high-value strategic work. They are the ones designing the internal AI training, setting the rules for data usage, and acting as the bridge between technical and business strategy.

They command salaries that are commensurate with their multi-faceted skill set: part prompt expert, part process engineer, part IT strategist. Their compensation is not based on their role, but on their force multiplier value.

Daily work life

Their day is spent on high-leverage, human-centric tasks. They are meeting with stakeholders, defining complex problems, and then immediately translating those needs into specific, custom AI instructions (e.g., "Build an internal bot that cross-references sales data with support tickets to predict churn risk, and deliver the top five accounts to me by 8:00 AM."). They move from thought to automated execution with minimal friction.
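To make the churn example above concrete, here is a minimal sketch of what "cross-reference sales data with support tickets" could look like underneath. Everything in it is an assumption for illustration: the account names, the field names (`spend_change_pct`, `account`), and the scoring rule (spend decline plus 10 points per ticket) are hypothetical, and a real system would use a validated model rather than this hand-made heuristic.

```python
from collections import Counter

# Hypothetical sample data; account names and fields are illustrative only.
SALES = [
    {"account": "Acme", "spend_change_pct": -30},
    {"account": "Birch", "spend_change_pct": 5},
    {"account": "Cobalt", "spend_change_pct": -10},
    {"account": "Delta", "spend_change_pct": 0},
    {"account": "Ember", "spend_change_pct": -50},
    {"account": "Flint", "spend_change_pct": 20},
]
TICKETS = [
    {"account": "Acme"}, {"account": "Acme"},
    {"account": "Cobalt"}, {"account": "Cobalt"}, {"account": "Cobalt"},
    {"account": "Delta"},
    {"account": "Ember"},
]


def churn_risk_ranking(sales, tickets, top_n=5):
    """Rank accounts with a naive, assumed heuristic.

    score = size of spend decline (in percentage points)
            + 10 points per support ticket
    """
    ticket_counts = Counter(t["account"] for t in tickets)
    scored = []
    for row in sales:
        decline = max(0, -row["spend_change_pct"])  # growth adds no risk
        score = decline + 10 * ticket_counts[row["account"]]
        scored.append((row["account"], score))
    # Highest score first; ties broken alphabetically for a stable order.
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:top_n]


if __name__ == "__main__":
    for account, score in churn_risk_ranking(SALES, TICKETS):
        print(f"{account}: {score}")
```

The Integrator's contribution is less the code than the choices inside it: which signals count, how they are weighted, and who receives the list each morning.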

Adaptability rate

High. 50% and climbing. These professionals adapt quickly to new AI tools and methods. They experiment, iterate, and continuously optimize their workflow. They're not worried about the next wave of AI tools because they've already developed the meta skill of learning new AI capabilities.

The takeaway

AI is evolving so fast that yesterday's Integrator becomes tomorrow's Tinkerer if they stop learning.

Level 3 is the safe zone. You're not getting left behind. You're not struggling. You're comfortable.

But being an Integrator is necessary, not sufficient, for real differentiation. You're keeping pace, but you're not leading. You're in the pack, not ahead of it.

This level requires constant maintenance. You have to keep learning, keep experimenting, keep pushing.

Level 4: The Architects

These professionals don’t just use AI; they build with it. They understand how LLMs, RAG systems, embeddings, and agents work. Not at a PhD level, but at a practical implementation level. They're connecting APIs, building custom GPTs, creating specialized chatbots, and orchestrating tools that talk to each other. And they train teams on AI best practices.

Their primary focus is on maximizing the return on intelligence while minimizing systemic risk. They think in years, not sprints.

- "How can we use Agentic AI to fundamentally eliminate 80% of our supply chain reporting friction?"

- "What are the ethical guardrails required to deploy an LLM on our most sensitive data, and how do we build the RAG architecture to ensure compliance?"

Market reality

- 44% of workers’ skills will be disrupted by 2027, yet most countries have no system to track skill acquisition. Organizations will compete to hire these leaders because they transform the entire department’s productivity and unlock value others can’t see.

- Only a small fraction of senior leaders (around 8%) possess this level of true AI fluency. This scarcity makes them the most valuable resource in the modern economy.

- Salaries for roles like AI Architects and Strategy Consultants are already averaging over $200K. They achieve 10x to 20x productivity over Level 1-2 workers, and their compensation reflects that exponential impact.

What happens to them by 2026

They become indispensable. Leadership positions open up that didn't exist two years ago: AI Strategy roles, AI Operations leads, Transformation directors. They're earning 2 to 3x what Integrators make, not because they work harder, but because they're building the systems everyone else uses. They create the frameworks for prompt best practices, data security protocols, and responsible AI deployment that scale across the entire firm. They're achieving 10x productivity improvements over people still working the old way.

Career examples:

- AI Product Managers

- AI Strategy Consultants

- AI Operations Leaders

- Prompt Engineering Specialists

- AI-Augmented Domain Experts

Daily work life

Their day is spent synthesizing information across departments. They design the automated pipelines that write and contextualize the reports for every stakeholder. They debate risk models, ethical implications, and global compliance, the kind of high-stakes, ambiguous problem-solving that requires human judgment augmented by conceptual AI mastery. They are the human filter that guarantees the integrity of the automated enterprise.

Adaptability rate

Very high. 85% and growing. Their adaptability is not reactive; it is proactive. They're building the future everyone else will work in.

The takeaway

Every Architect started as an Integrator who couldn't stop asking "what if?" They read voraciously. They follow tutorials. They join communities. They're on YouTube learning about RAG architectures. They're in Discord servers talking about prompt engineering. They're building side projects that might not work.

They're not smarter than Integrators. They're more obsessed. And that obsession compounds into capability that looks like magic to everyone else.

Level 5: The Visionaries

They are the inventors of the next generation of intelligence and AI systems. They fine-tune models, train custom systems for highly specific domains, experiment with cutting-edge techniques, and push the boundaries of what AI can achieve. They consider not just immediate impact, but the long-term implications of AI on society, business, and technology.

Their mindset:

- “I’m designing what AI will become.”

- “My work today sets the foundation for AI tools of the next decade.”

- “I lead global collaborations and influence AI policy.”

- “My vision is a future where AI and humanity grow together.”

They directly influence company valuation as their unique algorithms become the most valuable assets on the balance sheet.

Market reality

- The demand for top-tier, PhD-level AI talent is accelerating faster than the supply. Top Research Scientists typically earn base salaries of $320k. They are paid like star athletes because their economic impact is just as unique and non-replicable.

- Roughly 70% of individuals with a PhD in artificial intelligence get jobs in private industry today, up from 20% two decades ago. Why? Because industry has the necessary data volume, the compute power, and the capital to pay for talent and massive computational clusters.

What happens to them by 2026

These are the most highly compensated and intellectually valuable professionals in the world:

- Leadership and Influence:

- They are the Directors of Research & Development, Chief AI Architects, or Heads of Data Science. They are the CEO's strategic partner on all matters of core technology.

- The New R&D:

- They spend their days running experiments, training custom models, and contributing to the advancement of AI capabilities, essentially running the company's R&D function for its most critical asset: its intelligence systems.

- The Talent Battle:

- Companies fight over this talent pool, paying salaries that are 2x higher than Level 4 architects, recognizing that one Visionary can unlock billions in shareholder value.

Daily work life

A typical day involves leading research teams, experimenting with novel algorithms, managing massive computational resources, and collaborating with top researchers globally. They review and publish papers, advise institutions, and evaluate the long-term societal and technological impact of AI. Every decision and experiment has the potential to redefine what AI can do.

Tools they use:

- Custom research frameworks

- Massive GPU/TPU clusters

- Novel architectures and algorithms

- Advanced research methodologies and peer review systems

Technical background:

- PhD-level expertise in AI/ML

- Deep mathematical understanding

- Extensive research experience

- Often leadership in top academic or industrial AI labs

Adaptability rate

Extremely high. 99%. They're defining what others will adapt to.

The takeaway

This level proves that true AI literacy isn't just about using existing technology responsibly; it's about having the mathematical authority to break and rebuild the technology when necessary. While a Level 4 leader makes ethical decisions about deployment, the Level 5 Visionary makes ethical decisions about creation. They are the first line of defense against dangerous or biased model development.

They can design systems that expand the Total Addressable Market (TAM). For example, by automating complex processes like insurance appeals, companies can open up entirely new use cases and significantly increase their revenue projections. This is pure, AI-driven business creation.

Where Do You Stand in the New AI Hierarchy

| Level | Focus & Mindset | Competitive Edge |
| --- | --- | --- |
| 1 – The Skeptics | Focus: Preserving old processes. Mindset: "My experience is a sufficient defense." | None. Their work is exponentially more expensive and slower than the market baseline. |
| 2 – The Tinkerers | Focus: Isolated tool use. Mindset: "I'll learn it when I have time." | Marginal. Quick, low-level efficiency gains that plateau quickly. No systemic change. |
| 3 – The AI Integrators | Focus: Customizing AI for leverage. Mindset: “AI handles routine; I focus on strategy.” | High. Achieves 3x-5x productivity by using advanced prompting and no-code tools to create proprietary workflows. |
| 4 – The Architects | Focus: Strategy, Risk, and Governance. Mindset: "I don't just use the system; I architect the business around its capabilities." | Exponential. Drives organizational ROI by designing enterprise-wide AI systems and ensuring compliance and ethical guardrails. |
| 5 – The Visionaries | Focus: Scientific breakthroughs and proprietary intelligence creation. Mindset: "I invent the next generation of AI capabilities." | Defining the Market. Redefines the field; creates paradigms others follow; visionary impact. |

How they differ

- Engagement with AI:

- Levels 1–2 avoid or tinker, Levels 3–4 use AI strategically and architect systems, and Level 5 innovates and defines AI.

- Mindset & impact:

- Progression from fear/resistance → curiosity → strategic leverage → system-level leadership → innovation → vision and global influence.

- Career trajectory:

- Early levels struggle to survive, mid-levels thrive and lead teams, top levels shape industries and research trends.

How to Climb the AI Literacy Ladder

You’ve seen the table. Your current literacy level determines your market value in 2026. The goal is not just to move up a level, but to close the gap between your current position and The Integrator (Level 3), the new floor for high-value careers.

To Leap from Level 1 (AI Skeptics) to Level 2 (AI Tinkerers)

- Spend thirty minutes a day using a generative tool.

- Your problem is not technical; it’s psychological. Your goal is to move from fear to familiarity.

- Adopt the mindset of the "AI skeptic as a tester."

- Your initial task is to actively try to break AI. Ask the same question in five different prompt styles – vague, detailed, professional, casual, and antagonistic – and analyze the failure points.
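If you want to run the five-style test systematically rather than by hand, a small harness helps. The sketch below is one way to do it, under stated assumptions: the template wording is illustrative, and `ask` is a hypothetical placeholder for whatever tool you actually use (a function that takes a prompt string and returns the reply). No real AI API is called here.

```python
from typing import Callable, Dict

# One template per style named above. The wording is illustrative only.
STYLE_TEMPLATES: Dict[str, str] = {
    "vague": "Tell me about {topic}.",
    "detailed": (
        "Explain {topic} step by step, state your assumptions, "
        "and give one concrete example."
    ),
    "professional": (
        "Please provide a concise briefing on {topic} "
        "suitable for a senior stakeholder."
    ),
    "casual": "hey, quick one: what's the deal with {topic}?",
    "antagonistic": "I doubt you can get this right. Prove me wrong: {topic}.",
}


def build_prompts(topic: str) -> Dict[str, str]:
    """Return the same question phrased in each of the five styles."""
    return {
        style: template.format(topic=topic)
        for style, template in STYLE_TEMPLATES.items()
    }


def run_experiment(topic: str, ask: Callable[[str], str]) -> Dict[str, str]:
    """Send every styled prompt through `ask` and collect the replies.

    `ask` is a hypothetical stand-in for your AI tool of choice.
    """
    return {style: ask(prompt) for style, prompt in build_prompts(topic).items()}


if __name__ == "__main__":
    # Stand-in "model" that only reports prompt length, so the script runs offline.
    def fake_ask(prompt: str) -> str:
        return f"[reply to a {len(prompt)}-character prompt]"

    for style, reply in run_experiment("our refund policy", fake_ask).items():
        print(f"{style:>12}: {reply}")
```

Put the five replies side by side and note where each one goes wrong. Those failure points are the lesson.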

To Leap from Level 2 (AI Tinkerers) to Level 3 (AI Integrators)

- Move from consumption to customization.

- Your problem is that you treat AI as a generic chatbot. Your goal is to treat AI as a flexible, custom co-pilot designed for your specific work.

- The $20 automation challenge.

- Identify your most recurring administrative task (e.g., meeting notes to action items, compiling weekly status reports). Invest the small, monthly fee in a paid AI service or a low-code automation tool.

- Stop asking the AI to do the task (write a draft).

- Start asking it to design the framework for the task (e.g., "Act as a strategy consultant and create a 5-step, template-driven workflow for analyzing client proposals that includes a risk matrix and a resource allocation plan, using markdown format."). This forces you to think like an integrator.

To Leap from Level 3 (AI Integrators) to Level 4 (AI Architects)

- Master governance and strategic risk.

- You are productive, but can you scale your productivity across the firm safely? Your goal is to move from managing your workflow to managing the organization’s risk profile.

- Stop asking how to be 5x faster.

- Start asking: "What is the biggest risk my organization faces in the next 18 months, and how can I build an AI workflow (using RAG or internal agents) to simulate scenarios and mitigate that risk?" This is the language of the C-suite.

To Leap from Level 4 (AI Architects) to Level 5 (AI Visionaries)

- Invest in intelligence creation.

- This is not about managing people; it’s about sponsoring the next breakthrough.

- The research partnership.

- You don't need a PhD, but you need access to one. Find an academic researcher or a specialized ML engineer (internal or external) working on a problem relevant to your company's core challenge. Allocate a small, strategic budget for them to fine-tune an open-source LLM using a tiny fraction of your proprietary data. Your goal is to prove a measurable performance improvement (e.g., a 15% increase in lead qualification accuracy).
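"Prove a measurable improvement" is worth pinning down before the budget is spent, because a "15% increase" can mean two different things. The sketch below compares a baseline model and a fine-tuned model on the same held-out leads and reports both the absolute gain (percentage points) and the relative gain. The labels and predictions are made-up toy data, and the function names are my own, not part of any library.

```python
def accuracy(predictions, labels):
    """Share of predictions that match the true labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("need at least one labeled example")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def improvement(baseline_acc, tuned_acc):
    """Return (absolute gain, relative gain) of tuned over baseline."""
    if baseline_acc <= 0:
        raise ValueError("baseline accuracy must be positive")
    absolute = tuned_acc - baseline_acc
    relative = absolute / baseline_acc
    return absolute, relative


# Toy held-out set: 1 = qualified lead, 0 = not qualified (hypothetical data).
LABELS = [1] * 10 + [0] * 10
BASELINE = [1] * 6 + [0] * 4 + [0] * 6 + [1] * 4  # 12 of 20 correct
TUNED = [1] * 8 + [0] * 2 + [0] * 7 + [1] * 3     # 15 of 20 correct

if __name__ == "__main__":
    base = accuracy(BASELINE, LABELS)
    tuned = accuracy(TUNED, LABELS)
    absolute, relative = improvement(base, tuned)
    print(f"baseline accuracy: {base:.0%}")
    print(f"tuned accuracy:    {tuned:.0%}")
    print(f"absolute gain:     {absolute * 100:.0f} percentage points")
    print(f"relative gain:     {relative:.0%}")
```

Agree up front with your researcher on which of the two numbers counts, and evaluate both models on the same held-out examples. Otherwise the comparison proves nothing.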

How to Make Your Organization AI Literate: A Leader's Blueprint

Source: Hostinger

The journey starts with your people but extends to processes, products, and decision-making systems. There’s no universal test or framework, no “one-size-fits-all” course you can tick off. That’s both a challenge and an opportunity, because it means you get to define what AI literacy truly means for your organization.

1. Define the right level for the right people

Not everyone needs to be a model trainer or an AI architect. Some employees only need a high-level understanding to make informed decisions; others need deep technical mastery. Think of it like fluency in a language: some speak casually, others write academic papers. The question is: how AI-literate does each role need to be to succeed and drive value?

2. Create experiences

Traditional training is dead. Simply sending employees to a class or e-learning module won’t work in AI. AI literacy requires experimentation, exposure, and context.

Some unconventional approaches:

- Create AI sandbox environments where teams can experiment safely.

- Encourage internal hackathons focused on applying AI to real organizational problems.

- Reward employees for creative AI solutions, not just correct usage.

3. Embed ethics, strategy, and accountability

AI literacy is ethical, strategic, and operational. Employees need to understand the risks of bias and misuse, data privacy implications, and how AI decisions affect customers, partners, and society.

A fully AI-literate organization integrates ethical thinking into every workflow.

Wrapping Up: Adapt or Be Automated

In the next few years, career success will be defined by your ability to think critically, collaborate across disciplines, and work fluently with AI systems.

The question is which level you will be at. That choice shapes your career trajectory, your earning power, and your place in the workforce of tomorrow. No pressure, but the clock ticks louder every day.

Three hard truths from the front lines:

- Most people overestimate their AI skills. If you rated yourself a "Level 3 Integrator" before reading this, you are probably a Level 2 Tinkerer at best. That's fine. Awareness is the first step toward true mastery.

- The gap is widening fast. In 2023, AI skills were optional. In 2024, they became expected. In 2025, they are often deal-breakers. In 2026, AI literacy will be as fundamental as computer literacy was in 2000.

- You can’t fake it anymore. Employers now measure AI skills objectively. Writing “proficient in AI” on your resume won’t cut it. Your ability to demonstrate AI fluency matters more than ever.

Every level you climb unlocks new opportunities, higher impact, and greater influence. Which level will you be? Choose wisely.

➡︎ Looking to hire AI-literate talent? Index.dev connects you with vetted developers who operate at Level 3–5 — integrators, architects, and AI-ready experts who can transform your organization.

➡︎ Enjoyed this read? Explore our in-depth guide on the skills AI can't automate and find out whether AI agents will replace software developers. Check out the top AI skills to learn to command a higher salary and discover the 10 must-have AI roles for the future of work. For data-driven insights, dive into 50+ AI in job interview stats, AI growth statistics by country, and developer productivity stats with AI coding tools. Finally, understand the bigger picture with 50+ key AI agent statistics and adoption trends.