Is your AI recruiter screening out top talent?

A leading tech firm found that their AI was rejecting CVs from women for one reason alone: they referenced "women's chess club captain." Another firm found that their video interview system worked perfectly for white men but struggled with darker skin tones.

Source: ResumeBuilder

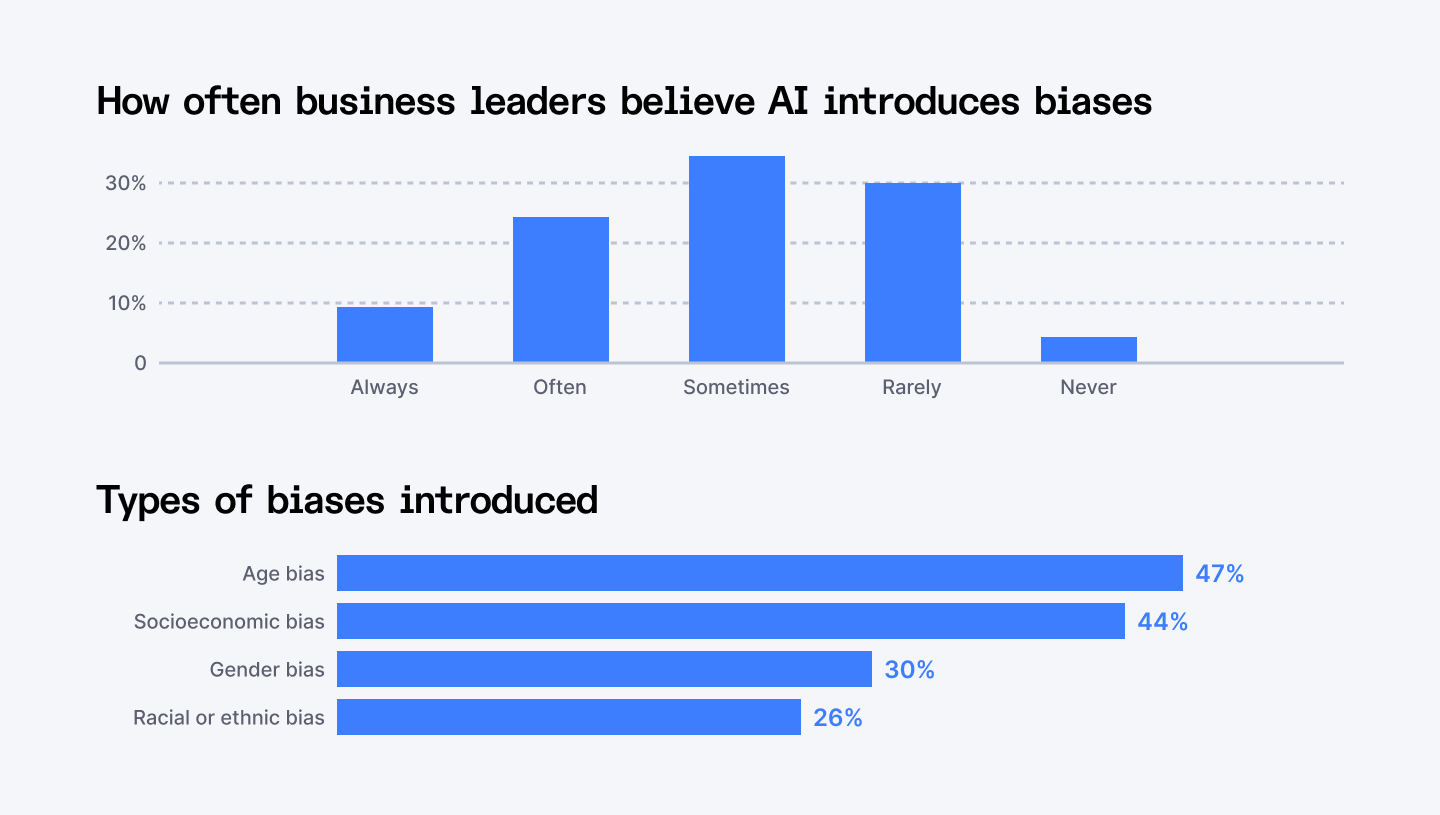

Bias in AI hiring tools isn’t just unfair; it hurts your brand, scares away candidates, and is extremely costly. Reducing bias in hiring with AI has become urgent because, according to MIT research, a significant number of AI hiring tools show racial or gender bias.

Businesses now risk £400,000+ fines under new legislation such as NYC's Automated Employment Decision Tool law. Worse still, 42% of job applicants are suspicious of AI recruitment, possibly driving away great talent.

We’ve built a 10-step checklist so you can spot bias, stay compliant, and win top talent with clear metrics, CTAs, and best practices.

Let’s dive in.

Skip biased AI hiring tools by accessing Index.dev's pre-vetted, diverse tech talent pool now.

The True Price of Biased AI Hiring

Businesses employing biased AI are at risk from three significant hazards:

- Legal sanctions: New laws in New York, the EU, and the UK mandate severe fines

- Reputation loss: Rumors of discriminatory hiring spread rapidly on social media

- Talent attrition: Quality candidates steer clear of biased hiring companies

Index.dev has helped numerous businesses overcome these challenges by delivering clear, auditable AI solutions that reduce bias in hiring instead of concealing it.

Explore 17 AI recruiting tools that make finding software engineers easier.

10-Point Bias Detection Framework

1. Who's Monitoring Your AI?

According to Deloitte research, over 60% of companies believe their AI systems carry material risks. Without clear ownership, bias issues multiply until they become expensive legal problems.

Every company needs one named person who is solely responsible for monitoring AI bias. This can't be your HR manager's part-time job; it needs technical knowledge and real attention.

Test this:

- Do you know the name of the person who checks your AI recruitment tools for bias?

- Do they have the skills and authority to fix problems they find?

Action needed:

Your AI oversight person should produce monthly reports showing how different groups fare in your hiring process. They should also monitor "model drift," the gradual change in an AI system's behavior over time.
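As a rough illustration of what such a monthly report could compute, the sketch below tallies selection rates per group per month and flags groups whose rate has moved noticeably since a baseline month. The record layout, the group labels, and the 10-point `tolerance` are assumptions made for this example, and a shift in selection rates is only one simple proxy for model drift.

```python
from collections import defaultdict

def monthly_selection_rates(records):
    """Return {(month, group): selection_rate} from (month, group, selected) tuples."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for month, group, was_selected in records:
        totals[(month, group)] += 1
        if was_selected:
            selected[(month, group)] += 1
    return {key: selected[key] / totals[key] for key in totals}

def drift_alerts(rates, baseline_month, current_month, tolerance=0.10):
    """List groups whose selection rate moved more than `tolerance` since the baseline."""
    alerts = []
    groups = sorted({g for (m, g) in rates if m == baseline_month})
    for group in groups:
        before = rates[(baseline_month, group)]
        after = rates.get((current_month, group))
        if after is not None and abs(after - before) > tolerance:
            alerts.append((group, before, after))
    return alerts

# Hypothetical screening log: (month, group, selected?)
records = (
    [("2024-01", "A", True)] * 50 + [("2024-01", "A", False)] * 50
    + [("2024-01", "B", True)] * 48 + [("2024-01", "B", False)] * 52
    + [("2024-02", "A", True)] * 51 + [("2024-02", "A", False)] * 49
    + [("2024-02", "B", True)] * 30 + [("2024-02", "B", False)] * 70
)

rates = monthly_selection_rates(records)
for group, before, after in drift_alerts(rates, "2024-01", "2024-02"):
    # Prints: Group B: selection rate moved from 48% to 30%
    print(f"Group {group}: selection rate moved from {before:.0%} to {after:.0%}")
```

A flagged group is a prompt for investigation, not proof of bias; applicant pools also change from month to month.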

2. Can People Report Issues?

Based on EEOC data, organisations with proper bias reporting resolve issues 32% faster than those without.

Both staff and job applicants must have easy means to report perceived bias. Most companies design elegant AI systems but do not design feedback systems.

Check this:

- If a candidate thinks your AI treated them unfairly, how would they tell you?

- Can they find your contact details easily?

- Do you respond quickly?

Action needed:

Establish a straightforward online complaint form for reporting bias. Commit to replying within 72 hours. Train your staff to treat these complaints seriously, because they often flag issues your technical audits do not catch.
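One way to keep the 72-hour commitment honest is to check it automatically. The sketch below assumes each report is stored with a `received` timestamp and an optional `replied` timestamp; the field names and report ids are hypothetical.

```python
from datetime import datetime, timedelta

RESPONSE_WINDOW = timedelta(hours=72)  # the reply commitment described above

def overdue_reports(reports, now):
    """Return ids of reports that were answered late or are still unanswered past the window."""
    overdue = []
    for report in reports:
        deadline = report["received"] + RESPONSE_WINDOW
        replied = report.get("replied")
        answered_late = replied is not None and replied > deadline
        still_waiting = replied is None and now > deadline
        if answered_late or still_waiting:
            overdue.append(report["id"])
    return overdue

# Hypothetical bias reports
reports = [
    {"id": "R1", "received": datetime(2024, 3, 1, 9, 0), "replied": datetime(2024, 3, 2, 10, 0)},
    {"id": "R2", "received": datetime(2024, 3, 1, 9, 0), "replied": datetime(2024, 3, 5, 9, 30)},
    {"id": "R3", "received": datetime(2024, 3, 6, 8, 0), "replied": None},
    {"id": "R4", "received": datetime(2024, 3, 9, 8, 0), "replied": None},
]

print(overdue_reports(reports, now=datetime(2024, 3, 10, 12, 0)))  # ['R2', 'R3']
```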

3. Transparency of Training Data

Only 17% of recruitment datasets meet basic diversity standards. Poor training data causes 48% higher rejection rates for minorities.

AI is trained on past data, which tends to hold past biases. If your AI vendor can't explain their training data, that's a red flag.

Check this:

Ask your vendor about their training data demographics:

- How many women were included?

- What about ethnic minorities?

- Which age groups?

- Which countries?

Action needed:

Reducing bias in hiring with AI starts with diverse, representative training data. Demand documentation showing geographic, gender, racial, and age distributions. Index.dev provides complete training data transparency because we believe clients deserve to know how their tools work.
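If a vendor shares row-level or summary data, a quick breakdown makes gaps visible. The following is a minimal sketch, assuming the training records arrive as dictionaries with self-reported attributes; the attribute names and the 25% `minimum_share` are illustrative choices, not a legal or industry standard.

```python
from collections import Counter

def demographic_breakdown(rows, attribute):
    """Share of each value of `attribute` across the dataset rows."""
    counts = Counter(row.get(attribute, "unknown") for row in rows)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("dataset is empty")
    return {value: count / total for value, count in counts.items()}

def underrepresented(breakdown, minimum_share):
    """Values whose share of the dataset falls below `minimum_share`."""
    return sorted(v for v, share in breakdown.items() if share < minimum_share)

# Hypothetical training records
rows = (
    [{"gender": "female", "country": "UK"}] * 20
    + [{"gender": "male", "country": "UK"}] * 70
    + [{"gender": "nonbinary", "country": "US"}] * 10
)

gender_shares = demographic_breakdown(rows, "gender")
print(gender_shares)
print(underrepresented(gender_shares, minimum_share=0.25))  # ['female', 'nonbinary']
```

The same function can be run for age band, ethnicity, or country to produce the distributions you ask the vendor to document.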

4. Periodic Bias Audits

Good audits apply the "4/5ths rule": if the selection rate for one group is below 80% of the rate for the most-selected group, you probably have bias. They also compare error rates to check whether the AI makes more mistakes for specific groups.

You don't skip fiscal audits, so don't skip bias audits either. NYC law mandates annual audits, but quarterly reviews catch things sooner.
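The error-rate comparison mentioned above can be sketched as follows. This example assumes you have a trusted label for whether each candidate was actually qualified (for instance from a later human review), which is often the hardest part to obtain; the group names and counts are made up.

```python
def false_negative_rate(outcomes):
    """Share of qualified candidates the tool rejected.

    `outcomes` is a list of (qualified, selected) boolean pairs.
    """
    decisions_for_qualified = [selected for is_qualified, selected in outcomes if is_qualified]
    if not decisions_for_qualified:
        return None  # no qualified candidates, so the rate is undefined
    rejected = sum(1 for selected in decisions_for_qualified if not selected)
    return rejected / len(decisions_for_qualified)

def error_rate_gap(outcomes_by_group):
    """Return per-group false negative rates and the largest gap between groups."""
    rates = {group: false_negative_rate(o) for group, o in outcomes_by_group.items()}
    defined = [rate for rate in rates.values() if rate is not None]
    gap = max(defined) - min(defined) if defined else None
    return rates, gap

# Hypothetical audit sample: (qualified?, selected?)
outcomes_by_group = {
    "group_a": [(True, True)] * 45 + [(True, False)] * 5 + [(False, False)] * 50,
    "group_b": [(True, True)] * 35 + [(True, False)] * 15 + [(False, False)] * 50,
}

rates, gap = error_rate_gap(outcomes_by_group)
for group, rate in rates.items():
    print(f"{group}: {rate:.0%} of qualified candidates rejected")
print(f"Largest gap: {gap:.0%}")
```

Here the tool wrongly rejects qualified candidates in one group three times as often as in the other, even though both groups contain the same number of qualified people.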

Check this:

How recently did you audit your AI recruitment tools? Do the findings show different outcomes for different groups? Can you explain the differences?

Example:

Out of 100 men, your AI picks 80. Out of 100 equally qualified women, it picks only 60. This implies that women are selected at 75% the rate of men (60 ÷ 80 = 0.75), which falls below the 80% fairness threshold, so you should flag and fix this bias.

Action needed:

Automate audit scripts so your bias owner isn’t manually crunching spreadsheets.
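A minimal audit script for the 4/5ths rule might look like the sketch below. It reuses the numbers from the example above; the function names and the input layout are assumptions for illustration, and each group's rate is compared with the highest group's rate.

```python
def selection_rate(selected, applicants):
    """Fraction of applicants in a group who were selected."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants

def impact_ratios(counts):
    """Map each group to its selection rate divided by the highest group's rate.

    `counts` maps group name -> (selected, applicants).
    """
    rates = {group: selection_rate(s, n) for group, (s, n) in counts.items()}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group had any selections")
    return {group: rate / best for group, rate in rates.items()}

def flag_four_fifths(counts, threshold=0.8):
    """Groups whose impact ratio falls below the 80% threshold."""
    return sorted(g for g, ratio in impact_ratios(counts).items() if ratio < threshold)

counts = {"men": (80, 100), "women": (60, 100)}

for group, ratio in impact_ratios(counts).items():
    print(f"{group}: impact ratio {ratio:.2f}")
print(flag_four_fifths(counts))  # ['women']
```

Running this on every screening stage each quarter gives your bias owner a consistent number to track instead of a hand-built spreadsheet.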

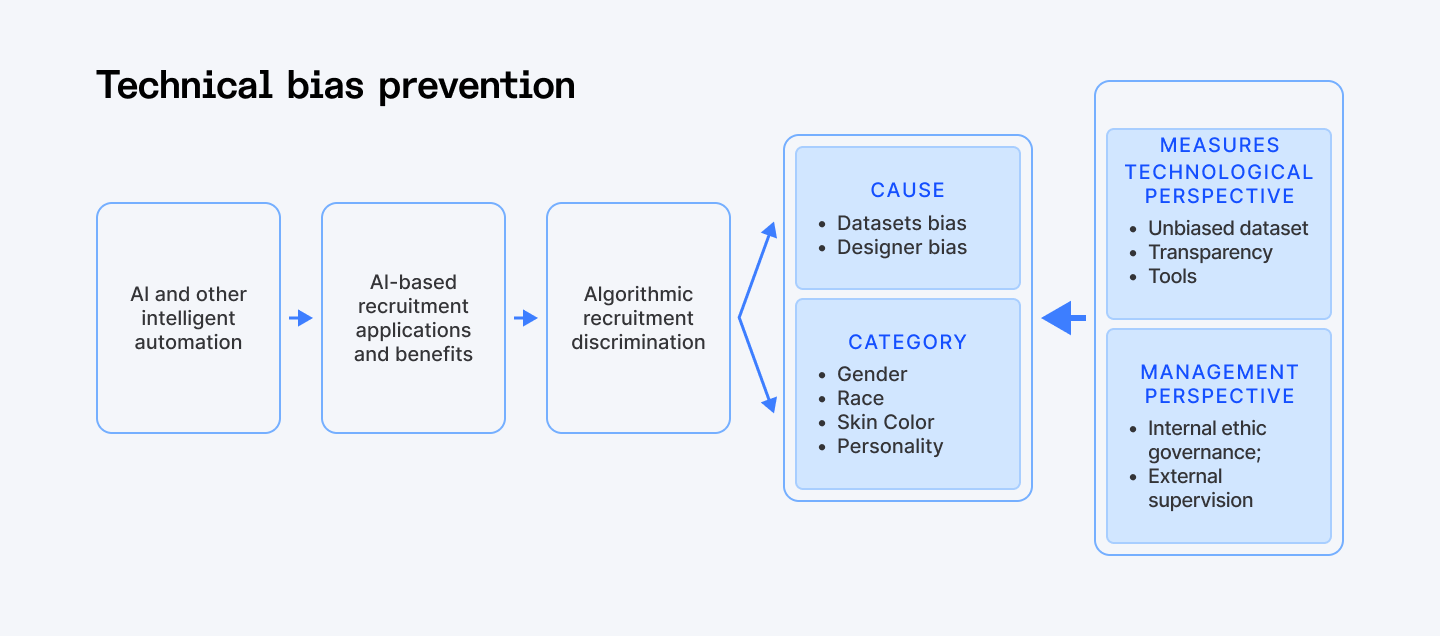

5. Technical Bias Prevention

Modern AI systems use specific methods to limit bias during training. Tools such as IBM's AI Fairness 360 and Google's What-If Tool help detect and reduce bias in AI hiring. AI ethics in recruitment requires these technical protections, not merely good intentions.

Verify this:

Ask your vendor what debiasing techniques they employ:

- Do they employ adversarial debiasing, reweighting, or fairness constraints?

- Can they detail how these function?
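To make one of these techniques concrete, here is a small pure-Python sketch of reweighting in its commonly described form: each training example gets a weight equal to P(group) × P(label) / P(group, label), so that group and outcome look statistically independent once the weights are applied. The data is invented, and this is not a description of how any particular vendor implements it.

```python
from collections import Counter

def reweighing_weights(samples):
    """Return {(group, label): weight} for a list of (group, label) training samples."""
    n = len(samples)
    group_counts = Counter(group for group, _ in samples)
    label_counts = Counter(label for _, label in samples)
    joint_counts = Counter(samples)
    return {
        (group, label): (group_counts[group] * label_counts[label]) / (n * joint_counts[(group, label)])
        for (group, label) in joint_counts
    }

def weighted_positive_rate(samples, weights, group, positive="hired"):
    """Share of a group's total weight that belongs to positive examples."""
    positive_weight = sum(weights[(g, y)] for g, y in samples if g == group and y == positive)
    total_weight = sum(weights[(g, y)] for g, y in samples if g == group)
    return positive_weight / total_weight

# Hypothetical historical data: group A was hired far more often than group B
samples = (
    [("A", "hired")] * 60 + [("A", "rejected")] * 40
    + [("B", "hired")] * 20 + [("B", "rejected")] * 80
)

weights = reweighing_weights(samples)
for group in ("A", "B"):
    rate = weighted_positive_rate(samples, weights, group)
    print(f"Group {group}: weighted hire rate {rate:.2f}")
```

Before weighting, the hire rates are 60% and 20%; after weighting, both groups sit at the overall rate of 40%, so a model trained on the weighted data no longer sees group membership as a predictor of the outcome.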

How Index.dev can help:

Index.dev integrates multiple debiasing techniques, like adversarial debiasing, re-sampling, and fairness audits, so you’re not just signing off on policy, but getting real, measurable bias reduction under the hood.

6. Explainable Decisions

The EU AI Act mandates explainability for hiring choices. Look for systems that use SHAP or LIME to break complex decisions down into understandable parts. Candidates appreciate clarity, and regulators demand it.

If your AI is unable to explain its decisions, how do you know you can trust them? Black box systems pose legal and practical issues.

Test this:

When your AI rank-orders candidates, can you see why? Can you explain the ranking to candidates? Do you know which factors mattered most?

Actions needed:

Rather than merely stating "Candidate A received 85/100," excellent AI reveals that 30 points were from experience, 25 from education, 20 from competencies, and 10 from other aspects.
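The breakdown above can be presented with a few lines of code once per-factor contributions are available. This sketch assumes the scoring system already exposes additive point contributions (which is what methods like SHAP approximate for complex models); the factor names and values simply mirror the example.

```python
def explain_score(contributions):
    """Return the total score and a ranked, human-readable breakdown.

    `contributions` maps factor name -> points that factor added to the score.
    """
    total = sum(contributions.values())
    if total == 0:
        raise ValueError("total score is zero, so shares are undefined")
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    lines = [
        f"{name}: {points} points ({points / total:.0%} of score)"
        for name, points in ranked
    ]
    return total, lines

# Hypothetical contributions for Candidate A
contributions = {"experience": 30, "education": 25, "competencies": 20, "other": 10}

total, lines = explain_score(contributions)
print(f"Candidate A: {total}/100")
for line in lines:
    print("  " + line)
```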

7. Human Supervision

According to Cambridge University research, hybrid human-AI systems reduce hiring bias significantly more than purely automated systems.

AI must assist people in making improved decisions, not eliminate human judgment altogether. Always involve humans in the loop for ultimate hiring decisions.

Verify:

Do humans approve all AI suggestions prior to extending offers? Can recruiters overrule AI if necessary? Do they understand when and why to do so?

Actions needed:

Pure automation magnifies bias. Opt for a hybrid method. Reducing bias in hiring with AI works best when humans and machines work together, each contributing their strengths.

8. Compliance with Regulations

Regulations on AI hiring evolve quickly. Your tools need to comply with existing legislation and keep pace with new rules. Missing an update exposes you to fines and reputational damage.

Some key regulations are:

- NYC Local Law 144 (bias audits mandatory)

- EU AI Act (hiring as high-risk)

- GDPR Article 22 (rights for automated decision-making)

Verify this:

- Can your supplier supply compliance reports?

- Do they monitor changes in regulation?

- Have they refreshed their systems to accommodate new obligations?

Actions needed:

Be cautious of vendors who seem unaware of these rules; they may expose your business to legal risk.

Ignorance isn’t a defense: courts hold you accountable even if you outsourced the technology.

9. Independent Verification

Fresh eyes catch hidden issues. Internal audit has its merits, but external verification lends legitimacy and usually discovers blind spots that internal groups overlook.

Trusted auditors include BSI, ICO, and the Algorithmic Justice League. External audits improve fairness metrics by 15% on average.

Check this:

- Has an independent firm audited your AI hiring tools?

- Do they have experience with employment AI?

- Can you see their methodology and results?

Budget tip:

Bundle your bias audit with other compliance audits to save costs.

10. Candidate Experience

Fair hiring isn’t just about code; it’s about the people on the other side of your screen. If candidates feel misled or confused by AI, you’ll lose their trust (and your employer brand).

Check this:

Do you ask candidates about their experience with your AI screening? Can they opt out of automated screening? Do you explain how AI affects their application?

Actions needed:

Gather anonymous feedback and scan for patterns. If several candidates from the same backgrounds report unfair treatment, treat it as a red flag, share the insights with your bias owner and vendor, and publish a brief update to applicants (e.g. “We’ve improved X based on your feedback”).

Bias Detection Comparison Table

| Feature | Basic AI Tools | Index.dev Solution | Best Practice Standard |
| --- | --- | --- | --- |
| Training Data Transparency | None provided | Full documentation | Complete demographic breakdown |
| Bias Audit Frequency | Annual (if any) | Quarterly | Monthly monitoring |
| Explainability | Black box | SHAP/LIME supplied | Full decision breakdown |
| Human Oversight | Optional | Required | Always mandatory |
| Compliance Documentation | Limited | Comprehensive | All regulations covered |
| Independent Audits | Rare | Regular | Annual minimum |

Implementation Steps

Inclusive hiring takes systematic effort, not quick fixes. Try implementing your organization’s AI hiring plans in phases:

Phase 1 - Assessment (Week 1-2)

- Complete this checklist for your current tools

- Identify gaps and risks

- Document your compliance status

Phase 2 - Vendor Evaluation (Week 3-4)

- Ask vendors to address all 10 points

- Request compliance documentation

- Arrange independent audits

Phase 3 - Deployment (Week 5-8)

- Phase in new tools

- Train employees to detect bias

- Set monitoring procedures

Find out how to hire developers faster with AI (without having a recruiter on your team).

Moving Forward

Reducing bias in AI hiring is about building fair, transparent processes that bring you better candidates and a stronger employer brand.

This checklist lays out every step, from naming your bias owner to auditing your data, so you can vet any AI tool with confidence.

Building fair hiring isn’t a one-and-done project; it’s a partnership between your team and your AI.

Start today by assigning ownership, running your first audit, and demanding full transparency. The question is not whether you can afford to adopt these practices but whether you can afford not to.

Hire from the top 5% of vetted devs with fair, bias-checked processes, 48-hour matching, and a 30-day free trial. Start unbiased hiring today!