Your theorem proofs keep hitting dead ends. You need something that actually understands formal logic. That's where DeepSeek Prover V2 671B comes in—a 671-billion parameter heavyweight that's smashing benchmarks at 88.9% on MiniF2F and solving Putnam competition problems that would stump most of us.

But here's the thing: capability doesn't mean accessibility. You need to know where to run it, how much it costs, and whether it actually integrates with your stack.

We tested five platforms (Together AI, DeepInfra, Baseten, PPInfra, and PPIO) and also cover OpenRouter and Fireworks AI, so you get the real story, not the marketing speak. By the time you finish this guide, you'll know exactly where to deploy Prover V2 and why.

Ready to build breakthrough tools like DeepSeek? Join Index.dev and match with global teams shaping the future of AI.

What is DeepSeek Prover V2 671B?

DeepSeek Prover V2 is an open-source language model built for formal logic and mathematics. You describe a problem or idea, in plain English or as a Lean 4 statement, and it produces a step-by-step formal proof. In other words, it turns what you're thinking into something a proof checker can verify against strict mathematical rules.

This focus on formal math means the model can tackle problems that even skilled humans find challenging. In fact, DeepSeek Prover V2 achieves near state-of-the-art results on math benchmarks – for example, it solves about 88.9% of the MiniF2F test problems (a standard theorem-proving benchmark), outperforming even GPT-4 (which scores ~75%) on those tasks.

It isn't your typical LLM: it's specialized for formal theorem proving in Lean 4. If you're wondering how it compares to general-purpose models like ChatGPT (spoiler: they solve different problems), check our DeepSeek vs ChatGPT comparison to understand why Prover V2 is purpose-built.

What are the key features of DeepSeek-Prover V2?

1. DeepSeek-Prover V2 uses a massive 671B-parameter model built for efficiency

DeepSeek-Prover V2 is built on a Mixture-of-Experts architecture that contains 671 billion parameters in total.

However, the model doesn't use all of them at once. Instead, it activates only about 37 billion parameters per token, so each step costs roughly as much compute as a 37B dense model. All 671 billion parameters still have to be held in memory, though, so the savings are in compute per token rather than in total hardware footprint.
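To make the "only about 37 billion active" idea concrete, here is a toy sketch of top-k expert routing in plain Python. It is a simplified illustration under our own assumptions (softmax gating, scalar "experts", made-up names like `route_top_k` and `moe_forward`), not DeepSeek's actual router, which differs in its gating details.

```python
import math
from typing import Callable, List, Tuple

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x: float, experts: List[Callable[[float], float]],
                gate_logits: List[float], k: int) -> float:
    """Only the k selected experts are evaluated; the others are skipped entirely."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))

# Toy setup: 8 "experts" (expert i multiplies its input by i + 1), 2 active per input.
experts = [lambda x, a=a: a * x for a in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]
print(route_top_k(logits, k=2))  # experts 4 and 1 carry all the weight
print(moe_forward(1.0, experts, logits, k=2))
```

In the real model the same principle applies at a much larger scale: roughly 37/671, or about 5.5%, of the parameters do work for any given token.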

2. It supports extremely long inputs (up to 163,840 tokens)

Unlike most models that struggle with long contexts, DeepSeek-Prover V2 can process up to 163,840 tokens in a single input. This allows the model to handle large mathematical problems, complete multi-theorem proofs, or consider multiple definitions and lemmas at once.

This helps it stay logically consistent across long and complex reasoning chains.

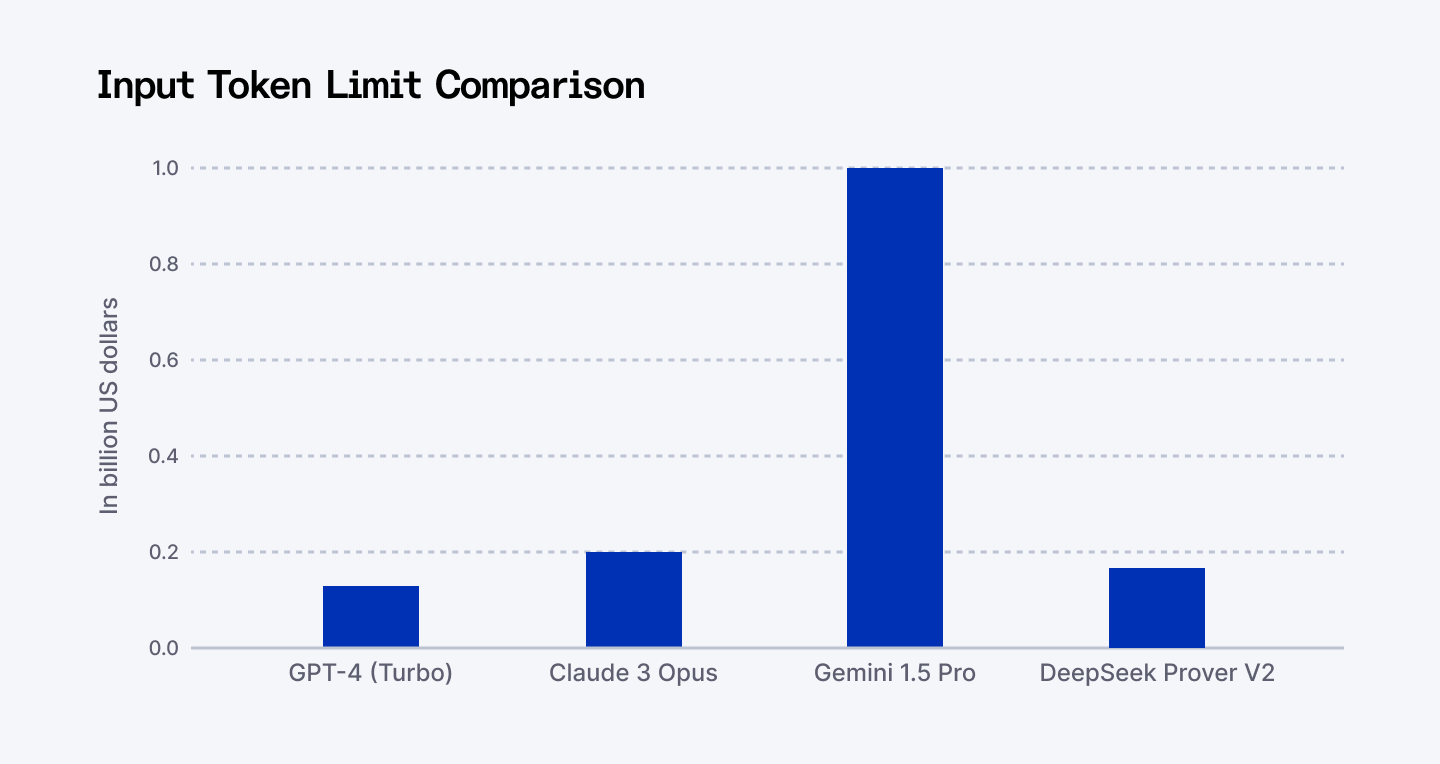

Here is a bar chart showing the input token limit comparison between top models, including DeepSeek Prover V2.

3. The model is fine-tuned to generate formal proofs in Lean 4

DeepSeek-Prover V2 is not a general-purpose model. It is fine-tuned specifically to write formal proofs in Lean 4, a proof assistant used in academic mathematics.

This means it can take an intuitive explanation or an informal chain of thought and turn it into a rigorous, machine-verifiable proof using Lean syntax. This feature is especially valuable for mathematicians, researchers, and formal methods engineers.
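As a small illustration of what "informal to formal" means, here is a hand-written Lean 4 example (our own, not model output), assuming a project with Mathlib available. The informal claim "the sum of two even natural numbers is even" becomes a theorem the Lean kernel can check.

```lean
import Mathlib

-- Informal: "If a and b are even, then a + b is even."
-- In Mathlib, `Even a` unfolds to `∃ r, a = r + r`.
theorem sum_of_evens_is_even (a b : ℕ) (ha : Even a) (hb : Even b) :
    Even (a + b) := by
  obtain ⟨x, hx⟩ := ha      -- hx : a = x + x
  obtain ⟨y, hy⟩ := hb      -- hy : b = y + y
  exact ⟨x + y, by omega⟩   -- a + b = (x + y) + (x + y) is linear arithmetic
```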

4. It learns from a recursive subgoal decomposition training method

To prepare the model for formal reasoning, the researchers designed a cold-start training process. They used DeepSeek-V3 to break down difficult theorems into smaller subgoals.

A smaller 7B model then solved these subgoals. The complete solutions, along with the informal reasoning steps, were combined to create a dataset that teaches the model to connect intuitive thinking with formal logic.
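The toy Lean 4 sketch below illustrates the decomposition idea in simplified form. It is our own example, assuming Mathlib, and is not taken from DeepSeek's dataset or prompts. A high-level step writes the proof as a chain of `have` subgoals left open with `sorry`, and a prover then closes each subgoal separately.

```lean
import Mathlib

-- Stage 1: a high-level sketch. Each `have` is a subgoal; `sorry` marks it as open.
-- (Lean accepts this, but warns that the theorem depends on `sorry`.)
theorem odd_of_mod_four_sketch (n : ℕ) (h : n % 4 = 3) : n % 2 = 1 := by
  have h1 : n = 4 * (n / 4) + 3 := by sorry
  have h2 : n = 2 * (2 * (n / 4) + 1) + 1 := by sorry
  sorry

-- Stage 2: each subgoal is closed on its own (here with the `omega` tactic),
-- yielding a complete, machine-checked proof.
theorem odd_of_mod_four (n : ℕ) (h : n % 4 = 3) : n % 2 = 1 := by
  have h1 : n = 4 * (n / 4) + 3 := by omega
  have h2 : n = 2 * (2 * (n / 4) + 1) + 1 := by omega
  omega
```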

5. The model improves through reinforcement learning based on correctness

After the initial training, DeepSeek-Prover V2 undergoes reinforcement learning. The model receives binary feedback—each proof is either correct or incorrect.

This simple but powerful reward system helps the model learn how to align informal reasoning with Lean's strict proof-checking rules. Over time, the model becomes better at producing logically valid complete proofs.
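Here is a minimal sketch of how binary proof-checking feedback can become a training signal. It assumes a group-relative normalization in the style of DeepSeek's GRPO: several proofs are sampled for the same theorem, and each reward is compared with the group average. The `verify` callable is a hypothetical stand-in for running the Lean checker, not part of any real API.

```python
from statistics import mean, pstdev
from typing import Callable, List

def binary_rewards(proofs: List[str], verify: Callable[[str], bool]) -> List[float]:
    """1.0 if the checker accepts the proof, 0.0 otherwise: no partial credit."""
    return [1.0 if verify(p) else 0.0 for p in proofs]

def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Score each sample relative to its group: above-average proofs get a
    positive advantage, below-average ones a negative advantage."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical verifier for illustration only: reject any proof containing
# `sorry`. A real setup would compile the proof with Lean.
def toy_verify(proof: str) -> bool:
    return "sorry" not in proof

samples = ["by omega", "by sorry", "by simp", "by sorry"]
rewards = binary_rewards(samples, toy_verify)
print(rewards)  # [1.0, 0.0, 1.0, 0.0]
print(group_advantages(rewards))
```

One consequence of this design: if every sample in a group fails (or every one succeeds), all advantages are zero, so that theorem contributes no learning signal in that step.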

6. DeepSeek-Prover V2 achieves state-of-the-art performance in math benchmarks

This model outperforms many existing systems in formal mathematics.

It achieves an 88.9% pass rate on the MiniF2F benchmark, which is known for its difficulty. It also successfully solves 49 out of 658 problems from the PutnamBench dataset, which includes advanced university-level competition problems.

These results show that DeepSeek-Prover V2 sets a new standard for open-source theorem-proving models.
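For scale, the PutnamBench figure works out as follows (simple arithmetic on the numbers above):

```latex
\frac{49}{658} \approx 0.0745 \approx 7.4\%
```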

7. The creators introduced a new benchmark dataset called ProverBench

To test the model across a wide range of difficulty levels, the team released ProverBench, a dataset with 325 problems.

This includes 15 problems from the AIME competitions and 310 problems from textbooks and math tutorials. Topics covered include calculus, linear and abstract algebra, number theory, real and complex analysis, and probability. This benchmark allows developers and researchers to evaluate the model in both classroom and competition settings.

8. You can choose between two model sizes based on your needs

DeepSeek-Prover V2 is available in two sizes:

- The 7B model is lighter and based on Prover V1.5. It supports up to 32K tokens, making it ideal for experimentation or lower-resource environments.

- The 671B model delivers full power, long-context handling, and top-tier performance for serious formal proof automation tasks.

9. The model is fully open-source and free to use

DeepSeek-Prover V2 stands out because it is open-source under the MIT License, which permits both research and commercial use. The model weights are available to download, and you can also try it online for free via Hugging Face and OpenRouter.

This accessibility allows developers, educators, and researchers to build custom applications, run experiments, or even fine-tune the model without paying API fees.


Who is DeepSeek Prover V2 for?

DeepSeek Prover V2 is best suited for individuals and teams working in fields that require high levels of mathematical or logical precision.

Researchers using Lean 4 will find it especially useful for constructing and verifying formal proofs across a wide range of mathematical domains. Developers building safety-critical software systems can use the tool to ensure that their code meets strict correctness requirements through formal verification. It is also a valuable resource for students learning formal methods, offering step-by-step guidance in constructing proofs and deepening their understanding of logic.

Additionally, AI safety researchers can leverage DeepSeek Prover V2 to formally analyze and verify AI behaviour, making it a powerful tool for those working on alignment and trustworthy AI systems.

DeepSeek has released several specialized models for different tasks. Prover V2 is purpose-built for theorem proving, while DeepSeek R1 is a general reasoning model. Models from other labs, such as xAI's Grok 3, target different workloads entirely.

If you're trying to understand how these compare—and whether Prover V2 is the right choice for your use case—we've compared Grok 3 vs DeepSeek R1 in detail here. It gives context for where Prover V2 sits in the broader landscape.

The 5 Platforms We Tested: Which One's Right for You?

Not all inference platforms are created equal. We tested speed, cost, integration, and reliability. Here's the honest breakdown—including the gotchas most platforms won't tell you about.

| Platform | Model ID | Pricing Model | API Compatibility | Context Support | Status |
|---|---|---|---|---|---|
| Together AI | deepseek-ai/DeepSeek-Prover-V2-671B | Pay-per-token | OpenAI-compatible | Up to 163K tokens | Active |
| DeepInfra | deepseek-ai/DeepSeek-Prover-V2-671B | Pay-per-token (~$0.027/1K tokens) | OpenAI-compatible | Full context | Redirected* |
| Baseten | deepseek-prover-v2-671b | Usage-based | OpenAI-compatible | Full context | Active |
| PPInfra | deepseek-prover-v2-671b | Pay-per-token | OpenAI-compatible | Full context | Active |
| PPIO | deepseek-prover-v2-671b | Pay-per-token | REST API | Full context | Active |
| OpenRouter | deepseek/deepseek-prover-v2 | Aggregated pricing | OpenAI-compatible | Full context | Active (free tier available) |
| Fireworks AI | fireworks/deepseek-prover-v2 | Pay-per-token | OpenAI-compatible | 163K tokens | Active |

*Note: DeepInfra has redirected DeepSeek Prover V2 671B requests to DeepSeek-V3-0324 due to low usage. For dedicated Prover V2 access, consider Together AI or Baseten.
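Because a redirect like this may not be obvious from the response text alone, it can be worth checking which model actually answered. OpenAI-compatible chat completion responses include a `model` field, and the helper below (a hypothetical name, `served_model_matches`) compares it with the model you asked for. This assumes the provider reports the serving model in that field, which we have not verified for every platform.

```python
from typing import Any, Dict

def served_model_matches(response: Dict[str, Any], requested_model: str) -> bool:
    """Compare the `model` field of an OpenAI-style response with the request.

    The comparison is case-insensitive and ignores a provider prefix such as
    "deepseek-ai/", because platforms format model IDs differently.
    """
    served = str(response.get("model", ""))

    def normalize(model_id: str) -> str:
        return model_id.lower().split("/")[-1]

    return normalize(served) == normalize(requested_model)

# Example with a hand-written response dict (not real provider output):
fake_response = {"model": "deepseek-ai/DeepSeek-V3-0324", "choices": []}
if not served_model_matches(fake_response, "deepseek-ai/DeepSeek-Prover-V2-671B"):
    print("Warning: request was served by", fake_response["model"])
```

With the `openai` Python SDK, the same value is available as `response.model`.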

How to Access DeepSeek Prover V2 671B on Each Platform

Together AI DeepSeek Prover V2 671B

Together AI offers straightforward access to DeepSeek Prover V2 671B with OpenAI-compatible endpoints:

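```python
import os

from openai import OpenAI

# Together AI exposes an OpenAI-compatible endpoint, so the official openai SDK works.
client = OpenAI(
    base_url="https://api.together.xyz/v1",
    api_key=os.environ["TOGETHER_API_KEY"],  # keep the key out of source code
)

response = client.chat.completions.create(
    model="deepseek-ai/DeepSeek-Prover-V2-671B",
    messages=[{"role": "user", "content": "Prove that sqrt(2) is irrational in Lean 4"}],
)

print(response.choices[0].message.content)
```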
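The model usually returns its reasoning as prose, with the final proof in a fenced code block. A small helper like the hypothetical `extract_lean_code` below pulls out the last Lean block so it can be saved and checked with Lean. The fence labels it accepts (`lean4` and `lean`) are an assumption about the output format, so adjust them to what you actually see.

```python
import re
from typing import Optional

# Matches fenced blocks labelled lean4 or lean; `{3} means three backticks.
LEAN_BLOCK = re.compile(r"`{3}lean4?[ \t]*\n(.*?)`{3}", re.DOTALL)

def extract_lean_code(text: str) -> Optional[str]:
    """Return the last Lean code block in a model reply, or None if there is none."""
    blocks = LEAN_BLOCK.findall(text)
    return blocks[-1].strip() if blocks else None

# Build a sample reply programmatically (three backticks via "`" * 3).
reply = ("Plan: evaluate both sides.\n" + "`" * 3 + "lean4\n"
         "theorem t : 1 + 1 = 2 := by norm_num\n" + "`" * 3)
print(extract_lean_code(reply))  # theorem t : 1 + 1 = 2 := by norm_num
```

With the Together AI client, pass `response.choices[0].message.content` as the `text` argument.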

DeepInfra DeepSeek-Prover-V2-671B

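DeepInfra provides low-latency inference with competitive pricing. Per the note above, however, it currently redirects Prover V2 671B requests to DeepSeek-V3-0324, so confirm which model answers before relying on it: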

```bash
curl "https://api.deepinfra.com/v1/openai/chat/completions" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $DEEPINFRA_TOKEN" \
  -d '{"model": "deepseek-ai/DeepSeek-Prover-V2-671B", "messages": [{"role": "user", "content": "Your theorem here"}]}'
```

Baseten DeepSeek Prover V2 671B

Baseten offers managed deployment with automatic scaling for production workloads. You can access the model through Baseten's model library and call it with an OpenAI-compatible SDK.

PPInfra & PPIO DeepSeek Prover V2 671B

Both PPInfra and PPIO provide cost-effective alternatives for batch processing and high-volume inference tasks. Check their respective documentation for current availability and rate limits.
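For batch jobs against any of these pay-per-token APIs, rate limits are the usual failure mode. The sketch below retries a call with exponential backoff plus jitter. `call_with_backoff` and `RateLimited` are hypothetical names, and you would adapt the exception type to whatever your client raises (for example, the `openai` SDK raises `openai.RateLimitError` on HTTP 429).

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class RateLimited(Exception):
    """Hypothetical stand-in for a provider's HTTP 429 error."""

def call_with_backoff(fn: Callable[[], T], max_retries: int = 5,
                      base_delay: float = 1.0, max_delay: float = 30.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(); on RateLimited, wait base_delay * 2**attempt (capped, with
    random jitter) and retry, re-raising once max_retries retries are used up."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited:
            if attempt == max_retries:
                raise
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay * random.uniform(0.5, 1.0))  # jitter spreads out retries
    raise AssertionError("unreachable")

# Usage: a flaky call that is rate-limited twice, then succeeds.
attempts = {"n": 0}

def flaky() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimited()
    return "proof text"

print(call_with_backoff(flaky, sleep=lambda s: None))  # proof text
```

Injecting `sleep` keeps the function easy to test; in production you would leave the default `time.sleep`.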

Test Results for DeepSeek Prover V2

1. Prompt Used:

“What is the positive difference between 120% of 30 and 130% of 20?”
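For reference, the expected answer is easy to check by hand: 120% of 30 is 36, 130% of 20 is 26, so the positive difference is 10. Here is the same check as a one-line Lean 4 statement (our own, not the model's response; it assumes Mathlib for `norm_num` over the rationals):

```lean
import Mathlib

-- 1.2 * 30 - 1.3 * 20 = 36 - 26 = 10, computed exactly over ℚ
example : (120 : ℚ) / 100 * 30 - 130 / 100 * 20 = 10 := by norm_num
```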

Response:

2. Prompt Used:

“Complete the following in Lean 4 code.

Before producing Lean 4 code to prove the given problem, provide a detailed proof plan outlining the main proof steps and strategies.

The plan should highlight the key ideas, intermediate lemmas, and proof structure that will guide the construction of the final formal proof.”
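If you are calling the model through an API, a small template function keeps this prompt consistent across runs. The sketch below (a hypothetical `build_prover_prompt`) wraps a Lean 4 theorem statement, ending in `sorry`, with plan-then-prove instructions. The exact wording is our paraphrase of the prompt above, so check the model card for the recommended template.

```python
def build_prover_prompt(formal_statement: str) -> str:
    """Wrap a Lean 4 statement (ending in `sorry`) with plan-then-prove instructions."""
    fence = "`" * 3  # built programmatically so the snippet stays fence-safe
    return (
        "Complete the following Lean 4 code:\n\n"
        f"{fence}lean4\n{formal_statement.strip()}\n{fence}\n\n"
        "Before producing the Lean 4 code to formally prove the given theorem, "
        "provide a detailed proof plan outlining the main proof steps and strategies.\n"
        "The plan should highlight key ideas, intermediate lemmas, and proof "
        "structure that will guide the construction of the final formal proof."
    )

statement = "theorem add_zero' (n : ℕ) : n + 0 = n := by\n  sorry"
print(build_prover_prompt(statement))
```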

Response:

Detailed Proof and Abstract Plan

Lean 4 Code and Explanation:

3. Prompt Used:

“Alice wants to prove to Bob that she knows the square root of a number `N` without revealing the actual square root.”

Response:

DeepSeek Prover V2 responded with three different proof methods for this.

Proof 1:

Proof 2:

Formal zero-knowledge proof for square root.

Proof 3:

Zero-knowledge proof using Fiat-Shamir Heuristic
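For readers who want to see the underlying protocol run, here is a toy Python sketch of the classic Fiat-Shamir identification protocol and its non-interactive variant obtained with the Fiat-Shamir heuristic. It assumes the usual reading of the problem: the square root is taken modulo a public composite modulus `n` whose factorization is kept secret (an ordinary integer square root is easy to compute, so there would be nothing to hide). The public value `v` plays the role of the prompt's `N`. This is our own illustration, not a reproduction of the model's answers, all function names are hypothetical, and the tiny parameters are insecure by design.

```python
import hashlib
import secrets
from math import gcd
from typing import List, Tuple

def commit(n: int) -> Tuple[int, int]:
    """Prover picks a random unit r mod n and sends x = r^2 mod n."""
    while True:
        r = secrets.randbelow(n - 1) + 1
        if gcd(r, n) == 1:
            return r, pow(r, 2, n)

def respond(r: int, s: int, b: int, n: int) -> int:
    """Reveal r (b = 0) or r * s (b = 1); neither value alone reveals s."""
    return (r * pow(s, b, n)) % n

def check(x: int, y: int, b: int, v: int, n: int) -> bool:
    """Verifier accepts iff y^2 == x * v^b (mod n)."""
    return pow(y, 2, n) == (x * pow(v, b, n)) % n

def interactive_proof(s: int, v: int, n: int, rounds: int = 64) -> bool:
    """Run the protocol; a prover without s survives each round with probability 1/2."""
    for _ in range(rounds):
        r, x = commit(n)
        b = secrets.randbelow(2)  # verifier's random challenge bit
        if not check(x, respond(r, s, b, n), b, v, n):
            return False
    return True

def challenge_bits(v: int, n: int, xs: List[int]) -> List[int]:
    """Fiat-Shamir heuristic: derive challenges by hashing public data and commitments."""
    assert len(xs) <= 256, "one SHA-256 digest supplies at most 256 bits"
    digest = hashlib.sha256(f"{n}:{v}:{xs}".encode()).digest()
    return [(digest[i // 8] >> (i % 8)) & 1 for i in range(len(xs))]

def fs_prove(s: int, v: int, n: int, rounds: int = 64) -> Tuple[List[int], List[int]]:
    pairs = [commit(n) for _ in range(rounds)]
    xs = [x for _, x in pairs]
    bits = challenge_bits(v, n, xs)
    ys = [respond(r, s, b, n) for (r, _), b in zip(pairs, bits)]
    return xs, ys

def fs_verify(v: int, n: int, xs: List[int], ys: List[int]) -> bool:
    bits = challenge_bits(v, n, xs)
    return len(xs) == len(ys) and all(check(x, y, b, v, n) for x, y, b in zip(xs, ys, bits))

# Toy parameters only: a real n must be a large modulus with secret factors.
n = 61 * 53        # 3233
s = 123            # Alice's secret square root
v = pow(s, 2, n)   # public value Bob sees
print(interactive_proof(s, v, n))             # True
print(fs_verify(v, n, *fs_prove(s, v, n)))    # True
```

The non-interactive version replaces Bob's random bits with hash output, so Alice can publish a single transcript that anyone can check later.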

4. Prompt used:

“Prove that for any two sets A and B, their intersection is a subset of A; that is, A ∩ B ⊆ A. Provide the complete Lean 4 proof and a one-sentence explanation of the key step.”
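For comparison, here is a short hand-written Lean 4 proof (our own, not the model's output, assuming Mathlib; Mathlib also provides this fact as `Set.inter_subset_left`). The key step is that membership in `A ∩ B` is by definition the conjunction `x ∈ A ∧ x ∈ B`, so its first component is a proof of `x ∈ A`.

```lean
import Mathlib

theorem my_inter_subset_left {α : Type*} (A B : Set α) : A ∩ B ⊆ A := by
  intro x hx   -- assume x ∈ A ∩ B; the goal becomes x ∈ A
  exact hx.1   -- x ∈ A ∩ B unfolds to x ∈ A ∧ x ∈ B, so take the left part
```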

Response:

5. Prompt used:

“Here is a recursive function in Lean 4 that computes the sum of the first n natural numbers:

```lean
def sum_upto : ℕ → ℕ
  | 0 => 0
  | n + 1 => (n + 1) + sum_upto n
```

Prove that this function correctly computes n(n+1)/2 for all n ≥ 0. Provide the Lean 4 proof and explain how induction is applied.”
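One way to prove this in Lean 4 (our own sketch, not the model's output, assuming Mathlib for the `ring` tactic) is to avoid natural-number division during the induction. First prove `2 * sum_upto n = n * (n + 1)`: the base case is immediate, and the inductive step adds `2 * (k + 1)` to both sides. Then recover the division form with `omega`, which handles division by a numeral.

```lean
import Mathlib

def sum_upto : ℕ → ℕ
  | 0 => 0
  | n + 1 => (n + 1) + sum_upto n

-- Division-free version, proved by induction on n.
theorem two_mul_sum_upto (n : ℕ) : 2 * sum_upto n = n * (n + 1) := by
  induction n with
  | zero => simp [sum_upto]
  | succ k ih =>
    -- unfold one step of the recursion: sum_upto (k + 1) = (k + 1) + sum_upto k
    show 2 * ((k + 1) + sum_upto k) = (k + 1) * (k + 1 + 1)
    rw [mul_add 2, ih]  -- goal: 2 * (k + 1) + k * (k + 1) = (k + 1) * (k + 1 + 1)
    ring

-- The statement from the prompt, using natural-number division.
theorem sum_upto_eq (n : ℕ) : sum_upto n = n * (n + 1) / 2 := by
  rw [← two_mul_sum_upto n]  -- replace n * (n + 1) with 2 * sum_upto n
  omega
```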

Response:

Explanation:

Choosing the Right Platform

First time with Prover V2? Start here:

Use OpenRouter's free tier. No credit card. No setup fees. Prove one theorem end-to-end. This takes 10 minutes and costs nothing. If you hate it, you lost no money. If you love it, you're now informed.

Running production workloads?

Together AI or Baseten. Both have actual SLAs, proven uptime, and support teams that answer emails. You'll spend more, but you won't be debugging failures at 2 AM.

But before you pick a platform, understand where your data goes: when you call Prover V2 through a hosted API, your prompts and outputs pass through that provider's systems. Check our guide on DeepSeek security and privacy risks if you're in a regulated industry or handling sensitive user data. Some teams opt for alternative models based on compliance requirements.

Scaling to thousands of proofs per week?

PPInfra or PPIO. Cost-effective for bulk processing. Pricing is usually 30–40% cheaper than Together AI, but you're doing more DevOps yourself.

Need sub-50ms latency?

DeepInfra or Fireworks AI. Both run fast infrastructure, but double-check that Prover V2 671B is actually available: DeepInfra currently redirects it to DeepSeek-V3-0324, and some platforms deprioritize low-usage models.

Determined to self-host?

The honest answer: it's cheaper to use Together AI unless you're running millions of inferences per month. Self-hosting needs:

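- Several data-center GPUs for the 671B MoE: the weights alone take roughly 671 GB at 8-bit precision (about 1.3 TB at 16-bit), more than any single card offers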

- Or stick with the 7B model on a 4090 (doable, but tight)

- Infrastructure expertise to handle failures

- Patience for scaling headaches
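Those memory figures come from simple arithmetic: weight memory is roughly parameter count times bytes per parameter. A minimal sketch (the helper name is ours; it ignores the KV cache, activations, and framework overhead, all of which add to the total):

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the model weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

for name, params in [("Prover V2 671B", 671e9), ("Prover V2 7B", 7e9)]:
    for bits in (16, 8, 4):
        print(f"{name}: {bits}-bit weights ≈ {weight_memory_gb(params, bits):,.1f} GB")
```

Even at 4 bits, the 671B weights need about 335 GB, which is why the full model is a multi-GPU deployment. The 7B model's roughly 14 GB of 16-bit weights fit on a 24 GB RTX 4090, leaving limited room for the long-context cache.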

If you have those, the weights are on Hugging Face (download them from the deepseek-ai/DeepSeek-Prover-V2-671B repository). If you don't, use a platform.

Final words

DeepSeek Prover V2 isn't hype. The 88.9% MiniF2F score and the 49 solved PutnamBench problems are real. But here's what matters: can you actually use it?

The answer is yes, and it's easier than you think. You don't need to be a researcher with institutional access. You don't need to self-host on a $50K server.

Start small: Spend 10 minutes on OpenRouter's free tier. Prove a theorem. See how it feels.

Go bigger: Pick Together AI or Baseten when you're ready to integrate into your workflow.

Scale fast: Once it has proven itself in your workflow, PPInfra or PPIO can handle bulk workloads cheaply.

The formal verification gap is closing. Prover V2 is a big part of why. Don't get left behind.

For Developers:

Ready to build the future of AI like DeepSeek Prover V2? Join Index.dev and get matched with top global companies!

For Clients:

Need top AI engineers fast? Hire the vetted 5% on Index.dev, matched in 48 hours, with a 30-day trial included.