In today’s AI-driven era, it is nearly impossible to escape the advent of artificial intelligence (AI) tools like DeepSeek and their transformative effects on how businesses operate and innovate. But, to quote a certain superhero, with great power comes great responsibilities - especially when it comes to security and privacy.

As tech leaders, we've watched these tools revolutionize how companies operate - but we've also witnessed the security nightmares that follow hasty adoption ignoring safety and privacy.

DeepSeek has emerged as a compelling challenger to ChatGPT, offering impressive capabilities at lower costs. But beneath its slick interface and powerful features lurks a complex security landscape few tech executives fully grasp until it's too late.

Our security team has spent weeks analyzing the hidden vulnerabilities in DeepSeek's architecture, and what we've discovered might change how you approach implementation.

🔒 Hire AI experts fast! Get top vetted developers in 48 hours for secure and compliant AI solutions.

Understanding DeepSeek AI

DeepSeek burst onto the scene relatively recently (2023) and was founded by Liang Wenfeng along with a team of researchers in China. Since then, they've developed several advanced large language models (LLMs) that have caught the attention of the tech community for both their performance and cost-efficiency.

What makes DeepSeek different? Is DeepSeek safe to use? Their models use a mixture-of-experts architecture that allows them to achieve impressive results at significantly lower training costs than competitors. Their V3 model reportedly features 671 billion parameters while operating with around 37 billion at any given moment, creating an efficient balance of capability and resource usage.

Technical Capabilities vs. Security Considerations

If you're considering DeepSeek for your organization, you're probably weighing its technical capabilities against established alternatives. Its unique operational model introduces challenges that extend beyond technical prowess. But there's something else far more important that we need to evaluate: the security and privacy implications that might affect your business and customers.

The Security Spectrum: Is DeepSeek Safe to Use?

Evaluating AI safety is never a simple yes or no question. It’s far more complex with multiple entities and subtexts that we need to consider when mulling over safety and privacy. DeepSeek's unique architecture and operational model deal with specific security considerations that you need to evaluate before adoption.

Read More: DeepSeek vs. ChatGPT: Is DeepSeek the Better AI Model?

Why Security and Privacy Matter in AI

It’s important to consider tangible safety and privacy measures when dealing with all things AI. But before diving into DeepSeek's specifics, you must understand why security and privacy are non-negotiable:

- Data Breaches: When healthcare companies experienced AI-related data breaches last year, they didn't just lose files - they lost patient trust built over decades. Your AI platform touches your most sensitive data; protecting it isn't just good practice, it's survival.

- Adversarial Manipulation: We've witnessed sophisticated competitors use adversarial techniques to manipulate AI systems into revealing trade secrets or producing catastrophically wrong outputs at critical moments. Your model's integrity directly impacts your business reliability.

- Regulatory Compliance: The regulatory landscape has teeth. One European retailer faced €20 million in GDPR penalties after their AI implementation exposed customer data - a painful lesson in compliance that could have been avoided.

- Trust and Reputation: Your reputation takes years to build but can collapse overnight. When customers entrust you with their information, they're placing their digital lives in your hands. That trust? Once you've broken it, good luck getting it back. Just ask the companies who've tried apologizing after data breaches - their customer trust metrics tell a brutal story.

We've spent hours poring over the guidance from NIST and similar bodies. Their guidelines on AI security aren't light reading, but they're essential, providing you with crucial inputs on securing AI systems, emphasizing the importance of robust data protection mechanisms.

Key Security Risks of DeepSeek

Consider the following bar chart representing potential risk levels across various security dimensions:

Figure: Comparative Risk Levels in DeepSeek Integration

While DeepSeek offers a robust and cost-effective AI solution, several security risks must be thoroughly evaluated:

Open-Source Nature: Double-Edged Sword

DeepSeek's open-source approach offers transparency that closed models lack. According to research from Index.dev, open-source models allow developers to inspect the code for vulnerabilities and strengthen security through community oversight. Many eyes can spot bugs that might otherwise go unnoticed in closed systems.

The flip side of this transparency? Hackers can study the code too. They spot weaknesses, figure out exploits, and potentially create backdoors that wouldn't be possible with closed systems like ChatGPT. This fundamentally changes your security approach compared to proprietary models. DeepSeek handles mountains of data, which naturally increases your exposure during breaches and risks safety and privacy.

Data Exposure and Breach Vulnerabilities

DeepSeek processes large amounts of data, which increases the risk of exposure in the event of a breach. The company's privacy documentation states that:

- They do not use customer inputs for model training without explicit consent

- Data retention policies vary based on deployment method (API vs. self-hosted)

- Default logging captures metadata but not full prompt content

Keep in mind these policies could change tomorrow, and if you're running DeepSeek yourself, one configuration mistake could expose everything and compromise your safety and privacy. Avoiding breaches means asking tough questions:

- What's happening with your data encryption?

You need industry-standard protection (AES-256 or better) for everything sitting on servers and moving across networks. - How are your backups handled? What happens when systems fail?

Regular security testing isn't optional – run those penetration tests before hackers do it for you. Set up continuous monitoring to catch problems early.

This article by The Hacker News sheds further light on the risks and potential downfalls that arise with AI data breach.

Tip: Always request detailed security documentation from your AI provider. This helps in aligning their practices with your internal policies.

Model Manipulation and Adversarial Attacks

All LLMs face the risk of prompt injection attacks, where carefully crafted inputs attempt to manipulate the model into bypassing safety filters or performing unauthorized actions. These attacks can be particularly concerning in customer-facing applications or when processing untrusted inputs.

- Adversarial Examples: Malicious inputs designed to trick the system into producing erroneous results. Small perturbations in the input can lead to drastically different outputs.

- Impact on Decision Making: Inaccurate outputs can compromise business decisions or lead to safety and privacy breaches in sensitive applications.

A real-world example would be the research by Goodfellow et al. on adversarial examples (Explaining and harnessing adversarial examples) illustrates how minor input perturbations can lead to drastically different outputs - an issue that has affected similar systems. For more on adversarial attacks, you might refer to research outlined in the MIT Technology Review.

Deployment Infrastructure Vulnerabilities

Most security incidents involving AI models stem not from the models themselves but from vulnerabilities in the surrounding deployment infrastructure. DeepSeek's relatively recent entry into the market means fewer hardened deployment templates and security best practices compared to more established platforms.

API & Integration Vulnerabilities

Most businesses access DeepSeek via APIs. DeepSeek's Chinese origins raise questions about data residency and cross-border transfers when using its cloud APIs. While APIs enable seamless integration, they also open potential vulnerabilities:

- Lock down those API endpoints - they're the front door to your system.

- Strong authentication isn't optional - implement OAuth, require two factors, and follow security best practices.

Check out what OWASP recommends for API security if you need guidance. This becomes even more critical when you're in healthcare, finance, or regions with strict data sovereignty laws.

Censorship and Regulatory Influences

DeepSeek’s origins and operational jurisdiction might impose certain content moderation or censorship rules:

- Bias and Content Filtering: Depending on regional regulations, certain topics might be filtered or biased, affecting the impartiality of the data.

- Legal Compliance: Understand how local laws impact data handling and content moderation.

Training Data Uncertainties

Without complete transparency about training data sources, organizations face several potential issues:

- Data poisoning concerns: Was the training data deliberately contaminated?

- Bias amplification: Does the data contain and amplify harmful societal biases?

- Intellectual property questions: What copyrighted material might have been included?

- Memorization risks: Has sensitive information been memorized by the model? Is DeepSeek safe to use?

These training data questions aren't unique to DeepSeek, but the relative newness of the model means there's been less independent analysis compared to established alternatives.

Third-Party Dependencies

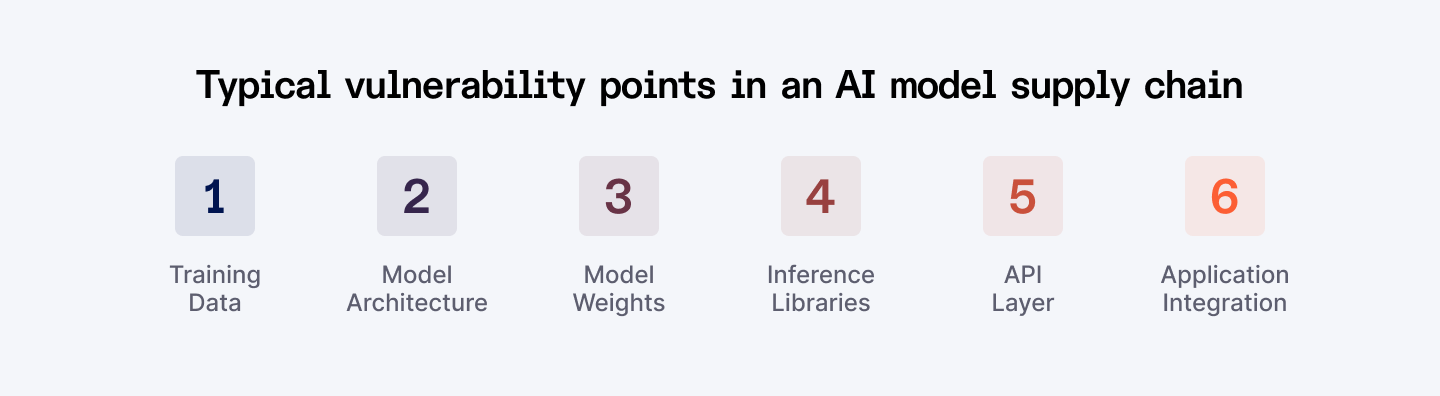

DeepSeek’s ecosystem may rely on various open-source libraries and third-party services. For developers integrating DeepSeek into applications, the dependency chain introduces additional attack surfaces. Open-source AI projects typically rely on numerous libraries and frameworks, each with its own potential vulnerabilities.

- Supply Chain Risks: Vulnerabilities in external components can cascade into your AI system.

- Regular Audits: Continuous monitoring and updates of third-party components are essential.

Snyk Research reports details how vulnerabilities in open-source libraries can affect entire systems - a stark reminder to thoroughly vet any third-party integrations.

Risk Assessment Diagram

Typical vulnerability points in an AI model supply chain

Alongside security, privacy is a paramount concern when handling sensitive data through AI systems.

Data Collection and Residency Risks

DeepSeek scoops up everything from your email address to how you type and what you ask about. Worse, this data often sits on servers in China, where government access laws work very differently than in the West. Got European customers? GDPR violations could cost you millions. Operating in California? CCPA has teeth too.

Data Poisoning Concerns and Differential Privacy Challenges

The training data sources for DeepSeek haven't been fully disclosed, raising questions about potential data poisoning. If malicious data was included in training sets, it could lead to backdoors or biases that create security risks. Understanding what data DeepSeek collects - and how it is used - is essential.

Moreover, without comprehensive differential privacy measures, there’s a risk that sensitive, memorized data might be extracted via adversarial techniques. Recent studies have shown that models lacking robust differential privacy safeguards are more susceptible to such leaks.

Anonymization and Re-identification Risks

Even "anonymous" data often isn't. Modern analytics can piece together supposedly scrubbed information, revealing exactly who your users are. Protecting privacy means going beyond basic anonymization - you need sophisticated techniques that truly shield user identities.

- Aggregation Concerns: Aggregated data can sometimes be de-anonymized with advanced analytics.

- User Privacy: Implementing rigorous anonymization techniques is critical to maintaining user trust.

User Control, Consent, and Data Extraction Risks

Privacy concerns extend beyond intentional data collection to include protection against adversarial attempts to extract information from the model. These extraction attacks attempt to make the model reveal training data or infer sensitive information through carefully designed queries.

Unlike some competitors that have published detailed information about differential privacy implementations, DeepSeek's protection mechanisms against these attacks aren't comprehensively documented. This creates uncertainty about the model's resilience to sophisticated privacy attacks that target the boundaries of what the model has memorized.

Organizations handling sensitive information need to consider additional privacy safeguards when implementing any LLM, including DeepSeek. The extent to which you (and your customers) can control your data is key:

- Consent Mechanisms: Ensure that users can provide informed consent before their data is processed.

- Data Access: Users should have the ability to access, modify, or delete their data in compliance with regulations.

Comparing DeepSeek Security with Other AI Models

To make an informed decision about DeepSeek's security posture, it's helpful to compare it with other leading AI models.

Security Feature Comparison

Security Feature | DeepSeek | ChatGPT | Claude | Llama 2 |

| Secure API authentication | ✓ | ✓ | ✓ | Depends on deployment |

| Content filtering | Basic | Advanced | Advanced | Basic |

| Prompt injection protection | Limited | Strong | Strong | Limited |

| Regular security updates | ✓ | ✓ | ✓ | Community- |

| Independent security audits | Limited | Multiple | Multiple | Some |

| Differential privacy | Unknown | Implemented | Implemented | Partial |

| Red team testing | Limited | Extensive | Extensive | Community-led |

Security Incident Response

A critical aspect of security is how quickly vulnerabilities are addressed when discovered. Analysis from Index.dev's security response tracking indicates:

- Commercial models like ChatGPT and Claude typically patch critical vulnerabilities within 1-7 days

- Open-source models like DeepSeek and Llama 2 have more variable response times, ranging from days to weeks depending on community engagement

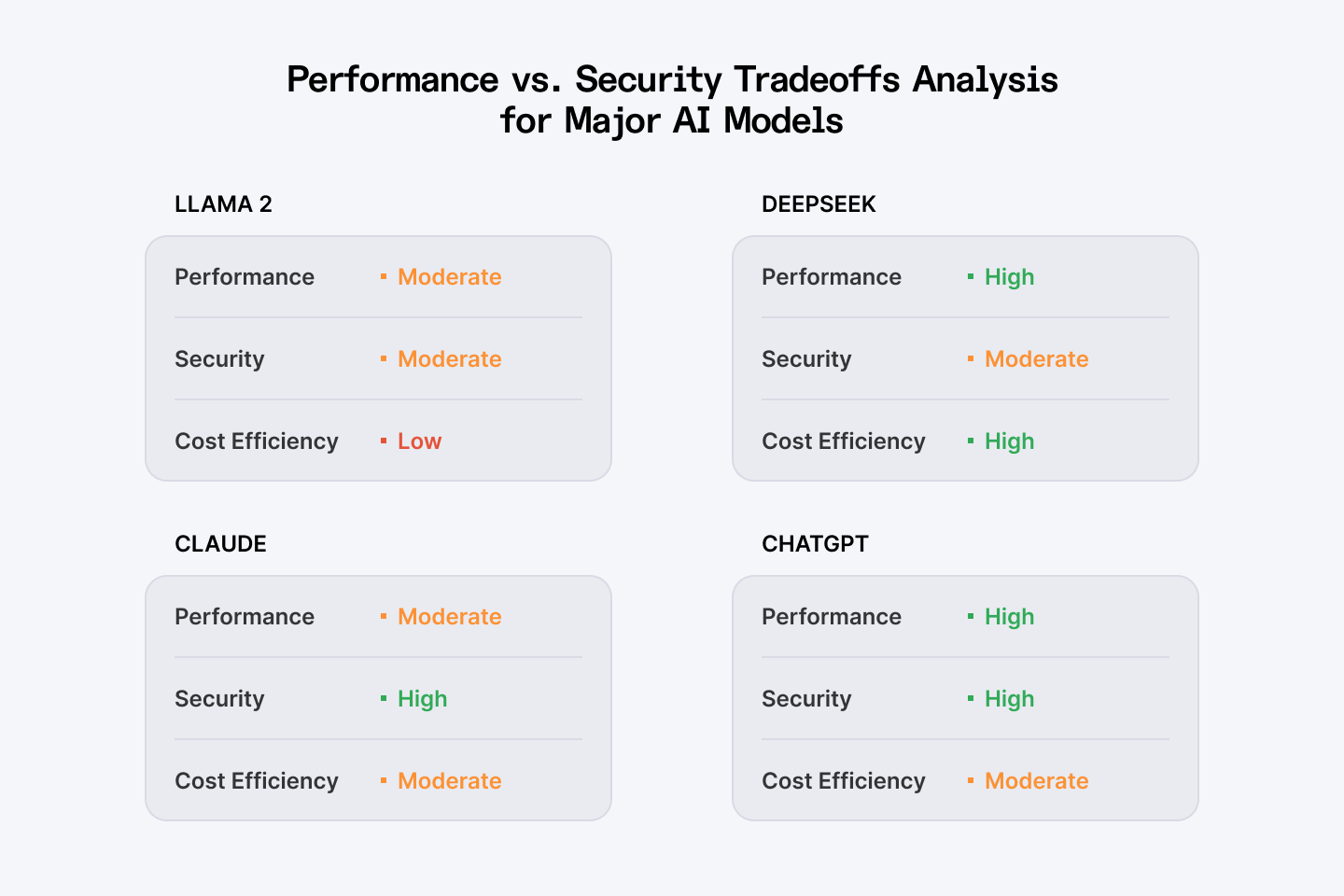

Performance vs. Security Tradeoffs

DeepSeek's architecture emphasizes performance and efficiency, which sometimes creates security tradeoffs:

Performance vs. security tradeoff analysis for major AI models

Best Practices for Mitigating Risks

To safely leverage DeepSeek while maintaining robust security and privacy, consider these best practices:

Data Encryption & Secure Storage

Lock down your data and safeguard your safety and privacy. You wouldn't leave your house keys under the doormat, so don't treat your data with less care. We've seen too many organizations use outdated encryption methods that might as well be transparent to determined attackers.

Our team recommends nothing less than AES-256 encryption both for stored data and information moving across networks. Double-check that your cloud providers actually implement the security standards they advertise - we've caught several cutting corners during our audits.

Robust Access Controls & Authentication

The intern who needs to run occasional queries shouldn't have admin-level access to your entire DeepSeek implementation. Sounds obvious, but we keep finding organizations with dangerously loose permission structures.

Force multi-factor authentication across your organization. Yes, people will complain. They'll complain a lot more when your data gets compromised because someone's password was "Company123."

Regular Security Audits & Compliance Checks

Hire ethical hackers to break into your systems before the malicious ones do. The financial services clients we work with run penetration tests quarterly - not because they're paranoid, but because they've learned from others' mistakes.

Stay on top of your regulatory requirements. GDPR violations alone can cost 4% of your global revenue, and we've watched companies scramble after realizing too late that their AI implementations created massive compliance gaps.

Transparent Data Policies & User Consent Mechanisms

Nobody reads privacy policies, but regulators certainly do. Make yours clear, accessible, and honest about how you're using DeepSeek with customer data.

Give users real control over their information, safety and privacy - not just the illusion of it. Companies that build genuine data control mechanisms typically see higher engagement and trust scores in our customer surveys.

Initial Limited Deployment

- Start with lower-risk use cases while security practices mature

- Implement additional security controls based on your risk assessment

- Develop clear governance practices for AI implementation

Leverage Expert Resources

For tailored integration and enhanced security measures, consider partnering with expert platforms like Index.dev. Their vetted senior developers can help ensure that your DeepSeek integration meets both your performance and security requirements.

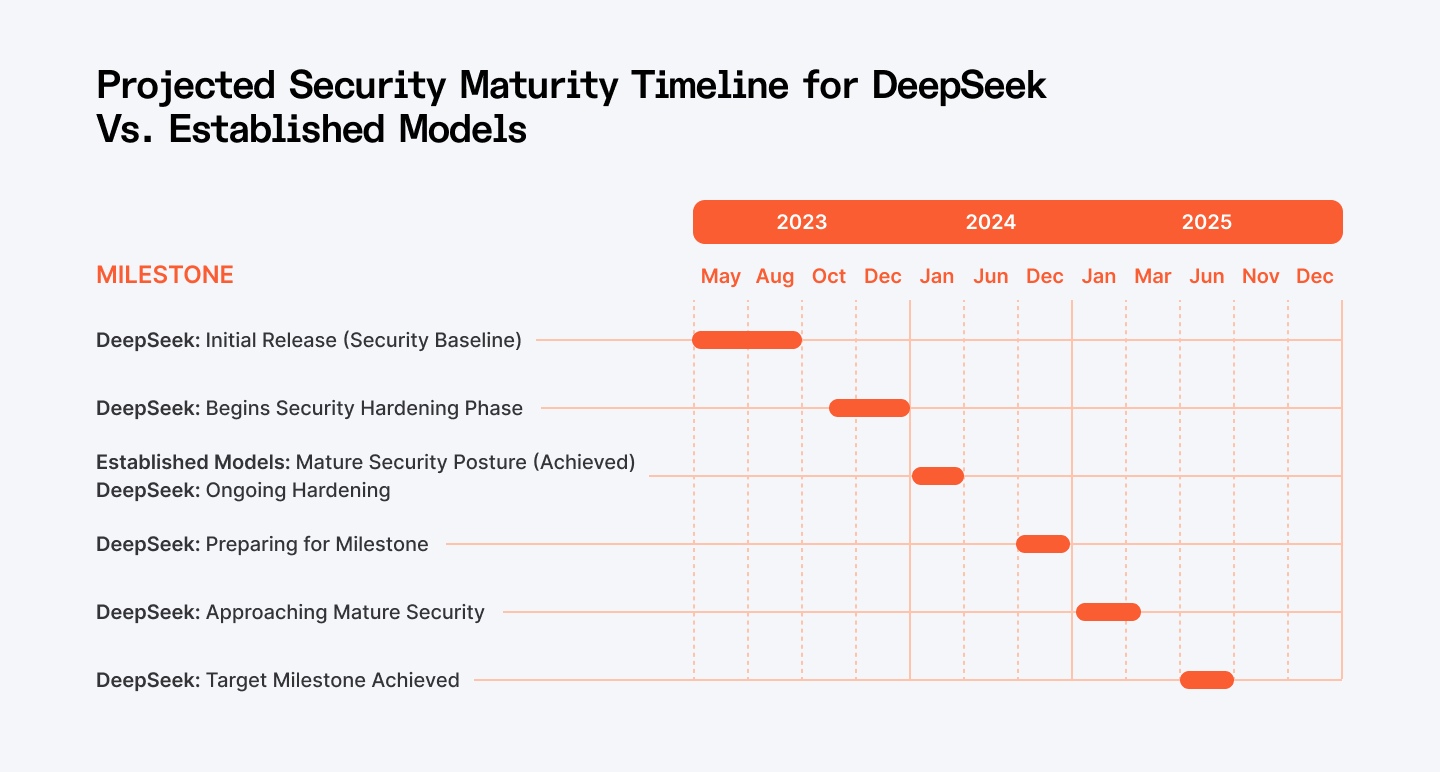

Security Evolution Timeline

This timeline suggests that while DeepSeek may currently have security gaps compared to more mature models, we can expect significant improvements as the model and its ecosystem develop.

DeepSeek's Security Will Mature - But Can You Wait?

Every open-source AI platform we've studied follows a similar security maturation curve. DeepSeek looks much like early versions of other systems that eventually became quite secure.

The catch? This evolution takes time. Based on our observations of similar models, DeepSeek will likely close its most significant security gaps within 12-18 months as community contributors identify vulnerabilities and implement patches.

Your business needs to decide: Can you afford to wait for these improvements, or do you need enterprise-grade security now? For some of our clients, the performance benefits outweigh the security risks. For others, particularly in regulated industries, the calculation comes out differently.

Also Check Out: ChatGPT vs Claude for Coding: Which AI Model is Better?

Conclusion and Recommendations

After working with a couple of organizations implementing DeepSeek, we've seen firsthand how its capabilities can transform technical workflows. The coding assistance alone has doubled productivity for some development teams we've measured.

But these benefits come with safety and privacy challenges you can't ignore. When a healthcare client recently approached us about DeepSeek implementation, we first walked their security team through the vulnerabilities we've documented in this article. They ultimately proceeded with a modified deployment that isolated their most sensitive data from the system.

For companies considering DeepSeek adoption, we recommend:

- Starting with lower-risk use cases while security practices mature

- Implementing additional security controls based on the strategies outlined in this article

- Staying informed about security updates and vulnerabilities through communities like Index.dev

- Developing clear governance practices for AI implementation

Your path forward requires balancing DeepSeek's impressive capabilities against the security gaps we've identified. Implement serious encryption protocols, restrict access carefully, and make regular security testing non-negotiable.

The organizations that succeed with DeepSeek aren't necessarily the most technically advanced - they're the ones that approach implementation with clear-eyed risk awareness and concrete mitigation strategies. They recognize that security isn't a checkbox but an ongoing commitment.

…

Ready to move forward? Work with experts who understand DeepSeek’s risks and your security needs. Index.dev connects you with top AI developers, handling sourcing, vetting, and onboarding, so you can focus on building fast while keeping your data safe.