Debugging is a fundamental yet resource-intensive part of software development. It is where productivity meets frustration. Logical flaws, edge case failures, and unclear error origins can cost developers hours—if not days—of effort.

That’s where Large Language Models (LLMs) like ChatGPT, Claude, Gemini, Deepseek, and LLaMA are changing the game.

These AI models are now more than just assistants— they’re becoming reliable debugging companions. From spotting hidden logic bugs to rewriting code for better maintainability, today’s LLMs bring contextual reasoning, code understanding, and multi-turn memory to your workflow.

But which one actually performs best when tested head-to-head?

This hands-on comparison evaluates the best LLM models across eight real-world debugging tasks to help you decide.

Want to build next-gen tools with world-class teams? Join Index.dev and get matched with top global companies working on cutting-edge AI.

How We Ranked These Top LLMs

To find the best LLMs for debugging and error detection, we ran real-world, hands-on tests instead of relying on assumptions. Each model—ChatGPT, Claude, Gemini, Deepseek, and LLaMA—was evaluated across eight practical parameters:

- Bug Detection Accuracy: Finding hidden logic errors that don't trigger syntax issues.

- Reasoning & Explanation Quality: Explaining why and where the code fails, not just fixing it.

- Fix Quality: Applying stable, accurate fixes without breaking valid logic.

- Test Case Handling: Covering edge cases like negative numbers, boundary values, and large primes.

- Multi-Turn Interaction: Maintaining context and applying updates through multiple correction rounds.

- Confidence Estimation: Admitting uncertainties, suggesting improvements, and flagging potential risks.

- Obfuscated Code Refactoring: Making unclear, messy code readable without changing behavior.

- Developer Helpfulness: Recommending better practices for maintainability, validation, and defensive coding.

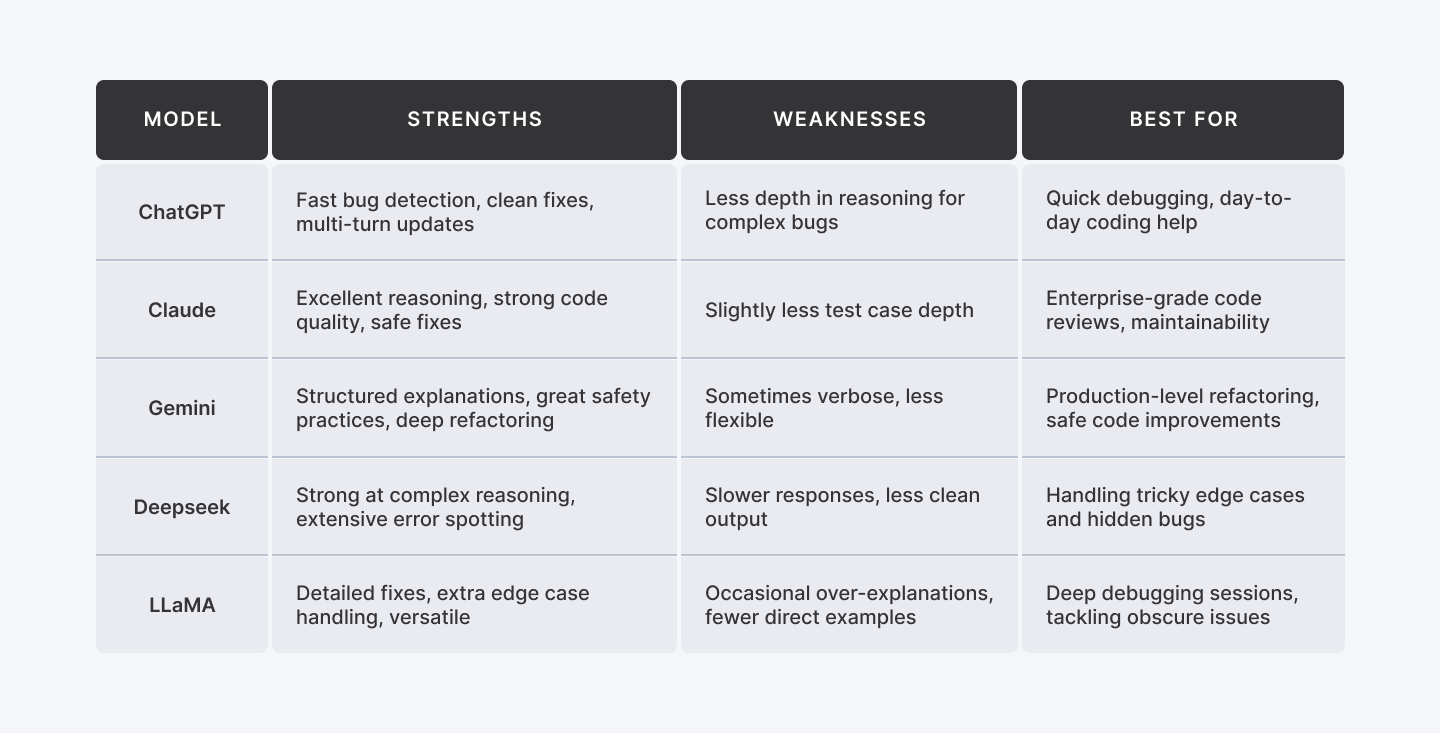

Here are the top LLMs for debugging and error detection at a glance:

| Model | Strengths | Weaknesses | Best For |

| ChatGPT | Fast bug detection, clean fixes, multi-turn updates | Less depth in reasoning for complex bugs | Quick debugging, day-to-day coding help |

| Claude | Excellent reasoning, strong code quality, safe fixes | Slightly less test case depth | Enterprise-grade code reviews, maintainability |

| Gemini | Structured explanations, great safety practices, deep refactoring | Sometimes verbose, less flexible | Production-level refactoring, safe code improvements |

| Deepseek | Strong at complex reasoning, extensive error spotting | Slower responses, less clean output | Handling tricky edge cases and hidden bugs |

| LLaMA | Detailed fixes, extra edge case handling, versatile | Occasional over-explanations, fewer direct examples | Deep debugging sessions, tackling obscure issues |

Explore More: Llama 4 vs ChatGPT-4 for Coding

Hands-On Debugging Tests: LLM Scorecard Behind the Rankings

1. Bug Detection Accuracy

What We’re Testing:

👉The model’s ability to identify logical flaws that don’t trigger syntax errors but cause incorrect outputs, especially around edge cases and comparisons.

Prompt used:

"This function should return the largest of three numbers. It gives the wrong output when two values are equal. Can you find and fix the bug?"

ChatGPT:

ChatGPT started by sharing a short example of the code error:

Then, provided an improved code snippet with results of the test cases below.

Claude:

Claude shared a detailed description of the current error, then provided the updated solution.

Gemini:

Gemini first shared the error in a simple statement and then provided the modified code.

Finally, shared the test cases, highlighting the output from the initial code versus the updated version, although the comparison is a bit difficult to scan quickly.

DeepSeek:

DeepSeek provided an updated code version along with test cases, but did not provide any example on what was wrong in the given code.

Llama:

Llama also provided a detailed analysis on what was wrong with the code, then shared a fixed solution, and explained it with test cases.

Additionally, Llama explained an extra test case scenario involving negative numbers.

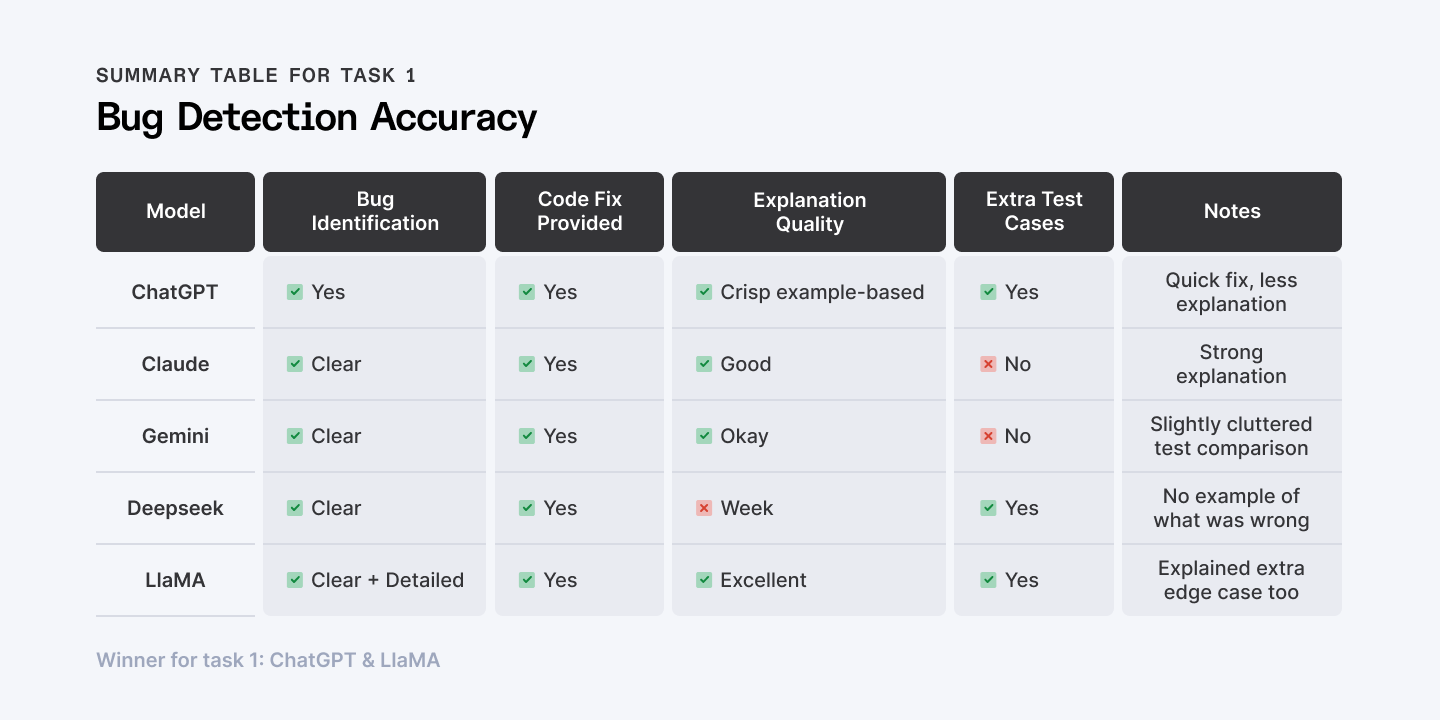

Summary Table for Task 1: Bug Detection Accuracy

Model | Bug Identification | Code Fix Provided | Explanation Quality | Extra Test Cases | Notes |

ChatGPT | ✅ Yes | ✅ Yes | ✅Crisp example-based | ✅ Yes | Quick fix, less explanation |

Claude | ✅ Clear | ✅ Yes | ✅ Good | ❌ No | Strong explanation |

Gemini | ✅ Clear | ✅ Yes | ✅ Okay | ❌ No | Slightly cluttered test comparison |

Deepseek | ✅ Clear | ✅ Yes | ❌ Weak | ✅ Yes | No example of what was wrong |

Llama | ✅ Clear + Detailed | ✅ Yes | ✅ Excellent | ✅ Yes | Explained extra edge case too |

Winner for Task 1: ChatGPT & Llama.

2. Reasoning & Explanation Quality

What We’re Testing:

👉The model’s ability to reason about unreachable code, and logically explain the flow of conditionals.

Prompt used:

"This function should assign letter grades. Explain what’s wrong and why the last return is never triggered."

ChatGPT:

ChatGPT provided an explanation of the code error with a set of examples.

Then, shared a solution for that update code version.

Claude:

Claude shared an updated version of the code after testing that it wouldn't hit the invalid state with any given numbers.

Gemini:

Gemini shared a detailed analysis on where the code is going wrong or why the invalid statement is unreachable.

Then shared an updated code version with the following explanation.

DeepSeek:

Deepseek did a remarkable job here. Though it takes a bit more time to process every action, it fixed the score range from 0 to 100 for grading. This made the code work perfectly, covering all possible cases.

Llama:

Llama shared similar insights to Deepseek, fixed the score range, managed to return an error in case of non-numeric inputs, and implemented a perfect grading system.

Summary Table for Task 2: Reasoning & Explanation Quality

Model | Flaw Detection | Flow Reasoning | Code Fix Provided | Edge Case Handling | Notes |

ChatGPT | ✅ Yes | ✅ Basic | ✅ Yes | ⚠️ Minimal | Straightforward but not deep |

Claude | ✅ Yes | ✅ Clear | ✅ Yes | ⚠️ Minimal | Clear logic, less coverage |

Gemini | ✅ Yes | ✅ Strong | ✅ Yes | ⚠️ Minimal | Great balance of clarity and fix |

Deepseek | ✅ Yes | ✅ Excellent | ✅ Yes | ✅ Extensive | Most robust and complete |

Llama | ✅ Yes | ✅ Excellent | ✅ Yes | ✅ Extensive | Comparable to Deepseek, slightly less concise |

Winner for Task 2: Llama & Deepseek

3. Fix Quality

What We’re Testing:

👉Can the model precisely repair the logic and avoid introducing new errors? We're testing its ability to implement a fix that works without disrupting valid parts of the code.

Prompt used:

"Fix the logical error in the palindrome check function in Java."

ChatGPT:

ChatGPT shared which line is creating an error, and explained what went wrong, along with what it should be, in a very quick manner.

Then, they provided a code with a set of test cases.

Claude:

Claude explained the error case and shared the corrected version.

Gemini:

Gemini explained the code error with an example, then shared the updated version.

DeepSeek:

Deepseek also explained what went wrong, shared the updated code snippet, and explained the changes made to keep it properly working.

Llama:

Llama also provided a similar solution with examples, along with an additional checking method that starts from the back. Although it is not required, it took extra effort.

Summary Table for Task 3: Fix Quality

Model | Bug Identification | Fix Accuracy | Code Stability | Test Cases | Notes |

ChatGPT | ✅ Yes | ✅ Yes | ✅ Stable | ✅ Yes | Fast and clear |

Claude | ✅ Yes | ✅ Yes | ✅ Stable | ❌ No | Minimalistic |

Gemini | ✅ Yes | ✅ Yes | ✅ Stable | ❌ No | Could add more variations |

Deepseek | ✅ Yes | ✅ Yes | ✅ Stable | ❌ No | Safe, slightly wordy |

Llama | ✅ Yes | ✅ Yes | ✅ Stable | ✅ Yes | Clear, but added a non-required code |

Winner for Task 3: ChatGPT

4. Test Case Handling

What We’re Testing:

👉The model’s understanding of test coverage, especially for edge inputs. We’re looking for completeness and correctness of the tests it proposes.

Prompt used:

"Write test cases for this function, including edge cases (e.g. negative numbers, 1, large primes)."

ChatGPT:

ChatGPT shared a quick note on test cases:

Along with a modified code version.

Claude:

Claude also included an improved implementation that checks divisibility only up to the square root of n and utilizes optimizations such as skipping even numbers and multiples of 3. This makes the function much more efficient for large numbers.

Gemini:

Gemini executed a comprehensive set of test cases, ensuring the code was thoroughly validated. However, it did not suggest any improvements or share a more optimized version of the implementation.

DeepSeek:

Explained all relevant test cases and also noted that the case for a very large prime, such as the Mersenne prime 2,147,483,647 (2³¹−1), was intentionally omitted due to the current algorithm's inefficiency in handling such large numbers.

Llama:

Shared fewer insights on test cases, but shared a more optimized code to test prime numbers.

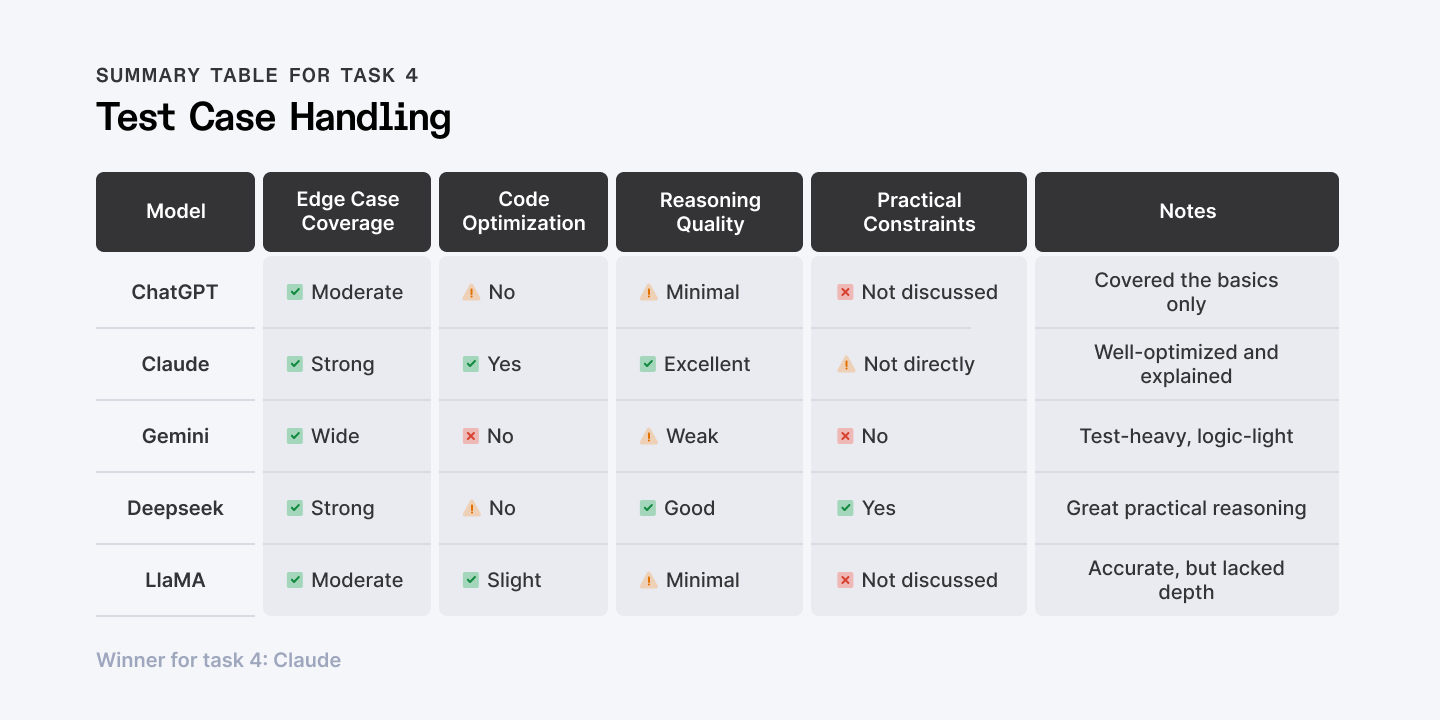

Summary Table for Task 4: Test Case Handling

Model | Edge Case Coverage | Code Optimization | Reasoning Quality | Practical Constraints | Notes |

ChatGPT | ✅ Moderate | ⚠️ No | ⚠️ Minimal | ❌ Not discussed | Covered the basics only |

Claude | ✅ Strong | ✅ Yes | ✅ Excellent | ⚠️ Not directly discussed | Well-optimized and explained |

Gemini | ✅ Wide | ❌ No | ⚠️ Weak | ❌ No | Test-heavy, logic-light |

Deepseek | ✅ Strong | ⚠️ No | ✅ Good | ✅ Yes | Great practical reasoning |

Llama | ✅ Moderate | ✅ Slight | ⚠️ Minimal | ❌ Not discussed | Accurate, but lacked depth |

Winner for Task 4: Claude

5. Multi-Turn Interaction

What We’re Testing:

👉 The model’s ability to handle multi-step corrections, incorporating feedback over turns without forgetting previous logic.

Prompt used 1:

"The loop crashes the program.”

Prompt used 2:

"Now modify the loop to also skip any negative numbers in the array."

ChatGPT:

ChatGPT explained the indexation of the C language and explained where it went wrong.

Then, shared a fix to this error with two possible ways and derived the sum as 65 from the given array.

Now, after the second prompt, ChatGPT updated the sum_array function and the main function.

And derived the answer perfectly, eliminating the negative numbers.

Claude:

Claude found the bug and suggested two improvised ways.

- One with the function i < n.

- Another version with the i <= n condition

With a note: In C, arrays are zero-indexed, so for an array of size 5, the valid indices are 0 through 4. Be careful with your loop boundary conditions to avoid accessing memory outside the array bounds.

After the second prompt, it provided.

Shared a new code with an example while keeping up with the previous changes perfectly.

Gemini:

Gemini shared an explanation before suggesting the improvised code, explaining where it was getting an error and how to change it.

Now, after the second prompt, it added a code statement to check if the numbers are positive or negative as asked.

DeepSeek:

Deepseek resolved the error, but comparatively took a longer time to fix it.

After the second prompt, Deepseek provided the required code along with some test cases to see if the improved code could handle edge cases.

Llama:

Llama clearly explained what was going wrong and shared an optimized solution to address the problem.

When passing the array size to sum_array, pass the actual size (size) and use i < n in the loop to process all elements correctly. Passing size - 1 would skip the last element.

And after that, it also shared another case of using a for loop where this error can be avoided.

After the second prompt, llama provided an extra statement to add on for both while and for loop cases.

This checks out the negative numbers from the addition, keeping the previous changes in mind, but does not provide any test cases.

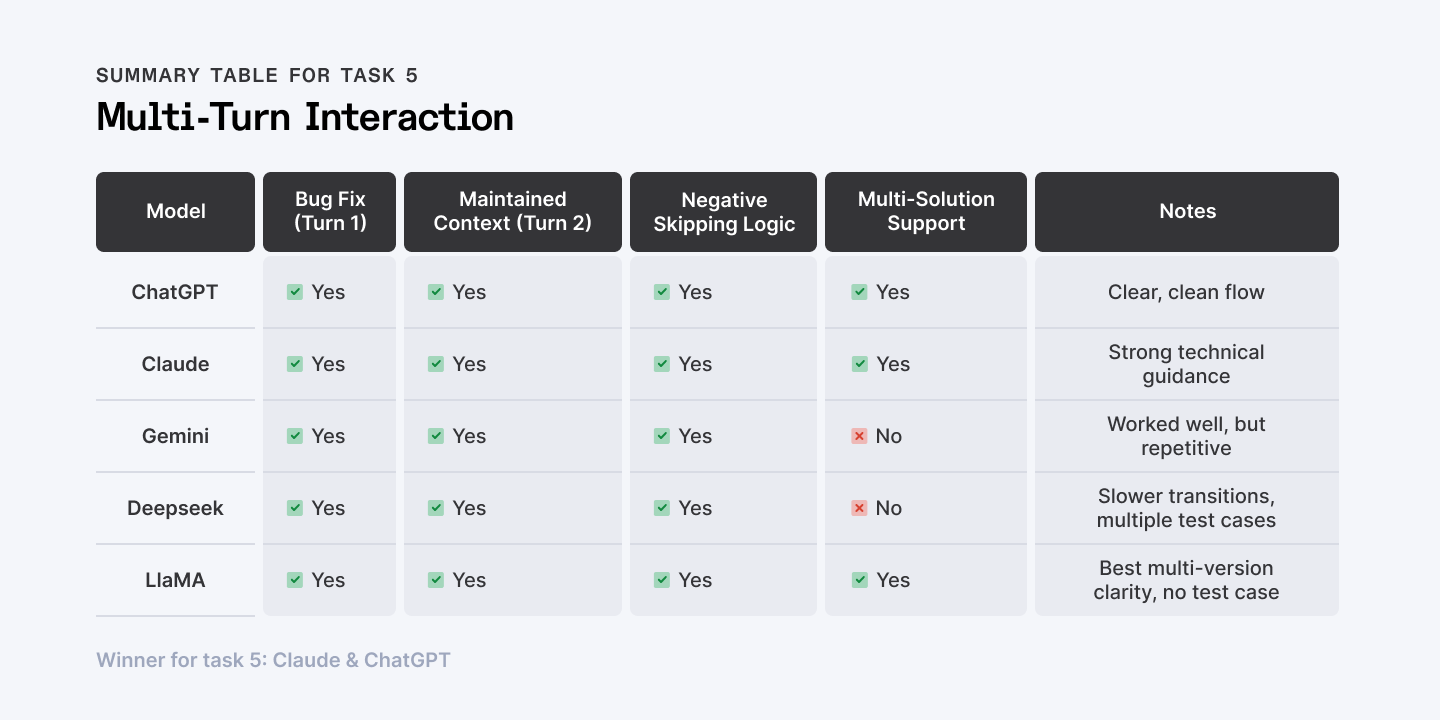

Summary Table for Task 5: Multi-Turn Interaction

Model | Bug Fix (Turn 1) | Maintained Context (Turn 2) | Negative Skipping Logic | Multi-Solution Support | Notes |

ChatGPT | ✅ Yes | ✅ Yes | ✅ Yes | ✅ Yes | Clear, clean flow |

Claude | ✅ Yes | ✅ Yes | ✅ Yes | ✅ Yes | Strong technical guidance |

Gemini | ✅ Yes | ✅ Yes | ✅ Yes | ❌ No | Worked well, but repetitive |

Deepseek | ✅ Yes | ✅ Yes | ✅ Yes | ❌ No | Slower transitions, multiple test cases |

Llama | ✅ Yes | ✅ Yes | ✅ Yes | ✅ Yes | Best multi-version clarity, no test case |

Winner for Task 5: Claude & ChatGPT

6. Confidence Estimation

What We’re Testing:

👉Can the model admit ambiguity, suggest improvements with rationale, and flag potential runtime errors due to missing structure or unchecked behaviour?

Prompt used:

"This function seems incomplete. What could go wrong?"

ChatGPT:

ChatGPT explained the test cases as below:

ChatGPT shared an improved code:

To mention, its functioning shared a test case also:

Claude:

Claude flagged some possible errors in the given code.

- No error handling for when get_data_from_api() fails or times out

- No validation of the user_id format before making the API call

- Missing an else clause to handle when user_id is empty or None

- No definition for get_data_from_api(), which is called by the function

- Input isn't sanitized or validated before being passed to the function

Gemini:

Gemini did not share any fully updated code version. But flagged five error cases and suggested some implementation for the code.

- Define get_data_from_api: Implement the logic to actually fetch data from an API.

- Handle Falsy user_id: Add an else block to specify what should happen if no user_id is provided.

- Implement Error Handling within get_data_from_api: Use try...except to catch potential network errors, API errors, and data parsing issues. Return appropriate error indicators or raise custom exceptions.

- Consider Type Conversion: Ensure the user_id is in the expected format for get_data_from_api.

- Add Error Handling in fetch_user_data (Optional but Recommended): You should add a try...except block around the call to get_data_from_api in fetch_user_data. to handle any exceptions raised by

DeepSeek:

Deepseek pointed out four error cases along with a sample code.

- No Input Validation: input() returns a string, but get_data_from_api expects an integer. Non-integer inputs (e.g., "abc") or whitespace ("", " ") can cause errors or unintended behaviour.

- Silent Failure: Empty or falsy user_id returns None without feedback, leading to unclear downstream issues.

- No Error Handling: API-related exceptions (e.g., network issues) are not caught, which may cause the program to crash.

- Edge Case Handling: Inputs like "0" may be treated as valid but could be semantically invalid depending on the context. The code doesn’t differentiate.

Llama:

Llama shared four potential issues and three update recommendations along with an updated code.

- Input Validation: The fetch_user_data function doesn't validate the type or format of the user_id. If the input is not a valid ID (e.g., a string, a float, or an empty string), it might cause issues with the get_data_from_api function or the API itself.

- Error Handling: The function doesn't handle potential errors that might occur when calling get_data_from_api. If the API call fails or returns an error, it will propagate to the caller without any meaningful error message.

- Empty Input: Although the function checks for if user_id, it doesn't account for cases where user_id might be an empty string or a string containing only whitespace characters.

- API Call: The get_data_from_api function is not defined in this snippet. If it's not properly implemented or if the API endpoint is incorrect, the function will fail.

Along with an updated code:

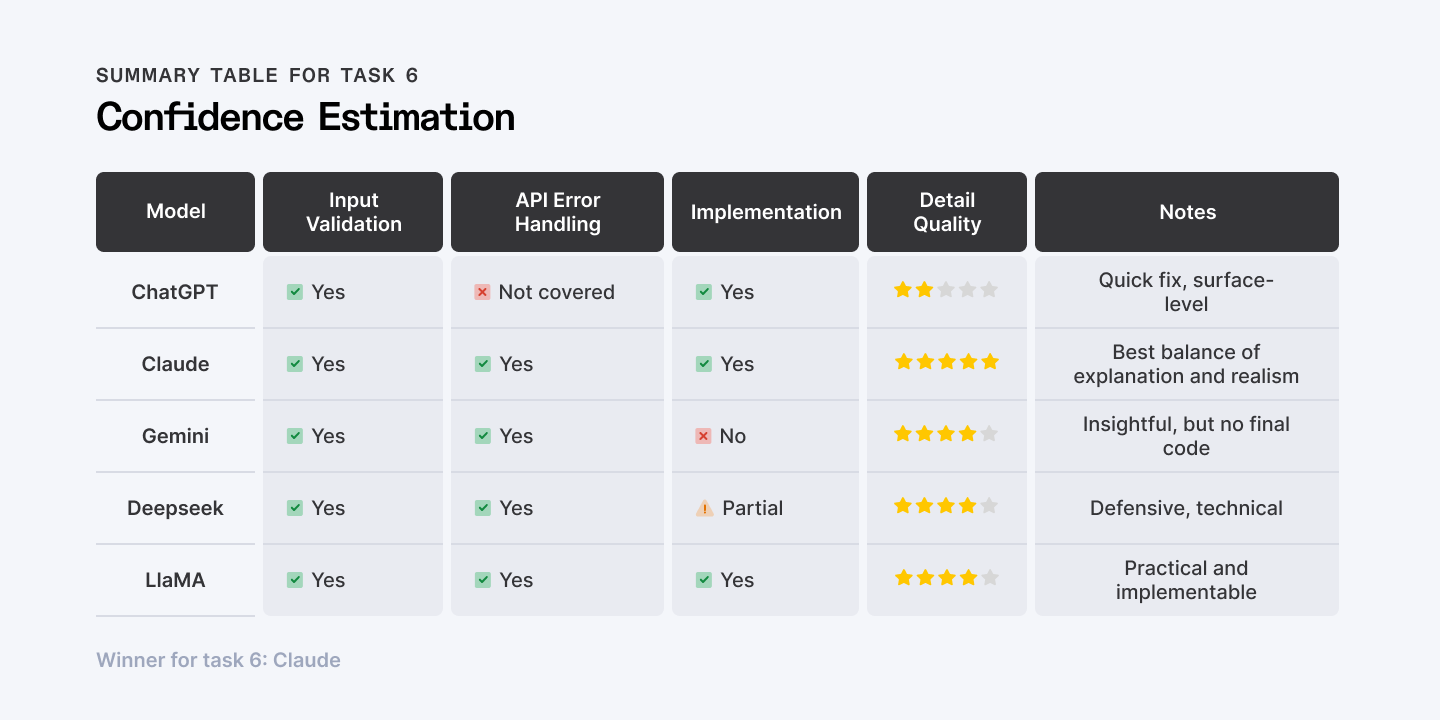

Summary Table for Task 6: Confidence Estimation

Model | Input Validation | API Error Handling | Implementation | Detail Quality | Notes |

| ChatGPT | ✅ Basic | ❌ Not covered | ✅ Yes | ⭐⭐☆☆☆ | Quick fix, surface-level |

| Claude | ✅ Strong | ✅ Yes | ✅ Yes | ⭐⭐⭐⭐⭐ | Best balance of explanation and realism |

| Gemini | ✅ Yes | ✅ Suggested | ❌ No | ⭐⭐⭐⭐☆ | Insightful, but no final code |

| Deepseek | ✅ Yes | ✅ Yes | 🔶 Partial | ⭐⭐⭐⭐☆ | Defensive, technical |

| Llama | ✅ Strong | ✅ Strong | ✅ Yes | ⭐⭐⭐⭐☆ | Practical and implementable |

Winner for Task 6: Claude

7. Obfuscated Code

What We’re Testing:

👉The model’s skill in reverse engineering code behaviour and refactoring for clarity, without changing the function’s logic.

Prompt used:

"What does this function do? Can you rewrite it with better naming and structure?"

ChatGPT:

GPT shared a short, crisp explanation as below:

- g(a) starts with r = 1.

- It loops from 1 to a, multiplying r by each i.

- The result is the product of all integers from 1 to a, which is the factorial of a (a!).

Then shared an updated code version with input validation and a docstring.

Claude:

First, it explained how the given code functions.

Then shared a set of code with better naming and structure.

Mentioning all the improvised areas.

- Using descriptive names (calculate_factorial, number, result) instead of single letters

- Adding docstring documentation explaining what the function does

- Adding proper spacing according to Python style guidelines (PEP 8)

- Keeping the same efficient implementation logic

Gemini:

In this improved version, Gemini mentioned:

- g is renamed to calculate_factorial, which clearly indicates the function's purpose.

- The parameter a is renamed to number, which is more descriptive of the input.

- A docstring is added to explain what the function does, its arguments, and what it returns. This makes the function's purpose and usage much clearer.

- It has included checks for non-integer and negative input, returning None for negative numbers and raising a TypeError for non-integer input, as the factorial is typically defined for non-negative integers.

- The variable r is renamed to result, which is more intuitive.

DeepSeek:

Mentioning key improvements like:

- Descriptive names: factorial instead of g, n instead of a, result instead of r

- Type hints: Added parameter and return type annotations

- Readable structure: Proper line breaks and indentation

- Docstring: Explains the function's purpose and behaviour

- Clear loop variable: current_number instead of i (though i is still acceptable)

Along with a note that it can handle factorial zero(0!) as well.

Llama:

In this test case, Llama shared three alternative improvements without explaining what the actual given code does with examples.

1. Version with better naming and structure:

2. Version for negative numbers or non-integers:

3. Version with math.factorial function:

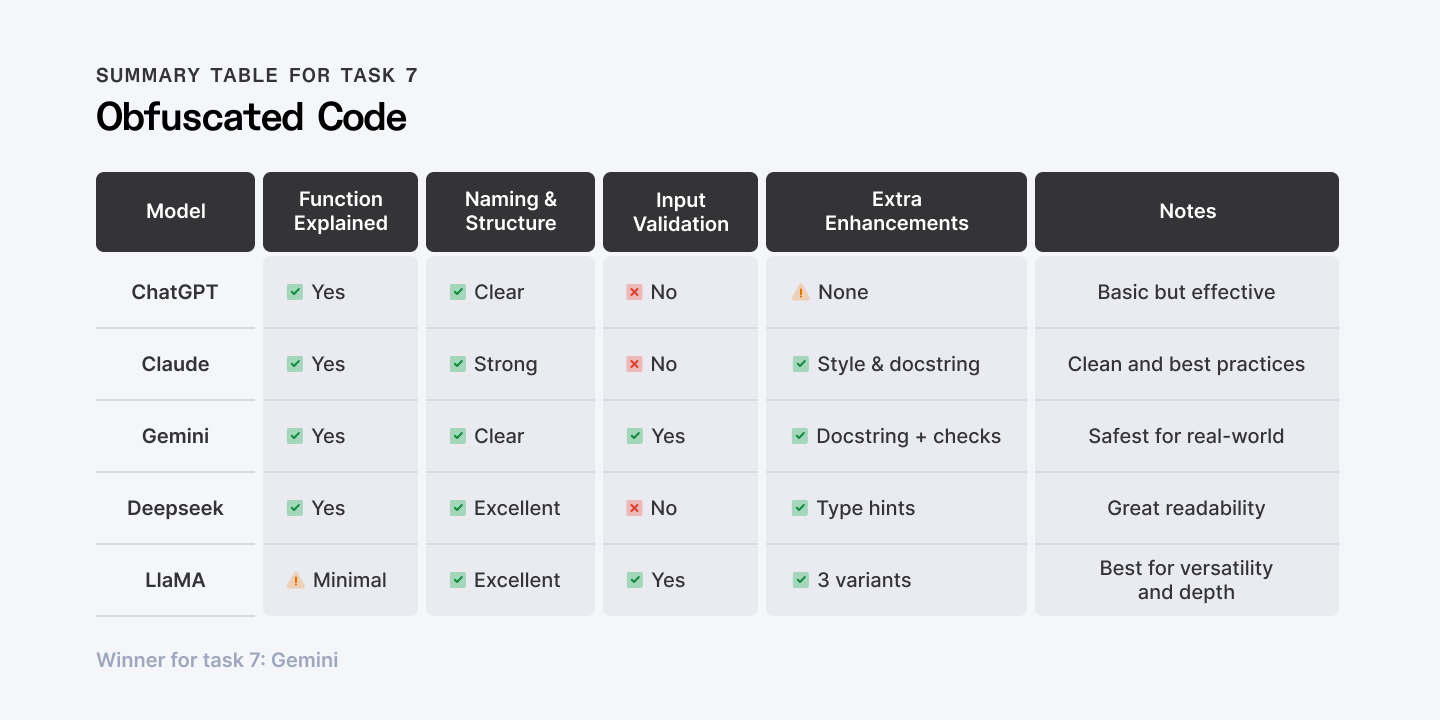

Summary Table for Task 7: Obfuscated Code

Model | Function Explained | Naming & Structure | Input Validation | Extra Enhancements | Notes |

ChatGPT | ✅ Yes | ✅ Clear | ❌ No | ⚠️ None | Basic but effective |

Claude | ✅ Yes | ✅ Strong | ❌ No | ✅ Style & docstring | Clean and best practices |

Gemini | ✅ Yes | ✅ Clear | ✅ Yes | ✅ Docstring + checks | Safest for real-world |

Deepseek | ✅ Yes | ✅ Excellent | ❌ No | ✅ Type hints | Great readability |

Llama | ⚠️ Minimal | ✅ Excellent | ✅ Yes | ✅ 3 Variants | Best for versatility and depth |

Winner for Task 7: Gemini

8. Developer Helpfulness

What We’re Testing:

👉 Can the model go beyond debugging and suggest refactoring for maintainability, encapsulation, or defensive programming practices?

Prompt used:

"Refactor this code to make it more maintainable and safe. Suggest improvements for field access and validation."

ChatGPT:

Explanation Provided:

- Encapsulation: Fields are made private, with public getters and setters.

- Validation: Ensure quantity and price are not negative.

- Naming: Improved method and variable naming for clarity.

- Immutability (optional): We can go further by using constructors for immutability, but here we keep it mutable with validation.

Also suggested some optional improvements.

- Make it immutable by removing setters and validating in the constructor only.

- Add BigDecimal instead of double for financial calculations.

- Override toString() to provide a summary of the order.

Claude:

Explanation Provided:

- Encapsulation: Changed public fields to private and added getters/setters to enforce controlled access.

- Validation: Added checks in setters and constructors to prevent invalid values (e.g., negative price, zero quantity).

- Constructor Initialization: Added a constructor to ensure objects are always initialized with valid data.

- toString() Override: Implemented toString() method for better readability, debugging, and logging.

Benefits:

- Improves data integrity and reduces bugs from invalid object states.

- Enables safe and controlled access to fields.

- Allows flexible internal changes without breaking external code.

- Prepares the class for scalability and future enhancements.

Gemini:

Explanation Provided:

1. Field Encapsulation (Using private)

- Why: Prevents direct external access to quantity and price.

- Benefits:

- Ensures data integrity.

- Allows internal logic changes without breaking external code.

- Guards against accidental/malicious changes.

2. Getter and Setter Methods

- Why: Controlled access via getQuantity(), getPrice(), setQuantity(), setPrice().

- Benefits:

- Adds validation.

- Enables logging or triggers on change.

- Hides internal representation details (abstraction).

3. Constructor for Initialization & Validation

- Why: Ensures the Order is created with valid initial values.

- Implementation: Order(int quantity, double price) calls validateOrder().

- Benefit: Prevents invalid objects from being created.

4. Input Validation

- Why: Ensures that data is valid during modifications.

- Checks:

- quantity > 0

- price >= 0

- Benefits:

- Safer state management.

- Centralized, maintainable validation logic.

- Uses IllegalArgumentException for clear error signaling.

5. Dedicated Validation Method (validateOrder)

- Why: Centralizes and simplifies validation.

- Benefits:

- Cleaner constructor.

- Easy to maintain and update rules.

- Uses IllegalStateException for internal consistency checks.

Further Potential Improvements

- Immutability:

- Remove setters.

- Use a constructor for full initialization.

- Create new objects to reflect changes.

- Use BigDecimal for price to avoid floating-point issues.

- Custom Exceptions:

- e.g., InvalidQuantityException, NegativePriceException for more clarity.

The explanation, along with the code Gemini provided, was better than any other llms response because it followed a logical approach..

DeepSeek:

Explanation Provided:

1. Encapsulation

- quantity and price are now private to restrict direct access.

- Promotes data hiding and protects internal state.

2. Validation

- Setters (setQuantity, setPrice) validate inputs to ensure they are non-negative.

- Throws IllegalArgumentException if invalid data is passed.

3. Constructors

- Parameterized Constructor: Initializes fields using setters to ensure validation is enforced.

- Default Constructor: Included for flexibility if needed.

4. Controlled Access

- Getters (getQuantity, getPrice) provide read-only access.

- Setters ensure only valid changes are allowed.

5. Maintainability

- Centralized validation logic in setters makes updates easier and consistent.

- Prevents invalid object creation via direct field access.

Provided additional Recommendations:

- Immutability (if applicable):

- If Order should be immutable, make fields final, remove setters, and validate in the constructor:

- Precision for Monetary Values:

- Consider using BigDecimal instead of double for price to avoid floating-point precision issues in financial calculations.

- Documentation:

- Add Javadoc comments to clarify validation rules and usage.

Llama:

Explanation Provided:

Encapsulation

- The quantity and price fields are now private.

- Access is controlled through getter and setter methods.

Benefit: Hides internal state and ensures modifications are made in a controlled way.

Validation

- The setter methods (setQuantity, setPrice) validate input values.

- Throws IllegalArgumentException for invalid values (e.g., negative numbers).

Benefit: Ensures the object remains in a valid state.

Constructor

- Initializes the object with valid values upon creation.

Benefit: Guarantees that each Order instance starts in a consistent state.

Immutability (Optional Enhancement)

- Remove setter methods and make fields final.

- To update values, create a new instance with the updated data.

Benefit: Prevents state changes after object creation.

Additional Suggestions

Use BigDecimal for Monetary Values

- Avoids floating-point precision issues inherent in double.

- Recommended for handling financial values accurately.

Consider Using a Builder Pattern

- Helpful for classes with many fields or complex initialization.

Benefit: Improves object creation readability and maintainability.

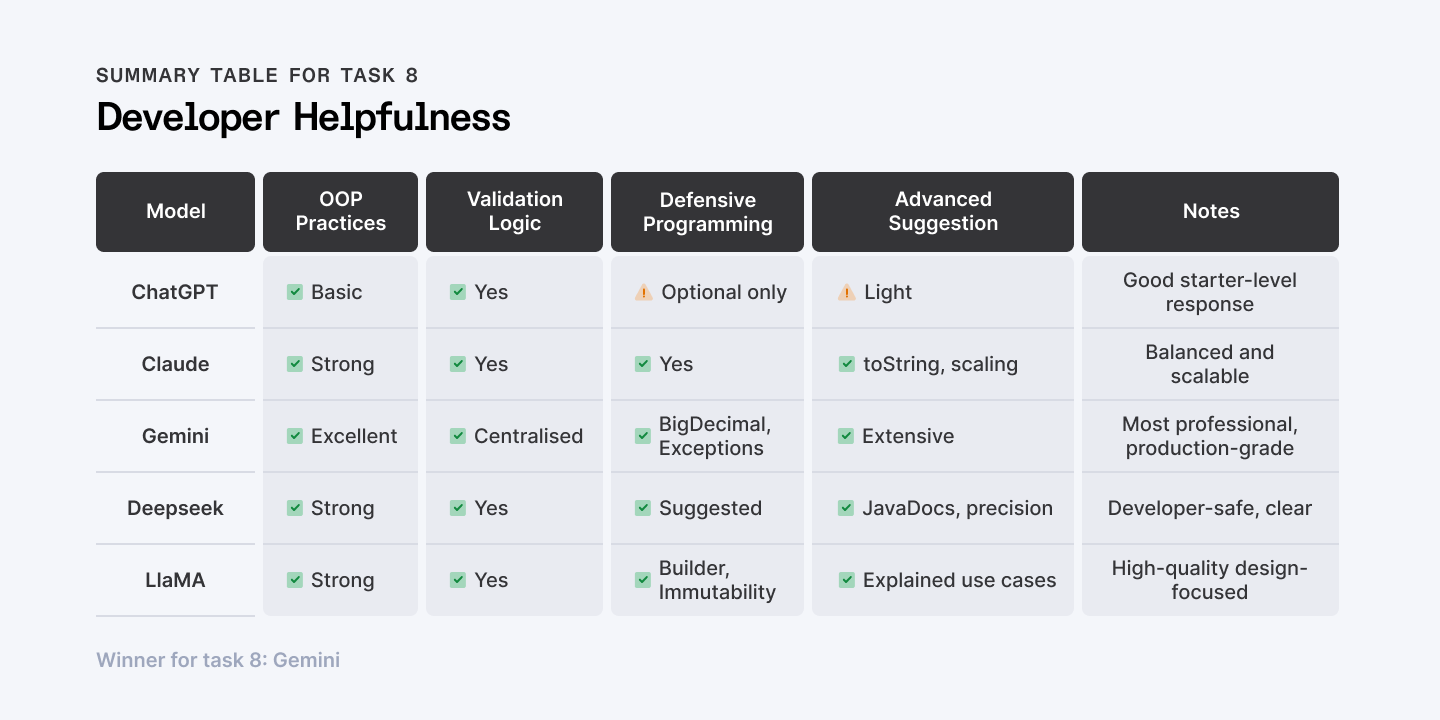

Summary Table for Task 8: Developer Helpfulness

Model | OOP Practices | Validation Logic | Defensive Programming | Advanced Suggestions | Notes |

ChatGPT | ✅ Basic | ✅ Yes | ⚠️ Optional only | ⚠️ Light | Good starter-level response |

Claude | ✅ Strong | ✅ Yes | ✅ Yes | ✅ toString, scaling | Balanced and scalable |

Gemini | ✅ Excellent | ✅ Centralized | ✅ BigDecimal, Exceptions | ✅ Extensive | Most professional, production-grade |

Deepseek | ✅ Strong | ✅ Yes | ✅ Suggested | ✅ JavaDocs, precision | Developer-safe, clear |

Llama | ✅ Strong | ✅ Yes | ✅ Builder, Immutability | ✅ Explained use cases | High-quality design-focused |

Winner for Task 8: Gemini

Final Takeaways

There’s no one-size-fits-all winner when it comes to using LLMs for debugging, but each model brings distinct strengths that align with different types of developers.

ChatGPT combines speed, clarity, and adaptability, offering precise bug detection, effective code fixes, and strong multi-turn performance. It’s well-suited for both quick debugging tasks and deeper logic evaluation, making it a dependable choice across a wide range of use cases.

Claude and Gemini stand out for their structured and software design sensibility. They consistently suggest improvements that align with best practices—like encapsulation, validation, and maintainability—making them ideal for developers who prioritise long-term code health and architectural soundness.

When it comes to complex logic, edge cases, and detailed error handling, Deepseek and LLaMA perform with impressive depth in reasoning. They not only solve problems but also uncover hidden pitfalls, making them valuable allies in high-stakes or performance-sensitive environments.

In collaborative and enterprise-grade settings, Claude and Gemini shine with their production-level recommendations and defensively written code. Their consistency and foresight make them excellent for large teams managing scalable applications and long-term codebases.

Also Check Out: Gemini vs ChatGPT for Coding

Ultimately, the best model for you depends on whether your focus is rapid iteration, strong architectural practices, or in-depth code analysis.

What’s clear, however, is that LLMs are now serious contenders as debugging partners in modern software development.