For years, whiteboard interviews were considered the gold standard in technical recruiting. However, engineering leaders and hiring managers are quickly realizing that this approach is flawed.

Whiteboard-style interviews frequently reduce complex, real-world technical skills to a series of artificial, time-limited challenges. They reward performance under duress rather than problem-solving in context.

The result?

High-potential candidates are filtered out for the wrong reasons, and businesses waste significant time on hiring tests that do not reflect on-the-job requirements.

This article provides a practical approach for creating modern, role-specific candidate assessments that prioritize real-world technical abilities over memory recall or performative coding. Whether you're recruiting a highly qualified front-end developer or a senior DevOps engineer, you'll learn how to tailor your technical skills assessment to the role's level and type.

It's time for a wiser, fairer, and more productive recruiting process.

The Problem with Whiteboard Interviews

They evaluate performance under pressure, not skill.

Whiteboard interviews create a high-stakes atmosphere in which candidates must write flawless code in front of interviewers, frequently without their usual tools, documentation, or collaborative context. This model disproportionately favors people who thrive under performance pressure rather than those who excel on the job.

According to a HackerRank poll, more than half of engineers said whiteboard-style interviews made them anxious and did not represent their true ability.

Lack of real-world context.

Engineers in modern software teams collaborate, iterate, and debug in settings that are very different from a whiteboard. Real-world engineering includes architecture trade-offs, version control, pair programming, and making decisions in the face of uncertainty. However, standard hiring tests frequently restrict evaluation to abstract algorithms.

This mismatch produces a faulty signal. For example, knowing how to invert a binary tree does not imply that a back-end developer can build a workable API under real-world constraints. It is time to emphasize candidate assessments that reflect the role's daily reality.

Inherent bias and gatekeeping.

Whiteboard interviews also create challenges for candidates from nontraditional backgrounds. Those without formal computer science degrees, those who are neurodivergent, and those who have not had interview preparation coaching are unfairly disadvantaged.

NCWIT found that women and people from underrepresented racial groups are disproportionately disadvantaged in traditional technical interviews due to biased assumptions and unfamiliar environments.

Companies that rely on performative rather than actual technical ability assessments risk losing out on a diverse, highly talented pool of candidates. Modern, structured, AI-powered assessments offer a more equitable path forward.

What Makes an Effective Candidate Assessment Strategy?

A successful candidate assessment strategy goes beyond examining isolated technical knowledge. It assesses a candidate's capacity to succeed in real-world circumstances, taking into account the specific role, the required level, and the actual work environment.

The most successful engineering teams recognize that what you assess and how you analyze it are both crucial.

1. Aligned with the role requirements

The starting point for every effective candidate assessment is role relevance. The tasks you assign should correspond to the everyday responsibilities of the role. A front-end engineer, for example, may be evaluated on building a UI component with React, while a DevOps candidate could be tested on implementing CI/CD pipelines.

By aligning hiring tests with actual work, you can verify that you're assessing the right skills, such as debugging, architecture comprehension, teamwork, or tool expertise. According to DevSkiller's IT Skills Report, tests targeted to real-world activities result in 92% increased trust in recruiting decisions.

2. Role and level-specific mapping

Most technical skill tests fall short because they use a one-size-fits-all approach. Here's how you can customize assessments based on experience level:

- Junior Engineers: Use simple activities such as debugging or algorithmic problems to measure logical thinking and coding skills. Tools like CoderPad and HackerRank provide suitable formats for this level.

- Mid-Level Engineers: Use more practical evaluations, such as building a feature in a simulated production environment or conducting code reviews. These tasks mirror the responsibilities they will carry in the role.

- Senior Engineers: Use complex assessments that cover architectural design, scalability considerations, and leadership judgment. Consider having them design a system in a live or asynchronous environment, using a defined rubric to assess trade-offs and communication clarity.

This customized method guarantees that your applicant assessments match true expectations, which improves both candidate experience and hiring accuracy.
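The level-specific mapping above can be written down as a small lookup table so that every hiring team applies the same plan. This is only a minimal sketch: the `AssessmentPlan` class, the `PLANS` table, and the `plan_for` helper are hypothetical names introduced for illustration, and the entries simply restate the three levels described above.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class AssessmentPlan:
    """Assessment formats and focus areas for one experience level."""
    formats: Tuple[str, ...]
    focus: Tuple[str, ...]


# Illustrative mapping (an assumption, not a standard): one plan per level.
PLANS: Dict[str, AssessmentPlan] = {
    "junior": AssessmentPlan(
        formats=("debugging exercise", "algorithmic problem"),
        focus=("logical thinking", "coding skills"),
    ),
    "mid": AssessmentPlan(
        formats=("feature build in a simulated production environment", "code review"),
        focus=("day-to-day responsibilities",),
    ),
    "senior": AssessmentPlan(
        formats=("system design exercise (live or asynchronous)",),
        focus=("architectural design", "scalability", "leadership judgment"),
    ),
}


def plan_for(level: str) -> AssessmentPlan:
    """Return the plan for a level name, ignoring case and surrounding spaces."""
    key = level.strip().lower()
    if key not in PLANS:
        raise ValueError(f"Unknown level: {level!r}")
    return PLANS[key]


if __name__ == "__main__":
    print(plan_for("Senior").formats)
```

Keeping the mapping in one place makes it easy to review and adjust as roles evolve, instead of leaving each interviewer to improvise.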

3. Low time to value for the candidate

Another important, but often overlooked, feature of a modern assessment process is reducing friction for the candidate.

Avoid multi-day take-home tasks unless candidates are compensated for their time. Such tasks may disproportionately disadvantage candidates with full-time jobs or caregiving commitments.

- Be transparent: Clarify time constraints, formats, tools, and assessment criteria.

- Provide quick feedback: Candidates value a prompt response, even if it is a courteous rejection. It reflects positively on your employer brand.

Modern hiring is a two-way street. The quality of your applicant assessments demonstrates how much you appreciate the candidate's time and experience.

Ultimately, a good assessment strategy is transparent, contextual, and calibrated, not only to confirm technical skills but also to promote a fair and engaging recruiting process. Organizations that use such frameworks regularly outperform their peers in terms of hiring quality and retention.

Modern Alternatives to Whiteboard Interviews

The shortcomings of whiteboard interviews have given rise to a new generation of technical skills assessments that match real-world software engineering requirements. These new formats provide more accurate assessments of a candidate's capacity to contribute meaningfully in a collaborative, production-oriented workplace.

Asynchronous coding challenges

Asynchronous assessments are timed coding exercises that candidates complete remotely. They are useful in the first screening stages because they are:

- Scalable: You can assess several candidates at once.

- Objective: Automated scoring reduces bias.

- Convenient: Candidates can finish them at any time that suits them.

Platforms such as Codility, HackerRank, and TestGorilla provide automated grading, plagiarism detection, and configurable test banks. According to SHRM, organizations that employ structured, automated evaluations have a 36% increase in interview-to-offer conversion rates.

Pair programming sessions

Unlike whiteboard interviews, live pair programming replicates actual developer teamwork. Candidates collaborate with an interviewer to solve a challenge in real time, using their preferred IDEs or cloud-based environments.

This technique assesses not just problem-solving abilities but also communication, flexibility, and teamwork, all of which are essential in any engineering role. It's especially useful for mid-level hiring, where cross-functional teamwork is more important.

For interactive web-based environments, use tools such as CodeInterview, CoderPad, or GitHub Codespaces.

System design review

Technical assessments for senior roles must go beyond writing code and include an evaluation of architectural thinking. A good system design interview tests:

- Understanding of scale and trade-offs

- Ability to manage uncertainty

- Communication clarity

Use structured rubrics to assess how well the candidate communicates decisions about caching, queuing, data storage, fault tolerance, and user load. Tools such as Miro and Excalidraw provide interactive whiteboarding for remote interviews, although these sessions must be more structured than traditional whiteboard interviews.
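A structured rubric can be as simple as a weighted score over the three criteria listed above. The following is a rough sketch under stated assumptions: the criterion keys, the weights, and the 1-to-4 rating scale are illustrative choices rather than an industry standard, and `rubric_score` is a hypothetical helper.

```python
from typing import Dict

# Assumed weights for the three criteria above; tune them to your own role.
RUBRIC_WEIGHTS: Dict[str, float] = {
    "scale_and_tradeoffs": 0.4,
    "handling_uncertainty": 0.3,
    "communication_clarity": 0.3,
}


def rubric_score(
    ratings: Dict[str, int],
    weights: Dict[str, float] = RUBRIC_WEIGHTS,
    lowest: int = 1,
    highest: int = 4,
) -> float:
    """Return the weighted average of interviewer ratings.

    Every criterion in `weights` must be rated, and each rating must fall
    within the assumed scale [lowest, highest].
    """
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    for name in weights:
        if not lowest <= ratings[name] <= highest:
            raise ValueError(f"Rating for {name!r} is outside {lowest}-{highest}")
    total_weight = sum(weights.values())
    return sum(weights[name] * ratings[name] for name in weights) / total_weight


if __name__ == "__main__":
    example = {
        "scale_and_tradeoffs": 4,
        "handling_uncertainty": 2,
        "communication_clarity": 3,
    }
    print(round(rubric_score(example), 2))
```

Asking every interviewer to fill in the same criteria before discussing the candidate keeps the comparison consistent across sessions.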

Technical portfolios & open source contributions

Experienced applicants frequently have real-world examples to offer, such as GitHub repositories, Stack Overflow profiles, and open-source contributions. These can give detailed insights into:

- Code quality and structure

- Project breadth and complexity

- Community engagement and documentation

While optional, studying a candidate's portfolio provides valuable contextual cues that no manufactured task can provide. It is particularly useful when hiring for highly specialized or senior positions.

Companies can use these modern alternatives to establish a technical skills assessment process that is more inclusive, predictive, and reflective of real-world engineering work. It's more than just evaluating code; it's about hiring with clarity, fairness, and confidence.

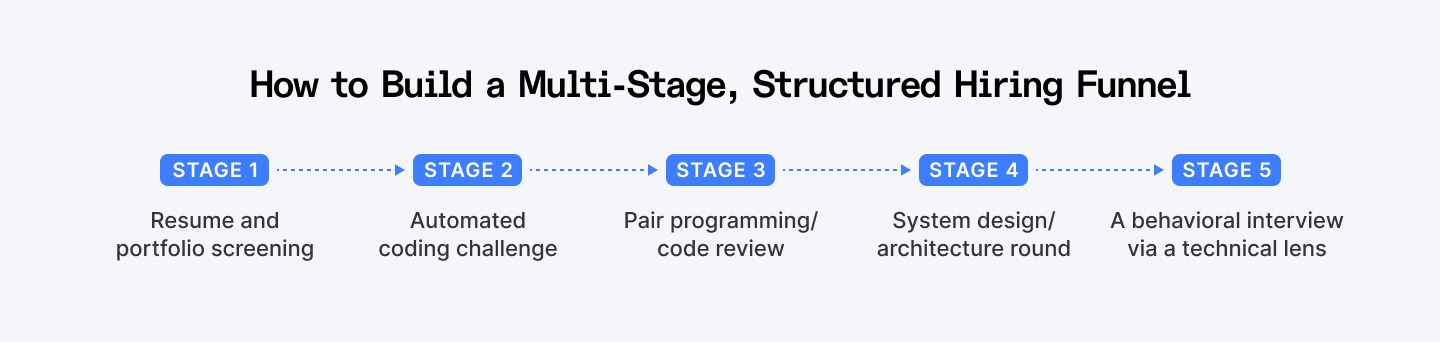

Building a Multi-Stage, Structured Hiring Funnel

A well-designed hiring funnel guarantees that candidates are evaluated fairly, consistently, and according to the role's requirements. Rather than depending on a single whiteboard interview or coding task, divide the process into specialized applicant tests, each designed to reveal distinct abilities.

Stage 1: Resume and portfolio screening

Go beyond matching keywords to job descriptions. Consider project ownership, open-source contributions, measurable outcomes (such as performance improvements or delivery cycles), and context-specific experience. Tools such as Recruitee and Greenhouse enable organized review procedures and annotations.

Stage 2: Automated coding challenge

Use AI-powered assessments to evaluate candidates objectively and efficiently. Platforms such as HackerRank, Codility, and CodeSignal provide automated assessments based on real-world activities that are aligned with your technology stack. Make sure the challenges mirror day-to-day tasks, such as diagnosing a failed endpoint or optimizing a database query.

This stage should be timed (60-90 minutes) and clearly explained to the candidate in order to ensure transparency and minimize friction.

Stage 3: Pair programming / code review

At this stage, evaluate how candidates interact, communicate, and solve problems in real time. You may run a live coding session or review a pull request together. Code walkthroughs can be run using tools such as CoderPad, Tuple, or GitHub. These sessions give a clear signal about how the candidate will fit into your team dynamics.

Stage 4: System design/architecture round

This stage is critical for senior engineering roles since it assesses strategic thinking, abstraction abilities, and an understanding of trade-offs. Provide a prompt that is relevant to your product (for example, "Design a notification system at scale") and evaluate the response based on architectural considerations, scalability, fault tolerance, and communication clarity.

Stage 5: Behavioral interview through a technical lens

Ask candidates to walk you through previous decisions on deployed features, team problems, or technical trade-offs. Behavioral questions such as "Tell me about a time you had to refactor legacy code under tight deadlines" reveal details about their values, flexibility, and sense of ownership.

By organizing your hiring assessments into these five stages, you can create a process that is both thorough and candidate-friendly. It reduces recruiting bias, enhances signal quality, and boosts retention by selecting engineers who are well matched to the position and company culture.
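The five stages can be tracked as an ordered list with a simple rule for moving a candidate forward. This is a minimal sketch, assuming a strictly linear funnel in which a candidate who does not pass a stage leaves the process; `FUNNEL`, `next_stage`, and the `"offer decision"` label are hypothetical names used only for illustration.

```python
from typing import List, Optional

# Stage names follow the five stages described above.
FUNNEL: List[str] = [
    "resume and portfolio screening",
    "automated coding challenge",
    "pair programming / code review",
    "system design / architecture round",
    "behavioral interview",
]


def next_stage(current: str, passed: bool) -> Optional[str]:
    """Return the stage that follows `current`.

    Returns None when the candidate did not pass (assumed to exit the funnel)
    and "offer decision" once the final stage has been passed.
    """
    if current not in FUNNEL:
        raise ValueError(f"Unknown stage: {current!r}")
    if not passed:
        return None
    index = FUNNEL.index(current)
    if index + 1 < len(FUNNEL):
        return FUNNEL[index + 1]
    return "offer decision"


if __name__ == "__main__":
    print(next_stage("automated coding challenge", passed=True))
```

Real funnels often skip or reorder stages by role and seniority, so treat the linear order here as a starting point rather than a rule.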

AI-powered Assessments: A Better Way to Scale

The demand for quick, fair, and effective technical assessments has made AI-powered assessments an essential component of modern engineering recruiting.

These technologies add scalability, objectivity, and deeper candidate insights at the top of the funnel, all without sacrificing quality.

Unbiased scoring and plagiarism detection

AI-enabled systems use standardized grading rubrics and code analysis models to reduce unconscious bias in the evaluation process. Unlike subjective whiteboard feedback, AI-powered systems systematically evaluate code efficiency, correctness, and style. Tools like CodeSignal and DevSkiller also include built-in plagiarism detection, which helps protect the integrity of the results.

According to a recent LinkedIn Talent Solutions report, 76% of talent professionals believe AI has "a significant positive impact" on recruiting quality and decision-making.

Effective initial screening

Using AI tools to assess fundamental programming ability or domain-specific expertise enables teams to quickly narrow down a large candidate pool. This is especially critical in high-volume recruiting, where manual screening is not feasible. With AI, recruiters can focus more on high-signal candidates and less on resume triage.

Skill diagnostics and analytics

Beyond scoring, current AI-powered assessments include detailed diagnostics covering a candidate's problem-solving approach, code cleanliness, and time-to-solution. Hiring teams can use benchmark comparisons, skill heatmaps, and progress trends to make data-driven decisions.

Balanced automation and human review

While automation helps with early filtering, the most effective hiring funnels strike a balance. Use AI tools to shortlist and evaluate candidates, but keep human-led interviews for later stages such as system design and behavioral evaluation, where soft skills and strategic thinking are essential.

AI does not replace human judgment. It improves it by decreasing inefficiencies and lowering errors at the top of the funnel.

As AI continues to impact the future of recruiting, firms that use AI-powered evaluations will be able to construct faster, more equitable, and predictive hiring systems, at scale.

How Index.dev Facilitates Modern Candidate Assessments

Traditional interviews are flawed, but so is the method most firms use to replace them. Index.dev does more than simply help you uncover exceptional talent; we also teach you how to assess it successfully, employing a contemporary, scalable approach to candidate assessments.

Our platform automates your whole hiring assessment funnel using tools designed to mirror how real engineering teams work. Whether you're looking for a freelance React developer or establishing a fully managed AI engineering pod, we'll help you make smarter, quicker, and more equitable decisions.

Built-in assessment tools

- Automated coding tasks for screening technical skills rapidly and at scale.

- Virtual whiteboards for architectural and system design reviews.

- Dashboards for candidates that indicate their progress, expectations, and feedback.

AI and human insight

Index.dev conducts approximately 12,000 technical and cognitive assessments each month, using AI to identify top performers in key domains:

- Front-end, back-end, full stack, DevOps, AI/ML, quality assurance

- Web and UX/UI designers

- Data engineers, product managers, and others

Our AI assesses coding aptitude, cognitive strength, and collaborative potential. Then, our talent experts hand-match candidates based on team fit, project objectives, and company culture. The result? A 97% first-hire rate and teams that ship quicker.

Globally compliant. Instantly scalable.

Index.dev enables you to select, employ, and manage remote developers and entire pods in 160+ countries. Whether you're hiring a single freelancer or growing into a dedicated product team, you'll have compliant contracts, payroll, and performance tracking all in one integrated platform.

Are you ready to scale smarter with structured AI-powered applicant assessments? Try Index.dev and a demo of MIND.dev now.

Conclusion: Skill-Based Hiring Is the Future

Whiteboard interviews are relics of the past. Today's tech teams require a hiring process that prioritizes real-world skills over on-the-spot performance. Companies that use multi-format, contextual candidate assessments can find engineers who solve problems rather than just perform under duress.

"What you test is what you get."

Skill-based recruiting is no longer a pipe dream thanks to systems like Index.dev, which use AI to make it a scalable reality.

Hire quickly. Assess smarter. Build better.