AI-powered hiring solutions promise fairness, but hidden bias in AI hiring tools can quietly filter out top talent by race, gender, age, disability, and more. In today’s tight talent market, missing top talent isn’t just unfair, it’s bad business.

Here’s what you need to know right now:

- 85% vs. 9%: Resumes with white-sounding names advanced 85% of the time vs. just 9% for Black-sounding names in a study by the University of Washington.

- 100% exclusion: In controlled tests, Black men’s resumes were passed over entirely when only the names were changed.

- Lawsuits & complaints: HireVue and Workday face legal challenges for disadvantaging deaf, non-white, older, and disabled applicants.

- AI “black box” risk: Proprietary algorithms often hide these patterns until a complaint or audit forces them into daylight.

- Business cost: Employers are now legally accountable for discriminatory AI outcomes.

AI recruitment technology promises to be effective and unbiased, but in practice it often reproduces ingrained biases. High-profile cases have brought these biases to light: a federal complaint alleged that HireVue's video interview software unfairly rated deaf and non‑white candidates, and a complaint filed against Workday claims its hiring system systematically excludes older, Black, and disabled candidates.

Avoid biased hiring tech. Index.dev helps you access top 5% vetted developers with ethical, AI-powered matching, in just 48 hours, with zero hiring risk.

What Is Hidden Bias in AI Hiring Tools?

Hidden bias means an AI system makes unfair decisions by replicating past prejudices, without this being obvious in the software’s code or marketing. These tools learn from historical data, so if last year’s hires skewed toward one group, the AI “learns” to prefer that group automatically. In practice, “hidden” bias arises in several subtle ways: legacy data imbalances, proxy variables, or flawed assumptions embedded in the model. Common sources include:

- Skewed training data (e.g., a training set of 90% white engineers teaches the AI to favor white candidates)

- Proxy variables (college name, hobbies, work-gap patterns)

- Opaque algorithms (proprietary “black boxes” with no explainability)

Who Is Affected by Hidden Bias?

AI bias overwhelmingly hurts historically marginalized and under‑represented groups. Any candidate whose profile diverges from the “default” (usually a young, white, male profile) may be subtly penalized.

The most commonly affected include:

- Racial and ethnic minorities (candidates with non‑Anglo names or accents often get downgraded)

- Women, especially in male‑dominated fields

- Older professionals (tools skew toward “young workforce” traits)

- Candidates with disabilities (e.g., speech‑recognition fails deaf applicants)

- Intersectional profiles (for instance, older women of color face amplified filtering)

Name‑based bias:

In one test of three popular AI resume scanners, white‑associated first names advanced 85% of the time versus only 9% for Black‑associated names, while male names scored 52% higher than female names, even in roles typically held by women.

Studies also highlight biases against immigrants and people with non‑English names, with AI parsers often flagging non‑standard language or gaps as negative.

This is why HR managers should recognize that bias affects all protected classes; anyone outside the majority demographic is a potential casualty of hidden AI prejudice.

When and Where Does Bias Emerge?

Hidden bias lurks across every hiring stage. More often than not, it first shows up in resume screening and applicant ranking, where AI’s learned patterns most directly influence who makes the cut.

Job descriptions

- Gender-biased language such as "rockstar" or "ninja" and excessive technical jargon can discourage women, non-technical, and diverse candidates from applying at all.

Resume screening

- Automated parsers pick up on proxies for protected traits.

- Example: If a model has learned to reward “repaired,” “competed,” or “led,” it may downgrade resumes using “cared,” “coordinated,” or “supported,” unintentionally filtering out candidates who favor more collaborative language.

Assessments & interviews

- Video‑analysis and voice‑pattern tools often struggle with non‑native accents or speech differences, penalizing disabled or international candidates.

Quick fact: An estimated 99% of Fortune 500 companies now use some form of automated hiring tool, so these biases can scale very quickly.

Because many AI hiring solutions are proprietary “black boxes,” these problems often remain invisible until after deployment. You might only notice when your diversity metrics stall, or worse, when a legal complaint forces an audit.

Key takeaway:

Bias doesn’t just “happen” at screening; it hides in how we use AI (which stages we automate) and in when we look for it (often only when analyzing outcomes). It can also lurk in your job ads and assessments.

The antidote? Systematic vigilance at every stage: monitor language in your postings, audit screening results, and review AI‑driven assessments for demographic anomalies.

Why Does Hidden Bias Persist?

AI hiring tools don’t invent bias; they inherit and amplify it from the data they’re trained on. In the words of one expert, “garbage in, garbage out” - flawed inputs yield flawed outputs. Here’s where the bias roots itself:

- Skewed training data

- If 90% of past hires were white, the AI will lean that way, even if equally qualified diverse candidates apply.

- Example: A 2025 Pymetrics review revealed neurodivergent candidates scored 30% lower on gamified tests simply due to underrepresentation in the training set.

- Proxy variables

- Even when “race” or “gender” aren’t fed into the model, AI can learn to associate indirect signals (like college prestige, extracurriculars, or writing style) with protected traits.

- Example: Analysis of a major tech firm’s resume parser revealed candidates from mid-tier universities were 40% less likely to progress than those from Ivy League schools, even when GPAs and experience were identical, because the AI equated “prestige” with “fit.”

- Algorithmic opacity

- Many hiring platforms are “black boxes.” HR teams can’t inspect model logic or weightings.

- Without explainability, biases stay hidden until flagged by an audit or complaint. Regulators note there’s still “no independent audit” requirement for most systems.

- Lack of oversight & standards

- Only recently did the U.S. Department of Labor publish its Inclusive Hiring Framework (NIST‑based) to guide fair AI use.

- Without proactive audits, AI won’t self-correct.

By recognizing these underlying causes (skewed data, proxy variables, black box models, etc.), you can concentrate your efforts on cleaning training sets, mandating model transparency, and using industry-standard frameworks to break the cycle of bias.
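
One rough way to check for proxy variables is to measure how much a supposedly neutral field reveals about a protected trait. The Python sketch below is only an illustration, not any vendor's method: the field names (`college_tier`, `group`), the sample rows, and the simple "gap" metric are all assumptions made for readability.

```python
from collections import Counter, defaultdict

def group_share_by_value(rows, feature, protected):
    """For each value of `feature`, return the share of each protected group."""
    counts = defaultdict(Counter)
    for row in rows:
        counts[row[feature]][row[protected]] += 1
    return {
        value: {g: n / sum(c.values()) for g, n in c.items()}
        for value, c in counts.items()
    }

def proxy_gap(rows, feature, protected, group):
    """Largest difference in one group's share across feature values.

    0 means the feature says nothing about membership in `group`;
    values near 1 mean the feature almost reveals it.
    """
    shares = group_share_by_value(rows, feature, protected)
    values = [s.get(group, 0.0) for s in shares.values()]
    return max(values) - min(values)

# Hypothetical applicant rows: 10 "elite" and 10 "other" college profiles.
rows = (
    [{"college_tier": "elite", "group": "a"}] * 9
    + [{"college_tier": "elite", "group": "b"}] * 1
    + [{"college_tier": "other", "group": "a"}] * 3
    + [{"college_tier": "other", "group": "b"}] * 7
)

print(round(proxy_gap(rows, "college_tier", "group", "b"), 2))  # 0.6
```

A large gap does not prove the model uses the field unfairly, but it marks the field as one to test before it is allowed to influence rankings.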

How Can You Spot Hidden Bias in AI Hiring Tools?

The good news is that hidden biases can be identified, but it takes proactive effort. Here are detailed, practical steps HR teams should take:

1. Audit Outcomes by Demographic

- What to do: Export pass‑rate and score reports by gender, race, age, and disability.

- Why it matters: Systematic under‑representation signals bias.

- Quick tip: Compare identical resumes across groups so that you can spot gaps and document them.
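
As a minimal sketch of such an audit, the Python below computes pass rates per group and compares each one with the best-performing group. The 0.8 threshold follows the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures; it is a screening heuristic, not a legal determination. The group labels and sample export are hypothetical.

```python
from collections import Counter

def selection_rates(records):
    """Return {group: pass_rate} from (group, passed) pairs."""
    totals, passes = Counter(), Counter()
    for group, passed in records:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {g: passes[g] / totals[g] for g in totals}

def impact_ratios(rates):
    """Divide each group's pass rate by the highest group's pass rate."""
    best = max(rates.values())
    if best == 0:
        return {g: 0.0 for g in rates}
    return {g: r / best for g, r in rates.items()}

def flag_groups(records, threshold=0.8):
    """Groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(selection_rates(records))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)

# Hypothetical export: 100 applicants per group.
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

print(flag_groups(sample))  # ['group_b']
```

Here group_b passes at 20% against 40% for group_a, a ratio of 0.5, so it is flagged for closer review.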

2. Run “What‑If” Tests

- What to do: Swap only names or demographic details (e.g., “Emily” → “Ahmed”) on strong resumes.

- Why it matters: Score swings reveal proxy bias in action.

- Example: The UW study saw white‑associated names advance 85% vs. 9% for Black‑associated names.
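
A name-swap test can be sketched in a few lines of Python. Everything here is an assumption for illustration: `toy_biased_scorer` is a stand-in that is deliberately biased so the test has something to find, and in practice you would pass in whatever scoring call your own tool exposes.

```python
def name_swap_scores(template, names, score_fn):
    """Score the same resume once per name; only the name varies.

    `template` must contain a single {name} placeholder and no other braces.
    """
    return {name: score_fn(template.format(name=name)) for name in names}

def max_gap(scores):
    """Largest score difference caused purely by the name swap."""
    return max(scores.values()) - min(scores.values())

def toy_biased_scorer(text):
    """Hypothetical scorer standing in for a real screening tool."""
    score = 50.0
    if "Python" in text:
        score += 30.0
    if text.startswith("Emily"):
        score += 10.0  # the kind of name effect the test should expose
    return score

TEMPLATE = "{name}\nSenior engineer, 8 years of Python, led a team of 5."

scores = name_swap_scores(TEMPLATE, ["Emily", "Ahmed"], toy_biased_scorer)
print(scores)           # {'Emily': 90.0, 'Ahmed': 80.0}
print(max_gap(scores))  # 10.0
```

Because the resume text is identical apart from the name, any non-zero gap is attributable to the name alone and is worth documenting.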

3. Demand Vendor Transparency

- What to do: Insist on third‑party bias‑audit reports and model datasheets.

- Why it matters: You’re legally accountable for AI‑driven decisions.

- Checklist: Verify that training sets include non‑native speakers and disabled profiles.

4. Integrate Human Oversight

- What to do: Require recruiters to manually review all AI‑rejected and AI‑shortlisted candidates.

- Why it matters: Human judgment catches errors and counters “automation bias.”

- Pro tip: Assemble diverse panels to double‑check AI recommendations.

5. Clean Up Job Descriptions

- What to do: Run postings through inclusive‑language tools (e.g., Textio).

- Why it matters: Neutral wording widens your candidate pool.

- Action: Remove unnecessary degree or skill requirements that historically favored one group.
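
For a quick first pass before using a dedicated tool, a simple word scan can catch obvious problem terms. The Python sketch below is illustrative only: "rockstar" and "ninja" come from the examples in this article, while the rest of `FLAGGED_TERMS` is an assumed starter list you would replace with your own.

```python
import re

# Illustrative list: the first two terms are from this article, the rest are assumptions.
FLAGGED_TERMS = ("rockstar", "ninja", "guru", "dominant", "aggressive")

def flag_terms(posting, terms=FLAGGED_TERMS):
    """Return {term: count} for flagged terms found as whole words, ignoring case."""
    found = {}
    for term in terms:
        pattern = rf"\b{re.escape(term)}\b"
        hits = re.findall(pattern, posting, flags=re.IGNORECASE)
        if hits:
            found[term] = len(hits)
    return found

posting = "We need a Python ninja and a sales rockstar. Ninja-level SQL is a plus."
print(flag_terms(posting))  # {'rockstar': 1, 'ninja': 2}
```

A keyword scan only catches exact terms, so treat it as a pre-check rather than a replacement for a full inclusive-language review.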

6. Adopt Industry Frameworks

- What to do: Follow the Department of Labor’s (DOL) Inclusive Hiring Framework, EEOC AI guidelines, and the NIST AI Risk Management Framework.

- Why it matters: These guidelines are your legal and ethical roadmap.

- Next step: Train HR on civil‑rights obligations for AI tools.

7. Monitor & Iterate Continuously

- What to do: Set quarterly bias-audit KPIs and track diversity metrics over time.

- Why it matters: Models drift; regular checks prevent regression.

- Reminder: Maintain logs of AI decisions to diagnose emerging issues.
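
If decisions are logged with a date and a group label, quarterly tracking can be sketched as below. This is a minimal Python illustration under assumed names: the log fields (`date`, `group`, `advanced`), the sample entries, and the 5-point `max_drop` alert level are all hypothetical choices, not a standard.

```python
from collections import defaultdict
from datetime import date

def quarter_of(d):
    """Label a date with its calendar quarter, e.g. 2025-Q2."""
    return f"{d.year}-Q{(d.month - 1) // 3 + 1}"

def pass_rates_by_quarter(log):
    """Return {(quarter, group): pass_rate} from logged AI decisions."""
    counts = defaultdict(lambda: [0, 0])  # (quarter, group) -> [advanced, total]
    for entry in log:
        key = (quarter_of(entry["date"]), entry["group"])
        counts[key][0] += int(entry["advanced"])
        counts[key][1] += 1
    return {key: adv / total for key, (adv, total) in counts.items()}

def regressions(rates, max_drop=0.05):
    """Flag groups whose pass rate fell by more than max_drop between consecutive logged quarters."""
    by_group = defaultdict(list)
    for (quarter, group), rate in sorted(rates.items()):
        by_group[group].append((quarter, rate))
    flagged = []
    for group, series in by_group.items():
        for (q_prev, r_prev), (q_next, r_next) in zip(series, series[1:]):
            if r_prev - r_next > max_drop:
                flagged.append((group, q_prev, q_next))
    return flagged

def entries(d, group, advanced, n):
    """Build n identical hypothetical log entries."""
    return [{"date": d, "group": group, "advanced": advanced}] * n

q1, q2 = date(2025, 2, 1), date(2025, 5, 1)
log = (
    entries(q1, "group_a", True, 5) + entries(q1, "group_a", False, 5)
    + entries(q2, "group_a", True, 5) + entries(q2, "group_a", False, 5)
    + entries(q1, "group_b", True, 5) + entries(q1, "group_b", False, 5)
    + entries(q2, "group_b", True, 3) + entries(q2, "group_b", False, 7)
)

print(regressions(pass_rates_by_quarter(log)))  # [('group_b', '2025-Q1', '2025-Q2')]
```

In this sample, group_b drops from a 50% to a 30% pass rate between quarters, which is the kind of regression a quarterly KPI should surface.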

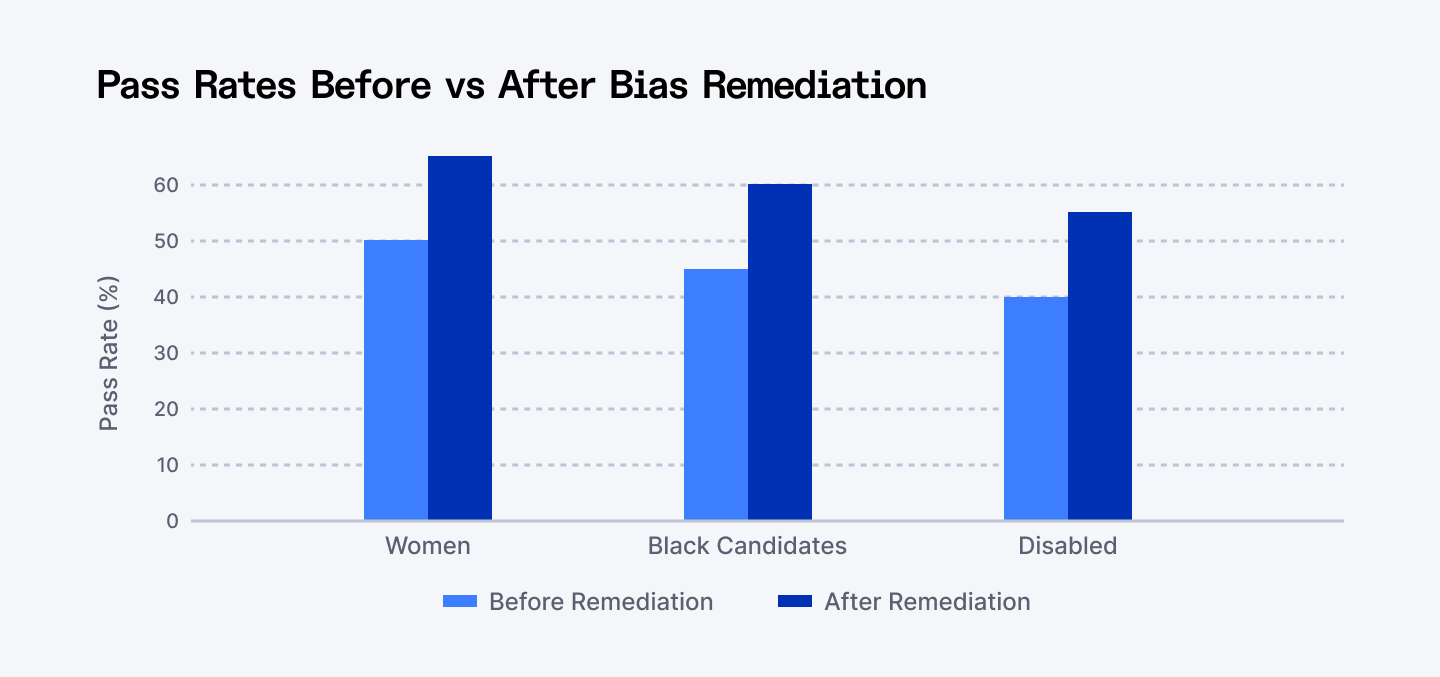

Pass rates before and after bias remediation: diversity improves by up to 30% when audits are enforced.

By embedding these steps into your workflow, you’ll turn hidden bias in AI hiring tools into a transparent, manageable risk and keep your processes fair, inclusive, and legally sound.

It’s exactly the due diligence that authorities now expect: for example, one EEOC consent decree in 2023 emphasized that companies must vet any AI assessments for accessibility and fairness. In other words, businesses are legally responsible for the impacts of their hiring tools.

Integrating Fair AI: Examples and Next Steps

Several organizations lead by example:

- Index.dev offers an AI hiring assistant focused on skill-based screening and built-in bias detection. Our platform centers on skills and objective metrics, and lets clients test-drive bias detection in real time with developer screening tools.

- HireVue now publishes third-party bias-audit reports and bias-detection flags, which is a major step toward transparency (once burnt, twice shy, right?). You just need to request their documentation.

- The PEAT/NIST Toolkit, funded by the DOL, provides practical exercises, roles, and checklists to embed fairness. You can download sample evaluation activities and policy templates directly from their portal.

Here’s how to move forward with confidence:

Choose AI vendors who share accountability:

Only work with platforms that provide model documentation, training data sources, and bias-testing logs. Ask vendors to certify that their models have been audited and clarify how often those audits are updated.

Write fairness into your contracts:

Include clauses that require vendors to disclose bias-mitigation strategies, allow internal audits, and flag performance gaps. Make it clear that bias isn’t just the vendor’s problem but a shared responsibility.

Use frameworks, not just tools:

Don’t rely solely on platforms. Align your hiring practices with frameworks like:

✔ NIST AI Risk Management Framework

✔ EEOC’s AI Selection Guidelines

✔ PEAT Inclusive Hiring Principles

These help structure decision-making beyond the software layer.

Build a bias-aware culture:

Culture and policy must align with the tech. Equip recruiters, hiring managers, and decision-makers with training on how AI decisions are made and help them spot how AI can go wrong. For example:

✔ Encourage teams to question patterns: “Why are we only seeing candidates from X university?” or “Why are so few women getting interviews for our sales team?”

✔ Launch anonymous feedback loops to spot disparities earlier.

✔ Publicize your commitment to fair hiring.

Pair AI with human insight:

AI is best at spotting patterns, not making judgment calls. Use AI to assist, not replace, your team. Every AI-shortlisted and AI-rejected candidate should be manually reviewed at key checkpoints to reduce automation bias.

FAQs

1. What causes AI hiring bias?

Biased training data, proxy variables (e.g., college names), and opaque algorithms.

2. How often should I audit my AI tools?

At minimum quarterly, but more frequently in high-volume hiring seasons.

3. Can I rely solely on vendor claims?

No. Always verify with blind tests and demographic outcome audits.

4. Which tools help write inclusive job descriptions?

Textio, Gender Decoder, and similar inclusive-language platforms can flag biased wording in your postings.

Conclusion

Hidden bias in AI hiring tools is real, but it’s also detectable and correctable. By combining leading research with field testing, your HR department can turn the "black box" into an open book. That way, you can be confident that great candidates are not filtered out by hidden algorithms, which matters all the more in today's tight labor market.

With vigilance, regular audits, and industry-backed guidelines for fairness, you can leverage AI's power of efficiency without compromising diversity and fairness.

Fair AI is not just about compliance; it's about creating processes that enhance inclusion, simplicity, and trust. Organizations committed to fairness don't only reduce legal risk; they also tap a richer, more diverse talent pool and lead the way in responsible innovation.

Lastly, treating candidates fairly isn't merely the ethical thing to do. It's good, no, actually, it’s smart business.

Hire smarter, not riskier. Access the elite 5% of vetted developers with Index.dev. Fast matching, free trial, and no hidden bias.