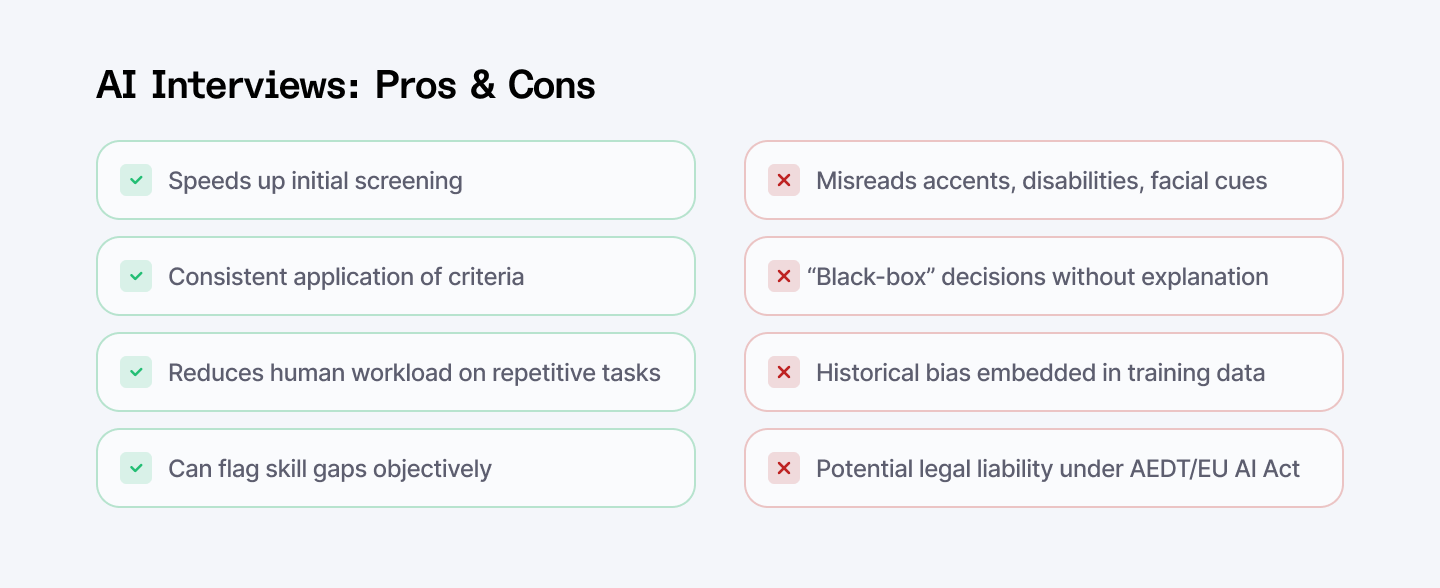

Are AI‑powered interviews fair? At first glance, AI‑driven video and voice interviews promise to sift through thousands of candidates in minutes by using speech analytics, micro‑expression scoring, and automated ranking to find the “ideal” hire.

But is there proof to back these claims?

Recent research shows these systems misinterpret non‑native accents, over‑penalize older or disabled applicants, and act as black boxes that leave HR teams and candidates in the dark.

In one telecom trial, AI flagged 38 percent of accented speakers as “low engagement” versus just 12 percent of native speakers, despite equal response quality. Meanwhile, the plaintiffs in Mobley v. Workday allege that AI tools unlawfully screened out older and disabled applicants. New York City’s AEDT Act now mandates bias audits and candidate notice, and the EU AI Act classifies recruitment AI as “high‑risk,” with transparency and oversight obligations attached.

In this article, we cut straight to what you need to know: bias drivers, the legal landscape, transparency solutions, and practical best practices.

Avoid biased hiring tech. Index.dev connects you with vetted developers using ethical, AI-powered vetting. Fast, fair, and risk-free.

Key Takeaways

- AI interviews can make you more effective but not necessarily impartial. If your training data mirrors past biases, the AI will too. Always question what the model is learning from.

- Laws and standards are tightening. New regulations (NYC AEDT, EU AI Act, etc.) demand audits and transparency. Non-compliance can risk discrimination lawsuits.

- Transparency builds trust. Use explainable AI techniques and clear candidate feedback. Research shows that when candidates get understandable AI explanations, they feel the process is fairer.

- Watch for vulnerable groups. Test your AI hiring tools on candidates of different ages, races, genders, and abilities. A bias “in one corner” can lock out entire communities.

- Blend AI with humans. Platforms like Index.dev highlight that combining AI screening with expert human judgment tends to produce better outcomes. In practice, keep human recruiters in the loop for final decisions.

- Keep learning. The AI hiring field is evolving. Stay updated on best practices (and tools like IBM’s fairness library) so we can all make our interviews as fair and inclusive as possible.

Bias and Discrimination in Speech‑Based Interviews

AI systems learn from data, so if past hiring was biased, the AI can mimic those patterns. Imagine training a model on years of resumes from a tech firm that has mostly hired men.

That AI might “learn” to favor male candidates, even if it never “knows” what gender is. University research confirms the effect.

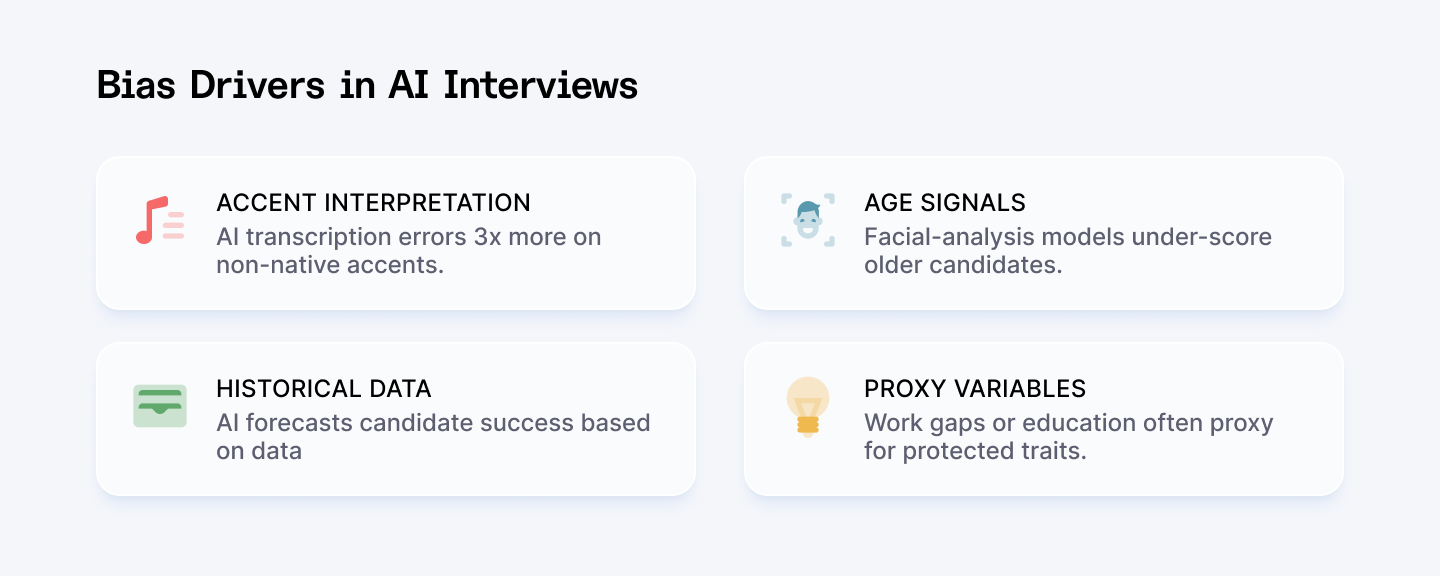

Accent Misinterpretation:

Queensland University’s 2024 report found AI transcription error rates of 35 percent for African and South Asian accents versus 10 percent for native speakers, leading to lower “clarity” and “engagement” scores for non‑native candidates.
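To make "transcription error rate" concrete, here is a minimal sketch of how a team might measure word error rate (WER) per accent group on its own test recordings. It is an illustration under stated assumptions, not the method used in the report above: the function names, group labels, and sample sentences are all hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] = edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # reference word dropped
                            curr[j - 1] + 1,      # extra word inserted
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def mean_wer_by_group(samples):
    """samples: iterable of (group, human_transcript, ai_transcript) tuples."""
    per_group = {}
    for group, reference, hypothesis in samples:
        per_group.setdefault(group, []).append(word_error_rate(reference, hypothesis))
    return {group: sum(values) / len(values) for group, values in per_group.items()}


# Hypothetical sample data: human-verified transcript vs. AI transcript.
samples = [
    ("native", "i led a team", "i led a team"),
    ("accented", "i led a team", "i let a team"),
    ("accented", "we shipped on time", "we shipped on time"),
]
print(mean_wer_by_group(samples))
```

If the gap between groups is large, any "clarity" or "engagement" score built on top of those transcripts deserves the same scrutiny.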

Age and Disability Bias:

An Australian study revealed some vendors’ facial‑analysis models under‑score candidates with Parkinson’s tremors or darker skin tones, echoing concerns that AI can “enable, reinforce, and deepen discrimination” if unchecked.

Key takeaway:

AI learns from historic data. If past hiring favored certain groups, the AI will perpetuate those patterns.

As one ethics study notes, AI hiring tools often end up “reflecting the biases embedded in historical hiring data”.

These examples underline a simple truth:

AI can amplify bias if we aren’t careful.

Besides gender and race, other signals in data can act as proxies. For instance, gaps in work history might correlate with caregiving (more common for women), and accent or education level might proxy for nationality.

Discrimination can also surface in less obvious ways: one study observed an AI discriminating against job applicants who wore religious dress, and others describe video-interview technology consistently failing to support disabled candidates.

In short, if we train AI on discriminatory information or neglect edge cases, we'll automate inequality, rather than eliminate it.

Legal and Ethical Frameworks

As AI enters hiring, legal and ethical frameworks are racing to catch up. In many jurisdictions, automated hiring tools are now subject to anti-discrimination laws. In practice, this means organizations must audit and document their AI tools.

Mobley v. Workday (2025):

The lawsuit alleges systematic exclusion of older and disabled applicants by an AI resume screener. Regulatory changes are underway.

NYC AEDT Act (2023):

Requires mandatory bias audits and candidate disclosure before deploying any AI hiring tool.

Illinois H.B. 3773 and Colorado SB 24-205 (2024):

Illinois's new law (H.B. 3773) prohibits employers from using AI that has a discriminatory effect on protected classes, and Colorado's SB 24-205 requires reasonable care to guard against known discrimination risks.

EU AI Act:

Classifies recruitment AI as "high‑risk," subjecting it to impact assessments, scoring algorithm transparency, and ongoing monitoring.

Why it matters: Noncompliance can result in civil‑rights litigation, significant fines under GDPR, and reputational harm.

Ethically, the consensus is: you can’t set AI loose and hope for the best.

Firms are advised to build “hybrid” processes that let AI filter or rank candidates but always keep human experts overseeing final decisions and auditing outcomes.

As a judge in the Workday case explained, an AI recruiter isn’t just a passive tool; it “participates in decision-making”, so it can be held to the same fairness standards as a human recruiter.

Transparency and Explainability

Another ethical pillar is transparency. Some aspects that you need to consider:

Black‑Box Risks

Without clear reasoning, candidates can’t appeal or learn from AI decisions, and HR teams can’t diagnose flawed models. Researchers emphasize that making AI decisions interpretable helps everyone.

Explainable AI (XAI)

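Tools like IBM’s AI Fairness 360 (fairness metrics and bias‑mitigation algorithms) and Google’s What‑If Tool (interactive visualizations) help teams see how features such as years of experience or speech tempo relate to a “pass” or “fail,” and whether outcomes differ across groups.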
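To illustrate the idea of "which features drove the outcome," here is a minimal sketch for a simple linear scoring model. It does not use the AI Fairness 360 or What‑If Tool APIs; the weights, baseline values, and feature names are hypothetical, and real interview models are rarely this simple.

```python
# Hypothetical linear model: score = sum(weight * (value - baseline)).
WEIGHTS = {"years_experience": 0.4, "leadership_examples": 0.8, "speech_tempo": 0.1}
BASELINE = {"years_experience": 4, "leadership_examples": 2, "speech_tempo": 3}


def explain(candidate: dict):
    """Return the total score and per-feature contributions, largest effect first."""
    contributions = {
        name: WEIGHTS[name] * (candidate[name] - BASELINE[name]) for name in WEIGHTS
    }
    score = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


score, ranked = explain(
    {"years_experience": 5, "leadership_examples": 0, "speech_tempo": 3}
)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

In this made-up case the missing leadership examples outweigh the extra year of experience, which is exactly the kind of statement a recruiter or candidate can act on. A feature like speech tempo showing up near the top of the list would be a prompt to ask whether it is standing in for accent or disability.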

Best Practice

Log every decision, publish third‑party audit summaries, and provide candidates with simple feedback such as “Your response lacked two examples of leadership,” which research shows improves perceived fairness.
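As a rough sketch of what "log every decision" could look like, the snippet below writes one JSON line per AI decision so that auditors can later reconstruct what the model saw and said. The field names and file name are assumptions for illustration, not a standard or a vendor format.

```python
import json
from datetime import datetime, timezone


def build_decision_record(candidate_id, model_version, score, outcome,
                          top_reasons, reviewed_by=None, timestamp=None):
    """Collect what an auditor would need to reconstruct one AI decision."""
    return {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "candidate_id": candidate_id,      # pseudonymous ID, not a name
        "model_version": model_version,
        "score": score,
        "outcome": outcome,                # e.g. "advance" or "human_review"
        "top_reasons": list(top_reasons),  # plain-language reasons shown to the candidate
        "reviewed_by": reviewed_by,        # None until a human signs off
    }


def to_json_line(record: dict) -> str:
    """Serialize one record as a single line for an append-only log."""
    return json.dumps(record, sort_keys=True)


def append_record(path: str, record: dict) -> None:
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(to_json_line(record) + "\n")


record = build_decision_record(
    candidate_id="c-1042",
    model_version="screening-model-v3",  # hypothetical version label
    score=0.41,
    outcome="human_review",
    top_reasons=["Your response lacked two examples of leadership"],
)
print(to_json_line(record))
```

An append-only file is the simplest option; the point is that the same record feeds both the audit trail and the feedback a candidate receives.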

Regulators are catching on. As previously mentioned, the EU AI Act and the GDPR require that high-risk AI (like hiring tools) be documented and explainable.

The U.S. is moving in that direction too, with new EEOC guidance urging companies to understand their algorithms’ logic.

In Australia, experts like Dr. Natalie Sheard call for “greater transparency around the workings of AI systems” and their training data.

In practice, transparency can mean keeping logs of the AI’s decisions, conducting open “red-team” tests, or publishing third-party audit reports. Some vendor platforms even build in explainability features. The goal is to make AI recruitment a transparent process: managers and candidates must know how and why the system reaches its decisions. Only then can anyone verify that the process is fair.

Impact on Marginalized Groups

Who stands to lose if AI hiring goes wrong? Unfortunately, research highlights the usual suspects: people from historically marginalized groups.

As one report puts it:

“Women, older workers, people with disabilities, and non‑native speakers are at heightened risk of AI‑driven exclusion.” - Australian Human Rights Commission report, 2025

For example, Dr. Sheard’s interviews with Australian recruiters revealed worries that voice-based AI interviews often misunderstand non-native accents or atypical speech, leading to unfair scoring.

In fact, when one AI tool was tested, its transcription error rate jumped from ~10% for native speakers to 22% for a Chinese-accented English speaker. Without adjustment, such gaps in understanding can shut out qualified applicants.

- Intersectional Bias: A middle‑aged Latina with a speech impediment may face compounding penalties.

- Digital‑Access Gap: AI video interviews presume high‑speed internet and a quiet room, leaving behind candidates who have neither.

Basically, if we’re not careful, AI could end up locking disadvantaged groups out of jobs at unprecedented scale. Your role is to catch these gaps.

Practically, that means testing hiring AIs on real-world populations and checking outcomes by demographic group. It means making accommodations: for example, permitting sign-language interpreters or phone interviews if the candidate has hearing loss.

If you're employing AI to recruit, you must ask:

How will our process treat older candidates, or candidates with non-native accents?

Action steps for HR:

- Pilot your tools with diverse candidate groups

- Monitor pass‑rate variation by gender, ethnicity, age, and ability

- Make changes (e.g., permitting phone interviews for individuals with hearing differences).
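The monitoring step above can be sketched as a pass-rate comparison. The example computes each group's selection rate and its ratio to the best-performing group, then flags anything below 0.8, the "four-fifths" rule of thumb commonly used in US adverse-impact analysis. The group labels and counts are made up, and a flag is a prompt to investigate, not a legal finding.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, passed) pairs, where passed is True or False."""
    applied, passed = Counter(), Counter()
    for group, did_pass in outcomes:
        applied[group] += 1
        if did_pass:
            passed[group] += 1
    return {group: passed[group] / applied[group] for group in applied}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any passing candidates")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical pilot: 10 candidates per group.
outcomes = (
    [("under_40", True)] * 6 + [("under_40", False)] * 4
    + [("over_40", True)] * 3 + [("over_40", False)] * 7
)
rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
print(rates, ratios, flag_groups(ratios))
```

With small pilot groups the ratios are noisy, so treat a flag as a reason to collect more data and review individual cases rather than as proof of bias.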

Real-World Implementations and Audits

In the hiring marketplace, implementations vary widely. Some companies simply plug in an AI resume scanner or video-interview robot and call it a day. Others build more careful workflows.

For example, talent networks like Index.dev (which connects employers to top tech talent) emphasize combining AI vetting with human-led matching. As they see it, AI can filter out applicants, but “human involvement is essential to ensure fairness” through follow-up questions and assessment of soft skills. Likewise, leading HR platforms (such as HireVue and Pymetrics) increasingly collaborate with industrial-organizational psychologists to validate their assessments.

Still, independent auditing is rare. Outside a few city laws (e.g. NYC’s audit mandate), most U.S. companies aren’t forced to prove their AI is fair. A recent UW researcher noted that “outside of a New York City law, there’s no… independent audit of these systems”, meaning biases can slip through. This gap is partly because many tools are proprietary black boxes. One workaround: some nonprofits and regulators press vendors to share data. For instance, the Workday suit and the Amazon case both relied on leaked info and reverse-engineering to uncover bias.

On the positive side, awareness is growing. HR leaders report skyrocketing AI adoption (HireVue says AI use in hiring jumped from 58% in 2024 to 72% in 2025), but candidate backlash is also rising. In response, some companies are conducting algorithmic audits: internal or third-party reviews of their hiring models. Tech frameworks now exist to help with this: IBM’s AIF360 toolkit and Google’s What-If Tool are examples that allow data scientists to simulate how changes in inputs affect outcomes.

Professional organizations (e.g., SHRM and ISACA) suggest that any audit should compare accuracy across groups, confirm legal compliance, and document assumptions. In short, practice is mixed: some conscientious teams are conducting rigorous audits and recording decisions, but many others have barely scratched the surface.

In our view, no single approach guarantees fairness. But research suggests effective strategies. For instance, retraining models on more diverse samples or applying “de-biasing” algorithms can help (this is a growing ML research area).

Another important method is human-AI collaboration: have the algorithm suggest, but always have a human check marginal cases. Regularly checking results by race, gender, age, etc., is important too: if your data shows fewer hires from protected classes, ask why.
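One way to picture "the algorithm suggests, a human checks marginal cases" is a simple routing rule. In this sketch the score range and both cut-offs are hypothetical, and even the low-score branch only produces a suggestion that a recruiter must confirm.

```python
def route_candidate(score: float, advance_at: float = 0.80, reject_at: float = 0.30) -> str:
    """Map a model score in [0, 1] to a next step. Cut-offs are illustrative only."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= advance_at:
        return "advance"
    if score <= reject_at:
        # Never an automatic rejection: a recruiter confirms or overrides it.
        return "suggest_reject"
    return "human_review"  # everything in the uncertain middle band


def review_queue(scores: dict) -> list:
    """Candidate IDs that a human must look at before any decision is final."""
    return sorted(
        candidate_id
        for candidate_id, score in scores.items()
        if route_candidate(score) != "advance"
    )


scores = {"c-1": 0.91, "c-2": 0.55, "c-3": 0.12}  # hypothetical model outputs
print({cid: route_candidate(s) for cid, s in scores.items()})
print(review_queue(scores))
```

Widening the middle band sends more candidates to recruiters, which costs time but reduces the number of decisions made on the model's word alone.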

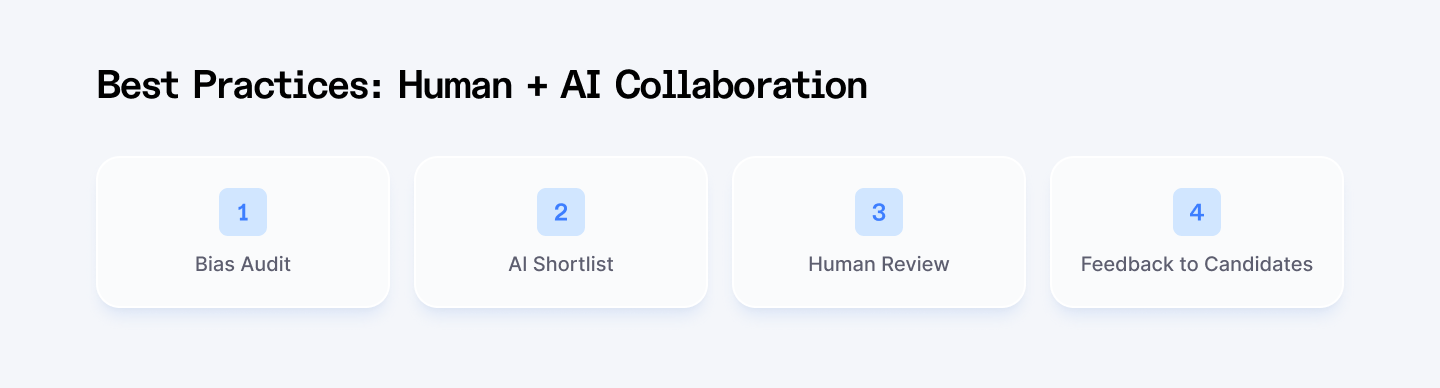

Best Practices: Human + AI Collaboration

Based on industry and academic insights, fair AI hiring workflows share these features:

Pre‑Deployment Bias Audit:

Use open‑source toolkits to test models on synthetic and real datasets.

Hybrid Review Panels:

Let AI shortlist candidates, but require at least two human experts to review all rejections.

Continuous Monitoring:

Measure hire‑rate equity monthly and investigate any drop in offers to protected groups.
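As a sketch of what a monthly check might look like, the function below compares each group's latest offer rate with the average of its earlier months and flags drops beyond a tolerance. The group names, rates, and the 5-point tolerance are all hypothetical; a real threshold would need to account for sample size.

```python
def flag_offer_rate_drops(monthly_rates: dict, max_drop: float = 0.05) -> list:
    """monthly_rates maps group -> offer rates ordered oldest to newest.

    Flags groups whose latest rate sits more than max_drop below the mean
    of their earlier months.
    """
    flagged = []
    for group, rates in monthly_rates.items():
        if len(rates) < 2:
            continue  # not enough history to compare against
        *history, latest = rates
        baseline = sum(history) / len(history)
        if baseline - latest > max_drop:
            flagged.append(group)
    return sorted(flagged)


# Hypothetical offer rates for three consecutive months.
monthly_rates = {
    "over_40": [0.20, 0.22, 0.10],
    "under_40": [0.21, 0.20, 0.20],
}
print(flag_offer_rate_drops(monthly_rates))
```

A flagged group is a starting point for the investigation: check whether the model, the applicant pool, or the job mix changed that month.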

Transparent Candidate Feedback:

Offer clear, example‑based feedback so applicants understand AI decisions.

Index.dev spotlight:

Index.dev’s proprietary AI hiring recommender system MIND.dev accelerates candidate‑role alignment, while seasoned recruiters conduct live follow‑ups to ensure that nuanced skills and soft qualities are evaluated.

Your action checklist:

- Conduct pre‑deployment audits with open‑source fairness tools.

- Keep human experts in the loop for all borderline or rejected candidates.

- Provide clear, example‑based feedback to every applicant.

- Monitor outcomes by protected characteristic and adjust models as needed.

With these steps (and by partnering with platforms like Index.dev to combine AI efficiency and human judgment) we can ensure that AI‑powered interviews become a force for inclusion rather than exclusion.

FAQs

Q1: How do AI interviews misjudge accents?

AI speech models trained on native English data often struggle with non‑native phonemes, elevating transcription errors that feed into engagement and clarity scores.

Q2: What is the AEDT Act?

New York City’s Automated Employment Decision Tools Act (2023) mandates annual bias audits and candidate notification for any AI screening tool used by employers.

Q3: Can AI tools be made fair?

Yes, by retraining on diverse data, using de-biasing algorithms, keeping humans in a supervisory role, and being open about the process.

Conclusion

AI recruitment is a powerful tool, but it's no magic wand. Used responsibly, with restraint and human judgment, it can help us find excellent candidates more efficiently.

Applied indiscriminately, however, it can reproduce the same unwarranted biases we aimed to leave behind. By staying aware of the issues covered above and addressing them, you (as HR professionals or tech-savvy decision makers) can reap the strengths of AI without compromising your commitment to equity.

Hire the best, without bias. Access the top 5% of vetted developers with Index.dev’s fast, fair, and risk-free hiring.