Generative AI is a branch of machine learning in which a model produces new content, such as text, images, or music.

It is applied in areas including, but not limited to, content creation, drug discovery, the arts, and graphics. These applications are built on a layered architecture, which determines what the model can do and how efficiently it does it.

Understanding the architecture of generative AI, especially of Large Language Models (LLMs), matters for several reasons. First, it shows what a model does well and what it does poorly, which helps decide whether it suits a particular application. Second, it is needed to fine-tune the model's performance, reduce computational load, and mitigate bias. Third, it supports building new generative AI applications by letting practitioners experiment with new architectural designs.

In this article, we discuss generative AI architecture in detail, covering its components, design criteria, and uses, with specific reference to LLMs. Our aim is to prepare readers for the choices and opportunities generative AI presents across different fields.

Read also: What is a Large Language Model? Everything you need to know

Looking for senior LLM engineers? Index.dev connects you with the most thoroughly vetted ones ready to work on your most challenging projects. Hire in under 48 hours!

Understanding Generative AI Architecture

The architecture of a generative AI system can be viewed as a combination of data, models, and the feedback loops between them. It forms the foundation on which new, high-value content is produced. The sections below look more closely at the components that make up this architecture.

Core Components of Generative AI Architecture

Data Processing Layer

Data underpins every generative AI system. This layer covers data collection, cleaning, preprocessing, and transformation.

- Data Acquisition: Identifying and gathering relevant data from various sources. Depending on the model, this can include both structured and unstructured data.

- Data Cleaning: Detecting and correcting errors, gaps, and outliers in the dataset. This includes handling missing values, removing duplicates, and normalizing formats.

- Preprocessing: Converting cleaned data into a format the model can consume. Methods include normalization, scaling, and tokenization.

- Feature Engineering: Extracting meaningful features from the data to enhance model performance. This involves selecting, creating, and transforming variables that capture relevant information.

The quality and quantity of data significantly impact the generative model's capabilities. A well-curated dataset is essential for training robust and effective models.
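To make the cleaning and preprocessing steps above more concrete, here is a minimal sketch for text data. It assumes a naive word-level tokenizer and a tiny in-memory dataset; the function names (`clean_records`, `tokenize`, `build_vocab`, `encode`) are illustrative, and production LLM pipelines typically use subword tokenizers instead.

```python
import re

def clean_records(records):
    """Drop missing values and duplicates; normalize whitespace and case."""
    seen = set()
    cleaned = []
    for text in records:
        if text is None:
            continue  # missing value
        normalized = re.sub(r"\s+", " ", text).strip().lower()
        if not normalized or normalized in seen:
            continue  # empty after cleaning, or a duplicate
        seen.add(normalized)
        cleaned.append(normalized)
    return cleaned

def tokenize(text):
    """Naive tokenizer: runs of letters/digits, or single punctuation marks."""
    return re.findall(r"[a-z0-9]+|[^\sa-z0-9]", text)

def build_vocab(token_lists):
    """Assign an integer id to every token; id 0 is reserved for unknowns."""
    vocab = {"<unk>": 0}
    for tokens in token_lists:
        for tok in tokens:
            vocab.setdefault(tok, len(vocab))
    return vocab

def encode(tokens, vocab):
    """Map tokens to ids, falling back to <unk> for unseen tokens."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in tokens]

raw = ["Hello,  world!", None, "hello, world!", "  ", "Generative AI writes text."]
docs = clean_records(raw)
token_lists = [tokenize(d) for d in docs]
vocab = build_vocab(token_lists)
encoded = [encode(t, vocab) for t in token_lists]
print(docs)
print(encoded)
```

The integer sequences in `encoded` are the kind of input a model layer would consume; everything before that point is data preparation.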

Generative Model Layer

The generative model is at the core of a generative AI system. This layer generates new content based on patterns learned from the data.

- Overview of different generative models:

- Generative Adversarial Networks (GANs): Due to the use of a generator and a discriminator in its structure, GANs are excellent at producing impressively realistic data.

- Variational Autoencoders (VAEs): VAEs use probabilistic methods to encode data into latent variables, from which both reconstructions of the input and new variations of it can be generated.

- Transformers: Originally built to model sequence data, transformers have come into the spotlight in natural language processing and are steadily extending to other domains.

- Model Selection and Training: Selecting the right model structure based on the required type of output and the data available for training. Training means feeding the model large amounts of data and adjusting its parameters through backpropagation.

- Hyperparameter Tuning: Adjusting training settings such as the learning rate, the batch size, and the number of layers in the model. This is usually an iterative, trial-and-error process in which candidate configurations are tested and compared.

The choice of generative model and the effectiveness of the training process significantly influence the quality and diversity of generated outputs.
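As a rough illustration of training and trial-and-error hyperparameter tuning, the sketch below fits a one-parameter toy model with gradient descent and compares a small grid of settings. The toy data, the grid values, and the `train` function are assumptions made for this example; real generative models have far more parameters, but the loop has the same shape.

```python
import itertools

def train(learning_rate, epochs, data):
    """Fit y = w * x by gradient descent on mean squared error."""
    w = 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / n
        w -= learning_rate * grad
    loss = sum((w * x - y) ** 2 for x, y in data) / n
    return w, loss

# Toy data generated by y = 3x.
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]

# Candidate hyperparameters to try (values chosen only for illustration).
grid = {"learning_rate": [0.001, 0.01, 0.1], "epochs": [10, 50]}

results = []
for lr, epochs in itertools.product(grid["learning_rate"], grid["epochs"]):
    w, loss = train(lr, epochs, data)
    results.append({"learning_rate": lr, "epochs": epochs, "w": w, "loss": loss})

# Keep the configuration with the lowest final loss.
best = min(results, key=lambda r: r["loss"])
print(best)
```

In practice the comparison would be made on held-out validation data rather than on the training loss, so that the chosen configuration generalizes.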

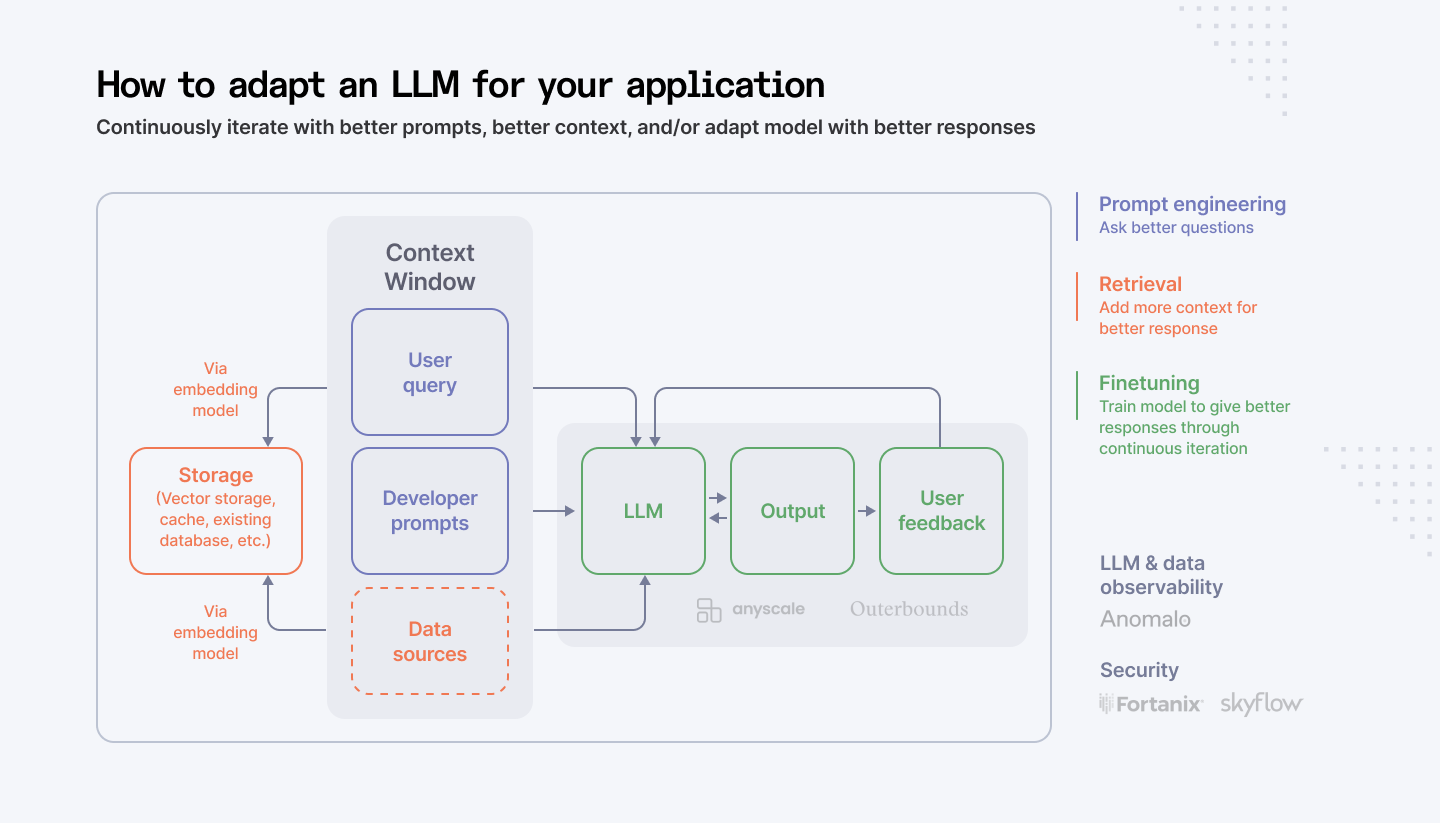

Feedback and Improvement Layer

Continuous improvement is essential for the evolution of generative AI systems. This layer focuses on evaluating model performance, incorporating user feedback, and refining the model accordingly.

- Model Evaluation: Assessing the quality of generated content using relevant metrics. This includes metrics like fidelity, diversity, and coherence.

- Human-in-the-loop Feedback: Incorporating human judgement to guide model development and improve generated outputs. This can involve rating generated samples, providing corrections, or offering creative suggestions.

- Continuous Learning: Enabling the model to learn from new data and feedback over time. This involves retraining the model or updating its parameters incrementally.

A robust feedback loop ensures that the generative AI system aligns with user expectations and produces increasingly valuable outputs.
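One simple way to put a number on diversity is the distinct-n score: the share of n-grams across a set of generated samples that are unique. The sketch below is a minimal version that splits on whitespace; it is one possible diversity metric, not a full evaluation suite, and the sample sentences are made up for illustration.

```python
def distinct_n(samples, n=2):
    """Fraction of n-grams across all samples that are unique (higher = more diverse)."""
    ngrams = []
    for text in samples:
        tokens = text.split()
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0

repetitive = ["the cat sat", "the cat sat", "the cat sat"]
varied = ["the cat sat", "a dog ran", "birds fly south"]

print(distinct_n(repetitive))  # low score: the same bigrams repeat
print(distinct_n(varied))      # high score: every bigram is different
```

A score like this can be tracked across model versions alongside human ratings, so that a drop in diversity is noticed before users report it.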

Deployment and Integration Layer

The final stage involves deploying the trained model into a production environment and integrating it with other systems.

- Model Deployment Strategies: Choosing the appropriate deployment platform (cloud, on-premise, edge) based on performance, scalability, and security requirements.

- Integration with Existing Systems: Seamlessly incorporating the generative AI model into existing workflows and applications. This involves developing APIs and connectors.

- API Development and Management: Creating well-defined APIs to expose the model's capabilities to external systems. This includes version control, documentation, and security measures.

Effective deployment and integration are crucial for realizing the full potential of generative AI and delivering value to end-users.
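The API points above (versioning, security, well-defined contracts) can be sketched as a framework-independent request handler. Everything here is hypothetical: the `/v1/generate` path, the `X-API-Key` header, the hard-coded key, and the `generate_stub` function that stands in for the deployed model. A real service would sit behind a web framework and load keys from a secret store.

```python
import json

API_KEYS = {"demo-key"}        # hypothetical; never hard-code keys in production
SUPPORTED_VERSIONS = {"v1"}

def generate_stub(prompt):
    """Hypothetical stand-in for a call to the deployed model."""
    return f"echo: {prompt}"

def handle_request(path, headers, body):
    """Return (status_code, response_dict) for a POST to /<version>/generate."""
    parts = path.strip("/").split("/")
    if len(parts) != 2 or parts[1] != "generate":
        return 404, {"error": "not found"}
    if parts[0] not in SUPPORTED_VERSIONS:
        return 400, {"error": f"unsupported API version: {parts[0]}"}
    if headers.get("X-API-Key") not in API_KEYS:
        return 401, {"error": "invalid API key"}
    try:
        prompt = json.loads(body)["prompt"]
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "body must be a JSON object with a 'prompt' field"}
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, {"error": "'prompt' must be a non-empty string"}
    return 200, {"version": parts[0], "output": generate_stub(prompt)}

status, response = handle_request(
    "/v1/generate", {"X-API-Key": "demo-key"}, '{"prompt": "Hello"}'
)
print(status, response)
```

Keeping the version in the path means a future `v2` can change the request or response shape without breaking existing clients.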

Also read: Examining the Leading LLM Models: Top Programs and OWASP Risks

Designing Effective Generative AI Architectures

Designing a generative AI architecture involves technical, ethical, and business considerations. The right architecture is critical to building reliable, scalable, and accountable generative AI.

Generative AI Architecture Design Principles

Creating a successful generative AI architecture requires a holistic approach that encompasses several key principles:

Key considerations for designing effective generative AI architectures

- Performance Optimization: Focusing on computation time, throughput, and data transfer rate. This involves careful choices of hardware, algorithms, and optimization methods.

- Scalability: Designing the architecture to handle growth in data volume, model complexity, and number of users. Relevant techniques include distributed computing, cloud systems, and modular design.

- Cost-Efficiency: Balancing operational quality and growth against a set budget. This means evaluating affordable options for hardware, software, and cloud platforms.

- Data Privacy and Security: Protecting sensitive data at every stage of the AI life cycle. The focus should be on strong security measures, data masking, and compliance with privacy policies and laws.

- Ethical Considerations: Acknowledging potential sources of bias and complying with established codes of ethics. This entails fairness, transparency, accountability, and minimizing adverse effects.

Balancing performance, scalability, and cost-efficiency

Achieving an optimal balance between performance, scalability, and cost-efficiency is a critical challenge in generative AI. Several strategies can be employed:

- Hardware Acceleration: Leveraging specialized hardware like GPUs, TPUs, or FPGAs to accelerate computations.

- Model Optimization: Pruning, quantization, and knowledge distillation techniques to reduce model size and computational requirements.

- Cloud-Based Infrastructure: Utilizing cloud platforms for elastic scaling and cost-effective resource allocation.

- Distributed Training: Training models across multiple machines to accelerate the process.

By carefully considering these factors, organizations can build generative AI systems that deliver high performance while maintaining cost-effectiveness.

Ensuring data privacy and security

Protecting sensitive data is paramount in generative AI. Implementing comprehensive data privacy and security measures is essential:

- Data Anonymization and Privacy-Preserving Techniques: Transforming data to remove personally identifiable information while preserving utility.

- Secure Data Storage and Transmission: Employing encryption, access controls, and data loss prevention measures.

- Regular Security Audits and Vulnerability Assessments: Identifying and addressing potential security threats.

- Compliance with Data Protection Regulations: Adhering to relevant regulations like GDPR, CCPA, and HIPAA.

A robust data privacy and security framework is crucial for building trust and protecting organizational reputation.
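As a small illustration of data anonymization, the sketch below masks email addresses and phone numbers with regular expressions before text is stored or used for training. The patterns are deliberately simple assumptions and will miss many real-world formats; names and other identifiers usually need dedicated detection (for example, named-entity recognition), which is out of scope here.

```python
import re

# Simplified patterns for illustration; not exhaustive.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def mask_pii(text):
    """Replace emails and phone numbers with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text

masked = mask_pii("Contact Ana at ana@example.com or +1 (555) 010-9999.")
print(masked)
```

Note that the person's name is left untouched in this example, which shows why regex masking alone is rarely sufficient for compliance.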

Ethical implications and responsible AI practices

Generative AI raises ethical concerns that must be addressed. Responsible AI development involves:

- Bias Mitigation: Identifying and reducing biases in data and models.

- Fairness and Inclusivity: Ensuring that the AI system treats all users equitably.

- Transparency and Explainability: Making the AI system's decision-making process understandable.

- Accountability: Establishing mechanisms for identifying and addressing harmful outcomes.

- Human-Centered Design: Prioritizing human well-being and avoiding negative societal impacts.

By incorporating ethical considerations into the design and development process, organizations can build generative AI systems that benefit society.

Generative AI Architecture Patterns

Generative AI architecture patterns are reusable structures that describe preferred ways of configuring generative AI systems for different objectives. The right pattern depends on factors such as the type of data being used, the kind of output desired, the available computational power, and the required performance.

Common Architectural Patterns for Different LLM Applications

Although the foundational elements of generative AI architectures are shared, their configuration and emphasis differ depending on the area of application.

Text Generation Architectures

- Encoder-Decoder Architecture: This pattern is commonly applied to tasks such as machine translation, text summarization, and question answering. It consists of an encoder, which processes the input text, and a decoder, which produces the output text.

- Transformer Architecture: Developed based on attention mechanisms, transformers are particularly good at learning about long-range dependencies and are now considered the go-to model for many NLP tasks.

- Generative Pre-trained Transformer (GPT) Architecture: Built on the transformer architecture, GPT models are pre-trained on massive amounts of text data and then fine-tuned for specific downstream tasks.

Image Generation Architectures

- Generative Adversarial Networks (GANs): GANs are most often used to generate photorealistic images through a competitive setup: a generator produces images, and a discriminator judges whether each image is real or generated.

- Variational Autoencoders (VAEs): Taking a probabilistic approach, VAEs learn a latent representation of images that supports both reconstruction and generation.

- Diffusion Models: A newer approach that progressively adds noise to images during training and learns to reverse the process. New images are then created by starting from noise and removing it step by step.
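The noising half of a diffusion model is simple enough to sketch in a few lines. The example below applies the commonly used Gaussian forward step, x_t = sqrt(1 - beta_t) * x_(t-1) + sqrt(beta_t) * noise, to a tiny list of pixel values. The noise schedule (`betas`) and the three-value "image" are assumptions for illustration, and the reverse (denoising) step is omitted because it requires a trained neural network.

```python
import math
import random

def forward_diffusion(x0, betas, rng):
    """Return the list of progressively noisier versions of x0, one per step."""
    x = list(x0)
    trajectory = [x]
    for beta in betas:
        # Shrink the signal slightly and mix in fresh Gaussian noise.
        x = [math.sqrt(1 - beta) * v + math.sqrt(beta) * rng.gauss(0, 1) for v in x]
        trajectory.append(x)
    return trajectory

rng = random.Random(0)          # fixed seed so runs are repeatable
betas = [0.1] * 50              # constant noise schedule, chosen for illustration
trajectory = forward_diffusion([1.0, -1.0, 0.5], betas, rng)

# Fraction of the original signal that survives after all steps.
signal_scale = math.prod(math.sqrt(1 - b) for b in betas)
print(len(trajectory), signal_scale)
```

After enough steps almost none of the original signal remains, which is why generation can start from pure noise and rely on the learned denoiser to recover structure.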

Video Generation Architectures

- Video Transformers: Extend the transformer architecture to video input and can generate videos frame by frame.

- Generative Adversarial Networks for Videos: Extend GANs to video generation by producing successive frames either in parallel or sequentially.

- Video Prediction Models: Predict subsequent frames from preceding ones to synthesize a video.

Other Emerging Application Areas

- Audio Generation: Architectures for generating music, speech, and other audio include Recurrent Neural Networks, Convolutional Neural Networks, and Generative Adversarial Networks.

- 3D Model Generation: Techniques for creating 3D content include voxel-based models, point clouds, and neural networks that render implicit representations.

- Drug Discovery: In drug-discovery tasks, generative models propose new molecules with the required properties.

It's important to note that these are just a few examples of common architectural patterns, and the field of generative AI is rapidly evolving. New architectures and combinations of existing patterns are continuously emerging.

Also read: Designing Generative AI Applications: 5 Key Principles to Follow

Building Generative AI Applications

The transformation of generative AI models into practical applications requires careful consideration of architectural design, integration, and user experience.

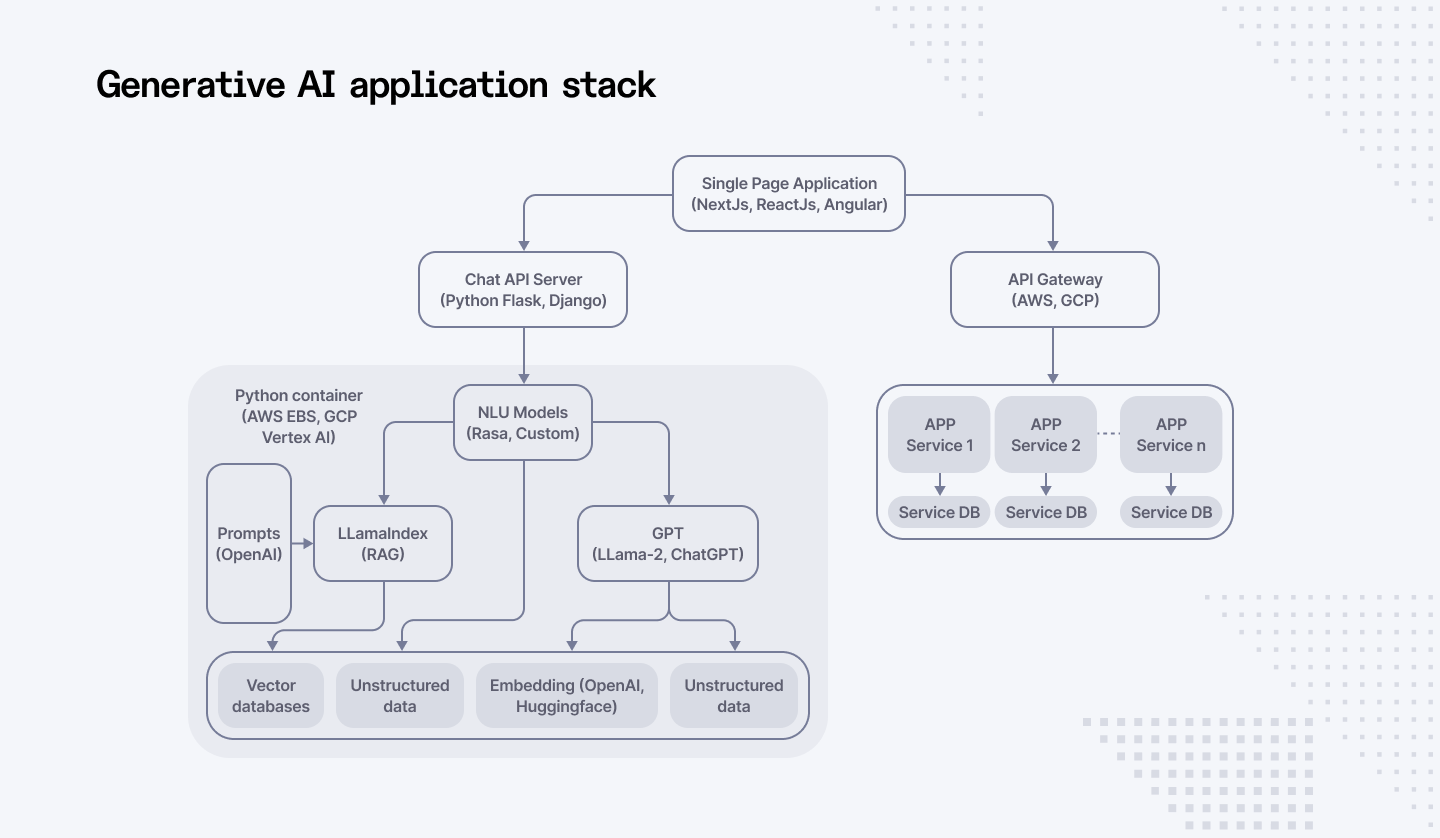

Generative AI Application Architecture

Key components of a generative AI application architecture

A generative AI application architecture typically consists of several interconnected components:

- Model Serving Infrastructure: This component handles the deployment and serving of the generative AI model. It includes considerations for hardware acceleration, load balancing, and fault tolerance.

- Data Pipeline: Ensures that data is fed to the model reliably for both training and inference. It covers data ingestion, data cleaning, data preprocessing, and feature engineering.

- Prompt Engineering Module: Translates user inputs into effective prompts for the generative model. It requires understanding of model capabilities and user intent.

- Output Processing Module: Transforms the model's raw output into a form better suited to its next use or application.

- User Interface: Gives users an easy way to interact with the generative AI system. It covers input handling, output presentation, and feedback controls.

- Monitoring and Evaluation System: Tracks application performance, model accuracy, and user behavior to enable continuous improvement.
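To show how the prompt engineering and output processing modules fit around the model, here is a minimal sketch. The prompt wording, the `build_prompt` and `postprocess` helpers, and the `fake_model` function (a stand-in for a real model call) are all assumptions for illustration.

```python
from string import Template

# Hypothetical prompt template; real templates are tuned per model and task.
PROMPT = Template(
    "You are a helpful assistant.\n"
    "Context: $context\n"
    "Task: $task\n"
    "Answer:"
)

def build_prompt(user_input, context=""):
    """Prompt engineering module: wrap raw user input in a structured prompt."""
    return PROMPT.substitute(context=context or "none", task=user_input.strip())

def fake_model(prompt):
    """Hypothetical stand-in for the generative model."""
    return "  Paris is the capital\n of France.  "

def postprocess(raw_output, max_chars=200):
    """Output processing module: collapse whitespace and cap the length."""
    text = " ".join(raw_output.split())
    return text[:max_chars]

prompt = build_prompt("  What is the capital of France? ")
answer = postprocess(fake_model(prompt))
print(prompt)
print(answer)
```

Keeping these two steps as separate functions makes it possible to change the prompt format or the output rules without touching the model serving code.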

Integration of generative AI models with other systems

To create value, generative AI models often need to be integrated with other systems and applications. Key integration points include:

- Database Integration: Accessing structured data for context and grounding the generated content.

- API Integration: Calling other services or APIs for additional or more specialized functions or information.

- Cloud Integration: Deploying on cloud infrastructure for storage, capacity, flexibility, and processing power.

- Enterprise Systems Integration: Integration with other business applications for data transfer and business process management.
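Database integration for grounding can be sketched with Python's built-in `sqlite3` module: look up a record, then place the retrieved facts in the prompt so the model answers from them. The `products` table, its columns, and the prompt wording are hypothetical, and an in-memory database is used only to keep the example self-contained.

```python
import sqlite3

# In-memory database with a hypothetical products table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT PRIMARY KEY, price REAL, stock INTEGER)")
conn.executemany(
    "INSERT INTO products VALUES (?, ?, ?)",
    [("widget", 9.99, 12), ("gadget", 24.5, 0)],
)

def grounded_prompt(conn, product_name, question):
    """Fetch facts for one product and embed them in the prompt."""
    row = conn.execute(
        "SELECT name, price, stock FROM products WHERE name = ?",
        (product_name,),  # parameterized query avoids SQL injection
    ).fetchone()
    if row is None:
        facts = "No matching record."
    else:
        facts = f"name={row[0]}, price={row[1]}, stock={row[2]}"
    return f"Use only these facts: {facts}\nQuestion: {question}\nAnswer:"

print(grounded_prompt(conn, "widget", "Is the widget in stock?"))
```

The resulting prompt would then be passed to the model; the model call itself is left out of this sketch.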

User interface and experience design

A well-designed user interface is crucial for the success of a generative AI application. Key considerations include:

- Intuitive Input Mechanisms: Providing clear and easy-to-use methods for users to provide prompts or inputs.

- Effective Output Visualization: Presenting generated content in a visually appealing and informative manner.

- Interactive Features: Enabling users to refine or modify the generated output.

- Feedback Mechanisms: Allowing users to provide feedback on the quality and relevance of the generated content.

AI Generator Architecture

Deep dive into the architecture of AI generators

Understanding the internal workings of an AI generator is essential for optimizing its performance and addressing potential issues. Key components include:

- Encoder: Processes input data and converts it into a suitable representation for the model.

- Decoder: Generates the desired output based on the encoded input and learned patterns.

- Attention Mechanism: Captures dependencies between different parts of the input data.

- Loss Function: Measures the difference between the generated output and the ground truth, guiding the model's learning process.

- Optimizer: Updates model parameters to minimize the loss function.
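The attention mechanism is the least intuitive item in this list, so a small sketch may help. The code below implements scaled dot-product attention, the form used in transformers, in plain Python for readability; real implementations use tensor libraries and add learned projections and multiple heads, which are omitted here. The toy query, key, and value vectors are made up for illustration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention_weights)."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Output is the weighted average of the value vectors.
        outputs.append(
            [sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))]
        )
    return outputs, weights

queries = [[1.0, 0.0]]
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
outputs, weights = attention(queries, keys, values)
print(weights[0], outputs[0])
```

Because the query points in the same direction as the first key, the first value vector receives the larger weight; this is how attention lets each position draw more information from the most relevant parts of the input.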

Optimization techniques for efficient generation

To improve the efficiency and quality of generative AI models, various optimization techniques can be applied:

- Model Compression: Reducing model size without significant performance degradation.

- Quantization: Reducing the precision of model parameters to accelerate inference.

- Knowledge Distillation: Transferring knowledge from a large model to a smaller one.

- Hardware Acceleration: Utilizing specialized hardware for faster computations.

- Hyperparameter Tuning: Adjusting training and model settings, such as the learning rate and batch size, to optimize performance.
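Quantization is easy to demonstrate on a handful of weights. The sketch below uses symmetric 8-bit quantization with a single scale factor, which is one common scheme among several; the weight values are invented, and production toolchains typically quantize per channel or per group and handle activations as well.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the integer representation."""
    return [v * scale for v in q]

weights = [0.5, -1.0, 0.25, 0.0, 0.8]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of a small, bounded rounding error.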

Generative AI in the Enterprise

Generative AI Enterprise Architecture

The integration of generative AI into the enterprise IT landscape is a complex undertaking that requires careful planning and execution.

This section will explore the challenges and opportunities, as well as the importance of governance and management in this transformative journey.

Integrating generative AI into enterprise IT landscape

Integrating generative AI into an existing enterprise IT landscape involves several key considerations:

- Data Integration: Seamlessly connecting generative AI models with enterprise data sources, ensuring data quality, privacy, and security.

- Infrastructure Integration: Integrating generative AI workloads with existing IT infrastructure, including compute, storage, and networking resources.

- Application Integration: Integrating generative AI capabilities into existing applications and workflows, enhancing user experience and productivity.

- API Integration: Developing and managing APIs to expose generative AI services to internal and external users.

Challenges and opportunities in enterprise adoption

The adoption of generative AI in enterprises presents both challenges and opportunities:

Challenges:

- Data Quality and Bias: Ensuring data quality, addressing biases, and maintaining data privacy.

- Model Governance: Establishing clear guidelines for model development, deployment, and maintenance.

- Talent Acquisition and Development: Building a skilled workforce with expertise in generative AI.

- Ethical Considerations: Navigating ethical implications, such as bias, fairness, and transparency.

Governance and management of generative AI models

Effective governance and management of generative AI models are essential for ensuring their responsible and beneficial use:

- Model Lifecycle Management: Establishing a framework for model development, deployment, monitoring, and retirement.

- Risk Assessment: Identifying potential risks associated with generative AI models and implementing mitigation strategies.

- Model Validation and Testing: Rigorously testing models for accuracy, fairness, and robustness.

- Explainability: Developing techniques to understand and explain model decisions.

- Ethical Guidelines: Defining ethical principles and standards for generative AI development and use.

AI-powered business process transformation

Generative AI has the potential to revolutionize business processes by automating tasks, improving decision-making, and creating new opportunities:

- Process Automation: Automating repetitive tasks, such as data entry, report generation, and customer service interactions.

- Decision Support: Providing insights and recommendations based on data analysis and predictive modeling.

- Product Development: Accelerating product development cycles through generative design and optimization.

- Customer Experience: Enhancing customer experiences through personalized recommendations, content generation, and virtual assistants.

By strategically integrating generative AI into business processes, organizations can achieve significant efficiency gains, cost reductions, and revenue growth.

Also read: Generative AI for Business Automation: Improving Efficiency & Reducing Cost

Generative AI Solution Architecture

The successful implementation of generative AI requires a well-defined solution architecture that incorporates best practices and addresses potential challenges.

Case studies of successful generative AI implementations

Analyzing successful generative AI implementations provides valuable insights into architectural patterns and best practices. Some notable examples include:

- Content Generation: Companies like OpenAI and Jasper.ai have successfully deployed generative AI models for creating high-quality text, code, and other content formats. These platforms demonstrate the power of large language models and their ability to automate content creation processes.

- Image and Video Generation: Companies like Midjourney and Stability AI (which releases Stable Diffusion) have achieved remarkable results in generating realistic images and videos. Their architectures often involve advanced GAN or diffusion models, trained on massive datasets.

- Drug Discovery: Pharmaceutical companies are leveraging generative AI to accelerate drug discovery processes. By generating novel molecular structures, these models help identify potential drug candidates more efficiently.

Best practices for building generative AI solutions

Building successful generative AI solutions requires a systematic approach:

- Data Quality and Quantity: Prioritize high-quality and diverse datasets to train robust models.

- Model Selection and Architecture: Choose the appropriate model architecture based on the specific use case and available resources.

- Training and Optimization: Employ efficient training techniques and hyperparameter tuning to maximize model performance.

- Evaluation and Refinement: Continuously evaluate model performance and iterate on the architecture to improve results.

- Deployment and Scaling: Consider scalability and performance requirements when deploying the model into production.

- Ethical Considerations: Address biases, privacy, and security concerns throughout the development process.

Emerging trends and future directions

The field of generative AI is rapidly evolving, with several promising trends emerging:

- Multimodal Models: Developing models capable of generating multiple forms of content (text, image, video, audio) simultaneously.

- Reinforcement Learning from Human Feedback (RLHF): Improving model performance by incorporating human feedback.

- Explainable AI: Enhancing model transparency and interpretability.

- Ethical AI: Developing frameworks for responsible and fair generative AI systems.

- Specialized Hardware: Utilizing specialized hardware accelerators to improve training and inference efficiency.

In a Nutshell

By staying informed about these trends, organizations can position themselves at the forefront of generative AI innovation.

To stay ahead of the curve, organizations should consider partnering with platforms like Index.dev that provide a comprehensive suite of tools for building, deploying and monitoring generative AI solutions at scale.

Index.dev offers:

- Model evaluation and benchmarking to ensure high performance

- Secure and scalable deployment strategies for production use cases

- Responsible AI practices to mitigate ethical risks and biases

- Continuous monitoring and optimization to maintain model accuracy

By leveraging platforms like Index.dev, enterprises can accelerate their generative AI adoption and innovation while navigating the challenges of this rapidly evolving landscape.