AI is transforming every industry, but trusting the right models is critical. The stakes are high: trust, governance, performance, ethics, and security all hang in the balance.

Closed-source models (e.g. GPT-4, Claude) lead today in raw performance and centralized safety controls.

Open-source models (e.g. Llama 2, Mistral) shine in transparency, customization, and community vetting.

Recent benchmarks show closed-source still outperforms on average, but open-source is narrowing the gap fast. Security experts warn open models risk easier attacks due to public weights, yet their transparency accelerates fixes, while closed models hide vulnerabilities but rely on vendor trust for patches.

Surveys of developers reinforce this debate: younger and early-career engineers especially value transparency. In fact, a 2025 StackOverflow survey found that “trust and learning are central to younger developers’ interactions with open-source AI”.

Major AI labs mirror this split: OpenAI keeps its flagship models behind API walled gardens for safety, while companies like Meta and Anthropic have open-sourced many models. Even policy makers are taking notice with the EU’s AI Act explicitly highlighting open-source as a public good.

In this article, we will dive deep into the open vs closed AI debate. We’ll explain what each approach means, who favors which, why trust matters, when and where each is appropriate, and how they compare on key fronts. Along the way we’ll examine performance benchmarks, security trade-offs, ethical considerations, and governance and policy implications.

By the end, you’ll have a clear, evidence-backed view on which models (and development practices) inspire confidence in which contexts.

Ready to build with trusted AI? Join Index.dev and connect with top companies seeking skilled AI developers!

Who’s Involved: Developers, Companies, and Communities

Who is shaping this debate? On one side are the open-source community and independent developers. The debate pits the open-source community (developers behind PyTorch, TensorFlow, Hugging Face, and countless GitHub projects) against AI companies that keep their latest models proprietary for control and IP protection.

Open advocates, 82% of whom have contributed to open-source tech, prize transparency, peer review, and shared innovation. Enterprises and regulators, meanwhile, lean on closed-source solutions (e.g., Azure OpenAI) to meet strict compliance in sectors like healthcare and finance. At conferences and on policy platforms, experts debate ‘open collaboration vs. closed control’ as a fundamental split in AI strategy.

Governments also weigh in: the EU’s 2023 AI Act highlights open‐source foundation models’ economic benefits while insisting on safety, and U.S. and Chinese policies similarly debate openness versus control. Yet these lines are blurring as OpenAI’s Sam Altman has noted the limits of full secrecy, and Meta’s Llama 2 release underscores industry-wide collaboration.

What Is Open-Source and Closed-Source AI?

What do we mean by “open-source AI” versus “closed-source AI”? In practice, open-source AI models are those whose code, model weights, and training data are published under permissive licenses (MIT, Apache, GPL, etc.) so that everybody can use and alter it. Consider projects such as LLaMA (Meta models) or Stable Diffusion.

They live on platforms like Hugging Face or GitHub, and a community of volunteers can inspect and improve them.

The key promise: full transparency. You can peer into architecture, training data (if published), and tweak the model.

By contrast, closed-source AI models are proprietary systems, often offered only via an API or commercial license. Companies keep the weights and code behind the scenes. You might use them through cloud services or products (e.g. ChatGPT, Bard, Azure Cognitive Services). The inner workings are not visible, and usage terms are fixed by the provider. The trade-off is control: providers say closed models let them enforce policies, fix bugs centrally, and monetize more easily.

Between these poles there are hybrid approaches:

- Tiered models

- Controlled releases

- “Open core” with proprietary add-ons

Some systems (like Llama 2) are open with limited licenses; others are open-weight but require API keys. These hybrids attempt to balance openness with oversight.

The label also extends to tools: many libraries (PyTorch, Hugging Face Transformers, etc.) are open-source, enabling AI even for closed models. When we talk “open vs closed AI,” we're really asking who can see or change the model’s inner workings.

In essence, open-source AI = transparency and community, while closed-source AI = proprietary control.

Each side trusts different mechanisms to ensure reliable, ethical AI.

Explore 20 high-impact open-source GitHub projects to contribute to.

Pros and Cons of Each Approach

Why is this trust debate so heated? Because AI models can have profound impacts (some unintended) and handling that requires confidence in how they work.

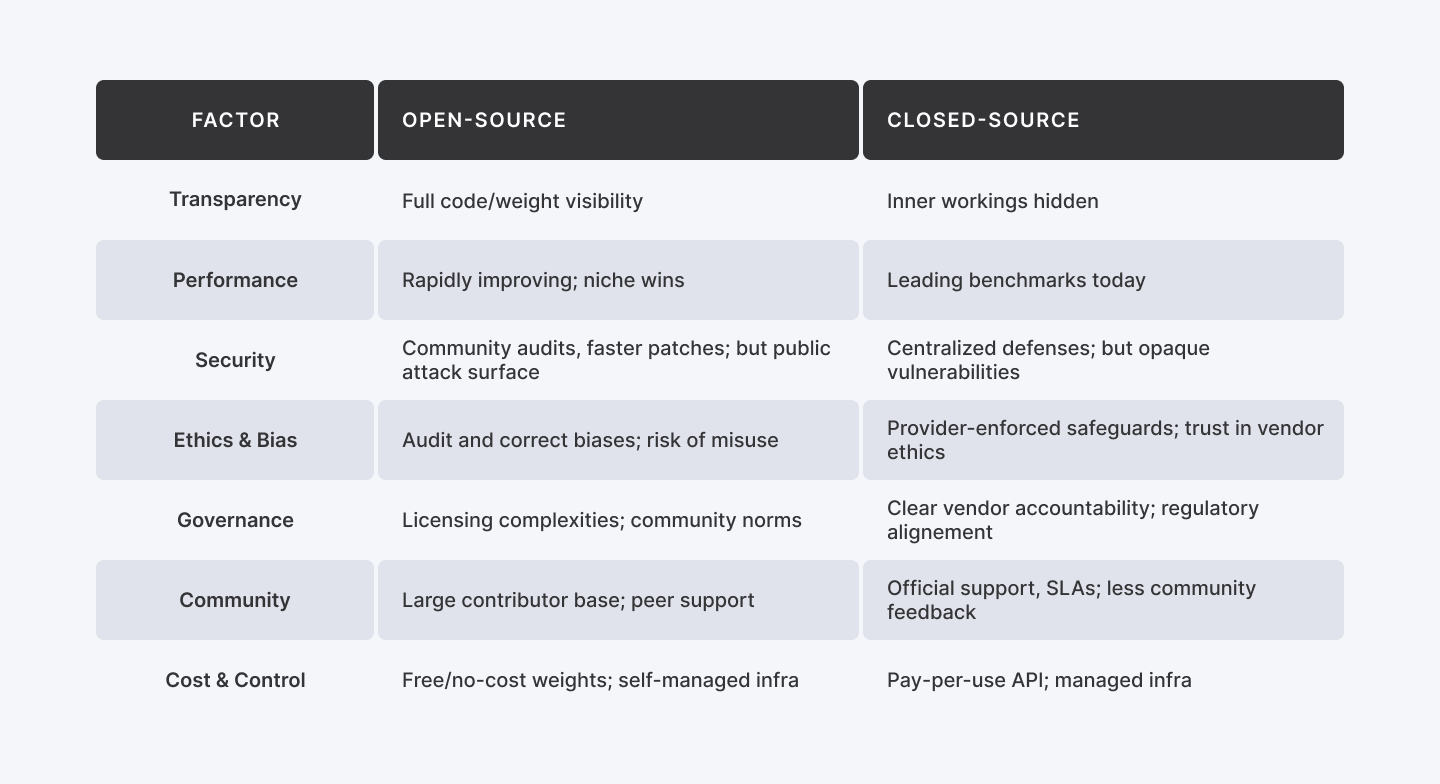

Here are key factors:

- Transparency vs. Secrecy

Open models let us audit code, inspect data sources, and test for biases, building confidence through visibility. That same openness exposes vulnerabilities (backdoors, data leaks) in plain sight. Closed models keep their internals hidden, so we must trust the provider’s safety and bias-mitigation claims.

- Security

Open code invites security experts to find and fix flaws but this means that it also gives attackers the same blueprints. Closed models hide their architecture but rely on a few certified experts and internal audits. Providers like OpenAI add layers (for example, “AI firewalls” that vet prompts) to balance transparency with protection.

- Performance

Proprietary LLMs (GPT-4, Claude) still top benchmarks, so we trust them for high-stakes tasks. But open models such as Mistral and Llama-2-70b are closing the gap fast, and in niche scenarios they can even outperform closed systems.

- Ethics and Bias

With open models, we can review training data and apply ethical filters ourselves. That visibility also makes misuse (for disinformation or hate speech) easier. Closed models enforce rules centrally, but we must have faith in the vendor’s ethical priorities. Some propose “tiered openness” or public audits to get the best of both worlds.

- Governance

Regulators face a challenge: if anyone can modify an open model, who’s accountable? Several recent papers (e.g. R Street Institute) argue regulators must adapt, since traditional product liability doesn’t fit easily. The EU AI Act acknowledges open-source benefits but requires safety guardrails, while U.S. policies focus on vendor responsibilities regardless of code status. Whether through community norms or corporate compliance, trustworthy governance is essential.

Community and Support

Open-source communities (forums, GitHub) offer rapid peer help and collective innovation as 57% of developers enjoy contributing to open projects. Closed ecosystems provide official SLAs and vendor support. Platforms like Index.dev bridge both by vetting talent, so we know skilled, trustworthy people are behind every AI system.

In short, open-source AI tends to earn trust through transparency, collaboration, and community oversight, at the cost of exposure to vulnerabilities and (until recently) lagging raw performance.

Closed-source AI earns trust through centralized control, security measures, and brand reputation, but it asks users to have faith in unseen processes. Both approaches have merits and pitfalls, and real-world solutions often mix elements of each.

Performance and Reliability: Head-to-Head

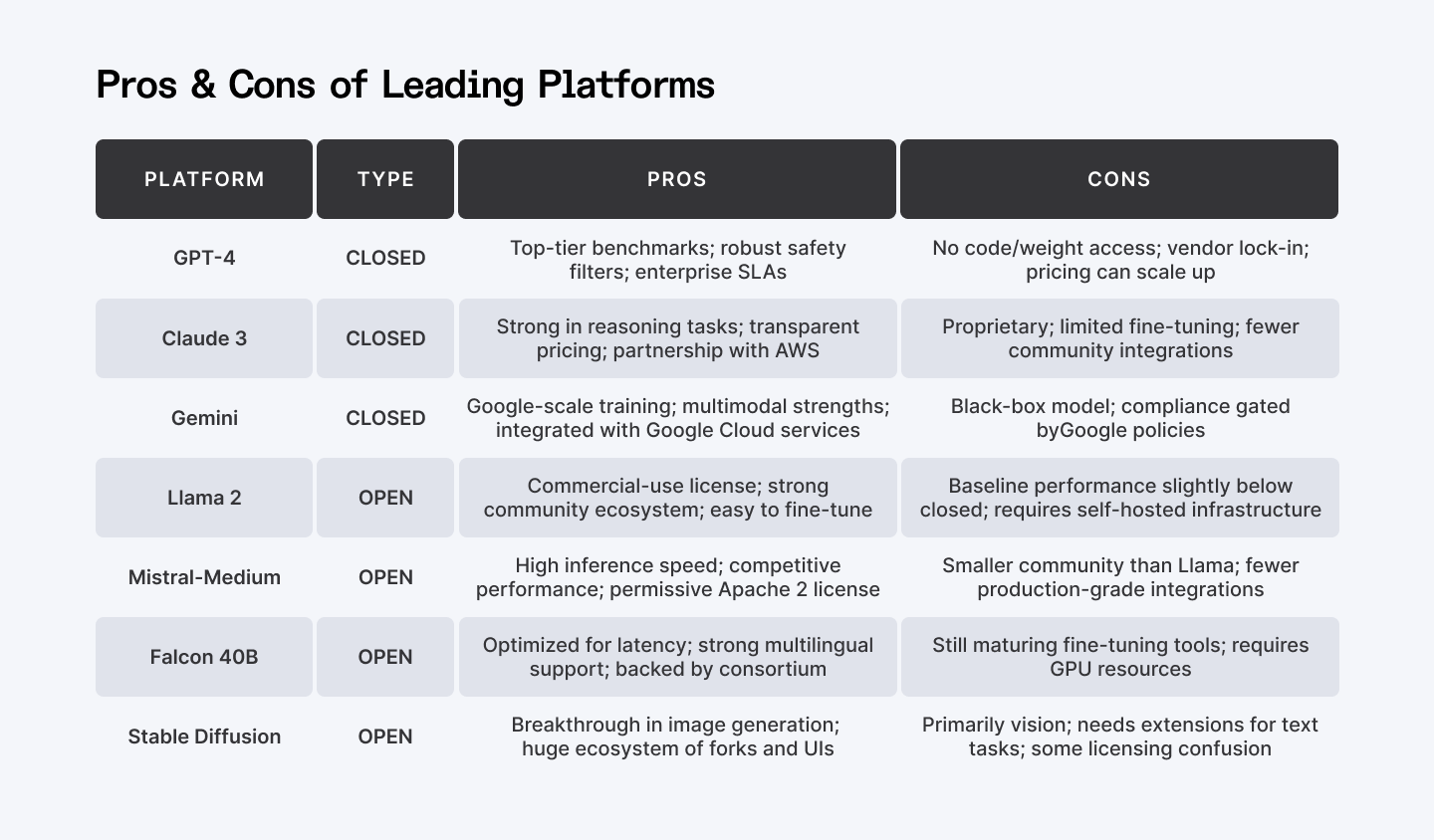

Let’s dig into performance which is a key aspect of trust for many users. If an AI chatbot reliably gives useful answers, users will trust it more. Recent benchmarks clearly show the landscape:

- Current Leaders:

Proprietary LLMs like GPT-4, Google’s Gemini, and Claude top benchmarks. In “ELO score” comparisons, closed models consistently outshine open ones, with open models clustering at the lower end.

- Open Gains:

The gap is closing. Open models such as Qwen-72B, Llama-2-70b-chat, and Mistral-Medium now rival closed systems. Big Tech is even open-sourcing more of its lineup with Google’s Gemma and Microsoft’s Phi-4 having open variants, and Meta’s Llama 2 found commercial traction in 2023.

- Beyond Chatbots:

Open models aren’t just catching up in text; rather they’ve led breakthroughs in image (Stable Diffusion) and vision (CLIP) tasks, boosting confidence in open approaches.

- Hybrid Solutions:

Many teams combine closed services (e.g., GPT-4) for core workloads with smaller open models for experimentation, achieving both stability and flexibility.

In Practice:

For mission-critical tasks (like advanced summarization or coding), a top-tier closed model may be safest today. If you need customization or a deep understanding of the model’s behavior, open models now deliver competitive performance with fine-tuning capabilities. Recent surveys even show that “small open-source models can keep up well with closed-source models” on many tasks.

Choosing the right tool means balancing immediate reliability and long-term adaptability. For learning and non-critical projects, open models shine with enterprises launching new AI features often start with closed offerings and layer in open frameworks for flexibility.

Ethics, Bias, and Governance

Ethics and Bias

Ethical AI demands responsible, fair behavior. Open models shine in transparency—anyone can audit and correct biases (e.g. Wikimedia’s “open-weight AI” policy and academic ethics frameworks)—but they’re also easier to tamper with. Closed models embed ethics through provider filters and alignment training.

Still, openness fuels safety research: the collaborative ecosystem drives new safeguards even as it spawns some malicious variants. Both must meet regulations like the EU AI Act, which mandates human oversight and “Human Autonomy.”

Governance and Policy

Trust rests on legal oversight. The EU AI Act treats all foundation models—open or closed—by risk tier, valuing open-source’s economic benefits while enforcing safety. U.S. policy (Biden’s 2023 AI Executive Order) focuses on vendor best practices over openness labels. In Europe, closed-model users must prove compliance; open-model modifiers may incur data-governance duties.

Practically, this means organizations must align with transparency standards either way. For example, if you use a closed model in Europe for a high-risk application, you have to demonstrate compliance (even if you can't see the code). If you modify an open model, you may face new legal duties (like data governance) as the R Street report warns.

Best Practices

Most experts advocate a hybrid approach: apply access controls or licensing to powerful open-source models (“open-core”) and involve community norm-setting (e.g. “responsible release” guidelines), while closed-source vendors publish ethics statements and collaborate on standards. Agile decision-making (tiered access for open systems and open audit pipelines for closed ones) ensures accountability.

Learn how to start Vibe Coding with AI (the easy way).

Security: Vulnerabilities and Defence

Security underpins trust. Open and closed AI systems both face attacks, but the methods differ:

- Open-model attacks

Public code and weights make privacy and poisoning attacks easier. Threats like model inversion, membership inference, data leakage and backdoors are real, but community-driven patches and fine-tuning mean fixes can roll out quickly once flaws are spotted.

- Closed-model attacks

Hidden internals require you to trust the provider’s security testing, yet bugs still slip through. For example, an open-source foundation model once exposed API keys and chat logs due to a flaw. To defend, many vendors deploy AI firewalls that scan inputs and filter outputs, and they enforce authenticated API calls with logging and rate limits.

- Insider and supply-chain risks

Open-source projects must guard against malicious code contributions, while closed systems must secure their data pipelines and hardware. Common defenses include formal code audits, certified hardware and trusted compute environments.

- Finding the balance

Experts recommend combining transparency and control: adopt model cards and regular security reviews, publish transparency reports, and engage third-party “red teams” to probe both open and closed models. This approach merges community scrutiny with enterprise-grade safeguards.

Developer Perspective and Platforms

Trust for developers hinges on community and tooling. Many engineers favour open source to learn and experiment on platforms like GitHub and Hugging Face. Talent sites such as Index.dev vet and curate skilled developers, ensuring trusted contributors to any project, open or closed. Confidence in who writes the AI code is nearly as vital as the code itself.

Community engagement boosts trust: firms releasing open models partner with developer forums for feedback. According to the previously mentioned StackOverflow survey, 57% of developers prefer open-source projects while only 30% favour proprietary technology. This pro-open mindset leads to more issue reporting, enhancements and knowledge sharing.

Enterprises wary of open models over governance gaps can now opt for open projects that provide premium support or certification. Meanwhile, closed-model providers are courting developers too—Microsoft, for instance, has open-sourced parts of its cognitive services.

Key takeaway:

Trust does not lie solely in open or closed labels. It grows through transparent roadmaps, certified models and vetted talent from reliable networks.

So, how should you decide which model to trust? Consider:

Practical Tips:

- Who should decide?

Your engineering and legal teams, with input from ethics officers and maybe developer community leaders.

- What to do?

Evaluate models via benchmarks (like those in recent papers), perform security tests, and check licenses.

- Where/When to use each?

Use open for research, internal tools, and community-driven products; use closed for critical production systems where guarantees are paramount.

- Why trust one over the other?

It boils down to your risk tolerance and values: openness vs control.

- How to build trust?

Vet your developers (see platforms like Index.dev), adopt best practices (regular audits, “red teams”, documentation), and stay updated on policy (e.g. EU AI Act compliance).

Conclusion: Balancing Trust in an AI World

Choosing between open and closed AI models isn’t about picking sides, it’s about understanding your needs.

Transparency, performance, security, and community all factor into trust, and the right balance often lies in combining both worlds. Open-source offers you flexibility and collaboration while closed-source provides polish and accountability.

For many organizations, a hybrid strategy makes the most sense: leverage open models for innovation and fine-tuning, and deploy closed models where reliability is critical. What matters most is how you implement, govern, and staff your AI efforts.

Platforms like Index.dev play a valuable role in this equation, connecting businesses with thoroughly vetted developers who bring both expertise and accountability to the table. Because in the end, trustworthy AI isn’t just about the code. It’s about the people behind it, and the practices around it.

Trust isn’t a toggle, it’s a system. Build it deliberately.

For Developers:

Get matched with top global teams building ethical AI projects. Remote, flexible, and rewarding, only on Index.dev.

For Clients:

Need trusted AI solutions? Hire vetted developers from Index.dev to build secure and reliable AI systems for your business.