AI has exploded into recruiting: almost every Fortune 500 company uses AI to sift through resumes, screen candidates, and even predict who will stay on the job.

By 2025, roughly 68% of employers expect to rely on AI for hiring. Companies like Unilever and IBM report huge gains: Unilever’s AI-driven hiring saves 70,000 interview-hours a year, and IBM’s AI models predict which employees are at risk of leaving with 95% accuracy, helping IBM cut $300M in turnover costs.

Yet new research from the University of Washington shows that AI resume scanners favored white-associated names 85% of the time and male-associated names 52% of the time, while disadvantaging Black and female applicants.

AI can slash your hiring time and uncover hidden talent; yet only a hybrid, human-audited approach keeps bias in check. In this article, we show how AI tools for developer hiring can boost your productivity, minimize bias when properly audited, and deliver measurable ROI.

Use AI responsibly and hire better. With Index.dev, access the top 5% of vetted developers, matched in 48 hours, with bias-minimized processes and a 30-day free trial.

How Widely Is AI Used to Hire Developers?

AI recruiting has become nearly universal among large firms. In North America and Europe, over 80% of Fortune 500 companies use some form of AI for initial screening, scheduling, or candidate matching.

Adoption is also surging in Asia-Pacific and Latin America. Surveys show 59% of Indian companies deploy AI-powered filters; many Latin American outsourcers add AI matching to their existing platforms.

Globally, a 2025 study of 4,000 HR leaders found 72% now use AI in recruiting (up from 58% in 2024) and 62% report increased efficiency.

Demand for developers remains sky-high (over 1 million unfilled jobs), and AI promises speed without sacrificing candidate quality. Hiring managers are already acting on that promise: 48% now use AI to screen resumes and applications, and nearly half use AI to match candidates to jobs by skill.

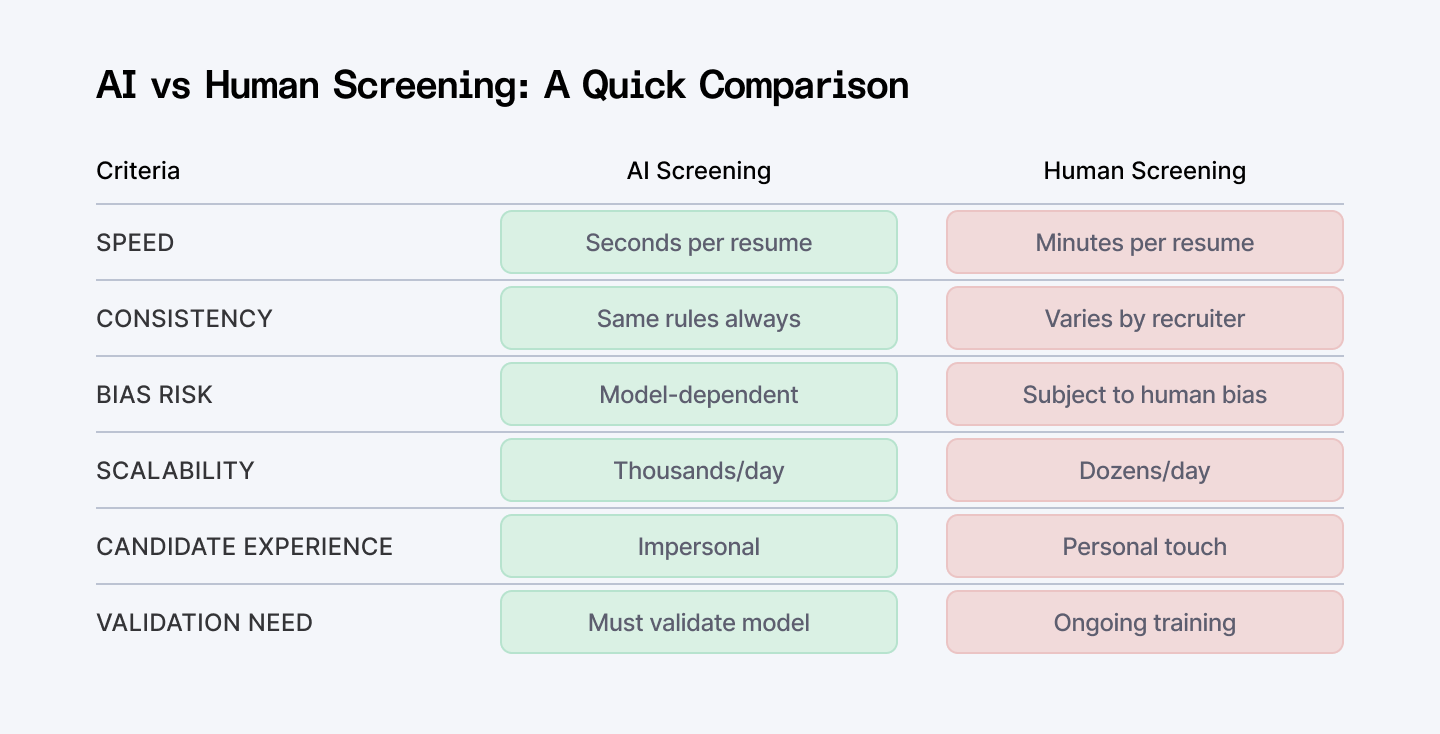

In practice, common AI tools scan resumes for keywords, rank candidates’ technical backgrounds, and schedule interviews automatically. On the candidate side, developers increasingly use AI (like GitHub Copilot or ChatGPT) to craft resumes and apply at scale. This tech-for-tech interplay makes developer hiring a high-stakes proving ground for AI, both for efficiency gains and for managing unintended bias.

What Does AI Screening and Skills Testing Look Like?

How Do AI Resume Screeners Work?

AI resume screeners use NLP to extract skills (e.g., Python, React) and rank candidates by fit.

Standard features:

- Keyword Matching:

Scans for technical skills, certifications, and relevant projects.

- Profile Enrichment:

Cross-references LinkedIn or GitHub to validate experience.

- Fit Score:

Assigns a numerical score based on job-description alignment.
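To make keyword matching and the fit score concrete, here is a minimal Python sketch. It assumes a deliberately simple definition of "fit score" (the share of required skills that appear as words in the resume); the function names `extract_skills` and `fit_score` are hypothetical, and commercial screeners use far richer NLP. The example also shows how literal matching can miss a qualified candidate: PostgreSQL experience earns no credit for "sql".

```python
import re


def extract_skills(text: str, vocabulary: set[str]) -> set[str]:
    """Return the vocabulary skills that appear as whole tokens in the text."""
    # Lowercase, split into tokens (keeping +, #, . for C++, C#, Node.js), strip edge dots
    tokens = {t.strip(".") for t in re.findall(r"[a-z0-9+#.]+", text.lower())}
    return vocabulary & tokens


def fit_score(resume: str, required_skills: set[str]) -> float:
    """Percentage of required skills found in the resume (0-100)."""
    if not required_skills:
        return 0.0
    found = extract_skills(resume, required_skills)
    return round(100 * len(found) / len(required_skills), 1)


resume = "Built React dashboards and Python APIs backed by PostgreSQL."
print(fit_score(resume, {"python", "react", "sql"}))  # 66.7
```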

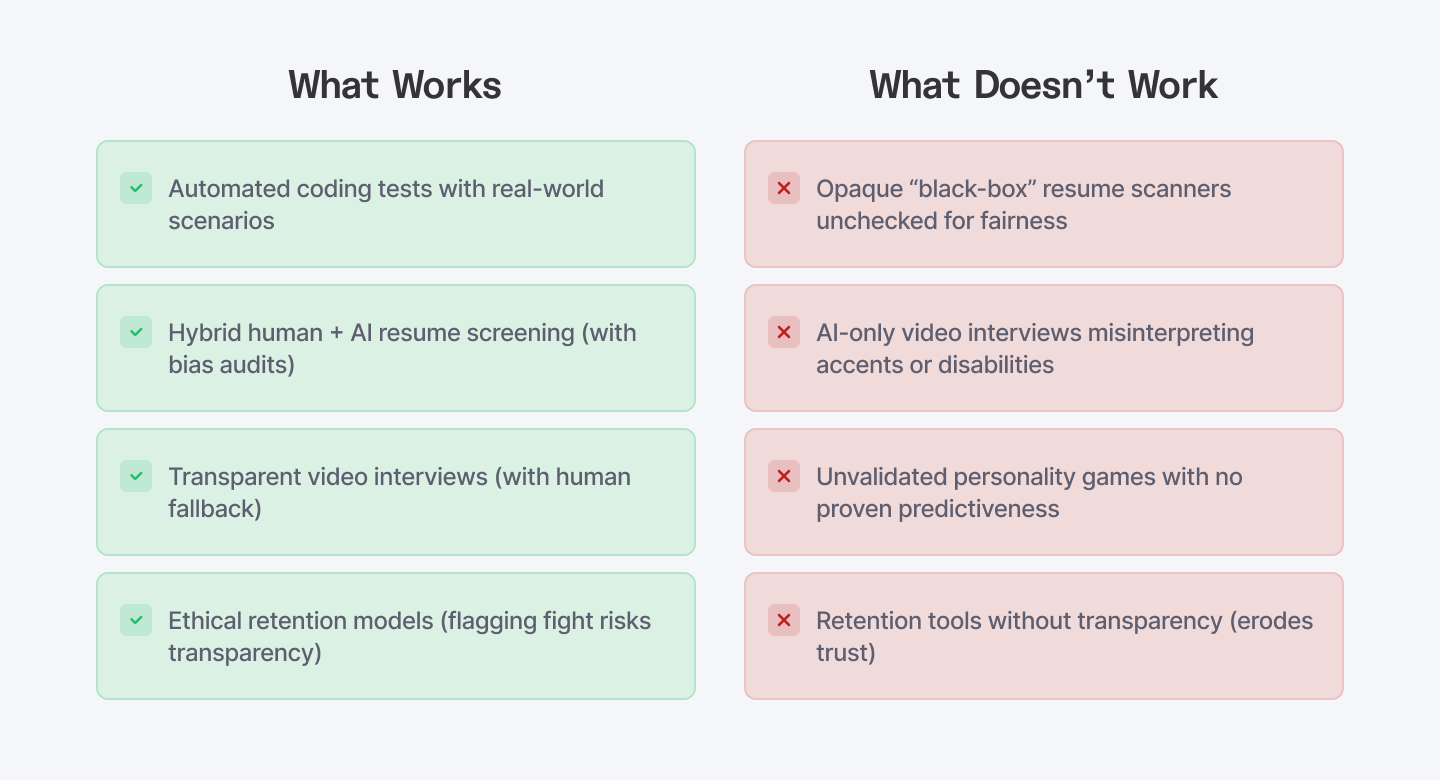

These tools can review hundreds of resumes in seconds. However, they risk overlooking qualified candidates when not carefully audited. For example, University of Washington research found AI resume scanners selected white-male associated names 85% of the time, but Black-female names only 8%, even though resumes were identical apart from names. Amazon famously dropped its AI resume screener after it learned to penalize women (because past resumes were mostly from men).
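The UW result comes from a paired design: identical resumes that differ only in the name. A team can run the same kind of check against its own screener. This sketch assumes the screener can be called as a Python function that returns a score; `name_swap_audit`, `flawed_scorer`, and the placeholder names are hypothetical. A real audit would use many resumes and many names associated with different races and genders.

```python
from typing import Callable


def name_swap_audit(
    score: Callable[[str], float],
    resume_template: str,
    names_by_group: dict[str, list[str]],
) -> dict[str, float]:
    """Score one resume under different names and return the mean score per group."""
    means = {}
    for group, names in names_by_group.items():
        scores = [score(resume_template.format(name=name)) for name in names]
        means[group] = sum(scores) / len(scores)
    return means


# Hypothetical, deliberately flawed scorer that rewards one name; stands in for a real model
def flawed_scorer(resume: str) -> float:
    return 90.0 if resume.startswith("Name A1") else 70.0


template = "{name}\nBackend engineer, 5 years of Go and PostgreSQL."
audit = name_swap_audit(
    flawed_scorer,
    template,
    {"group_a": ["Name A1", "Name A2"], "group_b": ["Name B1", "Name B2"]},
)
print(audit)  # {'group_a': 80.0, 'group_b': 70.0}
```

Because everything except the name is held constant, any gap between group means can only come from how the model treats the name.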

How Do AI Coding Assessments Work?

Skill-testing platforms like HackerRank and Codility present candidates with real-world coding tasks (API design, debugging, or algorithm challenges).

Key features include:

- Realistic Environments:

Browser-based IDEs with auto-grading.

- AI-Enhanced Feedback:

Some platforms flag inefficient code or common errors.

- Proctoring:

AI flags suspicious activity to deter plagiarism.
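At its simplest, auto-grading runs a submission against hidden test cases and reports the pass rate. This minimal sketch assumes the submission is a Python function; `auto_grade` and the sample task are hypothetical. Real platforms execute untrusted code in an isolated sandbox with time and memory limits, which this sketch does not do.

```python
from typing import Any, Callable


def auto_grade(solution: Callable[..., Any], test_cases: list[tuple[tuple, Any]]) -> dict:
    """Run a submission against (args, expected) cases; return pass rate and failures."""
    passed, failures = 0, []
    for args, expected in test_cases:
        try:
            actual = solution(*args)
        except Exception as exc:  # a crash counts as a failed case
            failures.append((args, f"raised {type(exc).__name__}"))
            continue
        if actual == expected:
            passed += 1
        else:
            failures.append((args, actual))
    return {"score": passed / len(test_cases), "failures": failures}


# Hypothetical candidate submission for "return the second-largest distinct value"
def candidate_second_largest(nums: list[int]) -> int:
    return sorted(nums)[-2]  # bug: does not skip duplicates


tests = [(([3, 1, 2],), 2), (([5, 5, 4],), 4), (([10, 20],), 10)]
report = auto_grade(candidate_second_largest, tests)
print(report["score"], report["failures"])
```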

These tests can gauge actual coding skill quickly; for example, HackerEarth advertises “real-world scenario” simulations for API design and database tasks.
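Proctoring tools may combine several signals; one simple ingredient of a plagiarism check is comparing two submissions after normalizing away cosmetic differences. This sketch is an assumption about how such a check could work, not any vendor's method: it tokenizes Python code with the standard `tokenize` module, replaces identifiers with a placeholder so renamed variables still match, and scores similarity with `difflib.SequenceMatcher`. The names `normalize` and `similarity` are hypothetical.

```python
import difflib
import io
import keyword
import tokenize

# Token types that carry no logic and are dropped before comparison
SKIP = {tokenize.COMMENT, tokenize.NL, tokenize.NEWLINE,
        tokenize.INDENT, tokenize.DEDENT, tokenize.ENDMARKER}


def normalize(code: str) -> list[str]:
    """Tokenize Python source and replace non-keyword names with 'ID'."""
    tokens = []
    for tok in tokenize.generate_tokens(io.StringIO(code).readline):
        if tok.type in SKIP:
            continue
        if tok.type == tokenize.NAME and not keyword.iskeyword(tok.string):
            tokens.append("ID")
        else:
            tokens.append(tok.string)
    return tokens


def similarity(code_a: str, code_b: str) -> float:
    """Return a 0-1 similarity ratio between two normalized submissions."""
    return difflib.SequenceMatcher(None, normalize(code_a), normalize(code_b)).ratio()


submission_1 = "def total(xs):\n    s = 0\n    for x in xs:\n        s += x\n    return s\n"
submission_2 = "def add_all(values):\n    acc = 0\n    for v in values:\n        acc += v\n    return acc\n"
print(similarity(submission_1, submission_2))  # 1.0 (same logic, renamed variables)
```

A high ratio only means a person should look at the pair; short or textbook solutions can legitimately look alike.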

Talent networks (like Index.dev) combine AI screening with expert vetting. For instance, Index.dev matches developers to roles using automated filters, then has its in-house team confirm technical nuances and cultural fit. This hybrid model is one of the most reliable methods for hiring developers at scale.

What About Video and Virtual Interviews?

AI video tools let candidates record answers to preset technical or behavioral questions. Algorithms analyze:

- Audio Cues:

Tone, pace, and fluency.

- Visual Cues:

Facial expressions, micro-expressions, eye contact.

- Language Cues:

Keyword usage and syntax complexity.

Companies like HireVue claim AI insights uncover potential beyond resumes. Still, accents, dialects, or disabilities can confuse AI models.

One high-profile complaint alleged that an AI video-interview tool unfairly scored a deaf employee because it misinterpreted her communication style; the ACLU filed the complaint on her behalf. For developer roles, which are often global and multicultural, relying solely on AI for interview evaluation can be risky.

Studies of AI interview scoring are still sparse, but related research shows systemic issues. One ResearchGate review points out that combining multiple modalities (video, audio, text) increases complexity and potential for “fairness problems”.

HR specialists now advise:

- Combine AI screening with structured processes.

- Continuously audit AI output and have humans review borderline decisions.
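The advice to have humans review borderline decisions can be encoded as score bands. In this minimal sketch the thresholds (40 and 75) and the name `route_candidate` are hypothetical; in practice they would be set from validation data, and a sample of automatic rejections would also be checked by people.

```python
def route_candidate(score: float, reject_below: float = 40.0, advance_at: float = 75.0) -> str:
    """Map an AI fit score (0-100) to a next step; the middle band goes to a human."""
    if score >= advance_at:
        return "advance"
    if score < reject_below:
        return "reject"
    return "human_review"


scores = {"cand_1": 82.0, "cand_2": 55.5, "cand_3": 31.0}
routes = {cid: route_candidate(s) for cid, s in scores.items()}
print(routes)  # {'cand_1': 'advance', 'cand_2': 'human_review', 'cand_3': 'reject'}
```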


Can AI Worsen Bias in Tech Recruiting?

Why Do AI Systems Become Biased?

Across all AI hiring tools, fairness is the prime concern. Research confirms that, left unchecked, AI often reproduces human biases: models learn from historical data that reflects existing inequalities, so if past hiring favored certain demographics, the AI replicates those patterns.

Key research findings:

- Intersectional Bias:

The UW study showed that Black-male names were almost never selected, while white women slightly outperformed Black women. Removing names or pronouns alone doesn’t fix the problem, because models can infer gender or race from schools, activities, or writing style.

- Multimodal Risk:

Video analysis can misinterpret non-standard speech or facial patterns, disproportionately impacting non-native speakers or people with disabilities.

How Does Opaque “Black-Box” AI Harm Fairness?

- Lack of Transparency:

Recruiters often don’t know which features drive scoring.

- Automated Disqualifications:

Candidates may be rejected without human review.

- Regulatory Scrutiny:

The UK’s ICO and the US EEOC have both called for transparency and fairness checks when AI is used in high-risk decisions like hiring.

What Do Peer-Reviewed Studies Say?

Academic reviews emphasize that simple “de-biasing” fixes often fall short. For instance, removing names or pronouns from resumes might prevent one kind of bias, but AI can still infer identity from school names, project topics, or even hobbies.

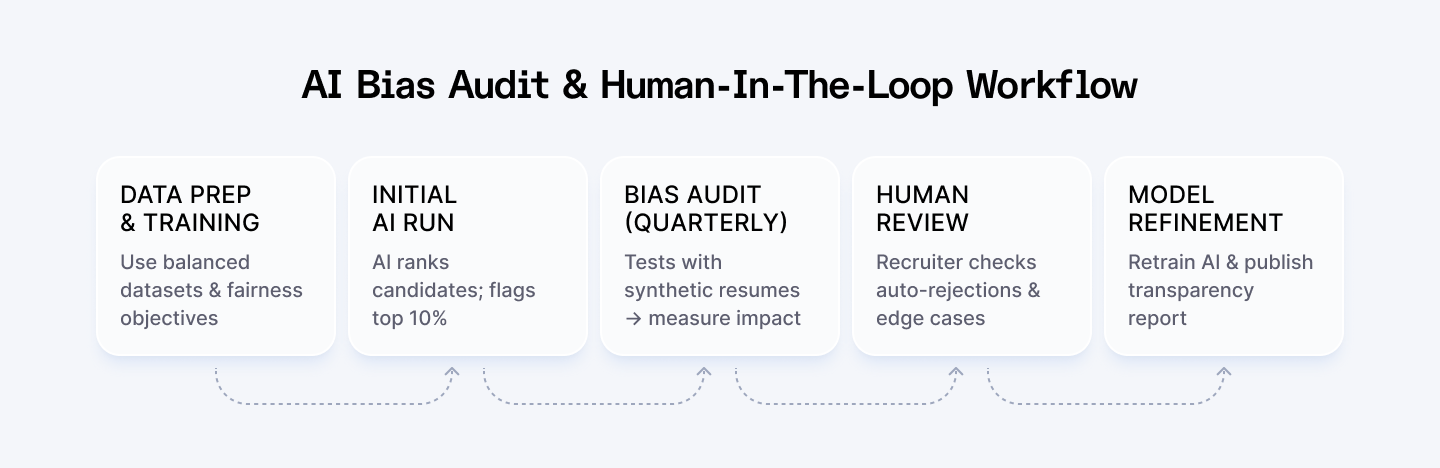

AI can mitigate bias if it is designed with fairness goals: adversarial training, re-sampling to balance the training data, and human-in-the-loop checks that let recruiters adjust the AI’s filters, review samples of auto-rejections, and continuously improve the model with feedback. Otherwise, it exacerbates inequities.

- Brookings Report (2024):

Most commercial AI hiring products reflect historical inequities unless continuously audited.
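Re-sampling, one of the fairness techniques mentioned above, can be as simple as randomly oversampling under-represented groups in the training data until every group has as many examples as the largest one. This sketch assumes training records are dictionaries with a group field; `oversample_to_balance` and the field names are hypothetical. Balancing counts does not remove proxy features such as school names, so it complements audits rather than replacing them.

```python
import random
from collections import defaultdict


def oversample_to_balance(records: list[dict], group_key: str, seed: int = 0) -> list[dict]:
    """Randomly duplicate records from smaller groups until all groups match the largest."""
    if not records:
        return []
    rng = random.Random(seed)  # seeded for reproducible sampling
    by_group: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        by_group[record[group_key]].append(record)
    target = max(len(rows) for rows in by_group.values())
    balanced = []
    for rows in by_group.values():
        balanced.extend(rows)
        balanced.extend(rng.choices(rows, k=target - len(rows)))
    rng.shuffle(balanced)
    return balanced


# Hypothetical historical data: 6 examples from group "a", 2 from group "b"
history = [{"group": "a"}] * 6 + [{"group": "b"}] * 2
balanced = oversample_to_balance(history, "group")
print(len(balanced))  # 12
```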

Which AI Tools Predict Developer Success?

What About AI Skill Matching?

Tools like LinkedIn Recruiter use LLMs to scan job descriptions and candidate profiles, surfacing “hidden” matches to you. These systems can recover strong candidates that manual keyword searches miss. Recruiting teams report a 15% uplift in qualified pipeline when they use AI matching engines.
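One common design for matching engines is to represent job descriptions and profiles as vectors and rank candidates by similarity. LLM-based tools use learned embeddings, which can connect related terms that a keyword search would miss; this sketch swaps in simple word-count vectors and cosine similarity so it runs with the standard library, so it only illustrates the ranking mechanics, not the "hidden match" ability. `rank_candidates` and the sample profiles are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words vector: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9+#]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def rank_candidates(job: str, profiles: dict[str, str]) -> list[tuple[str, float]]:
    """Rank candidate profiles by similarity to the job description, best first."""
    job_vec = vectorize(job)
    scored = [(cid, round(cosine(job_vec, vectorize(text)), 3)) for cid, text in profiles.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


job = "Senior Go engineer: Kubernetes, gRPC, PostgreSQL"
profiles = {
    "dev_a": "Go services on Kubernetes with gRPC",
    "dev_b": "React and TypeScript front-end developer",
}
print(rank_candidates(job, profiles))  # [('dev_a', 0.5), ('dev_b', 0.0)]
```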

Can AI Predict Retention and Culture Fit?

Retention models analyze HR data (code commit frequency, survey scores, performance reviews) to flag who might quit.

- IBM Case Study (2024):

Its internal AI model achieved 95% accuracy in predicting flight risk; managers intervened early, reducing turnover costs by $300 million.

- Unilever Example:

Automated hiring cut screening time and improved “quality of hire,” leading to a 20% increase in first-year retention.
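Under the hood, a flight-risk model is typically a classifier that turns features like those above into a probability. The sketch below is a hand-written logistic model; the feature names, weights, and bias are hypothetical placeholders (a real model would learn them from historical HR data and be validated before use), and it is not IBM’s or Unilever’s method.

```python
import math

# Hypothetical weights for illustration only; a real model would learn these from data
WEIGHTS = {
    "commit_drop_pct": 0.03,        # % drop in commits vs. the employee's own baseline
    "engagement_score": -0.8,       # latest engagement survey score, 1-5 scale
    "months_since_promotion": 0.05,
}
BIAS = -1.0


def flight_risk(features: dict[str, float]) -> float:
    """Logistic (sigmoid) score in (0, 1); higher means more likely to leave."""
    z = BIAS + sum(weight * features[name] for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


at_risk = {"commit_drop_pct": 50, "engagement_score": 2.0, "months_since_promotion": 30}
settled = {"commit_drop_pct": 0, "engagement_score": 4.5, "months_since_promotion": 6}
print(round(flight_risk(at_risk), 2), round(flight_risk(settled), 2))
```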

Developer-specific signals

For engineering teams, AI can monitor metrics like:

- Code Commit Patterns: Sudden drop-offs may signal burnout.

- Collaboration Activity: Reduced participation in code reviews or team chats.

- New Job Search Indicators: Online behavior (e.g., new portfolio updates) can feed into the model.
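A "sudden drop-off" in commits can be defined by comparing recent activity with the employee’s own baseline. In this minimal sketch the window sizes (4 recent weeks vs. the 12 before them), the 50% threshold, and the name `commit_drop_off` are hypothetical. A drop can have ordinary causes such as vacation, design work, or mentoring, so a flag like this should prompt a conversation, not an automated decision.

```python
from statistics import mean


def commit_drop_off(weekly_commits: list[int], recent_weeks: int = 4,
                    baseline_weeks: int = 12, threshold: float = 0.5) -> bool:
    """Flag when recent average commits fall more than `threshold` below the prior baseline."""
    if len(weekly_commits) < recent_weeks + baseline_weeks:
        return False  # not enough history to judge
    baseline = mean(weekly_commits[-(recent_weeks + baseline_weeks):-recent_weeks])
    recent = mean(weekly_commits[-recent_weeks:])
    return baseline > 0 and recent < (1 - threshold) * baseline


history = [10] * 12 + [3, 2, 4, 3]  # hypothetical weekly commit counts
print(commit_drop_off(history))  # True
```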

Ethical concerns arise

Employees should know which signals (e.g., low commit counts) trigger alerts. Otherwise, trust erodes, and privacy issues spike. Developers often worry about “being watched” every time they push code.

How Do You Mitigate Bias and Ensure Fairness?

What Are Common Bias-Mitigation Techniques?

- Human-In-The-Loop Design:

Recruiters review AI decisions at key checkpoints, for example by checking the first 5% of auto-rejections.

- Adversarial Debiasing:

Training AI with counterfactual examples (e.g., same resume with swapped names) to penalize biased associations.

- Fairness Audits:

Regularly test AI models on synthetic datasets to measure disparate impact across gender, race, and intersections.

- Transparency Reports:

Inform candidates that AI will evaluate certain aspects (e.g., “Your coding test will be scored by an automated system”).
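The counterfactual idea in the debiasing bullet can be expressed as an extra loss term: score each training resume and a copy with demographic cues swapped, and penalize any difference. In the research literature, "adversarial debiasing" usually names a different method, where the model is trained against an adversary that tries to predict the protected attribute; what the bullet describes is closer to counterfactual consistency regularization, which is what this sketch shows. It is plain Python for readability (a real model would compute the term inside a differentiable training framework), and `swap_names`, `NAME_SWAPS`, `toy_scorer`, and the weight `lam` are hypothetical.

```python
from typing import Callable

# Hypothetical placeholder names; a real swap list would cover names, pronouns, and other cues
NAME_SWAPS = {"Name A": "Name B", "Name B": "Name A"}


def swap_names(resume: str) -> str:
    """Return a counterfactual copy with the first-line name swapped."""
    name, _, rest = resume.partition("\n")
    return NAME_SWAPS.get(name, name) + "\n" + rest


def counterfactual_penalty(score: Callable[[str], float], resumes: list[str]) -> float:
    """Mean absolute score change when only the name is swapped (0 means name-blind)."""
    gaps = [abs(score(r) - score(swap_names(r))) for r in resumes]
    return sum(gaps) / len(gaps)


def total_loss(task_loss: float, penalty: float, lam: float = 0.1) -> float:
    """Task loss plus a weighted fairness penalty; lam trades accuracy against consistency."""
    return task_loss + lam * penalty


def toy_scorer(resume: str) -> float:
    # Deliberately biased stand-in: adds 5 points for one name
    return 85.0 if resume.startswith("Name A") else 80.0


resumes = ["Name A\nGo, Kubernetes", "Name B\nPython, Django"]
penalty = counterfactual_penalty(toy_scorer, resumes)
print(penalty)  # 5.0
```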

What Do Case Studies Show?

- UK ICO Audit (2023):

Vetted five top AI hiring vendors and found that only two had robust fairness checks. Vendors were instructed to release transparency reports showing how they handle data and mitigate bias.

- Intuit/HireVue Complaint (2024):

A deaf employee alleged the AI video tool unfairly downgraded her; Intuit paused AI scoring for accommodations, adding human fallback options.

Why Does Combining AI and Human Judgment Work?

Research consistently shows hybrid approaches outperform AI-only or human-only methods:

- Consistency + Context:

AI sifts quickly; humans interpret nuance.

- Rapid Iteration:

Human feedback continuously refines AI models, reducing unfair outcomes over time.

- Legal Safety:

Human oversight helps ensure compliance with discrimination laws (Title VII, ADA, UK Equality Act).

Some HR leaders also invest in fairness training for their teams, so recruiters learn how to spot AI quirks. As one AI review notes, addressing biases requires both technical and managerial solutions. The good news: when done right, AI can even reduce some biases.

A recent survey-driven study found that many users perceived generative AI screening as more objective and data-driven, which in turn raised efficiency and “candidate quality”. In short, AI has the potential to help diversity, but only if organizations actively manage it.

What Developer-Focused AI Hiring Tools Are Available?

AI recruiting isn’t just resume scanners and video interviews—developer hiring has its own ecosystem of specialized platforms. Here’s a concise look at the major categories and players:

Automated Coding Assessments

HackerRank, Codility, and TestGorilla all offer browser-based environments where candidates solve real-world coding challenges. These platforms auto-score submissions, flag inefficient code, and often include AI-powered feedback loops. For example, iMocha’s “AI-LogicBox” combines coding tasks with analytical puzzles to judge both syntax and problem-solving style.

Soft-Skill and Personality Screens

Firms like Pymetrics use AI-driven, game-based assessments to measure traits (such as problem-solving style or teamwork aptitude). While not developer-specific, many recruiters add these to technical tests to balance out pure coding metrics. The idea is simple: if coding exams measure “can they code,” personality games aim to gauge “will they fit” without the human bias that resumes and interviews can introduce.

AI Skill Matching Engines

Newer “LLM-powered” tools scan job descriptions and candidates’ GitHub or LinkedIn profiles, then produce ranked matches. Recruiters report discovering high-quality developers they would have missed with manual keyword searches. LinkedIn Recruiter, for instance, uses AI to suggest candidates across millions of profiles, though its exact matching algorithms are not public.

Talent Networks (e.g., Index.dev)

Index.dev combines automated screening with human curation. AI does the first cut, filtering by language, framework experience, and relevant open-source contributions, then Index.dev’s talent experts vet the finalists. This hybrid model ensures efficiency (AI narrows a pool of 5,000 to 50 in minutes) and quality (experts confirm technical depth and cultural fit). For HR leaders, it exemplifies “assisted AI hiring” rather than “self-service.”

While tool choices vary by region, adoption tends to cluster:

- North America & Europe: HackerRank, TestGorilla, HireVue, Pymetrics.

- Asia-Pacific: Domestic platforms plus HackerRank; some government hiring bodies trial AI.

- India: Companies like Infosys and TCS built in-house AI filters; startups use niche tools like CodeTantra.

- Latin America: Outsourcers leverage global tools as well as local networks like Workana with added AI matching.

What Are Best Practices for HR Leaders?

How Should You Integrate AI Without Losing Control?

Validate Every AI Tool as a “Test”

- Before full rollout, run a pilot: correlate AI scores (resumes, coding tests) with actual job performance over 3-6 months.

- If correlation is below 0.4, rework or replace the tool—evidence shows that unvalidated AI can misdirect hiring decisions.

Audit for Bias Quarterly

- Use synthetic test sets (e.g., identical resumes with different demographic cues) to measure disparate impact.

- Document results; if any group’s selection rate is below 80% of the rate for the group with the highest selection rate, investigate and retrain.
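The 80% threshold matches the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures: compute each group’s selection rate, divide it by the highest group’s rate, and investigate any ratio below 0.8. A minimal sketch; `adverse_impact` and the counts are hypothetical, and the rule is a screening heuristic rather than a legal safe harbor.

```python
def adverse_impact(selected: dict[str, int], applicants: dict[str, int],
                   threshold: float = 0.8) -> dict[str, float]:
    """Return groups whose selection rate is below `threshold` times the highest group's rate."""
    rates = {group: selected[group] / applicants[group] for group in applicants}
    top_rate = max(rates.values())
    if top_rate == 0:
        return {}  # nobody selected; the ratio is undefined
    return {
        group: round(rate / top_rate, 3)
        for group, rate in rates.items()
        if rate / top_rate < threshold
    }


applicants = {"group_a": 200, "group_b": 150, "group_c": 100}
selected = {"group_a": 60, "group_b": 30, "group_c": 27}
print(adverse_impact(selected, applicants))  # {'group_b': 0.667}
```

Here group_b’s rate (20%) is two-thirds of group_a’s (30%), so it is flagged, while group_c (27%, a ratio of 0.9) passes.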

Offer Candidate Transparency & Alternatives

- Inform applicants: “An AI tool will score your coding test, but a human engineer will review the top 20%.”

- Provide an option for a live coding session instead of AI-only assessments, especially for neurodivergent candidates and non-native speakers.

Monitor ROI & Experience

- Track KPIs: time-to-hire, first-year retention, candidate NPS (net promoter score).

- According to HireVue’s internal survey, organizations saved 55% of screening time and saw a 30% increase in positive candidate feedback once they added a human review layer.
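Candidate NPS uses the standard Net Promoter Score formula: on a 0-10 "how likely are you to recommend" scale, ratings of 9-10 are promoters, 0-6 are detractors, and NPS is the percentage of promoters minus the percentage of detractors. A minimal sketch with hypothetical survey responses; the function name `nps` is illustrative.

```python
def nps(ratings: list[int]) -> float:
    """Net Promoter Score in [-100, 100] from 0-10 survey ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical post-interview survey responses
print(nps([10, 9, 8, 7, 6, 3, 9, 10]))  # 25.0
```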

Integrate, Don’t Isolate

- AI tools should augment, not replace, human hiring teams.

- Use AI for the tasks it does best (processing large data, consistency, pattern detection) while having humans check context and values.

Keep Fairness In Front

- Design AI models with fairness goals baked in by using balanced training data (if possible), explicitly measuring disparate impact during development, and involving diverse teams in tool selection.

- Sharing how you use AI can build trust (for example, “we use automated coding tests so every candidate is assessed by the same standards, not at a recruiter’s whim”).

Which Metrics Matter Most?

- Time-to-Fill & Quality-of-Hire: Are you matching job requirements with fewer team-culture issues?

- Diversity Ratios: Track gender, race/ethnicity, and intersectional representation at each pipeline stage.

- Candidate Satisfaction: Run post-interview surveys; if candidates frequently complain about “robotic” assessments, adjust the process.

- Turnover & Retention: Are AI-matched hires staying at least 12 months at the same rate as traditionally hired developers?
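Tracking diversity ratios at each pipeline stage amounts to computing each group’s share of candidates at every stage, so you can see where representation drops. A minimal sketch; the stage names, group labels, counts, and the name `stage_shares` are hypothetical.

```python
def stage_shares(funnel: dict[str, dict[str, int]]) -> dict[str, dict[str, float]]:
    """Percent share of each group at each pipeline stage (stages in funnel order)."""
    shares = {}
    for stage, counts in funnel.items():
        total = sum(counts.values())
        shares[stage] = {group: round(100 * n / total, 1) for group, n in counts.items()} if total else {}
    return shares


funnel = {
    "applied": {"women": 300, "men": 700},
    "screened": {"women": 60, "men": 190},
    "offer": {"women": 6, "men": 24},
}
for stage, share in stage_shares(funnel).items():
    print(stage, share)
```

In this made-up funnel, women’s share falls from 30% of applicants to 24% after screening and 20% at offer, which would point an audit at the screening stage first.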

By tracking these metrics continuously, HR leaders can show executives that their AI-assisted hiring process is both effective and data-driven.


Conclusion

As we’ve seen, AI can transform your developer hiring by trimming hours of manual screening, flagging promising candidates, and even predicting which hires are likely to stay. But it’s not a magic bullet. Left unchecked, AI can reinforce biases, misinterpret diverse candidates, and give recruiters a false sense of accuracy.

The truly successful approach balances the speed of automation with the insight of human judgment. Use AI to handle high-volume tasks (resume parsing, initial code challenges, early video screens), then bring in experienced recruiters or engineers to validate those results, especially for critical roles. Build fairness checks into every step: run quarterly bias audits, offer alternative evaluation paths for candidates with accents or non-standard speech and for neurodivergent applicants, and be transparent about how data is used.

By blending AI’s efficiency with human expertise, organizations can not only fill developer roles faster, but also create a more inclusive, data-driven hiring process. Remember: AI is a powerful co-pilot, but people remain your pilots. Armed with the right tools, processes, and ongoing vigilance, HR leaders have every reason to use AI with confidence to hire and retain the best engineering talent, without compromising fairness or quality.

For Clients:

Hire elite developers in 48 hours, risk-free. Access the top 5% of vetted talent with Index.dev’s AI-matching and get a 30-day free trial.

For Developers:

Want to get hired by global companies using smarter hiring tools? Join Index.dev and get matched with the world's best tech teams. Fast, fair, and remote.