AI agents (often called agentic AI or autonomous agents) represent a paradigm shift from conventional software. Over the past year, software has begun shifting from rigid rule sets to adaptive, goal-driven AI agents. Built on large AI models with planning modules, these agents perceive their environment, learn from feedback, and act autonomously without requiring manual code changes. Traditional applications, by contrast, must be updated and redeployed whenever requirements evolve.

This article explores how these approaches differ in architecture, execution models, adaptability, learning capabilities, and user interaction.

We draw on 2024-2025 research and industry reports to explain who uses each, what they can do, when (and why) agents are emerging now, where they fit into workflows, and how they operate.

Find your AI-ready developers fast from Index.dev's top 5% talent pool, with 48-hour matching, a no-risk trial, and built-in compliance.

Why Agents Matter Now

- Who & What:

Traditional software (ERP software, web apps, microservices) executes predetermined logic. AI agents layer on top of modern AI (e.g. large language models) to handle complex tasks.

- When & Why it’s Critical:

Spurred by breakthroughs in machine learning, agents emerged in 2024-25 as enterprises sought more adaptive, autonomous tools.

- Where They Fit:

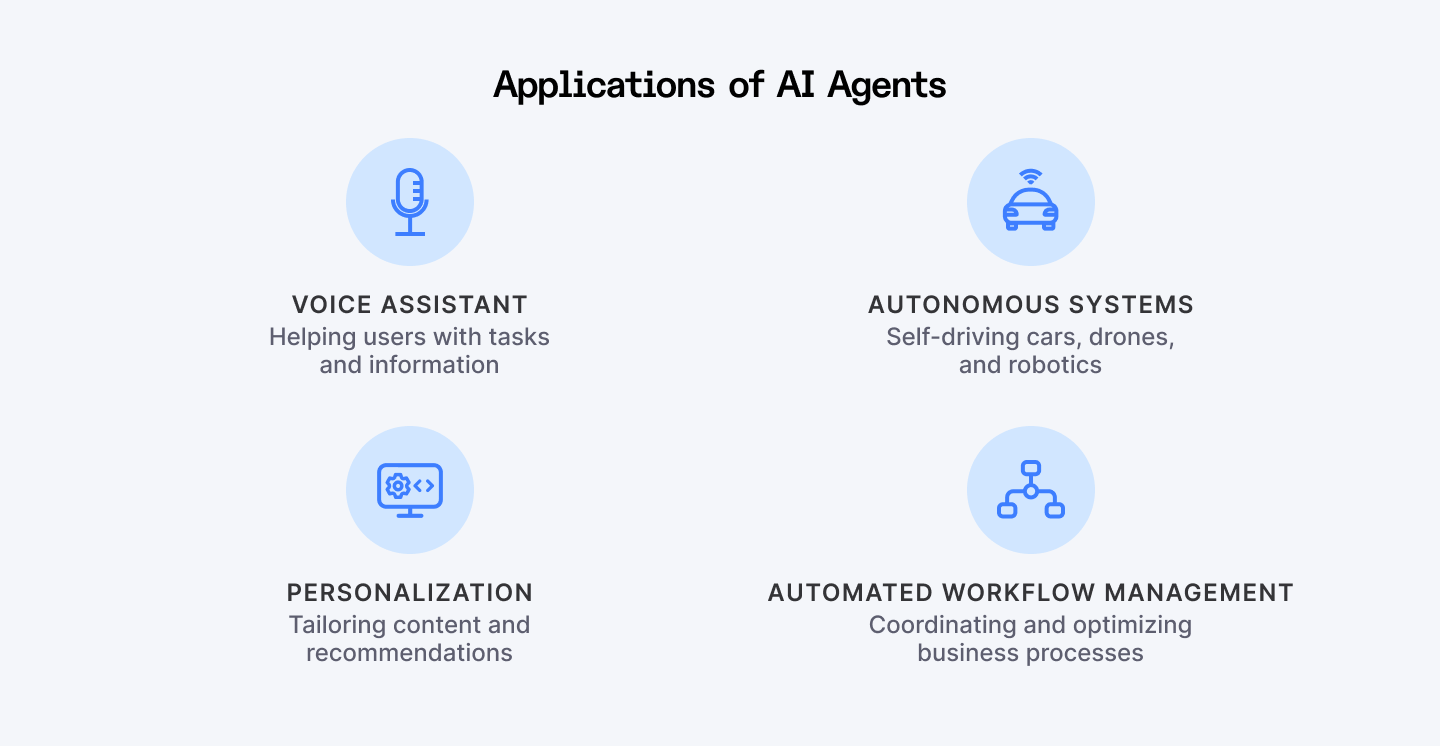

HR, customer service, and IT workflows: essentially any process with enough complexity benefits.

- How They Operate:

Agents integrate modules like memory, planning, and “tools” (APIs) around an AI model. They run in closed loops: sensing, reasoning, and acting, unlike the linear request-response flow of legacy apps.

Together, these factors give AI agents new capabilities like higher autonomy, on-the-fly learning, and natural interaction that static applications lack.

What Are AI Agents vs Traditional Applications?

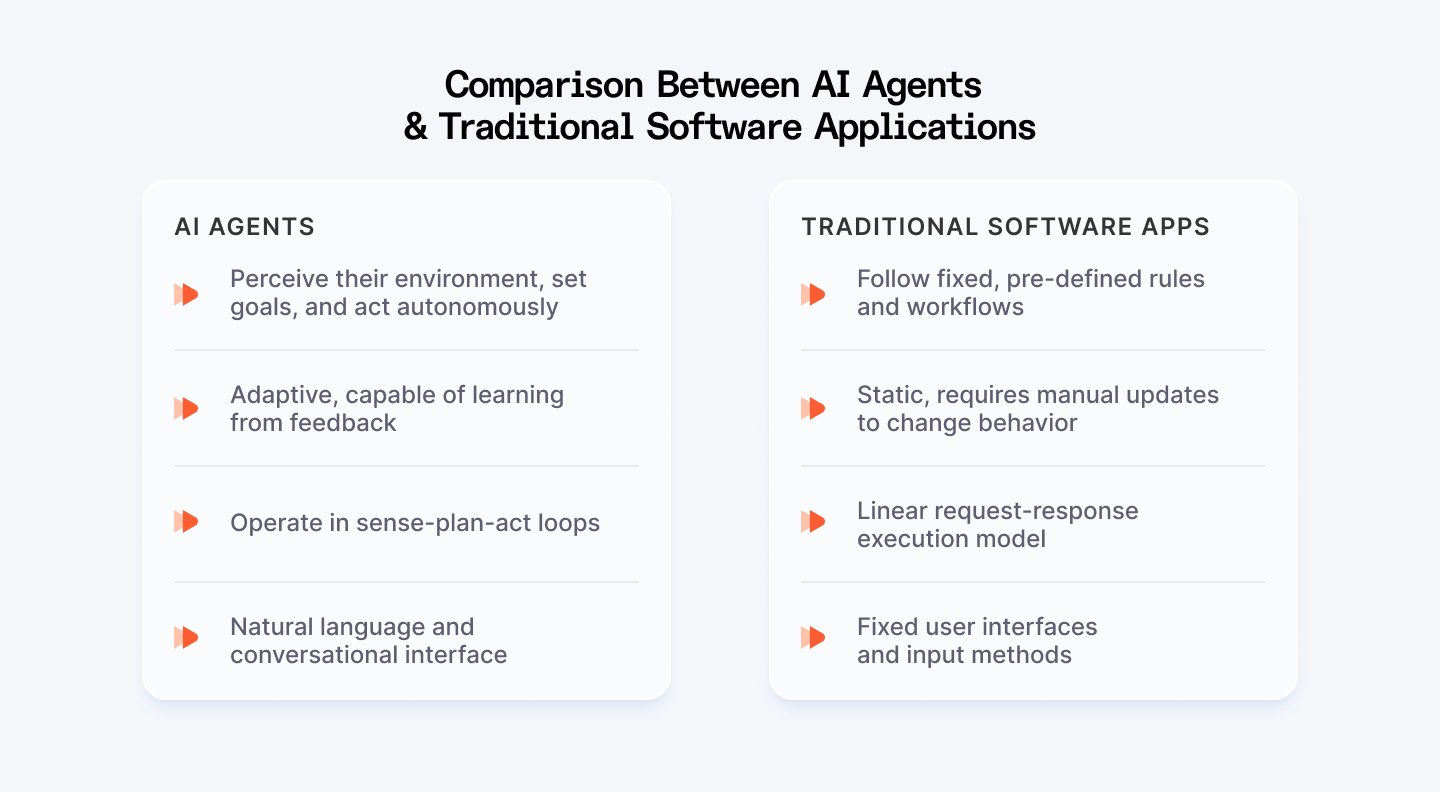

Traditional software applications are built on fixed workflows and rules. Developers write detailed code (often thousands of lines) to handle each step of a process. They are deterministic: given the same inputs, they always produce the same outputs. Business logic changes (new features, exceptions) require human updates and redeployment.

By contrast, an AI agent is an autonomous program that can plan, reason, and act using AI-driven decision-making. Agents often sit on top of powerful ML models (like LLMs) and incorporate components such as memory, planning modules, and tool interfaces. They can interpret new inputs and handle unforeseen scenarios without being explicitly reprogrammed, updating their own strategies by learning from feedback.

For instance, a traditional CX system follows fixed rules: 'If a customer says refund, send form A.' An agentic CX platform like Nextiva, by contrast, can assess whether the customer is frustrated or just curious, check their purchase history and previous interactions, determine if this requires immediate human escalation or can be resolved with a personalized offer, and execute that decision by routing to the right team or sending a tailored response.

A sales agent built on an LLM might take a natural-language task (“find leads for product X”), autonomously query databases, run analyses, and draft emails; all without step-by-step coding from developers.

In practice, “AI agents combine precompiled components for critical paths with reasoning capabilities for complex decisions,” according to IBM, giving “adaptive intelligence” on top of high performance.

In short, AI agents (goal-driven programs built on large models) autonomously sense, learn, and act without code rewrites. Traditional apps remain deterministic: update, redeploy, repeat.

How They Work

When you look under the hood of AI agents, you’ll see a streamlined yet powerful structure that blends deterministic code with adaptive intelligence. We can categorize them into four key pillars:

1. Architecture and Orchestration

Traditional software relies on predefined modules, like monoliths or microservices, that follow fixed interaction patterns. AI agents, by contrast, assemble cognitive building blocks around a core model (often an LLM) and orchestrate them dynamically:

- Model (LLM) as the decision engine.

- Perception/Input modules (NLP, APIs) to gather user data and sensor feeds.

- Memory/Context stores conversation history, facts, or interim results.

- Reasoning/Planning decomposes goals into actionable steps.

- Tools & Connectors (external APIs, database calls) bridge the AI to real-world systems (e.g., updating a CRM record or fetching live data).

Together, these components form a cognitive architecture that adapts as conditions change.
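The component list above can be sketched as a small Python class. This is a minimal illustration under stated assumptions, not a reference design from any framework: the `Agent` class, the `keyword_model` stand-in (a keyword check used in place of a real LLM call), and the tool names `crm_update` and `fetch_data` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Hypothetical container wiring the components together."""
    model: Callable[[str], str]                       # decision engine (an LLM in practice)
    tools: Dict[str, Callable[[str], str]]            # connectors to external systems
    memory: List[str] = field(default_factory=list)   # history and interim results

    def perceive(self, raw_input: str) -> str:
        """Perception: normalize the input and record it in memory."""
        observation = raw_input.strip()
        self.memory.append(f"user: {observation}")
        return observation

    def plan(self, observation: str) -> str:
        """Planning: ask the model which tool fits the observation."""
        return self.model(observation)

    def act(self, tool_name: str, observation: str) -> str:
        """Tool use: call the chosen connector and remember the result."""
        result = self.tools[tool_name](observation)
        self.memory.append(f"{tool_name}: {result}")
        return result


def keyword_model(text: str) -> str:
    """Stand-in for an LLM (assumption): picks a tool name from a keyword."""
    return "crm_update" if "update" in text.lower() else "fetch_data"


agent = Agent(
    model=keyword_model,
    tools={
        "crm_update": lambda text: "CRM record updated",
        "fetch_data": lambda text: "live data fetched",
    },
)

observation = agent.perceive("  Please update the account owner  ")
chosen_tool = agent.plan(observation)
result = agent.act(chosen_tool, observation)
print(chosen_tool, "->", result)
```

Keeping the model, tools, and memory as separate fields means any one of them can be swapped (a different model, an extra connector) without touching the others.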

2. Sense-Plan-Act Loop

AI agents operate in a feedback-driven model, often with built-in exception handling. They can run around the clock, reviewing data and making decisions without continuous human supervision.

Rather than a single pass from input to output, agents run a closed-loop cycle:

- Sense: Gather new inputs or results.

- Plan: Update the task breakdown based on current context.

- Act: Invoke tools or generate outputs.

- Repeat until the goal is achieved.

This continuous loop lets agents course-correct on the fly, unlike the linear request-response flow of legacy apps.
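A toy version of this cycle might look like the following. It is a sketch under simplifying assumptions: the `SOURCES` dictionary stands in for real API or database calls, the goal is simply "collect every required report field", and all function names are invented for illustration.

```python
from typing import Dict, List, Optional

# Hypothetical data sources; a real agent would call APIs or databases here.
SOURCES: Dict[str, Dict[str, str]] = {
    "crm": {"customer": "Acme Corp"},
    "billing": {"invoice_total": "1200.00", "currency": "USD"},
}


def sense(report: Dict[str, str], required: List[str]) -> List[str]:
    """Sense: observe which required fields are still missing."""
    return [name for name in required if name not in report]


def plan(missing: List[str], tried: List[str]) -> Optional[str]:
    """Plan: choose an untried source that provides a missing field."""
    for source, fields in SOURCES.items():
        if source not in tried and any(name in fields for name in missing):
            return source
    return None


def act(source: str, report: Dict[str, str]) -> None:
    """Act: query the source and merge its fields into the report."""
    report.update(SOURCES[source])


def run(required: List[str], max_steps: int = 5) -> Dict[str, str]:
    report: Dict[str, str] = {}
    tried: List[str] = []
    for _ in range(max_steps):
        missing = sense(report, required)
        if not missing:        # goal achieved, stop looping
            break
        source = plan(missing, tried)
        if source is None:     # nothing left to try, give up
            break
        act(source, report)
        tried.append(source)
    return report


final_report = run(["customer", "invoice_total"])
print(final_report)
```

The `max_steps` bound is a deliberate design choice: a loop that repeats "until the goal is achieved" needs a stopping condition for goals that turn out to be unreachable.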

This adaptability stems from their design: agents incorporate mechanisms to learn and adjust on the fly. For instance, if an AI agent receives a novel request, it might decompose the task into subtasks, search for new tools, or query its memory. Human oversight is reduced because the agent can plan its next steps autonomously.

In enterprise case studies, deploying agents has dramatically sped up processes: one logistics company cut error rates by 83% and processing time by 62% by using agents for documentation tasks. Such gains come from continuous optimization, something traditional apps cannot do without a new software release.

3. Learning and Adaptability

Traditional software is largely hand-coded. Even when it embeds ML models (e.g. a static AI feature inside an app), the overall flow remains fixed. AI agents, by contrast, move beyond static code paths and can improve from run to run. They incorporate feedback mechanisms (such as RLHF) so that they:

- Learn from outcomes, which means your agent gets sharper over time, tackling increasingly complex scenarios without fresh code.

- Adjust strategies mid-task by querying memory or discovering new tools.

- Handle novel inputs without manual redeployment.

For instance, learning agents represent a significant advancement, incorporating mechanisms to improve performance through experience and using techniques like reinforcement learning. They can adjust their future actions based on past outcomes (e.g. if a tool call failed, choose differently next time).
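The "choose differently next time" idea can be illustrated with a very small outcome tracker. Note the assumptions: this is a simple success-rate heuristic, not reinforcement learning or RLHF in any full sense, and the `ToolSelector` class and the tool names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


class ToolSelector:
    """Tracks outcomes per tool and prefers the tool that has worked best."""

    def __init__(self, tools: List[str]) -> None:
        self.tools = tools
        self.successes: Dict[str, int] = defaultdict(int)
        self.attempts: Dict[str, int] = defaultdict(int)

    def score(self, tool: str) -> float:
        # Smoothed success rate: an untried tool starts at 0.5, not 0 or 1.
        return (self.successes[tool] + 1) / (self.attempts[tool] + 2)

    def choose(self) -> str:
        # Greedy choice; on a tie, the tool listed first wins.
        return max(self.tools, key=self.score)

    def record(self, tool: str, succeeded: bool) -> None:
        self.attempts[tool] += 1
        if succeeded:
            self.successes[tool] += 1


selector = ToolSelector(["primary_api", "backup_api"])
first_choice = selector.choose()                 # both untried, first tool wins the tie
selector.record(first_choice, succeeded=False)   # the call failed
second_choice = selector.choose()                # the failure lowers its score
print(first_choice, second_choice)
```

A purely greedy selector can get stuck on an early favourite; production systems usually add some exploration, which is omitted here for brevity.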

4. Resource Management and Performance

Enterprises still demand speed and reliability. Agentic frameworks typically:

- Blend compiled, high-performance code for routine tasks with on-demand AI reasoning for ambiguous cases, according to IBM.

- Use ahead-of-time compilation (where needed) to maintain low latency even as models fire up.

- Incorporate exception-handling loops to adapt when inputs stray from the expected.

This hybrid design ensures you retain the speed of traditional apps while unlocking autonomous, goal-driven behaviour.
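One way to picture this hybrid pattern is a router that tries a deterministic fast path first and only falls back to model reasoning when no routine handler matches. The sketch below is illustrative only: `ROUTINE_HANDLERS`, `slow_reasoner` (a stand-in for a model call), and the sample requests are assumptions, and no ahead-of-time compilation is shown.

```python
from typing import Callable, Dict, Tuple

# Deterministic fast path: exact-match routing for routine requests.
ROUTINE_HANDLERS: Dict[str, Callable[[], str]] = {
    "reset password": lambda: "Password reset link sent",
    "check balance": lambda: "Balance lookup started",
}


def slow_reasoner(request: str) -> str:
    """Stand-in for an on-demand model call, used only for ambiguous requests."""
    return f"Reasoned response for: {request}"


def handle(request: str) -> Tuple[str, str]:
    """Return (path_taken, response)."""
    key = request.strip().lower()
    handler = ROUTINE_HANDLERS.get(key)
    if handler is not None:
        return "fast", handler()
    try:
        return "reasoning", slow_reasoner(request)
    except Exception as error:
        # Exception handling reduced to a single safe fallback.
        return "fallback", f"Could not process request: {error}"


print(handle("Reset Password"))
print(handle("My invoice looks odd, can you check?"))
```

Routine traffic never pays the latency of a model call, while unexpected inputs still get handled instead of being rejected.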

User Interaction and Experience

Conversational Interfaces

Traditional software usually presents fixed user interfaces: click buttons, enter data in forms, or call specific APIs. Agents invite you to talk, not click. Instead of rigid forms or APIs, you engage in natural-language dialogs through typing, speaking, or even feeding in images. They parse intent, ask follow-up questions to clarify ambiguous requests, and deliver context-rich responses.

Imagine telling a finance agent, “Show me last month’s top expense categories,” and having it not only display charts but say, “I noticed utilities spiked. Would you like to drill into those?” That two-way interaction feels human, reduces missteps, and cuts down on training overhead.

Collaboration

Each agent retains memory of past interactions (e.g. your preferences, corporate guidelines, or project context) so handoffs between designers, analysts, and reviewers happen seamlessly.

They can also collaborate in teams: multi-agent systems allow specialized agents to hand off tasks to each other, working like a virtual team. This collaborative model has no direct analogue in classic software architecture.
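A handoff between specialized agents can be sketched as a pipeline that passes shared context from one agent to the next. Everything here is hypothetical: the three agent functions are plain Python stand-ins for model-backed agents, and real multi-agent frameworks offer richer routing than a fixed order.

```python
from typing import Callable, Dict, List

Task = Dict[str, str]


def designer_agent(task: Task) -> Task:
    """Produces a draft from the brief."""
    return {**task, "draft": f"Draft for {task['brief']}"}


def analyst_agent(task: Task) -> Task:
    """Adds an analysis based on the designer's draft."""
    return {**task, "analysis": f"{len(task['draft'])} characters drafted"}


def reviewer_agent(task: Task) -> Task:
    """Approves only if both earlier contributions are present."""
    status = "approved" if "draft" in task and "analysis" in task else "needs work"
    return {**task, "status": status}


def run_team(task: Task, team: List[Callable[[Task], Task]]) -> Task:
    """Pass the task through each specialist in order, carrying context along."""
    for agent in team:
        task = agent(task)
    return task


outcome = run_team(
    {"brief": "Q3 launch page"},
    [designer_agent, analyst_agent, reviewer_agent],
)
print(outcome["status"])
```

Because each agent returns a new dictionary rather than mutating its input, the context at every handoff stays inspectable.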

Trust, Transparency, and Explainability

As agents make autonomous decisions, explaining those decisions becomes important. Verifier agents can automatically check another agent’s output for accuracy or policy compliance, creating an internal QA loop. And when you need transparency, agents can expose their reasoning steps, “I ranked candidates by match score, then filtered by availability”, so you stay confident in their autonomy.
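The verifier idea and the reasoning trace can be combined in a short sketch. The `Candidate` and `Decision` types, both agent functions, and the sample data are hypothetical; a real verifier would typically be model-backed and check far richer policies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str
    match_score: float
    available: bool


@dataclass
class Decision:
    selected: List[str]
    reasoning: List[str]   # human-readable trace of each step taken


def ranking_agent(candidates: List[Candidate]) -> Decision:
    """Worker agent: rank, filter, and record what it did."""
    ranked = sorted(candidates, key=lambda c: c.match_score, reverse=True)
    available = [c for c in ranked if c.available]
    return Decision(
        selected=[c.name for c in available],
        reasoning=["ranked candidates by match score", "filtered by availability"],
    )


def verifier_agent(decision: Decision, candidates: List[Candidate]) -> List[str]:
    """Return policy violations; an empty list means the output passed."""
    by_name = {c.name: c for c in candidates}
    problems: List[str] = []
    for name in decision.selected:
        if not by_name[name].available:
            problems.append(f"{name} is not available")
    scores = [by_name[name].match_score for name in decision.selected]
    if scores != sorted(scores, reverse=True):
        problems.append("selection is not ordered by match score")
    if not decision.reasoning:
        problems.append("no reasoning trace provided")
    return problems


pool = [
    Candidate("Ana", 0.72, True),
    Candidate("Ben", 0.91, False),
    Candidate("Chen", 0.88, True),
]
decision = ranking_agent(pool)
issues = verifier_agent(decision, pool)
print(decision.selected, decision.reasoning, issues)
```

The verifier checks the output against the source data independently, so it can catch a faulty worker rather than simply trusting its trace.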

Traditional software typically logs errors but doesn’t need to explain decisions because its logic is transparent code. In agentic AI, building trust may require additional design (like showing the reasoning steps), further differentiating user experiences.

Next Steps: When, Where and How to Prepare

1. Pinpoint High-Value Workflows

Look for processes that are both mission-critical and change-prone, such as expense approvals, IT ticket routing, or HR onboarding. These are prime candidates for agentic automation.

2. Prototype with Eval-Driven Development

Spin up a minimal agent that tackles a single task, say, generating weekly reports. Use evaluation suites (unit tests for goals, not just code) to measure success and iterate quickly.
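An evaluation suite for such a prototype might look like this. It is a sketch with invented names: `report_agent` is a deterministic stand-in for the agent under test, and the three checks in `EVALS` are example goal-level criteria, not a standard.

```python
from typing import Callable, Dict, List


def report_agent(sales: List[float]) -> str:
    """Stand-in for the agent under test: drafts a one-line weekly report."""
    total = sum(sales)
    best_day = sales.index(max(sales)) + 1
    return f"Weekly total: {total:.2f}. Best day: day {best_day}."


# Each eval checks a goal-level property of the output, not an internal code path.
EVALS: Dict[str, Callable[[str, List[float]], bool]] = {
    "mentions_total": lambda out, sales: f"{sum(sales):.2f}" in out,
    "names_best_day": lambda out, sales: f"day {sales.index(max(sales)) + 1}" in out,
    "is_concise": lambda out, sales: len(out) <= 80,
}


def run_evals(
    agent: Callable[[List[float]], str], cases: List[List[float]]
) -> Dict[str, float]:
    """Return the pass rate per eval across all test cases."""
    passed = {name: 0 for name in EVALS}
    for sales in cases:
        output = agent(sales)
        for name, check in EVALS.items():
            if check(output, sales):
                passed[name] += 1
    return {name: count / len(cases) for name, count in passed.items()}


scores = run_evals(report_agent, [[10.0, 25.5, 7.0], [3.0, 3.0, 9.5, 1.0]])
print(scores)
```

Reporting a pass rate rather than a single pass/fail suits agents whose outputs vary between runs: the team can set a threshold per eval and track it across iterations.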

3. Leverage Community & Vendor Resources

Getting started means retooling development practices, from choosing which workflows to agentize to adopting new evaluation methods (so-called “eval-driven development”) that test agent behaviors beyond traditional unit tests. Published guidance can shorten that learning curve:

- Index.dev publishes tutorials and case studies on building AI agents (see “Build Your First AI Agent” with LangChain/LangGraph and lists of popular AI agents for software tasks).

- Google’s 2024 Agents whitepaper and Microsoft’s Semantic Kernel docs provide reference architectures and best practices.

- IBM Think articles on agentic microservices share performance-tuning tips for enterprise scale.

4. Invest in Oversight and Explainability

Plan for human-in-the-loop checkpoints and verification agents from day one. That way, your team can audit decisions, refine policies, and maintain trust as agents learn on the fly.
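A human-in-the-loop checkpoint can be as simple as a threshold gate with an audit log. In this sketch the `ProposedAction` type, the `auto_limit` threshold of 100.0, and the `approve` callback (which stands in for a real review step) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ProposedAction:
    description: str
    amount: float


def execute_with_checkpoint(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    audit_log: List[str],
    auto_limit: float = 100.0,
) -> str:
    """Run low-risk actions automatically; send anything above auto_limit to a human."""
    if action.amount <= auto_limit:
        status = "auto-approved"
    elif approve(action):
        status = "human-approved"
    else:
        status = "rejected"
    # Every decision is logged so the team can audit it later.
    audit_log.append(f"{status}: {action.description} ({action.amount:.2f})")
    return status


log: List[str] = []
small = execute_with_checkpoint(ProposedAction("Refund order A", 40.0), lambda a: False, log)
large = execute_with_checkpoint(ProposedAction("Refund order B", 900.0), lambda a: True, log)
denied = execute_with_checkpoint(ProposedAction("Refund order C", 5000.0), lambda a: False, log)
print(small, large, denied)
```

Starting with a low `auto_limit` and raising it as the audit log builds confidence is one way to widen agent autonomy gradually.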

5. Scale Gradually

Once your first pilot demonstrates reduced errors and faster cycle times, expand agent roles into adjacent workflows to encompass cross-department handoffs, multi-agent collaborations, or customer-facing bots.

By following these steps, you’ll be ready to harness AI agents safely and effectively across your organization.

Conclusion

AI agents differ fundamentally from traditional software in design and purpose:

- Traditional apps are static and rule-bound;

- AI agents are goal-driven, adaptive, and interactive.

Agents combine AI models with planning, memory, and tool-use, operating in a closed-loop “sense-plan-act” cycle. This makes them more flexible and capable in dynamic environments.

Traditional software will not disappear overnight, and agents won’t replace all of it. But they are augmenting, and in some cases replacing, static workflows, starting with the tasks that traditional programs struggle with: complex reasoning, continual learning, and natural communication. Cross-departmental workflows (finance, HR, IT) are prime candidates for intelligent, self-improving systems, because agents can coordinate tasks across systems, something siloed traditional apps struggle to do.

By understanding how AI agents and traditional software differ (in architecture, execution, learning, and interaction), organizations can adopt agentic AI safely and effectively and plan their AI investments accordingly.
