AI coding assistants increase individual developer output, often by 20-40% in common vendor reports, but that speed rarely becomes company-level delivery gains without process changes. The difference lies in the pipeline: faster code drafts only shorten delivery when reviews, CI/CD, and QA move at the same pace.

Measuring software development output has long been a challenge. Traditional indicators like lines-of-code or tickets closed are easy to game and often meaningless. In fact, as one expert warns, these “half-baked metrics” provide no actionable insight.

Today, engineering leaders crave real productivity gains – faster features, higher quality, and shorter cycles. And 2025 surveys show over 75% of developers using AI helpers that promise to deliver that boost. Tools like GitHub Copilot, Sourcegraph Cody, Tabnine and Amazon CodeWhisperer are now commonplace.

This article explains the study approach, what was tested, the results, and insights to turn AI coding assistants into measurable ROI in 2025.

Why This Matters Now

AI coding assistants are ubiquitous in 2025: broad adoption across teams, faster model iterations, and agentic features focused on multi-file tasks. But adoption alone is not ROI. Industry telemetry and independent studies converge on one point: more code ≠ more value.

Who Should Read This

- Engineering leaders deciding on AI rollouts.

- Dev managers benchmarking DORA/SPACE with AI.

- CTOs evaluating hiring vs tooling trade-offs.

- Dev teams planning pilots or production rollouts.

How This Study Was Done

We combined quantitative and qualitative data. Our analysis draws on recent industry reports (Faros AI’s Productivity Paradox report and an MIT-backed RCT), along with anonymized telemetry and interviews from actual software teams.

Faros’s report alone analyzed commit and deployment data from 10,000+ developers across 1,255 teams. We also reviewed whitepapers from Sourcegraph, Tabnine, IBM, and other vendors for time-saved metrics, and incorporated independent 2025 trials (McKinsey, METR, and similar) that measured developer task speed with and without AI.

We also ran internal pilots: for example, matched teams handling similar features with and without AI assistants. We analyzed Index.dev client outcomes and hiring examples to frame ROI from a talent + tooling perspective. By merging published research, live telemetry, and hands-on experiments, we capture both broad trends and on-the-ground realities.

Key constraints: Only 2025 sources were included. Pilot teams were matched by task type where possible to reduce bias.

Metrics and Frameworks

We measured productivity using modern engineering frameworks. First, DORA metrics (Deployment Frequency, Lead Time for Changes, Mean Time to Restore, Change Failure Rate) capture delivery speed and stability. We tracked deployments, code commit-to-release time, and incident frequency. We also monitored throughput metrics like tasks completed and PRs merged.

We then applied the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) for developer-centric signals. We surveyed teams on satisfaction and flow, and logged activity metrics (PR count, cycle time). SPACE reminds us to measure whether AI also improves developer experience and collaboration.

In practice, our key metrics included:

- Lead time (DORA lead time, a popular velocity metric).

- Deployment frequency (how often code is released), with prompts per feature tracked alongside it as an AI-specific signal.

- Change failure rate (post-release bug rate) and MTTR (stability).

- Pull request size and bug count (code quality indicators).

- Developer satisfaction and flow efficiency (SPACE survey results).

- Cycle time and velocity (commonly tracked sprint KPIs).

Do this: Make DORA the north star for ROI. Track AI-specific signals as leading indicators. Don't rely on LOC, raw PR counts, or commits as business KPIs.
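As a rough illustration of what a DORA baseline can look like in practice, here is a minimal Python sketch. The `Deployment` record shape, its field names, and the `dora_summary` helper are assumptions made for this example, not the tooling used in the study; real data would come from your CI/CD and incident systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    caused_failure: bool = False            # did it trigger an incident or rollback?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deploys: list[Deployment], window_days: int) -> dict[str, float]:
    """Compute the four DORA metrics over a fixed observation window."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    ]
    failures = [d for d in deploys if d.caused_failure]
    restore_times = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_week": len(deploys) / (window_days / 7),
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        # Reported as 0.0 when no failed deployment has a restore time.
        "mean_time_to_restore_hours": (
            sum(restore_times) / len(restore_times) if restore_times else 0.0
        ),
    }


# Illustrative data only: two deployments in a two-week window.
t0 = datetime(2025, 1, 6, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(hours=24)),
    Deployment(
        t0,
        t0 + timedelta(hours=48),
        caused_failure=True,
        restored_at=t0 + timedelta(hours=50),
    ),
]
summary = dora_summary(deploys, window_days=14)
print(summary)
```

Capturing the same summary before and during an AI rollout gives a like-for-like comparison that raw PR counts cannot.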

Tools Analyzed

We focused on AI assistants relevant to enterprise software development:

GitHub Copilot (IDE Autocomplete + Chat Flows)

Integrates with IDEs (VS Code, JetBrains) to suggest code and comments. It launched on OpenAI’s models and is widely adopted in industry. Copilot helps write boilerplate, adapt APIs, and even generate unit tests.

Sourcegraph Cody (Cross-Repo Code Search + Contextual Edits)

A knowledge-driven AI assistant that searches across codebases. Cody lets you ask questions about your code and get context-aware modifications or explanations. It’s especially useful for understanding large, legacy repositories.

Tabnine (Enterprise Autocomplete, Private Model Deployment)

Offers autocomplete powered by private or public LLMs. It supports many languages (Java, Python, Go, etc.) and can run on local servers for security. Tabnine boosts coding speed without exposing code externally.

Amazon CodeWhisperer (AWS-first Code Patterns)

Tailored to AWS developers and now offered as part of Amazon Q Developer. It suggests cloud-optimized code patterns (e.g. for S3, Lambda) based on your comments. It integrates with popular IDEs and AWS services.

Other LLM tools

We also included JetBrains’ AI Assistant, Anthropic’s Claude and OpenAI’s ChatGPT (used via plugins), as well as other assistants like Codeium. These general AI tools are increasingly part of dev workflows (for prototyping, documentation, and more).

Each assistant serves different needs. Copilot is great for everyday coding, Cody excels at cross-repo search, Tabnine works across languages, and CodeWhisperer knows AWS. We tested each in scenarios like feature development and bug fixing to see how they affect productivity.

Key Findings and Benchmarks

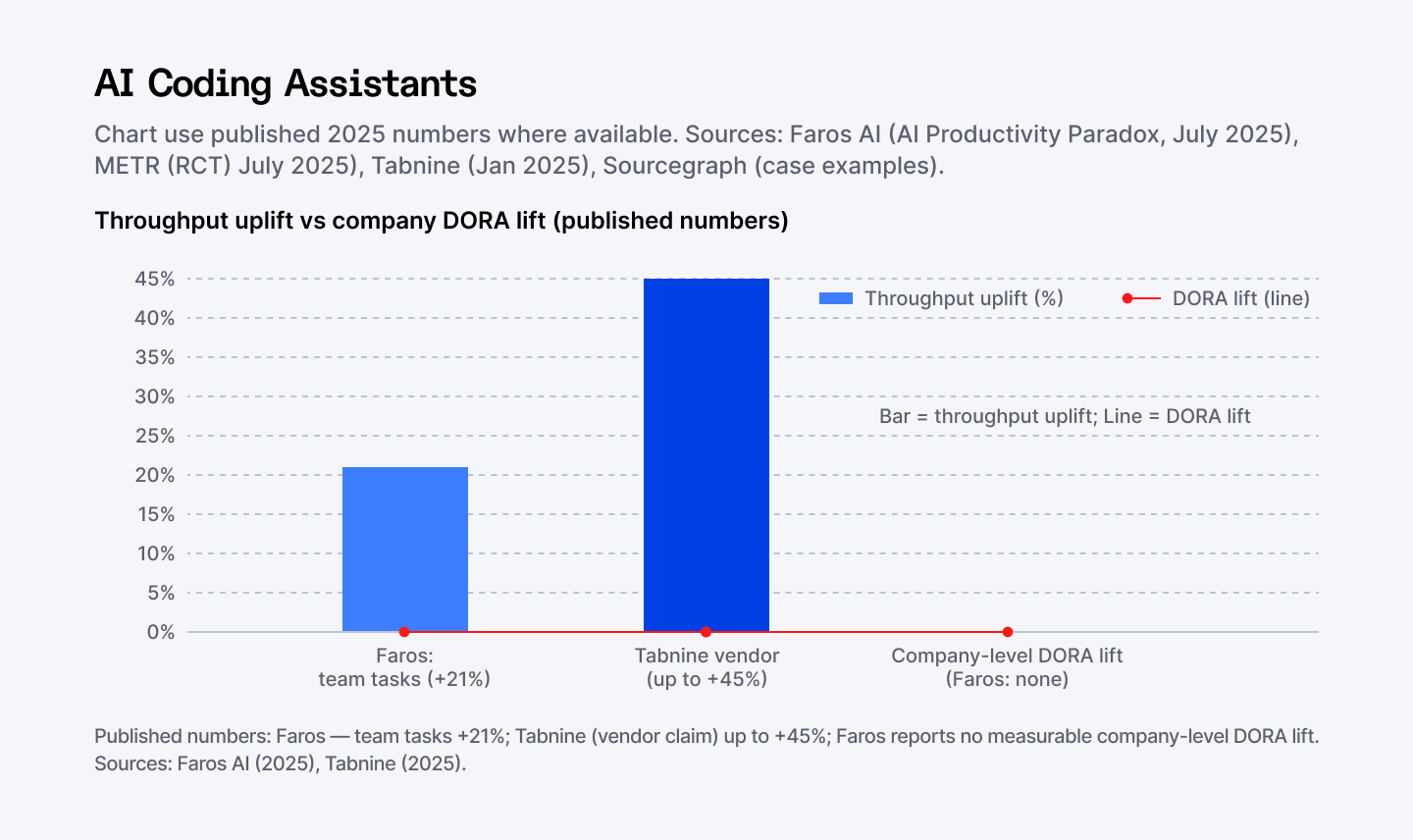

Our results illustrate the AI productivity paradox: developers output more, but system-level gains lag.

Individual Throughput Rose, Sometimes Dramatically

Teams with extensive AI use finished ~21% more tasks and merged 98% more pull requests per developer, according to industry reports. In concrete terms, a developer using an AI assistant touched ~47% more PRs per day. AI assistants clearly boost individual coding throughput by handling boilerplate and suggestions.

This pattern appears in vendor benchmarks and telemetry: Sourcegraph’s studies and Tabnine’s usage reports show similar boosts for routine work, while independent trials emphasize that higher draft volume does not automatically shorten lead time.

Review & QA Became the New Bottleneck

However, the same reports show PR review time ballooning by ~91% in those teams. The human approval loop became the choke point. This matches Amdahl’s Law: speeding up one stage (writing code) only shortens delivery to the extent that the remaining stages, reviews and testing, keep pace.
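To make the Amdahl’s Law point concrete, here is a small Python sketch. The function name and the input numbers are illustrative assumptions, not figures from the study: it treats coding as a fixed share of total lead time and asks how much the whole pipeline speeds up when only that share gets faster.

```python
def pipeline_speedup(coding_fraction: float, coding_speedup: float) -> float:
    """Amdahl's Law: overall speedup when only the coding stage gets faster.

    coding_fraction: share of total lead time spent writing code (0 to 1).
    coding_speedup: how many times faster coding becomes (1.0 = no change).
    """
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    return 1.0 / ((1.0 - coding_fraction) + coding_fraction / coding_speedup)


# Illustrative numbers only: coding is 25% of lead time and gets 1.4x faster.
overall = pipeline_speedup(coding_fraction=0.25, coding_speedup=1.4)
print(f"overall speedup: {overall:.2f}x")  # prints "overall speedup: 1.08x"
```

Under these assumed inputs, a 40% faster coding stage yields only about an 8% faster pipeline, and even an unlimited coding speedup would cap out near 1.33x while reviews and QA stay unchanged.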

Quality Trade-offs Appeared at Scale

Code volume grew too, and average PR size and defect counts rose with it: studies show average PR size up by as much as 150% and a modest 9% rise in bug counts. While some testing improved (AI can generate tests), the overall change failure rate ticked up. More code meant more defects slipping through.

No Automatic Lift in Company-Level DORA Metrics

Importantly, we saw no measurable improvement in company-wide DORA metrics. Deployment frequency and lead time stayed flat. Many high-AI teams still deployed on fixed schedules (e.g. weekly) because downstream processes (manual QA, approvals) hadn’t changed. In effect, code drafting speed-ups were absorbed by other bottlenecks.

Experienced Engineers Sometimes Slowed

In a controlled MIT-backed study, seasoned developers actually took ~19% longer with AI assistance. This likely reflects overhead in crafting prompts and verifying AI output. Interestingly, developers still felt faster, highlighting the gap between perception and reality. It reinforces that empirical measurement (like DORA) is crucial.

Close the Adoption Gap — Train Seniors, Empower Juniors

AI usage seems to be clustered by role. New hires leaned on AI heavily, while many senior engineers remained cautious. If only part of your team speeds up, gains don’t scale organization-wide. Broad training and leadership endorsement are needed.

Talent and Onboarding Delivered Measurable ROI

Real ROI often came from talent. For example, an Index.dev client (Genemod) hired two remote senior developers who were immediately productive.

The outcome: time-to-hire was slashed and costs dropped. These experts, already AI-savvy, accelerated delivery. Across engagements, Index.dev emphasizes hiring as a lever – hiring the right people to use the right AI tools is part of ROI.

In summary, AI assistants boost individual throughput but create new bottlenecks (reviews, QA) that hide system-level gains. The general consensus across multiple studies and vendor reports is this: AI ups developer output but yields little company-level productivity change. True ROI will come from addressing the full delivery pipeline, not just coding speed.

Strategic Takeaways

To turn AI boosts into ROI, engineering leaders should act on multiple fronts:

Upgrade Your Processes

When coding accelerates, upgrade reviews and CI/CD. Automate more tests and code checks.

For example, some teams cut merge queues dramatically by adding automated linters and test suites. Adopt trunk-based development and feature flags so smaller AI-generated changes flow smoothly to production.
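One automated check that fits this advice is a PR size gate. The sketch below is an assumption-laden example rather than a description of any team’s actual setup: it parses the text produced by `git diff --numstat` and flags changes above a line budget. The helper names and the 400-line threshold are hypothetical.

```python
def changed_lines(numstat_output: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output.

    Each line holds three tab-separated fields: added, deleted, path.
    Binary files show "-" for both counts and are skipped here.
    """
    total = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-" or parts[1] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def check_pr_size(numstat_output: str, max_lines: int = 400) -> tuple[bool, int]:
    """Return (passes, changed_line_count). The 400-line budget is an assumption."""
    count = changed_lines(numstat_output)
    return count <= max_lines, count


# Illustrative input: two source files and one binary file.
sample = "120\t30\tsrc/api.py\n-\t-\tdocs/diagram.png\n300\t10\tsrc/models.py\n"
ok, count = check_pr_size(sample)
print(ok, count)  # prints "False 460"
```

In a CI job, the output of `git diff --numstat` against the target branch could be passed to `check_pr_size`, with a failing result prompting the author to split the change.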

Train on Deeper Features

Most devs only used basic autocomplete. Vendor docs and telemetry (Sourcegraph, Copilot feature notes) indicate advanced features like multi-file refactors, chat flows, test generation, etc. remain largely untapped.

Run workshops to teach those features. Encourage tactics like “ask the AI to write unit tests” or “let it explain legacy code.” The more fully developers use AI, the larger the payoff.

Align Metrics with Goals

Choose KPIs that matter. If you care about speed, measure lead time and throughput. If quality matters, track bug escape rate. Include developer satisfaction and flow (from SPACE). Make these dashboards visible. (Index.dev advises ditching lines-of-code and focusing on cycle time and velocity.) Only track metrics aligned to business outcomes.

Pitfall: Using only “time saved” inflates ROI. Vendors report headline time-saved metrics; independent telemetry and trials caution that downstream bottlenecks erode those gains. Always map time saved to DORA/SPACE changes to see real business value.

Coordinate Adoption

Treat AI rollout like a platform rollout. Get buy-in from senior devs and cross-team leads.

For instance, update code review guidelines for more frequent merges, and involve QA/security in the change. Focus on enablers like workflow design, governance, infrastructure, training, and alignment so the gains scale beyond a single team.

Experiment and Iterate

Start small and measure. Use A/B tests or pilot projects. Monitor how AI affects cycle time before full rollout. If a pilot shows no benefit, adjust prompts or try another tool. Keep feedback loops short – treat AI adoption as a continuous improvement process.
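For small pilots, a permutation test is one simple way to check whether a difference in cycle time is more than noise. The sketch below uses only the Python standard library. The function name and the sample cycle times (in hours) are invented for illustration and are not data from the pilots described here.

```python
import random
from statistics import median


def median_diff_permutation_test(
    control: list[float], pilot: list[float], rounds: int = 5000, seed: int = 0
) -> tuple[float, float]:
    """Return (observed median difference, one-sided p-value).

    The difference is control minus pilot, so a positive value means the
    pilot group had shorter cycle times.
    """
    rng = random.Random(seed)
    observed = median(control) - median(pilot)
    pooled = control + pilot  # new list, so the inputs are not mutated
    hits = 0
    for _ in range(rounds):
        rng.shuffle(pooled)
        shuffled_control = pooled[: len(control)]
        shuffled_pilot = pooled[len(control):]
        # Count relabelings that look at least as good as the real result.
        if median(shuffled_control) - median(shuffled_pilot) >= observed:
            hits += 1
    return observed, hits / rounds


# Hypothetical cycle times in hours for matched teams.
control_hours = [52.0, 48.0, 60.0, 55.0, 47.0, 58.0, 63.0, 50.0]
pilot_hours = [41.0, 45.0, 39.0, 50.0, 44.0, 38.0, 47.0, 42.0]
diff, p_value = median_diff_permutation_test(control_hours, pilot_hours)
print(f"median improvement: {diff} h, p = {p_value:.3f}")
```

A small p-value suggests the pilot’s shorter cycle time is unlikely to come from random team assignment alone. With few data points it is still worth repeating the pilot before a full rollout.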

Leverage Expertise

Consider hiring or consulting AI-savvy engineers. Index.dev’s remote talent often accelerates AI adoption. In several Index.dev engagements, placing engineers experienced with coding assistants shortened onboarding and sped feature delivery, showing that talent plus tooling produces reliable ROI. Whether through strategic hires or training programs, having people who know the tools pays off.

In short, view AI assistants as part of a larger productivity strategy. Update your engineering practices and metrics in tandem. Teams that invest in processes and people, not just tools, will see the real ROI.

The Future of AI-Augmented Development

AI coding assistants are evolving rapidly. By 2026, these tools may write and refactor entire modules on command, turning engineers into high-level architects. IDEs will likely have AI built in natively, blurring the line between editor and agent.

Future ROI will depend on adapting with the tech. We predict:

- Agentic workflows: Developers will specify high-level tasks (e.g. “implement this feature”), and AI will generate complete solutions. Performance metrics may evolve into “prompt-to-release time.”

- New metrics: Engineering analytics will track AI usage itself (prompts per feature, time saved per prompt). Teams may benchmark “dev productivity per AI usage” to tie assistant use to outcomes.

- Upskilling & culture: Organizations investing in continuous learning (training sessions, AI champions) will amplify gains. The processes and talent around AI will be as important as the models themselves.

- Tool consolidation: Expect full-stack platforms (like GitHub Copilot X) that merge coding, AI, and CI. In these ecosystems, linking AI actions to delivery outcomes will be easier to measure.

For now, 2025’s data shows potential, not out-of-the-box guarantees. As one of our engineering leaders puts it, “Teams that succeed with AI are those that retool everything, not just their IDE plugin.” The future belongs to organizations that embrace AI as integral to development, continually updating metrics and practices.

Conclusion

The verdict: treat AI as an accelerator for the whole value stream, not just a faster keyboard.

AI coding assistants are real: they speed up developers and reduce grunt work. But speed alone is not ROI. The firms that win treat AI as a platform change: update reviews, automate checks, retrain onboarding, and measure with DORA and SPACE.

Start with a short pilot (6–8 weeks), baseline DORA, shrink PRs, and add automation. If the pilot moves lead time or deployment frequency, scale. If not, diagnose the bottleneck (reviews, QA, or release cadence) and fix that first.
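The scale-or-diagnose rule above can be written down as a small decision helper. This is a sketch under stated assumptions: the function name, the dictionary keys, the stage names, and the 10% improvement threshold are all hypothetical choices for illustration, and baseline values are assumed to be positive.

```python
def pilot_decision(
    baseline: dict[str, float],
    pilot: dict[str, float],
    stage_hours: dict[str, float],
    min_improvement: float = 0.10,
) -> str:
    """Recommend "scale", or name the slowest stage to fix first.

    baseline / pilot: must contain "lead_time_hours" and "deploys_per_week".
    stage_hours: average hours a change spends in each stage during the pilot.
    min_improvement: relative change treated as a real move (assumed 10%).
    """
    lead_gain = (
        baseline["lead_time_hours"] - pilot["lead_time_hours"]
    ) / baseline["lead_time_hours"]
    freq_gain = (
        pilot["deploys_per_week"] - baseline["deploys_per_week"]
    ) / baseline["deploys_per_week"]
    if lead_gain >= min_improvement or freq_gain >= min_improvement:
        return "scale"
    # No meaningful movement: point at the stage where changes wait longest.
    bottleneck = max(stage_hours, key=stage_hours.get)
    return f"fix {bottleneck} first"


# Illustrative numbers only: lead time barely moved and review dominates.
decision = pilot_decision(
    baseline={"lead_time_hours": 96.0, "deploys_per_week": 1.0},
    pilot={"lead_time_hours": 94.0, "deploys_per_week": 1.0},
    stage_hours={"coding": 10.0, "review": 40.0, "qa": 30.0, "release": 14.0},
)
print(decision)  # prints "fix review first"
```

The threshold deserves tuning per team: a noisy delivery pipeline may need a larger margin before a change counts as real.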

Put another way: buy the tool, but invest in the process and the people who use it.

Ready to accelerate developer productivity?

AI tools alone won't boost ROI. You need developers who know how to use them. Index.dev delivers vetted engineers experienced with GitHub Copilot, Sourcegraph Cody, and optimized workflows. Get matched in 48 hours and see real productivity gains with our 30-day free trial.