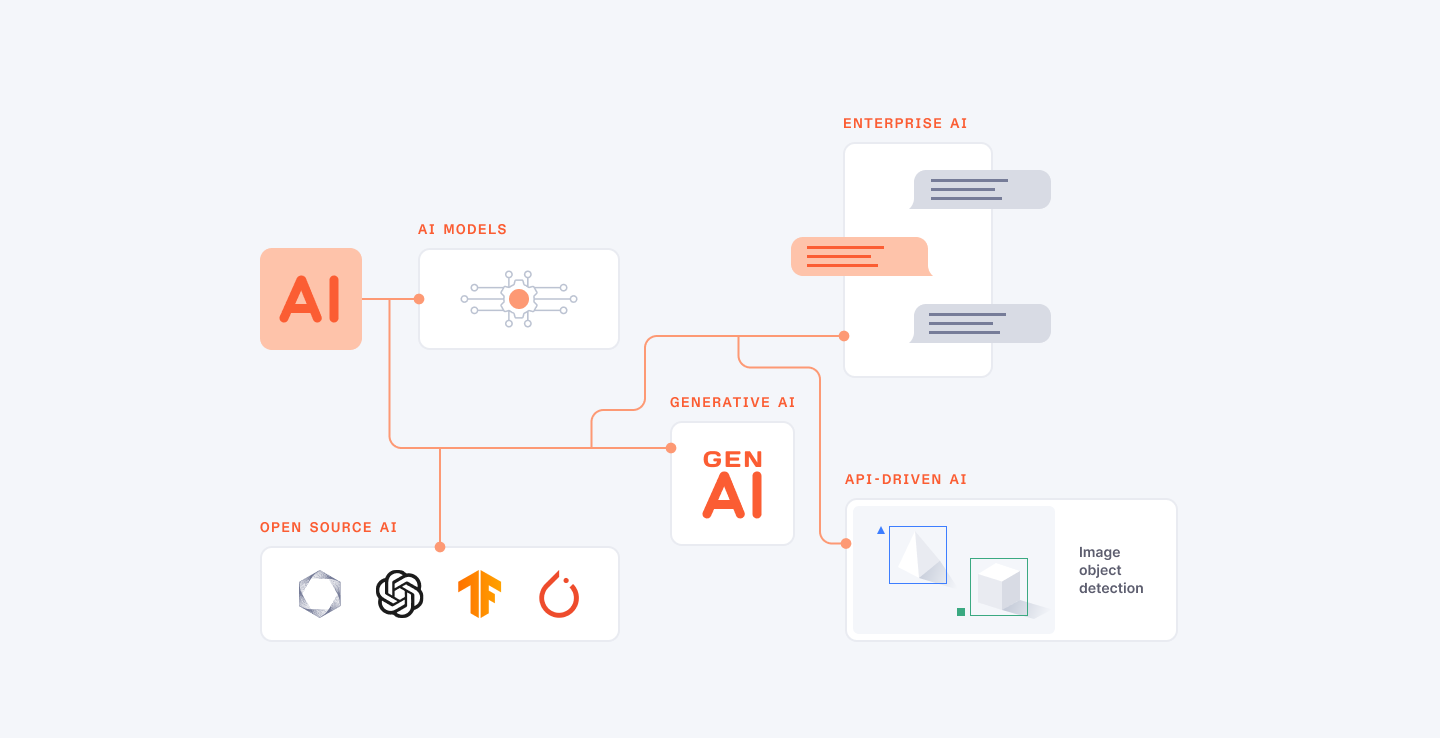

With the development of advanced technology, AI models have been introduced in numerous fields and sectors, solving challenges and opening up new possibilities. Trained on large volumes of data, these models have shown excellent performance in fields like natural language processing (NLP), computer vision (CV), predictive analytics, and decision-making. As a result, deciding which AI model to use for a particular project is one of the most important decisions an organization has to make if it is to realize the full potential of this disruptive technology.

However, selecting the best AI model for a particular project, and understanding how the different types of models differ and where their strengths lie, is a complex process.

This guide aims to give project leaders and decision makers the information they need to choose the right AI model for a project in 2024. We present the different types of AI models, outline the most popular and advanced approaches, and give you the tools to compare models so you can choose the one best suited to your task and your project's constraints. We also explore what differentiates AI models and show, through real-world examples, how they can be put to use to develop your products and create value for your organization.

Whatever your level of experience with AI, this article offers both a perspective on future trends in the field and a framework for deciding which model best fits your project in 2024 and beyond.

Hire expert AI developers from Index.dev to build the best model for your project!

Understanding Different Types of AI Models

AI models are the core components of virtually every AI application, from natural language processing to computer vision and everything in between. These models are typically trained on large amounts of data so that they can make predictions and solve a variety of problems. As the field of AI keeps advancing, it is important to become familiar with the main categories of AI models and their characteristics, advantages, and uses.

Supervised Learning Models

Supervised learning models are trained on labeled data, meaning that each input example is paired with a known output or target variable. These models learn to map inputs to outputs, which allows them to make predictions on new, unseen data. Supervised learning is especially useful for classification problems, such as image recognition and spam detection, and for regression problems, such as sales forecasting and stock price prediction.

Some popular supervised learning models include:

- Linear Regression: Models a continuous numerical outcome as a linear function of one or more predictors.

- Logistic Regression: Used for binary classification; it estimates the probability, between 0 and 1, that an input belongs to one of two categories.

- Decision Trees: Models that make predictions by applying a sequence of learned if/then rules, and that can be applied to both classification and regression problems.

- Support Vector Machines (SVMs): Supervised learning techniques that separate classes with the decision boundary (hyperplane) that has the largest margin between them; with kernel functions, they can also separate data that is not linearly separable by implicitly mapping it into a higher-dimensional space.

- Random Forests: Ensembles of many decision trees, each trained on a random subset of the data and features, whose combined predictions are usually more accurate and less prone to overfitting than a single tree.
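To make the supervised workflow concrete, here is a minimal sketch of logistic regression trained by batch gradient descent in plain Python: it learns weights from labeled examples and then predicts labels for new inputs. The learning rate, epoch count, and toy data are illustrative assumptions; in practice you would normally reach for a library such as scikit-learn rather than hand-rolled code.

```python
import math


def sigmoid(z: float) -> float:
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X, y, lr=0.1, epochs=1000):
    """Fit weights and bias by batch gradient descent on the log-loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    n = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            # Error between predicted probability and the true label (0 or 1).
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        # Average the gradients over the batch and take a step downhill.
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x, threshold=0.5):
    """Return 1 if the predicted probability reaches the threshold, else 0."""
    p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
    return int(p >= threshold)
```

For example, training on the one-dimensional inputs `[[0.5], [1.0], [1.5], [3.0], [3.5], [4.0]]` with labels `[0, 0, 0, 1, 1, 1]` places the decision boundary between the two groups, so an input of 0.0 is predicted as class 0 and 5.0 as class 1.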

Unsupervised Learning Models

Unsupervised learning models are trained on input data that has no associated target variables. They seek to discover relationships, structure, and patterns in the data without labeled examples to guide them. Unsupervised learning is especially beneficial for tasks like clustering, dimensionality reduction, and outlier (anomaly) detection.

Some popular unsupervised learning models include:

- K-Means Clustering: Divides data points into K clusters so that points with similar characteristics are grouped together; it is commonly used for customer segmentation and image compression.

- Hierarchical Clustering: Creates a tree-like structure of clusters, which makes it possible to analyze data at various levels of detail.

- Principal Component Analysis (PCA): Reduces the dimensionality of high-dimensional data by projecting it onto a smaller set of orthogonal directions, the principal components, that capture the most variance.

- Autoencoders: Neural networks trained to reconstruct their own input after compressing it into a lower-dimensional representation; common in tasks such as feature extraction and dimensionality reduction.

- Generative Adversarial Networks (GANs): Two neural networks, a generator and a discriminator, are trained against each other: the generator produces synthetic data and the discriminator tries to tell it apart from real data. GANs are used to generate synthetic data similar to the training data.
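The K-Means idea described above can be sketched as Lloyd's algorithm: assign each point to its nearest centroid, move each centroid to the mean of its assigned points, and repeat until nothing changes. This plain-Python version picks its initial centroids at random from the data, which is an assumption made for simplicity (libraries often use smarter seeding such as k-means++), and is meant for illustration only.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two points of equal dimension."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's algorithm: alternate assignment and centroid-update steps."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append([sum(dim) / len(members) for dim in zip(*members)])
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return labels, centroids
```

With two well-separated groups, for example three points near (1, 1) and three near (8, 8), `kmeans(points, 2)` assigns each group its own label.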

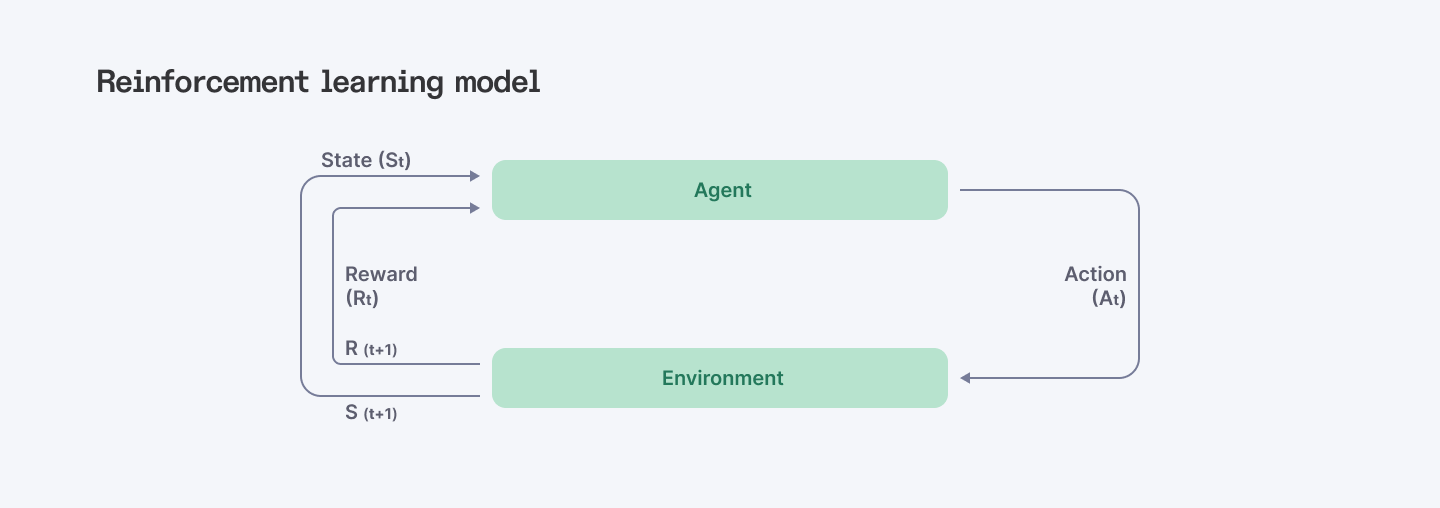

Reinforcement Learning Models

Reinforcement learning models interact with an environment and receive feedback in the form of rewards or penalties. They learn which actions to take in a given situation in order to maximize the total reward over time. Reinforcement learning is most suitable for applications that require a sequence of decisions over time, including games, robotics, and self-driving cars.

Some popular reinforcement learning algorithms include:

- Q-Learning: Learns the value of taking each action in a given state (the expected cumulative reward), commonly used in sequential decision problems.

- Policy Gradient Methods: Learn the policy, the function that maps states to actions, directly by following the gradient of the expected reward; commonly used in continuous control tasks.

- Deep Q-Networks (DQN): Combine Q-learning with deep neural networks that approximate the action-value function, which makes it possible to learn in high-dimensional state spaces such as raw images.

- Proximal Policy Optimization (PPO): A policy gradient method that limits how far each update can move the policy, making training more stable and sample-efficient; widely applied in robotics and game playing.
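As a sketch of Q-Learning, the following trains a table of action values on a hypothetical five-cell corridor in which the agent earns a reward of 1 for reaching the rightmost cell. Each update moves an estimate toward the observed reward plus the discounted value of the best next action. The environment, learning rate `alpha`, discount `gamma`, and exploration rate `epsilon` are all illustrative assumptions.

```python
import random


def q_learning_corridor(n_states=5, episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a hypothetical corridor; reaching the last cell pays reward 1."""
    rng = random.Random(seed)
    moves = (-1, +1)  # action 0 = left, action 1 = right
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    for _ in range(episodes):
        state = 0
        while state != goal:
            # Epsilon-greedy: explore at random, otherwise take a best action
            # (ties broken randomly so the untrained agent still wanders).
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                best = max(q[state])
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            # Walls clamp the position inside the corridor.
            next_state = min(max(state + moves[action], 0), goal)
            reward = 1.0 if next_state == goal else 0.0
            # Target: reward plus discounted best next value (no future value at the goal).
            target = reward if next_state == goal else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q
```

After training, the greedy choice in each non-goal cell should be to move right, since that action ends up with the higher learned value.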

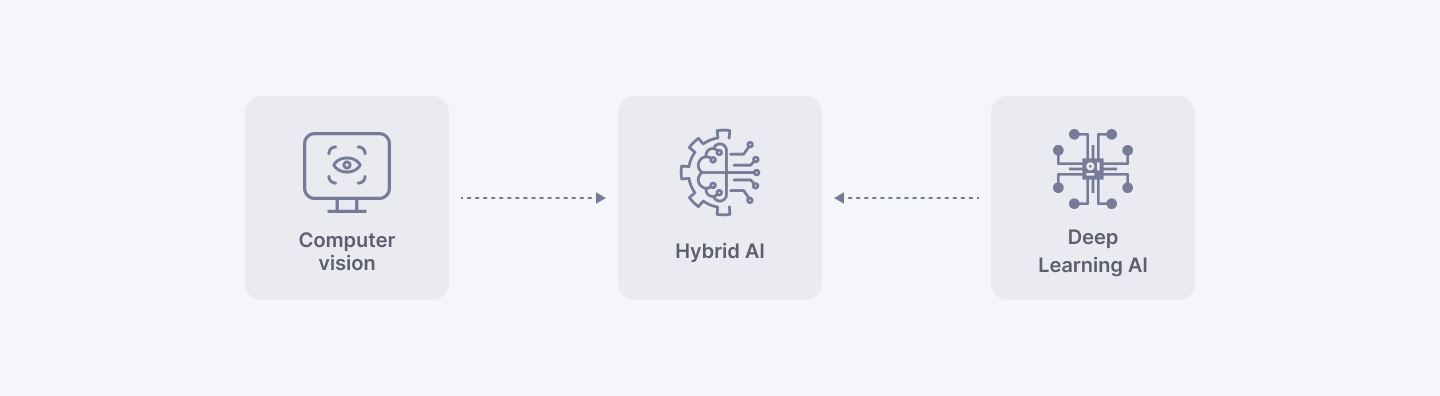

Hybrid and Specialized AI Models

In addition to the three main types of AI models, there are also hybrid and specialized models that combine different approaches or target specific domains.

- Semi-Supervised Learning: Combines supervised and unsupervised learning, using a large amount of unlabeled data to refine a model trained on a smaller set of labeled data.

- Multi-Task Learning: Trains a single model on several related tasks at once, so that the tasks share a common representation and knowledge.

- Transfer Learning: Reuses a model trained on one task as the starting point for a related task, commonly used when little training data is available.

- Federated Learning: Trains a shared model across multiple devices or organizations without transferring their raw training data; only model updates are exchanged, which helps keep the data private.

- Transformer Models: Architectures such as BERT and GPT-3, originally designed for NLP tasks, that use self-attention to capture long-range dependencies within sequences.

- Convolutional Neural Networks (CNNs): Architectures that specialize in data arranged as grids, such as images; common in computer vision.

- Recurrent Neural Networks (RNNs): Architectures for sequential data such as text or time series, used in natural language processing and speech recognition.
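The self-attention mechanism behind Transformer models can be illustrated with scaled dot-product attention, `softmax(Q K^T / sqrt(d_k)) V`. Each query is compared with every key, so any position can draw information from any other regardless of how far apart they are. This is a simplified single-head sketch on plain Python lists; real Transformers add learned projections, multiple heads, and masking.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this query against every key, near or far in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights
```

A query that closely matches one key puts nearly all of its attention weight on that key, so the output is close to that key's value vector.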

Which kind of AI model is most suitable for a particular project depends on several factors, including the nature of the problem to be solved, the data available, the desired results, and the computational capacity at hand. By understanding the strengths and weaknesses of the different AI models, project leaders can make sound decisions and apply the most appropriate approach to drive innovation in their projects.

Read more: Designing Generative AI Applications: 5 Key Principles to Follow

Factors to Consider When Selecting an AI Model

Choosing the right AI model is a critical factor in the success of your project. There are numerous algorithms to choose from, but they are not equally suited to every problem. The choice therefore depends on technical and project characteristics that should be assessed comprehensively.

Understanding Project Requirements

Before you look at specific models, clarify the objectives of your project and the results you expect. What question should the model answer? What kind of output do you expect: predictions, classifications, or something else? Consider factors like:

- Task type: Is it a classification problem (for instance, spam detection), a regression problem (for instance, sales prediction), or is it something else (for instance, anomaly detection)?

- Data characteristics: What kind of data do you have (tabular, unstructured such as images or text, or sequential)? How much data is there, and how good is it?

- Performance metrics: How will you evaluate the model (accuracy, precision, recall, etc.)? The right metrics depend on the task and the needs of the business.

- Deployment constraints: In what environment will the model run (cloud or edge devices)? Are there latency requirements or resource limitations?

- Explainability requirements: In some domains (for example, medicine), it is vital to explain why the model reached a particular decision.

Clarifying these requirements up front makes it much easier to filter out unsuitable candidates and select the most suitable model.

Key Factors Influencing AI Model Selection

Now, let's explore the key factors that influence AI model selection in detail:

1. Data Availability:

- Data Volume: Deep models such as deep neural networks typically need large datasets to perform well, whereas simpler models such as decision trees can work well when the dataset is small.

- Data Quality: The common programming principle ‘Garbage in, garbage out’ also applies to AI systems. Check and clean your data to eliminate biases and inconsistencies that could cause the model to produce wrong outputs.

- Data Labeling: Supervised learning models require labeled data. Labeling can be costly and time-consuming, and it is especially demanding for tasks such as image recognition.

2. Model Complexity:

- Trade-off between Accuracy and Explainability: In general, more complex models can achieve higher accuracy, but they are harder to interpret. For use cases where model decisions must be explained (for example, loan approvals), simpler models might be preferable.

- Hardware Requirements: Training complex models is usually computationally intensive and may require GPUs or TPUs. Take into account the resources available to you and the cloud options you could use.

3. Computational Resources:

- Training Time: Complex modern models can take days or even weeks to train on large datasets. Take training time into account when planning the project timeline.

- Inference Speed: The time the model takes to produce a prediction matters in real-time applications. For instance, a self-driving car needs models optimized for low-latency inference so it can make decisions instantly.

4. Deployment Environment:

- Cloud vs. Edge Devices: Models deployed in the cloud offer scalability and flexibility, but they may have higher latency because of network communication. Models running on edge devices avoid that round trip and can respond faster, but edge devices have less computing power than central servers.

5. Explainability and Interpretability:

- Understanding Model Decisions: Some models are easier to explain than others; for instance, decision trees are more transparent than deep neural networks. This matters whenever the model's reasoning needs to be explained and understood.

By weighing all these factors and their interactions, you will be able to choose the right AI model for your project and achieve your goals.

Read more: 6 AI Model Optimization Techniques You Should Know

Hire top AI developers from the elite 5% engineer network at Index.dev and ensure the success of your AI model.

AI Model Comparison and Evaluation

Picking the right AI model for your project also involves comparing its performance with that of other candidate models.

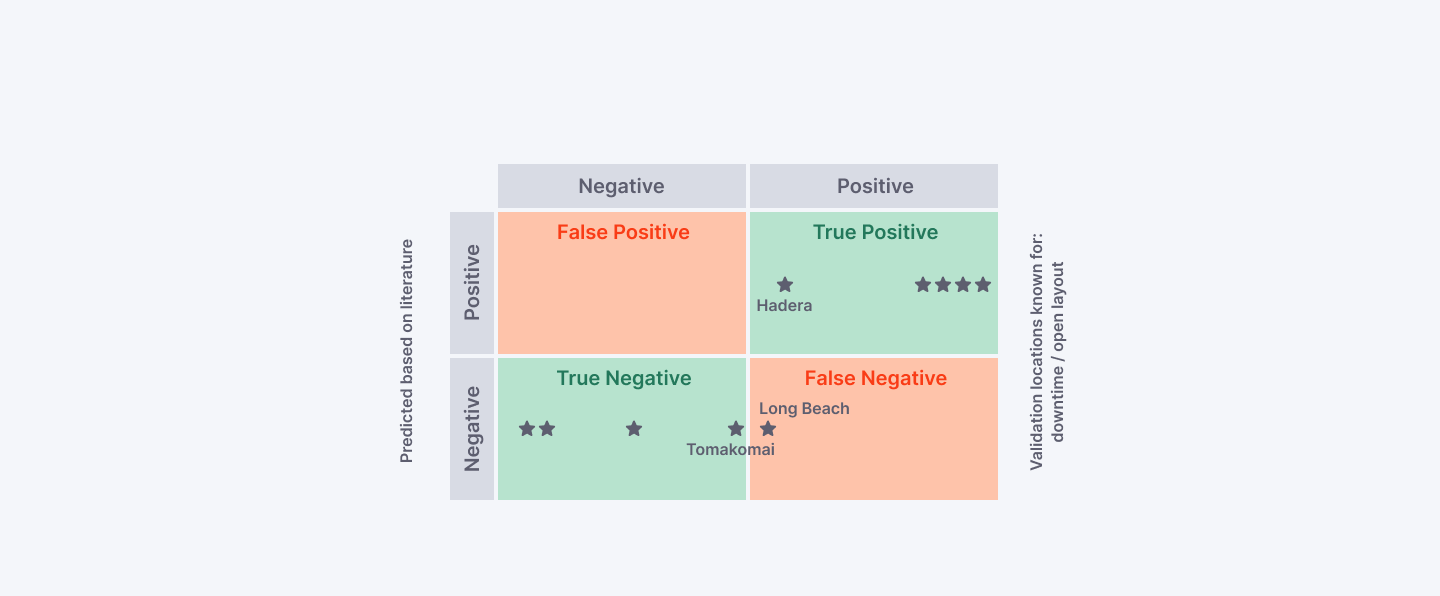

Classic Metrics: Accuracy, Precision, Recall, and Beyond

A basic measure such as accuracy (the percentage of correct predictions) can be misleading, for example on imbalanced datasets, so more insight is needed. Consider these metrics for a more nuanced evaluation:

- Precision: Measures the proportion of predicted positives that are actually positive; high precision means few false positives. This is especially important when misclassifications are costly, such as in the diagnosis of diseases.

- Recall: Measures the proportion of actual positive cases that the model correctly identifies, so high recall means few false negatives. This is particularly useful when false negatives have major consequences for an organization or business, as in fraud detection.

- F1 Score: Combines precision and recall into a single metric (their harmonic mean), which is especially useful when working with imbalanced datasets.

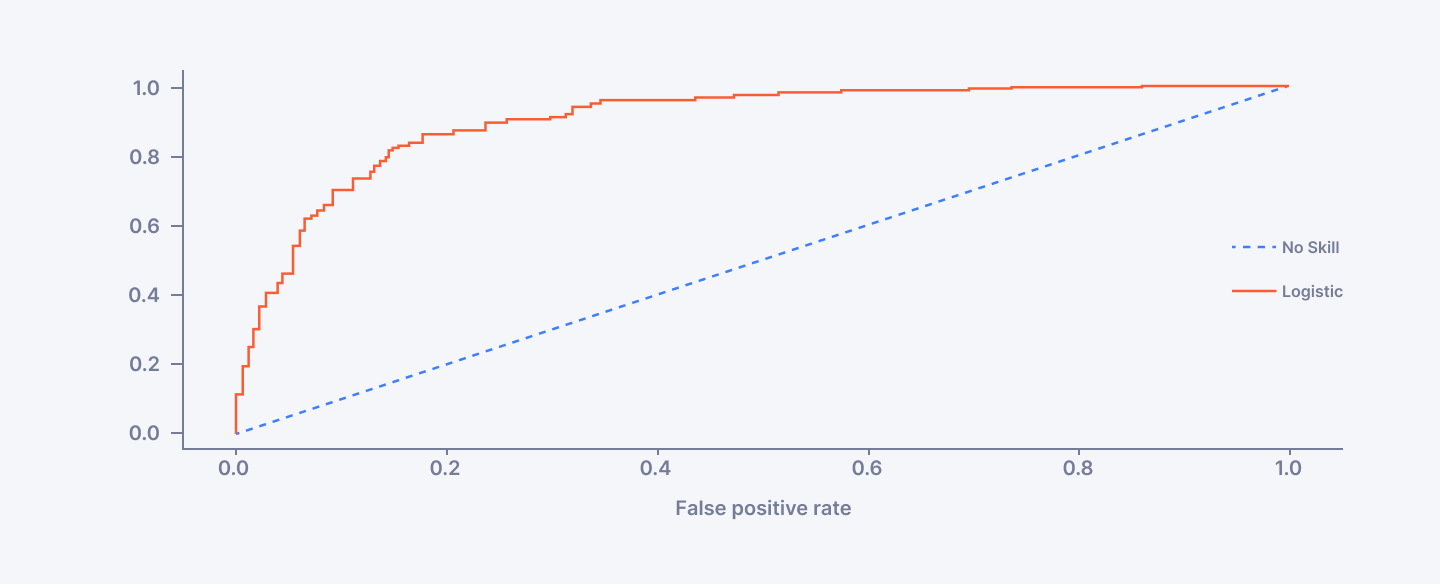

- ROC AUC (Area Under the Receiver Operating Characteristic Curve): Summarizes model performance across all classification thresholds. A higher AUC indicates a better model; 1.0 means perfect separation of the classes, while 0.5 is no better than random guessing.
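The metrics above can be computed directly from labels and predictions. This minimal sketch assumes binary labels coded as 0 and 1. The AUC function uses the pairwise definition (the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counting half), which is simple but quadratic in the number of examples; libraries such as scikit-learn provide optimized equivalents like `sklearn.metrics.roc_auc_score`.

```python
def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and F1 for binary labels coded as 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher; ties count 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

For instance, with true labels `[1, 1, 1, 0, 0, 0, 0, 0]` and predictions `[1, 1, 0, 1, 0, 0, 0, 0]` there are two true positives, one false positive, and one false negative, so precision, recall, and F1 all equal 2/3.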

These measures are useful, but consider adding domain-specific measures where possible. For example, recommender systems are often evaluated with Normalized Discounted Cumulative Gain (NDCG), which assesses how well a model ranks relevant items near the top of its recommendations.
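NDCG can be sketched in a few lines. DCG sums each item's relevance divided by log2(rank + 1), so relevant items placed lower in the list count for less; NDCG divides by the DCG of the ideal ordering so that scores fall between 0 and 1. This version assumes linear gains and derives the ideal ordering from the same list of relevance scores; some definitions use 2^rel - 1 as the gain instead.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain: relevance at rank r is divided by log2(r + 1)."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(relevances, k):
    """DCG normalized by the DCG of the best possible ordering of the same items."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0
```

A list that is already sorted from most to least relevant scores 1.0, while putting the only relevant item second out of two scores about 0.63.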

Advanced Techniques for Robust Evaluation

Beyond classic metrics, explore these advanced techniques for a robust evaluation:

- Hold-Out Validation: Splits the data into training, validation, and test sets. The model is trained on the training set, tuned and checked for overfitting on the validation set, and given its final assessment on the test set.

- K-Fold Cross-Validation: A more robust approach that splits the data into k folds, trains on k-1 folds and evaluates on the remaining one, and repeats the process so that each fold serves once as the evaluation set. Averaging the k scores gives a more reliable estimate of performance than a single split.

- Stratified K-Fold Cross-Validation: Preserves the class distribution of the original dataset in each fold, which is important when classes are imbalanced.

- Hyperparameter Tuning: The performance of most models depends on hyperparameters, settings such as the learning rate or tree depth that are fixed before training rather than learned from the data. Techniques such as Grid Search or Randomized Search can be used to find the best hyperparameter configuration for a model.

- Statistical Significance Testing: Significance tests check whether observed differences in performance between models are statistically significant or could be due to chance. Methods such as paired t-tests or the Wilcoxon signed-rank test can be used for this.
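Stratified k-fold splitting can be sketched by grouping example indices by class and dealing each group round-robin across the k folds, so every test fold keeps roughly the original class ratio. This simplified version does not shuffle, which is an assumption made for readability; real implementations such as scikit-learn's `StratifiedKFold` can shuffle. Each `(train_idx, test_idx)` pair would be used to fit and score one model, with the scores averaged across folds; repeating that loop for every candidate hyperparameter setting is the essence of grid search.

```python
from collections import defaultdict


def stratified_k_fold(labels, k):
    """Yield (train_idx, test_idx) pairs whose test folds keep roughly the class ratio."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        # Deal each class's indices round-robin so every fold gets its share.
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    for i in range(k):
        test_idx = sorted(folds[i])
        # Everything outside the current test fold is used for training.
        train_idx = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train_idx, test_idx
```

With eight examples of class 0 and four of class 1 split into four folds, each test fold contains two examples of class 0 and one of class 1, matching the overall 2:1 ratio.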

Emerging Trends: Fairness, Explainability, and Drift Monitoring

Beyond core performance metrics, consider these emerging trends in AI model evaluation:

- Fairness, Accountability, Transparency, and Ethics (FATE): Critically examine models to determine whether they carry a high risk of bias that could lead to discrimination against groups within the population. Methods such as fairness metrics and counterfactual explanations can help detect such bias.

- Explainability: When the rationale behind a model's decisions must be understood, methods such as LIME or SHAP can help during model selection by showing how much each feature contributes to individual predictions.

- Model Drift Monitoring: Real-world data distributions often change over time, which degrades model performance. Statistical tests such as the Kolmogorov-Smirnov (KS) test, along with data visualization, can be used to detect drift and signal when the model needs retraining.
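Drift detection with the Kolmogorov-Smirnov test compares the distribution of a feature in the training (reference) data with its distribution in recent production data. The two-sample KS statistic is the largest vertical gap between the two empirical cumulative distribution functions. This sketch computes only the statistic; the alert threshold of 0.2 in the hypothetical `has_drifted` helper is an illustrative value, and in practice `scipy.stats.ks_2samp` also returns a p-value for a proper significance test.

```python
from bisect import bisect_right


def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    d = 0.0
    # Empirical CDFs only jump at observed values, so checking those points is enough.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)  # fraction of reference values <= x
        cdf_cur = bisect_right(cur, x) / len(cur)  # fraction of current values <= x
        d = max(d, abs(cdf_ref - cdf_cur))
    return d


def has_drifted(reference, current, threshold=0.2):
    """Hypothetical helper: flag drift when the KS statistic exceeds an alert threshold."""
    return ks_statistic(reference, current) > threshold
```

Identical samples give a statistic of 0, and samples with no overlap at all give the maximum value of 1.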

Focus on performance, not recruiting. Hire senior AI developers hand-picked by us!

Best AI Models for Specific Use Cases

There are many different AI models, and the technology is advancing rapidly, which opens up many possibilities. However, to meet your goals, you need to select the model best suited to your specific application.

Natural Language Processing (NLP):

NLP tasks are numerous, and the choice of model depends on the purpose of the task. Here are some prominent examples:

Task: Text Classification (e.g., sentiment analysis, spam detection)

- Model: A CNN combined with pre-trained word embeddings such as Word2Vec or GloVe is a good choice for text classification. The embeddings capture semantic relationships between words, and the convolutional layers pick up local patterns of word combinations, which improves performance.

- Success Story: Social media platforms like Twitter rely on CNNs to filter abusive posts and analyze public perception of trending issues in real time.

Task: Machine Translation (e.g., translating documents or conversations)

- Model: Transformer models, such as the original Transformer architecture developed at Google or OpenAI's GPT-3, have become the standard in machine translation. Their self-attention mechanism captures long-range dependencies across a sentence, which results in more accurate translations.

- Case Study: Microsoft Translator uses Recurrent Neural Network and Transformer models to provide real-time, accurate translation in over 100 languages, helping people around the world communicate effectively.

Task: Text Summarization (e.g., generating concise summaries of lengthy documents)

- Model: Sequence-to-sequence models with attention mechanisms, like those based on the encoder-decoder architecture, are well-suited for text summarization. The attention mechanism allows the model to focus on relevant parts of the input text while generating the summary.

- Example: News aggregator applications employ summarization models to provide users with concise overviews of news articles, enabling them to stay informed efficiently.

Computer Vision: Seeing the World Through Machines

Computer vision models excel at extracting insights from visual data. Here are some impactful applications:

Task: Image Classification (e.g., recognizing objects or scenes in images)

- Model: Convolutional Neural Networks (CNNs) are the go-to approach for image classification. Their stacked convolutional layers learn hierarchical features, from simple edges in early layers to complex shapes in deeper ones, which enables them to identify objects and their characteristics.

- Success Story: Companies developing self-driving cars, such as Tesla, use CNNs to analyze camera images, identify objects, and understand traffic scenes for autonomous driving.
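What a convolutional layer computes can be sketched with a single 2D convolution (technically cross-correlation, as in most deep learning libraries) followed by a ReLU activation. Here the 2x2 kernel is hand-picked to respond to vertical edges, which is an illustrative assumption; in a real CNN the kernel weights are learned during training, and many such layers are stacked to build up hierarchical features.

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation: slide the kernel over the image and sum elementwise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj] for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Zero out negative activations, as a CNN typically does after each convolution."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hand-picked vertical-edge kernel (a trained CNN would learn its own weights).
edge_kernel = [[-1, 1], [-1, 1]]
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
feature_map = relu(conv2d(image, edge_kernel))
```

Applied to this 3x4 image, whose left half is 0 and right half is 1, the kernel responds only at the column where the intensity jumps.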

Task: Object Detection (e.g., identifying and locating multiple objects in an image)

- Model: CNN-based architectures such as YOLO and Faster R-CNN are widely used for object detection. These models not only classify each object but also locate it in the image with a bounding box.

- Case Study: Retail stores use object detection models to automate tasks such as inventory counting. Cameras positioned to observe the shelves track the items on them and send a notification when stock runs low.
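Detectors such as YOLO and Faster R-CNN typically propose many overlapping boxes for the same object, and a common post-processing step, non-maximum suppression (NMS), keeps only the best one. This sketch shows intersection over union (IoU), the overlap measure NMS relies on, and a greedy NMS loop. Boxes are assumed to be `(x1, y1, x2, y2)` corner coordinates, and the 0.5 IoU threshold is an illustrative default.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)  # zero if the boxes do not overlap
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if iou(boxes[best], boxes[i]) < iou_threshold]
    return keep
```

Given two heavily overlapping boxes around one object and a third box elsewhere, NMS keeps the higher-scoring box of the overlapping pair plus the separate box.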

Task: Facial Recognition (e.g., identifying individuals from images or videos)

- Model: Deep Convolutional Neural Networks (DCNNs) with large training datasets are employed for facial recognition. These models can learn complex facial features and variations, enabling accurate identification.

- Example: Law enforcement agencies utilize facial recognition for suspect identification, aiding in criminal investigations. However, privacy concerns surrounding this technology necessitate careful consideration and ethical implementation.

Predictive Maintenance: Proactive Problem Prevention

Predictive maintenance leverages AI to anticipate equipment failures before they occur, saving costs and downtime. Here's a model driving success:

Task: Anomaly Detection (e.g., identifying deviations from normal sensor readings that might indicate equipment failure)

- Model: Autoencoders are a type of neural network that learn a compressed representation of "normal" data. Deviations from this learned representation can signal anomalies, potentially leading to equipment failure.

- Case Study: Manufacturing plants are deploying anomaly detection models to monitor sensor data from machinery. Early detection of anomalies allows for preventive maintenance, avoiding costly downtime and production delays.
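The autoencoder approach flags sensor readings that the model reconstructs poorly. This sketch covers only the scoring step: it assumes a trained autoencoder has already produced reconstructions, computes a mean squared reconstruction error, and sets a threshold at the mean plus three standard deviations of the errors observed on normal data. The three-sigma rule is a common heuristic rather than a requirement; thresholds are often tuned on validation data instead.

```python
import statistics


def reconstruction_error(x, x_hat):
    """Mean squared error between an input vector and its (assumed) autoencoder reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def fit_threshold(normal_errors, k=3.0):
    """Threshold = mean + k * std of errors on normal data (k=3 is a heuristic assumption)."""
    return statistics.mean(normal_errors) + k * statistics.pstdev(normal_errors)


def flag_anomalies(errors, threshold):
    """Mark each reading whose reconstruction error exceeds the threshold."""
    return [e > threshold for e in errors]
```

Readings whose reconstruction error sits far above what the model produced on normal data are flagged for inspection.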

These are just a few examples, and the field of AI models is constantly evolving. Remember, the best approach involves understanding your specific use case, data characteristics, and project requirements to select the most effective model for your needs.

Read more: Comparing Top LLM Models: BERT, MPT, Hugging Face & More

Best Open Source AI Models

The open-source AI landscape is brimming with powerful tools that democratize access to cutting-edge machine learning techniques. Most of the entries below are frameworks and libraries rather than individual models: they include pre-built models but can also be used to build new models from scratch.

Foundational Frameworks

TensorFlow (TensorFlow.org):

An open-source, cross-platform machine learning framework created by Google. TensorFlow provides tools for building, training, and deploying machine learning models. It supports several programming languages, including Python, Java, and C++, and has a large user base and rich documentation, which makes it suitable for both newcomers and professionals.

- Strengths: Flexibility, scalability, large community support

- Use Cases: Image classification, natural language processing, recommender systems

- Example: TensorFlow is a cornerstone for projects like DeepMind's AlphaGo, a program that defeated the world champion in the complex game of Go.

PyTorch (PyTorch.org):

Another popular open-source framework, known for its dynamic computational graphs and simplicity. Thanks to its Python-first design, PyTorch is well suited to prototyping and debugging, which makes it popular among researchers. It integrates smoothly with scientific computing libraries such as NumPy and runs efficiently in most cloud environments.

- Strengths: Dynamic computation graphs, user-friendly Python interface

- Use Cases: Natural language processing, computer vision, reinforcement learning

- Example: Facebook AI Research utilizes PyTorch extensively for various projects, including image captioning and machine translation models.

Keras (Keras.io):

A high-level application programming interface (API) that simplifies building and testing deep learning models. Keras lets users construct models on top of frameworks like TensorFlow or PyTorch, which makes it easy to assemble a model and quickly experiment with new architectures.

- Strengths: Ease of use, rapid prototyping, works seamlessly with TensorFlow and PyTorch

- Use Cases: Early exploration of deep learning architectures, prototyping models for various tasks

- Example: Many researchers and data scientists leverage Keras as a quick and easy way to test different model configurations before diving deeper into framework-specific implementations.

Specialized Open-Source Models

Beyond foundational frameworks, a vibrant ecosystem of open-source models exists for various tasks:

OpenNLP (OpenNLP.apache.org):

A toolkit for natural language processing in Java. OpenNLP provides tokenization, sentence detection, named entity recognition (NER), and text categorization tasks such as sentiment analysis. Its Java API makes it well suited to text processing in Java applications.

- Strengths: Java-based, comprehensive NLP functionalities

- Use Cases: Text classification, information extraction, sentiment analysis

- Example: OpenNLP is used in various applications, including spam filtering and customer service chatbots.

Scikit-learn (Scikit-learn.org):

One of the most versatile and comprehensive Python libraries for machine learning. Scikit-learn includes a large number of classical ML methods for classification, regression, clustering, and dimensionality reduction. Its consistent, user-friendly API and efficient implementations make it useful for a wide range of machine learning tasks.

- Strengths: Extensive library of classical machine learning algorithms, user-friendly Python interface

- Use Cases: Building baseline models, exploring various machine learning algorithms for a task

- Example: Scikit-learn is a popular choice for tasks like fraud detection and stock price prediction, where classical machine learning algorithms can be effective.

OpenAI Gym (Gym.OpenAI.com):

A toolkit for developing and comparing reinforcement learning algorithms. Gym provides a standard interface to a wide range of environments in which an agent learns from experience, which makes it easy for researchers and developers to compare different reinforcement learning techniques.

- Strengths: Standardized interface for reinforcement learning environments

- Use Cases: Developing and evaluating reinforcement learning algorithms for robotics control, game playing, and other applications

- Example: OpenAI Gym is a cornerstone for research in reinforcement learning, enabling researchers to develop and test new algorithms in various simulated environments.
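The agent-environment loop that Gym standardizes can be sketched without installing anything. The hypothetical `CorridorEnv` below mimics the interface of Gymnasium, the Farama Foundation's maintained successor to OpenAI Gym: `reset()` returns an observation and an info dictionary, and `step(action)` returns the observation, the reward, `terminated` and `truncated` flags, and info. Older Gym releases returned a single `done` flag instead, so check which version you are using.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment mimicking the Gymnasium reset/step interface."""

    def __init__(self, length=5, max_steps=50):
        self.length = length        # number of cells; the goal is the last one
        self.max_steps = max_steps  # the episode is truncated after this many steps
        self.position = 0
        self.steps = 0

    def reset(self, seed=None):
        # seed is accepted for interface compatibility; this environment is deterministic.
        self.position = 0
        self.steps = 0
        return self.position, {}

    def step(self, action):
        # Action 1 moves right, anything else moves left; walls clamp the position.
        move = 1 if action == 1 else -1
        self.position = min(max(self.position + move, 0), self.length - 1)
        self.steps += 1
        terminated = self.position == self.length - 1  # reached the goal
        truncated = self.steps >= self.max_steps         # ran out of time
        reward = 1.0 if terminated else 0.0
        return self.position, reward, terminated, truncated, {}


def run_random_episode(env, seed=0):
    """Run one episode with a random agent and return the total reward."""
    rng = random.Random(seed)
    obs, info = env.reset(seed=seed)
    total_reward = 0.0
    while True:
        action = rng.randrange(2)
        obs, reward, terminated, truncated, info = env.step(action)
        total_reward += reward
        if terminated or truncated:
            return total_reward
```

Because every environment exposes the same `reset`/`step` contract, a learning agent written against this loop can be swapped between environments without changes, which is what makes side-by-side comparisons of algorithms practical.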

Read more: Examining the Leading LLM Models: Top Programs and OWASP Risks

In a Nutshell

Select the AI model that addresses your project's needs, handles your type and volume of data, fits your computational resources, and provides the level of insight into its decision-making that you require. When choosing a model, it is advisable to run benchmarks and try out several candidates to identify the best fit for your project.

Index.dev's AI engineers possess the necessary technical skills, expertise in distributed computing and data engineering, as well as customization capabilities to ensure smooth integration of AI models with a company's existing infrastructure and systems.

For Clients:

Focus on innovation! Join Index.dev's global network of pre-vetted developers to find the right match for your next project. Sign up for a free trial today!

The AI engineers at Index.dev have in-depth knowledge of the optimization methods that enhance the performance of sophisticated AI models. They are highly skilled in hyperparameter optimization techniques, including Bayesian optimization, grid search, and random search, for identifying the hyperparameters that deliver the best performance at the lowest resource cost.

By partnering with Index.dev, you can get the most out of your AI models and overcome challenges such as complexity and resource limitations. We have established ourselves as a company that delivers optimized AI solutions for industries across the board, combined with a fast and efficient way of hiring the best candidates to optimize your business AI.

For AI Developers:

Are you a skilled AI engineer seeking a long-term remote opportunity? Join Index.dev to unlock high-paying remote careers with leading companies in the US, UK, and EU. Sign up today and take your career to the next level!