Artificial Intelligence (AI) is no longer just an area of scientific interest; it has become an integral part of the technology world. Behind this shift are AI models: sets of mathematical functions, fitted to large volumes of data, that are designed to achieve a particular goal. Companies now apply AI models in many fields, from improving factory assembly lines to guiding drug discovery.

However, the potential of AI models is limited by inefficient training and deployment. Even the most advanced AI model needs to be trained and deployed properly, just as a stunning race car needs a good driver and a capable pit crew. This article gives leaders basic background knowledge of AI and explores strategies for developing and applying AI models in 2024.

Hire expert AI developers from Index.dev to build the best model for your project!

The Transformative Power of AI Models

AI models are software programs that learn from data sets. Using machine learning algorithms, they find patterns in the data they ingest, whether text, images, sensor readings, or a mix of these and other inputs. This learned knowledge lets them handle a wide range of tasks, sometimes beyond human ability in speed, accuracy, and even imagination.

Here are just a few examples:

- Computer Vision: AI models can analyze and interpret visual input with a high degree of precision. They can be trained to distinguish normal from abnormal patterns in medical scans, detect objects for self-driving cars, or recognize faces in security camera footage.

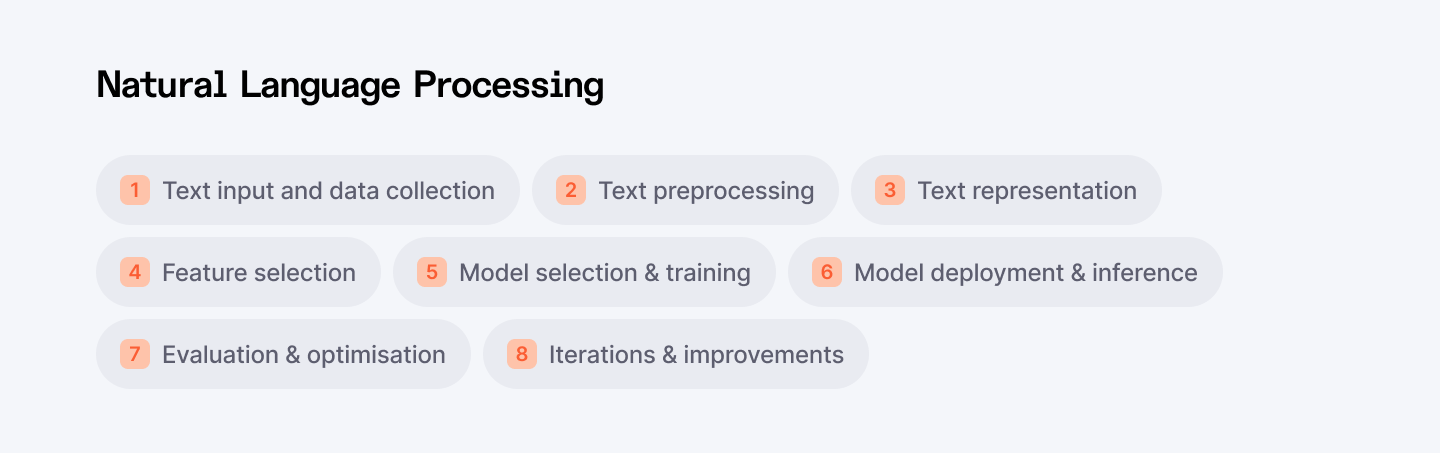

- Natural Language Processing (NLP): AI models can interpret, translate, and generate human language. They power applications such as chatbots that answer customers’ questions, language translators, and tools that write engaging promotional copy.

- Predictive Analytics: AI models can analyze historical data to forecast future events. This is applied in finance to evaluate risk, in healthcare to predict the likelihood of epidemics, and in retail to forecast stock levels.

These are only some of the possibilities of AI models; many more are yet to be discovered. Advances in computing and related fields suggest that even more radical uses will emerge across industries.

The Efficiency Imperative: Training and Deployment

While the power of AI models is undeniable, their effectiveness hinges on two crucial stages: training and deployment.

- Training: This involves providing the model with large amounts of data related to the task at hand. The quality and quantity of that data determine how effective the model can be. The choice of training algorithm, and of the right hyperparameters for it, is also very important. If the training process is not optimized, a model may take a long time to learn, learn the wrong thing, or fail to learn what is expected of it at all.

- Deployment: After training, the model has to be put to practical use. This involves preparing the model for serving, handling real-time requests, and monitoring the model’s performance over time. A poorly deployed model can lead to latency issues, scalability bottlenecks, and users who are unwilling to trust it.

Efficient training and deployment are crucial for getting the most out of AI models. By reducing training time and resource use, leaders can speed up the innovation cycle and bring AI-based solutions to market sooner. Effective deployment, in turn, ensures that the model works as intended in real-world conditions, which is where its value is realized.

In the next few sections, we cover the training process and subsequent deployment, including best practices and newer approaches for optimizing performance in 2024.

Every AI model rests on one fundamental process: training. The interplay between data and algorithm unlocks the model’s capacity to perform specific tasks. But how does feeding a model data turn into machine intelligence? This section describes the main AI training techniques, along with their advantages and uses.

Feeding the Machine: The Core Concept

Machine learning models, much like a child learning to recognize animals, acquire knowledge through exposure to data. Just as a child can learn to differentiate between a cat, a dog, and a bird by being shown labeled pictures, AI models learn to perform specific tasks by consuming relevant data. That data generally comes in two forms:

- Structured: Categorized and labeled data points, often stored in tables (e.g., customer information with labels for credit risk)

- Unstructured: Text documents, images, sensor readings, etc., requiring preprocessing to extract relevant features

Using mathematical operations, the model feeds this data through its equations and adjusts its internal parameters to capture patterns and relationships. This process is called optimization, and it is driven by a loss function: a measure of how far the model's outputs are from the expected values. The objective is to reduce the loss as much as possible, yielding a model that performs the target task well.
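To make the idea of optimization concrete, here is a minimal sketch of gradient descent reducing a loss function. The one-parameter model `y = w * x`, the toy data, the learning rate, and the function names are all assumptions chosen for illustration, not a prescribed setup.

```python
# Toy data generated from y = 3x; the goal is to recover w = 3.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 6.0, 9.0, 12.0]

def mse_loss(w, xs, ys):
    """Mean squared error between predictions w*x and targets y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def mse_gradient(w, xs, ys):
    """Derivative of the MSE loss with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def fit(xs, ys, learning_rate=0.01, steps=500):
    """Start from w = 0 and repeatedly step against the gradient."""
    w = 0.0
    for _ in range(steps):
        w -= learning_rate * mse_gradient(w, xs, ys)
    return w

w = fit(xs, ys)
print(w, mse_loss(w, xs, ys))
```

Each step nudges the parameter in the direction that lowers the loss, which is the same principle larger models follow with many more parameters.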


Unveiling the Spectrum of Training Techniques

AI training is not a one-size-fits-all process. The technique to use depends on the form and kind of data available and on the intended goal. Here's a breakdown of the most common methods:

1. Supervised Learning: Learning with a Teacher

Supervised learning resembles a teacher-and-student scenario. It requires labeled data, where every input comes paired with the output expected of the model. The model learns the mapping between the input data and the output label.

Example Algorithms:

- Support Vector Machines (SVMs): These algorithms find a hyperplane that separates two classes of data with the widest possible margin. They are useful for classification tasks such as filtering spam or identifying objects within an image.

- Decision Trees: These algorithms build a tree structure in which each internal node tests one attribute of the data and each leaf holds a prediction. They are interpretable, which makes them especially valuable when the goal is to understand how the model reached a particular decision.
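As a rough illustration of learning from labeled data, the sketch below fits the simplest possible decision tree, a single split on one attribute (often called a decision stump). The spam data, the feature, and the function names are hypothetical; real projects would typically rely on a library implementation.

```python
def fit_stump(values, labels):
    """Return (threshold, errors) for the rule 'predict 1 if value > threshold'."""
    best_threshold, best_errors = None, len(labels) + 1
    ordered = sorted(set(values))
    # Candidate thresholds: midpoints between consecutive distinct values.
    candidates = [(a + b) / 2 for a, b in zip(ordered, ordered[1:])]
    for threshold in candidates:
        errors = sum((v > threshold) != bool(y) for v, y in zip(values, labels))
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold, best_errors

def predict(threshold, value):
    """Apply the learned rule to a new input."""
    return int(value > threshold)

# Hypothetical labeled data: number of links per message, label 1 = spam.
links = [0, 1, 1, 2, 6, 7, 9, 12]
is_spam = [0, 0, 0, 0, 1, 1, 1, 1]
threshold, errors = fit_stump(links, is_spam)
print(threshold, errors, predict(threshold, 10))
```

The learned rule is easy to read off ("more than this many links means spam"), which is the interpretability benefit described above.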

Applications:

Supervised learning is commonly applied in such processes as image classification, sentiment analysis, as well as the detection of fraudulent activities in financial transactions.

2. Unsupervised Learning: Finding Structure in the Unknown

As the name suggests, unsupervised learning differs from supervised learning in that it works on unlabeled data. The goal is to find previously unknown patterns in the data without knowing the classes in advance.

Example Algorithms:

- K-Means Clustering: This algorithm partitions a data set into k clusters based on the similarity between data points. It can be applied to tasks such as customer segmentation or identifying outliers in sensor data.

- Principal Component Analysis (PCA): This technique performs dimensionality reduction by finding the directions (principal components) along which the data varies the most. It is used to compress high-dimensional data, such as images or text features, into a smaller representation.
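The clustering idea can be sketched in a few lines. This is a simplified k-means on one-dimensional data with hand-picked starting centroids; the customer spend figures and the function name are assumptions for illustration only.

```python
def k_means_1d(points, centroids, iterations=10):
    """Simplified k-means on one-dimensional data with given starting centroids."""
    centroids = list(centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical monthly spend per customer, with two visible groups.
spend = [10, 12, 11, 13, 95, 100, 105, 98]
centroids, clusters = k_means_1d(spend, centroids=[0.0, 50.0])
print(centroids, clusters)
```

No labels are supplied anywhere; the two customer segments emerge from the distances between the data points alone.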

Applications:

Uses of unsupervised learning include market segmentation (grouping customers with similar characteristics), anomaly detection (identifying unusual patterns in network traffic), and recommendation systems (suggesting products based on user behavior).

3. Reinforcement Learning: Learning Through Trial and Error

Reinforcement learning mimics the way humans and animals learn through interaction with their environment. An agent acts in an environment, often a simulated one, and receives rewards for taking desirable actions. Over time, the agent learns to choose, in each state, the actions that yield the greatest cumulative reward.
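One common way to implement this loop is tabular Q-learning, which the text above does not name and which is used here only as an illustrative choice. The five-cell corridor environment, the reward of 1.0 at the right end, and the constants below are all assumptions for the sketch.

```python
import random

N_STATES = 5          # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)    # step left or step right
GAMMA = 0.9           # discount factor
ALPHA = 0.5           # learning rate

def step(state, action):
    """Move within the corridor; reward 1.0 only when the goal cell is reached."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward

def train(episodes=500, seed=0):
    """Learn action values from randomly explored episodes."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            a = rng.randrange(len(ACTIONS))          # explore uniformly at random
            next_state, reward = step(state, ACTIONS[a])
            target = reward + GAMMA * max(q[next_state])
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q

q_table = train()
# Greedy policy: the best-valued action in each non-terminal cell.
policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
print(policy)
```

The agent is never told that moving right is correct; it infers this from the rewards it collects, which is the trial-and-error learning described above.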

Applications:

Reinforcement learning is widely applied in robotics, where the agent has to learn the best control policies. It is also used in game-playing AI, in which the agent is trained to make moves that lead to a win.

A Note on Emerging Techniques:

AI training is a field that continues to expand. Promising techniques include Few-Shot Learning, which helps models learn from small data samples, and Meta-Learning, which trains models to learn new tasks better and faster.


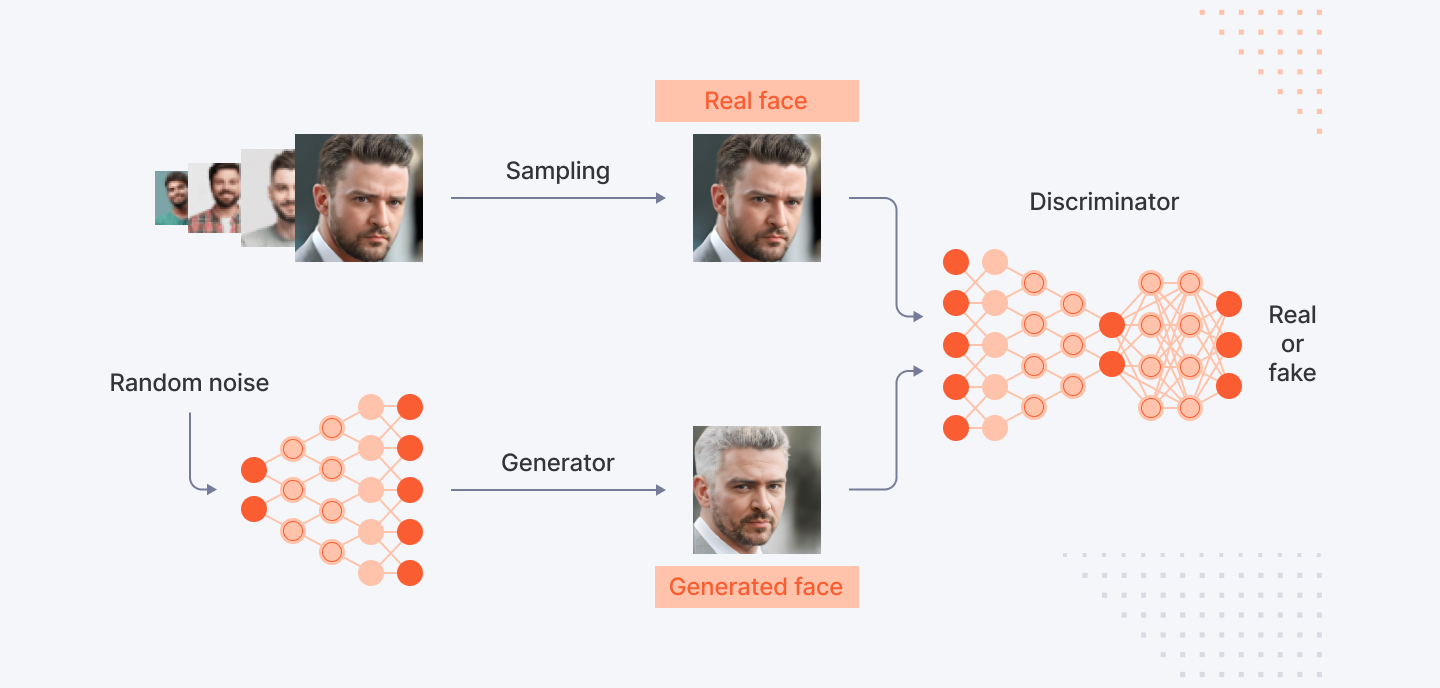

Generative AI Model Training: The Art of Creation with GANs

One special branch of AI training is generative modeling, which produces entirely new data such as images, text, or music. Generative Adversarial Networks (GANs) are a key method in this field.

AI models are a powerful tool, but realizing their potential does not happen by itself; it requires a clear and well-defined development process. This part explains how to train your own AI model and prepares you for this fascinating yet complex process.

1. Defining the Problem: Charting the Course


2. Data Acquisition and Preprocessing: The Fuel for Learning

Once the problem is defined, data acquisition is the next critical step. This means gathering the information that will be used to train the model. Here are some key considerations:

- Data Quality: Make sure the collected data is current, comprehensive, and relevant to the task. Cleaning steps such as removing errors and eliminating outliers may be needed.

- Data Quantity: The amount of data influences model performance. As a general rule, more data leads to better results, but the amount actually needed depends on the specifics of the project and the chosen model architecture.

- Data Preprocessing: Real-world data is rarely clean and often does not match the format the model expects. In most cases it needs preparatory work such as normalization, feature scaling, and encoding of categorical variables.
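Two of the preprocessing steps mentioned above, feature scaling and encoding of categorical variables, can be sketched as follows. The column names and values are hypothetical, and the helper functions are illustrative rather than a specific library's API.

```python
def min_max_scale(values):
    """Rescale numbers linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]   # constant column: nothing to scale
    return [(v - lo) / (hi - lo) for v in values]

def one_hot(categories):
    """Encode each category as a 0/1 vector, one position per distinct value."""
    vocabulary = sorted(set(categories))
    return [[int(c == v) for v in vocabulary] for c in categories]

# Hypothetical customer columns.
ages = [20, 30, 40, 60]
plans = ["basic", "pro", "basic", "team"]

scaled_ages = min_max_scale(ages)
encoded_plans = one_hot(plans)
print(scaled_ages)
print(encoded_plans)
```

Scaling keeps features with large numeric ranges from dominating training, and one-hot encoding turns text categories into numbers without implying an order between them.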

Data is the core of any AI model. High-quality, well-prepared data is the basis for reliable long-term performance.

3. Model Selection: Choosing the Right Tool for the Job

Once the problem is defined and the data is prepared, the next step is to select an appropriate AI model architecture. Different types of models perform differently depending on the task. Here's a breakdown of some popular choices.

- Convolutional Neural Networks (CNNs): These models are very effective at image recognition and other computer vision tasks. Their layered structure is loosely inspired by the visual cortex and helps them identify features in images.

- Recurrent Neural Networks (RNNs): Designed for sequential data such as text or time series, RNNs can capture temporal relationships in the data. They are widely applied to tasks such as machine translation, sentiment analysis, and time series forecasting.

The choice of model architecture depends on the type of input data and the intended outcome. Consulting data scientists and reading relevant research papers can help inform this significant decision.

4. Model Training: The Heart of the Process

Once the model type has been chosen, it is time for the actual training. Training involves feeding the preprocessed data to the model and iteratively adjusting its internal parameters to reduce the loss function, which measures the discrepancy between the model’s output and the expected results.

Key Concepts in Model Training:

- Loss Function: This function measures how far the model's predictions are from the expected values. Common choices are mean squared error (MSE) for regression problems and cross-entropy for classification problems.

- Optimization Algorithms: These algorithms update the model's parameters to reduce the loss. Examples include gradient descent and variants of it such as Adam and RMSprop.
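The two loss functions named above can be written out directly. This is a minimal sketch with made-up predictions; the binary form of cross-entropy is shown, and the clipping constant `eps` is an assumption added to avoid taking the logarithm of zero.

```python
import math

def mean_squared_error(y_true, y_pred):
    """Average squared difference between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Average negative log-likelihood for 0/1 labels and predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)   # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)

# Regression: predictions off by 1 and by 2.
mse = mean_squared_error([3.0, 5.0], [2.0, 7.0])

# Classification: the same labels with confident-correct vs confident-wrong predictions.
confident_right = binary_cross_entropy([1, 0], [0.9, 0.1])
confident_wrong = binary_cross_entropy([1, 0], [0.1, 0.9])
print(mse, confident_right, confident_wrong)
```

Cross-entropy penalizes confident mistakes far more heavily than hesitant ones, which is why it is a common choice for classification.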

Monitoring Training Progress:

During training, metrics such as accuracy and loss should be monitored, ideally on held-out validation data as well as on the training data, to check that the model is neither overfitting nor underfitting. Overfitting occurs when the model has high variance: it focuses too much on the training data and does not generalize well to unseen data. Underfitting occurs when the model fails to learn the patterns in the data accurately. These problems can be managed with methods such as regularization and early stopping.
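Early stopping can be sketched as a simple rule over the validation loss recorded after each epoch. The `patience` parameter, the function name, and the loss values below are assumptions for illustration; deep learning frameworks ship their own variants of this idea.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve for `patience`
    consecutive epochs; epochs are numbered from 0.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

# Hypothetical validation losses: improving at first, then rising (overfitting).
losses = [0.90, 0.60, 0.45, 0.40, 0.42, 0.47, 0.55]
stop_epoch, best_epoch = early_stopping_epoch(losses)
print(stop_epoch, best_epoch)
```

In practice the model weights from the best epoch are the ones kept, since later epochs fit the training data more closely at the expense of unseen data.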

Understanding Training Time:

Training time depends on the model, the dataset, and the available computational power; it can take minutes, hours, or even days. Cloud solutions with GPUs can make training much faster.

5. Evaluation and Monitoring: Ensuring Model Efficacy

Training is not the final step. Evaluation is essential because a model may work well on the data used to train it but perform poorly on unseen data (the test set). Common quantitative measures include accuracy, precision, recall, and F1-score for classification, and MSE and R-squared for regression.
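The classification metrics listed above follow directly from counts of true positives, false positives, and false negatives. The sketch below computes them for a hypothetical set of test-set labels and predictions.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary 0/1 labels."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": correct / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical held-out test labels and model predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Precision answers "how many predicted positives were right", recall answers "how many actual positives were found", and F1 balances the two.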

Monitoring for Model Drift:

Real-world data distributions are not constant and may shift over time. The model therefore has to be monitored continuously. Here are some strategies for monitoring model drift:

- Periodic Retraining: Retrain your model on new data from time to time to keep it aligned with current trends.

- Data Drift Detection Techniques: Use statistical methods or online learning algorithms to identify changes in the data distribution and initiate retraining when appropriate.
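One very simple statistical check for data drift compares the mean of a recent window of a feature against the mean seen at training time. This is only a sketch under stated assumptions: the threshold of three standard errors, the sample values, and the function name are illustrative, and the reference sample is assumed to have some variation.

```python
import statistics

def mean_shift_detected(reference, recent, z_threshold=3.0):
    """Flag drift when the recent mean is far from the reference mean.

    The distance is measured in standard errors of the recent sample mean,
    using the reference standard deviation.
    """
    ref_mean = statistics.mean(reference)
    ref_std = statistics.stdev(reference)
    standard_error = ref_std / len(recent) ** 0.5
    z = abs(statistics.mean(recent) - ref_mean) / standard_error
    return z > z_threshold

reference = [50, 52, 48, 51, 49, 50, 53, 47]   # feature values seen at training time
stable = [51, 49, 50, 52]                      # recent values, similar distribution
shifted = [60, 62, 59, 61]                     # recent values after a shift

print(mean_shift_detected(reference, stable))
print(mean_shift_detected(reference, shifted))
```

A positive result from a check like this could be used as the trigger for retraining; production systems usually compare whole distributions rather than just means.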

Ongoing evaluation and monitoring keep the AI model effective and confirm that it performs well in the real world.

Certain strategies make building and deploying AI models more efficient. The steps above cover the path from problem definition through model evaluation and monitoring. Keep in mind that creating an AI model is a highly iterative process.

Feed evaluation results back into your models, adopt newly developed approaches, and aim for maximum effectiveness to realize the full potential of AI in your company.



Deployment Nirvana: Seamlessly Integrating Your AI Model

The life cycle of an AI model does not stop at the training phase. To deliver its full value, the model has to move from one environment to another: from the artificial setting of a training script to everyday use. This section goes over the details of deploying an AI model and outlines the best practices to follow to reach ‘Deployment Nirvana’.

Training to Deployment Journey: Bridging the Gap

Once your model has been trained and tested, you move it to the final stage: live use, also known as production. This involves several crucial steps:

- Model Packaging: To make the trained model ready to serve requests in a production environment, it has to be converted or exported to a format that the application can load easily.

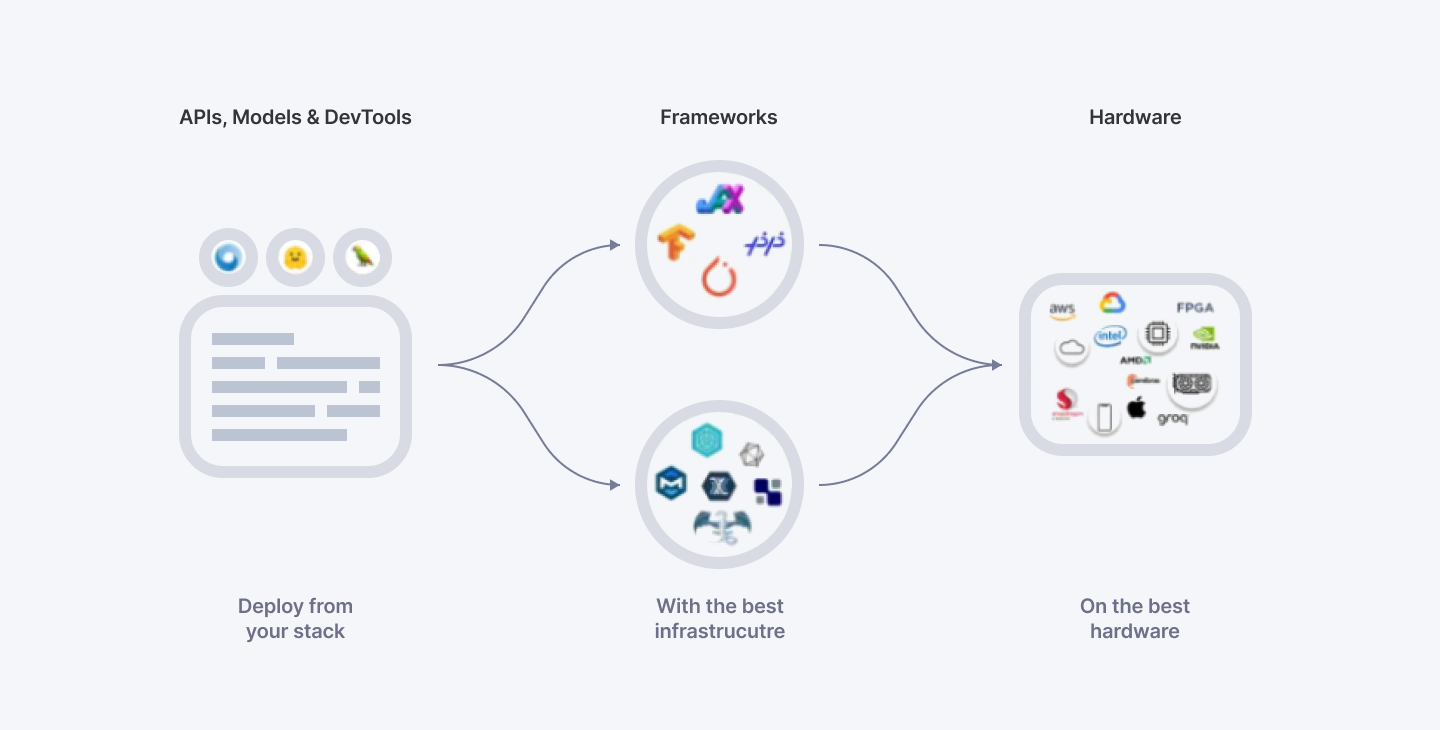

- Model Serving Framework Selection: A model serving framework is an interface between the model and the application. It receives incoming requests, forwards the data to the model, and returns the model's predictions. Commonly used serving frameworks include TensorFlow Serving and TorchServe (PyTorch's serving framework).

- Integration with Application: Before the model can be used, it has to be integrated into the application or system that will consume its predictions. This might involve creating APIs, the interfaces through which the model and the application communicate.

- Infrastructure Selection: The choice of deployment infrastructure depends mostly on scalability, cost, and security requirements. You can build your own custom solution on cloud infrastructure with your preferred programming languages and deep learning frameworks such as TensorFlow, Keras, and PyTorch, or you can use a managed service such as Google Cloud AI Platform or Amazon SageMaker, which takes care of managing the infrastructure.
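The packaging, serving, and integration steps above can be sketched end to end with a toy linear model. Everything here is an assumption for illustration: the JSON parameter format, the request shape, and the function names stand in for what a real export format and serving framework would provide.

```python
import json

def export_model(weights, bias):
    """Package a trained linear model's parameters as a JSON string."""
    return json.dumps({"weights": weights, "bias": bias})

def load_model(packaged):
    """Rebuild a predict function from the packaged parameters."""
    params = json.loads(packaged)

    def predict(features):
        return sum(w * x for w, x in zip(params["weights"], features)) + params["bias"]

    return predict

def handle_request(predict, request_body):
    """Serving-layer stand-in: parse a JSON request, run the model, return JSON."""
    features = json.loads(request_body)["features"]
    return json.dumps({"prediction": predict(features)})

# Package once after training, then load and serve in the production process.
packaged = export_model(weights=[2.0, -1.0], bias=0.5)
predict = load_model(packaged)
response = handle_request(predict, '{"features": [3.0, 4.0]}')
print(response)
```

Keeping the packaged artifact separate from the code that serves it is what lets the same model be loaded by different applications or swapped for a newer version.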

Key Considerations for Deployment: Ensuring Real-World Success

While the technical aspects of deployment are crucial, several other factors influence the success of an AI model in the real world:

- Monitoring and Logging: Monitor the model in production and watch for problems such as declining accuracy or increasing response time. Log the model's activity thoroughly so that you can analyze its behavior and diagnose problems when they arise.

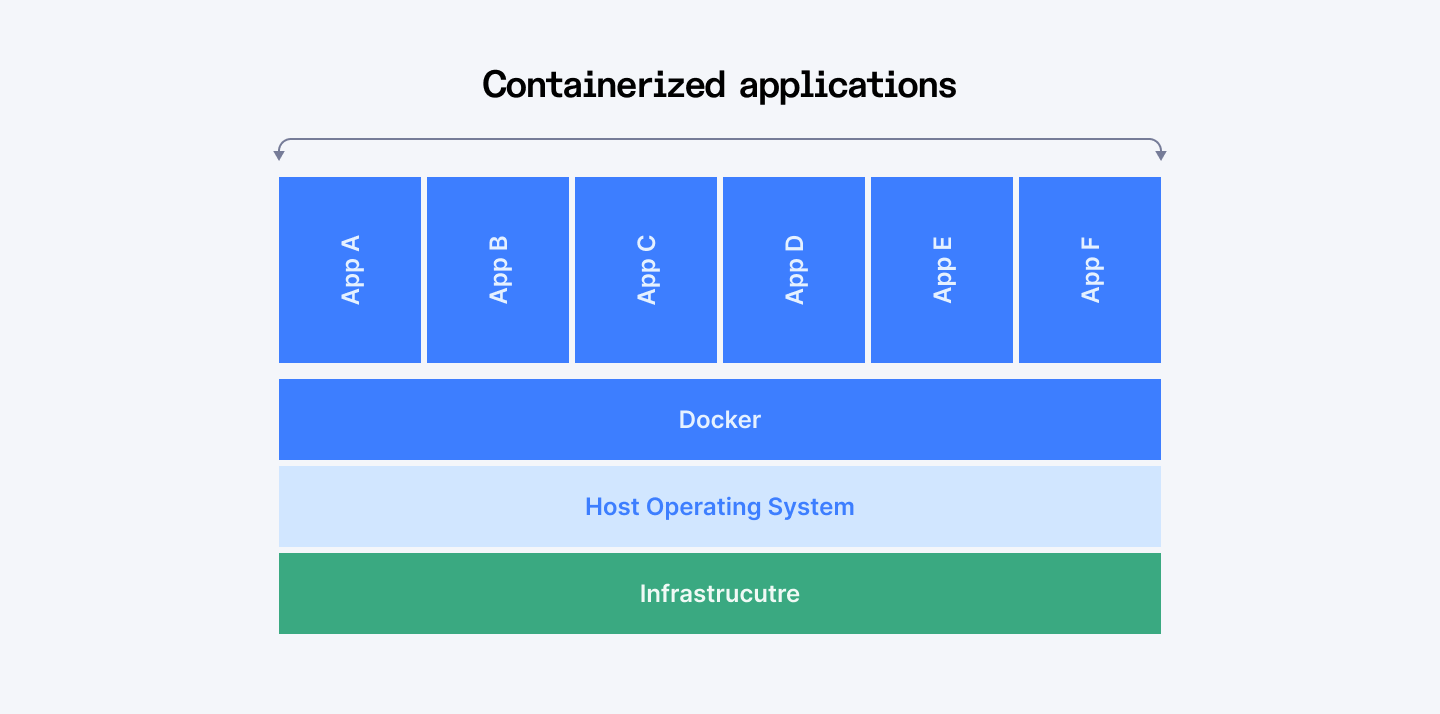

- Scalability and Performance: Check that the deployment infrastructure can scale to meet growing workloads and user traffic. Strategies such as containerization with Docker bundle the model with its dependencies, which makes it easier to scale flexibly.

- Explainability and Fairness: It is often important to understand how the model makes its decisions. Explainable AI (XAI) techniques can make the model’s decision-making process more transparent, paving the way for trust and bias identification.

- Security: It is crucial to prevent input manipulation and unauthorized access to the model. Security measures such as access controls and data encryption should be employed to protect your model and data.

- Governance and Regulatory Compliance: For some applications, the type, amount, and usage of data may be regulated by rules on data protection and algorithmic fairness. Check that your deployment practices comply with the relevant regulations.
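As a small illustration of the monitoring and logging consideration, the sketch below wraps a prediction function so that every call records its latency and slow calls are logged as warnings. The class name, the 100 ms threshold, and the stand-in model are hypothetical.

```python
import logging
import time

logger = logging.getLogger("model_monitor")

class MonitoredModel:
    """Wrap a predict function and record latency for every call."""

    def __init__(self, predict_fn, slow_ms=100.0):
        self.predict_fn = predict_fn
        self.slow_ms = slow_ms
        self.latencies_ms = []

    def predict(self, features):
        start = time.perf_counter()
        result = self.predict_fn(features)
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.latencies_ms.append(elapsed_ms)
        if elapsed_ms > self.slow_ms:
            logger.warning("slow prediction: %.1f ms", elapsed_ms)
        else:
            logger.info("prediction served in %.1f ms", elapsed_ms)
        return result

    def average_latency_ms(self):
        """Mean latency over all recorded calls, or 0.0 if none were made."""
        if not self.latencies_ms:
            return 0.0
        return sum(self.latencies_ms) / len(self.latencies_ms)

# Stand-in model: sums its input features.
model = MonitoredModel(lambda features: sum(features))
output = model.predict([1, 2, 3])
print(output, model.average_latency_ms())
```

The recorded latencies can feed dashboards or alerts, so that rising response times are noticed before users are affected.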

If these factors are addressed, you can build a solid strategy for deploying an AI model in real-world applications.

Diving Deeper: Specific Technologies for Deployment

Here's a closer look at some of the technologies mentioned earlier:

Model Serving Frameworks:

- TensorFlow Serving: A high-performance, flexible model serving framework developed by Google that can serve multiple models and model versions. It exposes gRPC and REST APIs, so clients can be written in many languages, and it is not tied to a specific platform.

- TorchServe: The open-source serving framework from the PyTorch project, best suited to serving PyTorch models. It provides features such as model versioning and batched inference.

Containerization with Docker:

With Docker, the model, its dependencies, and the runtime environment can be packaged together in a container. The container can then be deployed in any other environment with ease, which makes the deployment reproducible and simple to replicate.

Diagram of a Docker container with the model, dependencies, and runtime environment packaged together

You can use these technologies and best practices to deploy efficiently and strategically, and reach “Deployment Nirvana.”


In a Nutshell

Training is the stage where models are optimized, while deployment is the bridge between the model as a concept and the real world. By following a systematic approach, analyzing the key factors, and applying modern technologies, you can avoid many of the issues that arise when moving models into real-world use and increase their value for your organization. Remember that deployment is not a one-time event: continuously track performance, and optimize and upgrade the deployment process to keep the model efficient.

Index.dev provides solutions for automating maintenance tasks, such as updating models and managing infrastructure. This reduces the burden on internal teams and ensures that AI systems remain up-to-date and effective. Our developers can also set up alerts and analytics tools to proactively address any performance degradation.

For Clients:

Focus on innovation! Join Index.dev's global network of pre-vetted developers to find skilled AI engineers who can optimize your AI models. They specialize in hyperparameter optimization techniques like Bayesian optimization, grid search, and random search, ensuring top performance with minimal resource use. Partner with Index.dev to overcome challenges like complexity and resource limitations, and benefit from efficient hiring and optimized AI solutions for your business.

For AI Developers:

Are you a skilled AI engineer seeking a long-term remote opportunity? Join Index.dev to unlock high-paying remote careers with leading companies in the US, UK, and EU. Sign up today and take your career to the next level!