Scaling AI teams has emerged as a defining challenge for both startups and large enterprises. In today's fast-paced industry, organizations often need to expand from a small core of three engineers to fifty within a year to stay competitive.

Unlike scaling typical software teams, scaling AI teams presents a unique set of challenges, including balancing research and engineering, handling massive data volumes, and ensuring production-ready deployment. At the same time, leaders must strike a delicate balance between speed and sustainability, accelerating delivery without accumulating technical debt.

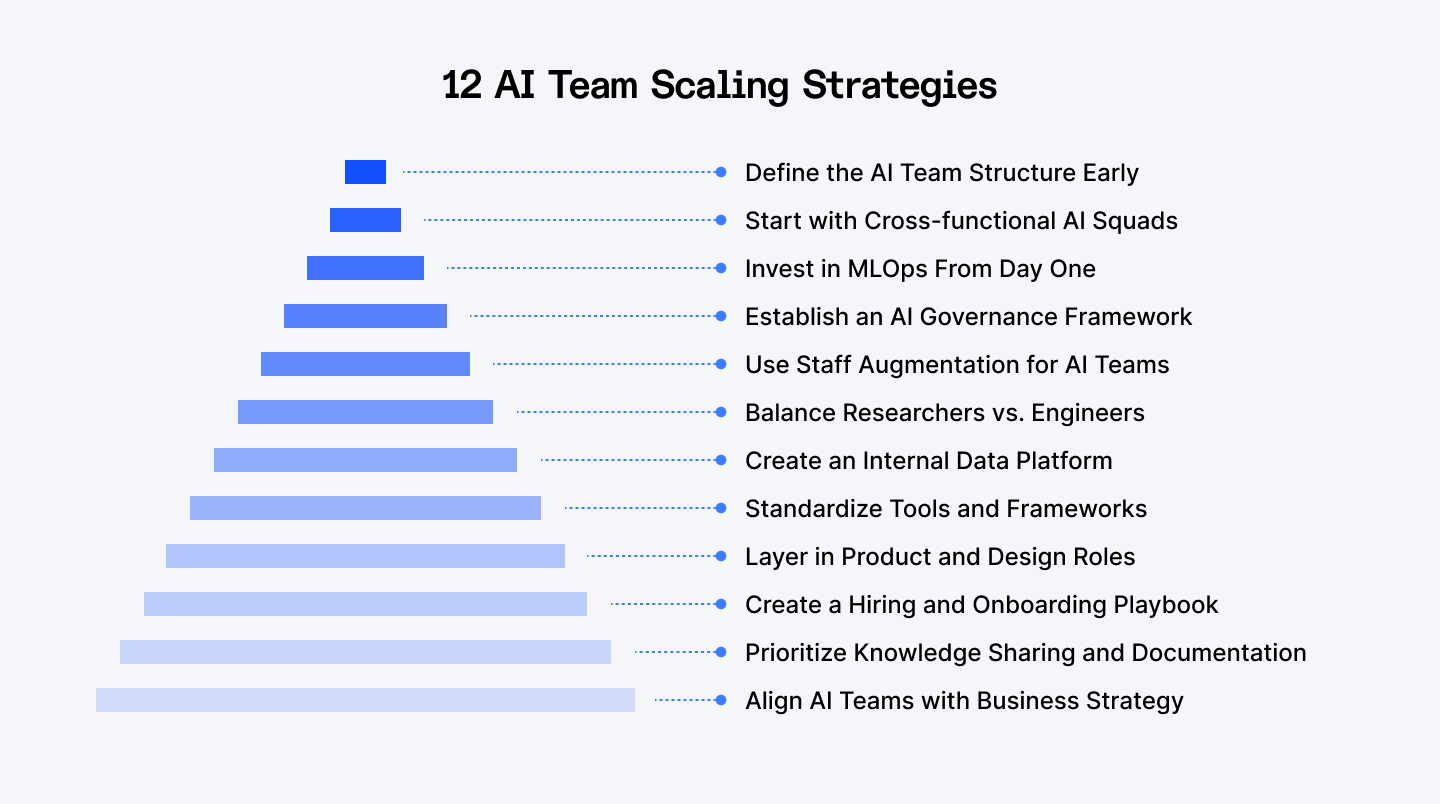

This article explores 12 concrete techniques that provide a strategic roadmap for establishing an effective AI team organization. From MLOps adoption to cross-functional teams and governance frameworks, these insights can help firms scale wisely while remaining aligned with business objectives.

Google's MLOps guidelines and HBR's AI-powered organization model both emphasize the need for structure over speed when scaling AI.

Why Scaling AI Teams is Different from Scaling Traditional Software Teams

Scaling AI teams differs significantly from growing typical software engineering groups.

The primary difference is Data Dependency: AI systems need robust machine learning pipelines, repeatable experimentation cycles, and specialized infrastructure for training and deployment. Unlike traditional software engineering, where code alone determines behavior, AI performance also depends on data quality, volume, and governance.

Equally important is the Talent Mix. A well-designed AI team structure includes not just software engineers, but also data scientists, machine learning engineers, and MLOps professionals who ensure seamless integration from research to production. This combination of skills is critical for avoiding bottlenecks, especially as firms grow rapidly from three to fifty engineers.

Finally, there is significant Organizational Complexity. AI teams must strike a balance between research-driven discovery and production-focused delivery. Without explicit alignment, models risk remaining in labs rather than adding commercial value.

Google's MLOps whitepaper offers a complete methodology for properly managing AI pipelines, governance, and scalability.

12 AI Team Scaling Strategies

Scaling an AI team from a small group of engineers to a full-fledged organization involves more than rapid recruiting; it also requires deliberate structure, governance, and solid technical foundations.

The 12 strategies listed below give a roadmap for growing from 3 to 50 engineers while maintaining alignment and momentum.

1. Define the AI Team Structure Early

The first step in growing AI teams is to determine how to structure an AI team. Organizations often select between two models:

- Centralized, in which a single AI group serves all departments.

- Federated, in which smaller AI pods are embedded within business divisions.

Centralized structures provide uniformity, shared infrastructure, and strict control, whereas federated structures provide domain-specific knowledge and faster response times.

Delaying this decision is a common mistake, since it can result in organizational silos and duplicated effort. The appropriate AI team structure should be decided early on, taking into account the company's size, data maturity, and growth trajectory.

2. Start with Cross-functional AI Squads

AI initiatives flourish when they are directly related to business objectives. Instead of organizing solely by role (for example, data scientists separate from ML developers), successful firms build cross-functional AI squads. Each squad consists of data scientists, machine learning engineers, product managers, and, on occasion, domain specialists who work together to solve a specific challenge.

This approach helps AI teams deliver demonstrable results rather than isolated prototypes. Squads can function much like agile software teams, but with specialized skills, providing a repeatable pattern for growth.

Including product managers in AI squads also helps keep work aligned with user needs and commercial goals, avoiding the pitfall of doing research for its own sake.

3. Invest in MLOps From Day One

Unlike traditional software, machine learning systems need ongoing retraining, monitoring, and pipeline automation. Early adoption of MLOps is critical for startups seeking rapid expansion. MLOps brings DevOps principles (continuous integration, testing, and deployment) into the ML lifecycle, so that experiments can become production-ready models.

When teams grow from five to fifty engineers, manual processes soon break down under the volume of experimentation. Automated pipelines for data ingestion, feature engineering, training, and deployment provide consistency and repeatability. This decreases downtime, speeds up releases, and reduces infrastructure debt.

Companies that underinvest in MLOps frequently end up with "prototype graveyards": models that work in notebooks but never reach production.
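
As a rough illustration of what an automated pipeline means in practice, the sketch below chains the four stages named above (ingestion, feature engineering, training, deployment) as plain functions, with a quality gate that blocks deployment when the model underperforms. Everything here is hypothetical: the function names, the toy data, the threshold "model", and the 0.8 accuracy bar are assumptions chosen for illustration, and a real team would use an orchestrator, a training framework, and held-out evaluation data.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PipelineResult:
    accuracy: float
    deployed: bool


def ingest() -> list[tuple[float, int]]:
    # Hypothetical toy dataset of (raw_value, label) pairs.
    return [(0.1, 0), (0.4, 0), (0.6, 1), (0.9, 1), (0.2, 0), (0.8, 1)]


def build_features(rows: list[tuple[float, int]]) -> list[tuple[float, int]]:
    # Feature engineering step: rescale the raw value to a 0..100 range.
    return [(value * 100.0, label) for value, label in rows]


def train(rows: list[tuple[float, int]]) -> Callable[[float], int]:
    # Stand-in "model": a threshold halfway between the two class means.
    zeros = [x for x, y in rows if y == 0]
    ones = [x for x, y in rows if y == 1]
    threshold = (sum(zeros) / len(zeros) + sum(ones) / len(ones)) / 2
    return lambda x: int(x >= threshold)


def evaluate(model: Callable[[float], int], rows: list[tuple[float, int]]) -> float:
    # Fraction of rows classified correctly (on training data, for brevity).
    correct = sum(model(x) == y for x, y in rows)
    return correct / len(rows)


def run_pipeline(min_accuracy: float = 0.8) -> PipelineResult:
    # Every run executes the same steps in the same order, which is the
    # repeatability that manual notebook workflows lack.
    rows = build_features(ingest())
    model = train(rows)
    accuracy = evaluate(model, rows)
    # Deployment gate: only "deploy" when the quality bar is met.
    return PipelineResult(accuracy=accuracy, deployed=accuracy >= min_accuracy)


if __name__ == "__main__":
    print(run_pipeline())
```

The design point is the gate at the end: a model that fails the check never reaches production, and the decision is made by code rather than by whoever happens to be running the notebook.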

4. Establish an AI Governance Framework

Scaling AI responsibly requires not only technical pipelines, but also AI governance structures. Governance ensures that teams follow data privacy regulations, use ethical AI approaches, and review models for fairness, drift, and performance over time.

Organizations without governance risk reputational damage, regulatory fines, or biased results. A governance framework generally consists of data quality checks, model explainability policies, bias audits, and compliance monitoring. It should be integrated into regular operations, not treated as an afterthought.

This is especially important when AI teams scale and deploy models across various domains or geographies.
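
To make the idea of a bias audit concrete, here is a minimal sketch of one possible check: the demographic parity gap, meaning the difference in positive-prediction rates between groups. This is only one of several fairness metrics a governance framework might adopt, and the function names and the 0.2 tolerance are assumptions for illustration, not a recommended standard.

```python
def positive_rate(predictions: list[int]) -> float:
    # Share of predictions that are positive (1).
    return sum(predictions) / len(predictions)


def demographic_parity_gap(
    predictions: list[int], groups: list[str]
) -> tuple[float, dict[str, float]]:
    # Bucket binary predictions by the group each record belongs to.
    by_group: dict[str, list[int]] = {}
    for pred, group in zip(predictions, groups):
        by_group.setdefault(group, []).append(pred)
    rates = {group: positive_rate(preds) for group, preds in by_group.items()}
    # Gap between the most and least favoured group.
    return max(rates.values()) - min(rates.values()), rates


def passes_bias_audit(
    predictions: list[int], groups: list[str], max_gap: float = 0.2
) -> bool:
    # max_gap is a hypothetical tolerance; each organization sets its own.
    gap, _ = demographic_parity_gap(predictions, groups)
    return gap <= max_gap


if __name__ == "__main__":
    preds = [1, 1, 0, 1, 0, 0, 0, 1]
    grps = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(demographic_parity_gap(preds, grps), passes_bias_audit(preds, grps))
```

Running a check like this automatically before each release is one way to make governance part of regular operations rather than a separate review step.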

5. Use Staff Augmentation for AI Teams

Hiring top AI talent is challenging and time-consuming. To speed up scaling, many organizations use staff augmentation for AI teams, bringing in external expertise for areas such as NLP, computer vision, and LLM fine-tuning.

The advantage is rapid access to specialized knowledge without lengthy recruiting processes. Staff augmentation can also help businesses absorb surges in project demand or fill short-term talent gaps. However, management must plan for integration: external engineers should use the same pipelines, documentation practices, and squad structures to minimize fragmentation.

The most significant risk is knowledge transfer: if external talent leaves without a thorough handoff, the internal team may struggle to maintain continuity. Strong onboarding and offboarding procedures help to reduce this risk.

6. Balance Researchers vs. Engineers

To be successful in AI, you must strike a balance between frontier research and production engineering. Researchers experiment with new architectures and push performance limits, but without ML engineers and infrastructure specialists, their findings may never reach production.

Scaling frequently reveals imbalances: startups overhire researchers early on, resulting in models that never leave notebooks. Conversely, hiring only engineers can limit innovation.

The ideal mix varies by context, but it often begins with a stronger engineering foundation, which is then supplemented by researchers as production pipelines mature. Defining roles clearly and ensuring shared ownership helps avoid bottlenecks.

7. Create an Internal Data Platform

A scaled AI team can't function without a centralized data platform. As squads expand, so do data pipelines. Without standards, data quality concerns and duplication become commonplace.

An internal platform should provide controlled access to raw and processed datasets, data versioning, and, ideally, a feature store for reusing engineered features across models. This uniformity promotes experimentation while lowering costs.

Investing early in data infrastructure pays off at scale, ensuring that every new engineer or squad can operate successfully from the start.
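
Two of the platform capabilities mentioned above, data versioning and a feature store, can be sketched in a few lines. The example below is a toy under stated assumptions: `dataset_version` and `FeatureStore` are hypothetical names, the version is simply a truncated SHA-256 hash of the dataset's canonical JSON, and the store is an in-memory registry rather than a real feature-store product.

```python
import hashlib
import json
from typing import Any, Callable


def dataset_version(rows: list[dict]) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical content
    # always hashes to the same version. Row order still matters.
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


class FeatureStore:
    """In-memory registry so squads reuse one definition per feature."""

    def __init__(self) -> None:
        self._features: dict[str, Callable[[dict], Any]] = {}

    def register(self, name: str, fn: Callable[[dict], Any]) -> None:
        # Refuse silent redefinition: duplicates are what the store prevents.
        if name in self._features:
            raise ValueError(f"feature {name!r} is already registered")
        self._features[name] = fn

    def compute(self, name: str, row: dict) -> Any:
        return self._features[name](row)


if __name__ == "__main__":
    rows = [{"id": 1, "spend": 10.0, "visits": 4}]
    print("dataset version:", dataset_version(rows))

    store = FeatureStore()
    store.register("spend_per_visit", lambda r: r["spend"] / r["visits"])
    print(store.compute("spend_per_visit", rows[0]))
```

Recording the dataset version alongside each experiment lets a new engineer tell exactly which data a model was trained on, and registering features by name keeps two squads from maintaining slightly different versions of the same calculation.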

8. Standardize Tools and Frameworks

Fragmentation is the quiet killer of scalability. Without agreed-upon standards, teams are left with competing frameworks (such as PyTorch vs. TensorFlow) and duplicate tools. This makes collaboration, deployment, and hiring more difficult.

Standardizing core tooling, frameworks, and infrastructure from the outset promotes interoperability and speeds up onboarding. This does not rule out experimentation, but official support should focus on a uniform stack.

The benefit: engineers can move between squads easily, while infrastructure teams can optimize around a proven toolchain.
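
One lightweight way to enforce a standard stack is an automated check that flags dependencies outside an approved list. The sketch below assumes requirement lines in the common `name==version` style; the `APPROVED_STACK` contents and the function name are hypothetical, and the parsing is deliberately simplified rather than a full implementation of the requirements file format.

```python
import re

# Hypothetical approved list; each organization would define its own.
APPROVED_STACK = {"torch", "numpy", "pandas", "mlflow"}


def unapproved_dependencies(requirements: list[str]) -> list[str]:
    flagged = []
    for line in requirements:
        line = line.strip()
        # Skip blank lines and comments.
        if not line or line.startswith("#"):
            continue
        # Keep only the package name: cut at the first version specifier,
        # extras bracket, environment marker, or space.
        name = re.split(r"[<>=!~\[; ]", line, maxsplit=1)[0].strip().lower()
        if name and name not in APPROVED_STACK:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    reqs = ["torch==2.1.0", "tensorflow>=2.15", "# tooling", "", "NumPy"]
    print(unapproved_dependencies(reqs))
```

A check like this could report rather than block, which leaves room for experimentation while making departures from the supported stack visible.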

9. Layer in Product and Design Roles

AI engineers and data scientists often prioritize model accuracy over user experience. To make an impact, businesses must integrate product managers and designers into AI teams.

Product managers ensure that AI roadmaps are aligned with company strategy, while designers turn model outputs into user-friendly experiences. This collaboration is especially vital in areas like conversational AI and recommendation engines, where user trust and usability are critical.

Scaling AI without product and design roles frequently produces technically impressive models that fail to connect with end users.

10. Create a Hiring and Onboarding Playbook

Scaling from 3 to 50 engineers in under a year requires a consistent hiring and onboarding process. Ad hoc methods slow down both recruiting and integration.

Standardized coding challenges, machine learning problem-solving assessments, cultural fit interviews, and structured onboarding bootcamps are all components of a solid playbook. Clear documentation allows new hires to rapidly learn infrastructure, processes, and code standards.

This approach not only supports expansion but also helps culture and quality stay consistent even as hiring accelerates.

11. Prioritize Knowledge Sharing and Documentation

As AI teams grow, knowledge silos can stifle productivity. To avoid this, firms should implement knowledge-sharing mechanisms like wikis, reproducible notebooks, internal tech talks, and shared repositories.

Documentation is especially important in AI, as experiments can be complicated and data-dependent. Without records, reproducing prior work is impossible, resulting in wasted effort.

Cultural incentives, such as rewards for contributions to shared documentation, encourage adoption. Scaled teams that value documentation progress more quickly than those that keep reinventing the wheel.

12. Align AI Teams with Business Strategy

At scale, AI teams must avoid becoming pure research laboratories. The most effective organizations link squads to business KPIs like revenue growth, cost savings, and customer engagement.

This alignment not only secures stakeholder buy-in but also helps AI programs receive ongoing funding. Teams that achieve demonstrable results are more resilient to changes in leadership or budget cycles.

Embedding product owners inside squads and establishing quarterly business-linked OKRs (objectives and key results) help keep AI work in line with business strategy.

Conclusion

Scaling an AI team from 3 to 50 engineers in less than a year is an ambitious goal, but one that can be accomplished with the right foundations. Organizations can grow rapidly while retaining quality and sustainability by defining their AI team structure, investing in MLOps early, and implementing an AI governance framework. The objective is to develop a strategic plan that strikes a balance between speed and long-term scalability.

Are you ready to take the next step? Contact us at index.dev to build a future-ready AI team.