A recent industry report shows that 75% of financial companies already use AI, and another 10% are close behind.

Artificial Intelligence is now woven into the fabric of modern financial services. It’s the engine quietly running the sector. It decides who gets approved, which transactions look suspicious, when to freeze an account, and how customers get support – instantly, at scale, and around the clock.

The world's most valuable unicorns, including Fintech companies like Stripe and Revolut

But with great power comes great exposure. In a sector defined by regulation and trust, being “smart” isn’t enough.

AI must be transparent. It must be fair. It must be auditable. And it must comply with the rules, every time, without exception.

That’s where most fintech firms hit a wall. Building AI isn’t the bottleneck. Hiring the right people is. The industry needs engineers who understand machine learning and the regulatory pressures shaping financial systems: risk, compliance, bias, ethics, and the real-world consequences of algorithmic decisions.

This article explores why fintech companies need regulation-aware ML engineers, where to find them, and how a forward-thinking talent strategy can be the difference between scaling safely and becoming a liability.

Why Explainable AI, Fairness, and Risk Governance Matter in Fintech

The financial services industry runs on rules. When AI shows up, it just makes the rules harder to follow.

AI gives financial institutions extraordinary leverage: faster credit decisions, sharper risk modeling, real-time fraud detection, scalable compliance workflows, and dramatically improved customer experience.

But the moment an AI system becomes a “black box,” all that value can turn into liability.

- Opaque models make compliance and accountability nearly impossible. Complex ML systems often generate outputs even their creators can’t intuitively explain. In credit scoring, underwriting, or fraud detection, that opacity can conceal bias or unfair outcomes, which is unacceptable under fair lending laws and anti-discrimination standards. Regulators, auditors, and customers no longer settle for a simple decision. They expect a defensible one. This is where Explainable AI (XAI) becomes a cornerstone of compliance and trust: a “yes” or “no” from a model is not enough without the reasons behind it.

- Bias and fairness pose equally serious challenges. Financial data reflects decades of human behavior, and often, historical inequity. If left unchecked, ML models can replicate or amplify those patterns. In a regulated market, an algorithm that consistently disadvantages protected groups is a legal violation.

- Then comes the governance challenge, the one too many fintechs underestimate. When AI influences credit, liquidity, fraud, or compliance exposure, it must be governed with the same rigor as any financial-grade model. That means continuous validation, drift monitoring, thorough documentation, audit trails, stress tests, fallback strategies, and real human oversight. Not once. Always. In practice, many organizations also support these processes with tools like an LMS for compliance, ensuring teams are consistently trained on regulatory requirements and internal policies.
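To make the fairness point above concrete, here is a minimal sketch of one common screening check: comparing approval rates across groups. The function names, the toy data, and the 0.8 threshold are all assumptions for illustration. The threshold borrows the "four-fifths" rule of thumb and is a screening heuristic, not a legal test for lending.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, reference_group):
    """Divide each group's approval rate by the reference group's rate."""
    rates = approval_rates(decisions)
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        raise ValueError("reference group has no approvals")
    return {group: rate / reference_rate for group, rate in rates.items()}


# Hypothetical toy data: (group label, was the application approved?)
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)

ratios = disparate_impact_ratio(sample, reference_group="A")

# Assumed screening threshold; groups below it are flagged for human review.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(ratios, flagged)
```

A flag from a check like this does not prove discrimination, and a pass does not prove fairness. It only tells a reviewer where to look first.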

In short: AI in finance demands transparency, fairness, ethical reasoning, governance discipline, and deep risk literacy. And the talent capable of operating at that intersection is rare.

Which leads to the next, and most urgent, question:

Where do you find ML engineers who meet those standards, and how do you hire them before someone else does?

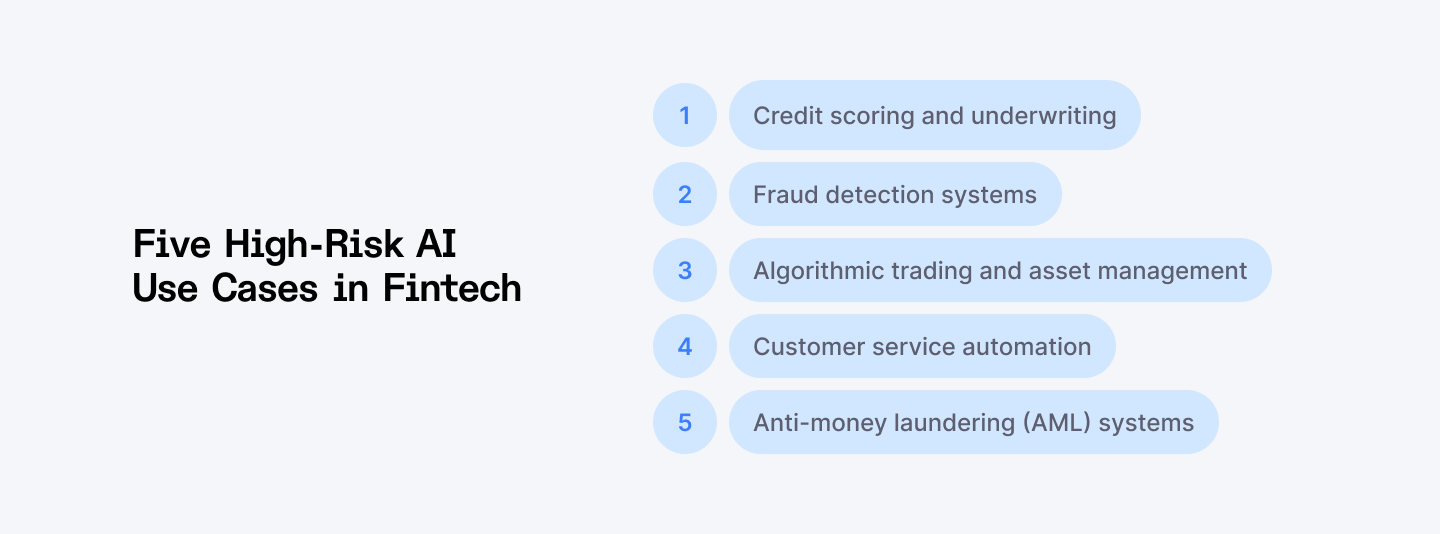

Five AI Use Cases in Fintech Where Compliance Complexity Peaks

Before exploring hiring strategies, it’s important to map the AI and ML use cases where regulatory risk is highest, and where the need for “regulation-aware” engineering talent is most acute.

1. Credit scoring and underwriting:

These models evaluate borrower risk, predict default probability, and influence who gains access to credit, including consumer-facing decisions like whether someone can refinance student loan debt. Without rigorous explainability and fairness controls, they can quietly hard-code historical bias into lending decisions.

2. Fraud detection systems:

Real-time anomaly detection is essential to minimizing losses and combating financial crime. But high-velocity systems must also minimize false positives. Blocking legitimate customers, freezing accounts, or misidentifying risk can trigger regulatory complaints, reputational damage, or operational chaos.

3. Algorithmic trading and asset management:

ML models that inform trading strategies or portfolio decisions sit under intense scrutiny, especially after unexpected losses or market disruptions. These systems require meticulous risk controls, versioning, and audit trails. If you can’t prove why a model behaved the way it did, you don’t have a defensible trading system.

4. Customer service automation:

Chatbots and AI-driven service tools handle sensitive financial data and must comply with consumer rights, data protection laws, and communications regulations. A misinformed bot can give non-compliant guidance, mishandle consent, or escalate privacy risks. An AI chatbot for customer service must be carefully trained and monitored to ensure accurate responses, secure data handling, and full regulatory compliance.

5. Anti-money laundering (AML) systems:

ML-driven transaction monitoring and sanctions screening are under some of the sharpest global oversight. These systems must be accurate, interpretable, and traceable. Regulators expect documented rationales for every alert, escalation, and dismissal.
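As an illustration of what "documented rationales for every alert, escalation, and dismissal" can look like in code, here is a minimal sketch of a tamper-evident decision log. The `AlertDecision` fields, the `AuditLog` class, and the sample values are hypothetical. The sketch chains SHA-256 hashes so that a later edit to any entry is detectable, which is one possible design, not a regulatory requirement.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


@dataclass(frozen=True)
class AlertDecision:
    alert_id: str
    action: str         # e.g. "alert", "escalate", or "dismiss"
    rationale: str      # human-readable reason for the action
    model_version: str
    decided_by: str     # model or analyst identifier
    timestamp: str      # ISO 8601 string supplied by the caller


class AuditLog:
    """Append-only log in which each entry's hash covers the previous hash."""

    def __init__(self):
        self.entries = []

    def append(self, decision):
        if not decision.rationale.strip():
            raise ValueError("every decision needs a documented rationale")
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        payload = json.dumps(asdict(decision), sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self.entries.append({"prev_hash": prev_hash, "payload": payload, "hash": digest})
        return digest

    def verify(self):
        """Recompute the chain and report whether every entry is intact."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical usage: one alert raised by a model, then dismissed by an analyst.
log = AuditLog()
log.append(AlertDecision("TX-1001", "alert", "Amount exceeds usual pattern",
                         "aml-model-1.4", "model", "2024-01-01T10:00:00Z"))
log.append(AlertDecision("TX-1001", "dismiss", "Verified payroll transfer",
                         "aml-model-1.4", "analyst-17", "2024-01-01T11:30:00Z"))
intact = log.verify()

# Simulate tampering with the first entry; verification should now fail.
log.entries[0]["payload"] = log.entries[0]["payload"].replace("usual", "normal")
tampered_detected = not log.verify()
print(intact, tampered_detected)
```

Refusing to store a decision without a rationale is the part that matters most here: the record of why is enforced at write time rather than reconstructed during an audit.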

The sheer scarcity of "regulation-aware" ML engineers is the biggest bottleneck to scaling AI in fintech. These aren't just data scientists; they're hybrid professionals: part coder, part compliance officer, part risk manager.

The Scarcity of “Regulation‑Aware” ML Engineers: Why Supply Doesn’t Meet Demand

There’s a reason fintech companies struggle to hire the right AI developer. It’s not the job description. It’s the talent market itself.

Most ML engineers are trained to optimize models, not navigate regulatory landmines. And yet, financial institutions keep posting roles like “ML engineer with fintech experience” and wondering why the pipeline is thin.

The truth is uncomfortable but simple:

Regulation-aware ML engineers are genuinely scarce.

These are not developers who skimmed compliance documentation or completed a weekend course on fairness. They’re professionals whose models have survived formal regulatory audits. People who understand that explainability is mandatory. People who can translate between a data scientist talking about precision-recall thresholds and a compliance officer talking about model risk tiers.

This expertise cannot be faked, and it cannot be accelerated.

It comes from building credit scoring models that passed fair lending examinations.

From architecting AML systems that withstand regulator scrutiny.

From implementing trading models that satisfy market surveillance and reporting standards.

From documenting, monitoring, and stress-testing models because the law—not preference—requires it.

The talent exists. It’s just not sitting in the mainstream ML talent market.

The Talent Solution: Going Global to Beat the Scarcity

When talent becomes scarce, leaders have two choices: compete harder or redefine the supply chain. The smartest executives choose the latter.

The ML engineers fintech needs are not concentrated in the same high-cost, oversaturated hubs where every bank, fintech, and Big Tech company is fighting over the same dozen resumes.

They’re distributed globally. And that’s the advantage.

Central and Eastern Europe (CEE)

CEE, home to Poland, Romania, Ukraine, the Baltics, and others, has long been known for world-class engineering and rigorous academic foundations. That matters because this foundation produces engineers with strong theoretical understanding, exactly what is needed to debug and explain complex models.

Two additional dynamics make CEE uniquely suited for fintech AI:

- Regulatory proximity: Developers in the region operate within EU frameworks shaped by GDPR, PSD2, and the EU AI Act. This alignment naturally produces talent comfortable with privacy constraints, model documentation, and strict auditability expectations.

- Operational fit: Near-perfect time-zone overlap with Western Europe and workable overlap with the U.S. make collaboration seamless. English proficiency is high, technical maturity is strong, and cost structures remain competitive.

For fintech firms licensed in both EU and U.S. jurisdictions, CEE provides a rare combination of technical excellence, cost discipline, and regulatory familiarity.

Latin America (LatAm)

LatAm has undergone a rapid transformation into a global AI delivery hub. Countries like Brazil, Argentina, Colombia, and Mexico now supply a steady stream of experienced ML engineers supporting global fintech, SaaS, and payments firms.

The region’s advantages are clear:

- Time-zone alignment: Near-shore overlap with the U.S. allows for real-time collaboration, critical for high-velocity trading, fraud, and risk teams.

- Modern technical expertise: Engineers in LatAm increasingly have hands-on experience with deep learning, LLM applications, computer vision, and real-time ML pipelines. Their experience is not just academic; they’ve shipped products.

- Cost efficiency: While senior ML engineers in the U.S. routinely command six-figure salaries, LatAm markets offer materially lower total cost for comparable experience. CEE mirrors this trend.

This makes LatAm especially attractive for fintechs building product and AI teams serving the Americas.

A Broader Global Talent Strategy

Beyond specific regions, adopting a global recruitment mindset allows fintech firms to tap into pockets of “hidden talent,” experienced engineers in less saturated markets who may have the skills and maturity to build compliant, high‑quality financial AI systems.

This global strategy also helps avoid “talent wars” in major hubs, where competition is fierce and compensation expectations sky-high.

Compensation, Expectations, and What Firms Need to Know About Talent Economics

Compliance-aware ML engineers are rare. Combine that scarcity with the heightened risk profile of fintech applications, and you get a talent market where compensation reflects the premium these professionals command.

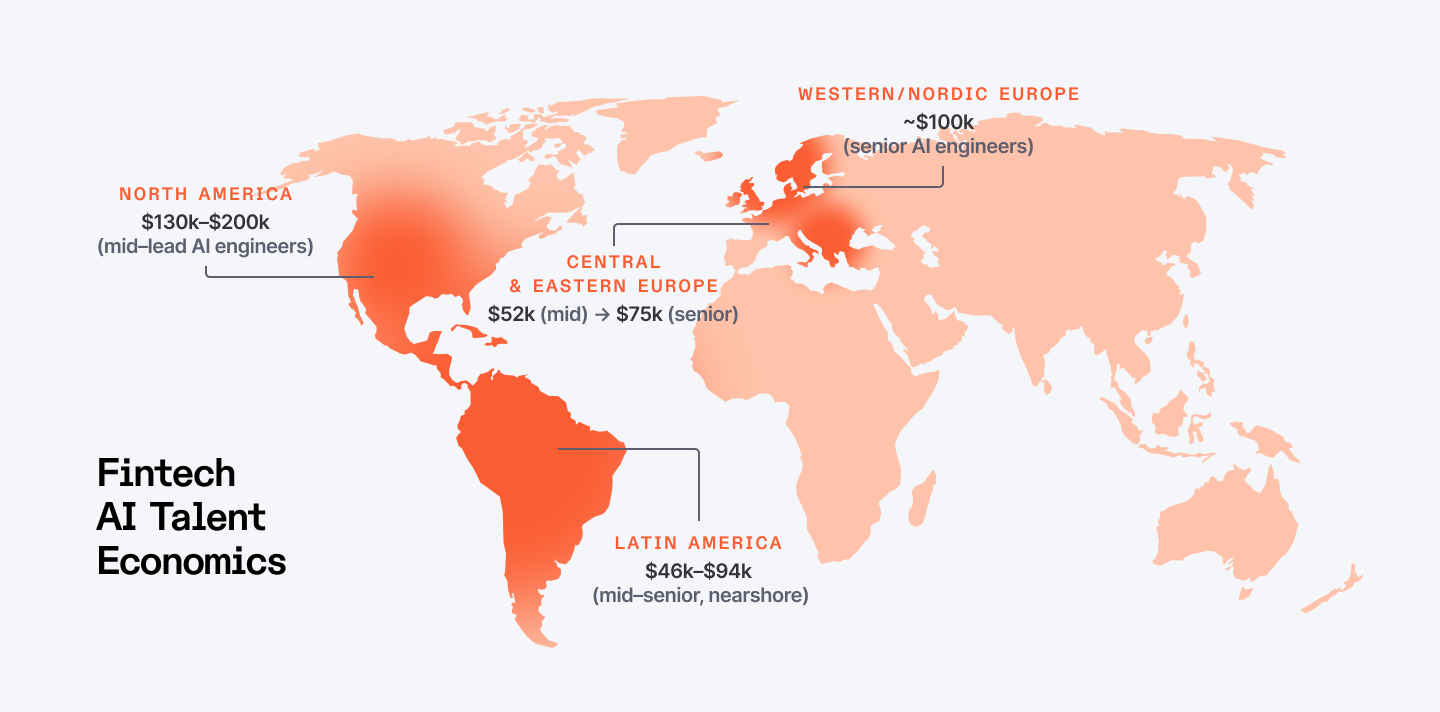

Current data suggest that in North America, mid-level to lead AI developers earn between approximately USD 130,000 and 200,000 per year. In Western and Nordic Europe, senior AI developers earn around USD 100,000 per year on average.

In CEE, mid-level AI engineers sometimes earn around USD 52,000/year, while senior-level AI engineers can reach USD 75,000/year, offering strong value compared to Western markets. Hourly contracting (especially for remote/offshore hires) often falls between USD 50–100 per hour, depending on seniority and specialization.

In LatAm, recent reports suggest that rates for U.S.-facing nearshore teams remain competitive for the skill level, ranging from USD 46,000 to 94,000 for mid- to senior-level roles.
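To put the figures above side by side, here is a rough sketch that expresses each regional number as a share of the North American range. It uses only the values quoted in this section. The labels and the `share_of_range` helper are illustrative, and treating the LatAm range as annual figures is an assumption.

```python
# Annual figures in USD, copied from the ranges quoted above.
NORTH_AMERICA_RANGE = (130_000, 200_000)  # mid-level to lead

benchmarks = {
    "Western/Nordic Europe (senior, average)": 100_000,
    "CEE (mid-level)": 52_000,
    "CEE (senior)": 75_000,
    "LatAm (low end, assumed annual)": 46_000,
    "LatAm (high end, assumed annual)": 94_000,
}


def share_of_range(salary, reference_range):
    """Return salary as a fraction of the top and bottom of a reference range."""
    low, high = reference_range
    return salary / high, salary / low


for label, salary in benchmarks.items():
    vs_top, vs_bottom = share_of_range(salary, NORTH_AMERICA_RANGE)
    print(f"{label}: {vs_top:.0%} to {vs_bottom:.0%} of the North American range")
```

These are headline salary comparisons only. They leave out taxes, benefits, contracting overhead, and the cost of a compliance failure, which is where the real premium for regulation-aware engineers shows up.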

For fintech firms, understanding these economics is essential: premium skill + compliance mindset = value.

Paying more for the right engineer reduces risk, builds trust, and can prevent costly regulatory or reputational mistakes down the line.

A Practical Example: Closing a Hard-to-Fill Role with Global Sourcing and Compliance Mindset

The companies that succeed at hiring regulation-aware ML engineers don’t post generic job ads and hope. They rethink the process entirely.

First, stop chasing unicorns

Few developers are simultaneously ML experts, finance veterans, and compliance specialists. The better approach pairs strong ML engineers with domain experts. Collaboration often outperforms the myth of the “all-in-one” hire.

Second, test for the right skills

Technical interviews should go beyond algorithm optimization. Candidates must be evaluated on compliance scenarios as well. Can they explain model decisions in plain language? Do they consider regulatory requirements before proposing solutions? Pre-vetted talent pools solve this challenge by front-loading the hard work: instead of each company verifying expertise in model risk management or explainable AI, these platforms ensure candidates are already compliance-ready.

For example, one of our fintech clients had been searching for a senior ML engineer for their lending platform for five months with no success. Candidates with strong ML skills lacked finance domain knowledge, and those with finance experience weren’t technical enough. Through a global, compliance-focused search, we connected them with a developer from Poland who had built credit models for a major European bank. He understood both the technical architecture and regulatory frameworks and was productive from the start. The role filled in three weeks instead of five months.

Third, build global teams strategically

A company can now pair US-based compliance expertise with CEE-based engineering talent. The time-zone overlap is manageable. The cost structure is sustainable. The knowledge transfer flows in both directions.

We must stop viewing remote talent as a temporary stopgap and start seeing it as the foundational structure for high-performance, compliant AI teams. Remote tech talent is highly valued because it brings geographic diversity of thought, which directly combats groupthink and, crucially, algorithmic bias. A global team, drawing on diverse socio-economic and regulatory backgrounds, is better equipped to spot and mitigate the biases inherent in models designed to serve a global customer base.

Firms that adopt such a talent strategy early will likely build more robust, scalable, and trustworthy AI platforms. They will be better prepared when regulations tighten, when audits come, or when fairness and transparency are scrutinized.

Looking Forward

Financial services are at a crossroads. Everyone agrees AI will transform the industry, and billions are being invested. Yet most initiatives remain experimental or stalled in compliance review.

Moving past that stall means rethinking how talent is sourced and evaluated.

- Stop searching locally for global problems. The specialized skills fintech AI demands are spread across the world. Access them.

- Stop hiring in sequence. Don’t build the model first and layer compliance on later. Bring regulatory thinking into the engineering process from the start.

- Stop treating remote talent as a fallback. The developers who can build compliant ML models often aren’t in your city, and that’s okay. Build systems that let great people work from anywhere.

The future of finance is about Responsible AI. And the only way to achieve it is by hiring Responsible AI builders. The talent exists. The question is whether you are looking in the right places.

Global networks like Index.dev are helping fintech companies connect with pre-vetted AI developers ready to build compliant, scalable, and auditable machine learning solutions, turning hard-to-fill roles into strategic hires.
