Your cloud bill jumped 40% last month. Finance wants answers. Engineering doesn't know why. The DevOps team says "we're not managing costs, that's not our job." Nobody owns it. So nothing changes. Repeat next month.

According to the FinOps Foundation's 2025 data, 50% of FinOps practitioners list workload optimization as their top priority. But priorities don't pay bills. Results do.

Need experts who can reduce your cloud bill? Hire pre-vetted cloud, DevOps, and FinOps engineers through Index.dev and start cutting waste fast.

The Real Problem: Complexity, Not Visibility

Recent surveys show executives estimate approximately 30% of cloud compute spending is wasted, while 52% of engineering leaders admit the disconnect between FinOps and development teams directly drives that waste. The data is usually there. What's missing is the ownership and the bandwidth to act on it.

As one practitioner on Hacker News put it:

"The biggest threat to cloud vendors is that everyone wakes up tomorrow and cost optimizes the crap out of their infrastructure. I don't think it's hyperbolic to say that global cloud spending could drop by 50% in 3 months if everyone just did a good audit and cleaned up their deployments."

The follow-up response cuts deeper:

"Even with AWS telling you exactly how to save money (they have a thousand different dashboards showing you where you can save), it'll still take you months to work through all the cost optimization changes. Since it's annoying and complicated to do, most people won't do it."

This isn't a visibility problem. It's a complexity problem.

The seven platforms covered here solve that. They handle the messy work: finding waste, allocating costs fairly, and automating optimizations before they become somebody else's problem. Most mature organizations layer multiple tools together. But even one deployed correctly prevents the financial hemorrhaging most companies accept as inevitable.

For teams building internal FinOps capacity, Index.dev connects you with developers and DevOps engineers who understand cloud architecture, cost optimization patterns, and infrastructure-as-code practices. Implementation partners accelerate adoption far beyond what internal teams achieve solo.

Understanding Where the Money Goes (And Why Nobody Owns It)

Cloud waste isn't a mystery. It's predictable. It compounds. And it lives in the gaps between teams.

Before jumping into platforms, understand what you're fighting.

Where Cost Control Fails

Recent surveys show 78% of organizations believe they're wasting between 21% and 50% of cloud spend. That's not an outside accusation. It's a self-reported admission of failure.

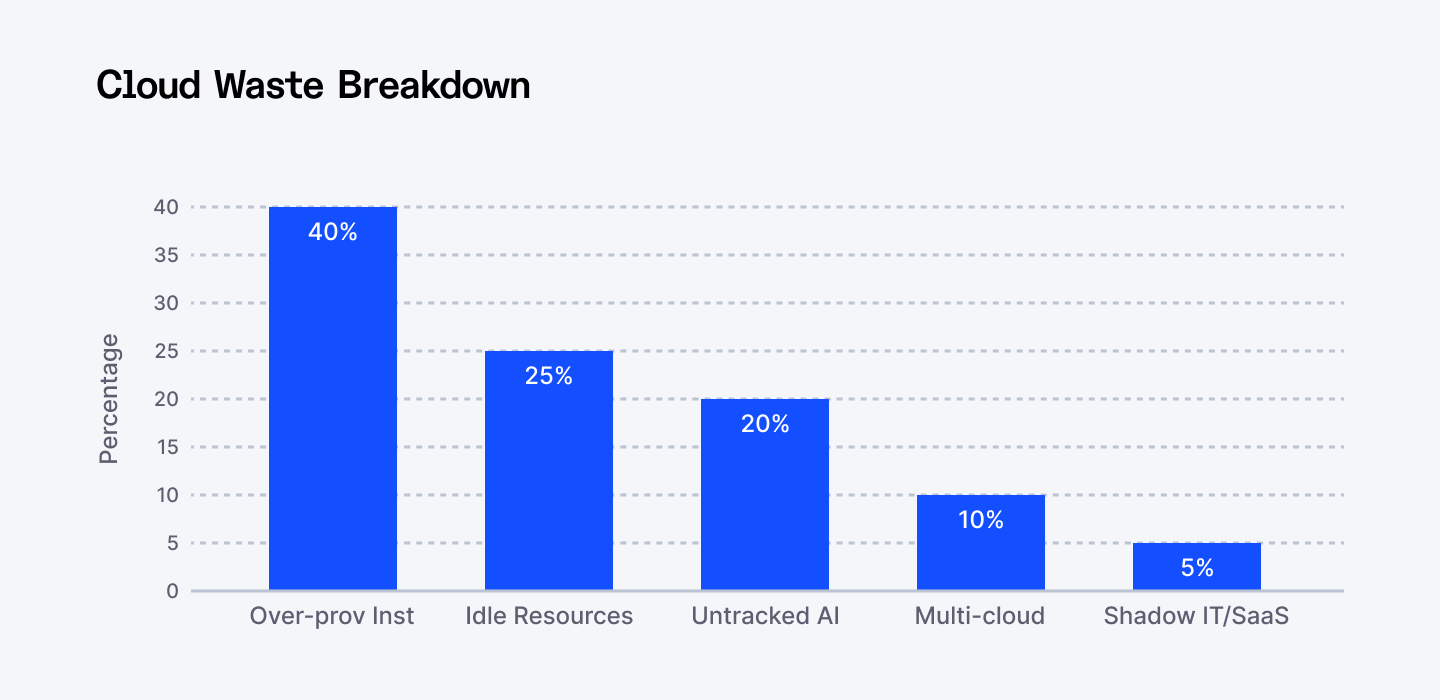

The causes cluster predictably:

- Visibility gaps create ownership voids. 7 out of 10 companies can't trace their cloud budget to specific resources or teams. Over-provisioned instances, abandoned EBS volumes, and idle databases sit untouched. If you can't see the spend, you can't control it.

- Resource mismatches compound monthly. Oversized instances, underutilized databases, and storage nobody accesses all cost money for doing nothing. About 49% of cloud waste stems from incorrect resource sizing.

- AI complexity magnifies the problem. Teams run LLM experiments, token usage varies wildly, and fine-tuning multiplies costs. Most teams have no visibility into token economics; they just pay the bill.

- Multi-cloud sprawl hides waste. 80% of organizations use multiple cloud providers. Costs fragment across platforms, tracking gets harder, and optimization opportunities hide in the gaps.

Companies now waste an estimated $44.5 billion in cloud infrastructure costs annually due to poor management. That's a billion with a B.

Why Tools Matter More Than Dashboards

Dashboards don't save money. Policies and automation do.

The seven platforms below deliver three things:

- Visibility (connecting spend to actual business units)

- Ownership (assigning costs to teams)

- Automation (preventing waste before it happens)

Different platforms excel at different layers. Most mature organizations combine them.

The Platforms That Work

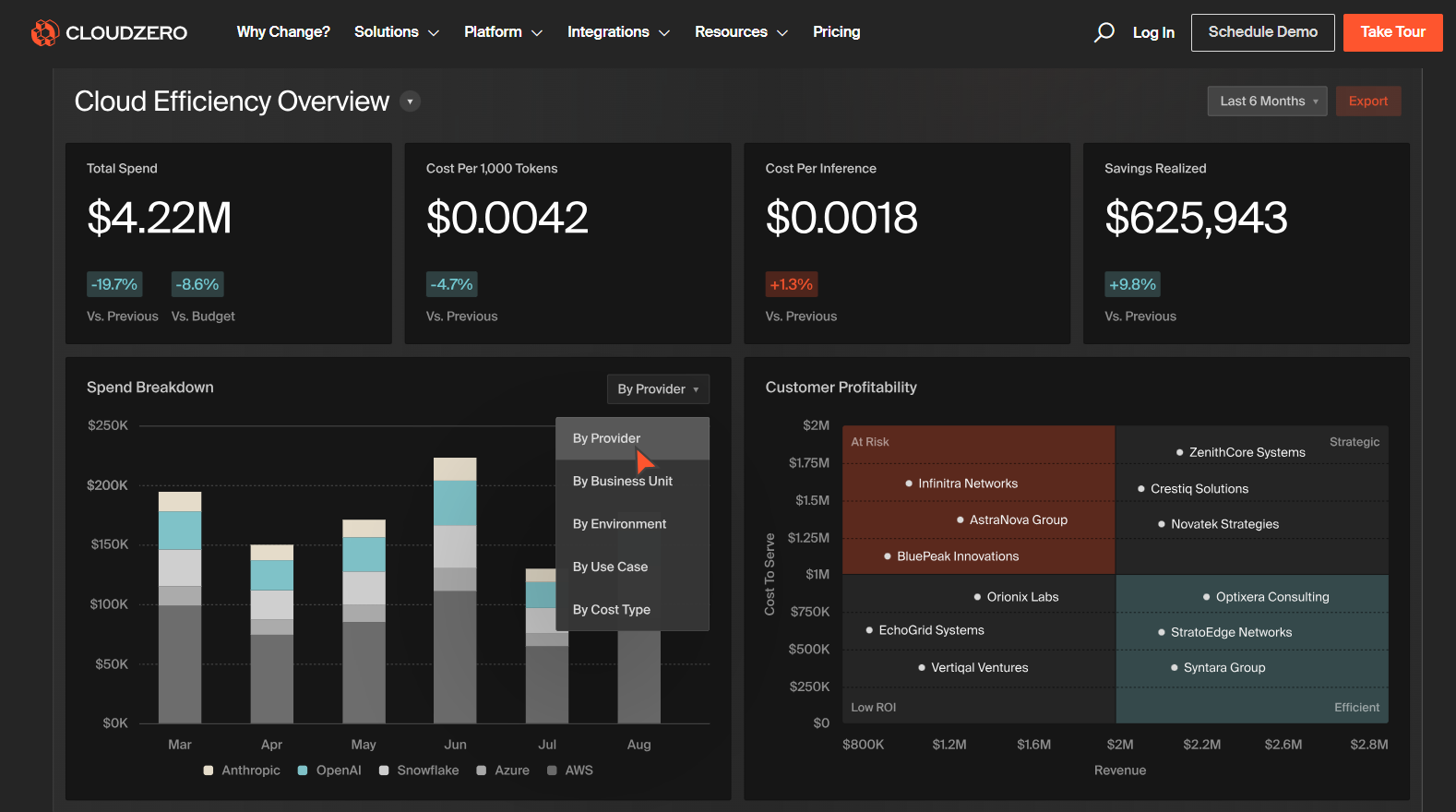

1. CloudZero: Granular Cost Attribution Without the Tagging Hell

CloudZero connects infrastructure spending to business outcomes. Instead of "that Kubernetes cluster costs $5,000/month," it tells you "that cluster serving mobile customers in the EU costs $5,000/month."

The platform ingests AWS, Azure, GCP, Kubernetes, Snowflake, Databricks, and AI providers like OpenAI, Anthropic, and CoreWeave. Token-level LLM cost tracking is built into the core engine rather than bolted on as a feature, and over 90% of CloudZero customers now ingest AI-related spend into the platform.

Here's what that looks like in practice: A global SaaS platform managing 40 million users ran over 50 LLMs across multiple regions. Their engineering teams couldn't allocate AI spend by customer, geography, or user tier. CloudZero's ingestion engine eliminated that problem.

Result: They uncovered $1 million in immediate savings by optimizing inference workloads and leveraging token caching, plus a 50% reduction in compute spend. Within months, they had granular allocations by customer, region, app, OS (Mac vs. Windows), and user tier. The unit economics became transparent. AI spending transformed from a black box into a controllable investment.

The platform doesn't require perfect tagging infrastructure. CloudZero's ingestion engine works around gaps in your setup—it's built for fast-moving AI development environments where manual tagging falls behind reality.

2. Vantage: AI Workloads Across Multiple Clouds

Vantage specializes in multi-cloud AI cost management. The platform connects AWS, Azure, GCP, and 20+ providers including OpenAI and Anthropic. GPU costs—critical for AI workloads—get granular visibility.

The edge: ML-powered forecasting and optimization recommendations. Vantage analyzes your infrastructure patterns and surfaces cost-cutting opportunities automatically. The platform maintains tagging hygiene across complex AI infrastructures and provides unified cost reporting that actually makes sense when you're split across three clouds.

Development teams get custom cost allocation that maps AI expenses directly to specific projects or business units. Smaller teams benefit from clean dashboards and real-time Slack alerts. Enterprises appreciate the integration depth and scalability.

Vantage maintains a reputation for supporting the full FinOps practice, not just cost dashboards. Organizations report significant improvements in AI cost predictability and optimization efficiency—the metrics that actually matter for budget planning.

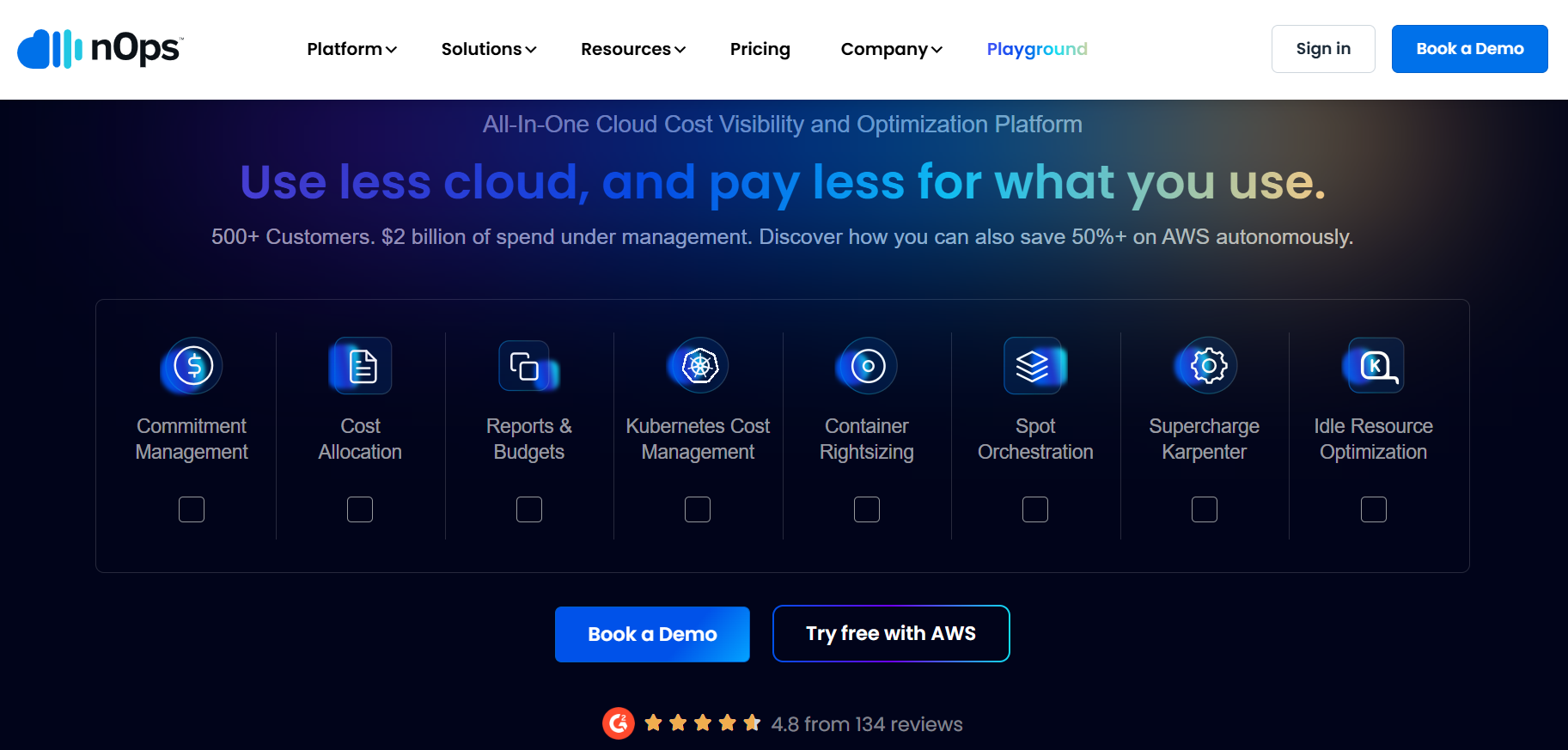

3. nOps: AWS Optimization with AI Intelligence

nOps manages $2+ billion in cloud spend across customers and recently earned top rankings in G2's cloud cost management category. But the real innovation is AI-specific.

The platform introduced AI Model Provider Recommendations that analyze your OpenAI usage patterns. It then estimates potential savings by comparing your costs against Amazon Nova models and other alternatives. Teams routinely save 20-50% by switching to more cost-effective models without sacrificing quality.

Here's how it works: the system analyzes your token usage and request counts, generates side-by-side cost comparisons, and delivers ROI calculations automatically. No manual analysis. No guesswork. The ML engine just runs and tells you where the money's hiding.
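To make the side-by-side comparison concrete, here is a minimal Python sketch of the arithmetic involved. It is not nOps' implementation: the model names, the per-million-token prices, and the `compare` helper are all hypothetical, and the sketch says nothing about output quality, which any real switch would also need to test.

```python
from dataclasses import dataclass

@dataclass
class ModelPrice:
    name: str
    input_per_1m: float   # USD per 1M input tokens (hypothetical figure)
    output_per_1m: float  # USD per 1M output tokens (hypothetical figure)

def monthly_cost(price, input_tokens, output_tokens):
    """Cost of a month's traffic at the given per-token prices."""
    return (input_tokens / 1_000_000 * price.input_per_1m
            + output_tokens / 1_000_000 * price.output_per_1m)

def compare(current, candidate, input_tokens, output_tokens):
    """Price the same token volume on two models and report the difference."""
    cur = monthly_cost(current, input_tokens, output_tokens)
    cand = monthly_cost(candidate, input_tokens, output_tokens)
    savings = cur - cand
    return {
        "current_cost": cur,
        "candidate_cost": cand,
        "savings": savings,
        "savings_pct": savings / cur * 100 if cur else 0.0,
    }

# Made-up prices and volumes, for illustration only.
current = ModelPrice("current-model", input_per_1m=10.0, output_per_1m=30.0)
candidate = ModelPrice("candidate-model", input_per_1m=6.0, output_per_1m=18.0)
report = compare(current, candidate, input_tokens=50_000_000, output_tokens=10_000_000)
print(report)
```

The useful part is pricing your actual token mix rather than comparing list prices, because input-heavy and output-heavy workloads can rank the same two models differently.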

For teams managing both traditional cloud infrastructure and AI workloads, nOps consolidates visibility into one platform with automated optimization. Real-time anomaly detection, broken down by project, API, business metric, or team, alerts teams to unusual spending before it compounds into a major surprise.

4. Harness: Governance-as-Code Stops Waste Before It Starts

Harness Cloud Cost Management puts cost visibility directly in the hands of engineers. The platform specializes in Kubernetes cost visibility without requiring engineers to tag resources—a critical distinction from tools that demand perfect tagging discipline before delivering value.

Documented impact: software company Relativity reduced its cloud spend by $8,000 per day using Harness, saving $3 million over 5 months.

The standout feature is Cloud AutoStopping, which automatically detects and shuts down idle non-production resources like testing infrastructure or development clusters. Organizations achieve up to 70% cost reductions on non-production workloads by ensuring resources only consume budget when actively needed.
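The idea behind stopping idle resources can be sketched in a few lines of Python. This is an illustration of the general rule, not how Harness implements AutoStopping: the 30-minute threshold, the resource fields, and the `resources_to_stop` helper are all assumptions.

```python
from datetime import datetime, timedelta

IDLE_AFTER = timedelta(minutes=30)  # hypothetical idle threshold

def resources_to_stop(resources, now, idle_after=IDLE_AFTER):
    """Return names of running non-production resources with no recent traffic."""
    return [
        r["name"]
        for r in resources
        if r["env"] != "prod"
        and r["running"]
        and now - r["last_request_at"] >= idle_after
    ]

now = datetime(2025, 1, 15, 12, 0)
fleet = [
    {"name": "dev-api", "env": "dev", "running": True,
     "last_request_at": now - timedelta(hours=3)},
    {"name": "qa-db", "env": "qa", "running": True,
     "last_request_at": now - timedelta(minutes=5)},
    {"name": "prod-api", "env": "prod", "running": True,
     "last_request_at": now - timedelta(hours=8)},
]

idle = resources_to_stop(fleet, now)
print(idle)
```

Production is excluded by rule, not by threshold, so a quiet production service is never a candidate no matter how long it has been idle.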

Governance-as-Code using Harness Cloud Asset Governance enables proactive policy enforcement across multi-cloud and Kubernetes resources. AI-powered Harness AIDA generates YAML policies for cost, security, and compliance management—bridging the gap between engineering and finance without manual intervention.

The platform aggregates cost and utilization metrics across AWS, Azure, GCP, and Kubernetes into a single dashboard. Anomaly detection identifies abnormal cost spikes, stopping bill shock before it compounds.
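For a sense of what cost anomaly detection does under the hood, here is a deliberately simple Python sketch that flags a day when spend sits far above its trailing average. Commercial tools use more sophisticated models; the 7-day window, the threshold of 3 standard deviations, and the `find_anomalies` helper are assumptions made for illustration.

```python
from statistics import mean, stdev

def find_anomalies(daily_costs, window=7, threshold=3.0):
    """Return indexes of days whose cost exceeds the trailing-window mean
    by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip perfectly flat history, where a z-score is undefined.
        if sigma > 0 and (daily_costs[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies

# Made-up daily spend in USD; day 8 contains a spike.
costs = [100, 102, 98, 101, 99, 103, 97, 100, 250, 101]
spikes = find_anomalies(costs)
print(spikes)
```

Only upward deviations are flagged here, since an unexpectedly cheap day is rarely the thing that causes bill shock.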

5. Kubecost: Kubernetes Cost Precision

Kubecost, now part of IBM, provides real-time cost monitoring specifically for Kubernetes environments. The platform maps costs to Kubernetes-native objects like namespaces, deployments, and services. You get precise cost views for specific infrastructure objects instead of guessing at shared costs.

The recommendation engine typically identifies $97 per month in waste from over-provisioned containers with excessive CPU and memory requests. By right-sizing resource requests to match actual utilization, teams need fewer nodes, translating directly into lower bills.
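Right-sizing usually comes down to comparing what a container requests with what it actually uses. The Python sketch below shows one common heuristic, a high percentile of observed usage plus headroom. It is not Kubecost's algorithm: the 95th percentile, the 15% headroom, the sample data, and the `recommend_request` helper are all assumptions.

```python
import math

def recommend_request(samples, percentile=95, headroom_pct=15):
    """Suggest a CPU request in millicores: a high percentile of
    observed usage, plus a safety margin."""
    ordered = sorted(samples)
    # Nearest-rank percentile: the smallest sample with at least
    # `percentile` percent of all samples at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return math.ceil(ordered[rank - 1] * (100 + headroom_pct) / 100)

usage = [120, 135, 110, 150, 140, 125, 130, 145, 115, 160]  # observed millicores
current_request = 1000  # what the container asks for today

suggested = recommend_request(usage)
reclaimed = current_request - suggested
print(suggested, reclaimed)
```

The reclaimed millicores are what let the scheduler pack more pods per node, which is where the lower node count comes from.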

Real-time alerting notifies teams when costs spike or configured thresholds are crossed. Showback and chargeback capabilities let organizations attribute costs to teams and encourage accountable usage.
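Chargeback for a shared cluster is, at its simplest, a proportional split. Here is a minimal Python sketch, assuming usage is measured in CPU-hours per team; the team names, the figures, and the `chargeback` helper are hypothetical, and real tools also weigh memory, storage, and idle capacity.

```python
def chargeback(cluster_cost, usage_by_team):
    """Split a shared cluster bill across teams in proportion to usage."""
    total = sum(usage_by_team.values())
    if total == 0:
        return {team: 0.0 for team in usage_by_team}
    return {team: cluster_cost * used / total
            for team, used in usage_by_team.items()}

cpu_hours = {"payments": 600, "search": 300, "ml-platform": 100}
bill = chargeback(5000.0, cpu_hours)
print(bill)
```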

The limitation: optimization remains mostly manual. Teams must implement recommendations themselves. The open-source version works for single-cluster cost monitoring with basic insights—suitable for smaller deployments. Enterprise features like multi-cluster visibility, governance, and support require paid tiers.

Still, for organizations running containerized AI workloads or traditional microservices on Kubernetes, Kubecost delivers unmatched granularity into where every dollar goes.

6. WrangleAI: LLM Token Control Across Providers

WrangleAI specializes in one problem: runaway LLM costs. The platform provides token-level visibility into every LLM request across OpenAI, Anthropic, Gemini, and custom APIs. It's built around the reality that most companies now juggle multiple models simultaneously.

The standout feature: Intelligent Routing. The system automatically selects the best model for each workload—sending routine queries to cheaper distilled models while reserving premium models for complex tasks. Teams routinely cut premium-model usage by 40-70% through smart routing and caching.
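Routing plus caching can be illustrated with a toy Python example. This is not WrangleAI's routing logic: the keyword heuristic, the length threshold, the tier names, and the `Router` class are assumptions, and `fake_call` stands in for a real provider client.

```python
def pick_model(prompt, length_threshold=200):
    """Naive heuristic: long prompts, or ones that ask for reasoning,
    go to the premium tier. Everything else goes to the small tier."""
    keywords = ("analyze", "prove", "step by step")
    needs_reasoning = any(k in prompt.lower() for k in keywords)
    if needs_reasoning or len(prompt) > length_threshold:
        return "premium"
    return "small"

class Router:
    def __init__(self):
        self.cache = {}  # prompt -> (model, response)
        self.calls = {"small": 0, "premium": 0, "cache": 0}

    def route(self, prompt, call_model):
        # Identical prompts are answered from the cache at no token cost.
        if prompt in self.cache:
            self.calls["cache"] += 1
            return self.cache[prompt]
        model = pick_model(prompt)
        self.calls[model] += 1
        result = (model, call_model(model, prompt))
        self.cache[prompt] = result
        return result

def fake_call(model, prompt):
    """Stand-in for a real provider client."""
    return f"[{model}] answer"

router = Router()
router.route("What are your opening hours?", fake_call)
router.route("Analyze this contract step by step.", fake_call)
router.route("What are your opening hours?", fake_call)  # served from cache
print(router.calls)
```

Tracking the `calls` counters is what lets you measure how much premium-model traffic the routing actually avoided.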

Real-time dashboards show token usage, costs, and model performance from a single interface. Scoped API keys segment access by team or environment. Prompt-level tracking identifies expensive prompts begging for optimization. Spend caps and alerts prevent runaway bills.

For organizations deploying multiple LLMs, WrangleAI consolidates visibility and comparison data—ChatGPT vs. Claude vs. Gemini vs. custom models. Unlike generic cost tools that only track usage, WrangleAI actively optimizes spending through automated routing and proactive controls.

The token analysis capabilities help teams understand exactly where spending occurs, enabling data-driven decisions about model selection and architectural improvements.

7. Zluri: SaaS Stack Hygiene Prevents Duplicate Spend

Zluri addresses traditional SaaS cost optimization. The platform uses nine discovery methods to identify all SaaS applications within organizations, including shadow IT and rarely-used tools.

Beyond listing applications, Zluri surfaces which users access each tool, which apps are critical, compliance data, and risk scores. The renewal calendar prevents surprise costs by tracking upcoming renewals across the entire portfolio. Vendor management consolidates contracts, showing active agreements and expirations at a glance.

Companies typically waste 30% of SaaS spend on duplicate tools and orphaned subscriptions. Zluri's automated recommendations analyze usage patterns and functionality to suggest alternatives offering similar features at lower costs. The data-driven approach guides smarter decisions during procurement and renewal processes.

For organizations managing both traditional SaaS and emerging AI tools, Zluri recently extended into AI governance with Next-Gen Identity Governance & Administration capabilities—providing complete visibility into AI landscapes with intelligent risk controls and automated access management.

Choosing Your Stack: Stop Treating This Like a Single-Tool Problem

No single platform solves everything. The organizational context matters. The pain point matters. The budget matters.

- Use CloudZero, Vantage, or nOps when you need to connect cloud and AI spending to business outcomes. They excel at tracking GPU usage, API costs, and token economics across multi-cloud environments.

- Use Harness and Kubecost when Kubernetes cost control is your bottleneck. Harness's no-tagging-required visibility solves governance lag. Kubecost delivers container-level precision when you want granular data and are prepared to apply the recommendations yourself.

- Use WrangleAI if you're scaling LLM workloads. Token-level tracking and intelligent routing prevent the cost surprises that blindside teams.

- Use Zluri when SaaS sprawl is out of control. Discovery and license reclamation deliver quick wins before tackling infrastructure complexity.

Most mature organizations combine platforms. Use Zluri for vendor management and SaaS hygiene. Add CloudZero or Vantage for cloud and AI costs. Layer in WrangleAI if LLM workloads matter. If multi-cloud allocation is still messy, supplement with a dedicated allocation tool such as Finout, which is not one of the seven covered here.

The key is matching platform capabilities to your specific friction points. If 7 out of 10 team members can't explain your cloud bill, you need allocation tools. If AI experiments multiply without ROI visibility, prioritize token tracking. If renewals catch finance teams by surprise, focus on vendor management automation.

Implementation: Why Buying a Tool Is Only 20% of the Work

Buying a platform doesn't cut costs. Implementation determines the other 80%.

1. Start With One Metric (And Stop Being Vague)

Define what "optimized" means for your organization. Maybe it's 20% lower cloud spend. Maybe it's zero surprise renewals. Maybe it's complete AI cost visibility. Pick one.

2. Connect All Data Sources

Incomplete integrations produce incomplete insights. Treat complete data ingestion as non-negotiable. Manual setup creates gaps that hide waste.

3. Establish Cross-Functional Ownership

Engineering teams need cost data integrated into their workflows—not a separate dashboard they check once quarterly. Finance requires detailed allocation and forecasting. Product teams benefit from understanding feature-level costs and ROI.

4. Automate Everything Possible

WrangleAI's intelligent routing, nOps' optimization recommendations, and Zluri's license reclamation all work best when automated. Manual reviews of suggestions delay savings. Set policies and let tools execute.

5. Monitor Business Metrics, Not Just Dashboards

Monitor cost per customer, cost per feature, or cost per transaction depending on your model. Watch efficiency ratios like cloud spend as a percentage of revenue. Measure time-to-detection for cost anomalies.

These indicators show whether platforms deliver promised value or just add dashboards nobody uses.
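The metrics above are simple ratios, which is part of their appeal. Here is a minimal Python sketch; the input figures are made up, and the `unit_metrics` and `time_to_detection` helpers are hypothetical names, not part of any platform's API.

```python
from datetime import datetime

def unit_metrics(cloud_spend, revenue, active_customers, transactions):
    """Turn a monthly bill into business-facing ratios."""
    return {
        "cost_per_customer": cloud_spend / active_customers,
        "cost_per_transaction": cloud_spend / transactions,
        "spend_pct_of_revenue": cloud_spend / revenue * 100,
    }

def time_to_detection(anomaly_started, alert_fired):
    """Hours between a cost anomaly starting and someone being alerted."""
    return (alert_fired - anomaly_started).total_seconds() / 3600

metrics = unit_metrics(
    cloud_spend=120_000,
    revenue=1_500_000,
    active_customers=4_000,
    transactions=2_400_000,
)
ttd_hours = time_to_detection(
    datetime(2025, 3, 1, 2, 0),
    datetime(2025, 3, 1, 20, 0),
)
print(metrics, ttd_hours)
```

Tracked month over month, these ratios show whether spend is growing faster than the business it supports.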

6. Review and Iterate Monthly

Costs change. Workloads scale. New products launch. Experiments conclude. Regular reviews catch drift before it compounds.

Start Somewhere (Not Everywhere)

The worst approach: picking all seven platforms, implementing none, and wondering why costs don't improve.

The better approach: identify your biggest pain point. Run a 30-day focused evaluation. Measure one specific outcome. CloudZero offers trials. Vantage provides free tiers. Kubecost has open-source options.

A startup might begin with Zluri for SaaS discovery, add Kubecost when Kubernetes costs climb, then layer in WrangleAI as AI workloads mature. An enterprise might deploy CloudZero across business units, supplement with Harness for governance automation, and use nOps for AWS-specific optimization.

Teams that master these platforms gain compounding advantages: lower unit costs, healthier margins, faster innovation cycles, and clarity into what actually drives business value.

According to the FinOps Foundation, half of FinOps practitioners rank workload optimization as their top priority. The pain is widely shared. What separates teams is whether they act on it.

Start somewhere. Pick a metric. Connect your data. Measure results. Repeat.

➡︎ Exploding cloud bills? Hire FinOps-savvy DevOps engineers via Index.dev in 48 hours—vetted for cost optimization and IaC mastery
