Fintech companies face a particular balancing act: transaction volumes keep climbing while customers expect fast processing around the clock. Without careful planning, infrastructure costs can quickly spiral out of control.

According to PwC, companies implementing strategic cost optimisation initiatives can achieve up to 30% savings, while maintaining or enhancing performance. Flexera also reports that around 32% of cloud spending is wasted due to inefficiencies, underscoring the need for better cloud cost management. Let’s look at some practical cost optimisation strategies in fintech infrastructure.

Optimize your fintech infrastructure costs with Index’s pre-vetted cloud specialists.

Strategy 1: Embrace Microservices and Serverless Architectures

Problem

Many fintech firms still run monolithic stacks that demand heavy over-provisioning. These monoliths carry significant technical debt: changes are slow and costly, especially around peak load. They also use resources inefficiently. A single component cannot be scaled without scaling the entire application, which results in unnecessary expense.

Solution

Segmenting applications into microservices and employing serverless architectures for event-driven workloads significantly reduces expenses. Different pieces scale independently as dictated by actual demand, with resources going precisely where needed. Serverless computing also eliminates idle capacity costs, charging only for actual function execution time and resources consumed.
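To make the idle-capacity point concrete, here is a rough sketch comparing an always-on fleet with pay-per-invocation billing. Every price and workload figure below is a hypothetical placeholder rather than a quote from any provider, and the function names are illustrative; substitute your own rates and volumes.

```python
def always_on_monthly_cost(hourly_rate: float, instances: int, hours: float = 730.0) -> float:
    """Cost of instances that run all month regardless of traffic."""
    return hourly_rate * instances * hours


def pay_per_use_monthly_cost(
    invocations: int,
    avg_duration_s: float,
    memory_gb: float,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Cost when billing follows execution time and request count only."""
    compute = invocations * avg_duration_s * memory_gb * price_per_gb_second
    requests = invocations / 1_000_000 * price_per_million_requests
    return compute + requests


# Hypothetical inputs, for illustration only
fixed = always_on_monthly_cost(hourly_rate=0.20, instances=4)
variable = pay_per_use_monthly_cost(
    invocations=2_000_000,
    avg_duration_s=0.3,
    memory_gb=0.5,
    price_per_gb_second=0.00002,
    price_per_million_requests=0.25,
)
print(f"always-on: {fixed:.2f}, pay-per-use: {variable:.2f}")
```

The comparison can flip for sustained, high-throughput workloads, so it is worth rerunning with real traffic figures before migrating a service.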

Practical Implementation

Tools to consider

- AWS Lambda and Fargate

- Azure Functions and Container Instances

- Google Cloud Functions and Cloud Run

- Kubernetes with autoscaling features

Implementation approach

- Map your domain models and data patterns to determine service boundaries

- Define asynchronous APIs clearly

- Shift occasional workloads (processing payments, generating statements) to serverless functions

- Implement container orchestration for operations requiring periodic running

- Establish automated deployment pipelines

```python
# Example AWS Lambda function for payment processing
import uuid
from decimal import Decimal

import boto3

# Create the table handle once per execution environment so warm invocations reuse it
dynamodb = boto3.resource('dynamodb')
table = dynamodb.Table('Transactions')


def process_payment(payment_details):
    # Placeholder: call your payment provider here and return a status string
    raise NotImplementedError


def lambda_handler(event, context):
    transaction_id = str(uuid.uuid4())

    # Process payment logic here
    payment_status = process_payment(event['payment_details'])

    # Store transaction with minimal compute resources
    table.put_item(
        Item={
            'transaction_id': transaction_id,
            'customer_id': event['customer_id'],
            # DynamoDB rejects Python floats, so store the amount as a Decimal
            'amount': Decimal(str(event['amount'])),
            'status': payment_status,
            'timestamp': event['timestamp']
        }
    )

    return {
        'transaction_id': transaction_id,
        'status': payment_status
    }
```

Success Story Powered by Index.dev

With assistance from our vetted fintech developers, a payments startup in Europe moved from a monolithic Java application to microservices with serverless elements. The outcome? Infrastructure expenses fell by 40% while transaction handling capacity grew. Their payment verification functions now cost virtually nothing during quiet periods, yet scale smoothly during spikes.

Strategy 2: Master Auto-Scaling and Right-Sizing Resources

Problem

Fintech apps experience wildly fluctuating demand, from day-to-day swings to end-of-month payroll surges. Keeping peak capacity running all month long adds unnecessary expense. The inefficiency is compounded when engineers routinely over-provision "just to be safe," choosing instance types with more capacity than they need.

Solution

Smart auto-scaling and right-sizing let your infrastructure track real demand patterns, eliminating waste without sacrificing performance. Predictive scaling uses machine learning to forecast traffic from historical data and add capacity before a spike arrives. It pairs well with graceful degradation, where less essential features are temporarily switched off during peak demand instead of over-provisioning for rare events.
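Graceful degradation can be as simple as a load-aware feature switch. The sketch below is one possible shape, not a prescribed design: the feature names and load thresholds are hypothetical, and `current_load` is assumed to be a utilisation figure (0.0 to 1.0 and above) that your monitoring already exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    essential: bool
    shed_above_load: float  # fraction of capacity at which the feature is switched off


# Hypothetical feature catalogue
FEATURES = [
    Feature("payment_authorisation", essential=True, shed_above_load=1.0),
    Feature("spending_insights", essential=False, shed_above_load=0.80),
    Feature("statement_export", essential=False, shed_above_load=0.90),
]


def enabled_features(current_load: float) -> list[str]:
    """Return the features that stay on at the given load."""
    return [
        f.name
        for f in FEATURES
        if f.essential or current_load < f.shed_above_load
    ]
```

Essential features are never shed here, whatever the load; only the optional ones drop out as utilisation climbs.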

Practical Implementation

Tools to consider

- AWS Auto Scaling with Predictive Scaling

- Google Cloud Autoscaler with Horizontal Pod Autoscaling

- Azure Autoscale with VM Scale Sets

- Kubernetes Horizontal Pod Autoscaler

Implementation approach

- Examine historical usage to identify predictable changes in demand

- Set metrics-based scaling policies (CPU usage, number of requests, queue length)

- Set appropriate thresholds with cooldown periods

- Set predictive scaling for known peak times

- Monitor instance utilisation regularly and make size adjustments
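For intuition on how a metrics-based scaling policy turns a reading into a replica count, here is a simplified sketch of the calculation the Kubernetes Horizontal Pod Autoscaler documents: scale the current replica count by the ratio of observed to target metric, round up, and skip changes within a small tolerance. It ignores stabilisation windows, pod readiness and multiple metrics, and the default bounds of 3 and 50 replicas are illustrative assumptions.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_value: float,
    target_value: float,
    min_replicas: int = 3,
    max_replicas: int = 50,
    tolerance: float = 0.1,
) -> int:
    """Simplified HPA-style replica calculation for a single metric."""
    ratio = current_value / target_value
    # Within the tolerance band, leave the replica count unchanged
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds
    return max(min_replicas, min(max_replicas, desired))
```

For example, 10 replicas averaging 90% CPU against a 70% target would be scaled to 13, while a reading of 72% falls inside the tolerance band and leaves the count at 10.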

```yaml
# Example Kubernetes HPA configuration for a payment API
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: payment-api-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: payment-api
  minReplicas: 3
  maxReplicas: 50
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
    - type: Pods
      pods:
        metric:
          name: transactions_per_second
        target:
          type: AverageValue
          averageValue: 1000
  behavior:
    scaleUp:
      stabilizationWindowSeconds: 60
    scaleDown:
      stabilizationWindowSeconds: 300
```

Success Story Powered by Index.dev

Under the guidance of our fintech developers, a digital bank introduced custom scaling metrics based on transaction volume. After monitoring real usage, they right-sized core instances from r5.2xlarge to r5.xlarge, saving £25,000 per month without sacrificing performance. Their system now absorbs month-end payroll surges (300% of regular volume) automatically and scales back in efficiently afterwards.

Strategy 3: Leverage Spot Instances and Reserved Pricing Models

Problem

The majority of fintech firms default to on-demand pricing for all workloads. This flexibility comes at a steep premium, often 2-3x higher than alternative purchasing models. The complexity of cloud provider pricing options creates decision paralysis, causing teams to stick with simple but expensive on-demand instances.

Solution

A diversified instance purchasing strategy matches pricing models to workload profiles. Steady, mission-critical workloads benefit from reserved instances, while interruptible workloads can run on spot instances at a steep discount. Modern spot instance management platforms now offer interruption prediction and automated instance rebalancing, which eases concerns over reliability.

Practical Implementation

Tools to consider

- AWS Spot Instances, Reserved Instances, Savings Plans

- Azure Spot VMs and Reserved VM Instances

- Google Cloud Spot VMs (the successor to Preemptible VMs) and Committed Use Discounts

- Spot.io for cross-cloud management

Implementation approach

- Sort workloads by criticality and interruption tolerance

- Use spot instances for batch processing, risk simulations and analytics

- Build fault tolerance with checkpointing, stateless design

- Purchase reservations for stable workloads (databases, API servers)

- Deploy instance selection tools for optimal type selection
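The checkpointing step above is what makes spot interruptions tolerable for batch work. A minimal sketch follows, assuming a job that processes items in order and can safely repeat an item (idempotent processing). The function names are hypothetical, and a local file stands in for the durable store (object storage, a database) you would use on a real spot instance.

```python
import json
import os
import tempfile


def load_checkpoint(path: str) -> int:
    """Return the index of the next unprocessed item (0 if no checkpoint exists)."""
    if not os.path.exists(path):
        return 0
    with open(path) as f:
        return json.load(f)["next_index"]


def save_checkpoint(path: str, next_index: int) -> None:
    """Write the checkpoint atomically so an interruption cannot corrupt it."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump({"next_index": next_index}, f)
    os.replace(tmp_path, path)


def run_batch(items, process, checkpoint_path: str, every: int = 100) -> None:
    """Process items in order, resuming from the last saved checkpoint."""
    start = load_checkpoint(checkpoint_path)
    for i in range(start, len(items)):
        process(items[i])
        if (i + 1) % every == 0:
            save_checkpoint(checkpoint_path, i + 1)
    save_checkpoint(checkpoint_path, len(items))
```

Items handled after the last checkpoint but before an interruption are processed again on restart, which is why `process` needs to be idempotent. A smaller `every` reduces repeated work at the cost of more checkpoint writes.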

```hcl
# Terraform example for AWS spot instance configuration
resource "aws_spot_instance_request" "analytics_worker" {
  count                          = 5
  ami                            = "ami-0c55b159cbfafe1f0"
  spot_price                     = "0.10"
  instance_type                  = "c5.2xlarge"
  wait_for_fulfillment           = true
  spot_type                      = "persistent"
  instance_interruption_behavior = "stop"

  tags = {
    Name        = "analytics-worker-${count.index}"
    Environment = "production"
    CostCenter  = "analytics"
  }
}
```

Success Story Powered by Index.dev

A crypto trading platform cut costs by nearly 60% with a hybrid setup designed by Index.dev developers. They moved critical systems such as the trading engine and APIs to reserved instances, saving 40% on those workloads. For data-heavy tasks like risk simulation and backtesting, our developers switched to spot instances with a resilient setup, cutting those costs by up to 80%. The result? Savings in the hundreds of thousands every year.

Strategy 4: Optimise Data Storage Costs

Problem

Fintech applications generate mountains of data that must be stored for operational and compliance purposes. With 5-7 year retention being the norm, storage expenses keep accumulating year after year. Conventional single-tier storage charges the same rate for all data, irrespective of access frequency. The database licensing models used by many financial institutions also create hidden costs that often far exceed the storage costs themselves.

Solution

Tiered storage, lifecycle management policies and efficient caching can cut storage costs while preserving compliance and performance. Data partitioning strategies, such as time-based sharding, shrink active database sizes further while keeping historical data available when it is needed. Open-source databases can remove expensive licensing schemes without compromising on reliability or compliance features.
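As an illustration of the tiering idea, the sketch below maps a record's age to a storage tier. The tier names and day thresholds are hypothetical and would need to match your own access patterns and retention obligations; in practice the cloud provider's lifecycle rules usually perform this transition for you.

```python
from datetime import date

# Hypothetical thresholds in days: a record moves to the next tier
# once its age reaches the limit of the current one.
TIERS = [
    (30, "hot"),      # recent data in primary storage
    (90, "warm"),     # infrequently accessed
    (1825, "cold"),   # archival, slower retrieval
]
RETENTION_DAYS = 2555  # roughly seven years


def storage_tier(created: date, today: date) -> str:
    """Pick a storage tier from the age of the data."""
    age = (today - created).days
    if age >= RETENTION_DAYS:
        return "expired"
    for limit, tier in TIERS:
        if age < limit:
            return tier
    return "deep_archive"
```

Keeping the thresholds in one table makes it easy to review them alongside the compliance team, rather than scattering them through application code.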

Practical Implementation

Tools to consider

- Amazon S3 with Intelligent Tiering and Glacier

- Google Cloud Storage with Nearline and Coldline

- Azure Blob Storage with Access Tiers

- Redis or Memcached for caching

- TimescaleDB for time-series data

Implementation approach

- Categorise data by access frequency and compliance requirements

- Establish automated lifecycle policies for cold storage migration

- Migrate to columnar databases for analytics workloads

- Provision caching for high-access data

- Implement compression where necessary
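The caching step above is commonly implemented with the cache-aside pattern: read from the cache first, fall back to the database on a miss, then populate the cache. The sketch below uses a small in-memory class as a stand-in for Redis or Memcached so that it runs without external services. `TTLCache`, `get_account` and `load_from_db` are hypothetical names, not part of any library.

```python
import time


class TTLCache:
    """Tiny in-memory stand-in for Redis or Memcached."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            # Entry has outlived its TTL: drop it and report a miss
            del self._store[key]
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self._clock() + self._ttl)


def get_account(account_id, cache, load_from_db):
    """Cache-aside read: try the cache, fall back to the database, then populate."""
    cached = cache.get(account_id)
    if cached is not None:
        return cached
    account = load_from_db(account_id)
    cache.set(account_id, account)
    return account
```

Write paths still need to update or invalidate the cached entry, and fast-changing values such as balances generally call for a short TTL or no caching at all.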

Example S3 lifecycle policy configuration:

```json
{
  "Rules": [
    {
      "ID": "Transaction Data Lifecycle",
      "Status": "Enabled",
      "Filter": {
        "Prefix": "transactions/"
      },
      "Transitions": [
        {
          "Days": 30,
          "StorageClass": "STANDARD_IA"
        },
        {
          "Days": 90,
          "StorageClass": "GLACIER"
        },
        {
          "Days": 1825,
          "StorageClass": "DEEP_ARCHIVE"
        }
      ],
      "Expiration": {
        "Days": 2555
      }
    }
  ]
}
```

Success Story Powered by Index.dev

A payments processing company approached Index after facing backlash over processing lags. Our developers added a Redis cache for high-frequency account lookups, reducing database reads by 75%. They also created a tiered strategy that keeps only 90 days of data in primary storage, moves older data to lower-cost storage classes and archives historical data to cold storage. This reduced database costs by 35% and overall storage spending by 50%.

Strategy 5: Adopt FinOps for Continuous Optimisation

Problem

Development teams provision cloud resources while finance manages budgets. This disconnect leads to unchecked spending, unclear accountability and missed optimisation opportunities. Fintech organisations frequently lack visibility into granular cost attribution, making it impossible to connect infrastructure spending to specific product features or revenue streams.

Solution

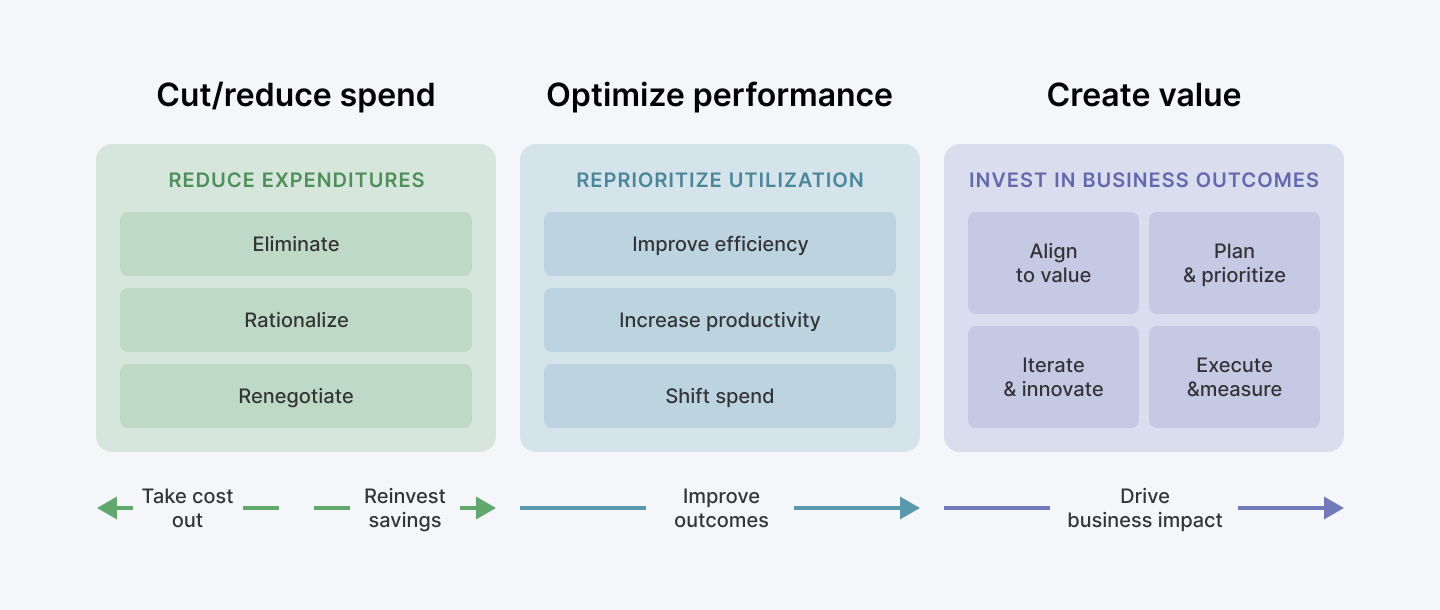

With FinOps (Financial Operations) practices, business, finance and technology teams work together on cloud cost visibility, accountability and ongoing optimisation. Linking infrastructure spend to business metrics such as cost-per-transaction or cost-per-customer makes cost attribution possible and supports data-driven decisions about feature investment.
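A cost-per-transaction figure can be derived once spend is attributed to teams or products. The sketch below assumes cost records have already been exported as `(team_tag, cost)` pairs and that transaction counts are available per team. Both the data shape and the function name are assumptions for illustration, not the format of any particular billing export.

```python
from collections import defaultdict


def cost_per_transaction(cost_records, transactions_by_team):
    """Aggregate cost by team tag and divide by that team's transaction count.

    Teams with no recorded transactions map to None rather than raising.
    """
    totals = defaultdict(float)
    for team, cost in cost_records:
        totals[team] += cost

    result = {}
    for team, total in totals.items():
        count = transactions_by_team.get(team, 0)
        result[team] = total / count if count else None
    return result


# Hypothetical monthly figures
records = [
    ("payments", 1200.0),
    ("payments", 300.0),
    ("lending", 500.0),
    ("untagged", 50.0),
]
volumes = {"payments": 3_000_000, "lending": 250_000}
unit_costs = cost_per_transaction(records, volumes)
```

Spend that lands under a tag with no transactions, such as `untagged` here, is a useful signal that tagging coverage needs attention.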

Practical Implementation

Tools to consider

- CloudHealth, AWS Cost Explorer, Azure Cost Management

- Kubecost for Kubernetes spend allocation

- OpenCost for open-source monitoring

- Terraform Cloud Cost Estimation

Implementation approach

- Apply detailed tags to every resource

- Construct real-time cost dashboards accessible to engineers

- Create internal chargeback plans

- Set up anomaly detection and budget thresholds

- Arrange periodic optimisation reviews
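Anomaly detection and budget thresholds do not have to start with a dedicated tool. As a minimal sketch, assuming daily cost totals are already available as a list of numbers, the functions below flag days that sit well above the recent average and warn when month-to-date spend nears a budget. The window, threshold and `min_delta` defaults are arbitrary starting points, not recommendations.

```python
from statistics import mean, stdev


def cost_anomalies(daily_costs, window=7, threshold=3.0, min_delta=1.0):
    """Return indices of days whose cost exceeds the trailing-window mean
    by more than max(threshold * standard deviation, min_delta)."""
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        limit = mean(history) + max(threshold * stdev(history), min_delta)
        if daily_costs[i] > limit:
            anomalies.append(i)
    return anomalies


def budget_alert(month_to_date: float, budget: float, fraction: float = 0.8) -> bool:
    """True once spend reaches the given fraction of the monthly budget."""
    return month_to_date >= fraction * budget
```

One limitation of this simple approach is that a large spike inflates the average and deviation for the following window, so a second spike shortly afterwards may go unflagged.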

```shell
# Example AWS CLI command to tag resources for cost allocation
aws ec2 create-tags \
  --resources i-1234567890abcdef0 \
  --tags Key=CostCenter,Value=Payments Key=Environment,Value=Production Key=Team,Value=TransactionProcessing
```

Success Story Powered by Index.dev

A payment gateway, under the guidance of Index.dev, formed a dedicated FinOps team and applied detailed cost allocation tagging. Making costs visible to engineering teams fostered a cost-conscious culture, resulting in a 30% cost reduction despite 50% transaction growth over the same period.
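Those two figures combine into a larger per-transaction improvement than either suggests alone. The short calculation below assumes the 30% reduction refers to total infrastructure cost over the same period in which volume grew by 50%; the function name is illustrative.

```python
def unit_cost_change(cost_change: float, volume_change: float) -> float:
    """Relative change in cost per transaction, given relative changes
    in total cost and in transaction volume."""
    return (1 + cost_change) / (1 + volume_change) - 1


change = unit_cost_change(cost_change=-0.30, volume_change=0.50)
print(f"{change:.1%}")
```

Under that assumption, cost per transaction falls to 0.70 / 1.50 of its baseline, which is roughly 53% lower.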

Comparison: Cost Optimisation Approach Impact Analysis

| Optimisation Strategy | Initial Implementation Effort | Ongoing Maintenance | Typical Cost Reduction | Best Suited For |
| --- | --- | --- | --- | --- |
| Microservices / Serverless | High | Medium | 30-45% | Event-driven variable workloads |
| Auto-scaling / Right-sizing | Medium | Low | 20-35% | Predictable but fluctuating loads |
| Smart Instance Purchasing | Low | Medium | 40-70% | Stable, predictable workloads |
| Data Storage Optimisation | Medium | Low | 35-60% | Data-intensive applications |
| FinOps Implementation | Medium | Low | 25-40% | All organisations |

Beyond Cost-Cutting: Building a Sustainable Infrastructure Strategy

Optimising infrastructure costs shouldn't be viewed as a one-off project but as an ongoing discipline. The most successful fintech companies integrate cost awareness into their engineering culture without stifling innovation.

Consider these principles for sustainable optimisation:

- Make costs visible to engineers: when developers see the financial impact of their architectural choices, they tend to make more cost-effective decisions.

- Treat infrastructure as code: programmatically defined infrastructure allows version control, testing and automated cost comparison before deployment.

- Calculate the cost per transaction: raw infrastructure expenses will rise as your business grows, so aim to optimise cost per transaction rather than total spend.

- Invest in automation: manual processes introduce variability and waste. Automation ensures that cost optimisation practices are applied consistently.

- Run periodic review cycles: cloud providers regularly introduce new instance types and pricing structures. Conduct quarterly reviews to take full advantage of them.

Putting It All Together

In the competitive fintech landscape, optimising infrastructure spend creates strategic value. By adopting microservices, serverless, auto-scaling, smart instance purchasing, data storage optimisation and FinOps, fintechs can cut costs without sacrificing performance or compliance.

Start with the strategy that addresses your biggest cost drivers, measure the impact and expand from there. Over time, these efforts can transform infrastructure from a cost burden into a competitive edge. As fintech continues to accelerate, those building efficient systems today will have more room tomorrow to invest in innovation, customer experience and growth.

Transform your fintech’s cloud spending with our vetted remote Fintech developers. Get started now.