You’ve been grinding for weeks: sifting through resumes, screening candidates, and finally booking that make-or-break interview with what looks like your perfect remote developer. The chat is smooth, their answers nail the tech questions, and you’re just about ready to hire. But halfway through the video call, something nags at you. Their eyes don’t quite track naturally. The head movements are a bit robotic. The smile looks frozen. And why do they dodge when you ask them to move the camera or tweak the background? Heads up: you might be face-to-face with a deepfake, a candidate who isn’t really there.

This is happening now. Deepfake incidents in hiring have risen over 2,000% since 2022. With AI tools that can clone voices, swap faces, and generate convincing fake identities in minutes, fraudsters are slipping into hiring pipelines at an alarming rate. In fact, the FBI has already issued warnings about deepfake candidates applying for remote tech roles. Jobs in software, cybersecurity, and data engineering are prime targets. A bad hire there could mean more than wasted salary. It could mean stolen data, breached systems, or compromised IP.

In this post, we’ll break down what to watch for and how to spot the fakes before they cost you.


Why You Should Be Seriously Worried

Deepfake job applicants are a real threat. According to Palo Alto Networks, creating a convincing fake candidate now takes less than 70 minutes. Gartner predicts that by 2028, one in four job applicants worldwide could be fake. A survey by Resume Genius found that 17% of hiring managers have already come across candidates using deepfake tech in video interviews.

Why should you care?

Because these phony candidates aren’t just harmless fakes. They’re potential Trojan horses. Once inside your system, they could siphon off your company’s secrets, mess with your finances, or leave behind ransomware that holds you hostage.

Andi Stan, CSO at Index.dev, puts it bluntly:

“Deepfake candidates are flooding the tech job market at an unprecedented rate. Right now, it’s shockingly simple to make a fake video interview. You only need a single photo or short video of someone and a few seconds of their voice.”

Andi warns that if this keeps up, companies will need tools to verify candidates’ identities in real-time, or risk serious damage to their teams and systems.

The threat isn’t limited to pranksters. Many of these fake candidates come from high-risk or sanctioned countries. In May 2024, the U.S. Justice Department revealed that over 300 companies had unknowingly hired impostors linked to North Korea for remote IT roles, in a scheme that generated at least $6.8 million in revenue for the overseas workers. These actors often use stolen identities, virtual networks, and other tricks to hide their true locations. These weren't small startups with weak hiring processes. These were established companies with real HR teams, and they still got fooled.

“As AI evolves, fake job profiles are undermining trust in the hiring process,” adds Stan.

And it's not just about deepfakes. Some fraudsters skip the tech entirely. They pass the interview themselves, then send someone else to do the work: a junior developer, an offshore proxy, or a team of people rotating shifts under one identity.

Why Human Expertise Still Matters

At Index.dev, we understand the promise of AI but also its limits. Back in 2020, only about 5% of profiles we screened raised red flags for potential deepfakes. In 2025, that number has doubled to 10%, and it’s climbing. “We expect fake profiles on LinkedIn and job boards to grow another 5–7% in the next two years. It’s getting easier to generate fake identities, so companies need better defenses,” Andi adds.

We currently don’t rely on specialized AI screening tools, for one simple reason:

Human expertise catches what AI misses.

Every developer entering our platform is verified by experienced recruiters trained to spot inconsistencies and deepfake attempts. We use a proprietary method called Consistency Matching, where our recruiters cross-check what’s on the candidate’s resume with:

- LinkedIn profiles for professional history

- GitHub activity for real code contributions

- Upwork or other platforms for work samples and reputation

- Vetting calls where we compare answers and stories across stages
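One way to picture the timeline part of this cross-check is as a comparison of employer date ranges from two sources. The following is a minimal Python sketch, not Index.dev's actual Consistency Matching implementation; the function name `find_timeline_mismatches`, the data layout, and the 60-day tolerance are all illustrative assumptions.

```python
from datetime import date


def find_timeline_mismatches(
    resume: dict, profile: dict, tolerance_days: int = 60
) -> list[str]:
    """Compare employer -> (start, end) maps from a resume and a profile.

    Flags employers missing from the profile and date ranges whose start
    or end differ by more than `tolerance_days` (an assumed cutoff).
    """
    issues = []
    for employer, (r_start, r_end) in resume.items():
        if employer not in profile:
            issues.append(f"{employer}: on resume but not on profile")
            continue
        p_start, p_end = profile[employer]
        if abs((r_start - p_start).days) > tolerance_days:
            issues.append(f"{employer}: start dates differ")
        if abs((r_end - p_end).days) > tolerance_days:
            issues.append(f"{employer}: end dates differ")
    return issues
```

A non-empty result is a prompt for a follow-up question on the vetting call, not proof of fraud: honest candidates round dates or forget to update profiles too.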

Only after everything aligns does the candidate move to the final stage: a video vetting call where we verify three things that most hiring platforms never think to test:

- Identity Verification: We use fraud detection tools and human cross-checking to catch deepfakes, proxies, and inconsistencies before they ever reach your screen. The person you interview is the person who shows up on day one — verified, accountable, and real.

- Technical Capability: We test for real capability in their core stack and in AI systems — LLMs, RAG pipelines, production environments. No surface-level screening. No checkbox exercises.

- Operational Maturity: We only vet engineers who have shipped in high-stakes environments. We look for clear thinking under pressure, ownership of outcomes, and a "ship-first" accountability mindset.

The Sneaky Signs That You Might Be Interviewing a Deepfake

At Index.dev, we’ve analyzed hundreds of remote interviews, from coding assessments to skill certifications, and noticed a handful of telltale clues that scream “fake.”

Here’s what to keep an eye on:

Lips and voice are out of sync.

- Especially when the candidate gets nervous or the internet connection dips. The words don’t quite match the mouth movements.

Weird eye movement and blinking patterns.

- Either too mechanical, too perfect, or completely missing. Sometimes eyes dart in unnatural ways.


Flickering or ghostly edges around the face.

- This glitch usually shows up when deepfake rendering slips up in real time: a little “frame skip” or jitter around their jawline or forehead.


Lighting that just doesn’t fit.

- Shadows fall funny, reflections don’t match the room, or their face lighting feels off compared to the background.


Robot-like, stiff body language.

- No natural head turns or camera movements. They avoid moving, don’t shift in their seat, and camera control feels awkward or frozen.


Avoiding multi-angle views.

- When asked to show their profile or turn their head quickly, they get evasive or freeze. Deepfake tech struggles with real-time dynamic angles.


No personal or contextual answers.

- They trip over questions requiring cultural, local, or company-specific knowledge. Deepfake operators commonly struggle with unscripted conversation.

A single sign might be nothing. But if two or more appear together, it’s worth taking a closer look.
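If your interviewers log what they see, the "two or more" rule is easy to apply consistently. Here is a minimal Python sketch of such a tally; the sign names and the `needs_closer_look` helper are made up for illustration and simply mirror the list above.

```python
# Hypothetical checklist keys, one per warning sign listed above.
WARNING_SIGNS = {
    "lip_sync_mismatch",
    "odd_eye_movement",
    "flickering_face_edges",
    "inconsistent_lighting",
    "stiff_body_language",
    "avoids_multi_angle_views",
    "no_contextual_answers",
}


def needs_closer_look(observed: set[str], threshold: int = 2) -> bool:
    """Return True when at least `threshold` known signs were observed."""
    unknown = observed - WARNING_SIGNS
    if unknown:
        raise ValueError(f"Unknown signs: {sorted(unknown)}")
    return len(observed) >= threshold
```

For example, `needs_closer_look({"lip_sync_mismatch", "inconsistent_lighting"})` returns `True`, while a single observed sign returns `False`.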


So How Can Businesses Protect Themselves from Deepfake Applicants?

AI is getting smarter by the day, which means your job of spotting fake candidates has to get sharper too. You can’t just rely on gut feeling or quick visual checks anymore. Catching these impersonators takes a mix of tech know-how and human intuition.

Here are seven solid ways to catch deepfakes before they seep into your team, or worse, put your company at risk.

Strategy #1: Challenge Candidates With Moves AI Struggles To Fake

AI can be impressive, but it has limits. When a candidate can’t respond naturally to small, physical, or spontaneous requests, that’s a red flag.

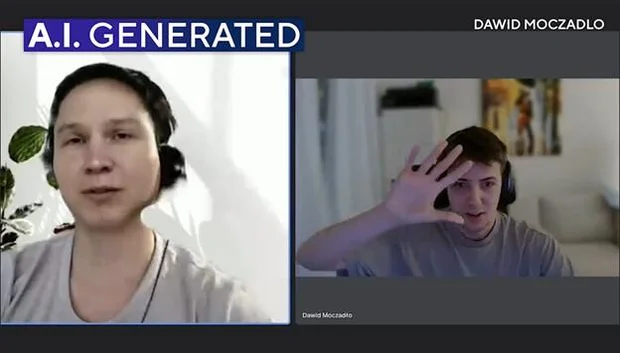

This whole problem became very real when Vidoc Security shared their now-viral story. It started with what looked like a dream candidate: great CV, polished LinkedIn, solid tech stack experience. Everything checked out on paper. But during the interview, something felt… off. His accent was strange. His facial expressions looked mechanical, almost too perfect. When the Vidoc CTO, Dawid, asked him to put his hand in front of his face, he refused. Interview over. Later, they analyzed the video and realized it was a deepfake using the likeness of a real Polish politician.

The scary part? If Dawid hadn't trusted his gut and pushed, that candidate might have made it through.

Here’s how you can test for AI-generated impostors:

A scammer uses artificial intelligence during an interview for a position with Vidoc Security.

1. Face and hand interactions

Ask them to touch their nose, cover one eye, or wave a hand across their face. AI struggles with overlapping or occluded features.

2. Multiple viewing angles

Have them turn their head, nod, or tilt rapidly. Deepfake models often fail with non-frontal poses. Videos often freeze or distort when switching angles fast.

3. Spontaneous expressions

Ask them to laugh, frown, or mimic an emotion on the spot. Deepfake algorithms often produce unnatural facial responses. Also, ask them for quick head movements: up, down, side to side. Real faces move naturally. AI-generated ones often lag, stutter, or lose quality.

4. Environmental interactions

Simple objects become complex problems when you're trying to render them in real-time over a fake face. Request they hold a physical object, move a chair, adjust lighting, or even briefly step away and come back. AI-generated visuals can lag or distort.

5. Dynamic speech and gestures together

Have them combine a verbal answer with hand gestures. AI may sync one correctly but mess up the other, revealing inconsistencies.

Make them do things that feel natural, but are tricky for AI. Most real candidates adapt immediately; most fakes freeze, glitch, or fail. It’s not mean, it’s smart hiring.

Strategy #2: Spot the Tech and Visual Slip-Ups

Even the best deepfakes leave subtle traces. A trained eye, or even a careful observer, can catch them. Try these methods to detect visual and technical inconsistencies:

1. Eye and blink patterns

Humans blink randomly based on emotions, thoughts, and environment. AI often produces too-perfect, too-fast, or missing blinks.

2. Facial edges and lighting

Look for that weird "cutout" effect around their face. It's like someone took scissors to a photo. You'll see fuzzy, flickering edges where the fake face meets the real background. It's most obvious around the hairline and jawline.

3. Audio-visual sync

Watch their lips during complex words or when they get excited. Even slight delays or mismatched tones can reveal AI manipulation.

4. Micro-expression gaps

Real people have tiny facial movements happening constantly: slight eyebrow twitches, nostril flares, corner-of-mouth movements. Look for overly smooth smiles or rigid reactions that feel “off.”

5. Unnatural motion

Head tilts, hand gestures, or subtle body movements that appear delayed, robotic, or jittery are red flags. Remember: real people aren’t perfect, but their imperfections are natural, not glitchy.

Trust your instincts. If something feels just slightly “off,” it probably is. Deepfake tech can fool algorithms, but it still struggles with the messy, unpredictable reality of human behavior.
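If you review a recording afterwards, the blink check can be made a little less subjective. The sketch below assumes someone has noted the timestamps (in seconds, ascending) at which the candidate blinked, and measures how regular the gaps are. The function names and the `min_cv` cutoff are illustrative assumptions, not validated thresholds.

```python
from statistics import mean, pstdev


def blink_interval_cv(blink_times: list[float]) -> float:
    """Coefficient of variation of the gaps between blinks (seconds)."""
    if len(blink_times) < 3:
        raise ValueError("Need at least three blinks to measure variation")
    gaps = [later - earlier for earlier, later in zip(blink_times, blink_times[1:])]
    return pstdev(gaps) / mean(gaps)


def blinks_look_mechanical(blink_times: list[float], min_cv: float = 0.2) -> bool:
    """True when blink spacing is suspiciously regular.

    `min_cv` is an illustrative cutoff, not a validated threshold.
    """
    return blink_interval_cv(blink_times) < min_cv
```

Metronome-like blinks such as `[0, 3, 6, 9, 12]` are flagged, while irregular spacing is not. A recording with no blinks at all cannot be scored this way and is worth a closer look on its own.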

Strategy #3: Lock Down Identity Before You Hire

In remote hiring, trust but verify isn’t enough, especially when sensitive systems are on the line. You need layers of identity verification that go beyond a simple video call. Here’s how to make sure the person you see is actually the person you’re hiring:

1. Mandatory verification policies

Communicate your verification steps upfront. Real candidates know it’s standard practice; fakes often get nervous or refuse.

2. Government ID check

Request a secure, government-issued ID before the interview. Then compare it carefully with their live video appearance.

3. Multi-modal verification ecosystem

Create a verification ecosystem that no single deepfake can navigate. Combine ID checks, live technical demos, reference calls with previous managers, and LinkedIn verification requests. Run identity info through trusted watchlists, criminal databases, and fraud detection services to catch any shady slip-ups.

4. Live skill tests

Regardless of how developers feel about them, have candidates solve real-time problems. Watching them think, type, and troubleshoot live makes the work almost impossible to fake with AI.

5. Cross-platform validation

Check LinkedIn profiles, GitHub contributions, or verifiable work samples. Real professionals have a solid online presence. Fake candidates have suspiciously clean, recent histories with no authentic digital footprint.

In a world where up to 1 in 4 candidates might not be real within a few years, locking down who is actually applying is survival. It safeguards your team, your IP, and your peace of mind.

Strategy #4: Hit Them with Contextual and Culture Tests

Deepfakes might nail scripted answers, but they stumble hard when you throw in questions that need real-world, on-the-ground knowledge. When you dig into culture and context, you’re asking for details no AI can fake, because it’s not really living that life.

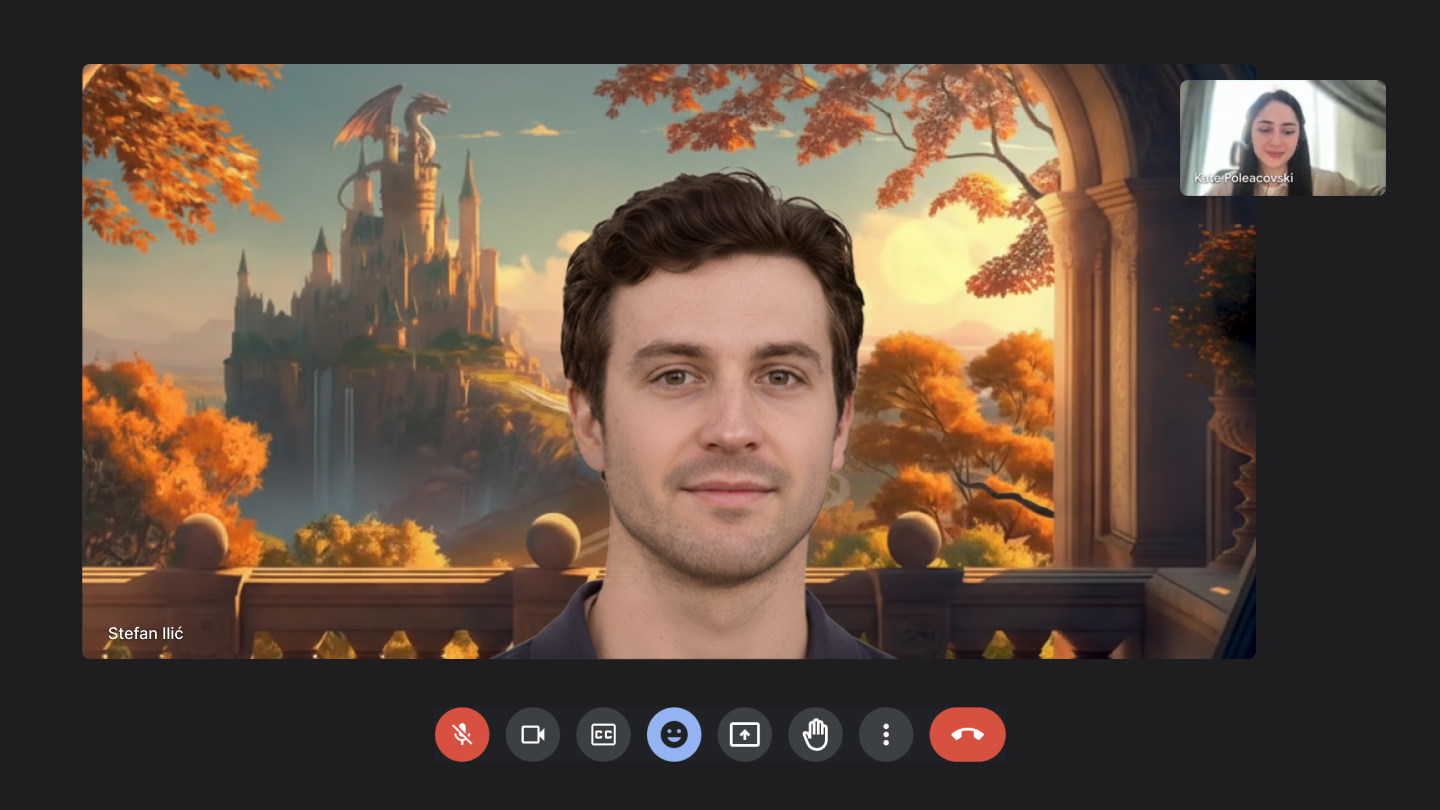

Kate Poleacovski, one of our most experienced tech recruiters, recalls one interview that raised every red flag in the book. “On paper, he looked perfect: strong resume, solid LinkedIn, even glowing references. But the second we got on the call, something felt… off. His face looked weirdly frozen, the mouth movements were stiff, and the background was oddly static,” Kate says. “When he claimed he was based in a small town in Eastern Europe, I casually asked him to name a local street or landmark. He dodged. That was the moment I knew something wasn’t right.”

Deepfake developer avatar interviewed by Kate Poleacovski (an experienced Tech Recruiter at Index.dev)

It turned out the person behind the screen wasn’t in Eastern Europe at all. He was using a deepfake avatar to pose as a local developer, while actually logging in from a completely different part of the world. Kate had a choice: end the call or confront him. She chose the latter. “I asked him to wave his hand in front of his face. He refused. Then I asked him to turn his head to the side. The image glitched, hard. Finally, I asked him what time it was locally. He paused for a full five seconds before guessing, and got it wrong.”

At that point, she told him straight: “I know this isn’t your real identity.” The candidate froze. Then the video went black. Minutes later, a follow-up email came in asking why he was “disqualified” and insisting he was “legit.” “That’s the reaction we often see,” Kate explains. “Some deny everything, some disappear, and others try to argue. But none ever admit they were using deepfake tech.”

This is not a rare story. Our recruiters encounter situations like this regularly. The fakes are getting bolder because most companies aren't looking.

Here’s how to put AI to the test:

1. Local knowledge checks

"Oh, you worked in Austin? I love that city!" Real locals don't just know the big landmarks, they know the weird little details. Which Starbucks has the longest line? What's that one restaurant everyone argues about? Deepfake algorithms won’t know these details.

2. Experience depth probes

Ask them to walk you through a typical day at their last job. "What unexpected challenges did you face on that project, and how did you handle them? What was your commute like?" Real experiences are full of boring, specific details that are weirdly hard to make up on the spot.

3. Situational storytelling

Everyone has work horror stories. "Tell me about a project that went completely sideways. What went wrong? How did people react?" Listen for emotional authenticity. Real people get frustrated, blame the wrong things initially, and have messy feelings about past failures.

4. Cultural cues

"What was the vibe like at your last company? Any weird traditions or inside jokes?" Company cultures are impossible to Google. Real employees know about the quirky stuff. Deepfake responses may lag, over-explain, or sound robotic.

5. On-the-spot improvisation

"How did you hear about this role? Do you know anyone who works here?" Then follow up: "How do you know each other?" Real professional networks have stories. Fake ones have suspiciously convenient coincidences or vague connections.

Real people can expand on any detail because they lived it. Fake people hit dead ends fast because they're working from scripts, not memories.
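The "what time is it locally?" question from Kate's interview is one of the few context checks you can verify mechanically. Here is a minimal Python sketch, assuming you know the UTC offset currently in effect for the candidate's claimed location (including any daylight saving shift). The function name `local_time_plausible` and the 20-minute tolerance are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def local_time_plausible(
    answer_hhmm: str,
    utc_offset_hours: float,
    now_utc: datetime,
    tolerance_minutes: int = 20,
) -> bool:
    """Check a stated local time ("HH:MM") against a claimed UTC offset.

    `now_utc` must be timezone-aware. The tolerance is an assumed value.
    """
    claimed_tz = timezone(timedelta(hours=utc_offset_hours))
    expected = now_utc.astimezone(claimed_tz)
    hours, minutes = map(int, answer_hhmm.split(":"))
    stated = expected.replace(hour=hours, minute=minutes, second=0, microsecond=0)
    # Compare on a 24-hour circle so 23:58 vs 00:03 counts as 5 minutes apart.
    diff = abs((stated - expected).total_seconds()) / 60
    diff = min(diff, 24 * 60 - diff)
    return diff <= tolerance_minutes
```

Called with `datetime.now(timezone.utc)` during the interview, a `False` result lines up with what Kate saw: a long pause, then a wrong guess.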

Strategy #5: Track the Technical Clues

Beyond faces and voices, there’s a whole layer of tech signals that can blow a fake wide open. By paying attention to technical indicators, you can catch suspicious candidates before they ever start work.

Here’s how to read the signals:

1. IP location vs. claimed address mismatch

If they say they’re dialing in from San Francisco but their IP points straight to Eastern Europe, or worse, somewhere like North Korea, that’s a huge problem. Location lies are classic fakes. Remember: over 300 companies unknowingly hired North Korean operatives this way. They didn't know until the damage was done.

2. Unusual platform choices

Real candidates don't usually care whether you meet on Zoom, Teams, or Google Meet. They just want the job. But deepfake operators? Be cautious if they insist on obscure or niche video tools. Some deepfake software works better on certain platforms.

3. Repetitive interview patterns

Sometimes, the same operator will run multiple fake profiles, repeating answers or behaviors across different “candidates.” Spotting recycled stories or similar mannerisms can tip you off.

4. Device inconsistencies

Pay attention to metadata, webcam quality, or device switching. AI-generated feeds may show repeated glitches across devices.

5. High-value targeting

Tech roles with remote-friendly policies, juicy IP, and good salaries are prime targets. If the candidate seems unusually polished or too “perfect” for a high-demand role, it might be intentional deception rather than coincidence.

One weird technical thing might be explainable. Five weird technical things happening at once? That's when you know you're dealing with something that's not quite human.
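The IP-versus-claimed-location check is the easiest of these signals to automate. The sketch below uses Python's standard `ipaddress` module and a tiny hand-made lookup table built from documentation-only IP ranges. The table, `lookup_country`, and `location_mismatch` are hypothetical stand-ins; a real check would query a geo-IP database or service.

```python
import ipaddress
from typing import Optional

# Hypothetical geolocation table using documentation-only IP ranges (RFC 5737).
# A real check would query a geo-IP database or service instead.
SAMPLE_GEO_TABLE = {
    ipaddress.ip_network("203.0.113.0/24"): "US",
    ipaddress.ip_network("198.51.100.0/24"): "RO",
}


def lookup_country(ip: str, table=SAMPLE_GEO_TABLE) -> Optional[str]:
    """Return the country code for `ip`, or None if the table has no match."""
    address = ipaddress.ip_address(ip)
    for network, country in table.items():
        if address in network:
            return country
    return None


def location_mismatch(ip: str, claimed_country: str, table=SAMPLE_GEO_TABLE) -> bool:
    """True when the IP resolves to a country other than the claimed one.

    Unknown IPs return False: no data is not evidence of a mismatch.
    """
    observed = lookup_country(ip, table)
    return observed is not None and observed != claimed_country.upper()
```

Keep in mind that these actors often hide behind virtual networks, so a matching country proves little. A mismatch is the useful signal, and even then it calls for a follow-up question rather than an automatic rejection.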

Strategy #6: Cross-Verify Online and Offline Footprints

A deepfake candidate might look convincing on video, but AI can’t easily replicate a real digital history. They have no real professional relationships, no authentic work history, and no genuine connections in the claimed industry. Your best defense? Follow the trail they leave online.

1. Social media consistency

Review LinkedIn, GitHub, or Twitter for activity. Dig into their connections: former colleagues or industry contacts from conferences. Cross-reference their work history timeline with company events, layoffs, or major changes. Fake profiles often have sparse posts, minimal connections, or sudden account creation.

2. Past employer validation

Reach out to references or previous companies. Are these real companies with real phone numbers? Do the reference contacts have legitimate LinkedIn profiles with authentic work histories? Fake candidates often provide fake references, creating entire fictional professional ecosystems.

3. Work portfolio verification

Ask for real project links, code samples, or contributions. Check for inconsistencies between their claimed experience, GitHub contributions, and the content of their portfolio. Complete digital silence from someone claiming senior-level experience? Major red flag.

4. Content authenticity

Look for repeated phrasing, generic resumes, or AI-generated writing. Use plagiarism checkers or reverse image search on profile photos and resumes. Deepfake operators often recycle visuals.

5. Cross-platform anomalies

Compare usernames, emails, and profile pictures across platforms. Mismatches can indicate stolen identities or AI-generated profiles.

AI can create a convincing face, but it can’t fake a genuine, traceable digital life. A thorough online check often exposes fakes instantly.
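The cross-platform comparison can be roughed out in a few lines. This Python sketch normalizes usernames and email local parts, then lists the platforms that disagree with the most common handle. The helper names and the normalization rules are illustrative assumptions.

```python
from collections import Counter


def normalize_handle(value: str) -> str:
    """Lowercase a username or email local part and drop separators."""
    local_part = value.strip().lower().split("@")[0]
    return "".join(ch for ch in local_part if ch.isalnum())


def inconsistent_platforms(handles: dict[str, str]) -> list[str]:
    """Return platforms whose handle differs from the most common one."""
    if not handles:
        return []
    normalized = {platform: normalize_handle(h) for platform, h in handles.items()}
    most_common = Counter(normalized.values()).most_common(1)[0][0]
    return sorted(p for p, h in normalized.items() if h != most_common)
```

Plenty of real developers use different handles on different sites, so treat the output as a list of things to ask about, not a verdict.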

Strategy #7: Trust Your Gut, But Verify With Behavioral Analytics

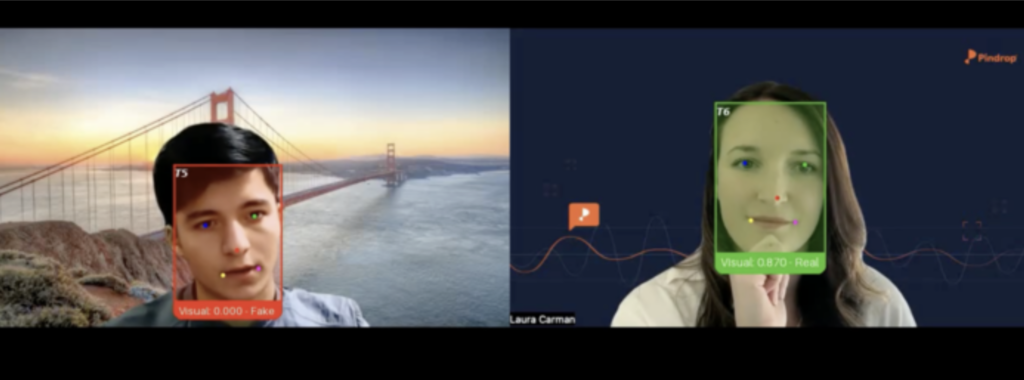

Here’s another curious case of Ivan X. On paper, Ivan was the perfect Senior Backend Engineer. But the moment the interview started, red flags went up:

- His facial expressions were slightly out of sync with his words.

- His voice lagged, sometimes dropping out entirely.

- He froze on unexpected questions, like the system was “buffering.”

When investigators ran the video through detection tools, the truth came out: Ivan wasn’t Ivan. He was a deepfake.

Machines can imitate knowledge, but they struggle with human decision-making under pressure. Observing behavior in real-time can reveal inconsistencies no AI can hide.

Here’s how:

Deepfake backend engineer, Ivan X, monitored by security assistant, a real-time deepfake detection bot

1. Stress response monitoring

Ask unexpected or rapid-fire questions. Mid-sentence, politely interrupt with "Sorry, can you repeat that last part?" Real people pause, think, and often rephrase things slightly differently. AI-assisted responses tend to restart from the exact same spot with identical phrasing, like hitting replay on a recording.

2. Interactive problem-solving

Present a live scenario that requires reasoning and improvisation. Ask them to explain something complex, then say "I'm not following that part about X." Humans will think laterally and use different analogies; deepfakes will struggle with open-ended, dynamic tasks. Scripts don't adapt well to confusion.

3. Consistency across sessions

Conduct multiple mini-interviews or skill checks. "You mentioned you worked with Python earlier, what version were you using?" Compare responses and behavior. Real people naturally reference previous parts of your conversation. AI often treats each question as isolated, losing conversational thread.

4. Peer interactions

Include team members in the interview. Group dynamics often expose unnatural behavior or delayed reactions. Drop something unexpected: "Oh, that's interesting timing, we just had someone from your previous company interview yesterday." Watch for genuine surprise, curiosity, or natural follow-up questions.

5. Emotional authenticity check

Bring up a challenging topic: "Tell me about a time you disagreed with your manager." Listen for genuine frustration, hesitation, or emotional complexity. Real people show micro-emotions: slight pauses, tone changes, even defensive body language. Fake responses feel rehearsed and emotionally flat.

Humans are messy, adaptive, and nuanced. Deepfake AI is precise, but predictable. Behavioral checks exploit that gap.
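The "can you repeat that?" test from the stress-response check can be scored after the call if you have a transcript. Here is a minimal Python sketch using the standard library's `difflib.SequenceMatcher` to compare the first answer with the repeated one, word by word. The function names and the 0.9 threshold are illustrative assumptions.

```python
from difflib import SequenceMatcher


def answer_similarity(first: str, repeat: str) -> float:
    """Word-level similarity between two answers, from 0.0 to 1.0."""
    a = first.lower().split()
    b = repeat.lower().split()
    return SequenceMatcher(None, a, b).ratio()


def sounds_replayed(first: str, repeat: str, threshold: float = 0.9) -> bool:
    """True when the repeated answer is nearly word-for-word identical."""
    return answer_similarity(first, repeat) >= threshold
```

A person who rephrases scores low; an answer that comes back nearly verbatim scores close to 1.0. Short answers naturally repeat word for word, so this is only meaningful for longer explanations.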


How We Confront Deepfakes at Index.dev

At Index.dev, we take authenticity seriously. One fake candidate getting through isn't just a bad hire — it's a security breach waiting to happen. We know your team's time is valuable, and one fake candidate can cost far more than a few extra minutes of verification.

Here's how we do it:

Live + AI proctoring

Our system spots face swaps, mask artifacts, and synthetic behavior as it happens, so you catch anomalies before they become a problem.

Multi-layer ID verification

We cross-check live images with ID checks, live technical demos, reference calls with previous managers, and LinkedIn verification. No loose ends, no guessing. The person you interview is the person who joins your team. That's a guarantee most platforms can't make.

Skill validation in real-time

Candidates complete interactive coding challenges or practical exercises while humans monitor every step. AI can't fake hands-on problem-solving. We also go deeper — testing capability in AI systems, LLMs, and production environments, not just core language syntax.

Digital footprint correlation

We analyze LinkedIn, GitHub, portfolio links, and past contributions to make sure online presence aligns with the live candidate.

Behavioral and context checks

Through tailored situational questions and spontaneous tasks, we test for cultural fit, improvisation, and authenticity.

With multiple verification layers, we ensure that only real, qualified, and trustworthy candidates make it through your pipeline.

Tactics We Use

Our talent team has refined a Confrontation Playbook over the past three years, built on dozens of encounters like this. Here’s what we do during interviews the moment we suspect something’s off:

- The movement test
  - Ask the candidate to adjust the camera, wave, or pick up a random object in the room. AI often glitches when the face or background moves in real time.
- The local knowledge trap
  - “Oh, you’re in Cluj? Which café has the best coffee there?” Real locals know. Fakes stumble.
- The quick angle switch
  - “Can you turn your head to the left for a moment?” Deepfakes hate sudden profile views.
- The spontaneous chat
  - Drop in casual questions: “What’s the weather like where you are?” Real people answer instantly. Fakes lag or give generic responses.
- Consistency matching
  - We cross-check their resume with LinkedIn, GitHub, even Upwork profiles. If the timeline, skills, and projects don’t line up, we dig deeper.

What Happens After We Catch Them

Catching a fake is one thing. But what happens next?

“We document everything,” explains Mihai Golovatenco, Director of Talent at Index.dev. “Screenshots, timestamps, the exact moment we spotted the glitch. That way, if the fake tries to reapply under a different name, we already have the evidence.”

In some cases, we follow up with:

- An authenticity email explaining why they were rejected.

- IP tracking to see if the same network pops up in future applications.

- Blacklist tagging across our platform to prevent repeat attempts.

And while we haven’t rolled it out yet, we’re testing AI-assisted detection tools that flag anomalies automatically before recruiters even join the call.

Every fake we catch gets documented and blocked, permanently. Our platform builds a growing database of fraud patterns, so the same operators can't keep cycling through under new names and new faces.
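To make the idea of a fraud-pattern database concrete, here is a minimal in-memory Python sketch. It is not Index.dev's platform code: the `FraudRegistry` class, the `fingerprint` helper, and the choice to store hashed identifiers (IP addresses, emails) are all assumptions for illustration.

```python
import hashlib


def fingerprint(value: str) -> str:
    """Hash an identifier so the registry does not store it in plain text."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()


class FraudRegistry:
    """In-memory store of identifiers tied to confirmed fake applications."""

    def __init__(self) -> None:
        self._blocked: dict[str, str] = {}

    def block(self, identifier: str, note: str) -> None:
        """Record an identifier together with a short case note."""
        self._blocked[fingerprint(identifier)] = note

    def check(self, identifiers: list[str]) -> list[str]:
        """Return the notes for any identifiers seen in earlier fraud cases."""
        return [
            self._blocked[fingerprint(i)]
            for i in identifiers
            if fingerprint(i) in self._blocked
        ]
```

When a new application arrives, passing its IP address and email to `check` surfaces any earlier case notes, even if the name and face have changed.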

Why This Matters for Our Clients

For clients, deepfakes aren’t just a hiring headache. They’re a security risk. That’s why our process is intentionally strict, so only real, verified professionals make it through. At Index.dev, every candidate is hand-vetted before joining our network.

That means:

- No anonymous hires. We verify real identities before anyone touches your code or systems.

- No shortcuts. Every developer completes skill tests and live vetting calls under human observation.

- No hidden risks. We reject fakes before they ever reach your shortlist.

And no guesswork about capability. We don't just confirm someone is real — we confirm they can do the job. Identity verification, technical integrity, and operational maturity. All three, every time.

At the end of the day, we give you confidence, so you can hire fast without fearing that the person on the other side of the screen isn’t real.

Wrapping It Up

Hiring is hard enough without AI-generated impostors trying to slip in unnoticed. But there are clear steps you can take to protect your process, and your team.

Start with the basics: resumes and online profiles. If a resume feels too shiny, full of buzzwords but thin on real results or hands-on skills, pause for a second. That’s your first “orange flag.” Dig deeper. Check their LinkedIn, GitHub, or other professional footprints. Real people leave digital footprints. Sparse profiles, zero connections, no endorsements? That’s suspicious.

Next comes the interview. Onsite meetings are ideal, but in today’s remote world, they’re not always possible. That’s where video vigilance comes in. Watch for unusual glitches: lips out of sync, frozen edges around hairlines, or a candidate who’s unnaturally crisp against a virtual background. These subtle cues often reveal AI trickery. Challenge them physically and contextually. Ask the candidate to wave a hand, point to a light switch, or adjust something in the room. Toss in some cultural or situational questions they can’t script ahead of time. Deepfake algorithms stumble here; humans adapt.

Trust your instincts, but verify with consistency. Compare their writing style to their verbal communication. Is it off? Too formal in email, robotic on video? That’s a pink flag. If doubts linger, a casual, unscheduled follow-up call can confirm authenticity. Real candidates handle spontaneity easily; fakes usually falter.

At the end of the day, hiring now requires adding “authenticity checks” to your toolkit. You’re no longer just evaluating skills and culture fit; you’re verifying that the person you’re about to onboard is … a person.

Stay sharp. Ask unexpected questions. Watch for inconsistencies.

And remember:

AI may be clever, but it can't fake a life well-lived, a project truly shipped, or an engineer who's been in the trenches. That's what we screen for. That's what we guarantee.

Avoid scams and fake candidates! Get trusted, pre-vetted developers in 48 hours.