How you choose an AI transformation partner is the decision that separates pilots from production. Choose the wrong partner and the project stalls, costs overrun, and stakeholder trust erodes. Pick the right partner and AI starts to pay its way: fraud gets caught earlier, checkout rates climb, and engineers stop firefighting and start building.

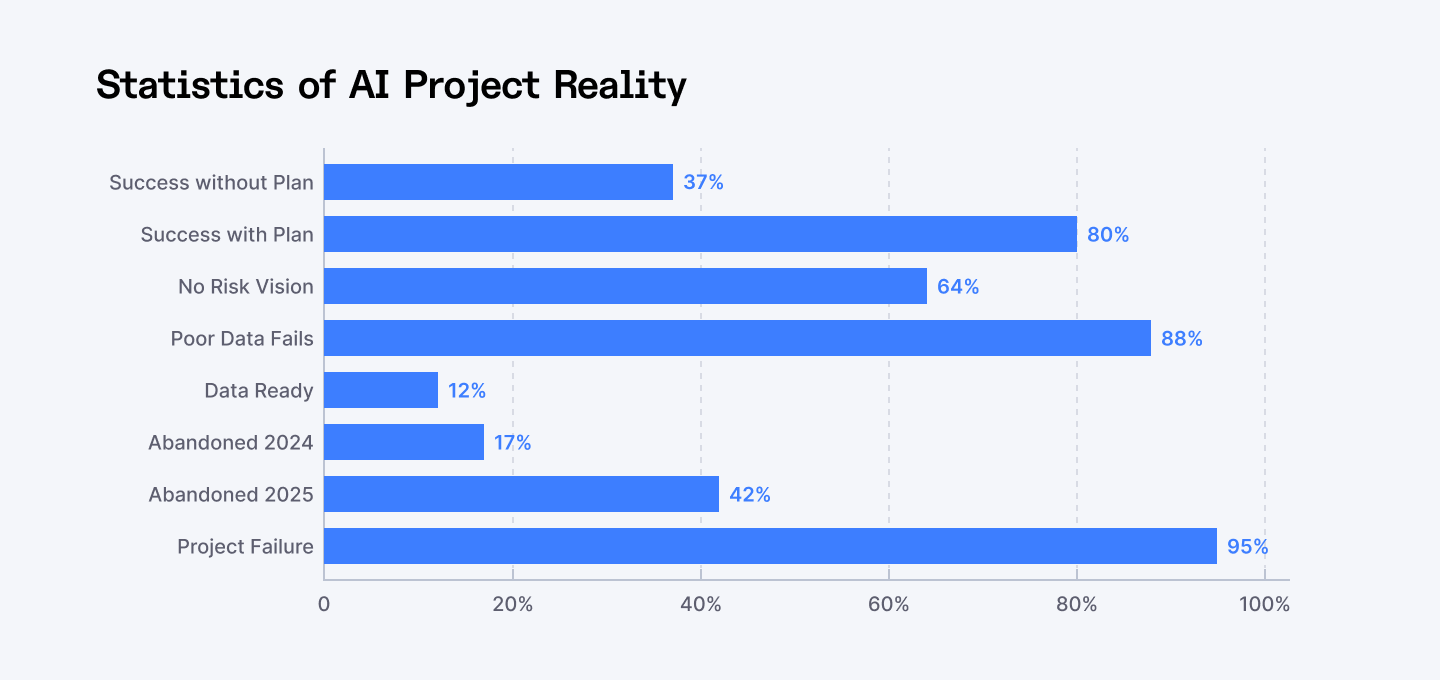

Most organizations began AI experiments in 2024. In 2025 fintech and e-commerce stepped up adoption, but true, production-grade success remained rare. Recent research indicates that about 95% of generative AI pilots never achieve measurable impact at scale — a pilot-to-production indicator that identifies how frequently prototypes get stuck prior to generating business value.

The same year, a separate measure found that 42% of companies abandoned most of their AI initiatives — a program-level abandonment metric that signals strategic retreat, not merely a stalled pilot. Both figures explain the same problem from different angles: experiments rarely become production-ready, and a growing number of organizations consequently stop pursuing AI programs entirely.

This article explains what to measure, what questions to ask, and how to run a pilot that proves value. Start with one measurable business outcome, audit data readiness, require production-grade MLOps, and vet partners on verifiable deployments, named references, and a repeatable pilot-to-scale process.

Want AI projects that succeed? Hire pre-vetted AI specialists through Index.dev and start pilots with confidence.

Why the failure numbers look so scary and what each one means

A 95% pilot failure rate describes how often experiments fail to show measurable business impact. It is a technical reality: many models never survive the leap from prototype to production because they cannot be integrated, monitored, or maintained. That statistic captures the technical and operational gap between an impressive demo and a sustained business outcome.

Critical AI project statistics revealing the scope of the failure crisis and key success factors.

A 42% abandonment rate describes a strategic choice: companies that conclude their AI investments are not worth continuing and halt programs altogether. Abandonment is the business-level consequence when prototypes do not produce reliable ROI, or when costs, compliance risk, or lack of adoption overwhelm expected benefits.

Together, the two numbers tell a complete story: technical stalls create strategic retreat.

Understanding that distinction changes what success looks like. Fixing a 95% pilot problem requires engineering disciplines: MLOps, integration, observability. Preventing a 42% abandonment requires governance, executive alignment, and a path to measurable outcomes. Treat both as priorities.

Why projects fail at scale

The root causes of AI project failures cluster around three critical areas: partner selection mistakes, unrealistic expectations, and technical debt. According to Gabriel Cohen from Klipboard, a business management platform, technology partners should be evaluated not just on implementation capability, but on their commitment to ongoing optimization and support: "The best transformations happen when vendors act as true partners, continuously refining systems to match evolving business needs."

Poor data quality affects 88% of failed projects, and only 12% of organizations have data properly prepared for AI deployment. Infrastructure barriers compound these issues, and 64% of organizations lack insight into their AI risks.

Strategic misalignment kills promising projects. Companies often chase "shiny" front-end use cases without addressing core business needs. Leadership, swayed by vendor promises, deploys AI for problems better suited to traditional solutions.

The "AI-washing" phenomenon has eroded trust further. Multiple 2025 cases revealed companies marketing AI-powered solutions that were actually manual processes disguised as automated systems.

The partner selection impact

Organizations with formal AI strategies report 80% success rates, compared to just 37% for those without strategic frameworks. The difference lies primarily in partner selection methodology.

Successful companies evaluate potential partners across six dimensions: technical depth, domain expertise, delivery track record, cultural fit, scalability, and post-deployment support. They avoid vendors who promise instant results or downplay technical complexity.

As AI budgets grow, often by three quarters year over year, those investments have to produce real, measurable value. The difference between success and failure lies in systematic partner evaluation.

Here's the framework successful companies use.

1. The first question: What outcome will matter to the business?

Begin by naming one measurable outcome. Do you want to reduce loan decision time by 30% in six months? Or are you looking to increase repeat purchase frequency by 15% in one quarter? These concrete targets orient technical choices and let teams measure progress in weeks, not quarters.

A partner who asks you about KPIs before architecture knows the job. Don’t chase every shiny AI buzzword – focus on outcomes. If a vendor starts with models and frameworks rather than outcomes, pause the conversation and reframe it around the business result.

Teams that make ROI the north star make decisions faster and avoid technology-first traps.

Key questions: What problems must AI solve? How will success be measured? Do your goals align with overall strategy (e.g. fintech compliance, e‑commerce growth)?
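One way to make "one measurable outcome" concrete is to write it down as data that both teams can check against. The snippet below is a minimal sketch, not a prescribed method: the `OutcomeTarget` class, its field names, and the 40-hour baseline are hypothetical illustrations, and only the 30%-in-six-months goal comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeTarget:
    """One measurable business outcome for an AI engagement (hypothetical structure)."""
    name: str
    baseline: float      # value before the project starts
    target: float        # value the business wants to reach
    deadline_weeks: int  # time allowed to reach the target

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far, clamped to [0, 1]."""
        gap = self.target - self.baseline
        if gap == 0:
            return 1.0
        return max(0.0, min(1.0, (current - self.baseline) / gap))


# Goal from the text: cut loan decision time by 30% in six months (about 26 weeks).
# The 40-hour baseline is an invented number used only for illustration.
loan_target = OutcomeTarget(
    name="loan decision time (hours)",
    baseline=40.0,
    target=28.0,          # 30% below the assumed baseline
    deadline_weeks=26,
)

# A current value of 34 hours closes half of the gap between 40 and 28.
halfway = loan_target.progress(current=34.0)
print(f"{loan_target.name}: {halfway:.0%} of the way to target")
```

Because the calculation works on the gap rather than the raw value, the same structure handles targets that go down (decision time) and targets that go up (repeat purchase frequency).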

2. Next, test data readiness

AI needs reliable, accessible data. Many organizations discover their data is inconsistent, siloed, or incomplete only after development begins. That friction multiplies effort and cost.

Evaluate whether your data is clean, organized, and accessible. Check if existing systems (cloud platforms, databases, pipelines) can support new AI models. A good partner will audit your data and suggest fixes or tools.

Ensure you have data governance (security, privacy) sorted – especially vital in fintech (KYC/AML rules) and e-commerce (customer data). Without these basics, even a great AI algorithm will falter.

Tip: Ask potential partners how they handle messy data. A strong vendor will offer data-cleaning sprints or integration frameworks as part of their process. If a partner can’t describe this sprint clearly or does not offer one, the engagement is at risk from day one.
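A data-readiness audit can start very small. The sketch below assumes records arrive as plain dictionaries and checks only two things, missing values in required fields and duplicate keys. The function name, field names, and sample rows are hypothetical; a real audit would also cover freshness, schema drift, and access controls.

```python
from typing import Any


def audit_records(records: list[dict[str, Any]], required: list[str], key: str) -> dict[str, Any]:
    """Report the missing-value rate per required field and the duplicate rate on `key`."""
    total = len(records)
    if total == 0:
        return {"rows": 0, "missing_rate": {}, "duplicate_rate": 0.0}

    missing_rate = {}
    for field in required:
        # Treat absent fields, None, and empty strings as missing.
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        missing_rate[field] = missing / total

    # Share of rows that repeat an already-seen key value.
    keys = [r.get(key) for r in records]
    duplicate_rate = (total - len(set(keys))) / total
    return {"rows": total, "missing_rate": missing_rate, "duplicate_rate": duplicate_rate}


# Invented sample data for illustration only.
records = [
    {"customer_id": 1, "email": "a@example.com", "country": "DE"},
    {"customer_id": 2, "email": "", "country": "FR"},
    {"customer_id": 2, "email": "b@example.com", "country": None},
    {"customer_id": 3, "email": "c@example.com", "country": "US"},
]
report = audit_records(records, required=["email", "country"], key="customer_id")
print(report)
```

Numbers like these give the data-cleaning sprint a starting baseline, so both sides can agree on what "ready" means before modeling begins.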

3. Check domain and regulatory fit before diving into technical detail

AI is not generic. Seek out partners with knowledge of your industry, because problem framing differs by sector. Fraud detection, credit scoring, and KYC call for different modeling decisions, audit trails, and compliance safeguards than a product-recommendation engine.

For fintech, ask about experience with requirements such as PCI-DSS, SOC 2, and data residency. For e-commerce, ask about privacy, consent, and order lifecycle integration. Partners who bring domain context reduce design rework and speed time-to-value.

- Check case studies: Have they built AI solutions for peers? For example, did they help a retailer boost engagement or a bank cut loan processing time? Real examples show they understand your challenges.

- Industry knowledge: Can they cite relevant regulations (like GDPR, PCI-DSS) and data types in your field? Do their proposed solutions respect those rules?

A partner who “has walked a mile in your shoes” can deliver solutions faster and avoid pitfalls. On the other hand, a partner lacking sector experience may deliver generic tech that fails to meet your needs. Choose one whose past work aligns with yours, so you get customized, ready-to-run results.

4. Verify engineering maturity

Production requires engineering disciplines that prototypes skip. Expect CI/CD for models, reproducible training, drift monitoring, failover, and secure deployments. Ensure the partner can handle your stack.

Vet partners on both AI and engineering chops. Require a technical walkthrough.

Ask the partner to show a real deployment diagram and explain trade-offs: where models run, how data is piped, how latency is controlled, and how rollback works. Partners that cannot present a deployment with observability controls and SLAs are offering theory, not engineering.

In practice, ask them to describe how they would build a solution: that reveals their mastery.
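Drift monitoring is one of the disciplines listed above that is easy to ask about in concrete terms. The following is a rough sketch of one common approach, the Population Stability Index (PSI), which compares how a feature is distributed in training data versus live data. The equal-width binning, the small floor that avoids taking the log of zero, and the sample values are all simplifying assumptions; any alert threshold should be calibrated per model rather than taken from this example.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a baseline sample and a live sample, using equal-width bins."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # Floor each share so empty buckets do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Invented data: a roughly uniform baseline and a live sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

no_drift = population_stability_index(baseline, baseline)
drift = population_stability_index(baseline, shifted)
print(f"same data: {no_drift:.3f}, shifted data: {drift:.3f}")
```

A partner with production experience should be able to explain which statistic they use in place of this one, where it runs, and who gets paged when it crosses a threshold.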

5. Run a short, focused pilot that proves the path to scale

Before a full rollout, plan a pilot or proof-of-concept, such as a prototype fraud detector or a first-pass recommendation engine. A pilot is not a technology demo; it is a miniature production environment designed to validate the value chain.

Use live data. Define success with two to three KPIs. Limit scope so the pilot completes in 60–90 days.

During the pilot, track progress: Are deadlines met? Does the team communicate clearly? Are early results promising? Use the pilot to validate integration, governance, and user adoption simultaneously.

If issues arise, a good partner responds quickly and iterates. If the partner proposes an open-ended pilot without clear exit criteria, realign the scope. The pilot is the moment to confirm that engineering, product, and business teams can operate together.
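Clear exit criteria can be written down before the pilot starts. The sketch below is one possible shape for such a gate, assuming two to three KPIs and a 90-day cap as described above. The `Kpi` class, the KPI names, the targets, and the three outcomes ("scale", "iterate", "stop") are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    """A single pilot KPI with a target (hypothetical structure)."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        return observed >= self.target if self.higher_is_better else observed <= self.target


def pilot_decision(kpis: list[Kpi], observed: dict[str, float],
                   days_elapsed: int, max_days: int = 90) -> str:
    """Return 'scale', 'iterate', or 'stop' based on KPI results and elapsed time."""
    missing = [k.name for k in kpis if k.name not in observed]
    if missing:
        return "incomplete: no measurement for " + ", ".join(missing)

    failed = [k.name for k in kpis if not k.met(observed[k.name])]
    if not failed:
        return "scale"
    # Targets missed: keep iterating only while the time box is still open.
    return "stop" if days_elapsed >= max_days else "iterate"


# Invented KPIs and results for a fraud-detection pilot.
kpis = [
    Kpi("fraud_recall", target=0.80),
    Kpi("p95_latency_ms", target=200, higher_is_better=False),
]
on_track = pilot_decision(kpis, {"fraud_recall": 0.85, "p95_latency_ms": 150}, days_elapsed=60)
behind = pilot_decision(kpis, {"fraud_recall": 0.70, "p95_latency_ms": 150}, days_elapsed=60)
expired = pilot_decision(kpis, {"fraud_recall": 0.70, "p95_latency_ms": 150}, days_elapsed=90)
print(on_track, behind, expired)
```

Agreeing on a function like this up front removes the option of an open-ended pilot: once the time box closes, the answer is either scale or stop.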

6. Demand named proofs rather than polished slide decks

A track record matters. Insist on concrete case studies with metrics. Vague promises aren't enough.

Case studies should be specific: Did they speed up processes, increase revenue, reduce costs? Does the partner share these freely, or do they dodge accountability? Do they have a contactable reference? A partner that hides clients or uses anonymous samples for critical claims adds risk.

Beware empty talk: Steer clear of firms that only rattle off buzzwords. The right partner delivers real results, not just hype. Ask for a post-deployment report: what metrics moved, who owned the change, and what maintenance effort was required.

If numbers are missing or timelines vague, treat the claim skeptically. Verifiable proof of production operations is the clearest signal of capability.

7. Measure governance and ethics as operational requirements

Ethics and compliance are not optional features. Especially in fintech, ensure your partner builds security and fairness into every model from day one. For fintech projects, privacy, auditability, and bias mitigation must be embedded in design and testing.

For e-commerce, consent management and secure customer data handling are core deliverables. Check that they follow responsible AI practices (bias testing, model audits, data privacy). As another checklist notes, your partner should "embed ethics and compliance from day one".

Ask partners for governance artifacts: data lineage diagrams, bias-testing protocols, and a schedule for third-party audits or model explainability reports. If governance is an afterthought, the chance of regulatory friction or reputational harm increases. Make governance a pass/fail criterion for pilot completion.
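A bias-testing protocol can be as simple as a check that runs with every model release. The sketch below compares approval rates across groups and turns the gap into a pass/fail result. It is an illustration under stated assumptions: the group labels, the sample decisions, and the 0.25 tolerance are invented, and approval-rate parity is only one of several fairness measures a real protocol might use.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(decisions: list[tuple[str, bool]]) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


def governance_gate(decisions: list[tuple[str, bool]], max_gap: float) -> bool:
    """Pass/fail check: True when the approval-rate gap is within the agreed tolerance."""
    return parity_gap(decisions) <= max_gap


# Invented decisions: group A approved 8 of 10 times, group B 6 of 10 times.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 6 + [("B", False)] * 4
)
gap = parity_gap(decisions)
passed = governance_gate(decisions, max_gap=0.25)  # tolerance is a hypothetical value
print(f"gap={gap:.2f}, passed={passed}")
```

The tolerance itself is a governance decision, not an engineering one, so it belongs in the statement of work alongside the KPIs.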

8. Look for a repeatable delivery pattern

AI projects must prove their worth. Successful partners document how pilots move into production. They define phases, milestones, and acceptance gates. They show a template for the pipeline and a defined handover to internal teams.

A repeatable pattern reduces risk and cost. It also shows whether the partner builds capabilities inside the organization rather than creating permanent vendor dependency. Prefer partners who commit to knowledge transfer and have materials and training baked into the engagement.

9. Evaluate organizational fit and communication rhythm

A technical solution only works if the organization adopts it. Check for cultural fit: does the partner involve product owners, engineers, and data teams in planning? Do their communication rhythms match the organization’s cadence?

Also consider their team composition. A strong AI team blends data scientists, ML engineers, AI architects, and domain analysts. Don’t settle for a vendor that outsources all work; you need direct access to experts. Inquire about certifications (e.g. AWS, Google Cloud) or partnerships that prove they stay up-to-date.

- Ask for a RACI for the pilot: who approves decisions, who integrates systems, who tests models, and who is accountable for outcomes. Partners that insist on top-down handoffs without cross-functional collaboration create integration debt.

- Cross-functional approach: Do they involve business analysts or product owners in planning? Good partners help translate business goals into technical steps and transfer knowledge to your team.

- Scalability: Can they deploy models into production, handle data at scale, and maintain systems? Check that they use MLOps practices and secure cloud setups, so your AI can grow over time.

Remember: Choosing the right AI partner isn’t just a contract – it’s the start of a strategic alliance. The best ones become long-term allies, aligning their success with yours.

Budget signals: realistic timelines save money

Expect meaningful generative AI projects to require months of work to reach business impact. Overly optimistic timelines are a common sales tactic, and they inflate expectations.

Ask prospective partners for realistic cost components: discovery, data engineering, model development, MLOps, compliance, and ongoing monitoring. When the timeline sounds like a sales pitch ('plug-and-play in weeks'), budget for a standalone technical due diligence prior to signing. Honest timelines and clear cost breakdowns avoid scope creep and program abandonment.

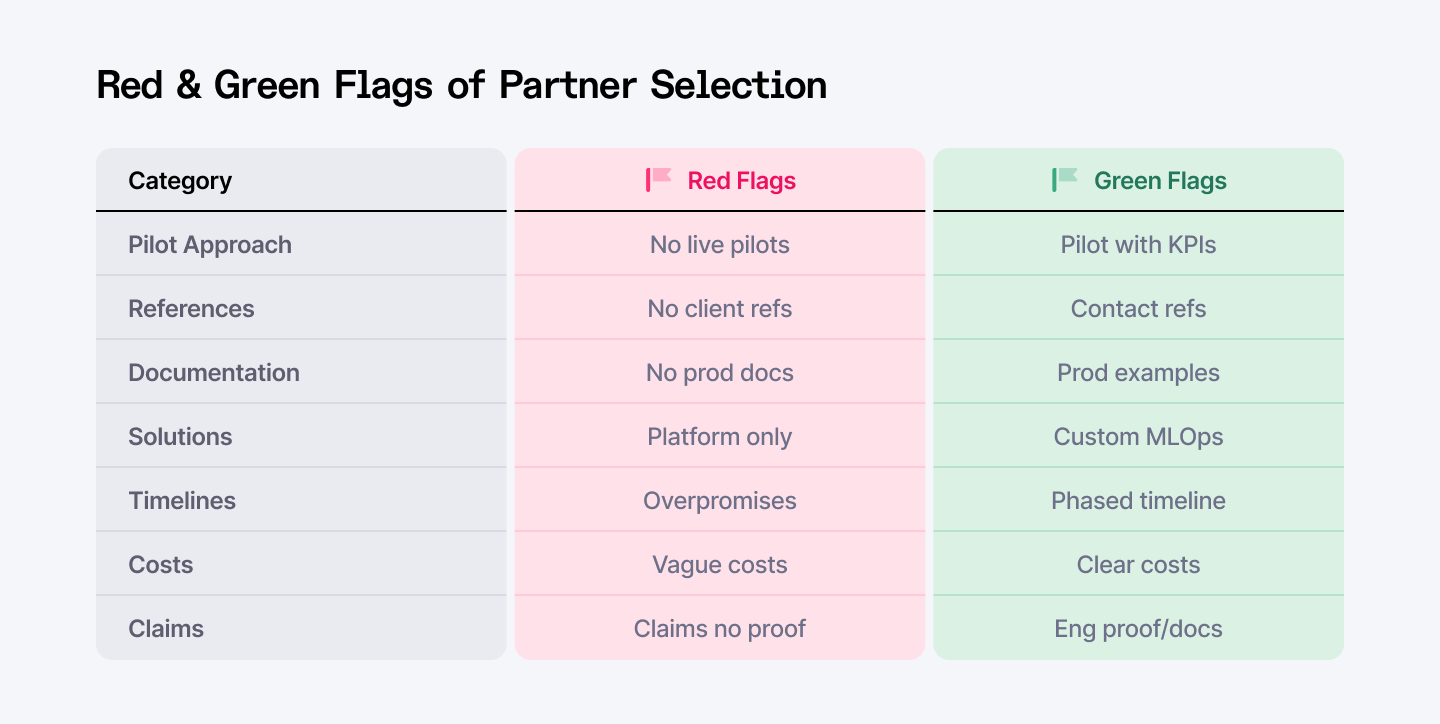

Red flags that matter

If a partner refuses to run a pilot with your live data, that is a signal. If a partner cannot name a client reference for a relevant deployment, that is another signal. If documentation of production operations is missing, that is the ultimate signal.

Another red flag is “platform-only” reliance: partners who sell a platform as a one-size-fix often skip integration work, leaving heavy customization back on the client.

Critical warning signs versus positive indicators when evaluating AI transformation partners.

Also beware of vendor claims about “autonomous agents” without a clear, auditable architecture — Gartner warns that many agentic AI projects will stall or be canceled in coming years due to immaturity and unclear business value. Treat bold autonomy claims skeptically and demand engineering proof.

How to score partners: A simple, weighted rubric

Create a short rubric to compare candidates. Weight technical delivery, domain fit, and proof-of-execution higher for complex fintech projects. Weight integration speed and UX more heavily for e-commerce.

Use the rubric as the decision anchor. A transparent scoring process reduces bias and forces comparative conversations that reveal substance over style. If a partner consistently scores low in any critical category, remove them early to save time.
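The rubric described above can be expressed in a few lines. This is a minimal sketch, assuming scores on a 1 to 5 scale and the six evaluation dimensions listed earlier in this article. The weights, the vendor names, their scores, and the elimination floor are hypothetical values chosen to illustrate a fintech-style weighting.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of category scores; weights need not sum to 1."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same categories")
    return sum(scores[c] * weights[c] for c in weights) / sum(weights.values())


def rank_partners(candidates: dict[str, dict[str, float]], weights: dict[str, float],
                  critical: list[str], floor: float = 2.0) -> list[tuple[str, float]]:
    """Drop candidates below `floor` in any critical category, then rank the rest."""
    ranked = []
    for name, scores in candidates.items():
        if any(scores[c] < floor for c in critical):
            continue  # removed early, as the rubric recommends
        ranked.append((name, weighted_score(scores, weights)))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical fintech weighting: delivery, domain fit, and proof count most.
weights = {
    "technical_depth": 3, "domain_expertise": 3, "delivery_track_record": 3,
    "cultural_fit": 1, "scalability": 2, "post_deployment_support": 2,
}
critical = ["technical_depth", "domain_expertise", "delivery_track_record"]

# Invented candidates and scores (1 = weak, 5 = strong).
candidates = {
    "Vendor A": {"technical_depth": 5, "domain_expertise": 4, "delivery_track_record": 4,
                 "cultural_fit": 3, "scalability": 4, "post_deployment_support": 3},
    "Vendor B": {"technical_depth": 4, "domain_expertise": 1, "delivery_track_record": 4,
                 "cultural_fit": 5, "scalability": 4, "post_deployment_support": 4},
    "Vendor C": {"technical_depth": 3, "domain_expertise": 3, "delivery_track_record": 4,
                 "cultural_fit": 4, "scalability": 3, "post_deployment_support": 3},
}
ranking = rank_partners(candidates, weights, critical)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

In this example Vendor B is removed despite strong scores elsewhere, because it falls below the floor in a critical category. For an e-commerce project, the same code works with heavier weights on integration speed and UX categories.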

Future-proofing: Look for multi-platform and MLOps depth

Technology will change, but integration patterns matter. Prefer partners who can deploy across cloud providers and on hybrid infrastructure. Ask how they handle model retraining, model promotion, and rollback. Partners with mature MLOps reduce operational risk and long-term costs.
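When asking about model promotion and rollback, it helps to have a simple mental model of what the answer should cover. The sketch below is a deliberately tiny, in-memory illustration. The `ModelRegistry` class, its method names, and the metric values are hypothetical, and a real setup would rely on a model registry service with audit logging rather than a Python list.

```python
from typing import Optional


class ModelRegistry:
    """Hypothetical in-memory registry that tracks which model version serves traffic."""

    def __init__(self) -> None:
        self._history: list[str] = []  # promoted versions, oldest first

    @property
    def live(self) -> Optional[str]:
        """Version currently serving traffic, or None if nothing is deployed."""
        return self._history[-1] if self._history else None

    def promote(self, version: str, candidate_metric: float,
                live_metric: float, min_gain: float = 0.0) -> bool:
        """Promote only if the candidate beats the live model by at least `min_gain`."""
        if self.live is not None and candidate_metric < live_metric + min_gain:
            return False
        self._history.append(version)
        return True

    def rollback(self) -> Optional[str]:
        """Revert to the previously promoted version, if one exists."""
        if len(self._history) > 1:
            self._history.pop()
        return self.live


# Invented versions and evaluation metrics.
registry = ModelRegistry()
first = registry.promote("v1", candidate_metric=0.80, live_metric=0.0)
rejected = registry.promote("v2", candidate_metric=0.78, live_metric=0.80)
accepted = registry.promote("v3", candidate_metric=0.85, live_metric=0.80)
after_rollback = registry.rollback()
print(first, rejected, accepted, after_rollback)
```

The questions to put to a partner follow directly: what metric gates promotion, who can override it, and how long a rollback takes in practice.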

Also test their approach to model security: runtime protections, access controls, and monitoring for prompt-injection or data-exfiltration threats must be present in 2025 project architectures. Demand specifics.

Where Index.dev fits in the process

When an organization needs to accelerate pilots with vetted, production-ready talent, Index.dev provides access to pre-vetted AI engineers and ML teams ready to integrate into pilot programs. These engineers come with demonstrated production experience, and they can plug into discovery sprints to speed the data readiness phase.

Index.dev shortens hiring friction and supplies engineers whose primary skill is converting prototypes into maintainable production systems. Consider Index.dev when time-to-hire and technical depth are gating factors for the pilot.

Quick governance and pilot checklist

Before signing a statement of work, confirm three things. First, that the partner will run a bounded pilot using your live data and clear KPIs. Second, that the partner can show a production-grade deployment example and provide a contactable reference. Third, that the partner commits to a knowledge-transfer plan with documentation and training.

If all three are there, the engagement stands a realistic chance of delivering measurable value. If any one is missing, consider it a structural risk signal and re-evaluate.

Conclusion: Making the right choice

Choosing the right partner is the single choice that separates the few AI projects that scale from the 95% that stall. Firms that take the time to perform thorough partner evaluation, technical due diligence, and cultural fit reap significantly superior results from AI investments.

If you need guaranteed results, demand production proof: live deployments, measurable outcomes, and reachable references. By following the evaluation framework and selection criteria outlined in this guide, your organization can join the successful minority and avoid the costly failures that plague the majority of AI initiatives.

The AI market will keep growing — now is the time to lock in partners who can deliver and grow with you.

➡︎ Beat the 95% AI failure rate with vetted engineering talent.

Index.dev provides pre-vetted AI engineers and ML teams with proven production experience who plug directly into your transformation pilots. Speed up data readiness sprints, accelerate pilot-to-production workflows, and get matched with developers who convert prototypes into maintainable systems. Start with a 30-day risk-free trial and scale confidently.
