Europe’s data-annotation market just hit €2B and is shifting from commodity work to regulated, expert-led projects. That's not because annotation is trendy. It's because AI can't work without clean, trustworthy data.

GDPR tightly restricts moving personal data outside the EU. The AI Act requires audit trails. And yes, quality really matters. Miss one mislabeled pedestrian in a self-driving video? It could mean a crash.

This is why European providers command premium prices and why the talent battle for skilled annotators is just starting. Let's look at what's actually driving the market, who needs it most, and where the money is flowing.

Need compliant, domain-expert annotators in Europe? Hire vetted annotators, QA leads, and data managers in 48 hours with Index.dev.

Why Europe's Market is Exploding

The Market Splits Two Ways

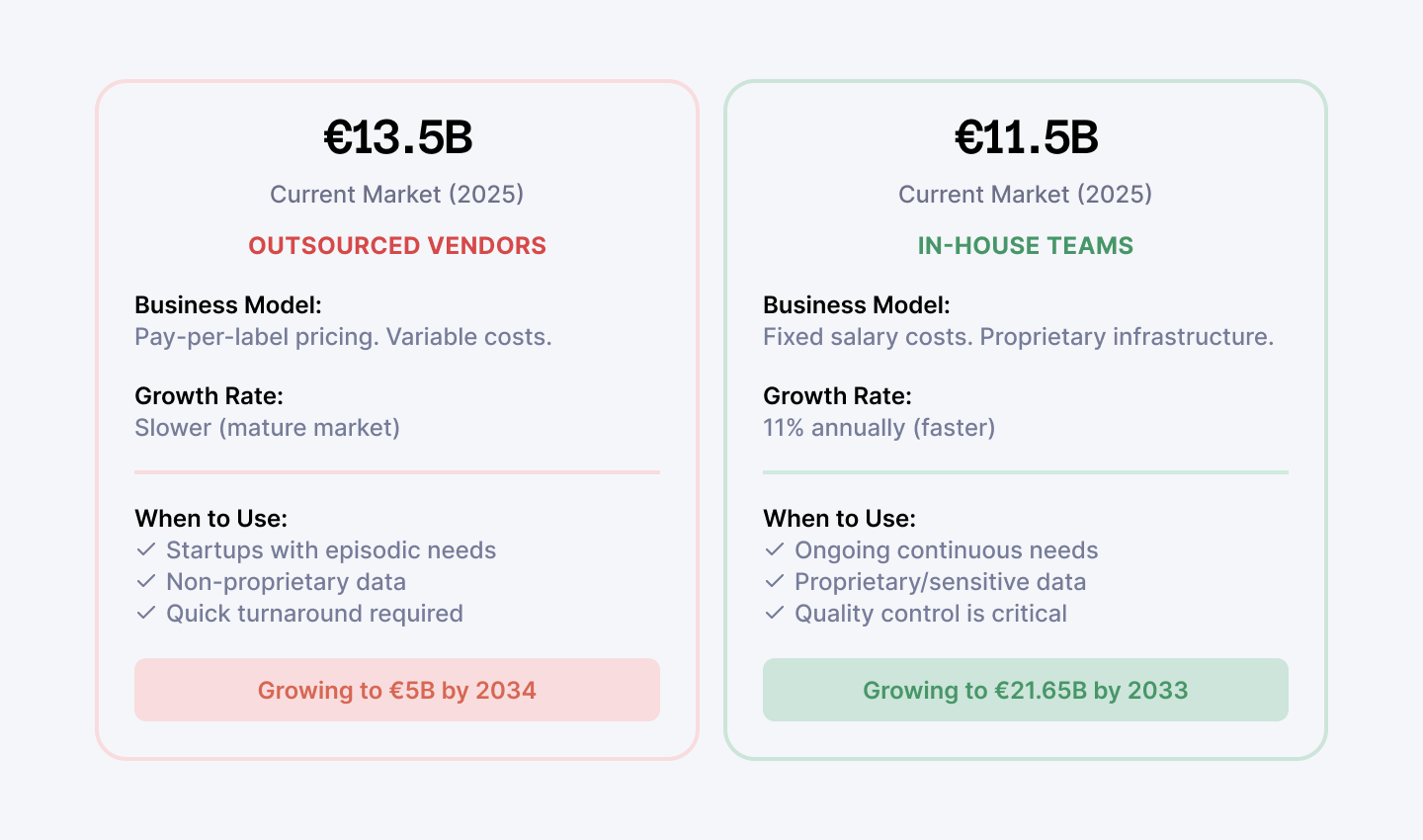

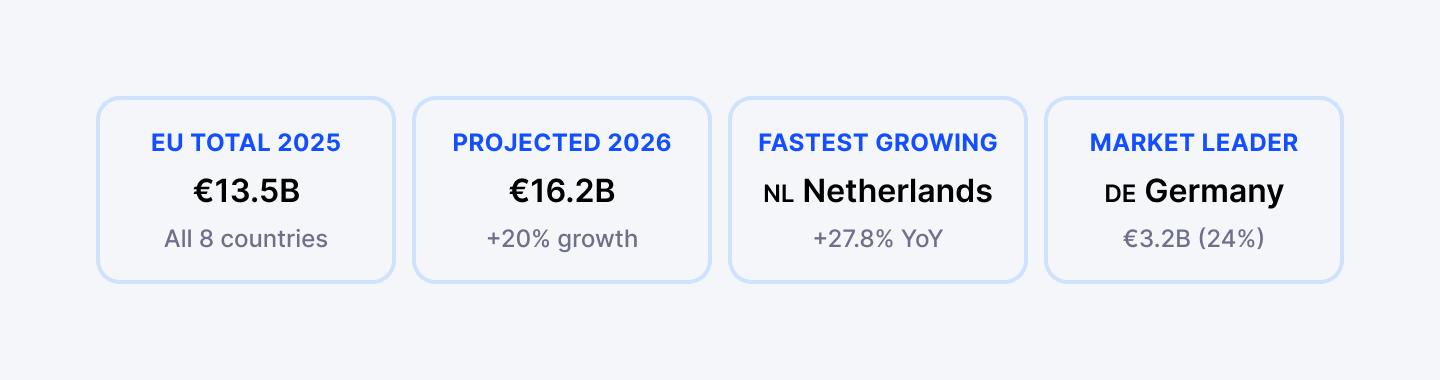

Europe's annotation market sits at €2 billion today and will more than double by 2034. Meanwhile, in-house labeling—companies building internal annotation teams—is worth €11.5 billion and growing 11% annually.

The market’s divided into outsourced specialists (medical, multimodal sensor work) and in-house teams (sensitive customer data, proprietary models). They’re complementary, not rivals.

Regional Powerhouses Driving Demand

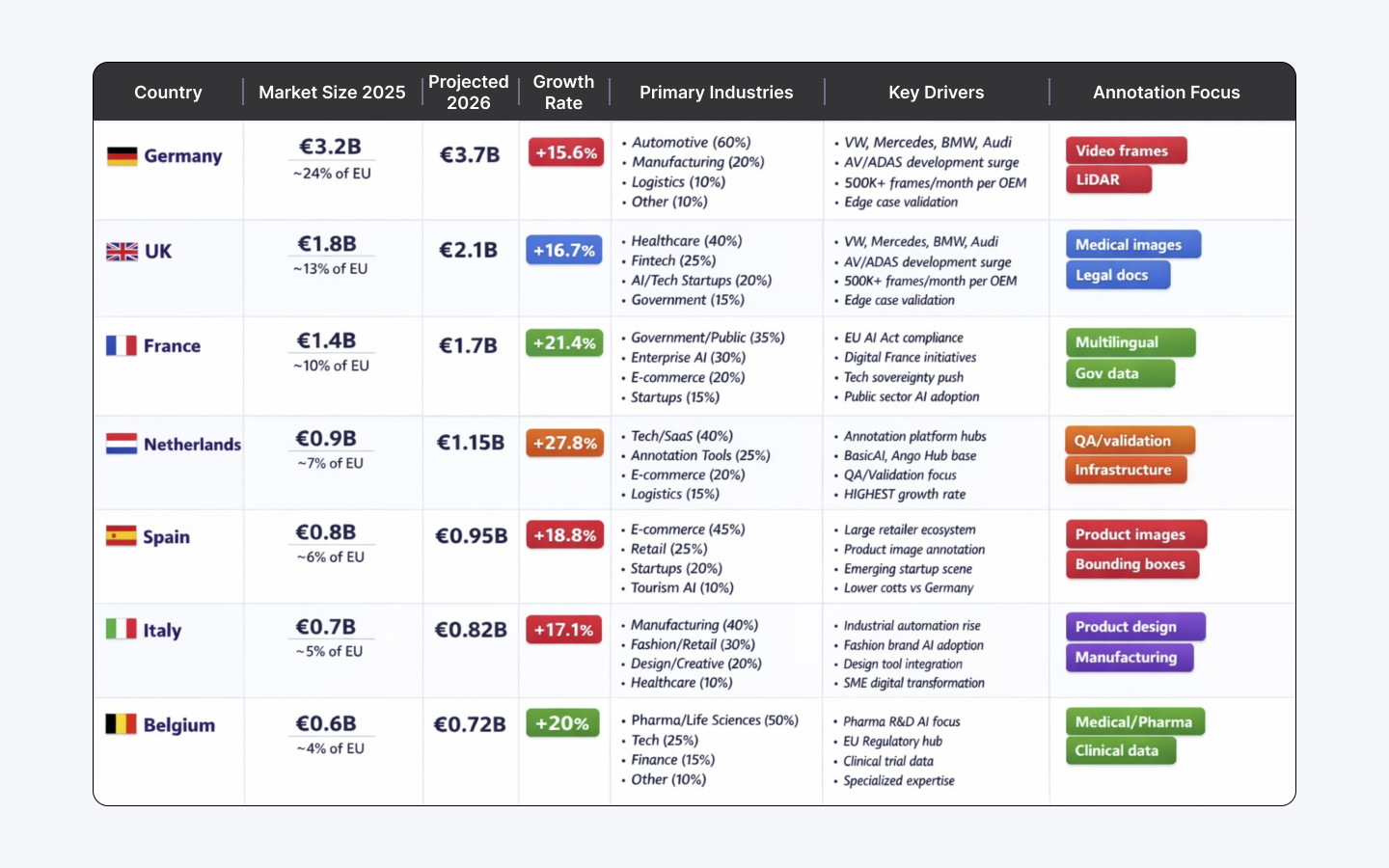

Germany dominates the market. Volkswagen, Mercedes, BMW, Audi—every major automotive OEM is pouring billions into autonomous driving and ADAS development. That means annotation demand is relentless.

The UK leads in healthcare AI. Hospitals need annotated medical images. Fintech firms need compliance documentation labeled.

France is backing digital transformation with government initiatives. The Netherlands is strong in automation and validation infrastructure.

Why Europe Costs More

But here's what makes Europe different from cheaper alternatives: compliance costs real money. GDPR tightly restricts transfers of personal data outside the EU. You can't just send files to a call center in Asia. In practice, the data stays in-region.

The EU AI Act (fully live August 2, 2026) demands registration of high-risk systems, FRIAs (fundamental rights impact assessments), and full audit trails for every training data sample.

Add GDPR's in-region protections and DPIAs to the AI Act's audit trails and registrations, and the cost premium lands at roughly 15–25%. It also creates a legal moat that European vendors can sell.

When to act (practical timing):

- Startups: Pilot now, outsource commodity labeling, and build internal QA and sample audits. Delay full in-house until monthly label volume justifies FTEs (≈100k+ labels/month).

- Mid-market: Begin internal validation and provenance logging before scaling. Require vendors to deliver machine-readable provenance now.

- Enterprises: Treat audit trails and FRIA/DPIA as a gating requirement. Plan CAPEX for tooling and a compliance manager in year one.

Real Budget Expectations: What Does Annotation Actually Cost?

You know the hourly rates (crowdworkers: €10, domain experts: €50, specialists: €100+). But what does an actual project cost? Here's the breakdown for six realistic scenarios.

| Project Type | Dataset Size | Avg. Label Complexity | Time to Complete | Total Cost | Cost per Label | Notes |
| --- | --- | --- | --- | --- | --- | --- |
| E-commerce Product Classification | 50,000 images | Simple (5 sec/label) | 4-6 weeks | €2,500–€5,000 | €0.05–€0.10 | Generic crowdworker level. Boxes checked. Minimal QA needed. |
| Autonomous Vehicle (AV) Training | 100,000 video frames | Complex (90 sec/label) | 10-14 weeks | €50,000–€85,000 | €0.50–€0.85 | Edge cases matter (fog, occlusion, rare scenarios). Inter-rater QA required. Multiple annotators per hard frame. |
| Medical Imaging (CT/MRI) | 10,000 scans | Very Complex (5 min/label) | 16-24 weeks | €50,000–€100,000 | €5.00–€10.00 | Requires radiologist review. 2-3 independent labels per image. Audit trail mandatory. GDPR + HIPAA compliance. |
| Fintech Document Classification | 25,000 documents | Medium (45 sec/label) | 5-8 weeks | €12,500–€22,500 | €0.50–€0.90 | Legal document complexity. Requires domain knowledge (finance). Consensus scoring for disagreements. |
| Retail Inventory Annotation | 200,000 product images | Medium-Simple (20 sec/label) | 8-12 weeks | €20,000–€40,000 | €0.10–€0.20 | Mix of generic labels + attribute tagging. Some domain knowledge helpful (size, color, condition). |
| Hiring Platform Skills Extraction | 50,000 job descriptions | Medium (2 min/label) | 8-10 weeks | €20,000–€35,000 | €0.40–€0.70 | Requires HR/recruiting background. Taxonomy consistency critical. Feedback loops needed. |

Key Insight:

Cost Per Label ≠ Total Cost. Many teams budget only the "cost per label" and forget the hidden costs:

- QA & Rework: Add 15-20% for quality audits and re-labeling bad data

- Tools & Infrastructure: BasicAI, Ango Hub, or internal platform = €2K–€5K/month

- Project Management: 1 FTE managing annotators, tracking progress = €40K–€80K/year

- Compliance Audit: If GDPR/HIPAA required = €30K–€75K one-time

Real Budget Formula: (Cost per label × Dataset size) × 1.5 (overheads) + Tool costs + PM costs = Realistic Total
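To make the formula concrete, here is a minimal Python sketch. It assumes tool and project-management costs are prorated over the project duration, which the formula itself does not spell out. The class name, field names, and sample figures are illustrative, not taken from any vendor quote.

```python
from dataclasses import dataclass


@dataclass
class BudgetInputs:
    cost_per_label: float          # EUR per label
    dataset_size: int              # number of items to label
    tool_cost_per_month: float     # EUR per month for the annotation platform
    pm_cost_per_year: float        # EUR per year for one project manager
    duration_months: float         # project length in months (assumption: costs prorate)
    overhead_factor: float = 1.5   # the article's overhead multiplier


def realistic_total(b: BudgetInputs) -> float:
    """(cost per label x dataset size) x overhead + tool costs + PM costs."""
    labeling = b.cost_per_label * b.dataset_size * b.overhead_factor
    tools = b.tool_cost_per_month * b.duration_months
    pm = b.pm_cost_per_year * (b.duration_months / 12)
    return labeling + tools + pm


# Illustrative inputs: low end of the AV row, low end of tool and PM ranges.
av = BudgetInputs(cost_per_label=0.50, dataset_size=100_000,
                  tool_cost_per_month=2_000, pm_cost_per_year=40_000,
                  duration_months=3)
naive = av.cost_per_label * av.dataset_size
print(f"Naive per-label budget: EUR {naive:,.0f}")
print(f"Realistic total:        EUR {realistic_total(av):,.0f}")
```

Even with low-end inputs, the realistic figure lands well above the naive per-label budget. A one-time compliance audit, where required, would come on top.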

Autonomous Vehicles

Why Self-Driving Cars Drive Annotation Demand

German OEMs generate terabytes of sensor data daily: camera feeds, LiDAR point clouds, radar signals. Most of it is useless. Empty highway. Clear weather. Nothing interesting.

The valuable 1% contains edge cases: fog, rain, occlusion, unusual traffic patterns. Those are the labels that matter.

A single annotation project might include 3D point cloud labeling, instance segmentation, multi-sensor fusion, and exact bounding box coordinates for traffic signs at millimeter precision.

A 5% error in a static image? Acceptable. A 5% error in a 100 km/h driving scenario? A crash.

The Scale Problem (And the Solution)

The scale is mind-bending. One major OEM collects 100,000+ hours of driving video monthly. Pure manual annotation would require a small army.

Instead, vendors use semi-automated pipelines: models pre-label everything, humans validate and correct. This cuts annotation time 60-70% while maintaining quality.
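One common way to structure a pre-label-then-validate pipeline is to route each model prediction by its confidence. The sketch below is an assumption about how such routing could look, not a description of any specific vendor's system. The `PreLabel` type and the 0.95 threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    frame_id: str
    label: str
    confidence: float  # model's confidence in its own label, 0.0 to 1.0


def route(prelabels, auto_accept_at=0.95):
    """Split model pre-labels into an auto-accept queue and a human-review queue."""
    accepted, review = [], []
    for p in prelabels:
        (accepted if p.confidence >= auto_accept_at else review).append(p)
    return accepted, review


batch = [
    PreLabel("f001", "pedestrian", 0.99),
    PreLabel("f002", "cyclist", 0.62),
    PreLabel("f003", "traffic_sign", 0.97),
    PreLabel("f004", "pedestrian", 0.41),
]
accepted, review = route(batch)
print("auto-accepted:", [p.frame_id for p in accepted])
print("human review: ", [p.frame_id for p in review])
```

In safety-critical work the auto-accept queue would usually still be spot-checked by humans. The threshold only decides where reviewer time goes first.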

Vendors like BasicAI and Ango Hub built 3D sensor fusion suites specifically for automotive workflows. They know what German OEMs actually need because they've done hundreds of projects with them.

Medical Imaging

Not Every Annotator Can Label Medical Data

A radiologist in Vienna reviews a CT scan and marks a tumor. That annotation trains AI for early cancer detection.

But medical annotation isn’t crowd work. Radiologists, not general labelers, must mark CT/MRI scans. That makes medical labeling 2–3x the cost of standard CV work and forces vendors to provide strict audit trails and certified storage.

The Compliance Burden is Real

HIPAA compliance is mandatory when US patient data is involved. GDPR is non-negotiable. Patient privacy means no screenshots circulating internally. Data must be pseudonymized, encrypted in transit, and stored only in certified facilities. One breach and you could be paying millions in fines.
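As an illustration of the pseudonymization step, here is a minimal sketch using a keyed hash (HMAC-SHA256) from Python's standard library. This is one common technique, not the only one. The key, record fields, and identifier format are placeholders.

```python
import hashlib
import hmac


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Return a stable keyed-hash pseudonym for a patient identifier."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability in this sketch


# Placeholder key: a real key belongs in a secrets manager, never in code.
key = b"demo-key-not-for-production"
record = {"patient_id": "AT-1985-000123", "modality": "CT", "finding": "nodule"}
safe_record = {**record, "patient_id": pseudonymize(record["patient_id"], key)}
print(safe_record)
```

The same input and key always yield the same pseudonym, so scans from one patient can still be linked for annotation without exposing the identifier. Pseudonymized data still counts as personal data under GDPR, so the other safeguards remain necessary.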

The work requires clinically trained specialists: radiologists, pathologists, cardiologists. The formats are specialized too. DICOM files carry patient and acquisition metadata alongside the pixel data, so tooling has to handle both.

Multi-modality annotation requires cross-referencing spatial coordinates across PET and CT scans. This level of rigor doesn't scale.

European providers have built specialized infrastructure: compliance frameworks, radiologist networks, audit logs. These aren't advantages they advertise. They're table stakes.

LLM Fine-Tuning

Why LLM Annotation Requires Judgment, Not Just Labels

Language models changed the annotation game entirely.

LLM annotation is judgment work. Domain knowledge. Reading comprehension.

Annotators don't tick boxes. They score responses for factual accuracy, clarity, tone, and safety.
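A scoring rubric along these four criteria could be sketched as follows. The weights, the 1-to-5 scale, and the safety floor are hypothetical. A real project would take them from its annotation guidelines.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration; they sum to 1.0.
WEIGHTS = {"factual_accuracy": 0.4, "clarity": 0.2, "tone": 0.1, "safety": 0.3}


@dataclass
class Rating:
    factual_accuracy: int  # each criterion scored 1 (poor) to 5 (excellent)
    clarity: int
    tone: int
    safety: int


def overall(r: Rating, safety_floor: int = 3) -> float:
    """Weighted 1-5 score; a response below the safety floor scores 0."""
    if r.safety < safety_floor:
        return 0.0
    return sum(getattr(r, name) * w for name, w in WEIGHTS.items())


good = overall(Rating(factual_accuracy=5, clarity=4, tone=4, safety=5))
unsafe = overall(Rating(factual_accuracy=5, clarity=5, tone=5, safety=2))
print(f"good response:   {good:.2f}")
print(f"unsafe response: {unsafe:.2f}")
```

Treating safety as a hard gate rather than one more weighted term is a design choice: a fluent, accurate answer that is unsafe should not average its way into the training set.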

Domain Experts Are Non-Negotiable

A startup fine-tuning an LLM for medical reports needs radiologists evaluating generated text against actual clinical reports. A fintech company fine-tuning for compliance needs lawyers checking outputs for legal accuracy.

Europe has the talent. University-trained linguists. Domain experts with decades of industry experience. Native speakers across 24 EU languages.

But LLM annotation is slow and expensive. You can't scale it like image labeling. Quality is the only differentiator.

Synthetic Data + Human Validation

The Hybrid Approach That Works

Manual annotation alone can't scale anymore. The math doesn't work.

Enter hybrid workflows.

Generate synthetic data using generative AI models or physics-based simulators—automatically labeled, no human required. The labels are perfect because the generator knows what it created.

The catch? Synthetic data has blind spots.

Real-world distributions, lighting, weather, rare edge cases—synthetic generators miss them.

Humans spot the gaps immediately.

80/20 Rule: Where the Money Is

The winning approach: 80% synthetic + 20% human validation.

This reduces annotation costs 40-50% while maintaining accuracy. Generate millions of synthetic autonomous driving scenarios cheaply. Domain experts review the critical 20%—edge cases, rare weather, unusual traffic.

European teams are adopting this pattern now.

Automotive groups generate synthetic LiDAR point clouds using physics engines. Medical researchers create synthetic medical images using diffusion models. LLM teams generate instruction candidates, then have humans rank and filter them.

This shift changes the vendor game. It's no longer about the raw volume of labels. It's about domain expertise, quality control, and knowing which 20% matters.

Practical rule: use synthetic for scale, humans for edge cases and auditable examples.
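Here is a minimal sketch of how the human-review 20% might be chosen, assuming each synthetic sample already carries an `edge_case` flag (a hypothetical field). Edge cases fill the review budget first, and a random draw covers the remainder.

```python
import random


def pick_for_human_review(samples, review_share=0.20, seed=0):
    """Choose about review_share of samples: edge cases first, then a random fill."""
    budget = round(len(samples) * review_share)
    edge = [s for s in samples if s["edge_case"]]
    rest = [s for s in samples if not s["edge_case"]]
    rng = random.Random(seed)  # seeded so the selection is reproducible
    rng.shuffle(edge)
    rng.shuffle(rest)
    return (edge + rest)[:budget]


# Hypothetical synthetic driving scenes; every 7th one is tagged as an edge case.
scenes = [{"id": i, "edge_case": i % 7 == 0} for i in range(100)]
queue = pick_for_human_review(scenes)
print(len(queue), "scenes queued,", sum(s["edge_case"] for s in queue), "edge cases")
```

The random fill matters as much as the edge cases: it gives reviewers a chance to catch problems nobody thought to flag.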

GDPR and the EU AI Act: The Regulatory Squeeze

What the Laws Actually Demand

On August 2, 2025, the EU AI Act expanded its obligations for general-purpose AI models.

Providers must now document model training data with machine-readable provenance records, disclose copyrighted material used in training, register high-risk systems in an EU database, and conduct fundamental rights impact assessments. For many organizations, this effectively turns annotation workflows into part of a broader NIS2 requirements checklist, especially where AI systems support critical or regulated services.

This has direct implications for annotation.

GDPR already requires data protection impact assessments (DPIAs). The AI Act adds FRIAs on top.

Bias Detection is Now Mandatory

Organizations now must prove annotation quality processes don't introduce bias. For autonomous driving, document that pedestrian detection datasets include diverse body types, ages, genders. For medical AI, ensure training data doesn't over-index on wealthy populations.
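A first-pass coverage check on a labeled dataset can be as simple as counting. The sketch below flags attribute values that fall under a minimum share. The 10% threshold and the `age_group` attribute are hypothetical, and a real bias audit would go well beyond raw counts.

```python
from collections import Counter


def underrepresented(records, attribute, min_share=0.10):
    """Return attribute values whose share of the dataset is below min_share."""
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    return {v: n / total for v, n in counts.items() if n / total < min_share}


# Hypothetical pedestrian annotations with a coarse age-group attribute.
pedestrians = (
    [{"age_group": "adult"}] * 85
    + [{"age_group": "child"}] * 6
    + [{"age_group": "senior"}] * 9
)
flagged = underrepresented(pedestrians, "age_group")
print(flagged)
```

Note that this only sees groups that appear at least once. A group missing entirely from the data has to be checked against an explicit list of expected values.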

Your Annotation Vendor Must Track Everything

The compliance burden falls on whoever touches the data. Annotation vendors need systems that track:

- Who labeled each data point

- When it was labeled

- What guidelines were used

- Who reviewed it

- What feedback was incorporated

These audit trails are expensive to maintain. They're also mandatory. Any vendor without this infrastructure is betting on non-compliance.
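The five tracked items map naturally onto one record per annotation event. This is a minimal sketch of such a record serialized as one JSON line. The field names and the JSON Lines layout are assumptions, since neither regulation prescribes a specific schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AnnotationEvent:
    item_id: str
    label: str
    annotator_id: str                   # who labeled it
    labeled_at: str                     # when, as an ISO 8601 UTC timestamp
    guideline_version: str              # which guidelines were used
    reviewer_id: Optional[str] = None   # who reviewed it, if anyone yet
    feedback: List[str] = field(default_factory=list)  # feedback incorporated


def to_json_line(event: AnnotationEvent) -> str:
    """Serialize one event as a single machine-readable JSON line."""
    return json.dumps(asdict(event), sort_keys=True)


event = AnnotationEvent(
    item_id="scan-00042",
    label="lesion",
    annotator_id="ann-17",
    labeled_at=datetime.now(timezone.utc).isoformat(),
    guideline_version="v2.3",
    reviewer_id="rev-04",
    feedback=["boundary tightened after review"],
)
print(to_json_line(event))
```

Writing events append-only, one line per change, keeps the history intact: a corrected label becomes a new event rather than an overwrite.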

In-House Labeling: The Emerging Shift

Why Companies Are Building Internal Teams

Companies are building internal annotation capabilities instead of outsourcing everything.

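The in-house data labeling market in Europe is projected to grow from €11.5 billion in 2025 to €21.65 billion by 2033. That is reported as 11% annual growth, faster than outsourced services, although the two endpoints on their own imply closer to 8% compounded, so check the base year before planning around the headline rate.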

Why? Control. Data sensitivity. Quality consistency.

When proprietary datasets touch your competitive moat, external contractors aren't acceptable. A financial services firm doesn't want external teams labeling transaction data. A pharma company doesn't want external annotators touching patient records.

In-house teams also build institutional knowledge. They understand which edge cases matter. They iterate faster. They don't wait for vendor turnaround times.

The Trade-Off: Fixed Costs vs. Flexibility

The trade-off: fixed costs. Building annotation infrastructure requires hiring, tooling, management overhead. It makes sense for large enterprises with steady demand. Startups still outsource because per-unit economics don't work until you hit scale.
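The fixed-versus-variable trade-off reduces to a break-even volume. Here is a minimal sketch, with all prices hypothetical and chosen only to show the arithmetic.

```python
def break_even_volume(price_per_label, fixed_monthly, marginal_per_label):
    """Monthly label volume above which in-house is cheaper than outsourcing.

    Outsourced cost = volume * price_per_label
    In-house cost   = fixed_monthly + volume * marginal_per_label
    """
    saving_per_label = price_per_label - marginal_per_label
    if saving_per_label <= 0:
        return None  # in-house never pays off on cost alone
    return fixed_monthly / saving_per_label


# Hypothetical figures: EUR 0.50 outsourced, EUR 0.20 in-house marginal cost,
# EUR 30,000/month in salaries, tooling, and management.
volume = break_even_volume(price_per_label=0.50, fixed_monthly=30_000,
                           marginal_per_label=0.20)
print(f"Break-even at about {volume:,.0f} labels/month")
```

Below the break-even volume, the fixed costs dominate and outsourcing wins. Cost is only one input, though: data sensitivity can justify in-house work well before the numbers do.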

This bifurcation is reshaping the vendor landscape. External providers are moving upmarket—specialized workflows, complex domains (medical, legal, financial). High-volume commodity work is being internalized by companies with the scale to justify it.

Quality Control: Where Systems Catch What Humans Miss

Poor Annotation Quality Kills AI Systems

The stakes are concrete. A mislabeled medical image trains a bad diagnostic model. One edge case missed in an autonomous driving dataset can mean a crash. One biased instruction in LLM fine-tuning data propagates discrimination through the model.

The Layered Quality Approach

Leading European providers use layered quality control:

- Inter-rater reliability testing compares annotations from multiple human reviewers. If two radiologists disagree on a lung lesion, escalate to a senior radiologist.

- Consensus scoring means data points get labeled by multiple people. Disagreements trigger expert review.

- Automated quality checks flag suspicious annotation patterns.

- Domain expert review queues ensure complex cases go to senior annotators, not junior staff.

- Feedback loops send misclassified data back to annotators for retraining.

These processes cost money and take time. They're not scalable if volume is your only goal. They're essential if your goal is AI systems that actually work in production.
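Two of these layers are easy to sketch in code. The example below uses Cohen's kappa, a standard agreement measure for two raters, and a simple majority vote with an escalation signal. The two-thirds agreement threshold and the sample labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(a, b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    expected = sum(ca[k] * cb[k] for k in ca) / (n * n)  # chance agreement
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


def consensus(votes, min_agreement=2 / 3):
    """Return the majority label, or None to signal escalation to an expert."""
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


r1 = ["lesion", "clear", "lesion", "clear", "lesion", "clear"]
r2 = ["lesion", "clear", "clear", "clear", "lesion", "lesion"]
kappa = cohens_kappa(r1, r2)
print(f"kappa = {kappa:.2f}")
print(consensus(["lesion", "lesion", "clear"]))   # majority reached
print(consensus(["lesion", "clear", "unsure"]))   # no majority: escalate
```

Kappa corrects raw agreement for the agreement two raters would reach by chance, which is why it is a better health signal than a simple match rate when one label dominates.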

The Annotation Vendor Landscape

Which Platforms Do What

The European annotation stack has matured beyond simple dashboards.

- Security & compliance: Encord — for medical/HIPAA workflows.

- Automotive & multimodal: BasicAI, Ango Hub — strong LiDAR and sensor fusion support.

- Developer-friendly: Roboflow — API first and fast prototyping.

Choose Based on Your Actual Needs

Choose based on your data types, compliance requirements, and integration needs. Medical imaging needs DICOM support and radiologist workflows.

Autonomous driving needs LiDAR handling and sensor fusion. Financial services need text annotation and audit logging.

The Talent Problem

You Can't Train This in a Week

Annotation isn't a training-wheel job. You can’t just hire anyone off the street to label MRIs overnight. You can’t label clinical datasets without actual radiological expertise.

Europe's Unique Challenge

Europe compounds this. Labor is expensive. Immigration rules vary by country.

Building a team of 50 clinical annotators across Germany, France, and the UK requires navigating different labor markets, tax regimes, employment regulations.

This is why many providers have distributed teams across Eastern Europe. Lower labor costs. EU members. Easier employment logistics.

But it creates a challenge: maintaining quality and consistency across distributed teams requires tooling and management overhead.

Automation Is the Partial Answer

The alternative is automation. AI-assisted labeling reduces the number of humans needed. Self-supervised learning models learn from unlabeled data. Active learning systems identify which data points are worth human review.
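Active learning can be illustrated with uncertainty sampling, one of its simplest strategies: send humans the items where the model's predicted class distribution has the highest entropy. The predictions below are made up for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions, k):
    """Return the ids of the k items the model is least certain about."""
    ranked = sorted(predictions, key=lambda item: entropy(item[1]), reverse=True)
    return [item_id for item_id, _ in ranked[:k]]


# Hypothetical model outputs: (item id, class probabilities).
preds = [
    ("img-1", [0.98, 0.01, 0.01]),
    ("img-2", [0.40, 0.35, 0.25]),
    ("img-3", [0.70, 0.20, 0.10]),
    ("img-4", [0.34, 0.33, 0.33]),
]
chosen = select_for_review(preds, k=2)
print(chosen)
```

The confident prediction (`img-1`) never reaches a human, while the near-uniform ones do. That is where a label changes the model the most.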

We're seeing two parallel trends: (1) annotation services getting more specialized and expensive (medical, automotive, legal), and (2) annotation getting more automated (commodity tasks).

What to Do Now

The European annotation landscape is tightening. Compliance is non-negotiable. Quality standards are rising. Costs are rising. Demand is exploding.

1. Choose vendors early

Don't wait until you need 100,000 labels. Test them on a pilot. Understand their QA processes, compliance certifications, tool integrations.

2. Plan for in-house validation

Even if you outsource initial annotation, budget for internal review infrastructure. Your data is your competitive advantage.

3. Invest in synthetic data

Don’t rely on fully manual labeling to scale. Use a hybrid approach that combines synthetic data with human validation for the critical 20%.

4. Document everything

The EU AI Act is here. FRIAs and DPIAs are mandatory. Annotation audit trails are compliance requirements. Build this into your processes from day one.

5. Hire domain experts

If your annotation task requires judgment (medical imaging, legal analysis, financial compliance), don't cheap out. Domain expertise is the difference between usable datasets and garbage.

The Bottom Line

The annotation market isn't a commodity anymore. It's specialized expertise. An ecosystem where compliance, quality, and domain expertise are the moats. Vendors who understand this are building sustainable businesses. Those who don't are racing to the bottom.

➡︎ Ready to scale annotation operations with vetted European talent? Index.dev connects you with data annotation specialists and quality assurance experts who understand GDPR compliance and audit requirements.

➡︎ Want to explore more about AI in hiring and recruiting automation? Read our deep dives on how to integrate AI tools in hiring workflows, discover the top 17 AI recruiting tools for hiring software developers, learn to spot biases in AI hiring tools, and explore the 7 best AI tools for large-scale hiring. Browse our full collection of AI recruitment insights on Index.dev to stay ahead of the curve in 2025 hiring trends.