Picture this: a shortlist of promising candidates and a single overnight production bug that costs the business money. Which hire prevents that bug? Which one ships a monitored model that scales?

In 2025, hiring for AI is less about degrees and papers and more about dependable delivery under real constraints — data limits, latency budgets, cost caps, and ethical guardrails. Start every role brief with the outcome and the operational constraint; that focus makes the rest of the hiring process simple, comparable, and predictive.

This article explains how to design assessments that surface those patterns, how to score consistently, and how to treat hiring as a product that improves with each hire.

➡︎ Hire AI/ML developers who deliver measurable outcomes.

Understand market realities

The market shifted fast in 2025. Generative-AI demand exploded. Job postings moved from research titles to production responsibilities. A short, reproducible take-home reveals more than résumés listing model names.

Employers pay premiums for skills that create product outcomes. Bad hires cost hundreds of thousands per position.

Key market signals:

- Only 15-20% of developers possess verified AI skills

- Over 200,000 global AI/ML job postings exist

- AI/ML roles pay 67% higher compensation than traditional software positions

- 3.2:1 talent shortage ratio creates hiring pressure

The hiring challenge:

Hire faster. Hire for measurable impact. Understanding market pressures establishes why we need better hiring. Those market signals make the same point: hiring must be judged by product outcomes. Which leads to a single decisive test — can the candidate ship a monitored endpoint?

Why hiring for AI is a product problem

If hiring were a product, the spec would start with a single goal: the outcome and its operational guardrails. The decisive hiring question is this: can the candidate move messy data into a monitored, cost-aware endpoint that serves users reliably?

If yes, you’ve narrowed the bar to practical delivery. If not, degrees and publications are comforting but not decisive.

Look for reproducibility and operational judgment first: a Docker/pinned-script run that reproduces results, a one-page trade-off memo, concrete monitoring thresholds, and basic CI/CD notes. Those signals separate people who prototype from people who ship.

AI/ML is a portfolio of specializations. Production machine learning engineers, data scientists, NLP specialists, and computer-vision experts need different evidence. Map roles to work, not titles, and you’ll avoid the common trap of conflating research prestige with production competence.

Define core competencies (what to test)

Before you design assessments, decide what competence looks like for the role. Below are the non-negotiables every assessment should be able to surface.

1. Programming proficiency

Python remains the lingua franca. Tests should show reproducible scripts, modular code, pinned dependencies, and simple tests. Look for clean organization, readable functions, and a README that explains how to reproduce the run in under 30 minutes.

2. Mathematical foundation

Ask candidates to connect math to behaviour: why a regularizer reduces variance here, or how uncertainty in predictions changes product decisions. Avoid cold recall — assess applied understanding in business contexts.

3. Machine learning fundamentals

Expect a working knowledge of supervised/unsupervised learning, validation strategies, evaluation metrics (precision/recall/F1/AUC), and pragmatic mitigation for overfitting.

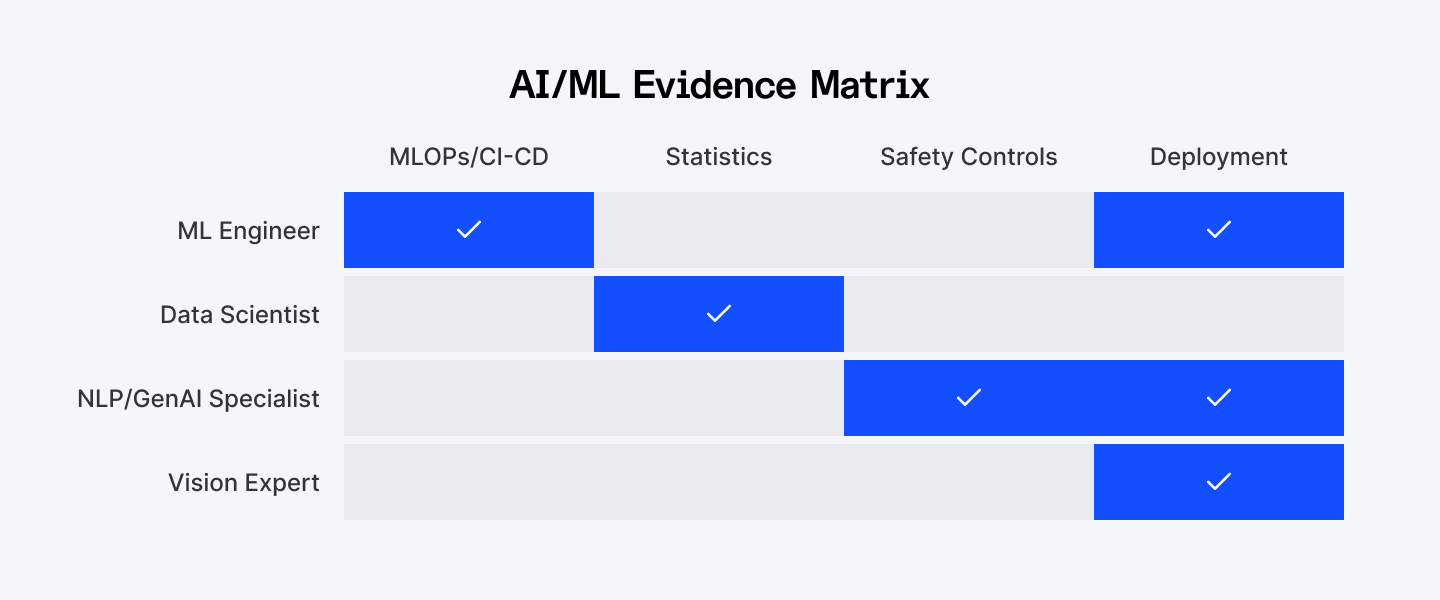

4. Specializations (map to evidence)

For each specialization, list competence proof:

- Machine Learning Engineer (production):

- Show MLOps pipelines, CI/CD for model builds, incident-response examples

- Show MLOps pipelines, CI/CD for model builds, incident-response examples

- Data Scientist:

- Show experimental design, statistical analysis, visualizations that tie metrics to business outcomes

- Show experimental design, statistical analysis, visualizations that tie metrics to business outcomes

- NLP/Generative-AI Specialist:

- Show safe fine-tuning, prompt-control strategies, harmful output prevention methods

- Show safe fine-tuning, prompt-control strategies, harmful output prevention methods

- Computer Vision Expert:

- Transfer learning, augmentation, and deployment constraints for inferencing at scale.

- Transfer learning, augmentation, and deployment constraints for inferencing at scale.

This matrix shows which evidence types each AI/ML role requires. It will help you avoid the common mistake of confusing different specializations.

Map each role to the evidence it should produce. Define which of the above are essential and which are nice-to-have for a given role. That decision determines screening thresholds and the rubrics you’ll use later. Index.dev's vetting approach provides useful reference for mapping evidence to role expectations.

Evaluate specialization areas

AI/ML hiring isn’t one-size-fits-all. After you define core competencies, map the role to a specialization and require matching evidence. Below are concise, role-specific probes and short assessment prompts you can reuse.

1. Natural Language Processing (NLP)

NLP work tests data hygiene, tokenization choices, and safety controls as much as model selection. Evaluate experience with libraries like Hugging Face, spaCy, and NLTK. Strong candidates demonstrate how preprocessing, embeddings, and prompt/control strategies affect downstream risk and latency.

What to ask for/assess

- Repo or notebook showing end-to-end text pipeline: tokenization → featurization → model → inference.

- Evidence of safe fine-tuning or prompt-control (filtering, classifiers for harmful outputs).

- Simple monitoring plan for semantic drift (embedding drift or class-shift alerts).

Sample take-home (NLP)

Classify short support messages into six labels with confidence. Deliver reproducible code, a 1-page trade-off memo, and a brief drift-monitoring checklist.

Interview probes

- Why choose X tokenizer vs Y?

- How would you detect and respond to semantic drift?

- Describe one mitigation for harmful outputs in generative models.

2. Computer Vision

CV candidates must show data augmentation sense, model-size tradeoffs, and inference optimization for edge or cloud deployment.

What to ask for/assess

- Example repo using transfer learning with a reproducible training script.

- Evidence of augmentation strategy and a short note on model size/latency trade-offs.

- A deployable inference stub or quantization notes.

Sample take-home (CV)

Create an image classifier for three classes using limited labeled data. Deliver reproducible training, a small inference container, and a one-page deployment/latency plan.

Interview probes

- How do you pick augmentations for a small labeled set?

- When is a larger backbone justified vs a distilled model?

3. Deep Learning & Distributed Training

These specialists must handle hyperparameter schedules, GPU usage, and scale concerns.

What to ask for/assess

- A training script that supports batch sizing, LR schedule, and checkpointing.

- Notes on reproducible runs across seeds and deterministic evaluation.

- Evidence of cost-aware training decisions (mixed precision, gradient accumulation).

Sample prompt

Given a large dataset, sketch a training plan that balances convergence speed, cloud cost, and reproducibility; include a runnable minimal example.

Interview probes

- How would you prevent OOM in multi-GPU training?

- Describe a learning-rate schedule you’ve used and why.

Implement practical assessment methods

Move from theory to demonstration. Use a mix of portfolio, timeboxed take-homes, live sessions, and presentations. Each reveals different signals.

Portfolio review

Don’t treat GitHub as résumé garnish. Systematically check for: reproducible runs, deployable artifacts (Docker/run.sh), README clarity, and evidence of monitoring/metrics. Rate repos for production intent (0–4).

Follow the Index.dev portfolio review playbook.

Take-Home Projects

Design short, realistic sprints: clear metric, timebox (~8 hours expected), and strict reproducibility requirement (Docker/run.sh, pinned deps, demo script). Require a one-page trade-off memo and a short screencast. Make reviewer reproduction feasible in ≤30 minutes.

Scoring tip: weight reproducibility & deployment readiness high — these predict on-the-job impact more than an extra 0.01 improvement in model accuracy.

Live Coding & Debugging

Use focused debugging prompts (broken script, failing pipeline) rather than algorithm puzzles. Evaluate thought process, debugging steps, and communication.

Technical Presentations

Ask candidates to present a past project in 10 minutes, focusing on decisions, failure modes, and monitoring. Use a 10-question panel rubric to score clarity, impact, and defense of trade-offs.

Evaluate soft skills and communication

Technical chops without soft skills are brittle. Measure the candidate’s ability to explain trade-offs, decompose ambiguity, and operate in teams.

Communication abilities

Require a 90-second “explain to an exec” summary in interviews. Score for clarity and outcome focus.

Problem-solving approach

Present an ambiguous product problem and score for structured decomposition: assumptions, hypotheses, quick experiments, and rollback plans.

Ethical AI understanding

Ask candidates to produce a 1-paragraph model card or risk note during the take-home and defend it in the interview. Probe for concrete thresholds (when would you stop a model?) and remediation steps.

Continuous learning

Ask for one example in the past year where they learned a new tool, and how they applied it — shows practice, not just interest.

Up next: Learn essential strategies for evaluating developers' problem-solving skills.

Design structured interview processes

Consistency reduces bias and makes results comparable.

- Multi-stage pipeline:

- Automated filter → portfolio check → take-home → rubriced review → live design review → references.

- Automated filter → portfolio check → take-home → rubriced review → live design review → references.

- Panel composition:

- Include a hiring manager, a peer, and a product/compliance stakeholder. Rotate interviewers regularly and hold calibration sessions monthly.

- Include a hiring manager, a peer, and a product/compliance stakeholder. Rotate interviewers regularly and hold calibration sessions monthly.

- Reference checks:

- Ask for concrete examples of production incidents, rollback decisions, and monitoring they implemented.

- Ask for concrete examples of production incidents, rollback decisions, and monitoring they implemented.

Leverage modern assessment tools

Automation scales early filtering but does not replace human review.

- Use automated tests for code execution, unit-level checks, and obvious plagiarism.

- Use collaborative platforms (GitHub, Kaggle) for continual evidence of practice.

- Keep automated tools narrowly scoped — they should fail fast, not decide fit.

Explore the 15 best developer assessment tools (both free and paid options).

Build onboarding and performance monitoring

Hiring is the start — onboarding makes it stick.

- Week-one onboarding checklist:

- Environment setup, repo walkthrough, 30/60/90 goals, assigned mentor.

- Environment setup, repo walkthrough, 30/60/90 goals, assigned mentor.

- 30/60/90 evaluation:

- Reproducible demo in week 2, first small production task by day 30, independent project ownership by day 90.

- Reproducible demo in week 2, first small production task by day 30, independent project ownership by day 90.

- Early performance metrics:

- Time-to-first-PR, code review quality, and ability to follow/run monitoring playbooks.

- Time-to-first-PR, code review quality, and ability to follow/run monitoring playbooks.

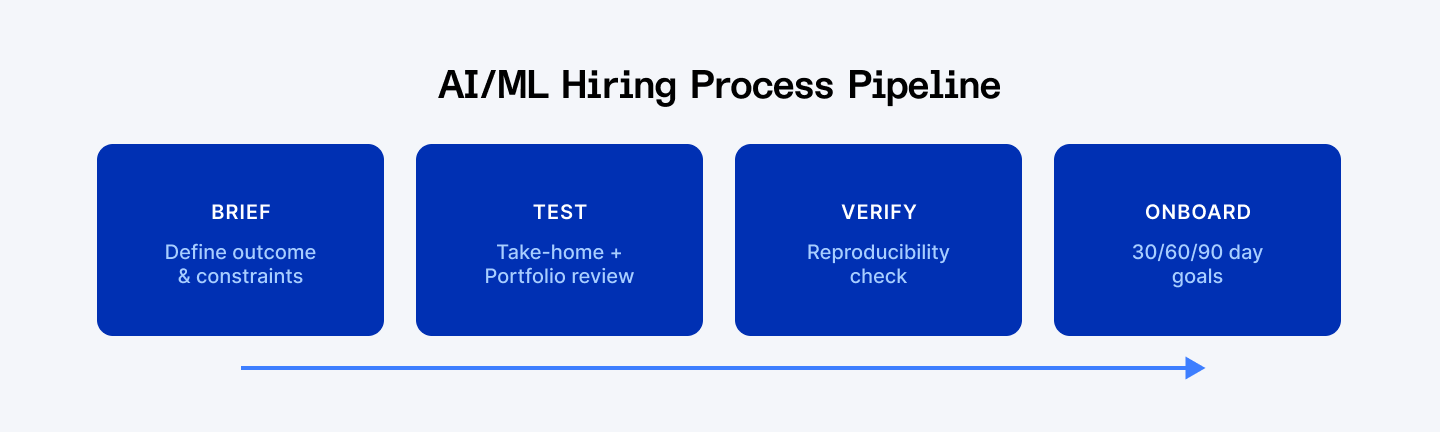

Write role briefs like product specs

When roles read like product briefs, hiring becomes repeatable: brief → test → verify → onboard. That pipeline produces faster decisions, fewer costly mistakes, and clearer accountability for the team that owns the product.

1. Name the outcome first

State the single business outcome the hire must deliver.

Use a single sentence: who the user is, what the system must do, and the metric that defines success. This removes ambiguity and forces candidates to design for the same acceptance criteria.

Example:

“Classify incoming support tickets into six labels so routing automation reduces average first response time by 25% within 90 days.”

That sentence sets the product, the user, and the metric. It also suggests evaluation axes: accuracy, latency, and time-to-impact.

2. Add operational constraints immediately

Add latency, cost, and compliance limits immediately after the outcome. These constraints change architecture decisions and expose operational judgement.

Example constraints:

“Latency: p95 < 200ms; budget: <1M tokens/day; retention: data kept 90 days; HIPAA compliance required.”

Constraints convert toy experiments into engineering problems. Here’s a concrete, copy-paste brief and a take-home template you can use right away.

3. Map the role to the work, not the title

List the evidence each specialization must produce. This reduces false positives and aligns reviewers on expectations. Keep a small library of role templates to speed future hiring and ensure consistent briefs across teams.

Discover which human skills are most valuable in an AI-driven job market.

Design assessments that require runnable evidence

Assessments should force candidates to make trade-offs under constraints and produce artifacts you can verify quickly.

Make the take-home a production sprint

Provide a tight brief, a realistic dataset (or generator), and a clear success metric. Timebox the project (≈8 hours expected work, 48–72 hour submission window). Require:

- A reproducible run (Dockerfile or run.sh).

- A one-page trade-off memo (model choice, failure modes, monitoring plan).

- A short (5–8 minute) screencast walkthrough.

Why this format: it surfaces engineering discipline, pragmatic trade-offs, and the candidate’s ability to communicate decisions succinctly.

Demand reproducibility, not polished demos

Require pinned dependencies, a README with reproduction steps, and a small test harness. During review, the reviewer should reproduce claimed metrics in ≤30 minutes. Reproducibility is the single best predictor that someone can move code into production.

Ask for a trade-off memo and a monitoring checklist

A great candidate supplies:

- Model choice and rationale.

- Two failure modes and their impact.

- Monitoring checklist: Alerts for data drift, label distribution changes, latency service level objective (SLO) breaches, cost anomalies, and a clear rollback condition.

Operational judgment shows up in those details.

Sample brief and take-home

Two-line job brief

Task: Build an English-language ticket classifier that assigns one of six labels and returns a confidence score for routing.

Success: Launchable endpoint with p95 latency < 200ms and precision ≥ 0.88 on the holdout within 90 days.

Sample 48–72 hour take-home (NLP ticket classifier)

Deliverables:

- Repo with run.sh or Dockerfile.

- Script to reproduce training, evaluation, and a small inference server.

- One-page trade-off memo describing model choice, two failure modes, and monitoring/rollback plan.

- 5–8 minute screencast walkthrough.

Constraints & expectations:

- Use provided anonymized ticket data (or the supplied generator).

- Expect ≈8 hours of work; project timeboxed to 48–72 hours.

- Reviewer will attempt to reproduce results in 30 minutes.

Scoring (high level): reproducibility; metric performance vs baseline; deployment intent; monitoring plan; communication clarity.

Deliverables are useful only if you score them consistently, below is a compact rubric that teams can use to compare candidates objectively.

Score objectively and verify reproducibility

Use a compact rubric

Score each competency 0–4 and sum. Use the same rubric across candidates.

Competency examples: problem framing; data handling; modeling choices; reproducibility; deployment & monitoring readiness; ethics & bias mitigation; communication.

Competency | 0–4 guide | Weight |

| Problem framing & assumptions | No coherent framing → Excellent, explicit assumptions | 15% |

| Data handling & feature pipeline | Non-reproducible / poor pipeline → Clean, efficient pipeline | 20% |

| Modeling choices & metrics | Poor alignment → Strong alignment and justification | 20% |

| Reproducibility & code hygiene | Fails to run → Reproduces in ≤30 min, tests present | 20% |

| Deployment & monitoring readiness | No plan → Concrete monitoring + rollback | 15% |

| Ethics & bias mitigation | No consideration → Explicit mitigations and thresholds | 10% |

Pass guidance: Aim for a minimum pass threshold of 70% (weighted).

Example:

A candidate scoring 78% (strong reproducibility + good monitoring + average ethics) should move to live design review; a candidate scoring 62% fails the repo reproducibility gate and should not proceed.

Use numerical scores to shortlist, then confirm with a live design review.

Run a 30-minute verification

Checks to run while scoring:

- Two-command run completes and produces the reported metric.

- Dependencies are pinned and documented.

- Dockerfile or run script exists and README has reproduction steps.

- Trade-off memo present.

If reproducibility fails, downgrade reproducibility and deployment scores immediately. Reproducible work beats impressive slides.

Evaluate model governance and ethics with the same rigor

Require a short model card or risk note

Ask candidates to include a brief model card: intended use, data sources, limitations, and key metrics for fairness and safety.

Probe for mitigation, not platitudes

In interviews, ask for concrete mitigations: which bias metrics were measured, what thresholds triggered action, and what remediation steps were taken. Vague answers should lower the ethics score.

Governance is not optional. In 2025, organizations face both regulatory and reputational risk if models run unchecked. Tie hiring signals to governance readiness.

When to use automated screening and when not

Automated tools scale early filtering for basic coding competence and plagiarism detection. Use them to remove submissions that fail basic execution or are plagiarized, but don’t let automation decide final fit. Preserve human review for interpretive signals: trade-offs, monitoring plans, architecture reasoning, and ethical judgments.

Practical rules

- Run automated checks for execution, simple unit tests, and plagiarism.

- Reject early if the candidate cannot reproduce a simple example or submit copied work.

- Always pair automated passes with a human review of the take-home and memo.

Conclusion

Effective AI/ML developer evaluation requires structured approaches combining technical assessment, practical demonstration, and soft skills validation. Success depends on clear requirements definition, comprehensive evaluation frameworks, and continuous process refinement.

The competitive 2025 market demands strategic hiring approaches leveraging modern assessment tools and global talent networks. Organizations implementing rigorous evaluation standards while maintaining efficient processes gain significant competitive advantages.

Want to dive deeper into AI hiring and developer evaluation?

Explore more practical guides on evaluating technical skills, building assessment frameworks, and AI developer rates. Browse our complete collection of AI hiring articles and discover more insights from Index.dev experts.

Looking to hire AI/ML developers?

Index.dev connects you with pre-vetted engineers ready to ship reliable, monitored AI systems. Our top 5% of vetted AI talent have proven portfolio evidence, reproducible code, and operational judgment. Scale confidently with experts who understand deployment constraints, cost controls, and ethical AI, not just model accuracy.