Until now, AI has lived in a narrow role. It answered questions. Suggested code. Flagged issues. Helpful, but passive. Always waiting for a human to lead. That limitation quietly defined the boundaries of what AI could be trusted to do.

Agentic AI breaks that boundary.

These systems interpret goals, gather context, choose tools, sequence actions, and execute end-to-end tasks. They can notice what’s wrong before they’re asked. They can decide what to do next. And they can act without constant supervision.

That changes how software gets built. And it changes what it means to be a software engineer.

This is the rise of agentic engineering. And it forces an uncomfortable but necessary question.

If software can act on its own, what exactly is the human role now? That question sits at the center of the next decade of software development, and of the skills that will matter by 2026.


5 Trends Accelerating Agentic AI in Engineering

The transition from "chatting with a bot" to "orchestrating a workforce" is moving faster than the cloud transition did a decade ago. Here are the five trends behind it:

1. Agentic AI has crossed into production

Nearly 57% of teams already have agents running in production, and another 30% are actively preparing deployments. Large enterprises are leading, not because they move faster, but because the payoff is now big enough to justify the risk.

2. Coding agents are the daily workhorse

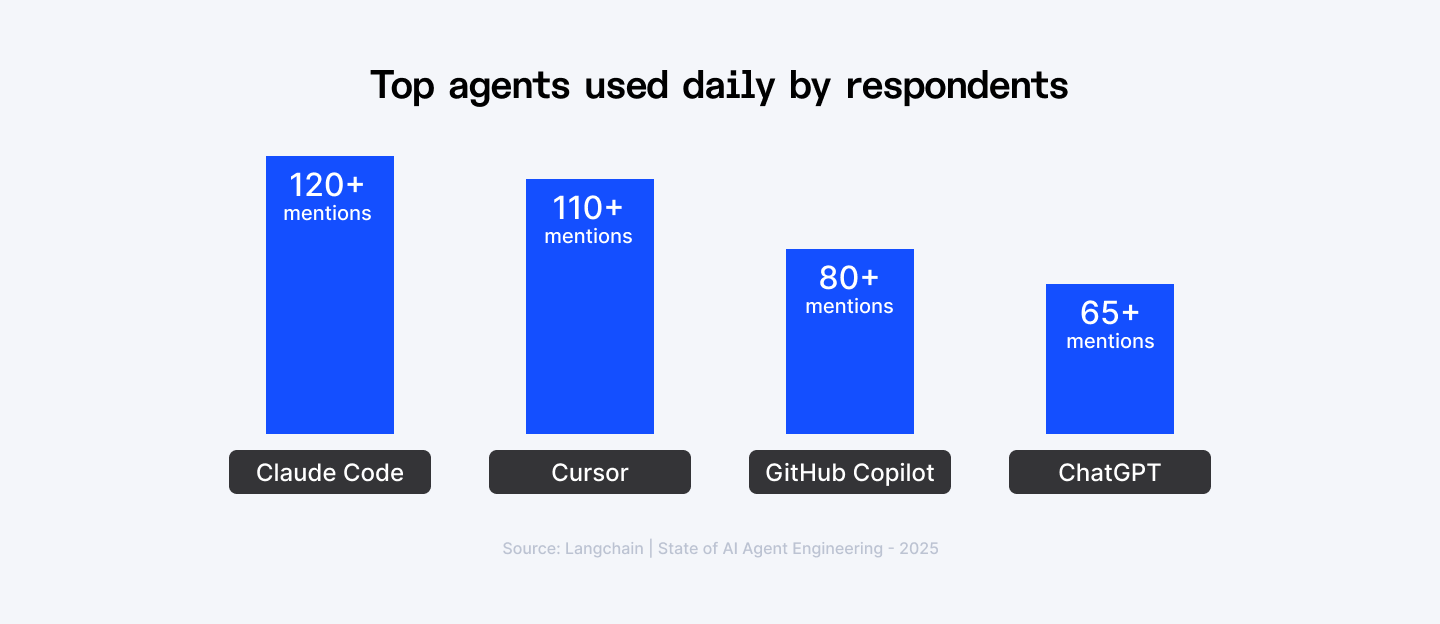

If there’s one place agents have earned trust, it’s coding. Tools like GitHub Copilot, Cursor, and Claude Code dominate daily use. According to Jellyfish, 90% of engineering teams now use AI coding tools, up from 61% just one year ago.

3. Quality, not cost, is the real blocker

About one third of teams cite quality as their top concern, covering accuracy, consistency, tone, and policy compliance. Cost worries have dropped. Reliability worries have not.

4. Observability is now table stakes

Almost 89% of teams have implemented observability for their agents, far outpacing formal evaluations. If you can’t trace what an agent did, why it did it, and what it touched, you can’t safely scale it.

5. Adoption is accelerating faster than most roadmaps

At the start of 2025, 51% of companies used agentic AI. By May, that number jumped to 82%. Code review cycles are already faster, and Gartner predicts that by 2028, 15% of daily business decisions will be executed autonomously.

What Is Agentic AI?

Agentic AI comes down to three capabilities that traditional AI lacks: it can reason through problems, plan sequences of actions, and execute those actions using external tools.

When you ask a standard AI system a question, it generates an answer based on patterns in its training data. When you give an agentic AI system a problem, it figures out what information it needs, where to get it, what steps to take, and then takes them.

The technical foundation is a process called ReAct: Reasoning and Acting. The system doesn't just think or just do. It cycles between the two. It reasons about what action to take, takes that action, observes the result, reasons about what to do next, and continues until the task is complete.

Think of it like this: A coding copilot suggests the next line of code. An agentic AI coding system reads your error logs, identifies the root cause, searches your codebase for similar patterns, checks Stack Overflow for relevant solutions, writes a fix, runs your test suite, and commits the change if tests pass.
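The reason, act, observe cycle can be sketched in a few lines. This is a minimal illustration, not a production agent: `scripted_policy` stands in for the language model, and the tool names `read_logs` and `run_tests` are hypothetical placeholders for real integrations.

```python
from typing import Callable, Dict, List, Tuple

# One history entry: (thought, action name, observation).
Step = Tuple[str, str, str]


# Hypothetical tools: plain functions standing in for real integrations.
def read_logs(_: str) -> str:
    return "ZeroDivisionError in billing.average()"


def run_tests(_: str) -> str:
    return "all tests passed"


TOOLS: Dict[str, Callable[[str], str]] = {
    "read_logs": read_logs,
    "run_tests": run_tests,
}


def scripted_policy(goal: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for the LLM: returns (thought, action, action_input)."""
    if not history:
        return ("I need to see the error first.", "read_logs", "")
    if history[-1][1] == "read_logs":
        return ("A fix is drafted; verify it.", "run_tests", "")
    return ("Tests pass, so the task is done.", "finish", "fix verified")


def react_loop(goal, policy, tools, max_steps: int = 5):
    """Alternate reasoning and acting until the policy finishes or the budget runs out."""
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, arg = policy(goal, history)    # reason
        if action == "finish":
            return arg, history
        if action in tools:
            observation = tools[action](arg)            # act
        else:
            observation = f"unknown tool: {action}"
        history.append((thought, action, observation))  # observe
    return "stopped: step budget exhausted", history


result, trace = react_loop("fix failing build", scripted_policy, TOOLS)
```

The `max_steps` budget is the important design choice: a loop that can act needs a hard stop that does not depend on the model deciding it is finished.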

The architecture has four essential components:

- Memory systems that track context across interactions. Both short term (what happened in this session) and long term (patterns from previous work). This is what allows an agent to learn from experience rather than treating every task as new.

- Planning frameworks that break complex goals into achievable steps. The agent doesn't just execute a fixed script. It evaluates options, chooses approaches, and adjusts when obstacles emerge.

- External tool access that extends capability beyond the model itself. Web search, code interpreters, database queries, API calls. The agent knows when it needs information it doesn't have and how to retrieve it. Standards like Model Context Protocol are emerging to make these integrations reliable and secure.

- Goal orientation that keeps the agent focused on outcomes rather than just completing steps. It understands what success looks like and can course correct when initial approaches don't work.

What makes this powerful is the synthesis. Memory without tools is just a chatbot with better context. Tools without reasoning are just automation. Planning without execution is just recommendation. Together, they create systems that can handle open ended tasks with genuine autonomy.

But autonomy introduces risk. An agent that can take action can take the wrong action. An agent that can access tools can misuse them. An agent that can plan can optimize for the wrong objective. Which is why the most critical component isn't technical at all: it's the governance layer that defines boundaries, monitors behavior, and maintains human oversight where it matters.


How Agentic AI Redefines Software Engineering Roles

Software engineering is moving away from execution and toward intent, oversight, and system design. And it’s happening faster than most job titles can keep up with.

Already, over 13% of pull requests are generated by bots, according to LinearB. That number will not plateau. It will compound. As agents mature, writing code becomes the easiest part of the job, and the least differentiating.

From coder to architect to systems designer

Engineers are no longer valued primarily for how fast they can write logic. They are valued for how well they can design systems that decide, act, and recover without constant supervision.

This is the shift from coder to architect. From builder to system designer. From executing tasks to managing autonomy.

If you are building agentic frameworks that write code, test systems, and deploy services, you are no longer building applications. You are building the factories that build applications. That mindset changes everything.

Instead of asking “What code should I write?”, engineers now ask:

- What decisions will this system need to make?

- What context does it require to make them well?

- What happens when it fails?

- And how do I keep humans meaningfully in control?

Software engineering roles are mutating

Across teams, we’re seeing a clear pattern. Roles are moving away from fixed logic and toward behavior design, orchestration, and accountability.

- Backend developers are becoming specialists in agent interactions. Their value lies in how well agents can use tools, APIs, and context.

- Solution architects are turning into orchestration architects. They design how multiple agents coordinate, fail safely, and scale under uncertainty.

- Data engineers are evolving into curators of knowledge and memory. Their job is no longer just pipelines, but relevance, retrieval, and semantic quality.

- QA engineers are becoming auditors of autonomous behavior. They test not just correctness, but judgment, bias, and adaptability.

| Traditional Role | Agentic Era Role | What Skills Matter Now |
| --- | --- | --- |
| Backend Developer | Agent Systems Engineer | Tool and API design, context shaping, decision boundaries, agent reliability |
| Solution Architect | Agent Orchestration Architect | Multi-agent coordination, protocol design, failure handling, system resilience |
| Data Engineer | Knowledge & Memory Architect | Long-term memory design, retrieval quality, semantic relevance, data trust |
| QA Engineer | Autonomous Systems Auditor | Behavior testing, decision validation, bias detection, safety and compliance |

Every role shifts away from writing deterministic logic and toward shaping how autonomous systems behave, interact, and stay accountable.

A day in the life of a software engineer: then vs now

The shift becomes obvious when you look at daily work. Before, a backend developer received a feature request, wrote endpoints, tested them, and deployed. Now, an agent-focused engineer receives a business objective. They decide which tools an agent can use, what data it can access, and what decisions it is allowed to make. They define constraints, context windows, and escalation paths. They test not only for functional correctness, but for behavior under uncertainty.

The code still exists. But it’s no longer the center of gravity.

That requires a different mindset. A different skill set. And a different definition of seniority.


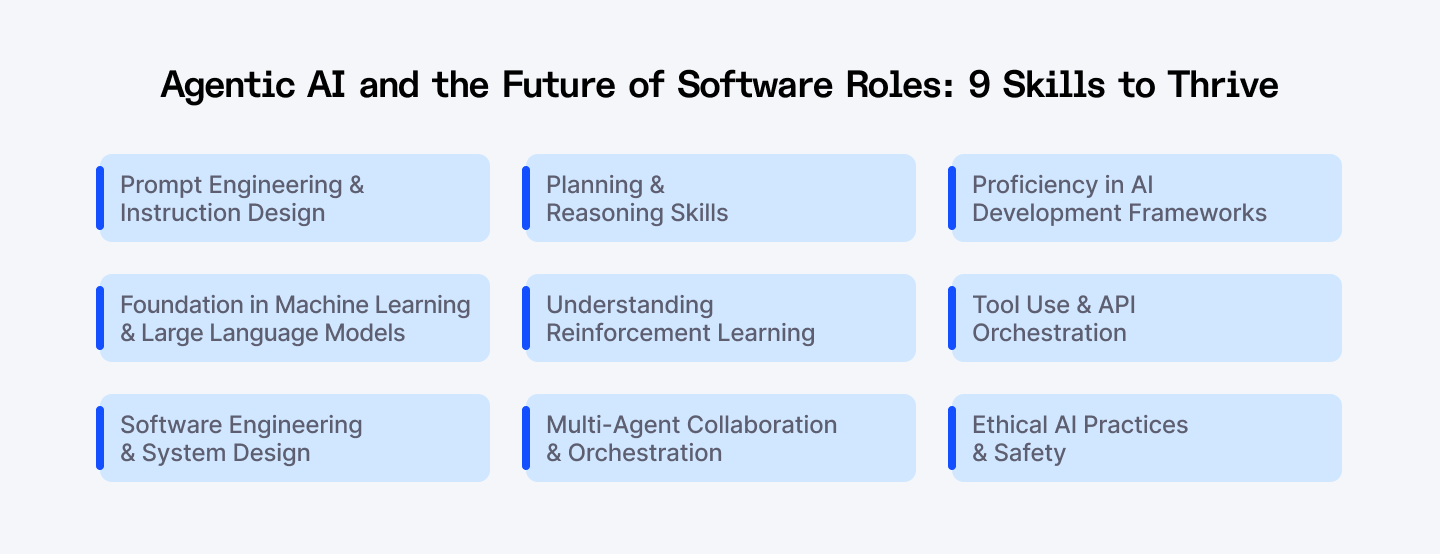

The Skills That Separate Agentic Engineers from Everyone Else

1. Prompt Engineering & Instruction Design

Most engineers think prompt engineering is about finding the right words to ask AI for what you want. That's search query thinking. In agentic systems, prompts are executable specifications that define how an autonomous agent reasons, plans, and acts.

The stakes are different when the system doesn't just respond but takes action. A vague prompt doesn't just return a bad answer. It cascades into flawed planning and incorrect execution.

Consider the difference between these two instructions. "Summarize customer sentiment" is a request that could mean anything. The agent has no constraints, no context, no decision framework. "Summarize customer sentiment from support tickets in the last 90 days, categorize by urgency, and highlight the top three recurring complaints" is a specification. It defines scope, structure, and output format.

To build this skill, focus on three core practices:

- Experiment with few-shot and chain-of-thought prompting to give the agent reasoning patterns to follow.

- Anchor context with role-based prompts, such as “act as a financial analyst,” to align expectations.

- Iteratively test, refine, and apply guardrails to filter irrelevant, unsafe, or low-quality outputs. Avoid overloading prompts with unnecessary details, and don’t under-specify tasks—the art lies in balancing clarity and flexibility so the agent can adapt intelligently.
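One way to treat a prompt as a specification is to make its required parts explicit and refuse to render it when one is missing. The sketch below assumes a hypothetical `TaskSpec` structure; the field names are illustrative and not taken from any framework.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class TaskSpec:
    """Hypothetical prompt specification: role, task, scope, and output format."""
    role: str
    task: str
    scope: str
    output_format: str
    examples: List[Tuple[str, str]] = field(default_factory=list)  # few-shot pairs

    def missing_fields(self) -> List[str]:
        required = ("role", "task", "scope", "output_format")
        return [name for name in required if not getattr(self, name).strip()]

    def render(self) -> str:
        missing = self.missing_fields()
        if missing:
            # An under-specified prompt fails here, before the agent acts on it.
            raise ValueError(f"under-specified prompt, missing: {missing}")
        lines = [
            f"Role: {self.role}",
            f"Task: {self.task}",
            f"Scope: {self.scope}",
            f"Output format: {self.output_format}",
        ]
        for i, (example_in, example_out) in enumerate(self.examples, start=1):
            lines.append(f"Example {i} input: {example_in}")
            lines.append(f"Example {i} output: {example_out}")
        return "\n".join(lines)


spec = TaskSpec(
    role="support analyst",
    task="Summarize customer sentiment",
    scope="support tickets from the last 90 days",
    output_format="urgency categories plus the top three recurring complaints",
    examples=[("Ticket: app crashes on login", "urgency=high; complaint=login crash")],
)
prompt = spec.render()
```

Rendering a spec with only `task` filled in raises a `ValueError`, which turns "don't under-specify tasks" into something a test suite can enforce.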

2. Foundation in Machine Learning & Large Language Models

You can't architect what you don't understand. Building reliable agentic systems requires more than knowing how to call an API. You need to understand what's happening inside these models, where they excel, and crucially, where they fail in ways that could break your systems.

The essential competencies here go deeper than surface level familiarity:

- Transformer architecture and attention mechanisms to predict how context windows affect agent memory and reasoning.

- Training methodologies, particularly reinforcement learning from human feedback.

- Model evaluation metrics and benchmarks so you can assess whether a model is actually suited for your use case or just performs well on narrow test sets.

- API integration and LLM providers to understand their rate limits, cost structures, and latency patterns.

Build this foundation deliberately:

- Start by implementing simple models from scratch, even if you'll never use them in production. Build a basic transformer. Train it on a small dataset. Watch how attention patterns emerge.

- Then systematically test commercial models against edge cases in your domain. Don't just test happy path scenarios. Find the boundaries where models become unreliable. Understand whether failures are consistent or random, because that changes how you build safety mechanisms.

- Finally, stay current with model releases and capability improvements, but with a critical eye. New models often trade off different characteristics. Faster inference might mean worse reasoning. Larger context windows might mean less precise attention. Understand the trade offs so you can choose the right model for each agent's role.

3. Software Engineering & System Design

Most agentic AI failures aren't AI failures. They're engineering failures. The model works fine. The system around it doesn't scale, doesn't handle errors gracefully, doesn't maintain state correctly, or falls apart under load. You can have the most sophisticated reasoning engine in the world, but if your architecture can't support it reliably, you've built nothing useful.

Core competencies include:

- Deep proficiency in Python and modern agentic frameworks like LangChain, LlamaIndex, and AutoGen.

- Solid command of microservices architecture and API design, because agents are essentially autonomous microservices that need to discover, authenticate with, and reliably call dozens of external services.

- Expertise in asynchronous programming and concurrent operations, because agents spawn parallel tasks, make simultaneous API calls, and coordinate results.

- Rigorous practice with version control, testing, and CI/CD pipelines. Agentic behavior is harder to test than deterministic code. You need reproducible environments, comprehensive logging, and automated testing that covers both happy paths and chaotic edge cases.

- Hands-on experience with cloud platforms and containerization. Agents consume unpredictable amounts of compute and memory. You need infrastructure that scales.

Build this capability through progressive complexity:

- Start by building a single agent system with proper error handling, logging, and observability. Don't move to multi agent architectures until you can reliably monitor and debug one agent's behavior.

- Then add complexity incrementally: introduce tool calling, then add memory persistence, then build agent to agent communication. At each stage, stress test the system. What happens when APIs are slow? When they return errors? When the agent makes 100 tool calls instead of 10?

- Finally, treat agent infrastructure like production infrastructure. Use the same standards for monitoring, alerting, deployment, and disaster recovery that you'd use for any critical system.
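The stress-test questions above (slow APIs, failing APIs, runaway tool calls) can be exercised with nothing but the standard library. The following sketch uses `asyncio`; the three tools `fast`, `slow`, and `broken` are hypothetical stand-ins, and the timeout and call budget are arbitrary example values.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List


async def call_with_timeout(name: str, tool: Callable[[], Awaitable[str]], timeout_s: float) -> dict:
    """Run one tool call and convert timeouts and errors into data the agent can reason about."""
    try:
        result = await asyncio.wait_for(tool(), timeout=timeout_s)
        return {"tool": name, "ok": True, "result": result}
    except asyncio.TimeoutError:
        return {"tool": name, "ok": False, "error": "timeout"}
    except Exception as exc:
        return {"tool": name, "ok": False, "error": repr(exc)}


async def run_tools(
    tools: Dict[str, Callable[[], Awaitable[str]]],
    timeout_s: float = 0.05,
    max_calls: int = 10,
) -> List[dict]:
    """Run tool calls concurrently, refusing batches that exceed the call budget."""
    if len(tools) > max_calls:
        raise RuntimeError(f"call budget exceeded: {len(tools)} > {max_calls}")
    calls = [call_with_timeout(name, tool, timeout_s) for name, tool in tools.items()]
    return await asyncio.gather(*calls)  # results keep the input order


# Hypothetical tools covering the three cases: healthy, slow, and failing.
async def fast() -> str:
    return "42 rows"


async def slow() -> str:
    await asyncio.sleep(1.0)
    return "never returned in time"


async def broken() -> str:
    raise ConnectionError("503")


results = asyncio.run(run_tools({"fast": fast, "slow": slow, "broken": broken}))
```

Each failure comes back as a structured record instead of an exception, so one slow dependency does not take down the whole batch and the outcome can be logged per tool.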

4. Planning & Reasoning Skills

Planning and reasoning are the engine of agentic AI. Without them, agents are just reactive tools. They respond, but they don’t think. Structured reasoning turns autonomy into reliability. According to Stanford’s Human-Centered AI Group, nearly 70 percent of multi-step tasks fail when planning mechanisms are missing, highlighting that reasoning is mission-critical.

Agents are now responsible for multi-step objectives that used to require human judgment. Tasks like “research competitors, summarize gaps, and draft a market entry strategy” demand foresight. Without explicit reasoning, agents may skip steps or jump to conclusions. With strong planning, agents break objectives into sub-goals—gather data, identify gaps, generate options—and execute each step methodically.

Core competencies include:

- Implementing chain-of-thought or tree-of-thought reasoning to simulate multiple possible paths before action.

- Leveraging planning libraries like LangGraph or CrewAI for explicit goal decomposition.

- Building self-reflection and error correction mechanisms, where the agent reviews its reasoning for errors before final output.

- Integrating symbolic reasoning with neural approaches, combining the pattern matching strengths of LLMs with the logical precision of rule based systems.

- Task decomposition and sub goal generation, breaking complex objectives into manageable pieces that can be executed, validated, and adjusted.

- Applying mitigation strategies such as checkpoints, summaries of prior steps, and human-in-the-loop reviews for high-stakes tasks.

Build this skill through deliberate practice with increasingly complex tasks:

- Start with problems that require three to five steps. Have the agent explicitly state its plan before execution. Compare the actual execution path to the stated plan. Where did it deviate? Was that deviation intelligent adaptation or reasoning drift?

- Then scale to problems requiring ten or more steps, where maintaining coherent strategy becomes genuinely difficult. Implement different planning frameworks and benchmark them against each other. Chain of thought, tree of thought, ReAct, reflection based planning. Understand which approaches work best for which problem types.

- Finally, study agent failures systematically. When reasoning breaks down, trace the exact step where the agent lost the thread. Was it a context length problem? A goal ambiguity problem? A lack of verification? Build your planning architecture to prevent that specific failure class.
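Comparing a stated plan with the executed path, as suggested in the first practice step, needs very little machinery. The sketch below assumes the plan and the execution trace are both available as lists of step names; the names come from the market-entry example earlier in this section, plus a hypothetical `web_search` step.

```python
from typing import Dict, List


def compare_plan_to_trace(plan: List[str], trace: List[str]) -> Dict[str, object]:
    """Report skipped steps, unplanned steps, and whether planned steps ran in order."""
    planned, executed = set(plan), set(trace)
    skipped = [s for s in plan if s not in executed]
    unplanned = [s for s in trace if s not in planned]
    # Planned steps in the order they actually ran (duplicates collapsed).
    ran = list(dict.fromkeys(s for s in trace if s in planned))
    expected = [s for s in plan if s in executed]
    return {"skipped": skipped, "unplanned": unplanned, "out_of_order": ran != expected}


plan = ["gather_data", "identify_gaps", "generate_options", "draft_strategy"]
trace = ["gather_data", "generate_options", "web_search", "draft_strategy"]
report = compare_plan_to_trace(plan, trace)
```

A report like this does not say whether a deviation was intelligent adaptation or reasoning drift. It only points at the steps a human should review.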

5. Understanding Reinforcement Learning

Reinforcement learning is the fundamental mechanism that enables systems to learn what works through experience rather than being told what to do through training data.

RL matters in agentic systems because real world environments are too complex to specify every correct action in advance. You can't write rules for every situation an autonomous agent will encounter. Instead, you define what success looks like and let the agent discover how to achieve it. A customer service agent learns which response strategies resolve issues fastest. A code optimization agent learns which refactoring patterns improve performance without breaking functionality. A resource allocation agent learns which scheduling decisions maximize throughput under varying load conditions.

The essential framework consists of four core elements that interact in continuous loops.

- States represent the agent's current situation and available information.

- Actions are the choices the agent can make from any given state.

- Rewards signal whether an action moved the agent closer to or further from its goal.

- The policy is the agent's learned strategy for choosing actions based on states.

The agent takes an action, observes the reward and new state, updates its policy, and repeats. Over thousands or millions of iterations, effective strategies emerge from this feedback loop.
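The loop described above maps directly onto tabular Q-learning. The sketch below uses a made-up five-cell corridor where the agent starts at cell 0 and is rewarded for reaching cell 4. The environment, the hyperparameters, and the seed are illustrative assumptions, not recommendations.

```python
import random
from typing import List, Tuple

N_STATES = 5      # corridor cells 0..4; cell 4 is the goal
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state: int, action: int) -> Tuple[int, float, bool]:
    """Environment: returns (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else min(N_STATES - 1, state + 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def choose_action(q: List[List[float]], state: int, epsilon: float, rng: random.Random) -> int:
    """Epsilon-greedy policy with random tie-breaking."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[state])
    return rng.choice([a for a in ACTIONS if q[state][a] == best])


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.2, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q-table: q[state][action]
    for _episode in range(episodes):
        state = 0
        for _t in range(50):  # cap episode length
            action = choose_action(q, state, epsilon, rng)
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward reward + discounted best next value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q: List[List[float]]) -> List[int]:
    """Best known action for each non-goal state."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)]


q_table = train()
policy = greedy_policy(q_table)
```

Nothing in the code tells the agent to move right. That preference emerges from the reward signal alone, which is also why a badly designed reward produces a badly behaved policy.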

The competencies you need:

- Familiarity with policy gradient methods, Q learning, and actor critic architectures, understanding when each approach fits different problem structures.

- Understanding states, actions, rewards, and policies, as well as designing reward structures that encourage desirable behavior while avoiding unintended shortcuts.

- Simulation environments and feedback loops, where agents can safely explore and learn before interacting with production systems.

- Familiarity with reinforcement learning frameworks such as RLlib, Stable Baselines3, or custom policy-gradient implementations.

Build this capability through progressively realistic environments:

- Start with simple grid world problems where you can visualize the entire state space and watch policies evolve. Implement basic Q learning and policy gradient algorithms from scratch, not using libraries. This builds intuition for how updates actually affect decision making.

- Then move to more complex environments with partial observability and delayed rewards, where optimal strategies aren't obvious. Observe how agents explore the space and converge on solutions.

- Finally, apply RL to agentic tasks in your domain, starting with low stakes decisions where bad policies have minimal consequences. Monitor the learning process closely. Which behaviors emerge quickly versus slowly? Where does the agent get stuck? How sensitive is learning to reward function design and hyperparameters?

6. Multi-Agent Collaboration & Orchestration

The single agent model is already obsolete for complex problems. The future isn't one superintelligent system doing everything. It's specialized agents coordinating like expert teams, each bringing distinct capabilities to collaborative problem solving.

This skill matters because most challenges are multi-dimensional. A financial advisory system, for example, is about combining portfolio modeling, risk assessment, and regulatory compliance. No single agent can excel at all three. When orchestrated properly, a team of agents delivers outcomes that are accurate, scalable, and resilient.

Key competencies include:

- Defining distinct roles for each agent, such as planner, researcher, or executor, and implementing communication protocols so agents share context seamlessly.

- Familiarity with coordination frameworks like CrewAI, AutoGen, or SwarmGPT, plus the ability to design conflict resolution mechanisms for when agents produce contradictory outputs.

- Understanding of multi-agent reinforcement learning (MARL), which is critical for systems where cooperation or competition drives performance.

Build this capability incrementally:

- Start with two agents handling clearly separated concerns. A researcher agent that gathers information and an analyst agent that processes it. Nail the handoff protocol. What information does the analyst need versus what the researcher thinks it needs? Then add a third agent and watch how coordination complexity grows non linearly.

- Implement different coordination patterns: hierarchical with a supervisor agent, peer to peer with consensus mechanisms, pipeline where agents form a processing chain. Benchmark which patterns work best for which problem types.

- Finally, stress test coordination under failure. What happens when one agent becomes unresponsive? When agents receive conflicting information? When the system needs to roll back a partially completed multi agent workflow?
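A two-agent handoff with a supervising function can be prototyped without any framework. In the sketch below the agents are plain functions and `Handoff` is a hypothetical contract between them; a real system would put model calls behind the same interfaces.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Handoff:
    """Hypothetical contract: what the analyst needs from the researcher."""
    topic: str
    findings: List[str]
    sources: List[str]


def researcher(topic: str) -> Handoff:
    return Handoff(topic, [f"{topic}: adoption is rising"], ["internal-survey"])


def failing_researcher(topic: str) -> Handoff:
    raise TimeoutError("researcher unresponsive")


def analyst(handoff: Handoff) -> str:
    if not handoff.sources:
        raise ValueError("analyst refuses findings without sources")
    return (f"{len(handoff.findings)} finding(s) on {handoff.topic}, "
            f"backed by {len(handoff.sources)} source(s)")


def supervise(topic: str,
              research: Callable[[str], Handoff],
              analyse: Callable[[Handoff], str],
              retries: int = 1) -> dict:
    """Hierarchical pattern: the supervisor retries, then escalates to a human."""
    last_error = None
    for attempt in range(retries + 1):
        try:
            handoff = research(topic)
            return {"status": "ok", "report": analyse(handoff), "attempts": attempt + 1}
        except Exception as exc:
            last_error = exc
    return {"status": "escalated", "reason": str(last_error), "attempts": retries + 1}


ok = supervise("agents", researcher, analyst)
failed = supervise("agents", failing_researcher, analyst)
```

Making the handoff a typed object forces the question the text raises: what the analyst needs versus what the researcher thinks it needs. Here the analyst rejects findings that arrive without sources.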

7. Proficiency in AI Development Frameworks

Proficiency in AI development frameworks is the practical backbone of agentic AI. Knowing theory is not enough—agents live in code, and frameworks are the engines that turn ideas into autonomous action. The right tools let engineers design, train, and deploy systems that are scalable, adaptable, and reliable in real-world environments.

The essential competencies center on knowing which tool fits which problem:

- TensorFlow excels at production deployment with mature serving infrastructure, monitoring integrations, and optimization for edge devices. If you're building agents that need to run efficiently on resource constrained hardware or require rock solid deployment pipelines, TensorFlow's ecosystem provides that stability.

- PyTorch dominates research and rapid prototyping with its intuitive dynamic computation graphs and debugging experience. For reinforcement learning workflows where you're iterating quickly on agent architectures and training loops, PyTorch's flexibility accelerates development.

- Keras simplifies neural network design with high level abstractions that let you build complex models in fewer lines of code, valuable when you need to experiment with different agent architectures quickly.

- JAX targets high performance computing with automatic differentiation and hardware acceleration, essential when training large scale agents or running massive multi agent simulations.

But framework knowledge extends beyond just the core libraries. You need proficiency with the broader ecosystem:

- LangChain and LlamaIndex for orchestrating LLM based agents and managing retrieval augmented generation.

- Hugging Face Transformers for accessing pretrained models and fine tuning them for specialized agent tasks.

- Ray for distributed computing when your agent training or deployment needs to scale across multiple machines.

- Weights & Biases or MLflow for experiment tracking, because agent development involves countless iterations and you need systematic ways to understand what worked and why.

Build proficiency through progressive challenges that force you to understand framework capabilities deeply:

- Start by implementing a simple agent entirely within one framework, using its built in components for model definition, training loops, and evaluation. This establishes baseline fluency. Then solve the same problem in a different framework, understanding how different design philosophies affect your implementation. PyTorch's imperative, eager style versus TensorFlow's graph compilation through `tf.function`, for instance.

- Next, build a system that requires integrating multiple frameworks, perhaps using PyTorch for agent training, LangChain for orchestration, and TensorFlow Serving for deployment. This teaches you how to bridge different ecosystems.

- Finally, extend a framework with custom functionality, implementing a specialized training loop or a novel architecture component. This builds confidence that you're not limited to what the framework provides out of the box.

8. Tool Use & API Orchestration

Text generation is table stakes. What separates functional agents from transformative ones is their ability to query databases, call APIs, execute code, trigger workflows, and interface with external systems.

This capability matters because almost no real world task exists purely in the language domain. A customer support agent that can only generate sympathetic responses is marginally useful. One that can query billing systems, apply credits, update account status, and generate confirmation emails transforms from advisor to problem solver.

Core competencies include:

- Starting with single-tool integrations, such as database queries or basic API calls, and progressing to multi-tool orchestration using frameworks like LangChain, AutoGen, or CrewAI.

- Robust error handling and fallback mechanisms, ensuring agents continue operating smoothly when a tool fails.

Build this skill through increasing integration complexity:

- Start with a read only tool that just fetches data, like a weather API or database query. Get error handling solid. Understand rate limits and latency characteristics. Then add a tool that modifies state, like updating a record or sending an email. Now you need transaction semantics and rollback capabilities for when things go wrong mid workflow.

- Next, combine multiple tools in a workflow where the agent must coordinate their use intelligently. Which order makes sense? How should results from one tool inform the next?

- Finally, give the agent tool choice, where it selects from a toolkit based on the situation. Observe how it learns which tools are most effective for different tasks.
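Once tools modify state, a workflow needs a way to undo partial work. One common approach is to pair every step with a compensating action and run those in reverse on failure. The sketch below assumes that pattern; the support-ticket steps are hypothetical and the "systems" are just a dictionary.

```python
from typing import Callable, List, Tuple

# A step is (name, do, undo); both callables mutate the shared state dict.
Step = Tuple[str, Callable[[dict], None], Callable[[dict], None]]


def run_workflow(state: dict, steps: List[Step]) -> dict:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    completed: List[Step] = []
    for name, do, undo in steps:
        try:
            do(state)
        except Exception as exc:
            for _name, _do, undo_done in reversed(completed):
                undo_done(state)
            return {"status": "rolled_back", "failed_step": name, "error": str(exc)}
        completed.append((name, do, undo))
    return {"status": "committed"}


# Hypothetical tools for a support workflow.
def apply_credit(state: dict) -> None:
    state["credit"] += 20


def revoke_credit(state: dict) -> None:
    state["credit"] -= 20


def mark_resolved(state: dict) -> None:
    state["status"] = "resolved"


def reopen(state: dict) -> None:
    state["status"] = "open"


def send_email(state: dict) -> None:
    raise ConnectionError("mail server down")


def nothing_to_undo(state: dict) -> None:
    pass


account = {"credit": 0, "status": "open"}
outcome = run_workflow(account, [
    ("apply_credit", apply_credit, revoke_credit),
    ("mark_resolved", mark_resolved, reopen),
    ("send_email", send_email, nothing_to_undo),
])
```

After the failed email step the account is back to its original values. Compensating actions are not true transactions, since some real-world effects (a sent email, for example) cannot be undone, so those steps belong at the end of the workflow.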

9. Ethical AI Practices & Safety

Agentic systems operate in contexts with real consequences. An agent making hiring recommendations affects people's livelihoods. One handling medical triage affects health outcomes. One managing financial transactions affects economic security. Traditional software fails predictably. Agentic AI can fail in ways that are biased, opaque, and difficult to detect until harm has occurred. You can't debug fairness issues after deployment the way you patch security vulnerabilities. You need to build ethical operation into the architecture from the start.

Core competencies include:

- Prompt injection prevention, output validation, bias detection and mitigation, privacy-preserving techniques, and establishing ethical frameworks for autonomous decision-making.

- Traceability and explainable AI to ensure that every agent action is auditable and accountable, especially in critical sectors like healthcare, finance, and public services.

- Fluency with regulatory frameworks like GDPR, the EU AI Act, and emerging AI regulations that establish legal requirements for how autonomous systems must operate.

Build this capability through adversarial thinking and cross functional collaboration:

- Start by deliberately trying to bias your agents. What training data creates discriminatory patterns? What prompts cause harmful outputs? What tool combinations enable privacy violations? Understanding attack vectors helps you build effective defenses.

- Then work with domain experts, legal counsel, and ethicists to understand what responsible operation looks like in your specific context. Healthcare AI has different ethical requirements than marketing AI. Financial services have different regulatory constraints than entertainment. Generic ethics principles aren't sufficient.

- Finally, implement ethics as code, turning principles into executable policies that agents must satisfy. Make fairness constraints part of the objective function. Make transparency part of the architecture. Make accountability part of the deployment process.
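"Ethics as code" can start as a set of small policy functions that every proposed action must pass before it executes. The sketch below is one possible shape, not an established standard: the `ProposedAction` fields, the three policies, and the review threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class ProposedAction:
    """What the agent wants to do, described before it does it."""
    kind: str
    amount: float = 0.0
    uses_protected_attribute: bool = False
    explanation: str = ""


# A policy returns a violation message, or None if the action passes.
Policy = Callable[[ProposedAction], Optional[str]]

HUMAN_REVIEW_THRESHOLD = 1000.0  # hypothetical limit for autonomous decisions


def no_protected_attributes(action: ProposedAction) -> Optional[str]:
    if action.uses_protected_attribute:
        return "decision uses a protected attribute"
    return None


def must_be_explainable(action: ProposedAction) -> Optional[str]:
    if not action.explanation.strip():
        return "no explanation recorded for audit"
    return None


def large_amounts_need_review(action: ProposedAction) -> Optional[str]:
    if action.amount > HUMAN_REVIEW_THRESHOLD:
        return "amount exceeds the human-review threshold"
    return None


POLICIES: List[Policy] = [no_protected_attributes, must_be_explainable, large_amounts_need_review]


def evaluate(action: ProposedAction, policies: List[Policy] = POLICIES) -> dict:
    """Collect every violation so the audit log shows all reasons, not just the first."""
    violations = [msg for msg in (policy(action) for policy in policies) if msg is not None]
    return {"allowed": not violations, "violations": violations}


approved = evaluate(ProposedAction("refund", 50.0, False, "duplicate charge"))
blocked = evaluate(ProposedAction("refund", 5000.0, True, ""))
```

Because the policies are ordinary functions, they can be unit tested, versioned, and reviewed by legal or domain experts like any other code.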


Professional Development Pathways: Your 2026 Roadmap

The agentic AI landscape is rich with resources. From reading foundational papers to experimenting with frameworks, the tools to learn are at your fingertips. Start small, iterate often, and focus on understanding agent behavior and interaction patterns rather than just functionality. For a more structured route, an agentic AI course can help you build practical skills in orchestration, tool use, and safe deployment.

Formal foundations

Take structured courses on agentic system design, multi agent orchestration, and advanced AI workflows. Not marketing webinars. Technical deep dives that cover architecture patterns, failure modes, and production considerations. Specialize in leading frameworks like LangChain, LlamaIndex, CrewAI, and AutoGen. Understand not just how to use them but how they're architected internally and where their limitations create constraints.

But don't stop at courses. Read the foundational research. Auto-GPT's technical report explaining early agentic architectures. Anthropic's Constitutional AI framework for embedding ethical constraints. Microsoft's research on emergent behaviors in LLM based agents.

Recommended communities

Active communities provide advice, collaboration, and early access to innovations:

- LangChain Discord: Implementation tips and feature exploration.

- AI Engineer Forum: Applied AI across domains.

- Reddit r/LocalLLaMA: Experiments with local models and agentic setups.

- OpenAI Developer Forum: Discussions on LLM-based system design.

Practical starter projects

- Start with a single agent workflow. Give it memory using LlamaIndex. Make it retrieve relevant context across sessions. Observe how memory design affects agent behavior. Then break it deliberately. Overwhelm the memory system. Give it contradictory information. Watch how it degrades and build resilience.

- Progress to multi agent coordination. Create a system where one agent researches topics and another synthesizes findings. Implement the handoff protocol. Debug the coordination failures. Add a third agent and watch the complexity compound. You'll learn more from these failures than any tutorial.

- Build tool driven agents that choose from multiple APIs based on context. Implement reflection loops where agents evaluate and improve their own outputs iteratively.

Each project should push slightly beyond your current capability, forcing you to solve novel problems rather than following templates.
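A reflection loop is a small control structure: draft, critique, revise, and stop when the critique comes back clean or a round limit is hit. In the sketch below the `critique` and `revise` functions are deliberately simple, hypothetical stand-ins for model calls.

```python
from typing import Callable, List, Tuple


def critique(text: str) -> List[str]:
    """Hypothetical critic: flags a missing source list and leftover TODO markers."""
    issues = []
    if "Sources:" not in text:
        issues.append("missing sources")
    if "TODO" in text:
        issues.append("unfinished TODO")
    return issues


def revise(text: str, issues: List[str]) -> str:
    """Hypothetical reviser: applies one fix per reported issue."""
    if "unfinished TODO" in issues:
        text = text.replace("TODO", "").strip()
    if "missing sources" in issues:
        text += "\nSources: internal survey"
    return text


def reflect_and_revise(draft: str,
                       critic: Callable[[str], List[str]],
                       reviser: Callable[[str, List[str]], str],
                       max_rounds: int = 3) -> Tuple[str, List[str]]:
    """Revise until the critic finds no issues or the round budget is spent."""
    history = [draft]
    for _ in range(max_rounds):
        issues = critic(draft)
        if not issues:
            break
        draft = reviser(draft, issues)
        history.append(draft)
    return draft, history


final, versions = reflect_and_revise("Agents are in production. TODO", critique, revise)
```

Keeping every intermediate version in `versions` makes it possible to check afterwards whether each round actually improved the output or just changed it.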

Here is a curated path to move from theory to production:

| Level | Project Goal | Frameworks to Use |
| --- | --- | --- |
| Beginner | Self-Correcting Researcher: An agent that researches a topic, drafts a report, and then "critiques" its own work to improve accuracy. | LangChain, GPT-4o, Tavily |
| Intermediate | The "Shadow Developer": A system that monitors your Jira board, creates a branch for a bug, and suggests a fix with a passing unit test. | CrewAI, GitHub API, Cursor |
| Advanced | Multi-Agent SRE Swarm: A group of agents that detect production anomalies, analyze logs, and negotiate a self-healing patch. | LangGraph, MCP, Kubernetes |

The Final Words

The rise of agentic AI is here, reshaping software engineering at a pace few predicted. Engineers are no longer just coders; they are orchestrators, architects, and curators of autonomous systems that think, plan, and act.

Mastering agentic AI requires more than technical skill. It demands a new mindset: thinking in goals rather than steps, designing for emergence, embracing probabilistic outcomes, and balancing autonomy with human oversight. The skills we’ve outlined, from prompt engineering and reasoning frameworks to multi-agent collaboration, tool orchestration, and ethical practice, are the foundation of staying relevant, influential, and effective in the years ahead.

Ready to work at the forefront of agentic AI? Index.dev connects senior engineers with companies building the next generation of autonomous systems. Get matched with roles where you'll architect multi-agent workflows, design tool orchestration frameworks, and solve problems that didn't exist six months ago. Find your next role →

Building agentic systems demands engineers with next-level skills. Our talent pool includes engineers proficient in LangChain, multi-agent architectures, and production AI systems. Get access to pre-vetted developers who know how to build reliable, scalable agentic systems that deliver business value. Hire Agentic AI engineers →
