2025 wasn’t the year AI became “sentient.” It was the year AI became infrastructure.

The chat window is dead. The parlor tricks are over.

If you are a CTO or a Lead Dev looking at the 2025 release logs, you noticed a pattern: Big Tech stopped trying to impress you with poetry and started trying to save you money on compute. They shifted from "magic" to reliability, routing, and agents that finish the job.

Here is a complete breakdown of the top 5 Big Tech AI features of 2025—from Google, OpenAI, Microsoft, Meta, and Apple—that you can ship to production today.

We aren’t speculating on "coming soon." This is what’s live, documented, and ready to break your legacy code.

Looking to implement the newest AI tools? Index.dev connects you with developers ready to deploy Big Tech AI features in your enterprise today.

The 2025 "Cheat Sheet" for Busy CTOs

If you only have 30 seconds between meetings, here is the state of play.

| Company | The 2025 Feature | Why it matters to your business |
| --- | --- | --- |
| Google | Gemini 3 (Pro/Flash) | Automatic reasoning without a manual toggle. Fast and smart. |
| OpenAI | GPT‑5 System (Router) | Cost control disguised as a feature: intelligent routing. |
| Microsoft | GitHub Copilot Agent Mode | From "autocomplete" to "autofinish." |
| Meta | Llama 4 (Scout/Maverick) | Open-weight control for when you can't leak data. |
| Apple | Foundation Models Framework | Privacy-first AI that runs offline, with free inference. |

1. Google Gemini 3: The "Deep Think" Era is Fully Here

Google finally admitted something we all knew: sometimes you want the model to shut up and think.

The Feature

Gemini 3 (Pro & Flash)

Google skipped the half-measures. Launched in November 2025, Gemini 3 isn't just a model update; it's a reasoning engine built to rival—and often beat—OpenAI’s "Thinking" models.

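Reasoning is built into the core model. It is no longer an experimental add-on, which is what "Deep Think" was in 2.5. You don't toggle a mode: the model decides when to pause and reason. Google still offers a heavier Gemini 3 Deep Think mode for the hardest problems, but you don't need it for day-to-day work.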

Why You Should Care

It flows like a chat but thinks like an engineer.

With Gemini 2.5, "Deep Think" was a separate mode you had to invoke. With Gemini 3, Google merged the "fast" and "slow" lanes, and the model scales its reasoning to the task. A quick fix gets a quick answer. Something like optimizing a 300-line SQL query gets extra inference time to "think" before the model writes code. In the API, the thinking_level parameter lets you cap how deep it goes.
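If you call Gemini 3 from your own code, it pays to decide per task how much reasoning you are willing to buy. Below is a minimal sketch of that kind of policy. It assumes a two-level "low"/"high" setting. The keyword lists and the 200-line threshold are illustrative assumptions, not anything Google publishes. Check Google's Gemini API docs for the exact parameter your SDK version exposes.

```python
import re

# Illustrative keyword lists (assumptions, not anything Google publishes).
LIGHT_SIGNALS = ("css", "typo", "rename", "format", "lint")
HEAVY_SIGNALS = ("architecture", "optimize", "migration", "race condition", "security review")


def _mentions(text: str, words: tuple[str, ...]) -> bool:
    """True if any keyword appears as a whole word or phrase in the text."""
    return any(re.search(rf"\b{re.escape(w)}\b", text) for w in words)


def pick_thinking_level(task: str, code_lines: int = 0, long_code_threshold: int = 200) -> str:
    """Return "high" for tasks that look like they need deep reasoning, else "low"."""
    text = task.lower()
    if _mentions(text, LIGHT_SIGNALS):
        return "low"  # cosmetic work never earns deep reasoning, however long the file
    if code_lines >= long_code_threshold or _mentions(text, HEAVY_SIGNALS):
        return "high"  # long or structurally risky work gets the extra inference time
    return "low"
```

For example, `pick_thinking_level("Tweak the CSS on the pricing page")` returns `"low"`, and `pick_thinking_level("Optimize this SQL query", code_lines=300)` returns `"high"`. Light signals are checked first on purpose, so a cosmetic change never escalates to an expensive call.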

Key Stat

Google is positioning Gemini 3 Flash as its fast, low-latency reasoning model. That makes it a good fit for agents that need to be smart and fast.

The Catch

It costs more (obviously). And early community feedback suggests it can be verbose. But for high-stakes decision-making, it beats flipping a coin.

Pro Tip: Don't let your juniors use Deep Think for CSS tweaks. That burns the budget. Use it for architecture reviews. If you need engineers who know the difference, hire top 5% AI developers here.

Discover the differences between Gemini and Claude for coding efficiency.

2. OpenAI GPT‑5: It’s Not a Model, It’s a Router

OpenAI stopped selling a "god model." They started selling a traffic controller.

Allow us to elaborate:

The Feature

The GPT‑5 System (Router + Safe-Completions)

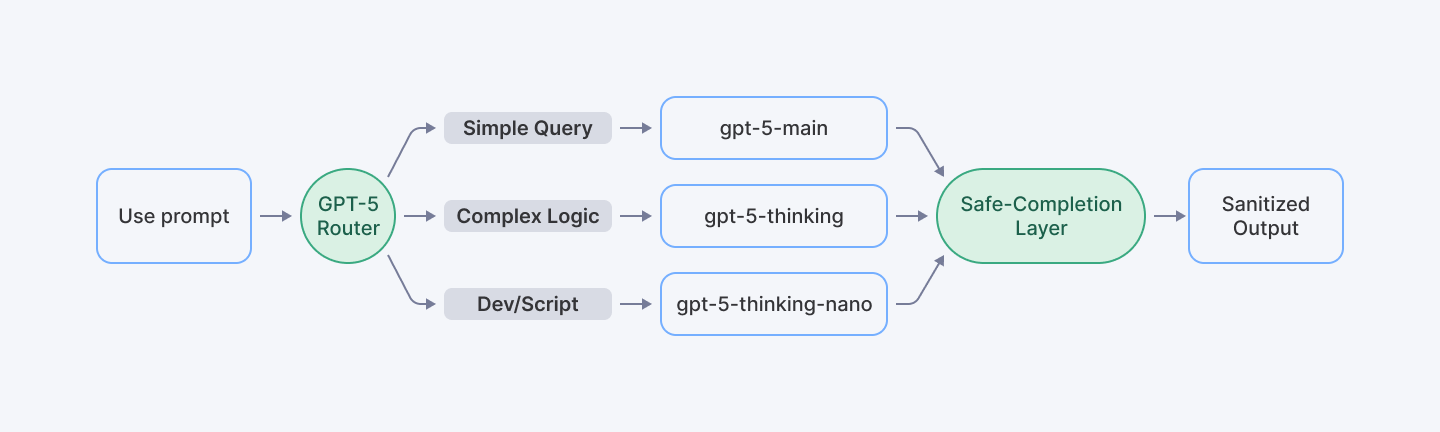

In 2025, OpenAI moved away from "one model to rule them all." GPT‑5 is a system. In ChatGPT, a real-time router classifies each request and sends it to the right model.

- Easy prompt? You get gpt-5-main (fast, cheap).

- Hard prompt? You get gpt-5-thinking (deep reasoning).

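- Building on the API? You get direct access to the thinking model, including smaller mini and nano versions. The fastest, gpt-5-thinking-nano, is aimed at developers.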
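ChatGPT's router belongs to OpenAI, and its internals aren't public. If you want similar economics in your own API calls, a client-side version can be simple. In this minimal sketch, a cheap heuristic scores the prompt and the result picks a tier. The keyword patterns and the 4,000-character cutoff are assumptions. The model IDs and effort values reflect the GPT-5 API sizes at launch, so verify current names in OpenAI's docs before relying on them.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reasoning_effort: str


# Assumed tiers; confirm current model IDs and effort values in OpenAI's API docs.
FAST = Route(model="gpt-5-mini", reasoning_effort="minimal")
DEEP = Route(model="gpt-5", reasoning_effort="high")

# Illustrative "this looks hard" signals, not OpenAI's routing criteria.
HARD_PATTERNS = [r"\bprove\b", r"\bdebug\b", r"\brefactor\b", r"\bstep by step\b", r"\btrade-?offs?\b"]


def route(prompt: str, long_prompt_chars: int = 4000) -> Route:
    """Very rough difficulty heuristic: long prompts or 'hard' keywords go to the deep tier."""
    if len(prompt) > long_prompt_chars:
        return DEEP
    text = prompt.lower()
    if any(re.search(pattern, text) for pattern in HARD_PATTERNS):
        return DEEP
    return FAST
```

`route("What is the capital of France?")` lands on the fast tier. `route("Debug this deadlock in our worker pool")` goes deep. In production you would likely replace the keyword heuristic with a small classifier trained on your own traffic. The interface (prompt in, route out) stays the same either way.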

They also introduced safe-completions. Instead of refusing outright ("I'm sorry, as an AI language model..."), the model is trained to give the most helpful answer it can within safety limits. It steers the output toward safe, constructive ground without breaking the flow.

Why You Should Care

Reliability.

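You don't want to hand-pick a model for every request. You want easy traffic handled cheaply and compute spent only on the hard stuff. In ChatGPT, the router does this for you. In the API, you still choose the model size and reasoning effort yourself, but the same principle applies.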
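The savings come down to simple arithmetic. Suppose a share p of N requests can go to the cheap tier. Blended spend is then N · (p · c_cheap + (1 - p) · c_deep). Here is a minimal sketch with placeholder per-request costs (not OpenAI's actual prices):

```python
def blended_cost(requests: int, cheap_share: float, cheap_cost: float, deep_cost: float) -> float:
    """Total spend when `cheap_share` of requests go to the cheap tier and the rest to the deep tier."""
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return requests * (cheap_share * cheap_cost + (1 - cheap_share) * deep_cost)


# Placeholder per-request costs in dollars; substitute numbers measured on your own traffic.
all_deep = blended_cost(1_000_000, 0.0, cheap_cost=0.001, deep_cost=0.02)
routed = blended_cost(1_000_000, 0.8, cheap_cost=0.001, deep_cost=0.02)
print(f"all deep: ${all_deep:,.0f}  routed: ${routed:,.0f}")  # all deep: $20,000  routed: $4,800
```

Under these made-up numbers, routing 80% of traffic to the cheap tier cuts spend by roughly 76%. Your real ratio depends on how much of your traffic is genuinely easy.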

The Community Vibe

Reddit is... divided. Some users miss the "personality" of older models. But for enterprise applications, "personality" is a liability. We want predictable outputs.

Explore how GPT‑5 is transforming developer workflows in our latest deep dive.

3. Microsoft GitHub Copilot: The "Intern" Got a Promotion

Remember when Copilot was just fancy autocomplete? It grew up.

The Feature

Copilot Agent Mode + MCP Support

This is the killer feature of 2025 for dev teams. Agent Mode allows Copilot to perform multi-step tasks. It doesn't just suggest a line of code; it can:

- Analyze your entire repo.

- Propose a plan.

- Edit multiple files.

- Run terminal commands to verify the build.

- Fix its own errors.
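Copilot's internals aren't public. Still, the plan, edit, verify, fix cycle above is a common agent pattern, and the verify-and-retry core is easy to sketch. This minimal sketch assumes a hypothetical `propose_fix` callable standing in for the model. It also assumes a `check` callable that runs whatever build or test command you choose. The loop verifies, feeds failures back, and stops on success or after a retry cap.

```python
import subprocess
from typing import Callable, Sequence


def run_check(cmd: Sequence[str]) -> tuple[bool, str]:
    """Run a verification command (build, tests, linter) and return (passed, combined output)."""
    result = subprocess.run(cmd, capture_output=True, text=True)
    return result.returncode == 0, result.stdout + result.stderr


def agent_loop(
    check: Callable[[], tuple[bool, str]],
    propose_fix: Callable[[str], None],
    max_fixes: int = 3,
) -> bool:
    """Verify; on failure pass the error log to the fixer and re-verify, up to max_fixes times."""
    passed, output = check()
    fixes = 0
    while not passed and fixes < max_fixes:
        propose_fix(output)  # hypothetical: the model edits files based on the failure log
        fixes += 1
        passed, output = check()
    return passed
```

In practice, `check` might be `lambda: run_check(["npm", "test"])`, and `propose_fix` is where the model call goes. The retry cap matters, because an unbounded fix loop is a fast way to burn tokens. A `True` result still means "ready for human review," not "ready to merge."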

They also added MCP (Model Context Protocol) support, which lets the agent call external tools. The agent still lives in your IDE, but it is no longer limited to what's in it: it can talk to your other services.
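Under the hood, MCP messages are JSON-RPC 2.0. A client discovers a server's tools with `tools/list` and invokes one with `tools/call`, passing the tool's `name` and `arguments`. Here is a minimal sketch of the request an agent would send. The `create_ticket` tool and its arguments are hypothetical. A real client also performs MCP's initialize handshake and sends messages over a transport such as stdio or HTTP, so this shows only the message shape.

```python
import json
from itertools import count

_request_ids = count(1)


def tools_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),  # unique id so the response can be matched to this request
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by an internal MCP server.
print(tools_call_request("create_ticket", {"title": "Flaky test in billing", "priority": "high"}))
```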

The "Trust Me" Stats

Microsoft’s FY25 Q4 earnings call dropped some heavy numbers to back this up:

- 20 million users.

- 90% of the Fortune 100 use it.

- The code review agent is doing millions of reviews monthly.

Why You Should Care

Velocity.

If your senior devs are spending 4 hours a day writing boilerplate or fixing linting errors, you are lighting money on fire. Agent Mode handles the grunt work.

Reality Check: Agents are force multipliers. You still need senior engineers to review the agent's work. Find senior remote developers who know how to manage AI agents.

Check out the top 5 alternatives to GitHub Copilot that developers are using today.

4. Meta Llama 4: Open Source is Still Eating the World

Mark Zuckerberg is still the most dangerous man in AI because he keeps giving away the secret sauce for free.

The Feature

Llama 4 "Herd" (Scout & Maverick)

Meta’s 2025 drop includes Scout and Maverick. They are Meta's first open-weight models that are natively multimodal, with text and images fused into a single model backbone from the start of training. They are also built on a Mixture-of-Experts (MoE) architecture.
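Mixture-of-Experts means a learned router sends each token to only a few expert sub-networks. Compute per token stays small while total parameters grow. Below is a toy, pure-Python sketch of generic top-k gating. It is not Meta's implementation. Each expert is a simple function standing in for a feed-forward block, and each gate is a plain weight vector.

```python
import math
from typing import Callable, Sequence

Vector = list[float]


def softmax(xs: Sequence[float]) -> list[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: Vector,
    gate_weights: Sequence[Vector],  # one gating vector per expert
    experts: Sequence[Callable[[Vector], Vector]],
    top_k: int = 1,
) -> Vector:
    """Route a token to its top-k experts and mix their outputs by renormalized gate scores."""
    scores = [sum(t * w for t, w in zip(token, gate)) for gate in gate_weights]
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    weights = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for weight, i in zip(weights, chosen):
        y = experts[i](token)  # only the chosen experts run; the others cost nothing
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out
```

With `top_k=1`, each token pays for exactly one expert no matter how many exist. That is why an MoE model's active parameter count can be a small fraction of its total.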

The standout spec? Llama 4 Scout boasts context lengths up to 10 million tokens.

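Yes, you read that right. In principle, you could dump your entire company wiki, three years of Slack logs, and the Declaration of Independence into a single prompt. In practice, how much of that window you can use depends on your hardware and serving stack, because very long contexts consume memory fast.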
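Before dumping a corpus into the prompt, run a back-of-the-envelope check. This minimal sketch assumes the common rule of thumb of roughly four characters per token for English text. Real counts depend on the tokenizer, so use the model's own tokenizer for anything that matters. You pass in the window your deployment actually serves, which may be well below the 10M maximum. The corpus sizes are hypothetical.

```python
import math


def estimate_tokens(char_count: int, chars_per_token: float = 4.0) -> int:
    """Rough token count from characters; ~4 chars/token is an English-text heuristic, not a tokenizer."""
    return math.ceil(char_count / chars_per_token)


def fits_in_context(
    doc_char_counts: list[int], context_window: int, reserve_for_output: int = 8_000
) -> tuple[bool, int]:
    """Return (fits, estimated_prompt_tokens), leaving room for the model's answer."""
    prompt_tokens = sum(estimate_tokens(n) for n in doc_char_counts)
    return prompt_tokens + reserve_for_output <= context_window, prompt_tokens


# Hypothetical corpus sizes in characters: company wiki, Slack export, one short document.
corpus = [25_000_000, 12_000_000, 40_000]
print(fits_in_context(corpus, context_window=10_000_000))  # (True, 9260000)
print(fits_in_context(corpus, context_window=1_000_000))   # (False, 9260000)
```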

Why You Should Care

Control.

If you are in Fintech, Healthtech, or just paranoid about data privacy (which you should be), you cannot send everything to OpenAI APIs. Llama 4 lets you own the stack. You host it, you tune it, you control the data.
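In practice, "own the stack" often becomes a routing rule. Anything that looks sensitive stays on your self-hosted Llama deployment, and only clean traffic may go to a hosted API. Here is a minimal sketch. The regex patterns are deliberately simplistic examples, and real PII detection needs far more than this. Both endpoint URLs are hypothetical.

```python
import re

# Hypothetical endpoints: a self-hosted Llama 4 server inside your network, and an external API.
SELF_HOSTED = "http://llama.internal:8000/v1"
HOSTED = "https://api.example.com/v1"

# Simplistic illustrative detectors; not a substitute for a real PII/DLP scanner.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def choose_endpoint(text: str) -> tuple[str, list[str]]:
    """Return the endpoint to use and which sensitive patterns (if any) triggered the decision."""
    hits = [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]
    return (SELF_HOSTED if hits else HOSTED), hits
```

Any hit sends the request to the self-hosted model, so the rule errs on the side of privacy. A false positive costs you some hosted-model quality. A false negative leaks data. Returning the matched pattern names also gives you an audit trail.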

Verify the specs in the Meta Llama 4 announcement.

5. Apple Foundation Models: The Privacy Sniper

While everyone else was fighting over "biggest parameters," Apple quietly solved the biggest friction point in consumer AI: latency and privacy.

The Feature

Foundation Models Framework (On-Device + Offline)

With iOS 26 and macOS Tahoe 26 (released in September 2025), Apple opened the Foundation Models framework to developers. It lets you tap into the on-device LLM that powers Apple Intelligence, on any device that supports Apple Intelligence.

The Killer Specs

- Offline capable: It works on a plane. It works in a tunnel.

- Free inference: You rely on the user's silicon, not your cloud budget.

- Swift Native: Integrated directly via @Generable and @Guide macros for structured outputs.
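Guided generation is what sets this framework apart for developers. You declare a Swift type with @Generable and describe its properties with @Guide. The framework then constrains the model's output to that type, so you get a typed value back instead of text to parse. The framework itself is Swift-only. To keep this article's examples in one language, here is a language-neutral Python sketch of the idea; it is not Apple's API. The `guide` helper and the `TripSummary` type are hypothetical. Apple's framework enforces the structure during generation, while this sketch can only derive the schema and validate output afterwards.

```python
import json
from dataclasses import dataclass, field, fields


def guide(description: str, **constraints):
    """Attach natural-language guidance and optional bounds to a field (hypothetical helper)."""
    return field(metadata={"description": description, **constraints})


@dataclass
class TripSummary:  # hypothetical output type
    title: str = guide("A short, catchy title for the trip")
    days: int = guide("Trip length in days", minimum=1, maximum=14)


_JSON_TYPES = {str: "string", int: "integer"}


def schema_for(cls) -> dict:
    """Derive the JSON-Schema-style description a constrained generator would have to follow."""
    return {
        "type": "object",
        "properties": {f.name: {"type": _JSON_TYPES[f.type], **f.metadata} for f in fields(cls)},
        "required": [f.name for f in fields(cls)],
    }


def parse(cls, raw: str):
    """Validate model output against the declared types and bounds, then build the instance."""
    data = json.loads(raw)
    for f in fields(cls):
        value = data[f.name]  # raises KeyError if a required field is missing
        if not isinstance(value, f.type):
            raise TypeError(f"{f.name}: expected {f.type.__name__}, got {type(value).__name__}")
        low, high = f.metadata.get("minimum"), f.metadata.get("maximum")
        if (low is not None and value < low) or (high is not None and value > high):
            raise ValueError(f"{f.name}={value} is outside [{low}, {high}]")
    return cls(**{f.name: data[f.name] for f in fields(cls)})
```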

Why You Should Care

User Experience.

Cloud AI adds a network round-trip to every request. The "spinner of death" kills retention.

Apple’s approach lets you build features like "smart journaling," "local summarization," or "context-aware search" that feel instant because they happen locally.

See the developer docs on Apple’s Foundation Models.

The Bottom Line

The 2025 AI market isn't about who has the "smartest" bot. We passed that threshold years ago. It is about specialization.

- Need deep reasoning? Google Gemini 3.

- Need a safe enterprise system? OpenAI GPT‑5.

- Need to automate coding grunt work? GitHub Copilot Agent Mode.

- Need total data control? Meta Llama 4.

- Need privacy and zero cloud costs? Apple On-Device.

The tools are there. The bottleneck now is talent.

You don't need "prompt engineers." You need full-stack developers who understand how to weave these APIs into a product that doesn't hallucinate 20% of the time.

Stay ahead with Big Tech AI features—hire vetted developers from Index.dev who can implement these cutting-edge tools in your enterprise today.

➡︎ Want to go deeper into where AI is really headed? Explore more Index.dev insights on AI literacy and what it means in 2026, how AI is reshaping application and cloud development, and which industries are closest to a real AI tipping point. You can also dig into practical perspectives on why forward-deployed engineers matter, plus hands-on model comparisons that break down DeepSeek versus ChatGPT, how it stacks up against Claude, and which open-source Chinese LLMs are gaining serious traction.