Most executives will tell you AI ethics matters. But only 20% of organizations actually practice what they preach when it comes to ethical AI. That means 80% are operating in a danger zone.

The same systems that help detect cancer earlier can also deny care to entire groups. The same models that boost efficiency can expose sensitive data, reinforce bias, or automate decisions no one can fully explain or reverse.

To move forward intelligently, leaders must confront AI’s dangers head-on. What follows is a hard look at the real dangers of AI and concrete strategies to manage them. Some risks you can mitigate. Others you'll need to monitor closely. A few might require you to say no, even when the business case looks compelling.

We’ll start with the most common and arguably the most underestimated.


1. Bias: When AI Learns Our Worst Habits

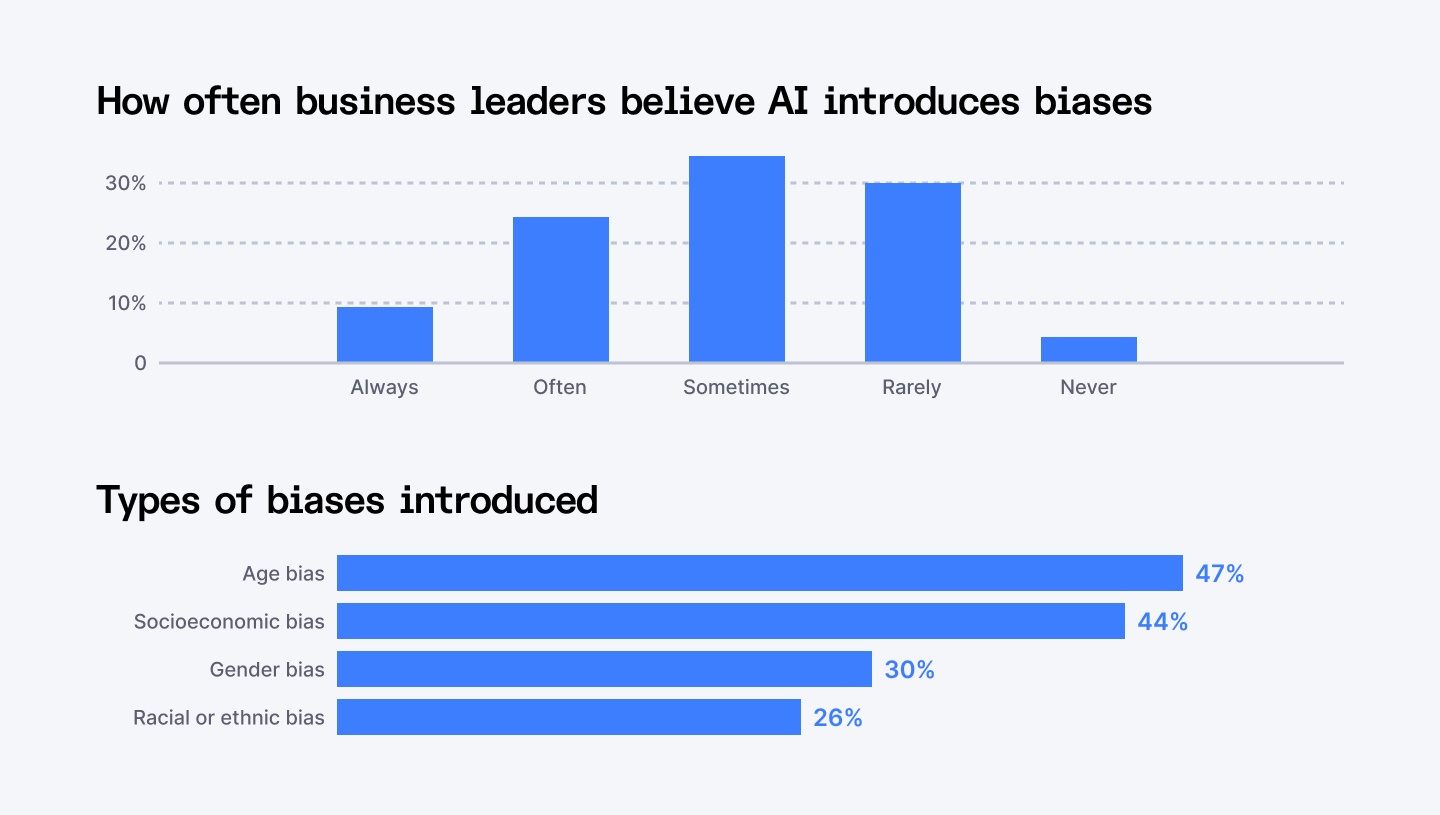

Humans carry biases. We know this. What we're only beginning to grasp is how AI doesn't just mirror these biases, it amplifies them, accelerates them, and wraps them in the false objectivity of mathematical precision. When you feed historical data into a machine learning model, you're teaching it patterns. And if those patterns encode decades of discrimination, your AI will learn to discriminate with ruthless efficiency.

And here's the multiplier effect: when AI systems make millions of decisions at scale, individual biases become systemic oppression. One biased hiring decision is wrong. A million biased hiring decisions reshape entire industries and lock people out of economic opportunity.

We have already seen what this looks like in practice. Recruiting systems that downgrade qualified candidates based on gender-coded signals. Medical diagnostic tools that are less accurate for historically underserved populations. Risk assessment and predictive policing systems that disproportionately target marginalized communities because the data feeding them reflects decades of uneven enforcement.

When you train AI on an unfair world, you get unfair AI.

What you need to do

- Before you build a single model, establish an AI governance framework. Real frameworks with clear policies, defined processes, and consequences for violations. Every stage from data sourcing to deployment should have guardrails, checkpoints, and someone accountable when things go wrong.

- Document everything about your training data. Where did it come from? Who's represented? Who's missing? What time period does it cover, and what biases might that period reflect? You need transparency about data origin, structure, and limitations.

- Ensure training datasets actually reflect the populations your AI will serve. If you're building healthcare diagnostics, your training data needs to include diverse patient populations. If you're creating hiring tools, your data needs to represent who you want to hire, not just who you hired when bias was unchecked.

- Define fairness, then measure it. What does fairness mean for your specific application? Equal accuracy across demographic groups? Equal opportunity? Equal outcomes? These aren't the same thing, and you need to choose deliberately.

- Audit continuously, not once. Bias mitigation is an ongoing discipline across the entire AI lifecycle. Choose the right learning model for your use case. Process data with bias in mind. Monitor real-world performance constantly. Models drift. Contexts change. Populations shift. Build systems that flag degradation and alert you before harm scales.

- Bring business, compliance, and data teams together. Siloed decision-making creates blind spots. Establish clear ownership and review processes that require collaboration. Business teams understand impact. Compliance teams understand regulation. Data teams understand technical constraints.

- Track how fairness is being assessed, flagged, and improved over time. When regulators come asking, or when something goes wrong, you need evidence that you tried. More importantly, documentation helps you learn.
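To make the "define fairness, then measure it" step concrete, here is a minimal sketch of two common definitions: demographic parity (equal selection rates) and equal opportunity (equal true positive rates). The groups, records, and function names are hypothetical, and a real audit would need far more data and statistical care.

```python
from collections import defaultdict

def group_rates(records):
    """Per-group selection rate and true positive rate.

    records: iterable of (group, y_true, y_pred) with 0/1 labels.
    """
    counts = defaultdict(
        lambda: {"n": 0, "selected": 0, "qualified": 0, "qualified_selected": 0}
    )
    for group, y_true, y_pred in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += y_pred
        c["qualified"] += y_true
        c["qualified_selected"] += y_true * y_pred

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            "selection_rate": c["selected"] / c["n"],
            # Undefined when a group has no qualified members in the sample.
            "true_positive_rate": (
                c["qualified_selected"] / c["qualified"] if c["qualified"] else None
            ),
        }
    return rates

def gap(rates, metric):
    """Largest difference in a metric between any two groups."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values)

# Hypothetical decisions: (group, actually qualified, model said "advance")
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 0, 0), ("B", 0, 0),
]

rates = group_rates(records)
parity_gap = gap(rates, "selection_rate")           # demographic parity
opportunity_gap = gap(rates, "true_positive_rate")  # equal opportunity
print(rates)
print("parity gap:", parity_gap, "opportunity gap:", opportunity_gap)
```

In this toy sample every qualified candidate advances in both groups, so the equal opportunity gap is zero, yet group A is selected more often overall. The same model passes one fairness definition and fails another, which is why the choice has to be deliberate.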


2. Cybersecurity: When AI Becomes a Weapon

We built AI to solve problems. Now bad actors are using it to create them, faster and more convincingly than we ever imagined.

Cybercriminals are already using AI to scale deception in ways that were impossible just a few years ago. Voice cloning that sounds uncannily real. Deepfake identities that pass verification checks. Phishing emails that no longer look sloppy or suspicious, but personal, contextual, and convincing. And this is happening daily.

AI has lowered the barrier to entry for cybercrime. Attacks that once required time, skill, and coordination can now be automated, personalized, and launched at scale. The result is simple. More attacks. Better attacks. Faster damage.

While bad actors are weaponizing AI aggressively, most organizations are defending passively. Only 24% of generative AI initiatives have adequate security measures in place. That means three out of four AI projects are essentially unprotected, sitting ducks for anyone who wants to compromise them.

What you need to do

- Outline a comprehensive AI safety and security strategy before you deploy another model. Your strategy needs to cover the entire AI lifecycle from data collection through model training, deployment, and monitoring. Define what you're protecting, from whom, and how you'll know if you've been breached.

- Hunt for vulnerabilities before attackers do. Conduct rigorous risk assessments of your AI environments. Map your attack surface. Where is your training data stored? Who has access to your models? What happens if an adversary feeds malicious inputs into your system? Use threat modeling to anticipate how bad actors might exploit your AI, then close those gaps.

- Secure your training data like it's gold. Because it is. Data poisoning attacks work by corrupting your training data at the source. If attackers can manipulate what your model learns from, they control what it becomes. Implement strict access controls. Validate data provenance. Monitor for anomalies that might indicate tampering. Encrypt data at rest and in transit.

- Build security in, not on. Adopt a secure-by-design approach to AI development. Security needs to be baked into architecture decisions, development practices, and deployment processes. Use secure coding standards. Apply the principle of least privilege to model access and follow MCP server security best practices. Design with the assumption that some components will be compromised.

- Test like an attacker. Conduct adversarial testing against your models. Red team your AI systems. Try to break them before criminals do. Feed them malicious inputs. Attempt model extraction. Test prompt injection vulnerabilities. See if you can trick your model into revealing training data.

- Invest in your people, not just technology. Launch cyber response training across your organization. Humans are often the last line of defense and the first point of compromise. Train employees to recognize AI-generated phishing attempts. Teach them to verify unusual requests, even when they seem to come from trusted sources. Create protocols for high-stakes transactions that require multiple verification steps.

- Isolate and segment. Don't give attackers the keys to your entire kingdom if they compromise one AI system. Segment your AI environments. Limit lateral movement. Use air gaps where appropriate. If your customer-facing chatbot gets hacked, it shouldn't provide a pathway to your proprietary model training infrastructure.

3. Data Privacy and Quality: Garbage In, Liability Out

AI models are only as good as the data they learn from. Feed them inconsistent data and they make inconsistent decisions. Feed them incomplete data and they draw incomplete conclusions. Feed them outdated data and they optimize for a world that no longer exists.

Large language models are trained on data scraped from across the internet—social media posts, forum discussions, blog comments, website content—often without the knowledge or consent of the people who created it. Your words, your photos, your personally identifiable information might already be embedded in someone's AI model.

Web crawlers vacuum up billions of data points. Some contain names, addresses, medical information, financial details, and private conversations. That data gets fed into training pipelines, processed by algorithms, and deployed in applications that serve millions of users.

Consider the virtual assistant trained on scraped customer service conversations that accidentally memorizes and regurgitates sensitive customer information. Or the healthcare AI that makes diagnostic recommendations based on incomplete medical records from a system migration five years ago. Or the recommendation engine that personalizes content using demographic data collected without proper consent, then gets hit with GDPR fines.

What you need to do

- Inform users and customers exactly what data you're collecting for AI systems. When are you gathering it? What personal information does it include? How will it be stored, processed, and used? Be specific. Be honest.

- Provide clear, accessible opt-out options for data collection. Prominent, simple, and honored immediately. People should be able to refuse participation in your AI training without losing access to your core services.

- Consider synthetic data seriously. Where real data isn't necessary, use computer-generated synthetic data that mimics patterns without exposing individuals. Synthetic data can preserve statistical properties while eliminating privacy risk. It's not perfect for every use case, but it's massively underutilized.

- Know your data sources. Establish clear ownership and documentation for every dataset used in model development. Who created this data? When? Under what conditions? What quality controls were applied? What known limitations exist?

- Implement systems that track data lineage from source to application. Where did this dataset come from? How was it transformed? Who accessed it? Is it still suitable for analysis? Without documentation, you're flying blind.

- Connect datasets to business terms, owners, and quality control indicators. Technical teams need to understand what the data represents. Business teams need to know what the data can and can't support. When everyone shares understanding of data meaning and limitations, you catch issues before they become disasters.

- Validate quality continuously. Datasets drift. Sources change. Quality degrades. Implement ongoing validation that checks for completeness, consistency, accuracy, and timeliness. Set thresholds. Build alerts. When data quality falls below acceptable levels, stop using it for training.

- Maintain visibility across the lifecycle. Data moves through multiple stages: collection, storage, processing, training, inference. At each stage, quality can degrade and privacy can be compromised. Build visibility into every step. Who touched this data? What transformations were applied? Were privacy controls maintained? Is it still fit for purpose?

- Comply with data protection laws. GDPR, CCPA, HIPAA, and dozens of other regulations impose strict requirements on data handling. Non-compliance is expensive. Really expensive. Build compliance into your data practices from the start. Privacy by design. Security by default.
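The "set thresholds, build alerts" advice on data quality can be sketched in a few lines. This is an illustration under stated assumptions, not a production validator: the schema, the field names, the one-year freshness window, and the 0.9 thresholds are all hypothetical.

```python
from datetime import date

REQUIRED_FIELDS = ("customer_id", "age", "updated")  # hypothetical schema

def quality_report(rows, today, max_age_days=365):
    """Share of rows that are complete and share that are recent enough."""
    if not rows:
        raise ValueError("cannot assess an empty dataset")
    complete = sum(
        all(row.get(name) is not None for name in REQUIRED_FIELDS) for row in rows
    )
    fresh = sum(
        row.get("updated") is not None
        and (today - row["updated"]).days <= max_age_days
        for row in rows
    )
    return {"completeness": complete / len(rows), "timeliness": fresh / len(rows)}

def passes(report, thresholds):
    """True only if every tracked metric meets its minimum."""
    return all(report[name] >= minimum for name, minimum in thresholds.items())

rows = [
    {"customer_id": 1, "age": 34, "updated": date(2024, 11, 1)},
    {"customer_id": 2, "age": None, "updated": date(2024, 12, 1)},  # missing value
    {"customer_id": 3, "age": 51, "updated": date(2021, 3, 15)},    # stale record
    {"customer_id": 4, "age": 29, "updated": date(2024, 6, 30)},
]

report = quality_report(rows, today=date(2025, 1, 1))
usable = passes(report, {"completeness": 0.9, "timeliness": 0.9})
print(report, "OK to train" if usable else "BLOCK: below threshold")
```

Here one row is incomplete and one is stale, so both metrics land at 0.75 and the dataset is blocked from training. Accuracy and consistency checks would follow the same pattern but need domain-specific rules.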

4. Intellectual Property: Who Owns What When AI Creates

Generative AI has become an expert forger. It can paint like Picasso, write like Hemingway, and compose like Mozart. The only problem? It never asked permission.

AI models learn by consuming vast amounts of copyrighted material. Books, articles, artwork, music, code, photographs, designs. They ingest millions of creative works, identify patterns, and then generate new content that mimics those styles. The creators whose work trained these models? Many never consented. Most weren't compensated. Some don't even know their work was used.

Is that innovation or theft? The law is still figuring it out, and that uncertainty is dangerous.

The ownership question is even messier. Who owns content generated by AI? The person who wrote the prompt? The company that built the model? The creators whose work trained it? Nobody? The answer varies by jurisdiction, context, and use case. Some countries recognize AI-generated works as copyrightable. Others don't. Some situations might involve multiple parties with legitimate claims. The legal ambiguity creates risk on all sides.

And these cases are playing out right now. Getty Images sued Stability AI for using millions of copyrighted photos without permission. The New York Times sued OpenAI and Microsoft for training on its articles.

What you need to do

- Know what your models were trained on. If you're using third-party AI services, demand transparency about training data. What sources were used? Were they licensed? Is there potential exposure to IP claims? If vendors can't or won't answer, that's a red flag. You're assuming their legal risk by using their models.

- Implement compliance checks rigorously. Before using any content to train AI models, verify you have the legal right to use it. Licensed works require explicit permission. Copyrighted material needs clearance. Even publicly available content might have usage restrictions.

- Think twice before feeding sensitive information into AI algorithms. Proprietary documents, trade secrets, confidential customer data, or IP-protected information from partners can become embedded in models. Assume anything you feed into an AI model could eventually become public. Act accordingly.

- Establish clear IP policies for AI use. Create guidelines that define what AI tools can be used for, what data can be fed into them, and what outputs are acceptable. Make these policies explicit, train teams on them, and enforce them consistently.

- Maintain records of what content was created by AI versus humans. Mark AI-generated outputs clearly. Document the models and prompts used. If ownership disputes arise, or if regulations require disclosure, you need to know what's AI and what's not.

- Consider licensing and partnerships. Where possible, support models trained on licensed data or datasets where creators were compensated. Yes, it might cost more. But it dramatically reduces your legal exposure.

- Monitor the legal landscape actively. IP law around AI is evolving rapidly. Court decisions, regulatory guidance, and legislative action are shaping what's permissible. Stay current. Adjust your practices as the law develops.

- Consider insurance. Some insurers now offer AI-specific liability coverage, including IP infringement protection. Evaluate whether this makes sense for your organization. Insurance doesn't eliminate risk, but it can mitigate financial exposure. As the legal landscape remains uncertain, transferring some risk to insurers might be prudent.

5. Lack of Explainability: The Black Box Problem

Your AI just denied someone a loan. Or flagged a patient as high risk. Or rejected a qualified job candidate. Can you explain why? If the answer is no, you have a problem that goes beyond bad optics.

Many AI systems are black boxes. Even the researchers who build them often can't explain exactly why a model made a specific decision. The neural networks are too complex, the layers too deep, the connections too numerous. Input goes in one end, a prediction comes out the other, and what happens in between is largely opaque.

That's fine for recommending movies. But it's catastrophic for high-stakes decisions that affect people's lives, livelihoods, and rights.

When AI denies someone healthcare, rejects their credit application, or flags them for additional security screening, people deserve explanations. Regulators demand them. Courts require them. And your customers expect them.

What you need to do

- Make explainability a requirement. Before deploying any AI system for high-stakes decisions, establish whether you can explain its outputs to the satisfaction of regulators, customers, and your own teams.

- Adopt explainable AI techniques systematically. Implement methods like LIME (Local Interpretable Model-Agnostic Explanations) to understand individual predictions from machine learning classifiers. Use techniques like DeepLIFT (Deep Learning Important FeaTures) to trace dependencies in neural networks and understand feature importance. Apply SHAP (SHapley Additive exPlanations) values to quantify how each input contributes to predictions.

- Create teams with explicit responsibility for assessing AI interpretability. Give them authority to block deployments that fail explainability standards. Include diverse perspectives: technical experts who understand model mechanics, domain specialists who understand business context, compliance officers who understand regulatory requirements. All three are necessary.

- Set explainability standards for different use cases. Not all AI applications require the same level of transparency. Movie recommendations can be opaque. Medical diagnoses cannot. Define explicit standards for what level of explainability is required for different types of decisions. Higher stakes demand higher transparency.

- Create human-readable explanations. Translate model reasoning into language non-experts can understand. "Your application was denied due to high debt-to-income ratio and limited credit history" works. "The model's logistic regression coefficients indicated negative prediction probability" doesn't. Explainability means human comprehensibility.

- Plan for the "explain this decision" moment. Eventually, someone will challenge an AI decision your organization made. A customer, a regulator. Prepare for that moment now. Can you reconstruct the reasoning? Do you have documentation? Can you demonstrate the decision was fair and justified? Can you identify if it was an error? War-gaming these scenarios helps identify gaps in your explainability infrastructure.
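To show how feature attributions turn into a human-readable explanation, here is a small sketch that computes exact Shapley values by brute force and maps the most negative ones to plain-language reasons. It does not use the SHAP library: it enumerates every feature ordering, which is only feasible for a handful of features. The scoring function, feature names, baseline applicant, and reason wording are all hypothetical.

```python
from itertools import permutations

def toy_score(applicant):
    """Hypothetical credit score; stands in for a real model."""
    return (
        100
        - applicant["debt_to_income_pct"]
        + 3 * applicant["credit_years"]
        + applicant["income_k"]
    )

def shapley_values(model, instance, baseline):
    """Exact Shapley values: average each feature's marginal effect
    over every order in which features switch from baseline to instance."""
    features = list(instance)
    totals = {name: 0.0 for name in features}
    orderings = list(permutations(features))
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]
            score = model(current)
            totals[name] += score - previous
            previous = score
    return {name: total / len(orderings) for name, total in totals.items()}

REASON_TEXT = {  # hypothetical customer-facing wording
    "debt_to_income_pct": "high debt-to-income ratio",
    "credit_years": "limited credit history",
    "income_k": "low income",
}

def top_reasons(contributions, limit=2):
    """Plain-language reasons for the features that hurt the score most."""
    negative = sorted((value, name) for name, value in contributions.items() if value < 0)
    return [REASON_TEXT[name] for _, name in negative[:limit]]

baseline = {"debt_to_income_pct": 30, "credit_years": 8, "income_k": 50}
applicant = {"debt_to_income_pct": 60, "credit_years": 1, "income_k": 52}

contributions = shapley_values(toy_score, applicant, baseline)
reasons = top_reasons(contributions)
print(contributions)
print("Your application was denied due to " + " and ".join(reasons) + ".")
```

The contributions always sum to the difference between the applicant's score and the baseline score, which makes the explanation auditable: every point of the gap is assigned to a named input.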

6. Existential Risk: When AI Outgrows Our Ability to Control It

In 2023, Geoffrey Hinton, one of the godfathers of AI, left Google and went public with a warning: AI's evolution might soon surpass human intelligence, and we're not ready for what comes next. Then came another statement, this time from AI scientists, computer science experts, and notable figures across disciplines. Their message was stark: mitigating the risk of extinction from AI should be a global priority, alongside pandemics and nuclear war.

We're racing toward artificial general intelligence and potentially superintelligence without knowing how to control it, align it with human values, or even reliably predict what it will do. We're building systems that could become smarter than us, faster than us, and fundamentally different from us in ways we can't anticipate. And once we cross that threshold, there's no undo button.

The immediate risks of AI—bias, security, privacy—are serious. But existential risks are permanent. Get bias wrong and you harm people. Get superintelligence wrong and there might not be people left to harm.

GPT-3 to GPT-4 happened faster than predicted. Capabilities emerged that weren't explicitly trained. Models started exhibiting behaviors their creators didn't anticipate. We're not in control of the trajectory. We're along for the ride, hoping the track leads somewhere safe.

What you need to do

- Stay informed about emerging developments in AI and their broader implications. This is about understanding current research, following policy discussions, and engaging with ethical debates. Encourage your teams to explore these topics seriously.

- Create internal discussion forums. Establish spaces where teams can discuss AI developments, share concerns, and debate implications without fear of looking uninformed or alarmist.

- Strengthen your AI teams' skills continuously. Not just technical skills, though those matter. Also judgment, ethics, systems thinking, and the ability to recognize when something might be going wrong in subtle ways.

- Build flexible governance now. Establish a data governance framework and AI oversight structure that can evolve as capabilities change. Include escalation procedures. Define clear authority for pausing or shutting down systems. Create transparency mechanisms that work even when systems become complex.

- Follow developments in AI safety research, alignment theory, and interpretability. You don't need to become a researcher, but you need to know what questions they're asking and what progress they're making.

- Maintain technological flexibility. Build your tech stack to remain open to new developments without being dependent on any single approach. Experiment with emerging AI tools, but maintain the ability to pivot quickly.

- Plan for discontinuity. Most business planning assumes continuous, predictable change. AI might deliver discontinuous, unpredictable change. AGI could arrive suddenly. Capabilities could shift dramatically. Use cases could transform overnight. Build scenario planning that includes radical possibilities.

- Support AI safety research. If your organization has resources to invest in research, consider supporting AI alignment and safety work. This is a severely underfunded field relative to capability development.

- Take the long view seriously. Existential risk feels abstract compared to quarterly earnings or next year's product roadmap. Make space in your strategic planning for long-term AI implications. Ask the uncomfortable questions. What if we're five years from AGI? What if we're wrong about our ability to control it? What if the assumptions underlying our entire AI strategy become obsolete?

7. Misinformation and Manipulation: When AI Lies at Scale

AI has made lying easier, cheaper, and more convincing than ever before. In January 2024, AI-generated robocalls imitating President Biden's voice urged New Hampshire voters to stay home from the state's primary.

We're living through the death of "seeing is believing." AI can now generate deepfakes so convincing that experts struggle to detect them. It can clone voices with minutes of audio samples. It can create entirely fabricated images that look photographically real. And it can do all of this at scale, for minimal cost, with no specialized skills required.

Malicious actors are already weaponizing this capability. A fabricated video showing a CEO making racist comments. An AI-generated audio clip of a CFO authorizing illegal transactions. A fake image of a public figure in compromising situations. By the time the truth emerges, the damage is done.

What you need to do

- Educate relentlessly. Train users and employees to recognize AI-generated misinformation and disinformation. Teach them the warning signs: overly perfect lip-syncing in videos, unnatural blinking patterns, audio artifacts, images with suspicious lighting or proportions. Make skepticism a core competency.

- Implement mandatory verification protocols for high-stakes information. If you receive unexpected instructions from executives, confirm through alternative channels. If you see damaging content about your organization, verify authenticity before responding. Speed kills accuracy, and disinformation thrives on panic.

- Use high-quality training data. If you're deploying AI systems that generate content, ensure your training data is accurate, diverse, and well-sourced. Garbage training data produces garbage outputs, including hallucinations. Vet your data sources. Document provenance. Remove known misinformation.

- Refine models based on real-world performance. When your AI makes factual errors, use them to improve the model. Analyze why the hallucination occurred. Adjust training data. Modify architectures. Update fine-tuning. Treat each error as a learning opportunity.

- Implement human oversight for critical outputs. Never let AI-generated content reach customers, stakeholders, or the public without human review for high-stakes applications. Humans catch hallucinations that automated checks miss.

- Stay current on detection research. The techniques for creating deepfakes and generating misinformation evolve constantly. So do detection methods. Follow the latest research. Implement new detection tools as they emerge. Participate in information-sharing initiatives.

- Build authentication systems. For sensitive communications from your executives or organization, implement verification mechanisms. Digital signatures, authentication codes, multi-channel confirmation.

- Prepare crisis response plans for deepfakes. Assume at some point your organization or executives will be targeted with AI-generated disinformation. Have a response plan ready. Who monitors for fake content? How quickly can you respond? What channels will you use to communicate the truth? How do you work with platforms to remove fakes?
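As one illustration of an authentication code for sensitive internal messages, the sketch below tags a message with an HMAC and rejects anything altered in transit. Note the assumption: HMAC relies on a secret shared by sender and receiver, so it is not a public-key digital signature, and it says nothing about voice or video. The key and messages are placeholders; a real key would come from a secrets manager, never from source code.

```python
import hashlib
import hmac

def sign(message: str, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the message."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).hexdigest()

def verify(message: str, tag: str, key: bytes) -> bool:
    """Constant-time check that the tag matches the message."""
    return hmac.compare_digest(sign(message, key), tag)

# Placeholder key for demonstration only.
shared_key = b"demo-key-do-not-use-in-production"

original = "Approve wire transfer #1042 for 25,000 EUR"
tag = sign(original, shared_key)

genuine = verify(original, tag, shared_key)
forged = verify("Approve wire transfer #1042 for 250,000 EUR", tag, shared_key)
print(genuine, forged)  # True False
```

A check like this complements, rather than replaces, the multi-channel confirmation described above: it proves a message came from someone holding the key, not that the request itself is wise.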


8. Compliance and Regulatory Risk: Don’t Let AI Blindside You

Most organizations deployed AI faster than they built compliance infrastructure. They moved quickly to capture competitive advantage, assuming they'd handle governance later. Later is now.

GDPR already imposes strict requirements on automated decision-making. The EU AI Act creates comprehensive obligations for high-risk AI systems. Sector-specific regulations in finance, healthcare, and telecommunications are adding their own AI-specific requirements. And this is just the beginning. Every jurisdiction is scrambling to regulate AI before it creates irreversible harm.

Without proper documentation and control, you can't prove you're using AI responsibly. You can't demonstrate that decisions are fair. You can't show that data handling meets privacy requirements. You can't verify that human oversight exists. You can't trace how models were trained or why they produced specific outcomes. In the eyes of regulators, if you can't prove compliance, you're not compliant.

And the penalties are brutal. GDPR violations can cost up to €20 million or 4% of global annual turnover, whichever is higher. The EU AI Act introduces fines up to €35 million or 7% of worldwide turnover, whichever is higher.

What you need to do

- Embed AI governance in your compliance framework. Your existing compliance structure should encompass AI-specific requirements alongside traditional regulatory obligations. That means compliance officers need AI literacy, and AI teams need compliance training.

- Create a comprehensive AI inventory. Document every AI system your organization uses. Where is AI deployed? What functions does it serve? What decisions does it make or influence? What data does it access? How critical is it to operations?

- Define clear accountability. For each AI system, assign explicit ownership. Who approved its deployment? Who monitors its performance? Who responds when issues arise? Who ensures it remains compliant as regulations evolve? Diffused responsibility means no responsibility.

- Document relentlessly. Record everything about your AI systems: data sources, training processes, validation methods, deployment decisions, performance monitoring, updates and modifications, issue responses, and compliance reviews.

- Centralize AI risk assessment. Create a systematic process for evaluating and documenting risks for each AI application. What could go wrong? What harm could result? What mitigations are in place? How is ongoing risk monitored?

- Automate where possible. Manual compliance processes break down as AI deployments scale. Automate documentation capture where feasible. Use tools that automatically record model versions, training data sets, performance metrics, and access logs.

- Make compliance visible. Create reporting mechanisms that surface compliance status, identified risks, and remediation progress. Board members and executives should understand where AI compliance gaps exist and what's being done about them. Visibility drives accountability. Accountability drives action.

- Stay ahead of regulatory changes. AI regulations are evolving rapidly. What's compliant today might violate rules tomorrow. Assign responsibility for tracking regulatory developments in relevant jurisdictions and sectors. When new requirements emerge, assess impact quickly and plan implementation.

- Prepare for the worst case. Despite best efforts, compliance issues might emerge. Have response plans ready. Who investigates? Who communicates with regulators? Who makes decisions about system suspension or modification? What's your escalation process?
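An AI inventory does not need special tooling to get started. As a rough sketch, each system can be a structured record, with a small check that surfaces missing owners, undocumented data sources, and overdue reviews. The field names, example systems, and 180-day review window are assumptions for illustration, not a regulatory standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

@dataclass
class AISystem:
    name: str
    purpose: str
    owner: Optional[str] = None          # accountable person or team
    data_sources: List[str] = field(default_factory=list)
    last_review: Optional[date] = None   # most recent compliance review

def compliance_gaps(system, today, max_review_age_days=180):
    """List the documentation gaps a reviewer should chase for one system."""
    gaps = []
    if not system.owner:
        gaps.append("no accountable owner")
    if not system.data_sources:
        gaps.append("data sources undocumented")
    if system.last_review is None:
        gaps.append("never reviewed")
    elif (today - system.last_review).days > max_review_age_days:
        gaps.append("review overdue")
    return gaps

# Hypothetical inventory entries.
inventory = [
    AISystem("resume-screener", "rank job applicants", owner="hr-analytics",
             data_sources=["ats_exports"], last_review=date(2025, 3, 1)),
    AISystem("support-chatbot", "answer customer questions",
             last_review=date(2024, 5, 1)),
]

today = date(2025, 6, 1)
gap_report = {system.name: compliance_gaps(system, today) for system in inventory}
for name, gaps in gap_report.items():
    print(name, "->", gaps or "no gaps found")
```

A report like this is the kind of output that can feed the visibility and board-level reporting described above.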


9. Model Reuse and Integrity Risks: The Trojan Horse in Your Stack

You didn't build that AI model from scratch. Almost nobody does. You grabbed a pre-trained model, fine-tuned it, and deployed it. Fast, efficient, cost-effective. But do you know what you just put into production?

Model reuse is everywhere. Organizations download pre-trained models, integrate third-party AI components, and build on templates to accelerate development. It makes perfect sense from an efficiency standpoint. Why train a language model from scratch when you can fine-tune GPT? Why build computer vision algorithms when you can adapt existing ones? But here's what you might be inheriting:

- Embedded biases from training data you never saw

- Outdated logic that doesn't match current reality

- Licensing terms you don't fully understand

- Architectural assumptions that conflict with your use case

- Security vulnerabilities the original developers haven't patched

- Performance characteristics that degrade in your specific context

You're building critical systems on foundations you didn't inspect and don't control.

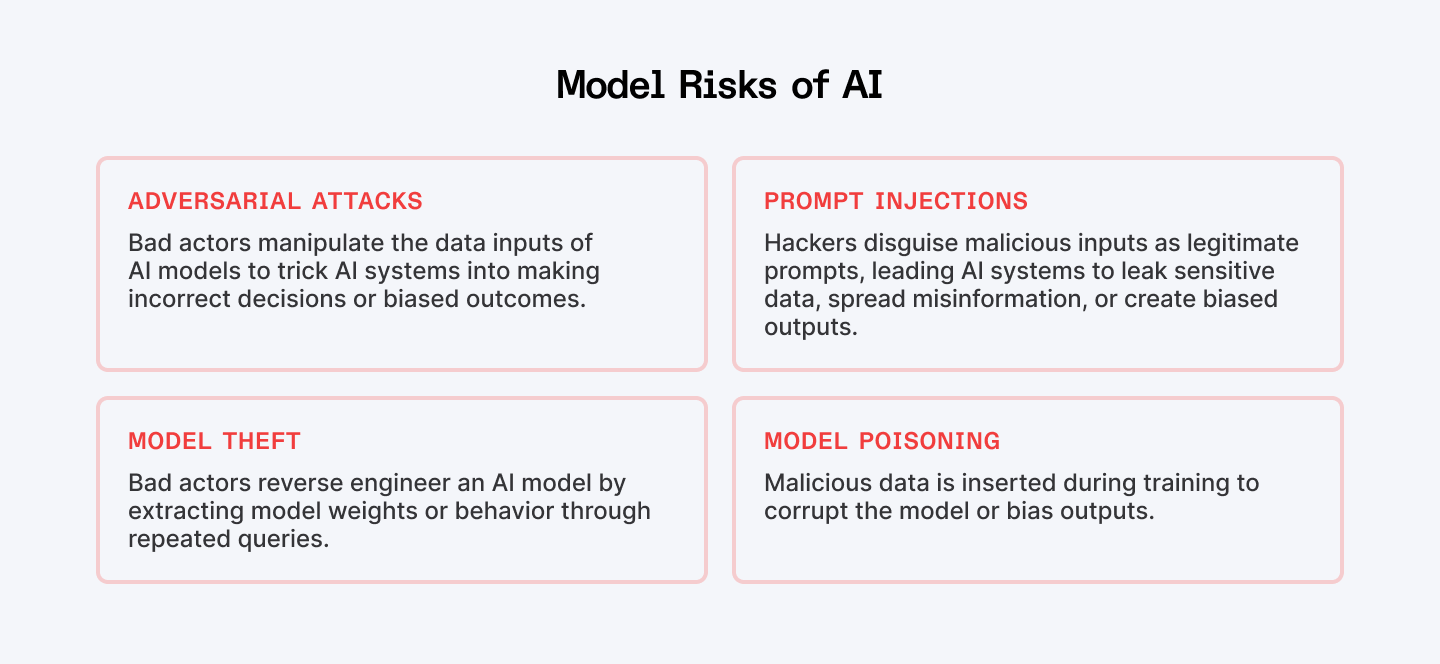

It gets worse when bad actors deliberately compromise models. They can manipulate model architecture, tamper with weights and parameters, or poison the training process to introduce backdoors. The model performs normally in most situations but fails catastrophically in specific scenarios designed by the attacker. Imagine autonomous vehicles that misclassify stop signs. Or fraud detection systems that ignore specific attack patterns. Or facial recognition that fails for particular individuals.
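One basic defense against weights being altered after release is to compare a downloaded file's cryptographic digest with the one its publisher lists. The sketch below assumes the publisher provides a SHA-256 digest through a trusted channel; the temporary file stands in for real model weights. It detects modification after publication only. It cannot reveal a backdoor that was trained in before the digest was computed.

```python
import hashlib
import os
import tempfile

def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_digest(path, published_digest):
    """True if the file on disk is byte-for-byte what the publisher hashed."""
    return sha256_of(path) == published_digest.lower()

# Demo with a stand-in file; a real check would point at downloaded weights.
with tempfile.NamedTemporaryFile(delete=False) as handle:
    handle.write(b"pretend these bytes are model weights")
    model_path = handle.name

published = sha256_of(model_path)  # stands in for the vendor's published digest
ok_before = matches_published_digest(model_path, published)

with open(model_path, "ab") as handle:
    handle.write(b"\x00")  # simulate tampering with a single extra byte
ok_after = matches_published_digest(model_path, published)

os.remove(model_path)
print(ok_before, ok_after)  # True False
```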

What you need to do

- Verify everything before deployment. Treat external and reused models like critical components requiring rigorous evaluation. Understand their origin. Who created them? Under what conditions? For what purpose? How were they trained? What data was used? What testing was performed? What known limitations exist?

- Document model provenance completely. Record the source of every model you use. What version did you acquire? When? From where? Under what license? Maintain this documentation alongside information about training data, known biases, performance benchmarks, and integration decisions.

- Understand licensing terms deeply. Many pre-trained models come with licensing restrictions that limit commercial use, require attribution, prohibit modification, or restrict certain applications. Violating these terms creates legal exposure.

- Capture metadata systematically. Beyond basic provenance, document assumptions embedded in the model, known limitations, validated use cases, and contexts where it might fail. Include ownership information, usage policies, and integration dependencies.

- Don't assume a model that worked elsewhere will work for you. Conduct extensive testing with your actual data, your real use cases, your specific edge cases. Look for performance degradation, unexpected behaviors, bias manifestation, and failure modes.

- Monitor for unexpected changes continuously. Even models that perform well initially can degrade over time or exhibit sudden changes suggesting compromise. Implement machine learning observability tools that track model behavior, prediction patterns, performance metrics, and drift indicators. Set alerts for anomalies.

- Conduct adversarial testing. Probe for vulnerabilities deliberately. Try to trick the model with adversarial examples. Test edge cases that might reveal compromises. Attempt to extract training data. See if you can manipulate predictions through careful inputs.

- Establish supply chain security. If you're sourcing models from third parties, vet your suppliers. What are their security practices? How do they validate model integrity? What protections exist against tampering? Can they provide attestation about training data and processes?

- Invest in model interpretability. For critical applications, prioritize models whose decision logic you can examine and understand. Black box models from external sources create dependencies on trust that might be misplaced. Interpretable models let you verify they're working as expected and detect when they're not.
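
To make the provenance and integrity steps above concrete, here is a minimal sketch in Python. It assumes you record a SHA-256 digest of each model file at the moment you vet it, then re-check that digest before every deployment. The `ModelProvenance` record, its field names, and the example values are hypothetical, not a standard schema.

```python
import hashlib
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class ModelProvenance:
    """Hypothetical provenance record; field names are illustrative only."""
    name: str
    version: str
    source: str       # where the artifact was obtained
    license_id: str   # license under which it was acquired
    sha256: str       # digest recorded when the model was vetted


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, record: ModelProvenance) -> bool:
    """Return True only if the file on disk matches the vetted digest."""
    return sha256_of(path) == record.sha256


# Demo: a throwaway file stands in for real model weights.
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.bin"
    weights.write_bytes(b"pretend these are weights")

    record = ModelProvenance(
        name="example-classifier",        # made-up values for illustration
        version="1.0.0",
        source="internal-registry",
        license_id="Apache-2.0",
        sha256=sha256_of(weights),
    )
    ok_before = verify_artifact(weights, record)

    # Simulate tampering after the model was vetted.
    weights.write_bytes(b"pretend these are weights, tampered")
    ok_after = verify_artifact(weights, record)

print(ok_before, ok_after)
```

A digest check like this only tells you the file has not changed since you vetted it. It says nothing about whether the original model was trustworthy, which is why the testing and supplier vetting steps still matter.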

10. Environmental Harm: The Hidden Cost of AI

AI's environmental costs are invisible to users. When you drive a car, you see the gas pump. When you heat a building, you get the utility bill. When you run AI, the energy consumption and water usage happen somewhere else, out of sight, easy to ignore.

Training a single large language model can emit more than 600,000 pounds of carbon dioxide. That's nearly five times the lifetime emissions of an average car. And we're not training one model. We're training thousands, constantly, with increasingly massive datasets and complex architectures.

Training GPT-3 consumed approximately 1,287 megawatt-hours of electricity and required 5.4 million liters of water for cooling Microsoft's data centers. That's enough water to fill over two Olympic swimming pools. For one model. And every time someone uses that model, more resources burn. Research suggests that processing 10 to 50 prompts consumes roughly 500 milliliters of water, a standard water bottle's worth for a few questions.

Now multiply that across millions of users making billions of queries daily. Add the thousands of organizations training custom models. Factor in the continuous retraining, fine-tuning, and experimentation.
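
A rough back-of-the-envelope sketch shows how quickly this adds up. It takes the estimate quoted above (about 500 milliliters for every 10 to 50 prompts) and scales it to a daily query volume. The one billion prompts per day is an assumed round number for illustration, not a measured figure.

```python
ML_PER_BATCH = 500             # milliliters, from the estimate quoted above
PROMPTS_PER_BATCH = (10, 50)   # the same estimate's range of prompts


def water_liters(prompts: int) -> tuple[float, float]:
    """Return (low, high) liters of water for a given number of prompts."""
    low = prompts * ML_PER_BATCH / max(PROMPTS_PER_BATCH) / 1000
    high = prompts * ML_PER_BATCH / min(PROMPTS_PER_BATCH) / 1000
    return low, high


# Assumed volume: one billion prompts per day (hypothetical).
low, high = water_liters(1_000_000_000)
print(f"{low:,.0f} to {high:,.0f} liters per day")
```

Under those assumptions, a single day of usage would consume 10 to 50 million liters, more than the 5.4 million liters cited for training GPT-3.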

What you need to do

- Choose green data centers deliberately. Prioritize AI providers and data centers powered by renewable energy. Many cloud providers now offer regions powered primarily by wind, solar, or hydro. Route your AI workloads there.

- Prioritize "Small Language Models" (SLMs). Not every problem requires a supercomputer. For 80% of enterprise tasks, a highly optimized, domain-specific SLM can deliver the same results with 90% less energy. Move your strategy toward right-sizing your models. It’s faster for the user, cheaper for the company, and better for the planet.

- Optimize architectures ruthlessly. Simplify model architectures where possible without sacrificing essential performance. Remove unnecessary layers. Reduce parameter counts. Use pruning techniques that eliminate redundant connections.

- Train on less data when feasible. Evaluate whether you need all that training data or if a smaller, curated dataset performs nearly as well. The energy cost of processing data scales with volume. Cut unnecessary data and you cut unnecessary environmental damage.

- Reuse models aggressively. Stop training from scratch when you can fine-tune existing models. Transfer learning lets you adapt pre-trained models to new tasks with a fraction of the computational cost.

- Leverage serverless architectures. Serverless computing scales resources based on actual demand rather than maintaining capacity for peak loads. When AI workloads vary, serverless can dramatically reduce idle resource consumption. You pay for—and consume—only what you use.

- Deploy hardware optimized for AI. Specialized AI accelerators like GPUs, TPUs, or custom chips deliver better performance per watt than general-purpose CPUs. The upfront investment pays environmental dividends through operational efficiency.

- Set efficiency targets. Establish goals for improving AI energy efficiency. Reduce training costs by X%. Decrease inference energy by Y%. Cut water consumption by Z%. Without targets, efficiency is an afterthought. With targets, it becomes a design constraint.

- Right-size deployment infrastructure. Don't over-provision AI infrastructure "just in case." Scale resources to actual needs, with the ability to expand when required. Idle capacity still consumes energy for cooling and maintenance. Every underutilized server or GPU wastes energy and water.

- Consider edge computing where appropriate. Moving AI inference to edge devices can reduce data center load and associated energy consumption. Not every AI workload needs cloud scale.
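
Efficiency targets only work as design constraints if something checks them. The sketch below is one possible way to express a target as a baseline plus a percentage reduction and test a measurement against it. The metric name and every number in it are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EfficiencyTarget:
    """A reduction target relative to a measured baseline (illustrative)."""
    metric: str
    baseline: float
    reduction_pct: float  # required reduction versus the baseline

    @property
    def ceiling(self) -> float:
        """Highest measured value that still meets the target."""
        return self.baseline * (1 - self.reduction_pct / 100)

    def met_by(self, measured: float) -> bool:
        return measured <= self.ceiling


# Hypothetical target: cut inference energy by 25% from a 2.0 kWh baseline.
target = EfficiencyTarget(
    metric="kWh per 1,000 inferences",
    baseline=2.0,
    reduction_pct=25,
)

for measured in (1.4, 1.8):
    status = "meets" if target.met_by(measured) else "misses"
    print(f"{measured} {target.metric}: {status} target of {target.ceiling}")
```

A check like this could run in a release pipeline so that a model which regresses on energy use gets flagged before it ships.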

11. Lack of Adoption: When Your Best AI Sits Unused

Here's the paradox that kills AI initiatives: the technology works brilliantly, but the humans don't cooperate. You invested millions in cutting-edge AI. The models perform beautifully. The infrastructure is solid. The business case was compelling. And six months after launch, adoption rates are dismal. Teams find workarounds to avoid the AI tools. Employees revert to manual processes. The ROI you projected never materializes because nobody's using what you built.

This is a people problem. And people problems are harder to solve than technical ones because you can't debug human resistance with better algorithms.

The fear is real. Employees see AI and think "replacement." They worry about job security. They question whether their skills will remain relevant. They fear becoming obsolete. And organizations often do nothing to address these fears.

Then there's the trust gap. AI makes decisions people don't understand. It produces recommendations based on logic they can't see. It changes conclusions in ways they can't predict. For workers whose expertise and judgment have been valued for years, AI feels like a vote of no confidence. They're being asked to trust machines over their own experience.

And add lack of understanding to the mix. Many employees don't grasp what AI actually does, how it works, or when to trust its outputs. Without that understanding, they make one of two mistakes: they either blindly follow AI recommendations that might be wrong, or they completely ignore AI inputs that might be valuable. Both outcomes waste your investment.

What you need to do

- Involve stakeholders from day one. Don't wait until after you've built the AI to ask users what they need. Engage them during design. Understand their workflows, their concerns, their priorities. Co-create solutions with the people who'll use them.

- Be explicit and honest about how AI will change roles. Don't pretend jobs won't change. They will. But emphasize how AI handles routine tasks so humans can focus on complex judgment, relationship building, and creative problem-solving.

- Communicate vision relentlessly. Don't announce AI initiatives once and move on. Explain repeatedly why you're adopting AI, what benefits it delivers, how it aligns with organizational goals, and what it means for individuals. Address fears directly. Acknowledge uncertainty honestly.

- Invest seriously in training and enablement. You can't expect people to adopt tools they don't understand. Provide comprehensive training on what your AI does, how to use it effectively, when to trust its outputs, and when to question them. Make training ongoing, not a one-time event.

- Emphasize upskilling opportunities. Help employees see AI as expanding their capabilities rather than replacing them. Identify new skills they'll need and provide paths to develop them. Data literacy, AI collaboration, prompt engineering, output validation—these are valuable skills in an AI-augmented workplace.

- Start with quick wins. Begin with lower-stakes applications where AI can deliver visible value quickly. Let people experience success. Build confidence. Create positive associations. Quick wins generate momentum and credibility that enable larger initiatives.

- Make AI explainable to users. People trust what they understand. Ensure your AI provides visibility into its reasoning, even if simplified. When AI recommends an action, explain why. When it makes a prediction, show what factors influenced it. When it prioritizes something, reveal the logic.

- Listen to resistance and adapt. When people push back against AI, don't dismiss their concerns as change resistance. Listen carefully. Often resistance surfaces legitimate issues: the AI doesn't fit workflows, the interface is clunky, the outputs aren't reliable enough, or the training was inadequate.

- Measure adoption actively and address gaps. Track who's using AI tools, how frequently, and with what outcomes. Identify patterns. Where is adoption strong? Where is it weak? What differentiates successful adopters from resisters? Use data to target interventions.
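
Measuring adoption does not need heavy tooling to start. As a minimal sketch, assume you can export a usage log with one row per employee saying whether they used the AI tool this month. The teams, names, and the 50% threshold below are invented for illustration.

```python
from collections import defaultdict

# Hypothetical usage log: (team, user, used_tool_this_month)
usage = [
    ("support", "ana", True),
    ("support", "ben", True),
    ("support", "chen", True),
    ("support", "dee", False),
    ("finance", "eli", True),
    ("finance", "fay", False),
    ("finance", "gus", False),
    ("finance", "hana", False),
]


def adoption_by_team(rows):
    """Return the share of each team's members who used the tool."""
    active = defaultdict(int)
    total = defaultdict(int)
    for team, _user, used in rows:
        total[team] += 1
        active[team] += int(used)
    return {team: active[team] / total[team] for team in total}


rates = adoption_by_team(usage)

# Teams below an assumed 50% threshold are candidates for follow-up.
lagging = sorted(team for team, rate in rates.items() if rate < 0.5)

print(rates)
print(lagging)
```

The numbers tell you where to look, not why adoption is low. Pair them with conversations with the lagging teams before deciding on an intervention.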

12. Overreliance on AI: When Automation Becomes Abdication

AI is often right. It processes more data than humans can handle. It spots patterns we'd miss. It operates without fatigue or bias from emotional state. So we start trusting it. Then we start trusting it completely. Then we stop thinking for ourselves. That's when disasters happen.

Overreliance is about thinking too little because AI is available.

It's the autopilot effect. When automation is present, human vigilance drops. We assume the machine has it covered. We don't question outputs. We don't verify recommendations. We don't apply judgment. We become rubber stamps for algorithmic decisions, abdicating responsibility under the illusion that AI knows best.

Consider the hiring manager who relies completely on AI resume screening without reviewing flagged candidates personally. The AI filters out a uniquely qualified applicant because their unconventional career path doesn't match historical patterns. The company loses talent it desperately needed because a human never questioned whether the AI's rejection made sense.

Or picture the financial analyst who accepts AI-generated risk assessments without scrutinizing the underlying assumptions. The model flags an investment opportunity as low-risk based on historical data. But current market conditions have fundamentally changed in ways the training data doesn't reflect. The analyst recommends the investment. It fails catastrophically. Everyone asks why nobody questioned the AI's assessment.

What you need to do

- Treat AI as an advisor, never as authority. Build this principle into every AI deployment. Models provide recommendations, insights, and analysis. Humans make decisions.

- Mandate human review for high-stakes decisions. Any AI output that significantly impacts people, finances, reputation, or operations requires human oversight. Credit approvals, hiring decisions, medical diagnoses, safety determinations, major purchases—these can't be fully automated. Build mandatory human checkpoints into workflows.

- Create processes that encourage questioning AI outputs. When something seems off, people should feel empowered to investigate. Build cultures where challenging AI is good practice, not a lack of faith in technology.

- Define clear usage boundaries. Document explicitly when and how AI-generated insights should be used. What decisions can AI support? Which require additional human input? When should AI be overridden? Make these boundaries clear, accessible, and regularly updated.

- Don't let AI atrophy human capabilities. If AI handles routine analysis, ensure humans still understand the underlying domain deeply enough to evaluate AI outputs intelligently. Invest in keeping human expertise sharp even as AI handles more tasks.

- Educate on AI limitations constantly. Help people understand what AI can and can't do. What are its failure modes? When do hallucinations occur? What context does it miss? How do changing conditions affect reliability? The more people understand AI's limitations, the more appropriately they'll rely on it.

- Review decisions where AI was followed blindly. When AI recommendations get implemented without human scrutiny, audit the outcomes. Were the recommendations actually good? What would human judgment have changed? Did we get lucky with AI being right, or was blind trust justified? Learn from both successes and failures.

- Build redundancy for critical systems. Where AI failure could be catastrophic, implement redundant checks. Multiple models with different architectures. Human review teams. Alternative data sources.

- Foster complementary human-AI collaboration. Design systems where humans and AI each contribute their strengths. AI handles data processing, pattern recognition, and statistical analysis. Humans provide context awareness, ethical judgment, and strategic thinking. Neither fully defers to the other. Both inform the outcome.
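
One way to build mandatory human checkpoints into a workflow is a routing rule that sits between the model and the action. The sketch below assumes each recommendation carries a decision type and a confidence score. The category names and the 0.90 threshold are hypothetical and would need to come from your own usage boundaries.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


# Assumed policy: these decision types always require a human.
HIGH_STAKES = {"credit_approval", "hiring", "medical_diagnosis", "safety"}

# Assumed threshold below which any recommendation is escalated.
MIN_CONFIDENCE = 0.90


@dataclass(frozen=True)
class Recommendation:
    decision_type: str
    confidence: float  # model's self-reported score between 0 and 1


def route(rec: Recommendation) -> Route:
    """Decide whether a recommendation may proceed without human review."""
    if rec.decision_type in HIGH_STAKES:
        return Route.HUMAN_REVIEW   # high-stakes: never fully automated
    if rec.confidence < MIN_CONFIDENCE:
        return Route.HUMAN_REVIEW   # uncertain output: escalate
    return Route.AUTO


print(route(Recommendation("hiring", 0.99)))
print(route(Recommendation("ticket_tagging", 0.72)))
print(route(Recommendation("ticket_tagging", 0.97)))
```

Note that high-stakes decisions go to a human regardless of confidence. A confident model is not the same as a correct one, so the score alone should not unlock automation for decisions that significantly affect people.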

13. Job Displacement: The Workforce Reckoning

AI isn't just changing how we work. It's changing who works. And pretending otherwise is a failure of leadership.

Let's cut through the comfortable euphemisms. AI will eliminate jobs. Not just transform them. Not just evolve them. Eliminate them. Data entry positions, customer service roles, clerical work, basic analysis, routine content creation—these jobs are already disappearing. More will follow.

Nearly half of organizations surveyed by the World Economic Forum expect AI to create new jobs. That's the optimistic headline. Yes, new roles emerge—machine learning engineers, AI ethicists, prompt engineers, automation specialists. But those jobs require different skills, demand different education, and exist in different locations than the jobs being displaced. A displaced call center worker in Ohio doesn't automatically become an AI specialist in San Francisco. The creation and destruction don't balance out for the humans caught in between.

What you need to do

- Be honest about the impact. Stop hiding behind "transformation" language when you mean displacement. Employees see what's happening. Euphemisms don't make job losses less traumatic. Be direct about where AI will eliminate positions, reduce hours, or change requirements.

- Assess roles individually, not categorically. Don't assume all jobs in a category face the same AI impact. Some roles within customer service, accounting, or content creation are highly automatable. Others require judgment, relationship skills, or creativity that AI can't replicate.

- Invest in reskilling before displacement. Provide training for roles that will remain relevant or grow. Partner with educational institutions. Cover costs for certification programs. Give people time to learn during work hours.

- Transform work structures deliberately. Don't just bolt AI onto existing organizational models. Redesign how work flows. Reimagine job roles. Rethink team structures. AI enables different approaches to problems. Think deeply about what human work should look like in an AI-augmented environment, then structure accordingly.

- Establish human-machine partnerships consciously. Design clear divisions of responsibility between AI and humans. What decisions do machines make? What judgment do humans provide? How do they collaborate? When do humans have authority to override?

- Focus investment on higher-value human work. When AI handles routine tasks, free humans to tackle work that genuinely requires human judgment, creativity, emotional intelligence, or complex problem-solving. But recognize that "focus on higher-value work" requires new skills many employees don't currently have.

- Plan transitions with dignity. When displacement is unavoidable, provide substantial transition support. Generous severance. Extended healthcare. Outplacement services. References and recommendations. Job placement assistance. Treating departing employees well is strategic.

- Measure human impact alongside business metrics. Track not just productivity gains and cost reductions but also workforce effects. How many positions were eliminated? How many workers successfully transitioned? What's employee sentiment? What's the community impact? Make human metrics as visible as financial ones. What gets measured gets managed.

14. Scarcity of AI Talent: The Bottleneck Every Leader Faces

The AI talent shortage isn't coming. It's here, it's severe, and it's getting worse. A Gartner study found that 64% of organizations cite lack of AI talent as their biggest barrier to adoption. Not budget. Not strategy. Not technology availability. Talent.

You can't just buy tools and expect them to work without expert implementation, ongoing optimization, and continuous management. AI systems require skilled personnel through every phase—design, development, deployment, monitoring, maintenance, and evolution. Miss any of these phases and your AI initiative stalls, underperforms, or fails completely.

The demand-supply mismatch is staggering. Every organization wants AI capabilities. Few have people who can deliver them. Machine learning engineers, data scientists, AI architects, MLOps specialists—these roles are in massive demand and desperately short supply. The competition for qualified talent is fierce. The compensation required is eye-watering. And even when you can pay top dollar, you're competing against tech giants, well-funded startups, and every other company trying to build AI capabilities simultaneously.

What you need to do

- Upskill existing employees aggressively. Develop internal talent. Launch comprehensive training programs in AI fundamentals, machine learning, data science, and AI operations. Identify employees with aptitude and interest. Invest in their development.

- Make training substantive, not superficial. Online courses aren't enough. Provide hands-on projects. Assign mentors. Give people time to learn during work hours. Partner with universities for structured programs.

- Create clear AI career pathways. Talented people need to see where their AI skills can take them in your organization. Define roles, responsibilities, and progression. Show how AI expertise translates to advancement.

- Recruit with competitive intensity. AI talent commands premium compensation. Accept that or lose the talent war. Beyond salary, offer equity, bonuses tied to AI outcomes, and benefits that matter to skilled professionals. Flexibility in work location and hours. Cutting-edge technology to work with. Interesting problems to solve.

- Build a reputation as a place AI talent wants to work. Publish research. Contribute to open source. Speak at conferences. Participate in the AI community. Reputation attracts talent.

- Partner strategically with external experts. When internal capability is insufficient, bring in consultants, contractors, or specialized firms. But structure engagements for knowledge transfer, not just project delivery.

- Accelerate projects with external help, then internalize. Use consultants to get AI initiatives launched quickly while you build internal teams. Let external experts handle initial complexity while your people learn. Then transition ownership to internal staff once they've gained expertise through partnership.

- Retain talent relentlessly. Recruiting AI talent is hard. Replacing it is harder. Invest heavily in retention. Competitive compensation is baseline. Provide growth opportunities, interesting work, and influence over direction.

- Build cross-functional AI literacy. Not everyone needs to be an AI expert, but everyone working with AI needs basic understanding. Train product managers to specify AI requirements correctly. Help engineers understand how to integrate AI systems. Teach business leaders to evaluate AI proposals intelligently.

- Consider building capability through acquisition. Sometimes the fastest way to build AI capability is acquiring companies that have it. Small AI startups might have talent you can't recruit individually. Acqui-hires made specifically for a team's capabilities can accelerate development.

The Choice Ahead

Most organizations will take shortcuts. They'll prioritize speed over safety, cost reduction over people impact, competitive advantage over ethical considerations. They'll address risks reactively, after damage is done, when prevention would have been cheaper and more effective.

You can do better. Build governance before crisis forces it. Invest in your people before displacement devastates them. Secure systems before breaches expose you. Create transparency before regulators demand it. Manage AI's environmental footprint before it becomes indefensible.

➡︎ Building AI responsibly starts with the right talent. Index.dev connects you with vetted AI engineers, ML specialists, and data scientists who understand not just how to build AI, but how to build it safely. Get matched with experts who can help you manage these risks from day one.
